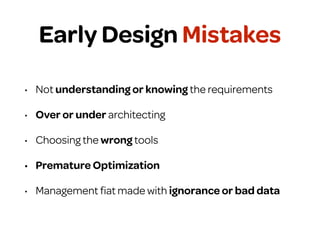

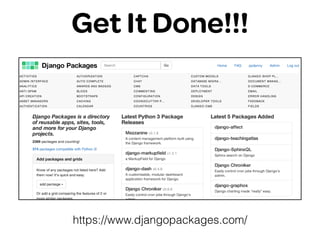

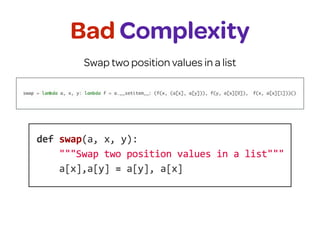

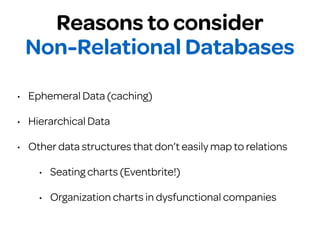

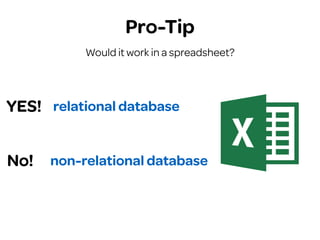

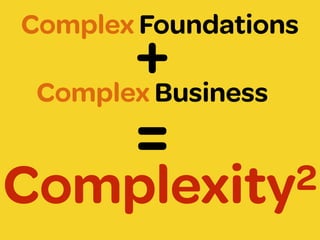

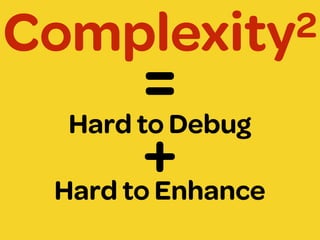

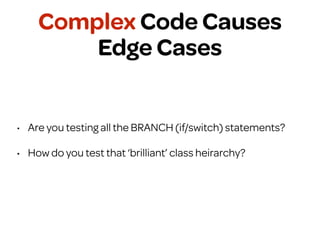

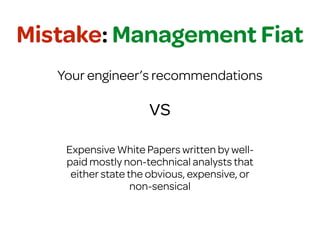

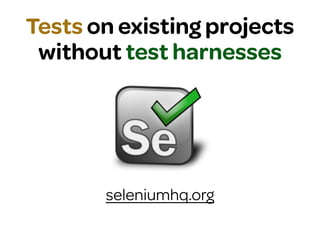

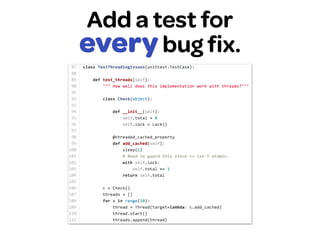

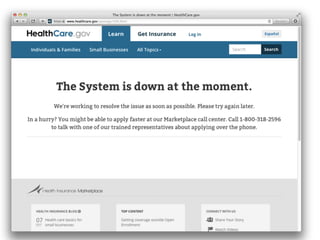

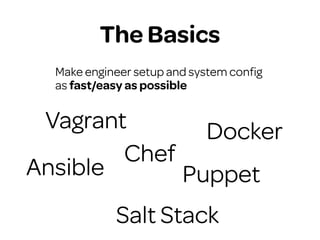

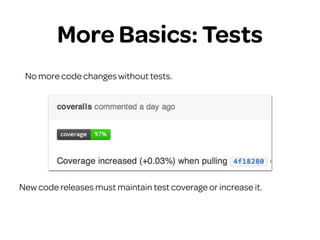

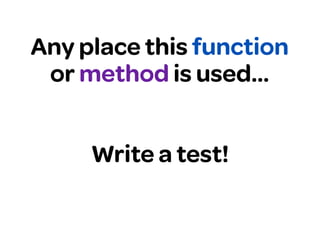

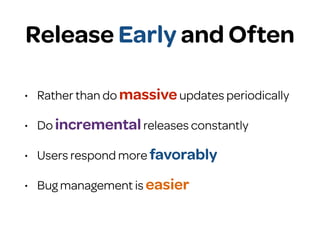

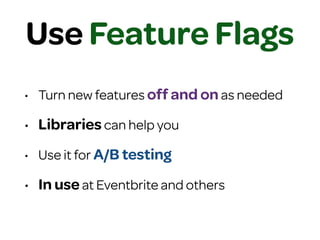

The document provides tips for building maintainable and scalable projects. It discusses the importance of following best practices like writing tests, using version control, and avoiding premature optimization. It also warns against technical debt and recommends focusing on simplicity over complexity when starting a new project.