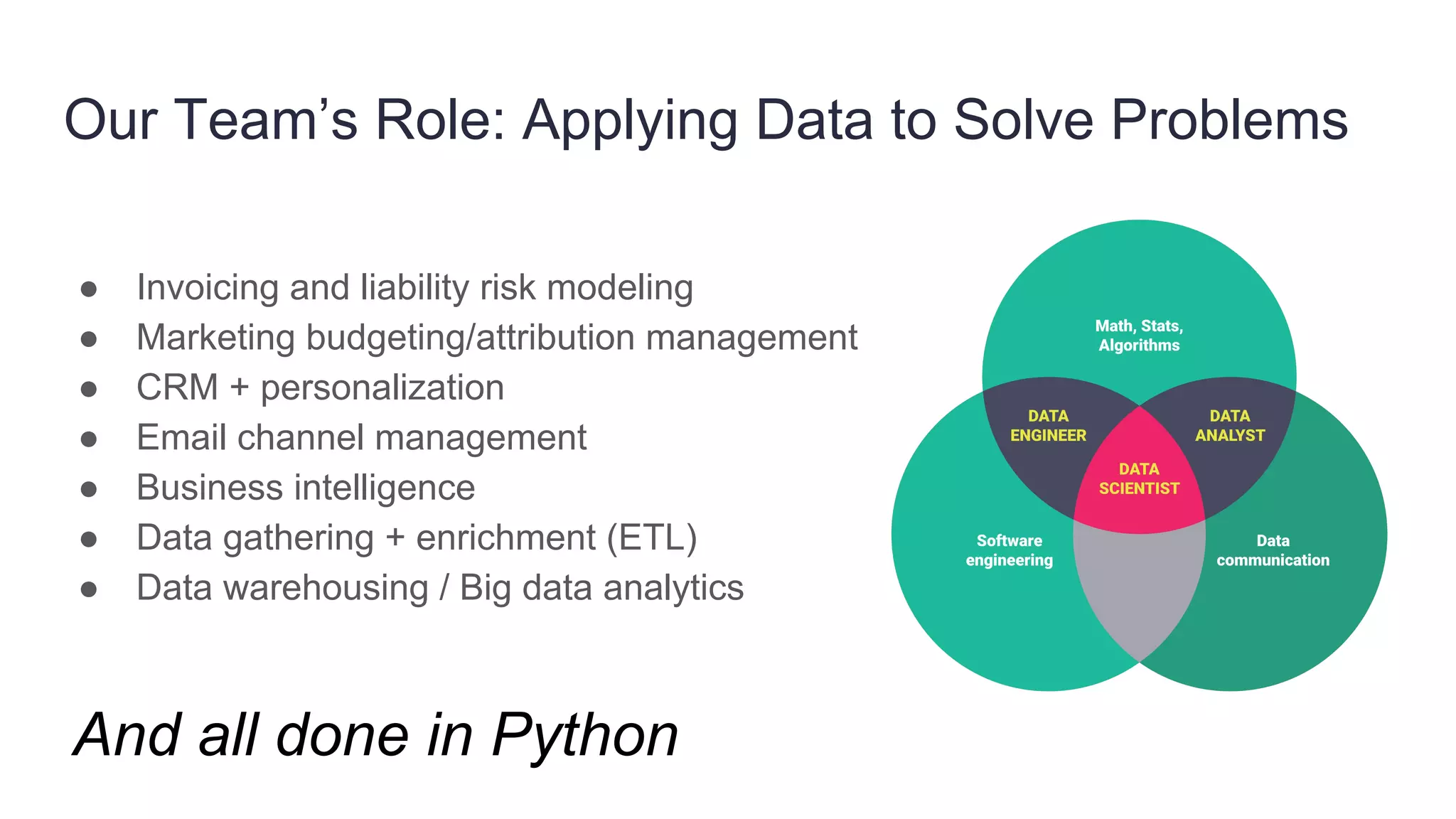

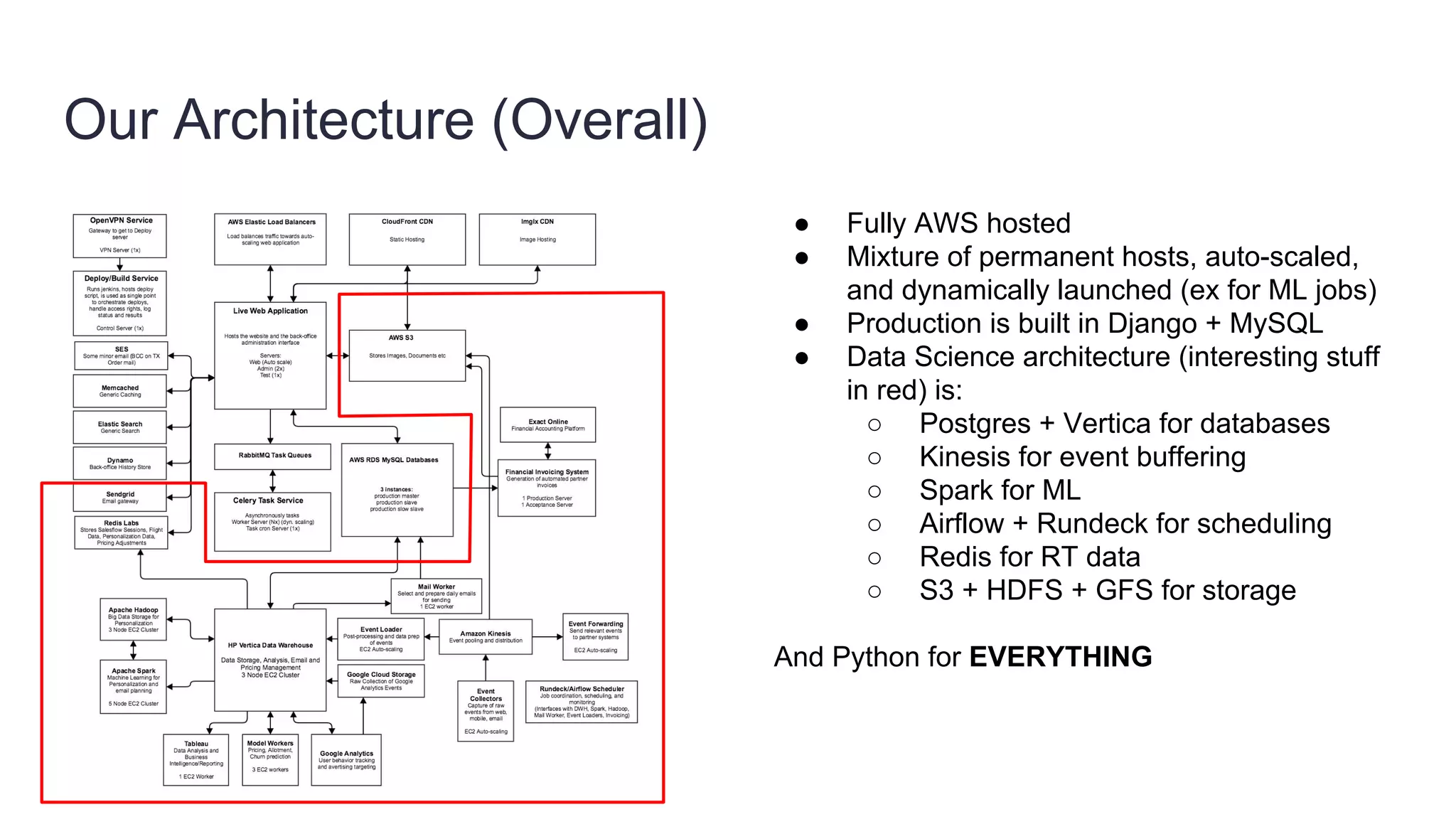

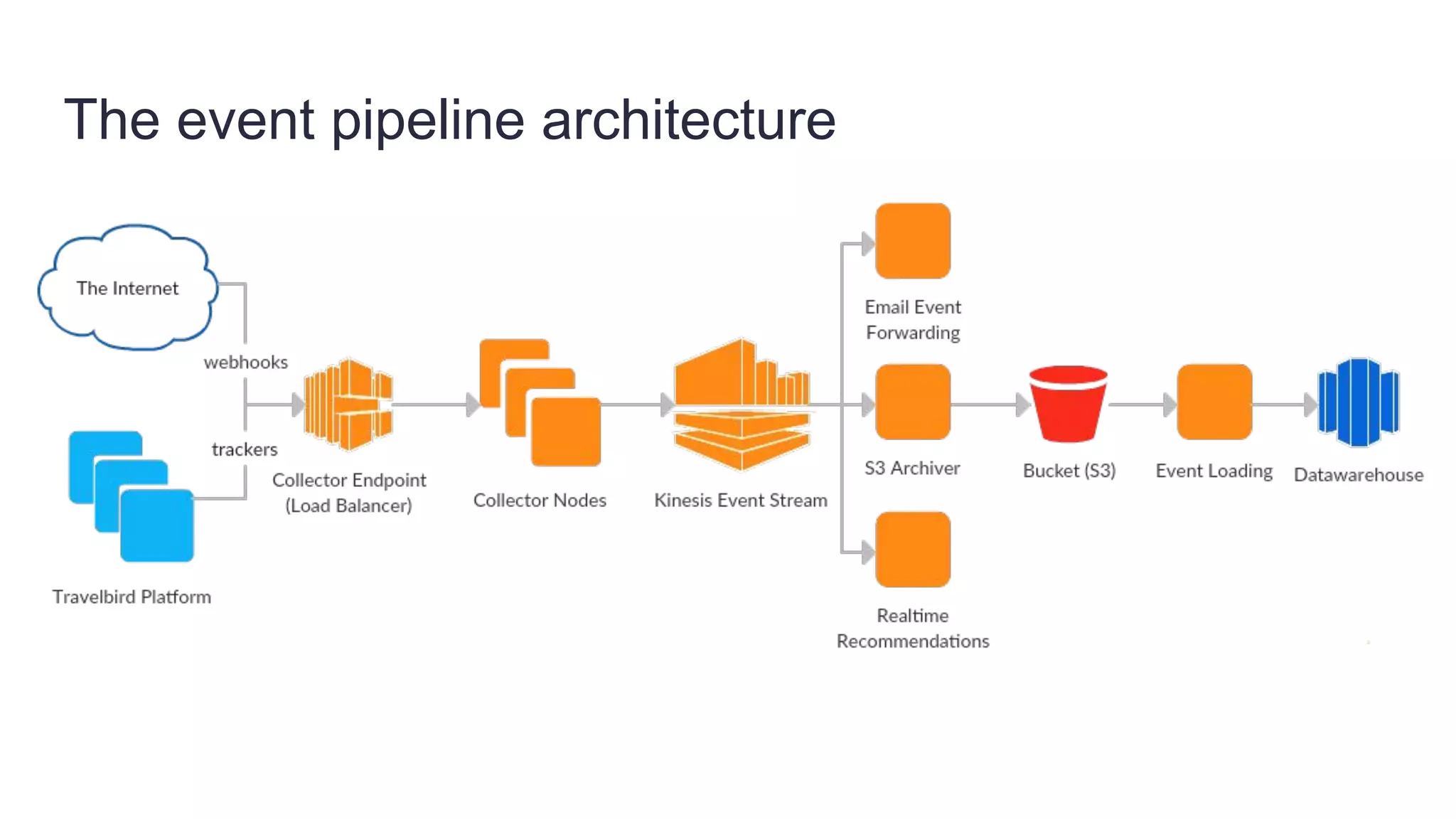

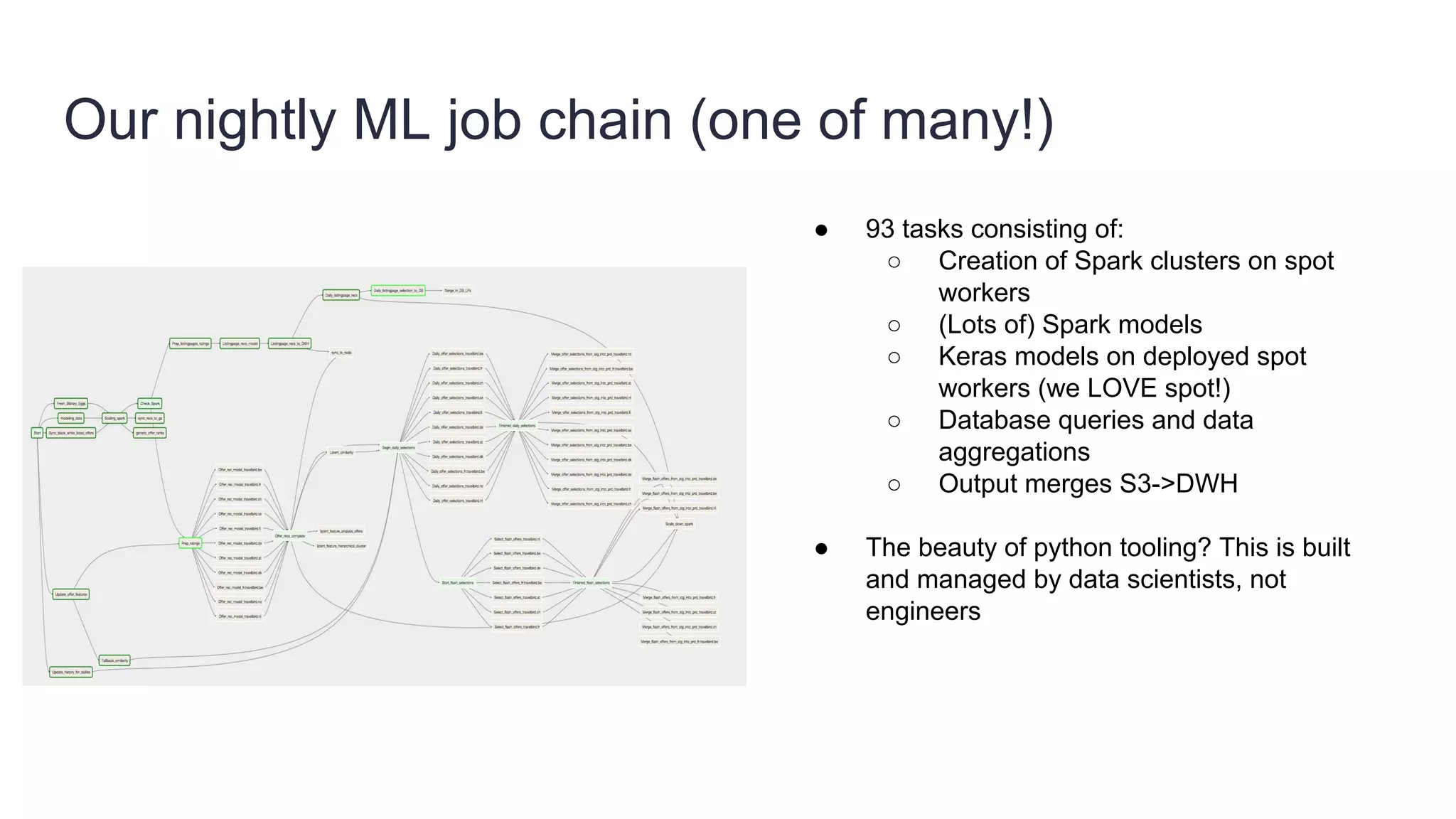

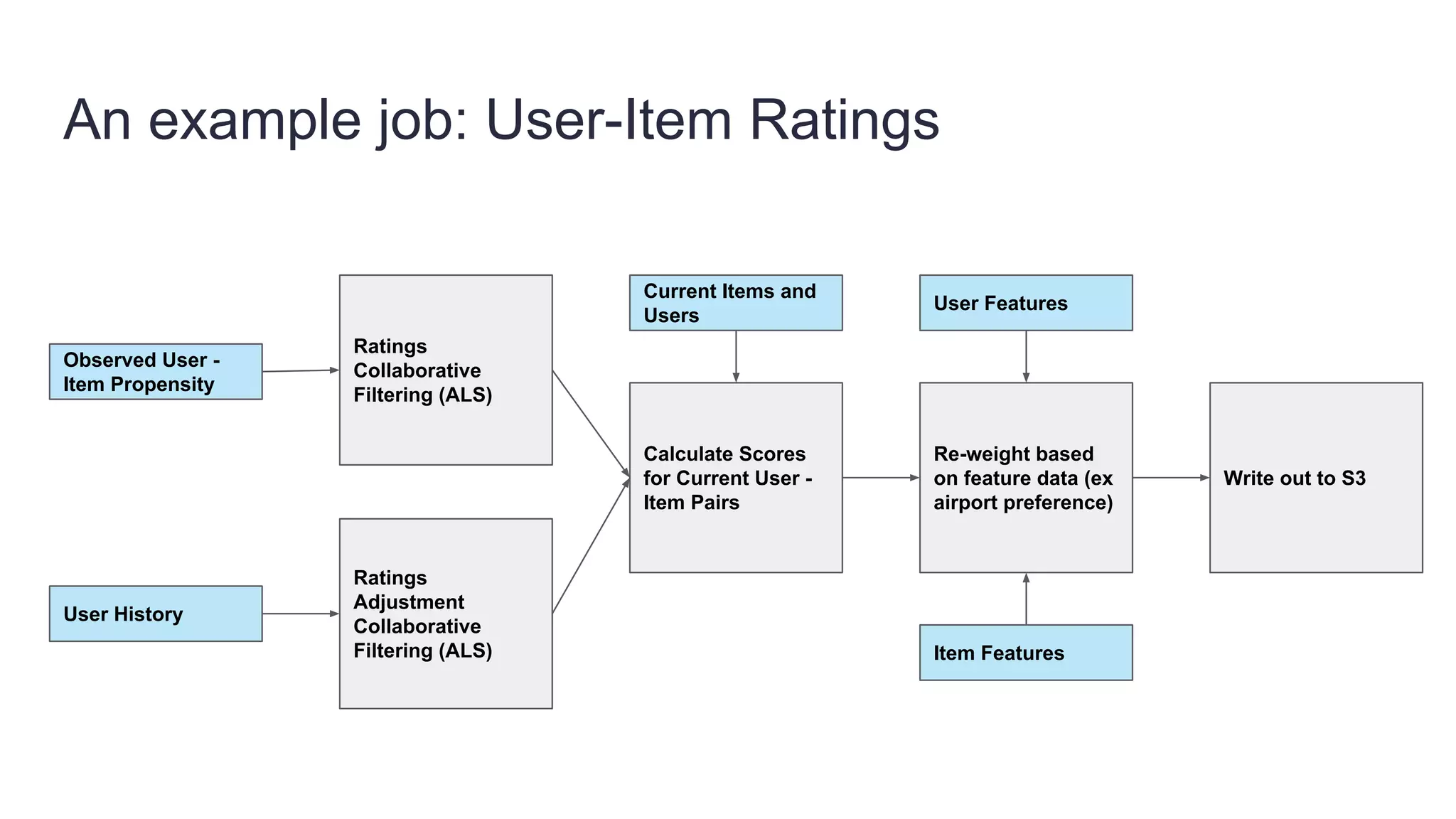

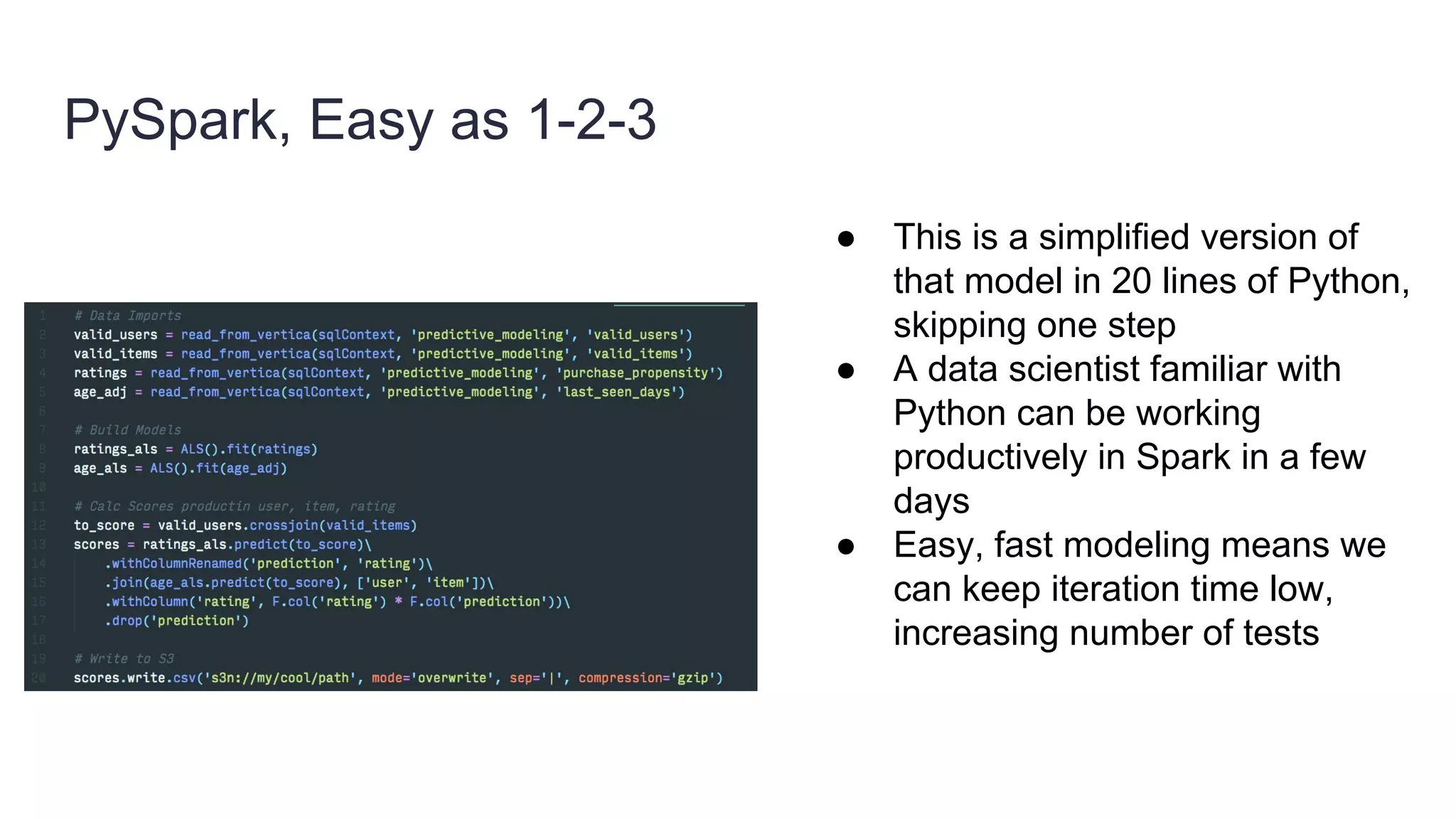

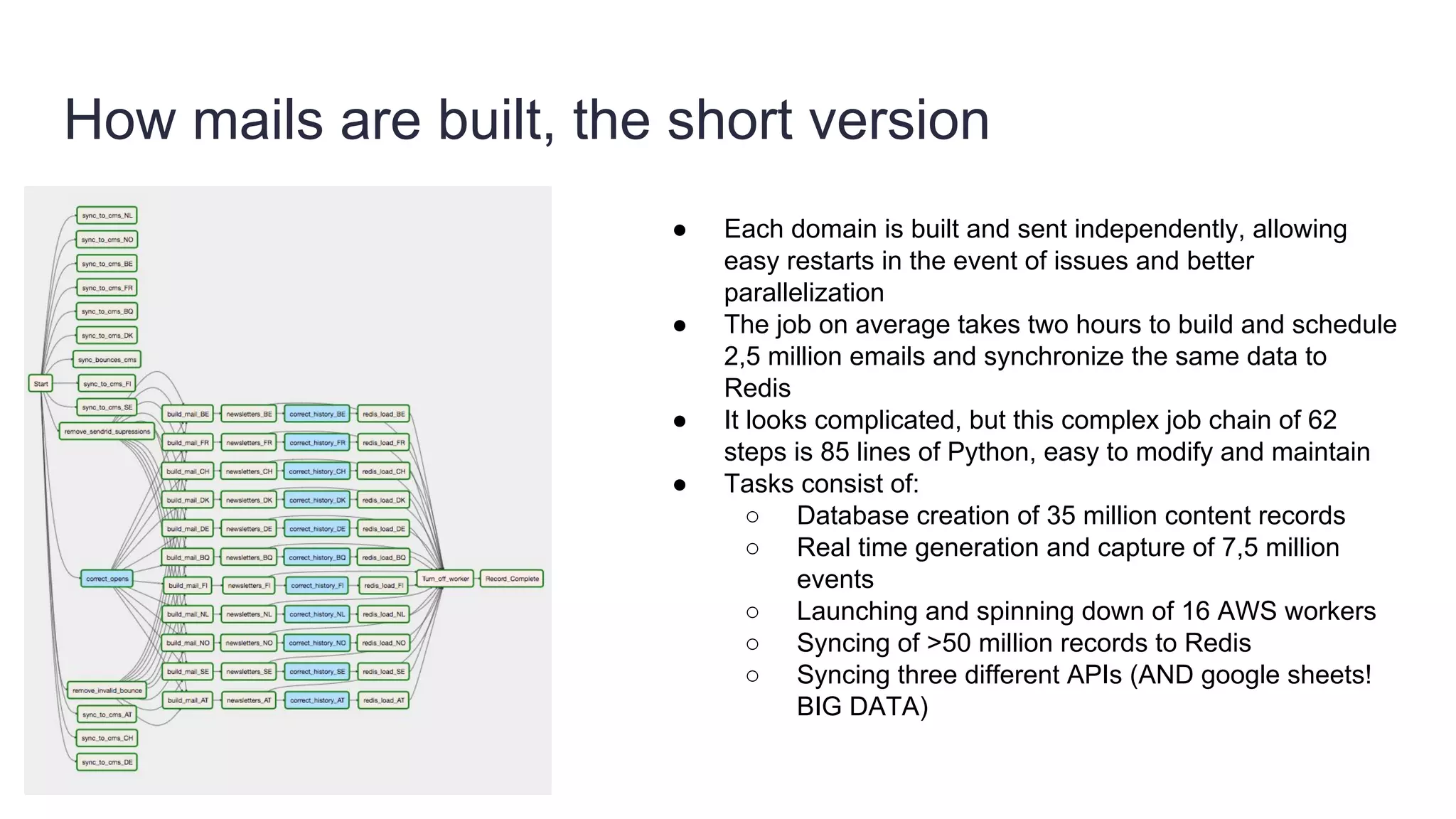

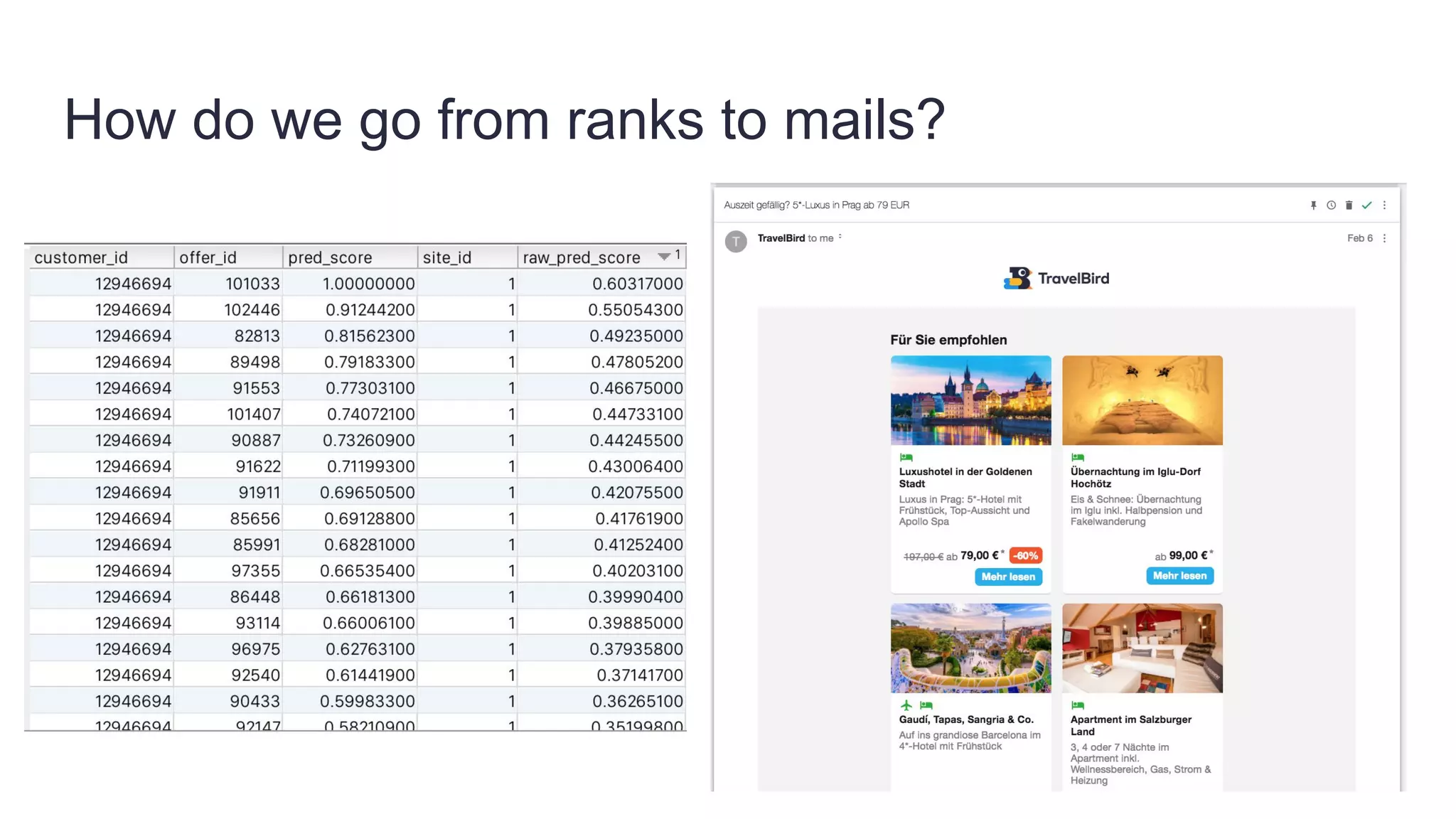

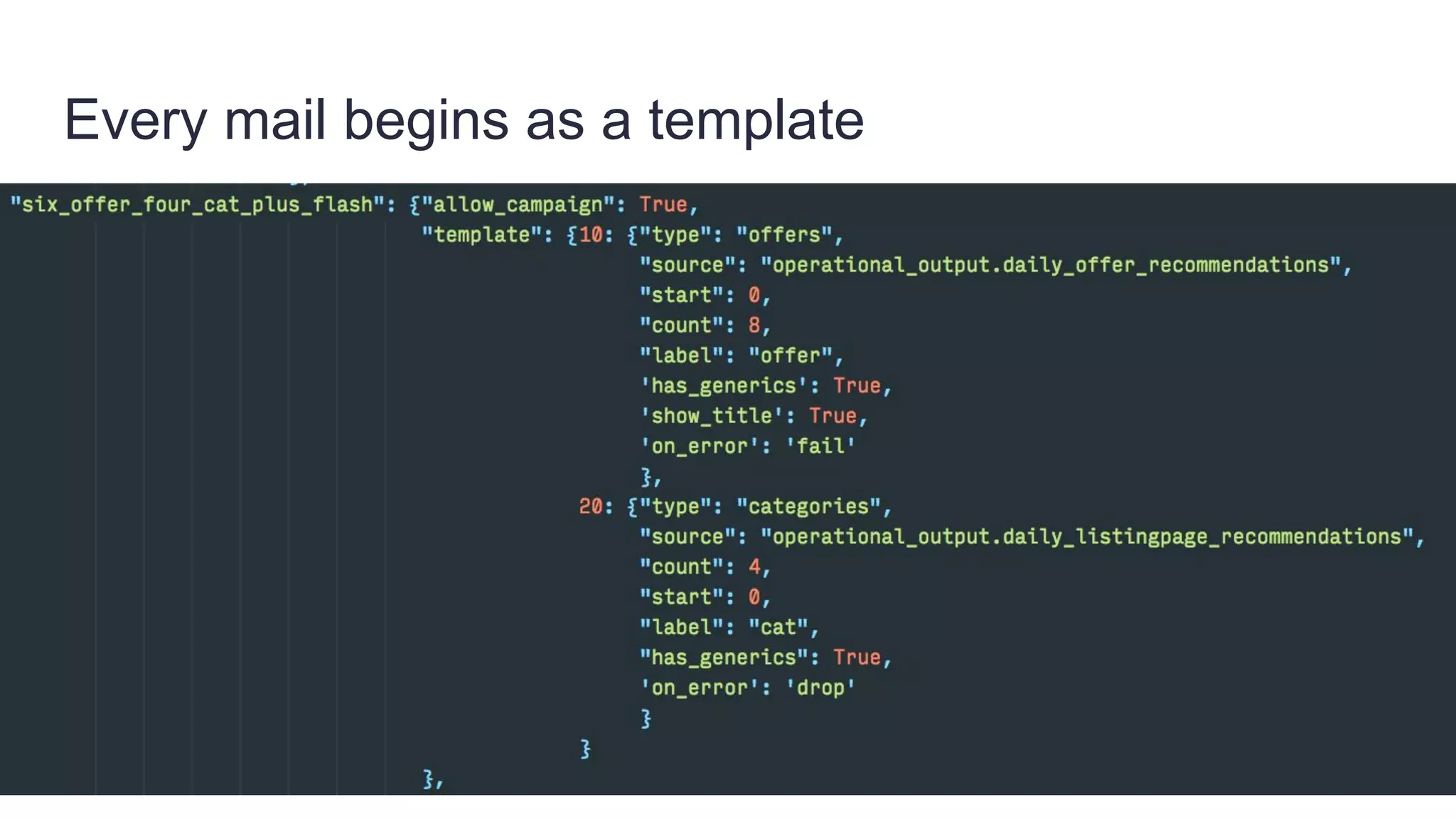

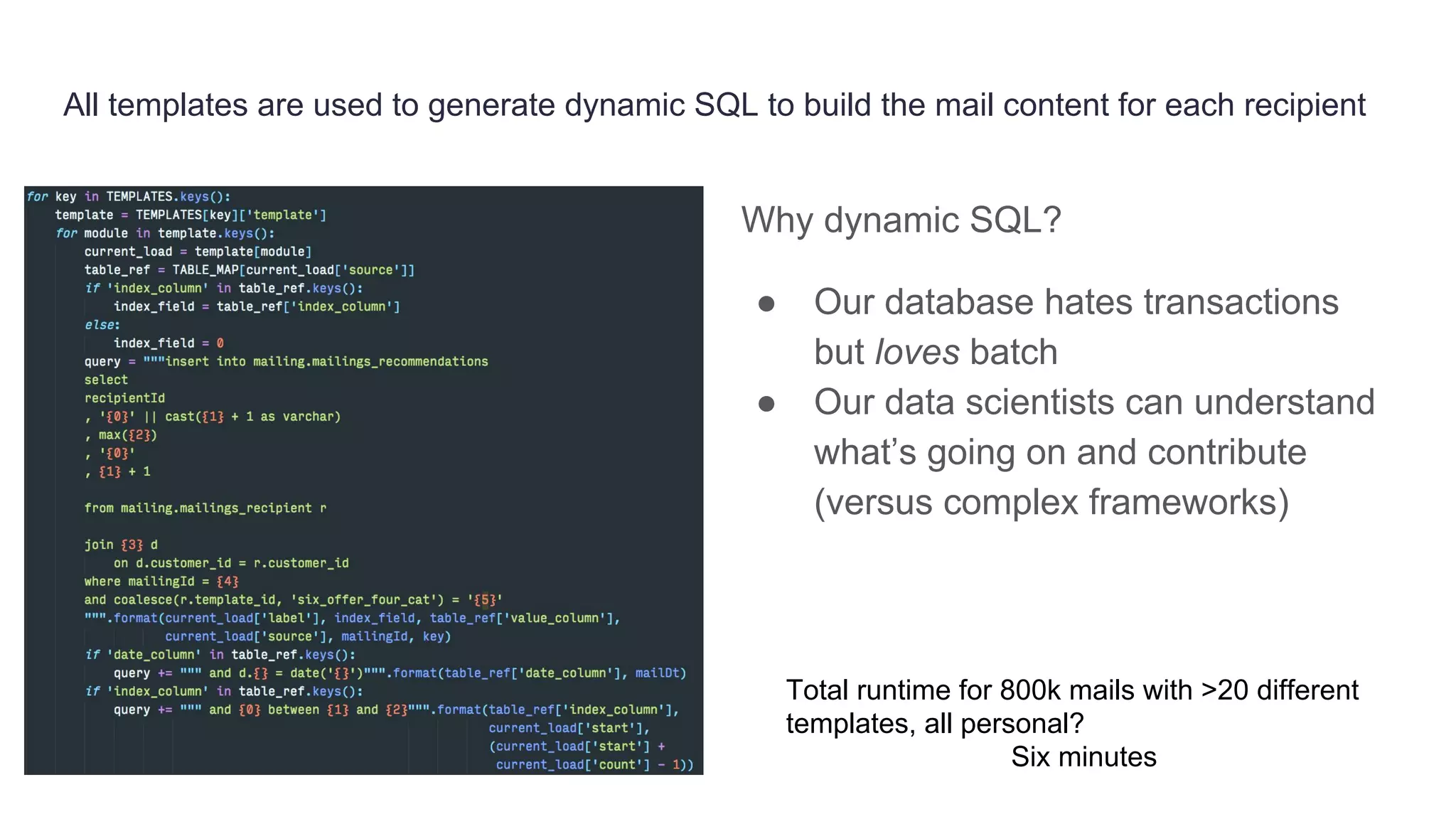

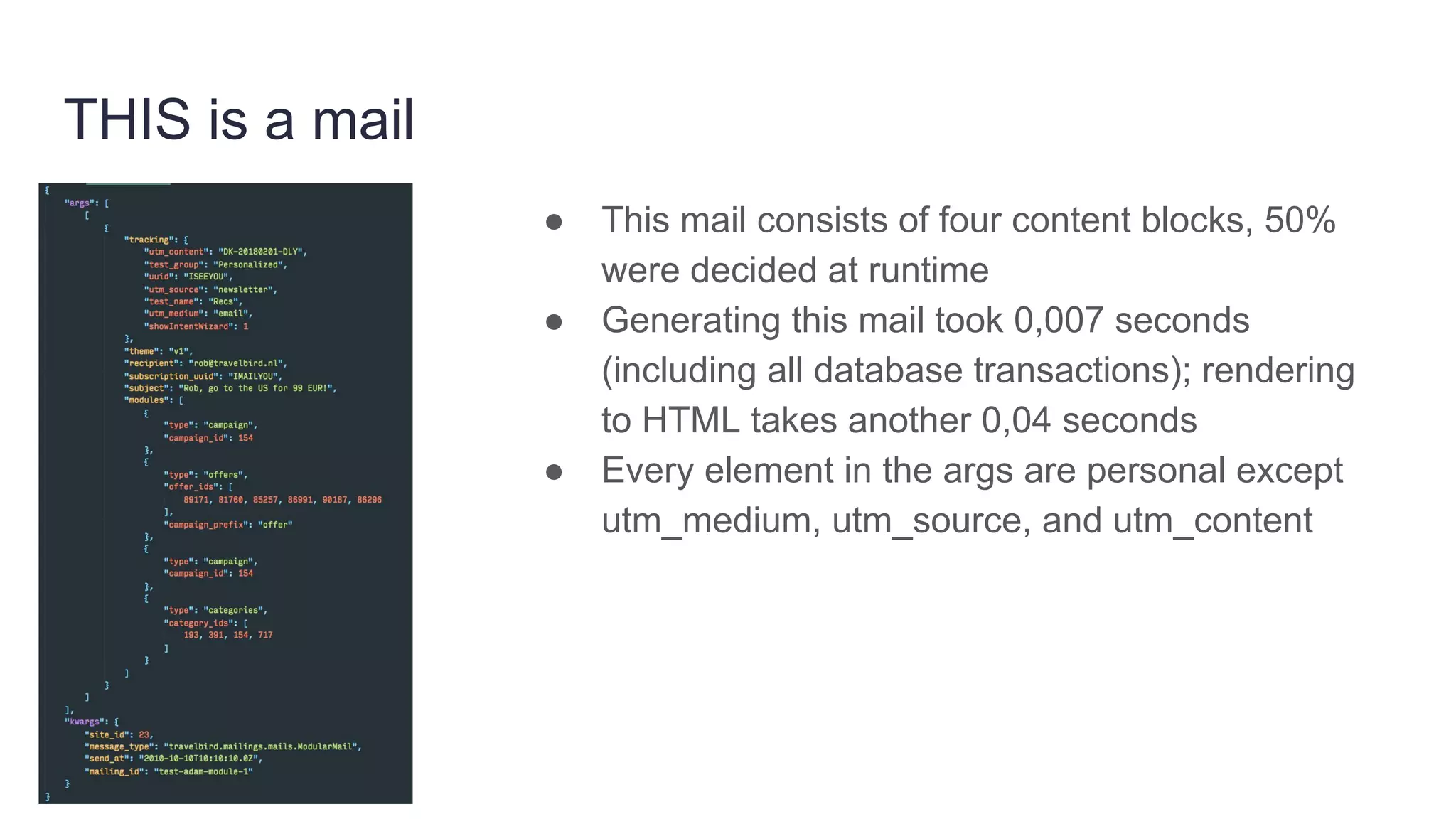

Founded in 2010, Travelbird aims to inspire travelers and make it simpler to discover new destinations. It engages three million travelers daily across eleven European markets. The company runs a Python-based data architecture that covers data science, machine learning, and email channel management, with tools such as Spark and Keras in the workflow. Its email generation process personalizes content for each user within minutes, using data-driven decision-making and dynamic SQL for optimization.
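
The summary does not show how dynamic SQL is used, so the following is only a minimal sketch of the general technique: assembling a query at runtime from a set of filters while keeping values as bound parameters. Everything in it is an assumption made for illustration, including the `offers` table, its columns, the `ALLOWED_FILTERS` whitelist, and the function names. It uses Python's built-in `sqlite3` rather than whatever database Travelbird actually runs.

```python
import sqlite3

# Hypothetical whitelist of columns that may appear in a WHERE clause.
# Column names cannot be bound as parameters, so they are checked here.
ALLOWED_FILTERS = {"market", "category"}


def build_offer_query(filters, limit=3):
    """Build a parameterized SELECT from a dict of column -> value filters."""
    clauses, params = [], []
    for column, value in sorted(filters.items()):
        if column not in ALLOWED_FILTERS:
            raise ValueError(f"unsupported filter: {column}")
        clauses.append(f"{column} = ?")  # value stays a bound parameter
        params.append(value)
    where = ""
    if clauses:
        where = " WHERE " + " AND ".join(clauses)
    sql = f"SELECT id, title FROM offers{where} ORDER BY score DESC LIMIT ?"
    params.append(limit)
    return sql, params


def top_offers(conn, filters, limit=3):
    """Run the dynamically built query and return (id, title) rows."""
    sql, params = build_offer_query(filters, limit)
    return conn.execute(sql, params).fetchall()


def demo():
    """Populate an in-memory table with made-up rows and query it."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE offers "
        "(id INTEGER, title TEXT, market TEXT, category TEXT, score REAL)"
    )
    conn.executemany(
        "INSERT INTO offers VALUES (?, ?, ?, ?, ?)",
        [
            (1, "Lisbon city trip", "NL", "city", 0.9),
            (2, "Alps ski week", "NL", "ski", 0.7),
            (3, "Rome weekend", "DE", "city", 0.8),
            (4, "Prague city trip", "NL", "city", 0.6),
        ],
    )
    return top_offers(conn, {"market": "NL", "category": "city"}, limit=2)
```

Calling `demo()` returns the two highest-scoring city offers for the `NL` market from the made-up rows. Only the query shape is dynamic; user-supplied values never get concatenated into the SQL string, which is the usual way to keep this pattern safe.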