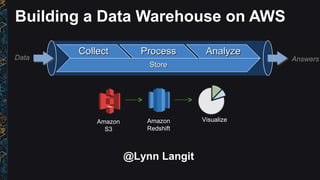

Building a data warehouse with AWS Redshift, Matillion and Yellowfin

Screencast of setting up a data warehouse with AWS Redshift, along with Matillion ETL and Yellowfin for data visualization.

Recommended

SQL Server on Google Cloud Platform

Getting started demo on running SQL Server on Google Cloud Platform GCE

AWS for the Data Professional

Core AWS services for the data professional - EC2, RDS, S3, Kinesis and more

Benchmarking Aerospike on the Google Cloud - NoSQL Speed with Ease

Deck from blog post detailing our work with Aerospike to verify their performance benchmark on the Google Cloud, using GCE (Google Compute Engine) instances of 4 million TPS. Blog post is here -- http://googlecloudplatform.blogspot.com/2015/10/speed-with-Ease-NoSQL-on-the-Google-Cloud-Platform.html

Serverless Reality

Talk from NDCOslo on Serverless Cloud patterns - includes AWS Lambda, Azure and GCP Functions

New AWS Services for Bioinformatics

New AWS Services for Bioinformatics - Athena, Batch, Step Functions, Glue and QuickSight

AWS for the SQL Server Pro

About AWS services for SQL Server Professionals - EC2, RDS, DynamoDB, MapReduce and more. Also covers pricing - understanding on-demand, reserved and spot instances

Day 4 - Big Data on AWS - RedShift, EMR & the Internet of Things

Big Data is everywhere these days. But what is it and how can you use it to fuel your business? Data is as important to organizations as labour and capital, and if organizations can effectively capture, analyze, visualize and apply big data insights to their business goals, they can differentiate themselves from their competitors and outperform them in terms of operational efficiency and the bottom line.

Join this session to understand the different AWS Big Data and Analytics services such as Amazon Elastic MapReduce (Hadoop), Amazon Redshift (Data Warehouse) and Amazon Kinesis (Streaming), when to use them and how they work together.

Reasons to attend:

- Learn how AWS can help you process and make better use of your data with meaningful insights.

- Learn about Amazon Elastic MapReduce and about Amazon Redshift, a fully managed petabyte-scale data warehouse solution.

- Learn about real time data processing with Amazon Kinesis.

AWS re:Invent 2016: Tableau Rules of Engagement in the Cloud (STG306)

You have billions of events in your fact table, all of it waiting to be visualized. Enter Tableau… but wait: how can you ensure scalability and speed with your data in Amazon S3, Spark, Amazon Redshift, or Presto? In this talk, you’ll hear how Albert Wong and Srikanth Devidi at Netflix use Tableau on top of their big data stack. Albert and Srikanth also show how you can get the most out of a massive dataset using Tableau, and help guide you through the problems you may encounter along the way. Session sponsored by Tableau.

AWS Competency Partner

AWS re:Invent 2016: How Mapbox Uses the AWS Edge to Deliver Fast Maps for Mob...

Ian Ward, Platform and Security Engineer from Mapbox, discusses how the AWS global edge network helps improve the availability and performance of delivering hundreds of billions of map tiles to hundreds of millions of end users across the globe on mobile devices, in cars, and over the web. In this session, Ian shares insights on how Mapbox manages day-to-day edge operations using Amazon CloudFront logs, dashboards, and ad hoc queries, and how Mapbox has configured CloudFront with dozens of behaviors and origins to customize their content delivery. Mapbox has grown from using a single AWS region to using several regions, so Ian also explains how his team uses Amazon Route 53 and open source tools to simplify complexity around regional failover, and how Mapbox leverages AWS WAF to deter attacks and abuse.

AWS Batch: Simplifying batch computing in the cloud

Docker enables you to create highly customized images that are used to execute your jobs. These images allow you to easily share complex applications between teams and even organizations. However, sometimes you might just need to run a script! This talk walks you through the steps to create and run a simple “fetch & run” job in AWS Batch. AWS Batch executes jobs as Docker containers using Amazon ECS. You build a simple Docker image containing a helper application that can download your script or even a zip file from Amazon S3. AWS Batch then launches an instance of your container image to retrieve your script and run your job.

AWS re:Invent 2016: Taking Data to the Extreme (MBL202)

As GoPro expands into content networks and launches new products, new challenges have appeared. One of the most critical challenges facing GoPro during this period of rapid growth is their ability to make effective use of massive amounts of data. Every day, GoPro collects increasing amounts of data generated by internet connected consumer devices (smart cameras, smart drones), GoPro mobile apps, GoPro content networks, GoPro e-commerce sales, and social media. This data ranges from raw camera logs to refined and well-structured e-commerce datasets. In the past, it took GoPro months to understand new inbound data and determine how to transform or augment it for analysis. To streamline this process and bridge the gap between tech-savvy engineers and data-savvy analysts, GoPro is creating an analysis loop, which informs product usage trends and product insights. This analysis loop serves a large ecosystem of GoPro executives, product managers, engineers, data scientists, and business analysts through an integrated technology pipeline consisting of Apache Kafka, Apache Spark Streaming, Cloudera’s distribution of Hadoop, and Tableau’s Data Visualization Software as the end user analytical tool. Session sponsored by Tableau Software.

Big problems Big Data, simple solutions

How you can squeeze money from your big data: a real case scenario with Mondadori

AWSome Day Geneva 31/05/2017

Scaling Traffic from 0 to 139 Million Unique Visitors

How Yelp scaled from 0 to 139 million unique visitors. Slides from LAUNCH Scale, 2014

Introduction to AWS Kinesis

A quick overview of AWS Kinesis: What is Kinesis, what problems does Kinesis solve, and how might you integrate Kinesis with an existing data warehouse.

Introduction to Amazon Athena

by Joyjeet Banerjee, Solutions Architect, AWS

Amazon Athena is a new serverless query service that makes it easy to analyze data in Amazon S3, using standard SQL. With Athena, there is no infrastructure to set up or manage, and you can start analyzing your data immediately. You don’t even need to load your data into Athena; it works directly with data stored in S3. Level 200

In this session, we will show you how easy it is to start querying your data stored in Amazon S3, with Amazon Athena. First we will use Athena to create the schema for data already in S3. Then, we will demonstrate how you can run interactive queries through the built-in query editor. We will provide best practices and use cases for Athena. Then, we will talk about supported queries, data formats, and strategies to save costs when querying data with Athena.

AWS Kinesis - Streams, Firehose, Analytics

An introduction to AWS Kinesis, including AWS Kinesis Streams, Firehose and Analytics. Focuses on the details of Kinesis Streams concepts such as partition key, sequence number, sharding, KCL etc. Includes a simple comparison of the Amazon Kinesis Streams service with similar services such as Kafka and SQS.

Scaling Galaxy on Google Cloud Platform

Deck from Galaxy 2017 conference in Australia on scaling Galaxy bioinformatics tools via GCP or Google Cloud Platform

Introduction to Amazon Kinesis Analytics

Amazon Kinesis Analytics is the easiest way to process streaming data in real time with standard SQL without having to learn new programming languages or processing frameworks. Amazon Kinesis Analytics enables you to create and run SQL queries on streaming data so that you can gain actionable insights and respond to your business and customer needs promptly. In this session, we will provide an overview of the capabilities of Amazon Kinesis Analytics. We will show you how you can build an entire stream processing pipeline to collect, ingest, process, and emit streaming data using Amazon Kinesis Analytics, Amazon Kinesis Firehose, and Amazon Kinesis Streams.

Optimizing Storage for Big Data Analytics Workloads

With distributed frameworks like Hadoop and Kafka, it is essential to deploy the right environment to successfully support these workloads. Learn about the different block storage options from AWS and walk through, with our experts, how to select the best option for your big data analytics workloads. We will demonstrate how to set up, select, and modify volume types to right-size your environment.

Real-time Streaming and Querying with Amazon Kinesis and Amazon Elastic MapRe...

Originally, Hadoop was used as a batch analytics tool; however, this is rapidly changing, as applications move towards real-time processing and streaming. Amazon Elastic MapReduce has made running Hadoop in the cloud easier and more accessible than ever. Each day, tens of thousands of Hadoop clusters are run on the Amazon Elastic MapReduce infrastructure by users of every size — from university students to Fortune 50 companies. We recently launched Amazon Kinesis – a managed service for real-time processing of high volume, streaming data. Amazon Kinesis enables a new class of big data applications which can continuously analyze data at any volume and throughput, in real-time. Adi will discuss each service, dive into how customers are adopting the services for different use cases, and share emerging best practices. Learn how you can architect Amazon Kinesis and Amazon Elastic MapReduce together to create a highly scalable real-time analytics solution which can ingest and process terabytes of data per hour from hundreds of thousands of different concurrent sources. Forever change how you process web site click-streams, marketing and financial transactions, social media feeds, logs and metering data, and location-tracking events.

NEW LAUNCH! Intro to Amazon Athena. Easily analyze data in S3, using SQL.

Amazon Athena is a new interactive query service that makes it easy to analyze data in Amazon S3, using standard SQL. Athena is serverless, so there is no infrastructure to set up or manage, and you can start analyzing your data immediately. You don’t even need to load your data into Athena; it works directly with data stored in S3.

In this session, we will show you how easy it is to start querying your data stored in Amazon S3, with Amazon Athena. First we will use Athena to create the schema for data already in S3. Then, we will demonstrate how you can run interactive queries through the built-in query editor. We will provide best practices and use cases for Athena. Then, we will talk about supported queries, data formats, and strategies to save costs when querying data with Athena.

Introduction to AWS Glue

In this session, we introduce AWS Glue, provide an overview of its components, and share how you can use AWS Glue to automate discovering your data, cataloging it, and preparing it for analysis.

Streaming ETL for Data Lakes using Amazon Kinesis Firehose - May 2017 AWS Onl...

Learning Objectives:

- Understand key requirements for collecting, preparing, and loading streaming data into data lakes

- Get an overview of transmitting data using Amazon Kinesis Firehose

- Learn how to perform data transformations with Amazon Kinesis Firehose

Data lakes enable your employees across the organization to access and analyze massive amounts of unstructured and structured data from disparate data sources, many of which generate data continuously and rapidly. Making this data available in a timely fashion for analysis requires a streaming solution that can durably and cost-effectively ingest this data into your data lake. Amazon Kinesis Firehose is a fully managed service that makes it easy to prepare and load streaming data into AWS. In this tech talk, we will provide an overview of Amazon Kinesis Firehose and dive deep into how you can use the service to collect, transform, batch, compress, and load real-time streaming data into your Amazon S3 data lakes.

Real-Time Log Analytics using Amazon Kinesis and Amazon Elasticsearch Service...

Learning Objectives:

- Understand how to easily build an end-to-end, real-time log analytics solution

- Get an overview of collecting and processing data in real time using Amazon Kinesis

- Learn how to interactively query and visualize your log data using Amazon Elasticsearch Service

Log analytics is a common big data use case that allows you to analyze log data from websites, mobile devices, servers, sensors, and more for a wide variety of applications such as digital marketing, application monitoring, fraud detection, ad tech, gaming, and IoT. Moving your log analytics to real time can speed up your time to information allowing you to get insights in seconds or minutes instead of hours or days. In this session, you will learn how to ingest and deliver logs with no infrastructure using Amazon Kinesis Firehose. We will show how Amazon Kinesis Analytics can be used to process log data in real time to build responsive analytics. Finally, we will show how to use Amazon Elasticsearch Service to interactively query and visualize your log data.

(WRK302) Event-Driven Programming

Interested in learning about event-driven programming? In this session we will introduce you to some of the basics of using Amazon DynamoDB, its newly launched Streams feature and AWS Lambda. We will provide an overview of both AWS products and walk you through the process of building a real-world application using AWS Triggers, which combines DynamoDB Streams and AWS Lambda.

Success has Many Query Engines- Tel Aviv Summit 2018

Your data has value for multiple business functions in your organization. Shorten your time to analytics and make faster, better decisions based on data.

In this session you will learn how you can access your data from a myriad of tools such as multiple EMR clusters, Athena & Redshift.

Data Transformation Patterns in AWS - AWS Online Tech Talks

Learning Objectives:

- Learn how to accelerate common data transformations from a variety of data

- Learn how to efficiently orchestrate transformation jobs

- Learn best practices and methodologies in data preparation for analytics

Similar to Building a data warehouse with AWS Redshift, Matillion and Yellowfin

Build Data Lakes and Analytics on AWS

In this session, we show you how to understand what data you have, how to drive insights, and how to make predictions using purpose-built AWS services. Learn about the common pitfalls of building data lakes, and discover how to successfully drive analytics and insights from your data. Also learn how services such as Amazon S3, AWS Glue, Amazon Redshift, Amazon Athena, Amazon EMR, Amazon Kinesis, and Amazon ML services work together to build a successful data lake for various roles, including data scientists and business users.

Building a Modern Data Warehouse - Deep Dive on Amazon Redshift

Osemeke Isibor, Solutions Architect, AWS

In this session, we take a deep dive on Amazon Redshift architecture and the latest performance enhancements that give you faster insights into your data. We also cover Redshift Spectrum, a feature of Redshift that enables you to analyze data across Redshift and your Amazon S3 data lake to deliver unique insights not possible by analyzing independent data silos.

Analyzing Mixpanel Data into Amazon Redshift

This presentation is an overview guide to defining a process, or data pipeline, for loading data from Mixpanel into Amazon Redshift for further analysis.

We will see how to:

- access and extract data from Mixpanel through its API

- load it into Redshift

This is not a full solution: you still need to write the code that gets the data and make sure the process runs every time new data is generated.
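
As a rough illustration of the load step, here is a minimal Python sketch. It assumes the extracted events have already been fetched as dictionaries, serializes them as newline-delimited JSON ready to be gzipped and uploaded to S3, and builds a Redshift `COPY` statement for that file. The table name, bucket, role ARN, and event fields are hypothetical placeholders, not details from the presentation, and the sketch only produces text; it does not call Mixpanel, S3, or Redshift.

```python
import json
from typing import Iterable, Mapping


def to_json_lines(events: Iterable[Mapping]) -> str:
    """Serialize events as newline-delimited JSON, one object per line."""
    return "".join(json.dumps(event) + "\n" for event in events)


def build_copy_statement(table: str, s3_uri: str, iam_role_arn: str) -> str:
    """Build a Redshift COPY statement for gzipped JSON files stored in S3.

    JSON 'auto' asks Redshift to match top-level JSON keys to column names.
    """
    if not s3_uri.startswith("s3://"):
        raise ValueError("s3_uri must start with s3://")
    return (
        f"COPY {table}\n"
        f"FROM '{s3_uri}'\n"
        f"IAM_ROLE '{iam_role_arn}'\n"
        "FORMAT AS JSON 'auto'\n"
        "GZIP;"
    )


if __name__ == "__main__":
    # Hypothetical event shape and resource names, for illustration only.
    sample_events = [{"event": "signup", "distinct_id": "u1"}]
    print(to_json_lines(sample_events), end="")
    print(
        build_copy_statement(
            "mixpanel_events",
            "s3://example-bucket/mixpanel/2024-01-01/",
            "arn:aws:iam::123456789012:role/ExampleRedshiftCopyRole",
        )
    )
```

Scheduling this to run whenever new data arrives, and executing the statement against the cluster, is the part the presentation leaves to the reader.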

Building+your+Data+Project+on+AWS+-+Luke+Anderson.pdf

Building+your+Data+Project+on+AWS+-+Luke+Anderson.pdf

Evolving Your Big Data Use Cases from Batch to Real-Time - AWS May 2016 Webi...

Batch querying and reporting is no longer enough for many organizations. Reducing time to insight – the time it takes to turn data into actionable insights – is becoming increasingly important to remain competitive. That’s why organizations are quickly evolving their data applications to support a broader set of real-time analytic use cases.

In this webinar, we will review some of the common use cases for real-time analytics such as click-stream analysis, event data processing, and real-time analytics. We will show proven architectures for collecting, storing, and processing real-time data using a combination of AWS managed services, including Amazon Kinesis Streams, Amazon Kinesis Firehose, Amazon EMR, and AWS Lambda, as well open source tools, such as Apache Spark. Then, we will discuss common approaches and best practices to incorporate real-time analytics into your existing batch applications.

Learning Objectives:

• Understand how to incorporate real-time analytics into existing applications

• Best practices to combine batch with real-time data flows

• Learn common architectures and use cases for real-time analytics

Build Your First Big Data Application on AWS (ANT213-R1) - AWS re:Invent 2018

Do you want to increase your knowledge of AWS big data web services and launch your first big data application on the cloud? In this session, we walk you through simplifying big data processing as a data bus comprising ingest, store, process, and visualize. You will build a big data application using AWS managed services, including Amazon Athena, Amazon Kinesis, Amazon DynamoDB, and Amazon S3. Along the way, we review architecture design patterns for big data applications and give you access to a take-home lab so you can rebuild and customize the application yourself. To get the most from this session, bring your own laptop and have some familiarity with AWS services.

FSI301 An Architecture for Trade Capture and Regulatory Reporting

For many securities organizations, post-trade processing is expensive, cumbersome, and time-consuming. This is in part due to the massive volumes of data required for processing a trade and the limited agility of the technology many organizations rely on today. In order to create efficiencies and move faster, many Financial Services organizations are working with AWS to implement post-trade solutions built with AWS’ storage services (S3 and Glacier) and big data capabilities (Athena, EMR, Redshift, and QuickSight ). In this session, AWS will walk through a trade capture and regulatory reporting solution that utilizes the aforementioned AWS services. We will also provide guidance around obtaining data-driven insights (from pixels to pictures), bolstering encryption with Amazon KMS, and maintaining transparency and control with Amazon CloudWatch and Amazon CloudTrail (which also helps meet SEC Rule 613 that requires the creation of comprehensive consolidated audit trails).

AWS Analytics Immersion Day - Build BI System from Scratch (Day1, Day2 Full V...

How to build Business Intelligence System from scratch on AWS (Day1, Day2)

------------------------------------------------------------------------------------------

2020-03-18(수)~19(목) 2일 동안 온라인으로 진행한 Online AWS Analytics Immersion Day 전체 발표 자료 입니다.

BI(Business Intelligence) 시스템을 설계하는 과정에서 AWS Analytics 서비스들을 어떻게 활용할 수 있는지 설명 드리고자 만든 자료 입니다.

Target Audience

-------------------

Online Analytics Immersion Day는 다음과 같은 고객을 대상으로 진행됩니다.

- AWS Analytics Services (ex. Kinesis, Athena, Redshift, EMR, etc)의 기본 개념을 알고 있지만, 이러한 서비스 활용 방법 및 데이터 분석 시스템 구축 과정이 궁금하신 분

- 데이터 분석 시스템을 구축한 경험은 있지만, 자신이 만든 시스템을 아키텍처 관점에서

어떻게 평가하고 확인할 수 있는지 궁금하신 분

在 Amazon Web Services 實現大數據應用-電子商務的案例分享

How to use big data to improve a EC platform is a hot topic. In this session, we will discuss some big data case studies in retail and EC, and introduce how to create a recommendation service with Amazon Machine Learning.

AWS Data Lake: data analysis @ scale

AWS Data Lake: data analysis @ scale

AWS Webinar Series 2018

Giorgio Nobile, AWS Solutions Architect

AWS Big Data Platform

This overview presentation discusses big data challenges and provides an overview of the AWS Big Data Platform by covering:

- How AWS customers leverage the platform to manage massive volumes of data from a variety of sources while containing costs.

- Reference architectures for popular use cases, including, connected devices (IoT), log streaming, real-time intelligence, and analytics.

- The AWS big data portfolio of services, including, Amazon S3, Kinesis, DynamoDB, Elastic MapReduce (EMR), and Redshift.

- The latest relational database engine, Amazon Aurora— a MySQL-compatible, highly-available relational database engine, which provides up to five times better performance than MySQL at one-tenth the cost of a commercial database.

Created by: Rahul Pathak,

Sr. Manager of Software Development

Building your First Big Data Application on AWS

Find out more about how to build your Big Data Applications on AWS

Modernise your Data Warehouse with Amazon Redshift and Amazon Redshift Spectrum

We will walk through how to migrate and modernise your legacy data warehouse, moving from an on-premises server or application, to the cloud. You will learn how to easily migrate your data by leveraging serverless ETL, data cataloging as well as the techniques needed to successfully modernise your data warehouse, reduce costs, and increase performance and scalability.

Speaker: Paul Macey, Specialist Solutions Architect, AWS

Building a data warehouse with AWS Redshift, Matillion and Yellowfin

- 1. Building a Data Warehouse on AWS: Amazon S3; Amazon Redshift; Collect, Process, Analyze, Store; Data; Answers; Visualize. @Lynn Langit

- 2. AWS Marketplace: Enterprise software store for business users who need simplified procurement • 2000+ product listings to browse, test and buy software • 1-click deployment to launch, in multiple regions around the world • Pay-as-you-go pricing to use on demand • Advanced Analytics, Data Enablement, Business Intelligence

- 3. Building a Data Warehouse on AWS: Move data into Redshift from S3 for analysis. Amazon S3; Amazon Redshift; AWS Marketplace Partners: Matillion; Visualize: Yellowfin; Collect, Process, Analyze, Store; Data; Answers

- 4. Setup

- 5. Our Scenario and Source Files. File types: text (.csv), compressed (.gz). File categories: details/events (flights, weather), metadata (airports, carriers). “In this scenario we will use Matillion ETL for Redshift to prepare two separate data sources ready for analysis. The sample data is US airport flight information from 1995 -> 2008. Every flight to or from a US airport (and whether it left on time or not) is included. The second data set is weather data, taken from NOAA, including the daily weather readings for each US Airport.”

- 6. Loading data from S3 into Redshift

- 7. Using Matillion ETL for Redshift • Create Instance (AMI/EC2) of Matillion/AWS Marketplace • Connect Matillion to Redshift

- 9. Table distribution styles: Key (same key to same location), All (all data on every node), Even (round-robin distribution). [Diagram: two nodes with two slices each (Node 1: Slices 1 and 2; Node 2: Slices 3 and 4), with keys key1 to key4 mapped to slices.]

- 10. Sort Keys • Single column: [ SORTKEY ( date ) ], for queries that use the first column (i.e. date) as the primary filter • Compound: [ COMPOUND SORTKEY ( date, region, country ) ], for queries that use the first column as the primary filter, then other columns • Interleaved: [ INTERLEAVED SORTKEY ( date, region, country ) ], for queries that filter on different columns

- 11. Time Series Data – Vacuum Operation. [Diagram: a sorted region and an unsorted region; steps shown: append in sort key order, sort unsorted region, merge.]
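The three distribution styles on slide 9 can be illustrated with a small simulation. This is a toy sketch, not Redshift's implementation: the function names, the `carrier` column, the sample rows and the use of `crc32` as the hash are all assumptions made for illustration. The two-node, four-slice layout follows the slide's diagram.

```python
from itertools import cycle
from zlib import crc32

# Layout from slide 9: two nodes, two slices each.
SLICES = [1, 2, 3, 4]
NODE_OF_SLICE = {1: 1, 2: 1, 3: 2, 4: 2}


def distribute_even(rows):
    """EVEN: deal rows out to slices in round-robin order."""
    placement = {s: [] for s in SLICES}
    for row, s in zip(rows, cycle(SLICES)):
        placement[s].append(row)
    return placement


def distribute_key(rows, key):
    """KEY: hash the distribution key so equal keys land on the same slice."""
    placement = {s: [] for s in SLICES}
    for row in rows:
        # crc32 is a stand-in hash (assumption); Redshift uses its own.
        h = crc32(str(row[key]).encode())
        placement[SLICES[h % len(SLICES)]].append(row)
    return placement


def distribute_all(rows):
    """ALL: every node holds a full copy of the table."""
    nodes = sorted(set(NODE_OF_SLICE.values()))
    return {n: list(rows) for n in nodes}


# Hypothetical sample rows, loosely modelled on the flights scenario.
carriers = ["AA", "DL", "UA", "AA", "DL", "AA", "WN", "AA"]
flights = [{"carrier": c, "flight": i} for i, c in enumerate(carriers)]

even = distribute_even(flights)
by_key = distribute_key(flights, "carrier")
everywhere = distribute_all(flights)

# Slices that ended up holding at least one "AA" row under KEY distribution.
aa_slices = {s for s, rs in by_key.items() for r in rs if r["carrier"] == "AA"}
```

With this sample, EVEN gives each slice the same number of rows, while KEY keeps all `AA` rows together at the cost of a possibly uneven spread, which is the trade-off the notes point to when they say throughput is best when data is evenly spread across slices.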

Editor's Notes

- Collect logs in an Amazon Kinesis stream; launch Amazon EMR and Amazon Redshift clusters; use Hive on Amazon EMR to access data in an Amazon Kinesis stream; use Hive on Amazon EMR to transform, partition and output data to Amazon S3; load data in parallel into Amazon Redshift from Amazon S3. Bonus: use Hive and Amazon DynamoDB to enable Amazon Kinesis “checkpointing”.

- Big Data software on AWS Marketplace: http://amzn.to/1va4KQ6

- Public data from -- s3://demo-data-sets-west/airline/data/

- http://docs.aws.amazon.com/general/latest/gr/rande.html http://docs.aws.amazon.com/redshift/latest/dg/r_STV_SLICES.html

- Redshift is a distributed system. A cluster contains a leader node and compute nodes. A compute node contains slices (one per core) that contain data. Data is distributed among slices in 3 ways: Even (rows distributed in round-robin fashion; the default), Key (rows distributed based on a distribution key, a hash of a defined column), and All (a full copy of the rows placed on every node). Queries run on all slices in parallel. Optimal query throughput can be achieved when data is evenly spread across slices.

- When you append data, it’s appended to the unsorted region in sorted order. When you vacuum, the unsorted region is sorted first, then merged into the sorted regions. This can be really expensive. If you append data only in the order of your sort keys, you’ll never have to vacuum. Mycroft does this automatically.
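The vacuum behaviour described in the last note can be sketched with a toy model. This is a minimal illustration under stated assumptions, not Redshift internals: the `Table` class, its method names and the rule for when an in-order batch extends the sorted region are all hypothetical simplifications, and the dates are made-up sample values.

```python
import heapq


class Table:
    """Toy model of a table with a sorted region and an unsorted region."""

    def __init__(self):
        self.sorted_region = []
        self.unsorted_region = []

    def append(self, batch):
        """Add a batch of sort key values.

        Simplifying assumption: a batch that continues the existing sort
        order (and arrives while nothing is waiting in the unsorted region)
        extends the sorted region; anything else lands in the unsorted region.
        """
        if not batch:
            return
        batch = sorted(batch)
        tail = self.sorted_region[-1] if self.sorted_region else None
        if not self.unsorted_region and (tail is None or batch[0] >= tail):
            self.sorted_region.extend(batch)
        else:
            self.unsorted_region.extend(batch)

    def needs_vacuum(self):
        return bool(self.unsorted_region)

    def vacuum(self):
        """Sort the unsorted region, then merge it into the sorted region."""
        self.unsorted_region.sort()
        self.sorted_region = list(
            heapq.merge(self.sorted_region, self.unsorted_region)
        )
        self.unsorted_region = []


t = Table()
t.append(["2008-01-01", "2008-01-02"])
t.append(["2008-01-03"])            # arrives in sort key order
in_order_needs_vacuum = t.needs_vacuum()

t.append(["2007-12-31"])            # late-arriving row, out of order
late_needs_vacuum = t.needs_vacuum()

t.vacuum()
after_vacuum = list(t.sorted_region)
```

In this model, time-series loads that always arrive in sort key order never populate the unsorted region, which is the case the note describes as never needing a vacuum; a single late row is enough to require the sort-and-merge step.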