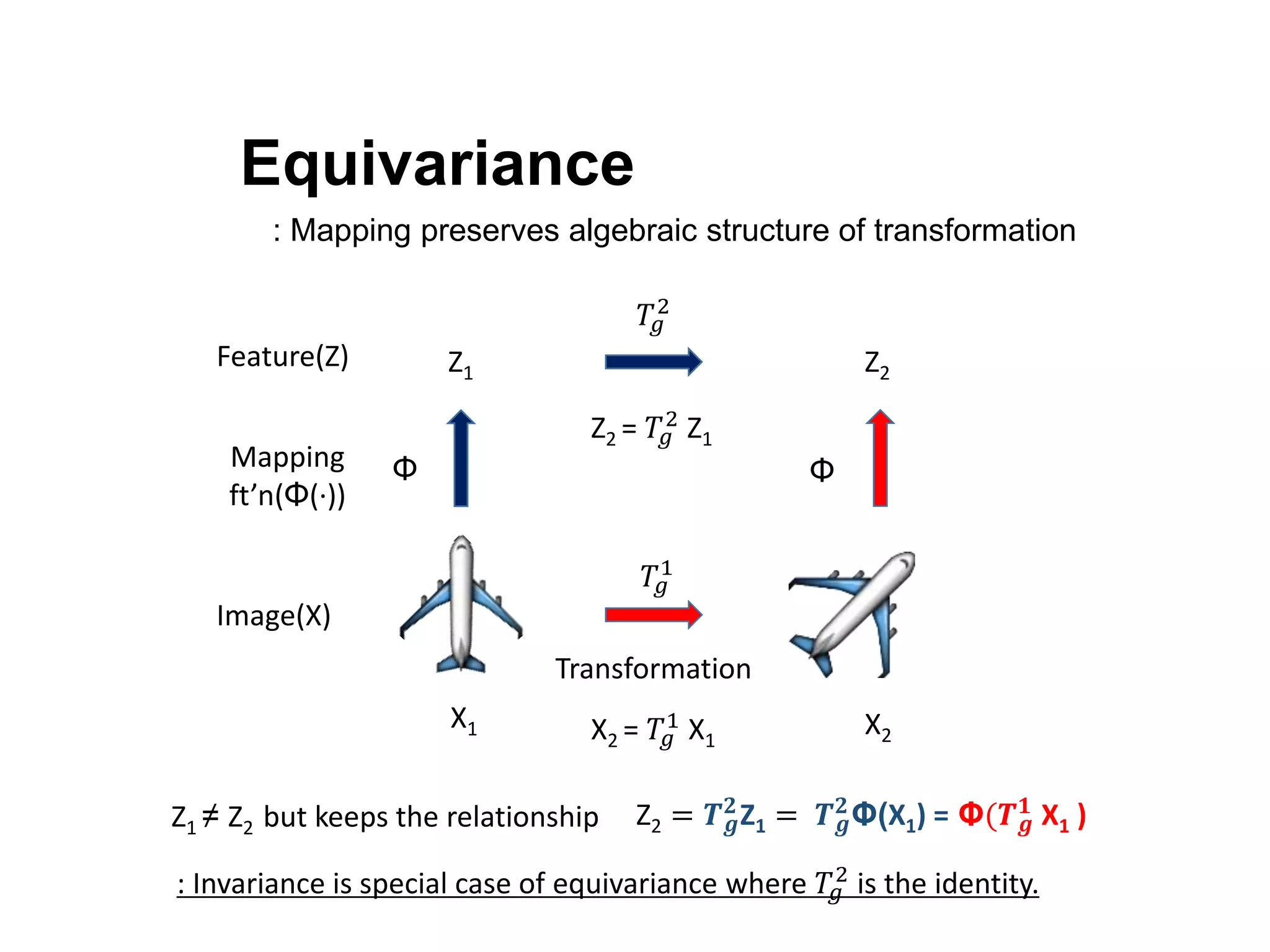

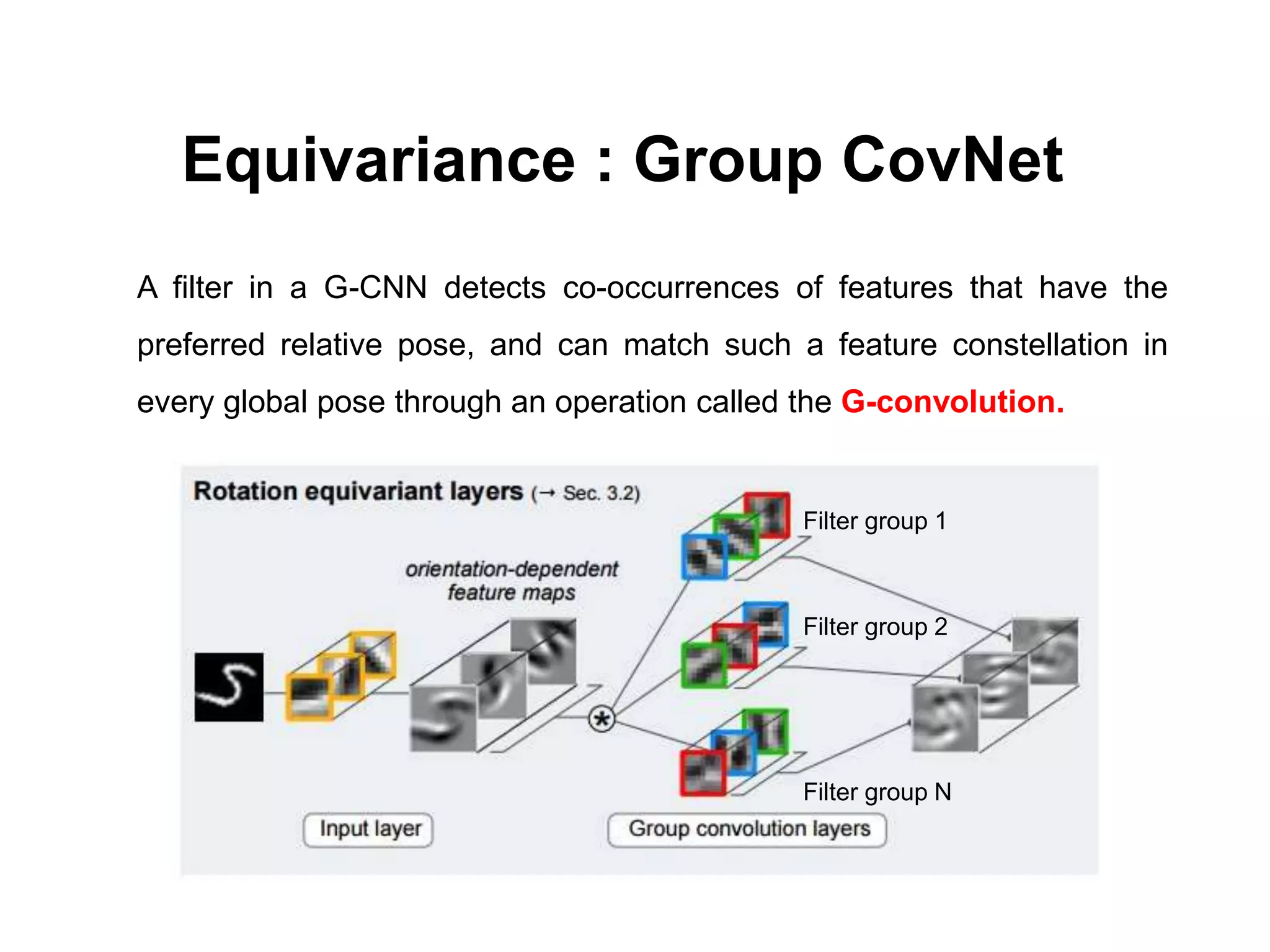

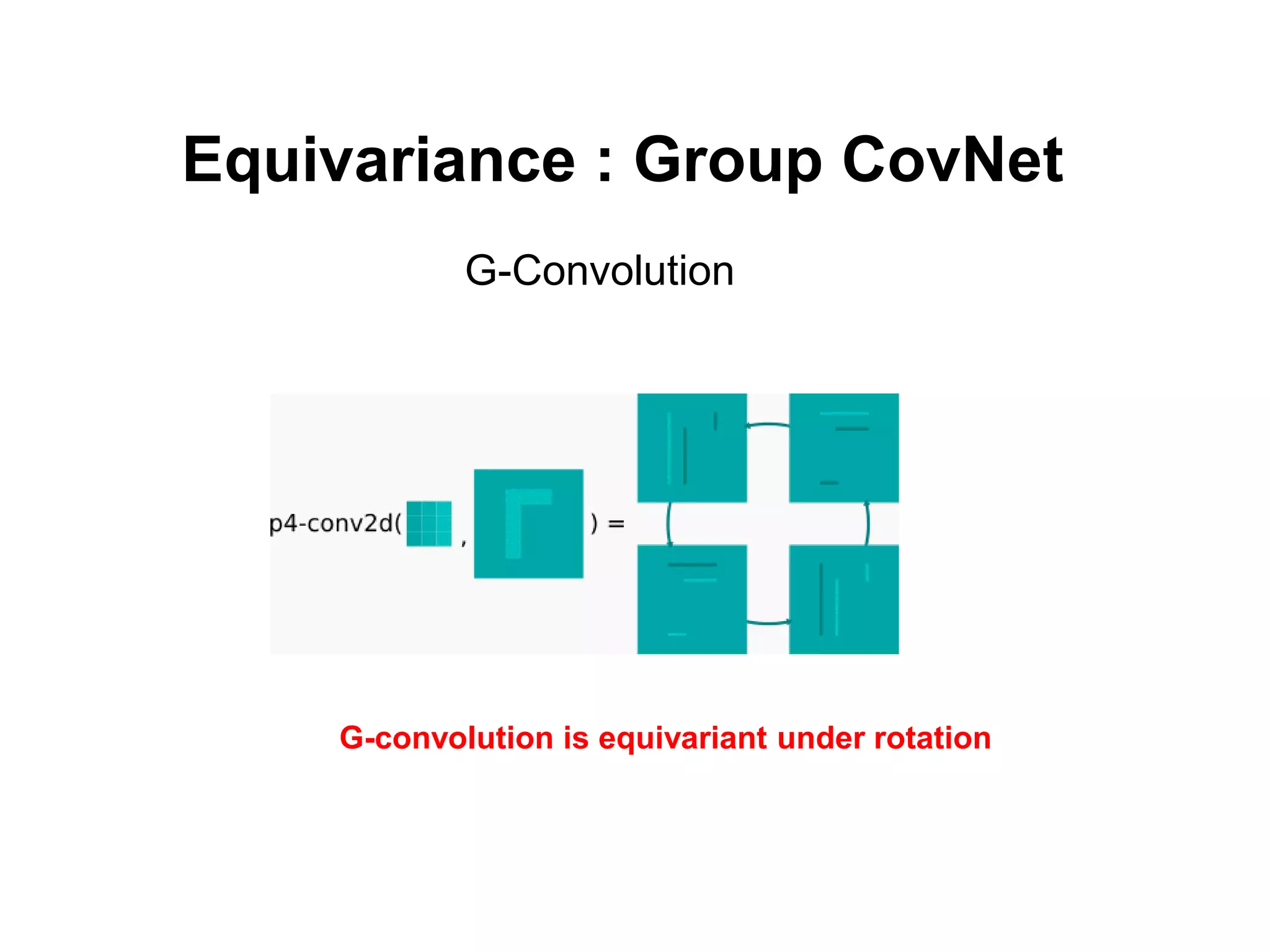

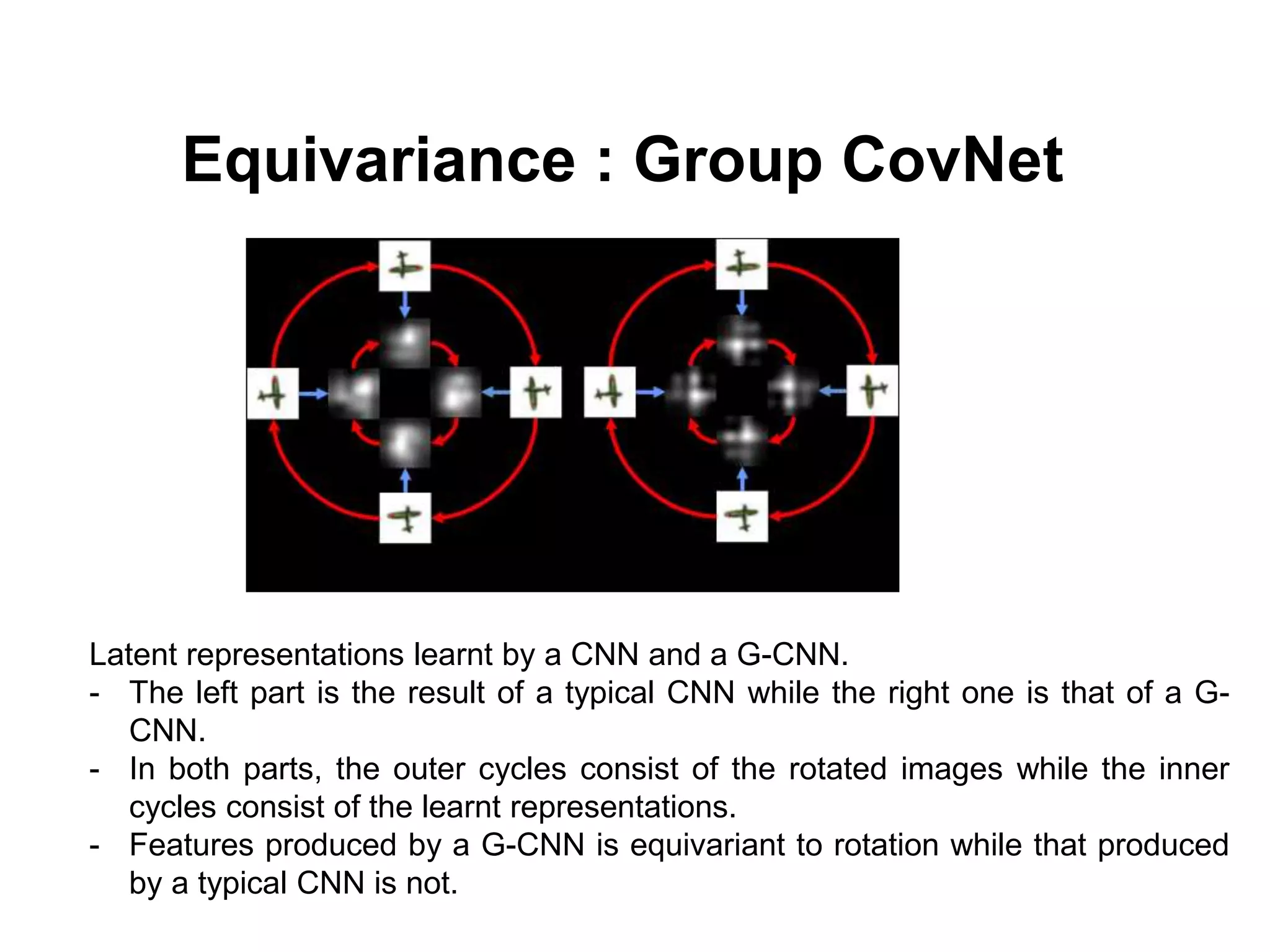

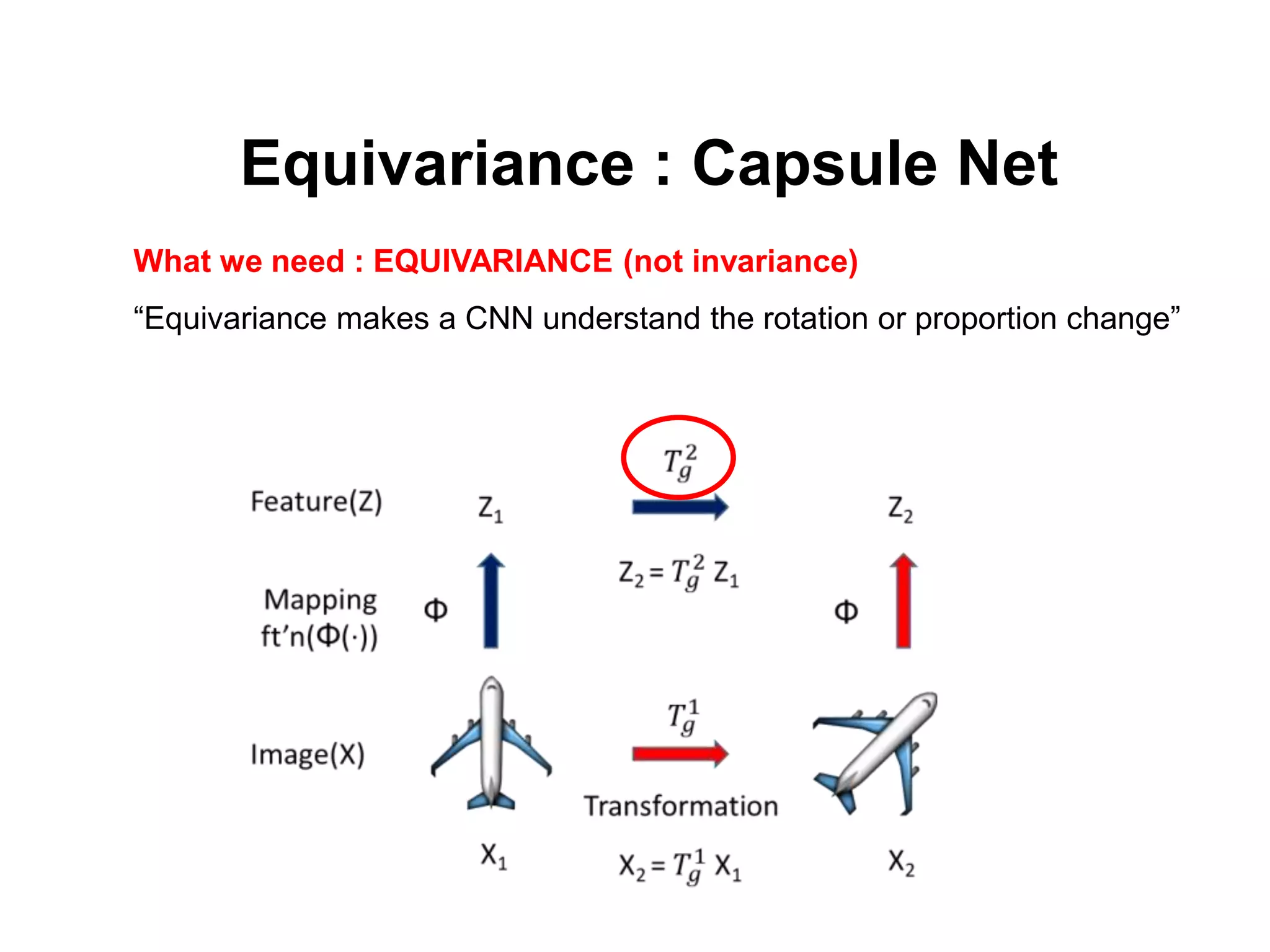

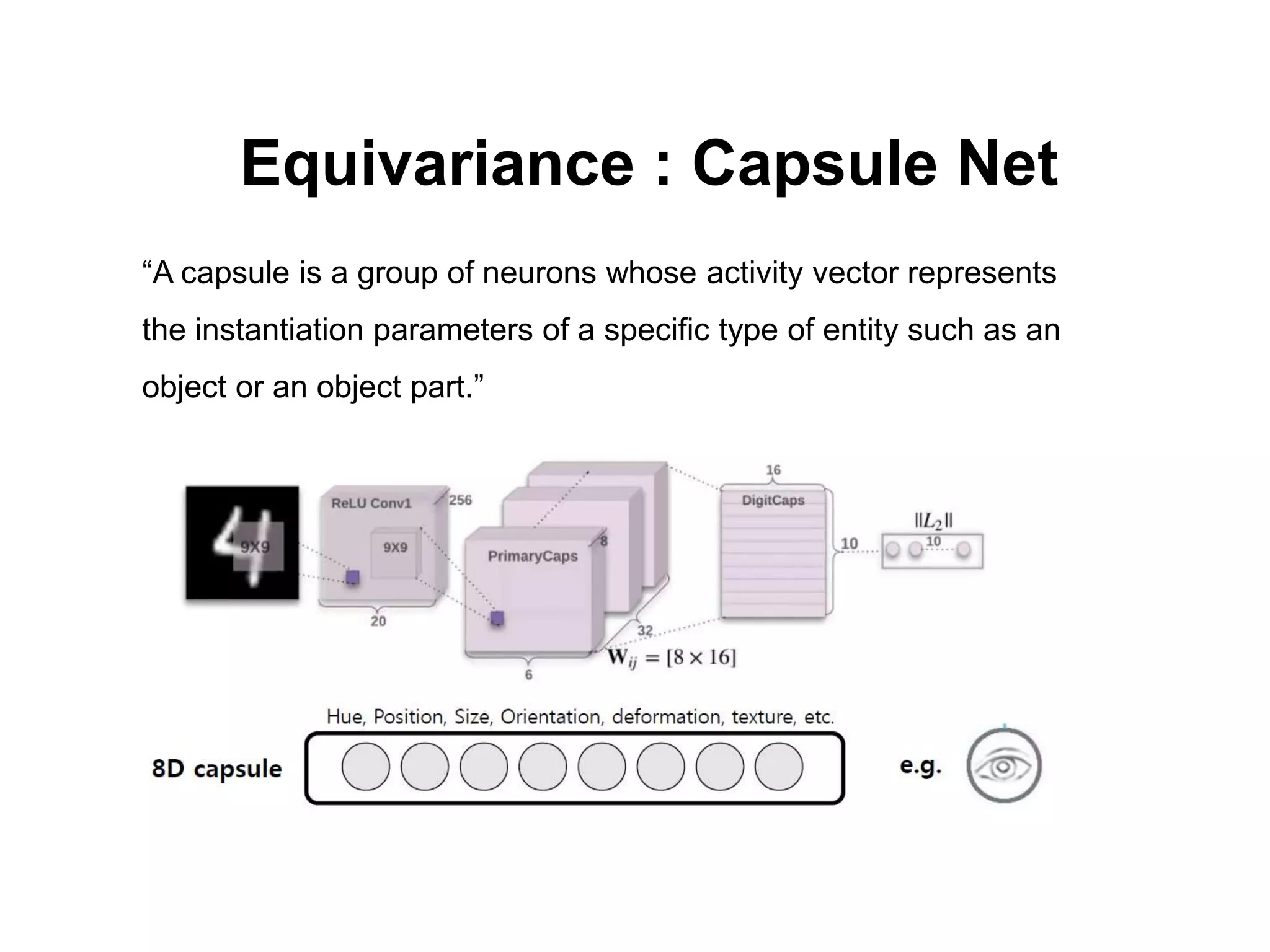

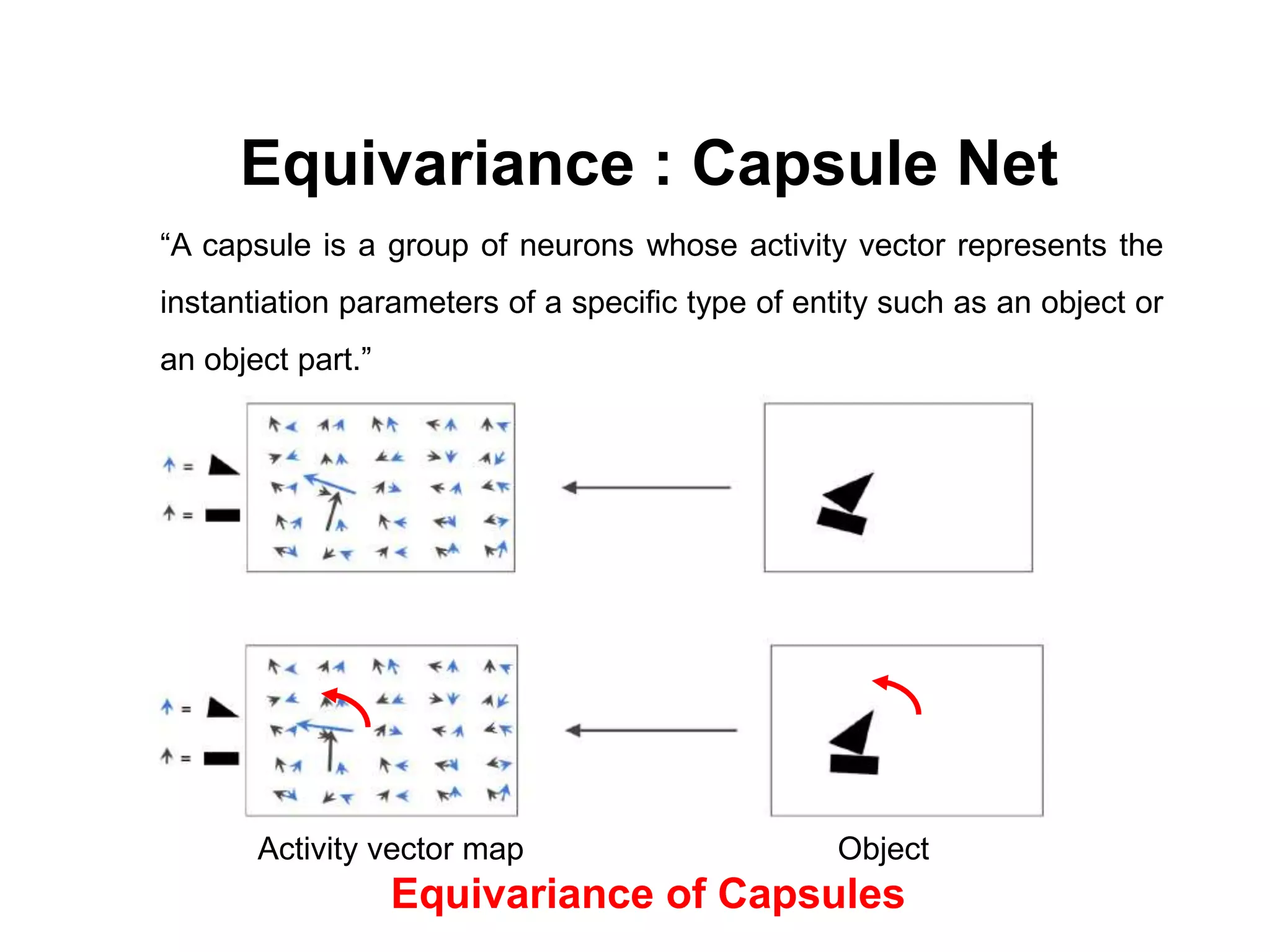

The document discusses a limitation of conventional convolutional neural networks (CNNs): they are equivariant under translation but not under rotation. Shifting the input shifts the feature maps accordingly, but rotating the input does not simply rotate them. It then introduces group equivariant convolutional networks (G-CNNs), which convolve the input with a set of transformed copies of each filter (for example, its rotations). A transformed input then yields correspondingly transformed feature maps, which makes it easier to recognize the same feature in different orientations. The document also explores capsules, groups of neurons whose outputs represent the instantiation parameters of an object, such as its pose, and how they relate to equivariance.
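The contrast between a plain convolution and a group convolution can be checked numerically. The sketch below is a minimal illustration that assumes the simplest rotation group, p4 (rotations by multiples of 90°), a single-channel square input, and "valid" cross-correlation. The helper names `correlate2d_valid` and `p4_lifting_conv` are hypothetical and are not taken from the document or from any library. The group layer correlates the input with all four rotated copies of one filter. Rotating the input then rotates every output map and cyclically shifts the orientation axis by one step, which a single unrotated filter does not achieve.

```python
import numpy as np

def correlate2d_valid(x, w):
    """Plain 'valid' cross-correlation, as in a standard CNN layer (hypothetical helper)."""
    k0, k1 = w.shape
    out = np.empty((x.shape[0] - k0 + 1, x.shape[1] - k1 + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(x[i:i + k0, j:j + k1] * w)
    return out

def p4_lifting_conv(x, w):
    """Correlate x with the 4 rotated copies of w (0, 90, 180, 270 degrees).

    Hypothetical helper. Returns shape (4, H, W): one feature map per filter rotation.
    """
    return np.stack([correlate2d_valid(x, np.rot90(w, r)) for r in range(4)])

rng = np.random.default_rng(0)
x = rng.standard_normal((8, 8))  # toy square input image
w = rng.standard_normal((3, 3))  # toy 3x3 filter with no rotational symmetry

# 1) A plain convolution: rotating the input does NOT just rotate the output.
plain = correlate2d_valid(x, w)
plain_rot = correlate2d_valid(np.rot90(x), w)
print("plain conv equivariant:", np.allclose(plain_rot, np.rot90(plain)))

# 2) The group convolution: rotating the input rotates each output map
#    and shifts the orientation axis cyclically by one position.
g = p4_lifting_conv(x, w)
g_rot = p4_lifting_conv(np.rot90(x), w)
expected = np.rot90(np.roll(g, 1, axis=0), axes=(1, 2))
print("group conv equivariant:", np.allclose(g_rot, expected))

# 3) Max-pooling over the orientation axis gives a single map that
#    simply rotates along with the input.
pooled_ok = np.allclose(g_rot.max(axis=0), np.rot90(g.max(axis=0)))
print("orientation-pooled map rotates with input:", pooled_ok)
```

For a generic random filter, the first check should report that the plain convolution is not equivariant, while the second and third checks hold exactly. The check is exact here because rotating both the image and the filter by 90° rotates the valid correlation output. Real G-CNN layers also add channels, biases, and further group-convolution layers on top of this lifting step.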