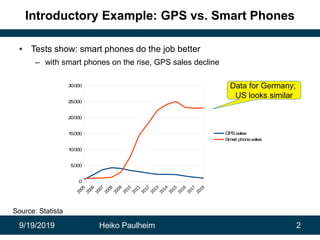

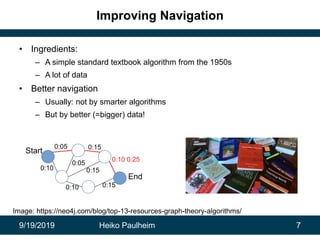

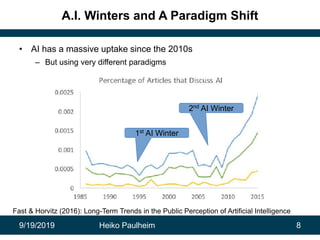

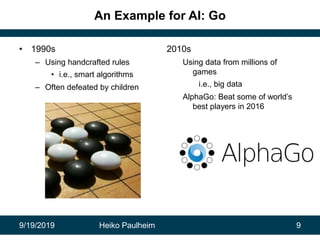

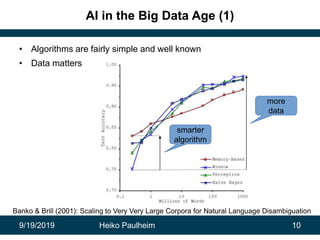

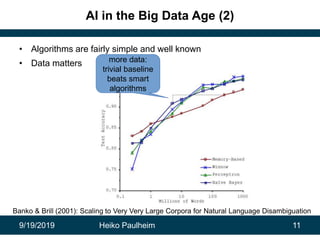

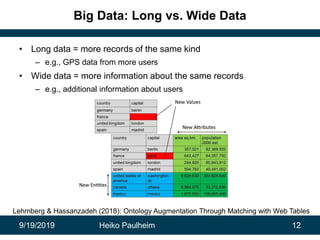

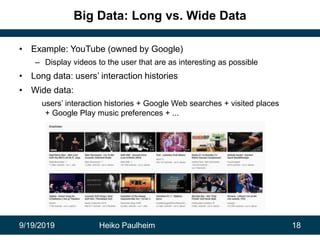

This document discusses how modern artificial intelligence relies on massive amounts of data rather than on complex algorithms. It gives examples of how companies such as Google, Facebook, and WeChat have improved their services by using "long" datasets gathered from many users and by merging different types of "wide" data. The author argues that because the algorithms are typically well known, it is ownership of data that gives companies market power: artificial intelligence systems depend on data being available.