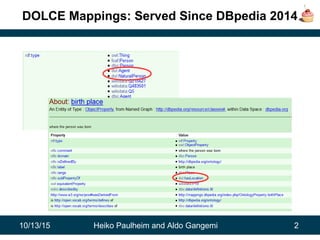

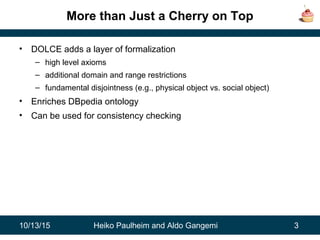

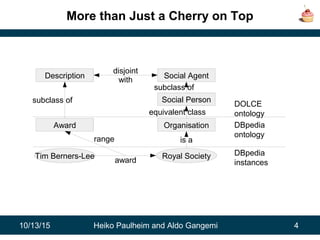

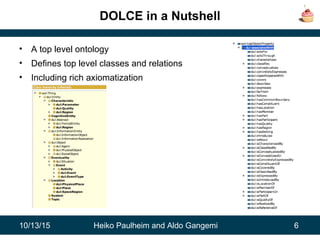

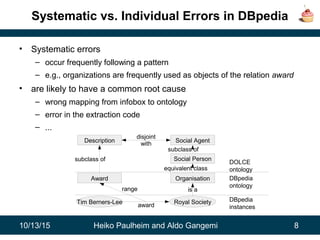

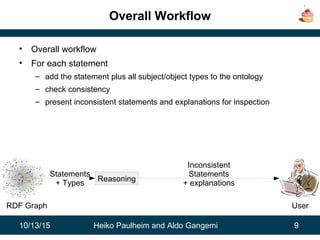

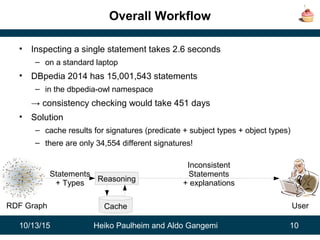

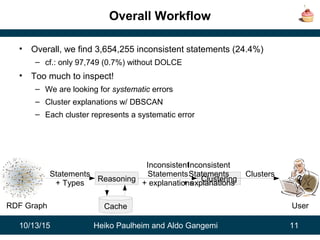

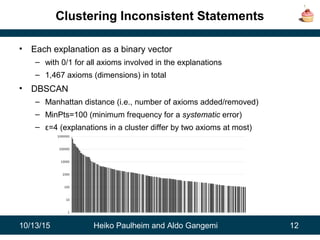

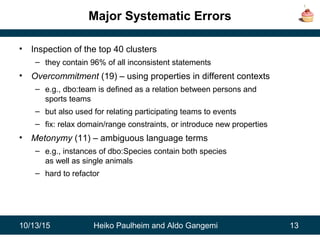

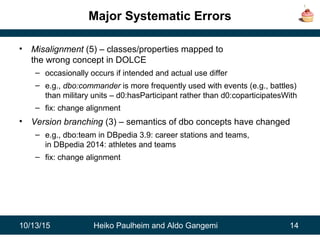

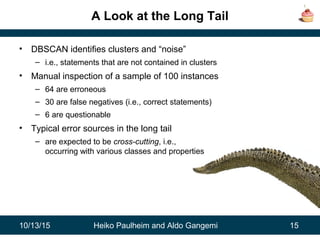

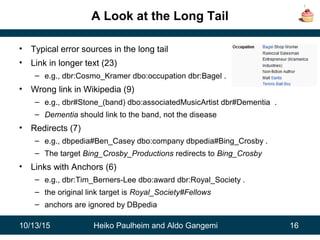

The document discusses integrating the DOLCE ontology with DBpedia, both to support consistency checking of DBpedia and to enrich the DBpedia ontology. It highlights systematic errors found in DBpedia through clustering techniques, identifying prevalent issues such as overcommitment and misaligned classes. The findings suggest that DOLCE substantially helps in pinpointing inconsistencies and could lead to improvements in future DBpedia versions.
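
To make the idea of consistency checking against a top-level ontology concrete, here is a minimal sketch. It is not the method used in the document: it assumes that each class has been mapped to one top-level category, that some categories are declared disjoint, and that a statement is suspicious when the object's type falls into a category disjoint from the one expected by the property's range. All identifiers (`ex:location`, `PhysicalObject`, `Event`, `find_range_violations`) are hypothetical toy names, not actual DOLCE or DBpedia terms.

```python
from typing import Dict, FrozenSet, List, Set, Tuple

Triple = Tuple[str, str, str]


def find_range_violations(
    triples: List[Triple],
    entity_types: Dict[str, Set[str]],
    property_range: Dict[str, str],
    top_level: Dict[str, str],
    disjoint: Set[FrozenSet[str]],
) -> List[Tuple[str, str, str, str]]:
    """Return (subject, property, object, offending_type) for each statement
    whose object type maps to a category disjoint from the range's category."""
    violations = []
    for s, p, o in triples:
        expected_class = property_range.get(p)
        if expected_class is None:
            continue  # no declared range, nothing to check
        expected_top = top_level.get(expected_class)
        for actual_class in sorted(entity_types.get(o, ())):
            actual_top = top_level.get(actual_class)
            if frozenset((expected_top, actual_top)) in disjoint:
                violations.append((s, p, o, actual_class))
    return violations


# Toy data (assumed for illustration only).
triples = [
    ("ex:A", "ex:location", "ex:Paris"),
    ("ex:B", "ex:location", "ex:Woodstock"),
]
entity_types = {"ex:Paris": {"ex:City"}, "ex:Woodstock": {"ex:Festival"}}
property_range = {"ex:location": "ex:Place"}
top_level = {
    "ex:City": "PhysicalObject",
    "ex:Place": "PhysicalObject",
    "ex:Festival": "Event",
}
disjoint = {frozenset({"PhysicalObject", "Event"})}

violations = find_range_violations(
    triples, entity_types, property_range, top_level, disjoint
)
print(violations)
```

In this toy setup only the second statement is flagged, because its object is typed as a festival while the property expects a place. Grouping such flagged statements by property and offending type would be one simple way to surface systematic errors rather than isolated ones.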