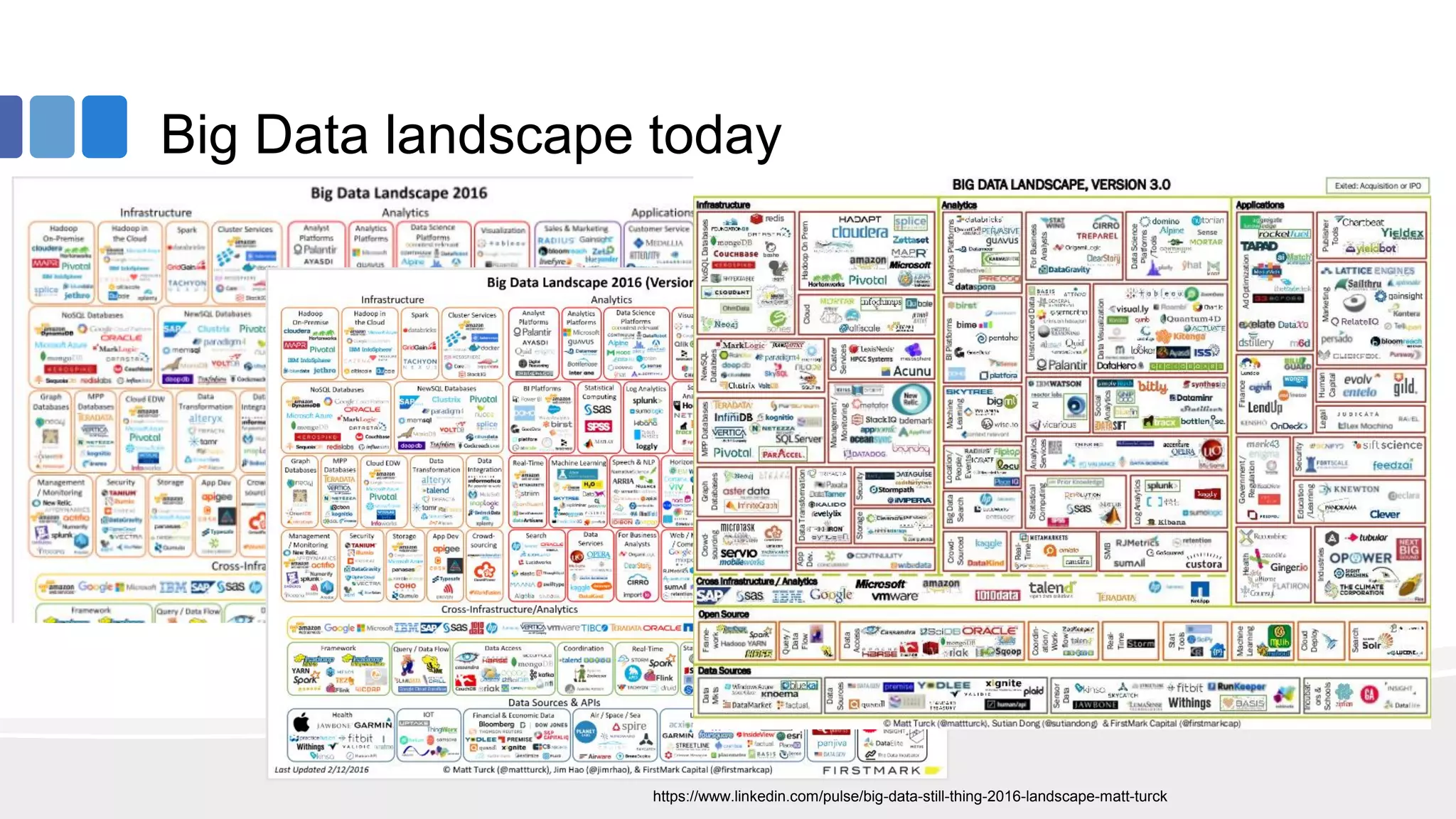

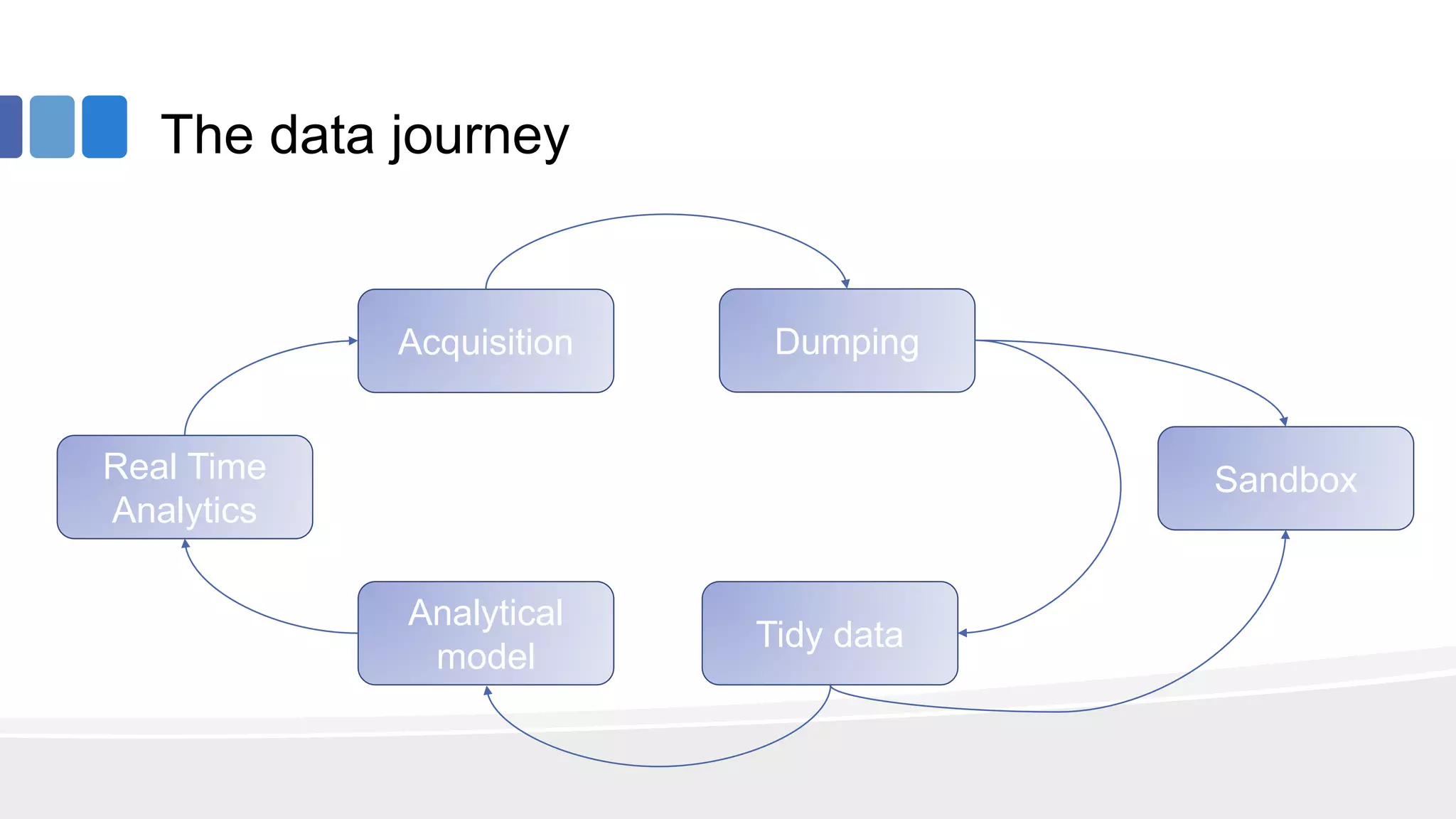

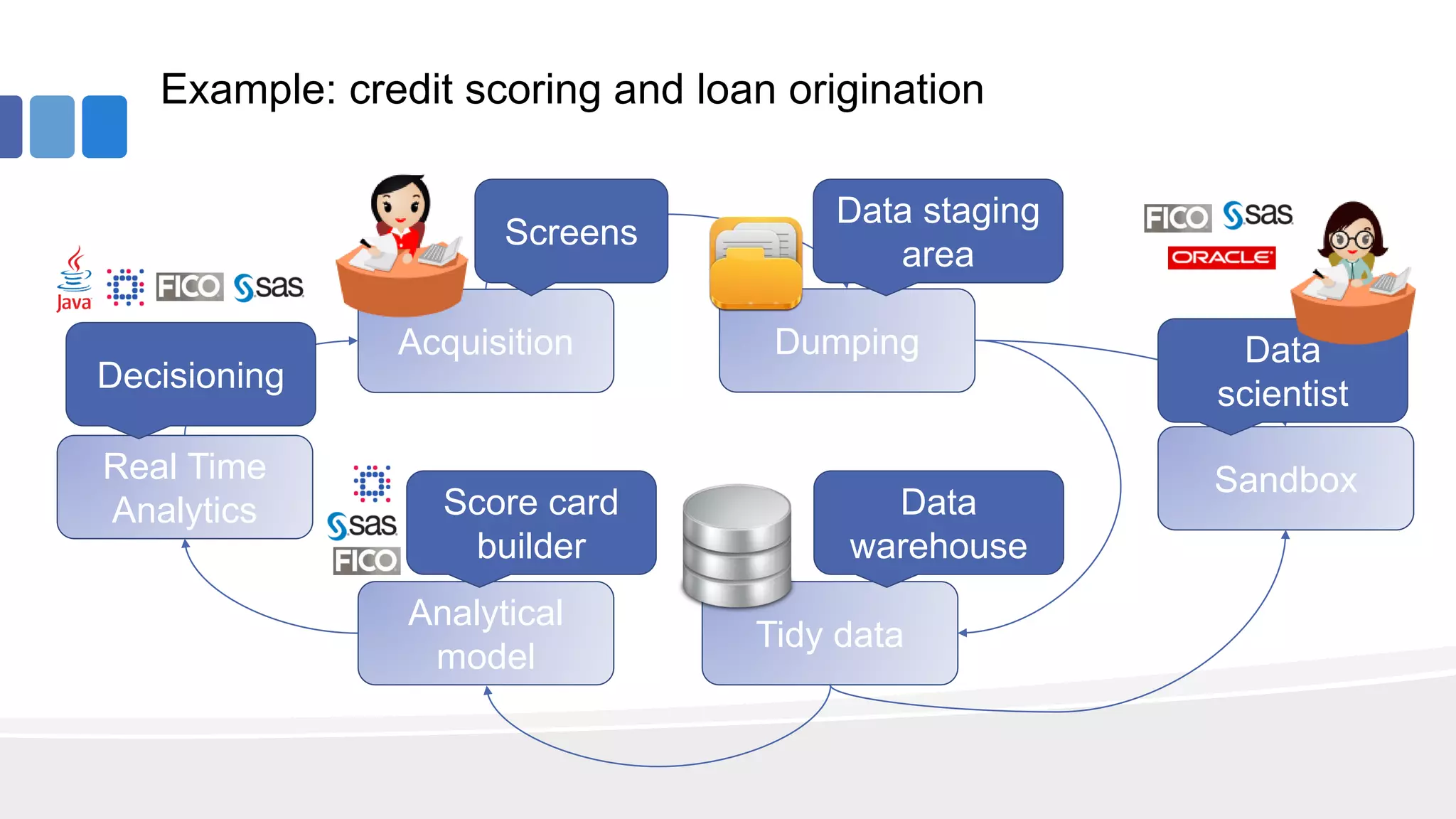

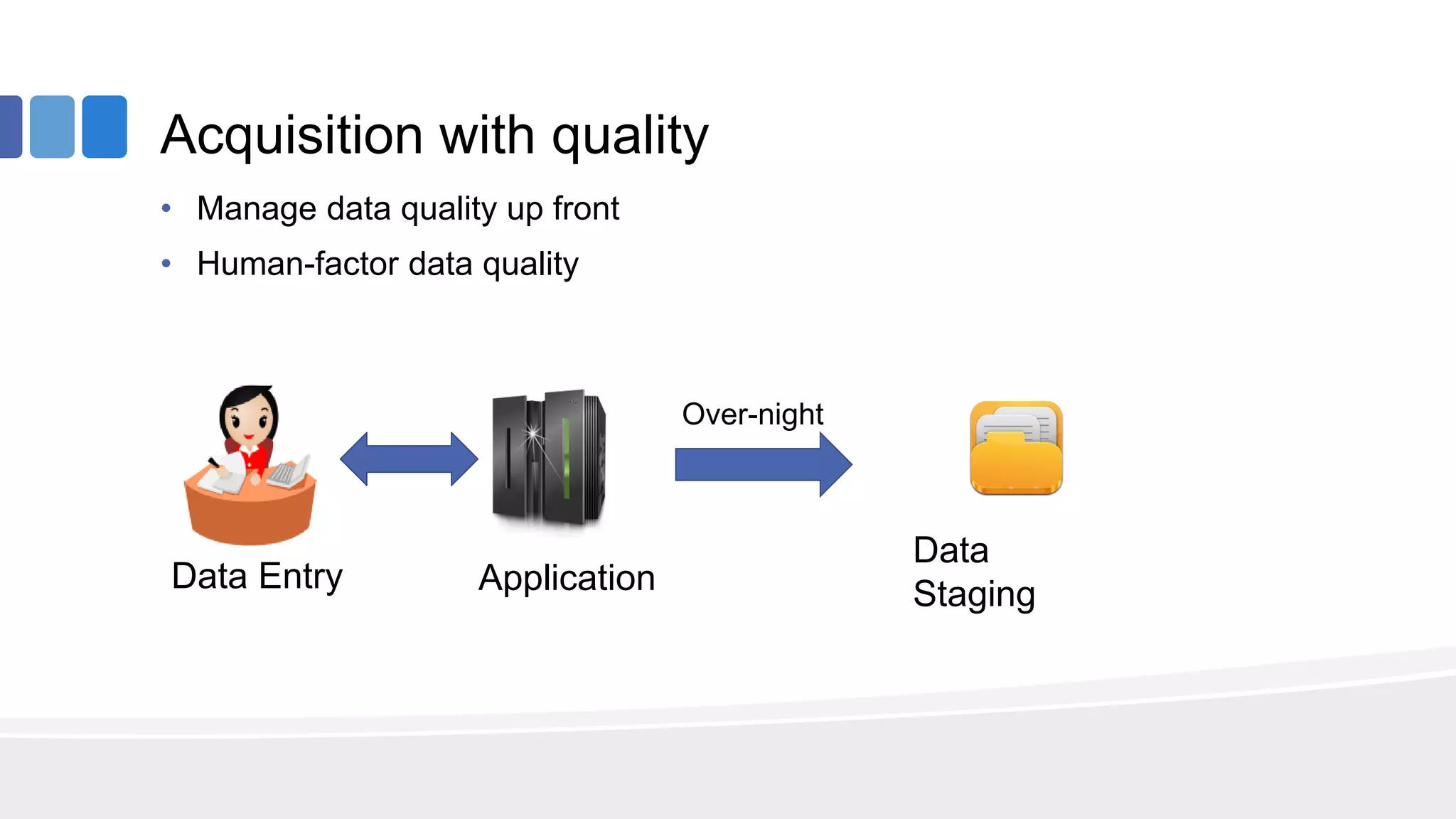

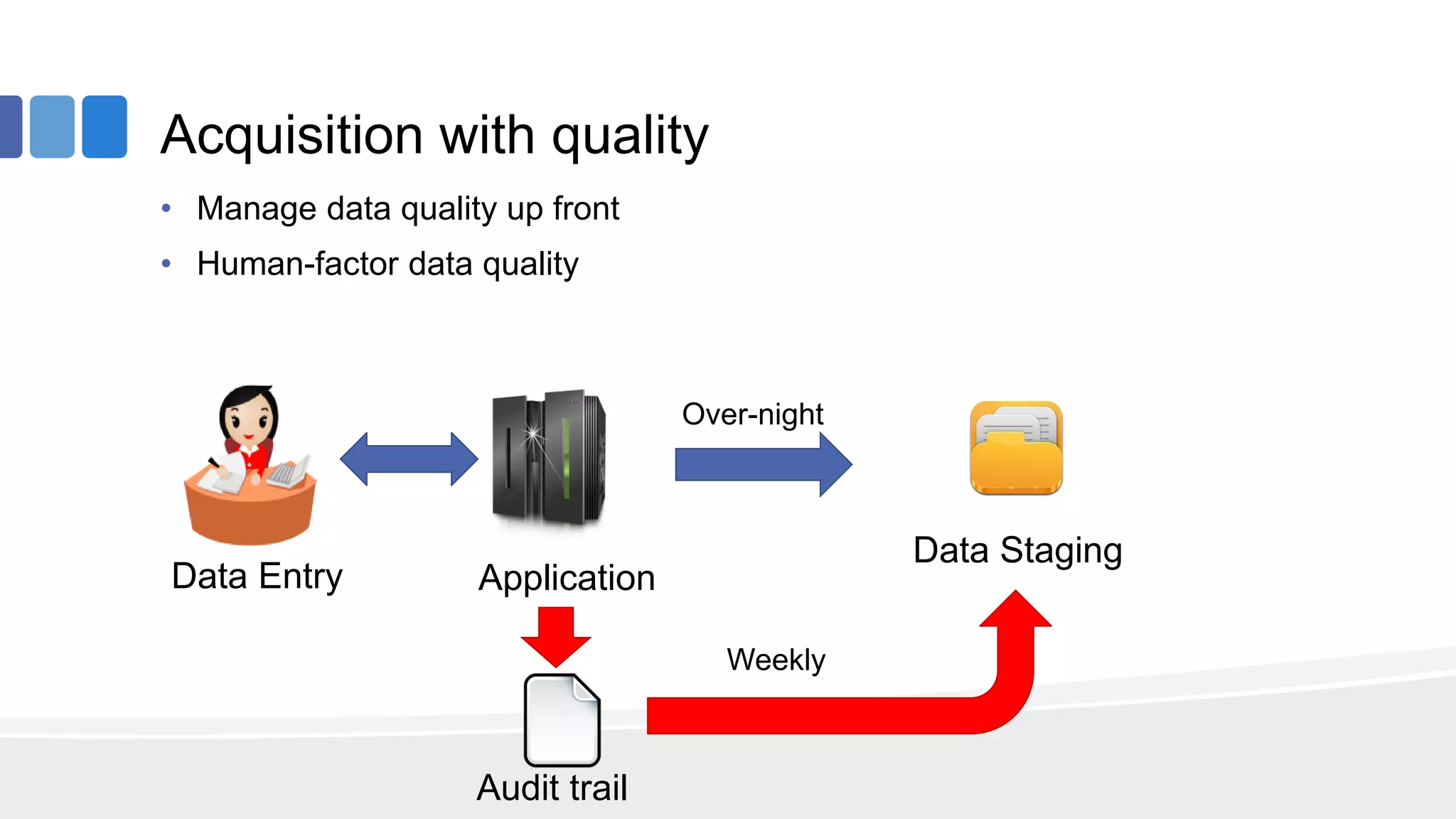

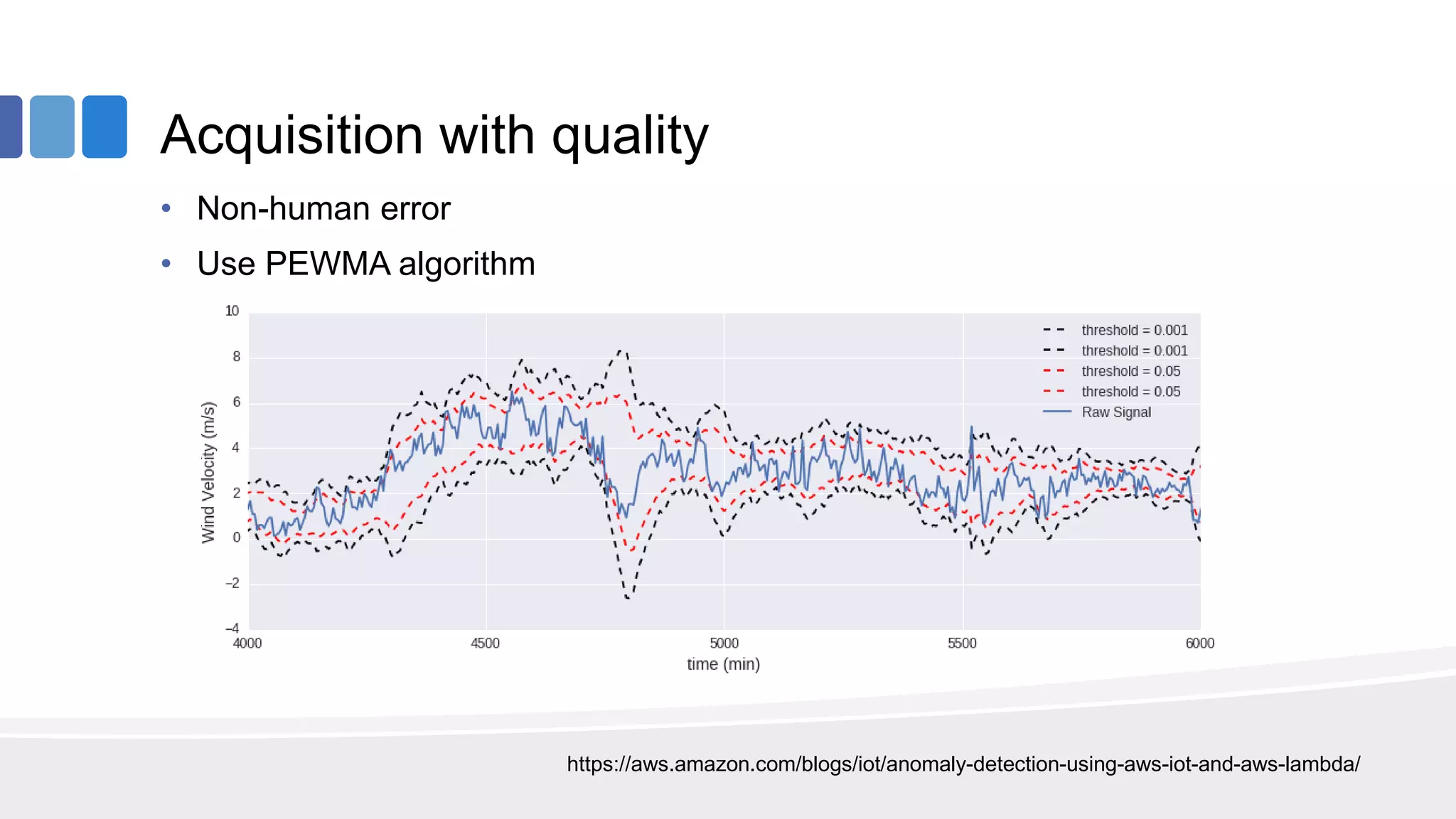

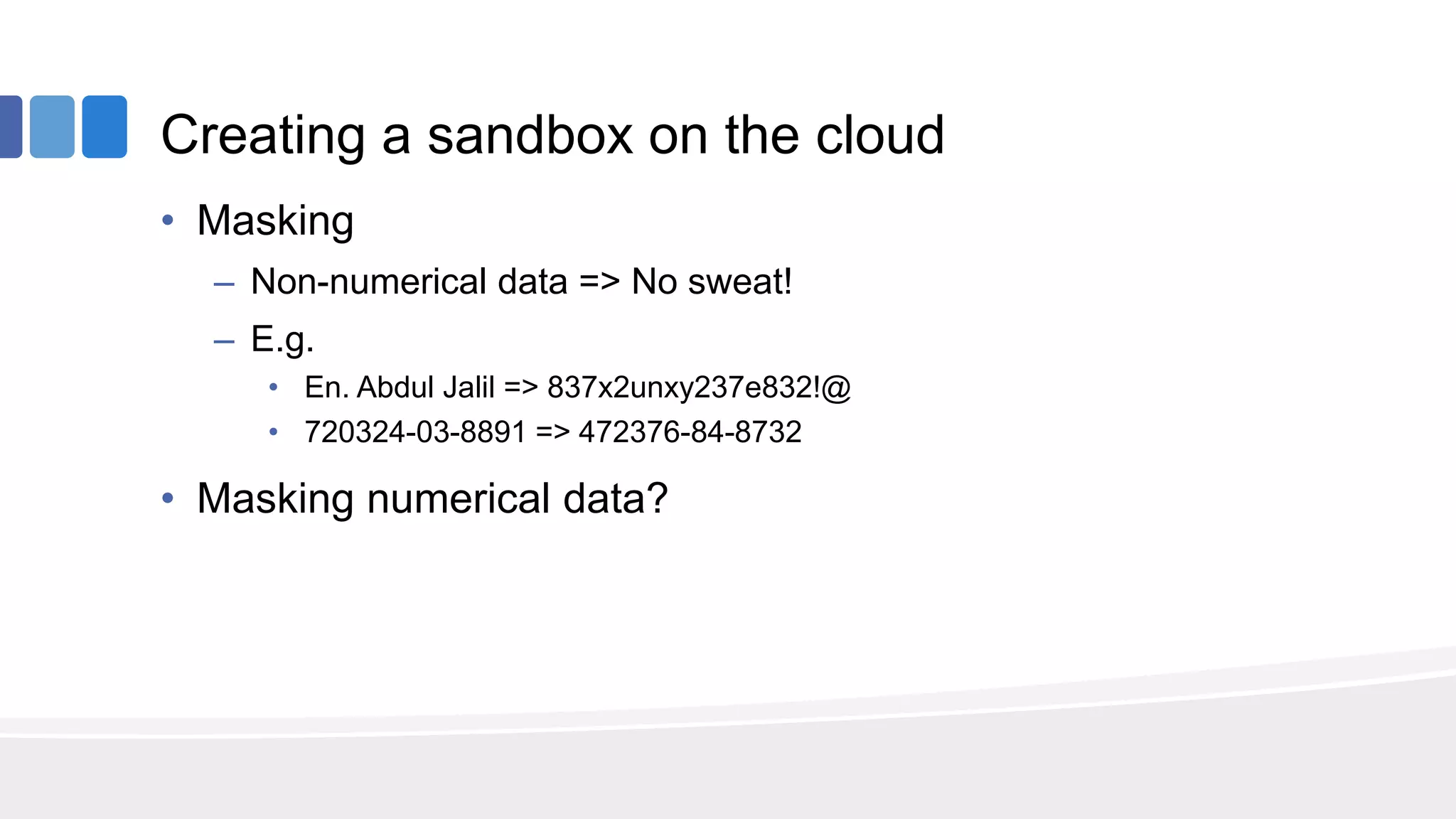

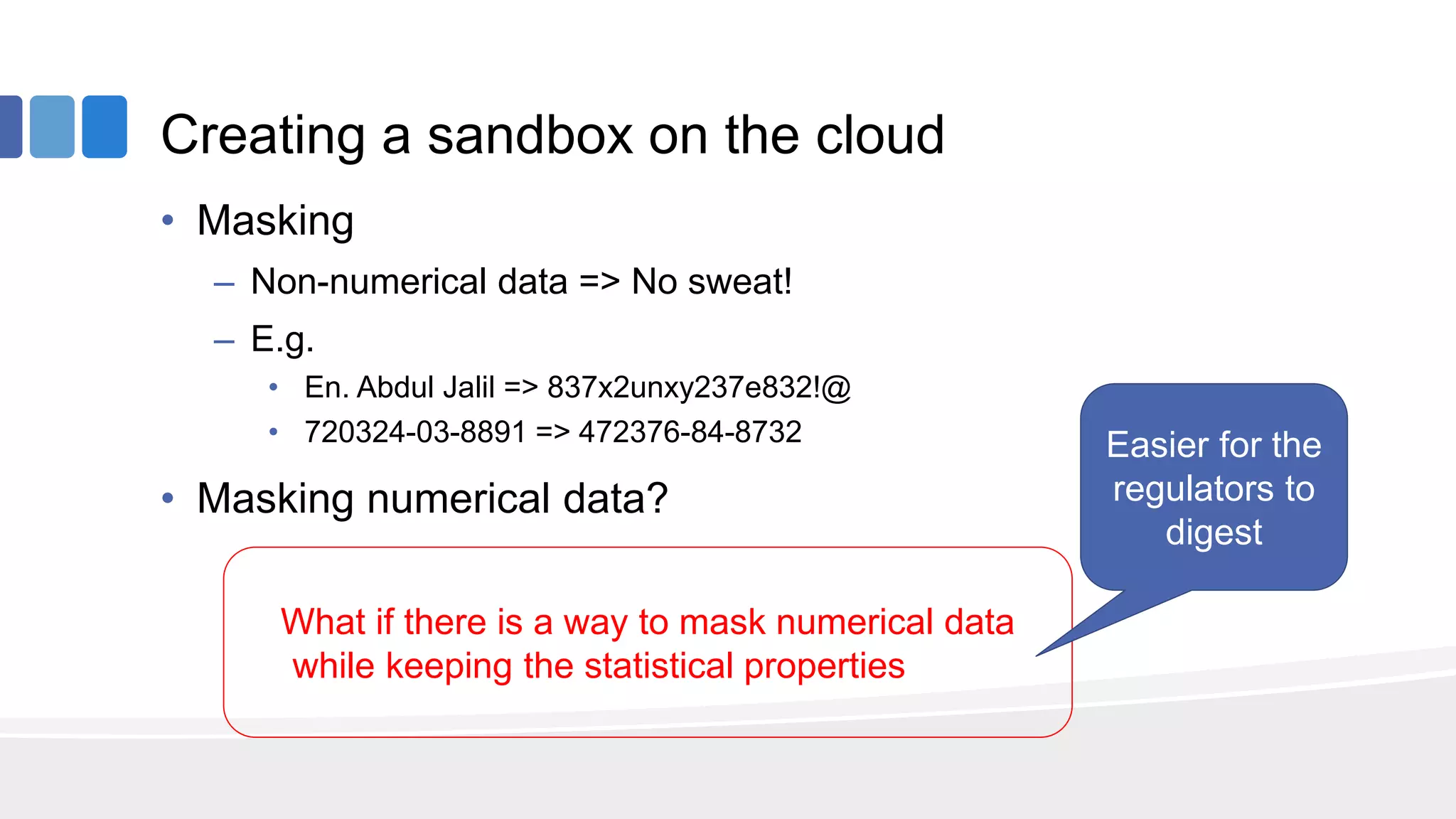

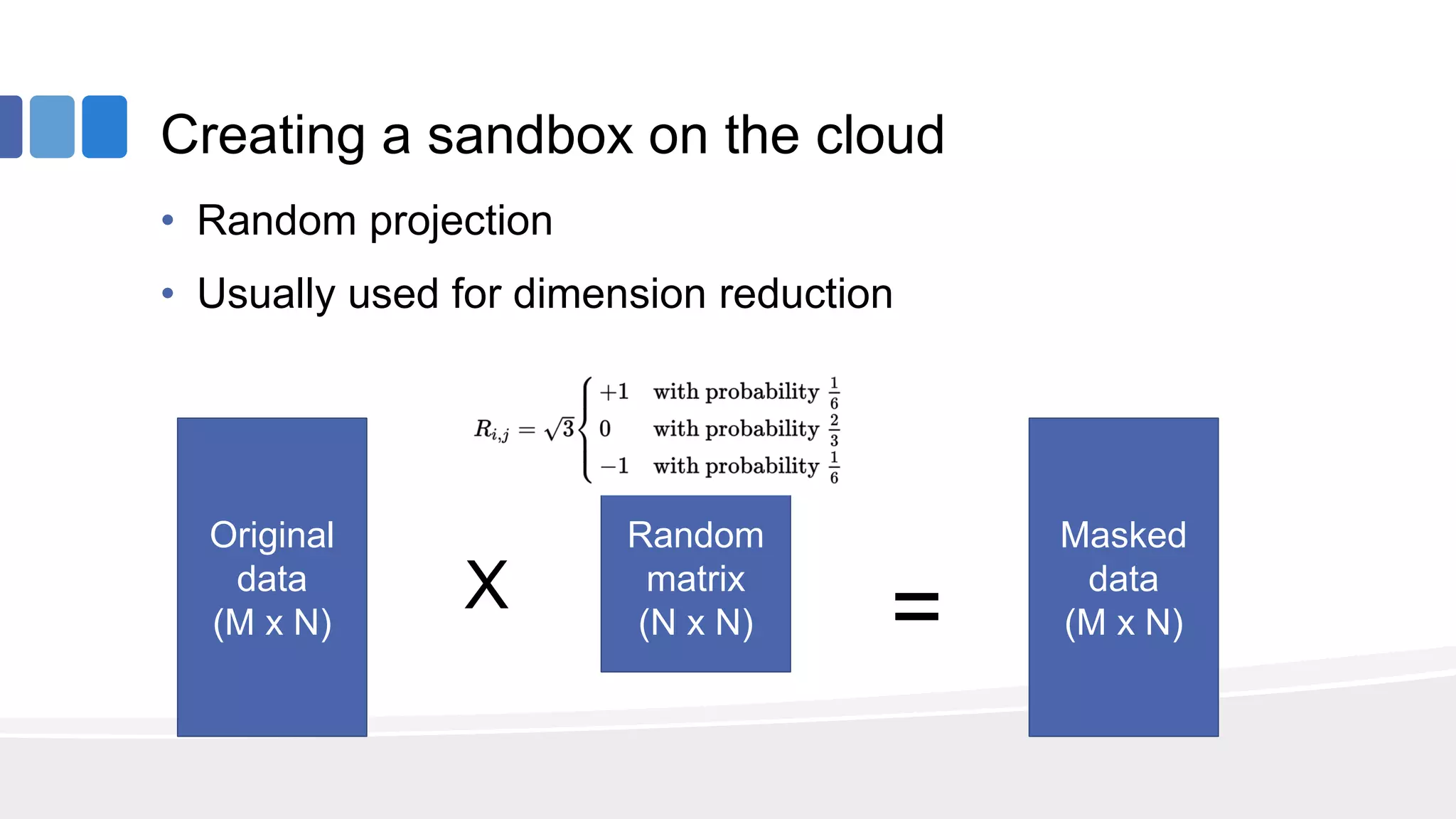

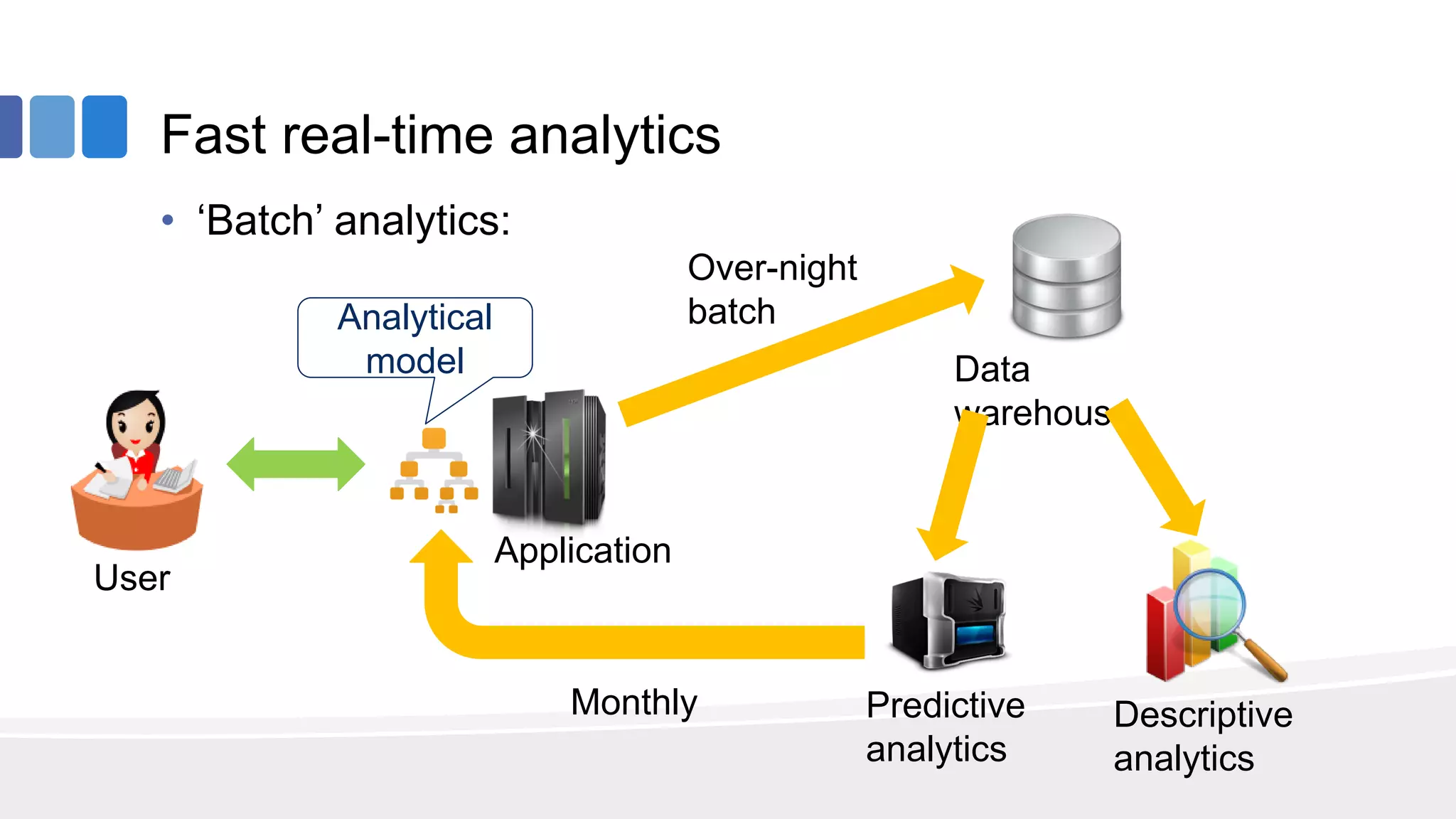

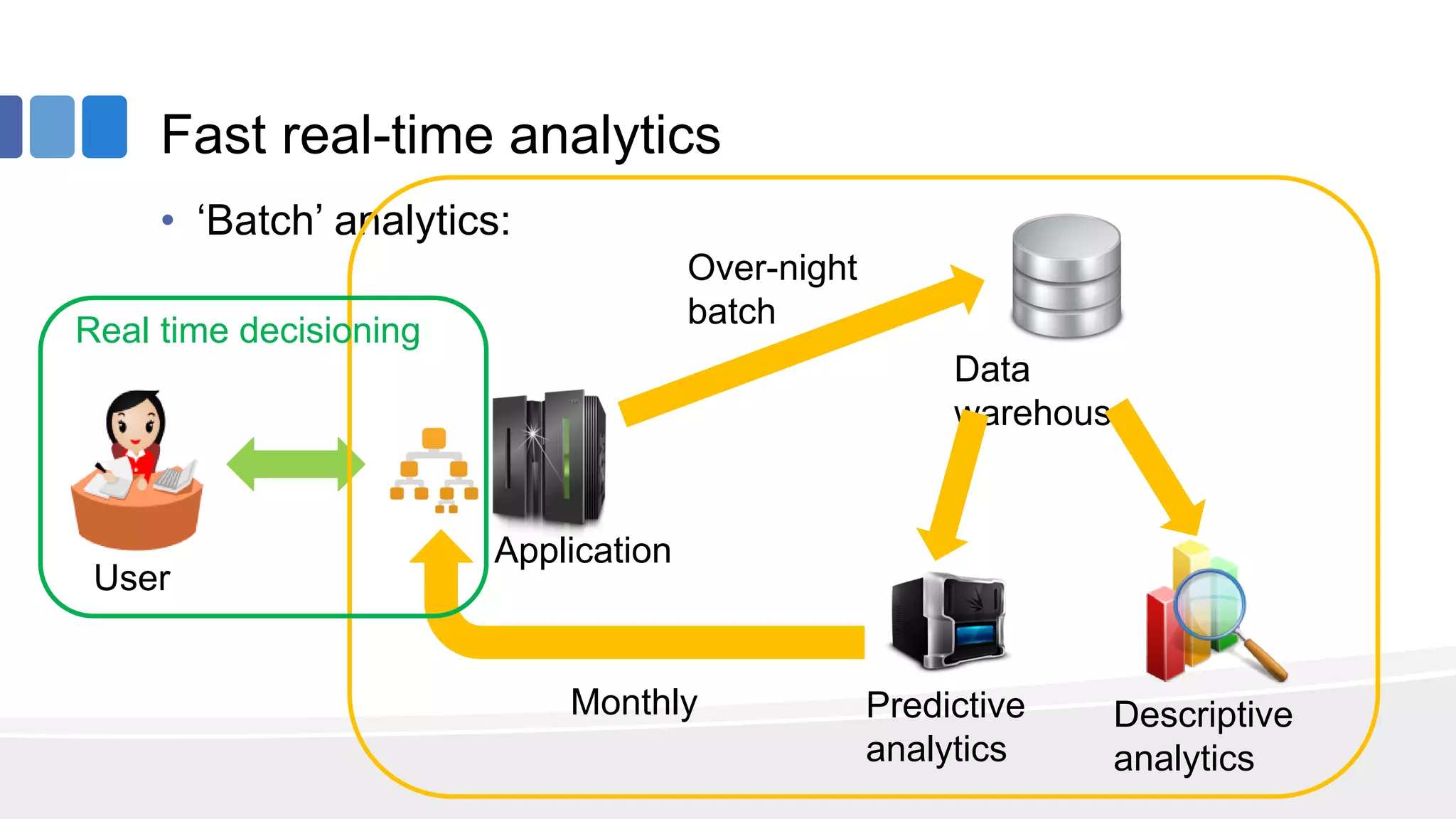

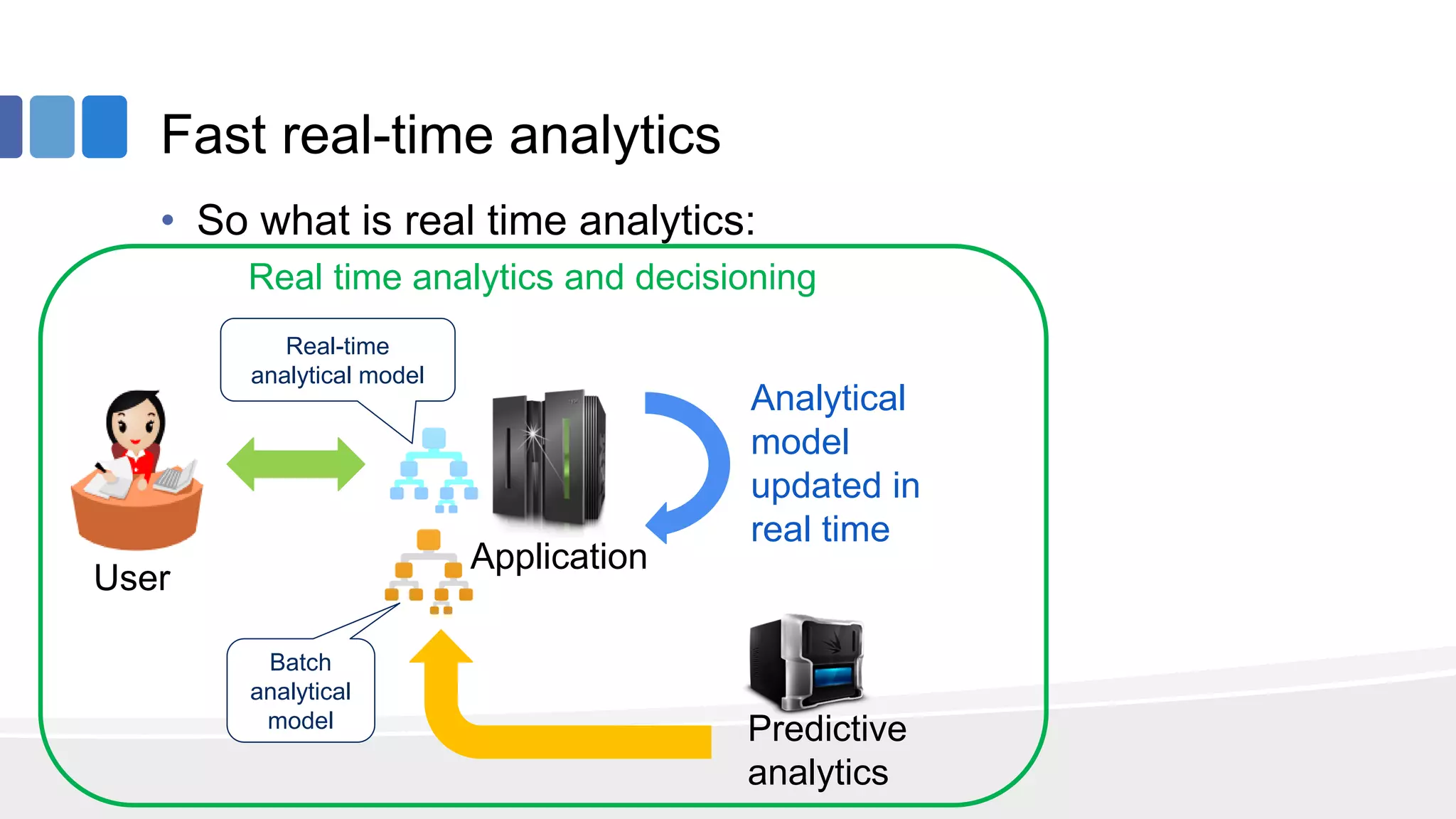

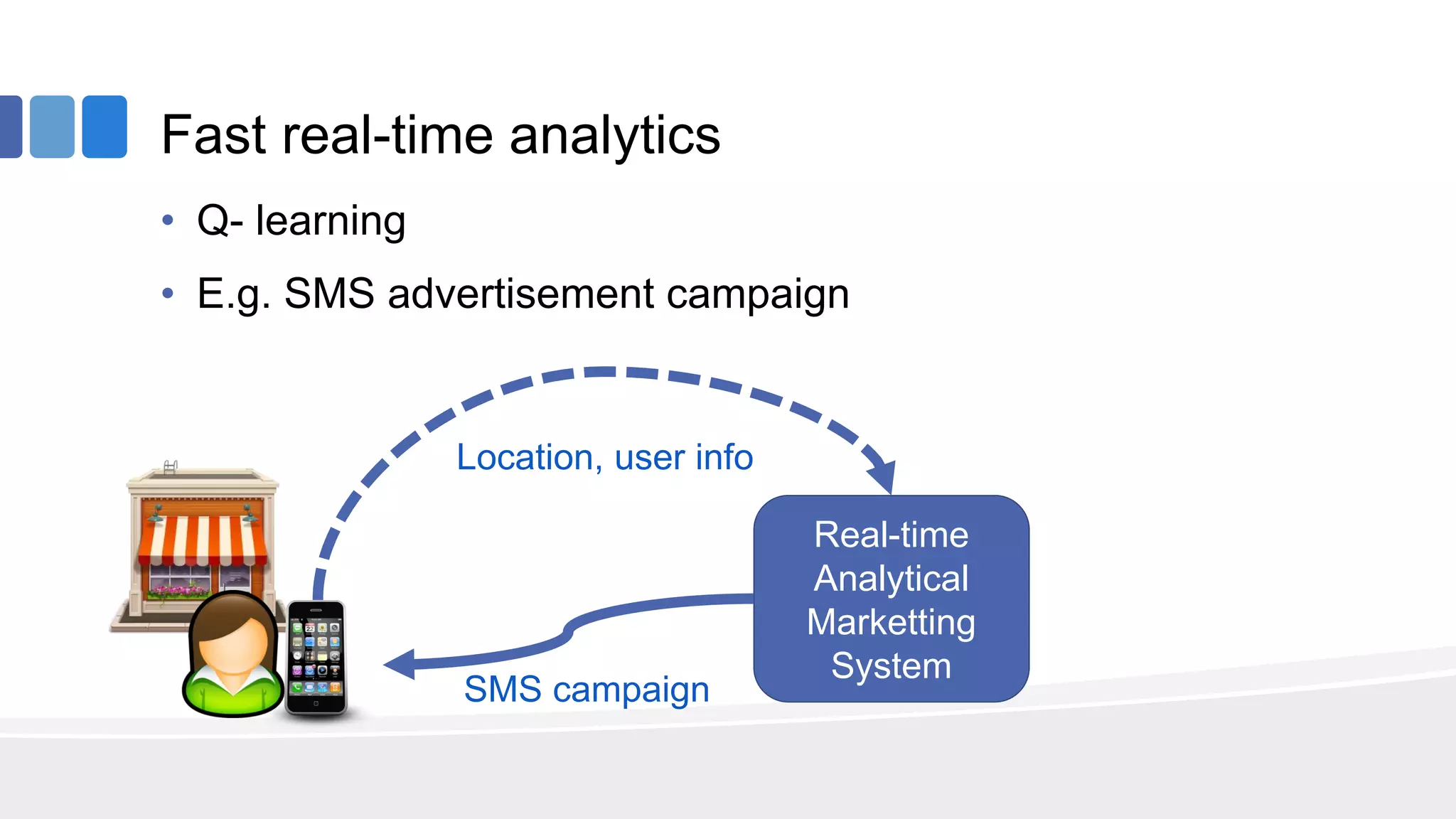

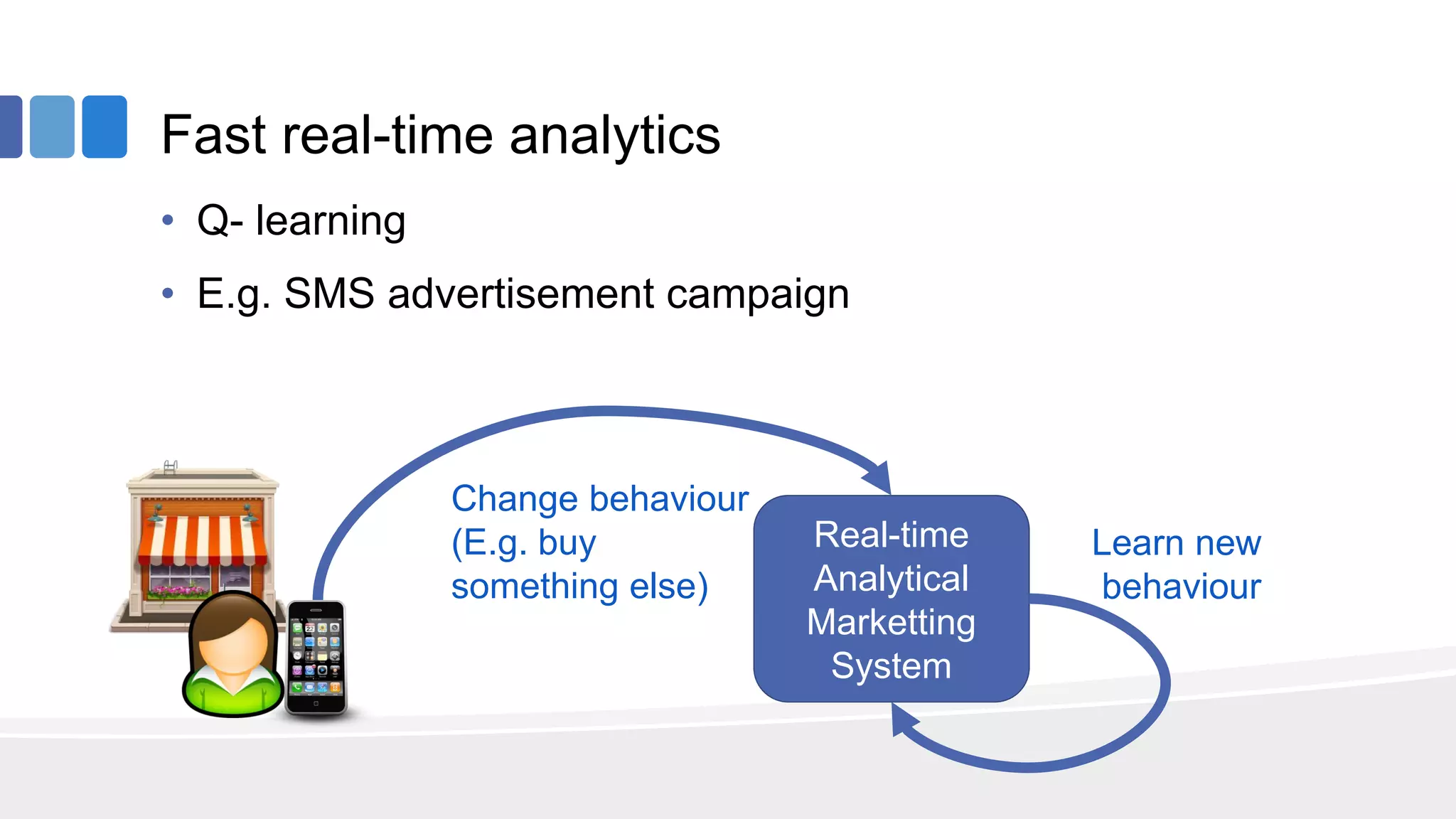

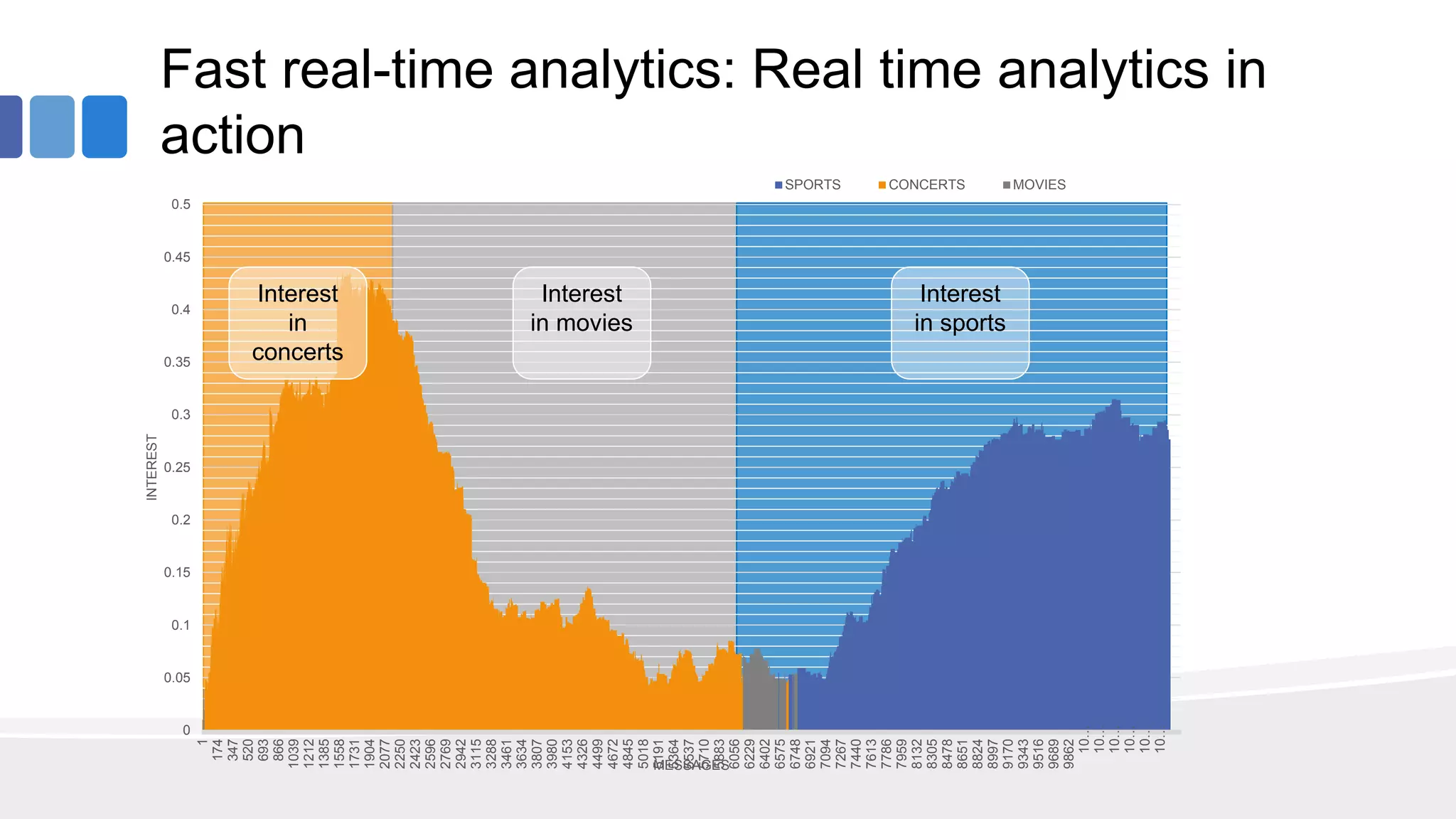

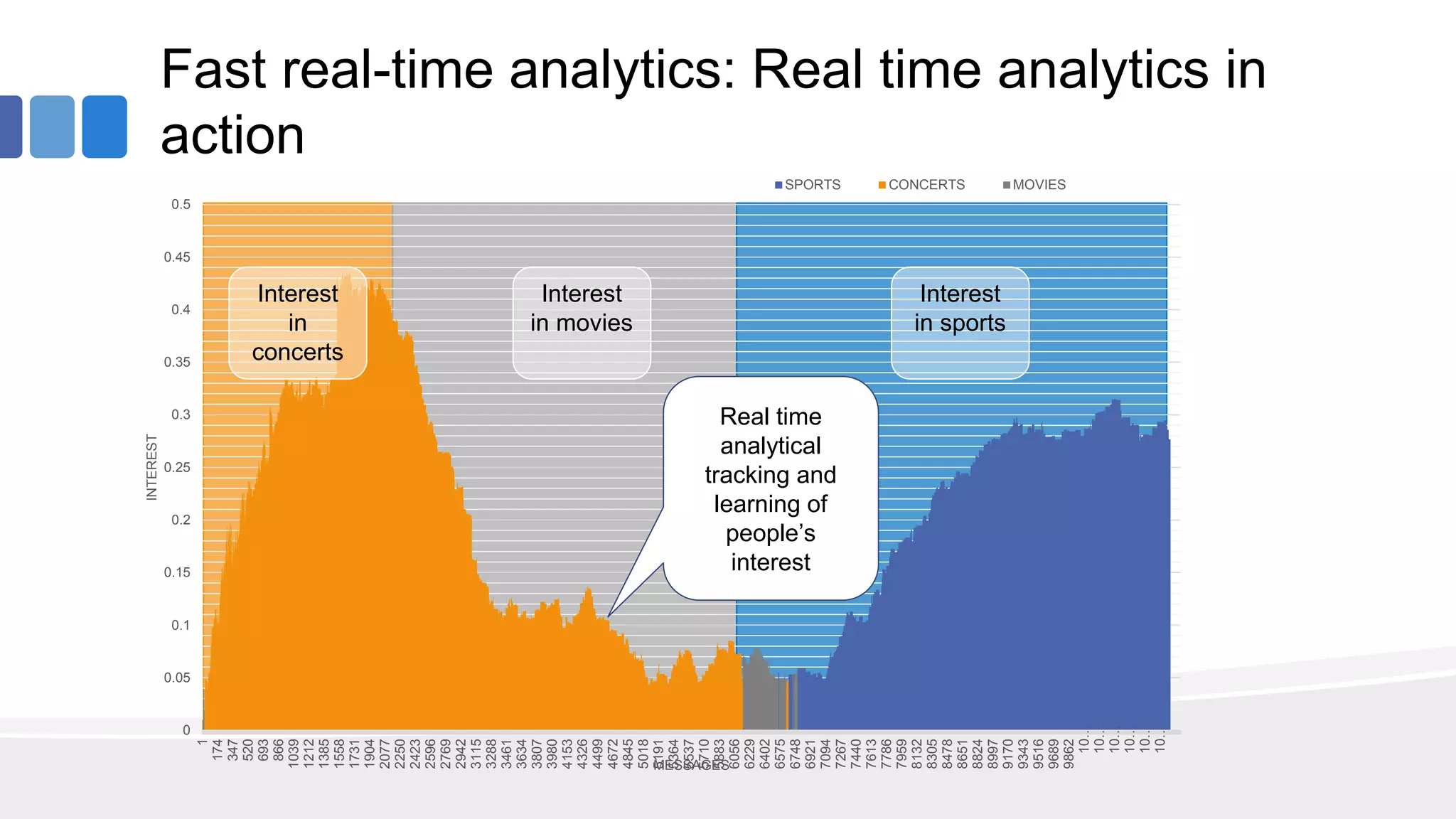

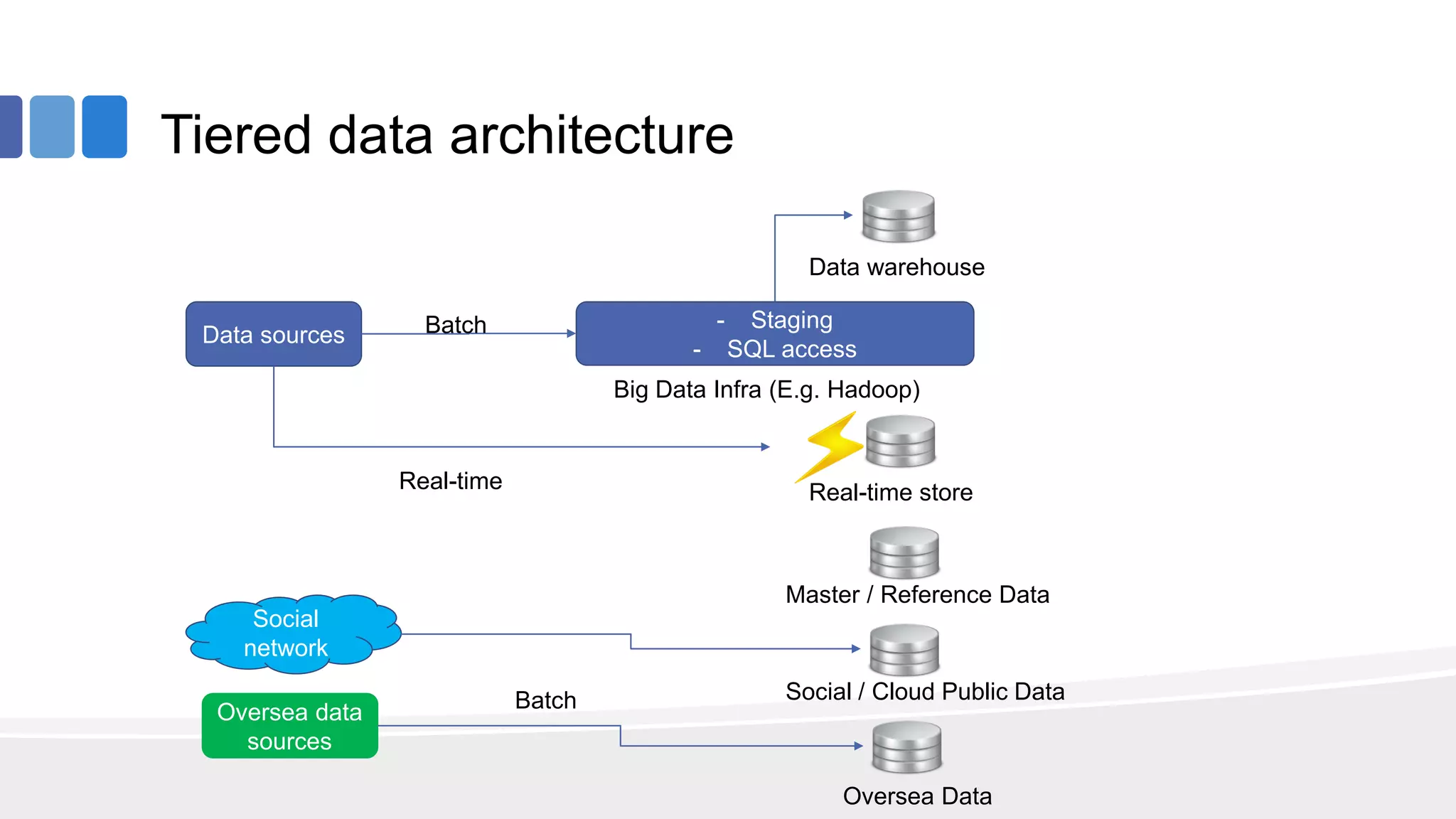

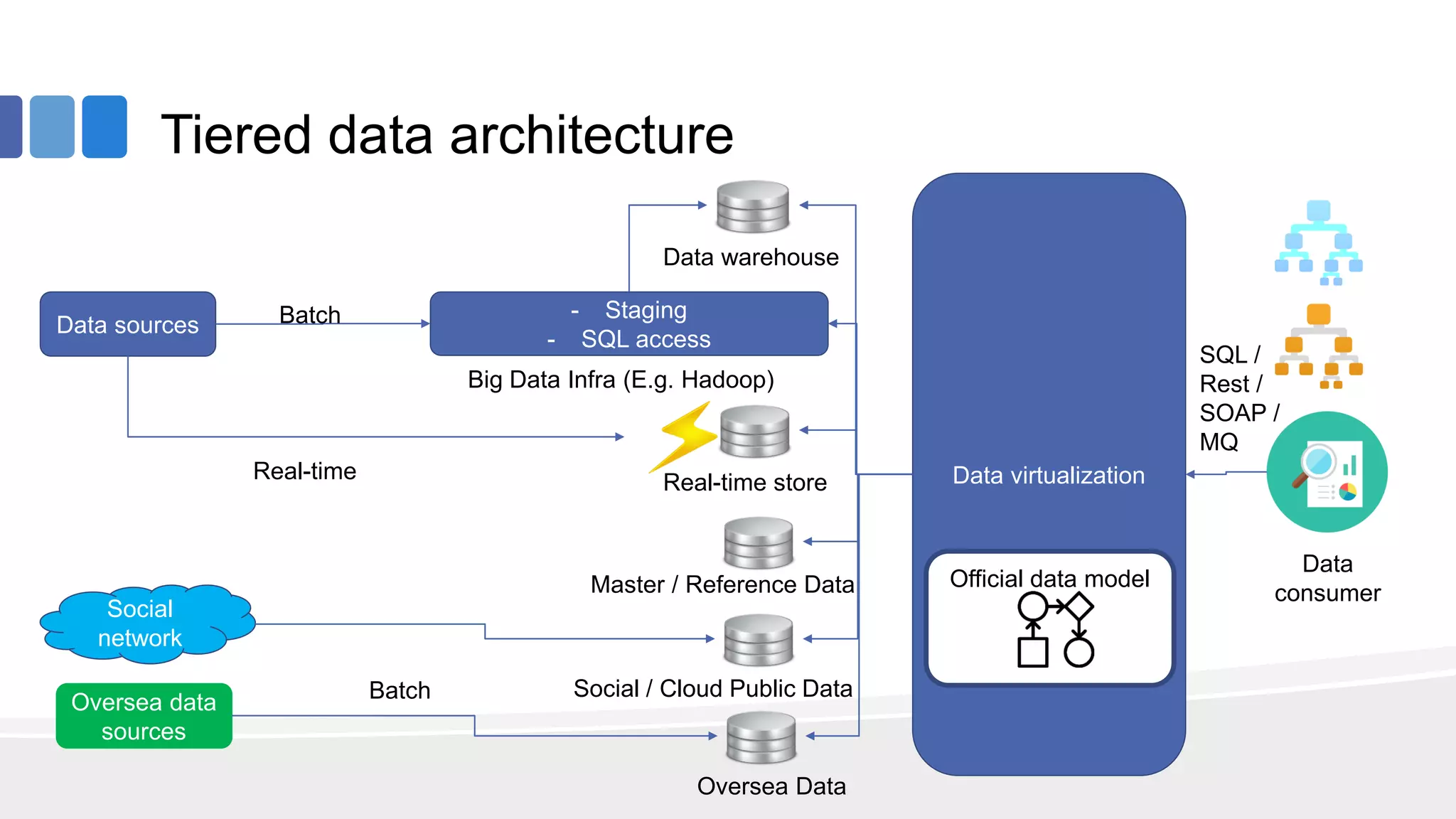

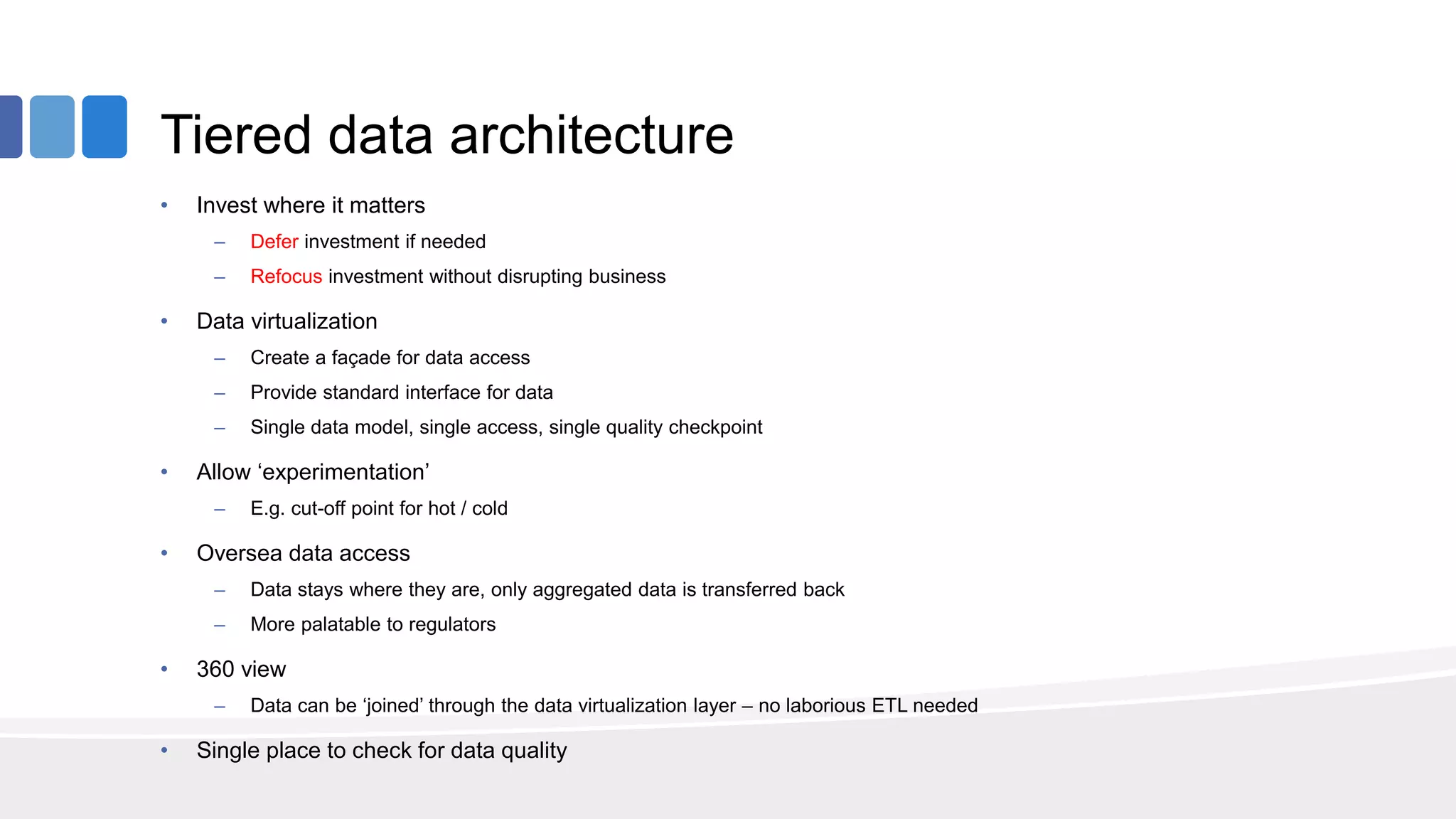

This document gives an overview of big data concepts from the perspective of an enterprise data architect. It follows the data journey from acquisition to analytics and highlights best practices for data quality, sandboxing data in the cloud, and balancing real-time and batch analytics, then brings these concepts together in a tiered data architecture. The architecture proposes investing more in fast data sources and less in cold data, using data virtualization to provide a unified view, and keeping sensitive customer data localized to comply with regulations.
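As a rough illustration of the tiering idea, the following Python sketch assigns a dataset to a storage tier by how recently it was accessed and restricts sensitive customer data to its home region. Everything in it is an assumption made for illustration and does not come from the slides: the `Dataset` fields, the intermediate "warm" tier, the 7-day and 90-day thresholds, and the region names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds; a real deployment would tune these to its own workloads.
HOT_MAX_AGE_DAYS = 7
WARM_MAX_AGE_DAYS = 90


@dataclass(frozen=True)
class Dataset:
    """Hypothetical descriptor for a dataset in the architecture."""
    name: str
    days_since_last_access: int
    contains_customer_pii: bool
    home_region: str


def assign_tier(ds: Dataset) -> str:
    """Pick a storage tier from access recency (fast data gets the costlier tier)."""
    if ds.days_since_last_access <= HOT_MAX_AGE_DAYS:
        return "hot"
    if ds.days_since_last_access <= WARM_MAX_AGE_DAYS:
        return "warm"
    return "cold"


def allowed_regions(ds: Dataset, all_regions: list[str]) -> list[str]:
    """Sensitive customer data stays in its home region; other data may go anywhere."""
    if ds.contains_customer_pii:
        return [ds.home_region]
    return list(all_regions)


def plan_placement(ds: Dataset, all_regions: list[str]) -> dict:
    """Combine the tier and locality decisions into one placement plan."""
    return {
        "dataset": ds.name,
        "tier": assign_tier(ds),
        "regions": allowed_regions(ds, all_regions),
    }


if __name__ == "__main__":
    regions = ["eu-west", "us-east", "ap-south"]  # hypothetical region names
    clickstream = Dataset("clickstream", 1, False, "us-east")
    crm_archive = Dataset("crm_archive", 400, True, "eu-west")
    print(plan_placement(clickstream, regions))
    print(plan_placement(crm_archive, regions))
```

In this sketch the tier decision and the locality decision are kept independent, so a regulation-driven change to where customer data may live does not affect how storage spend is split between fast and cold data.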