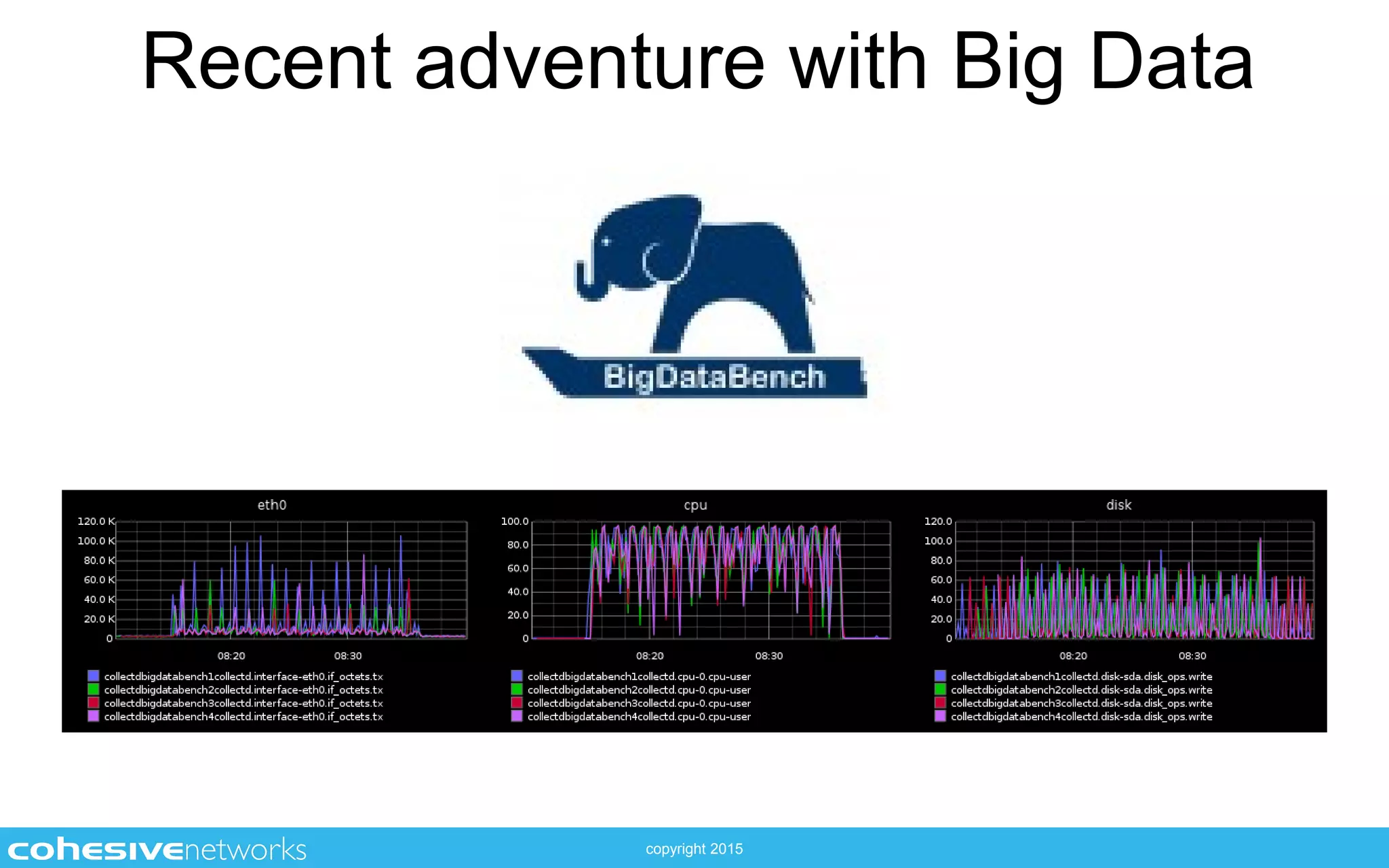

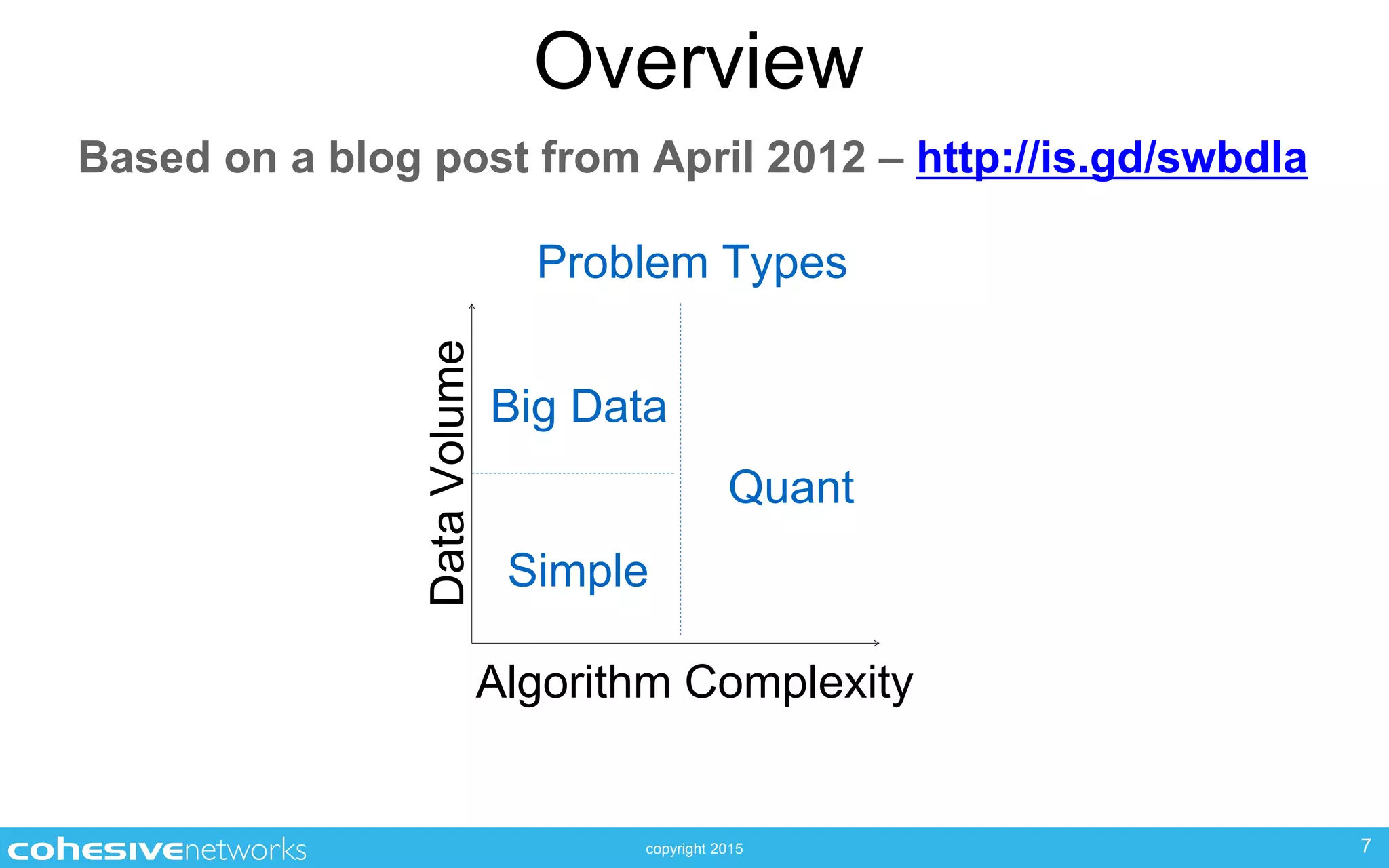

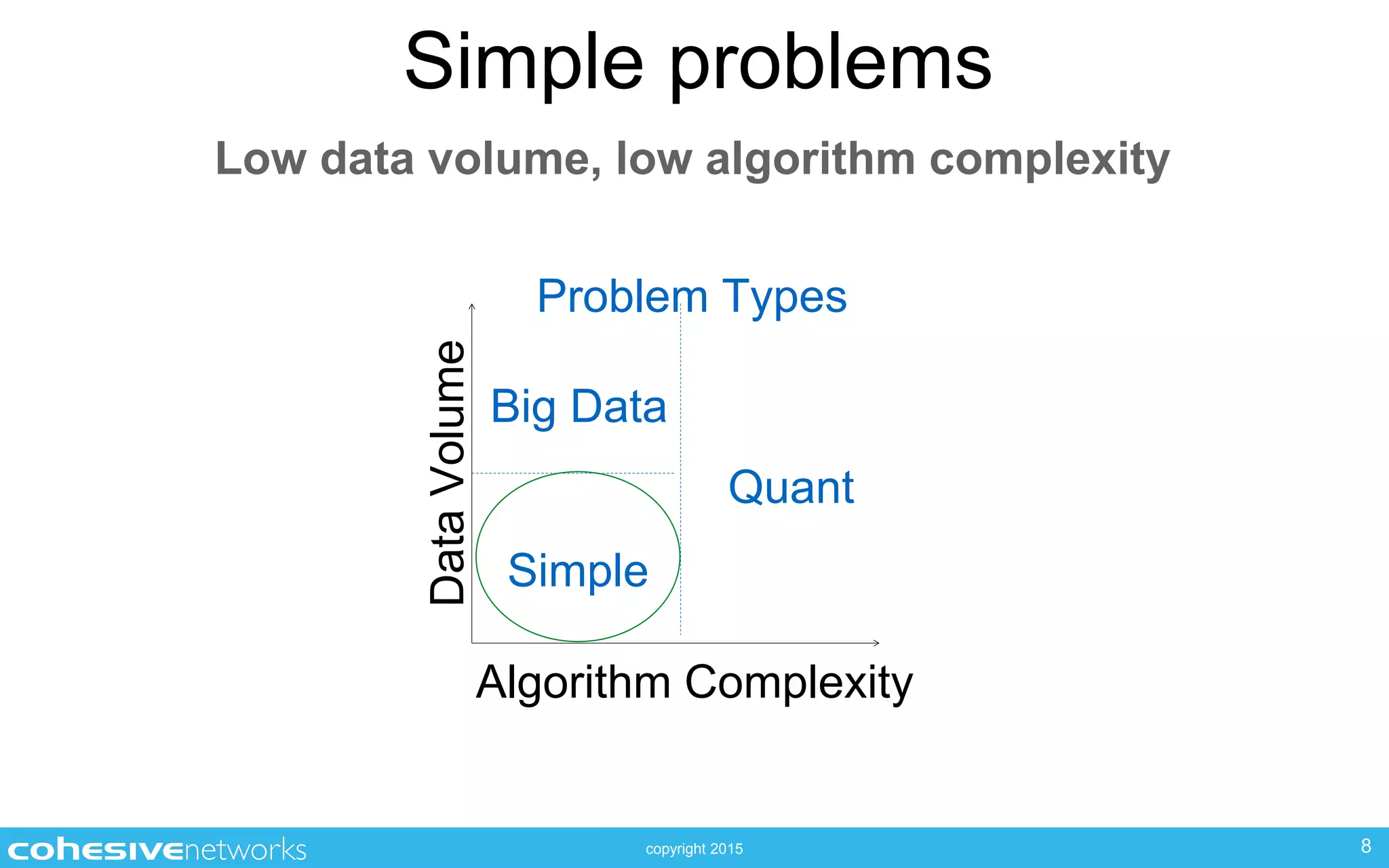

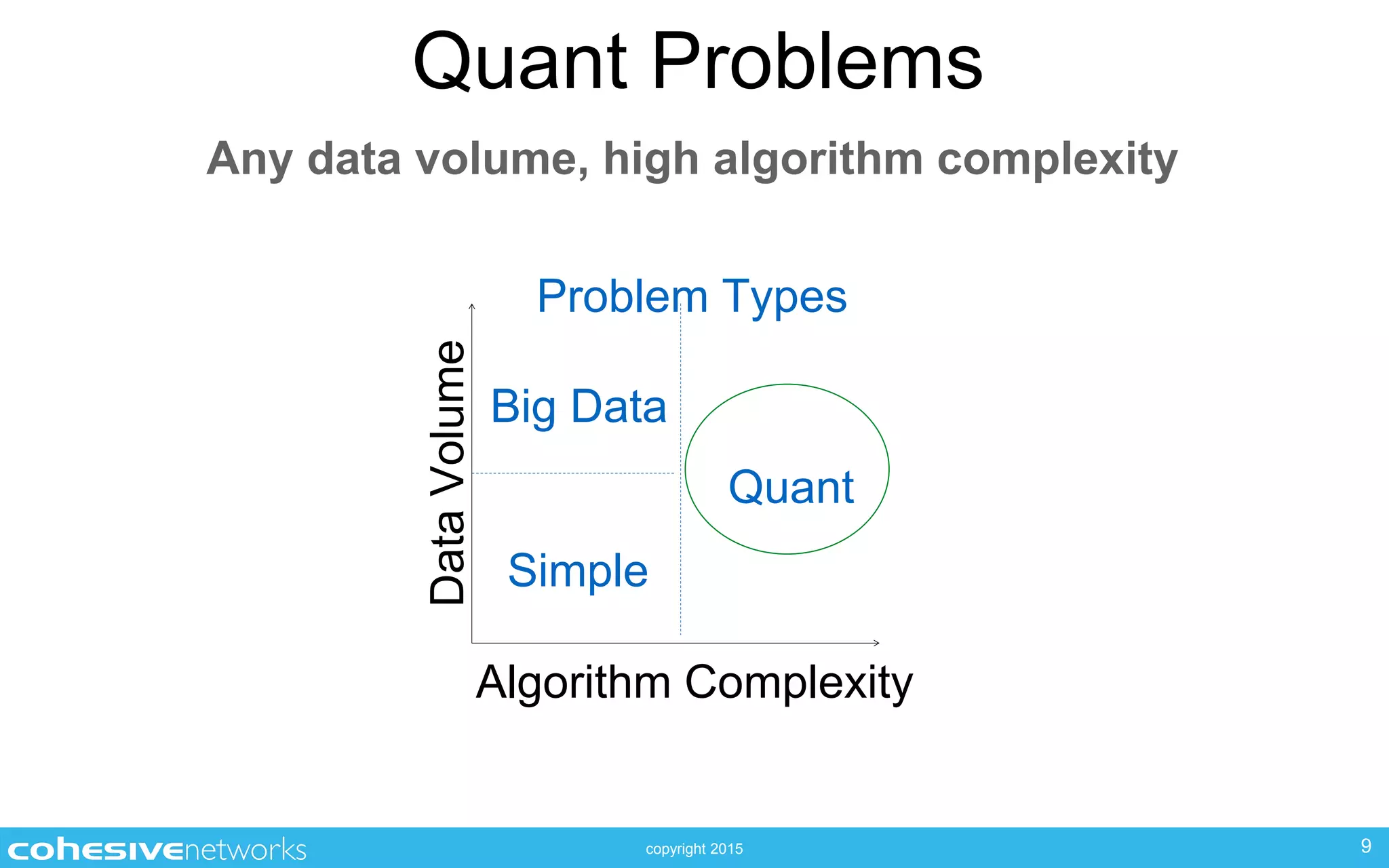

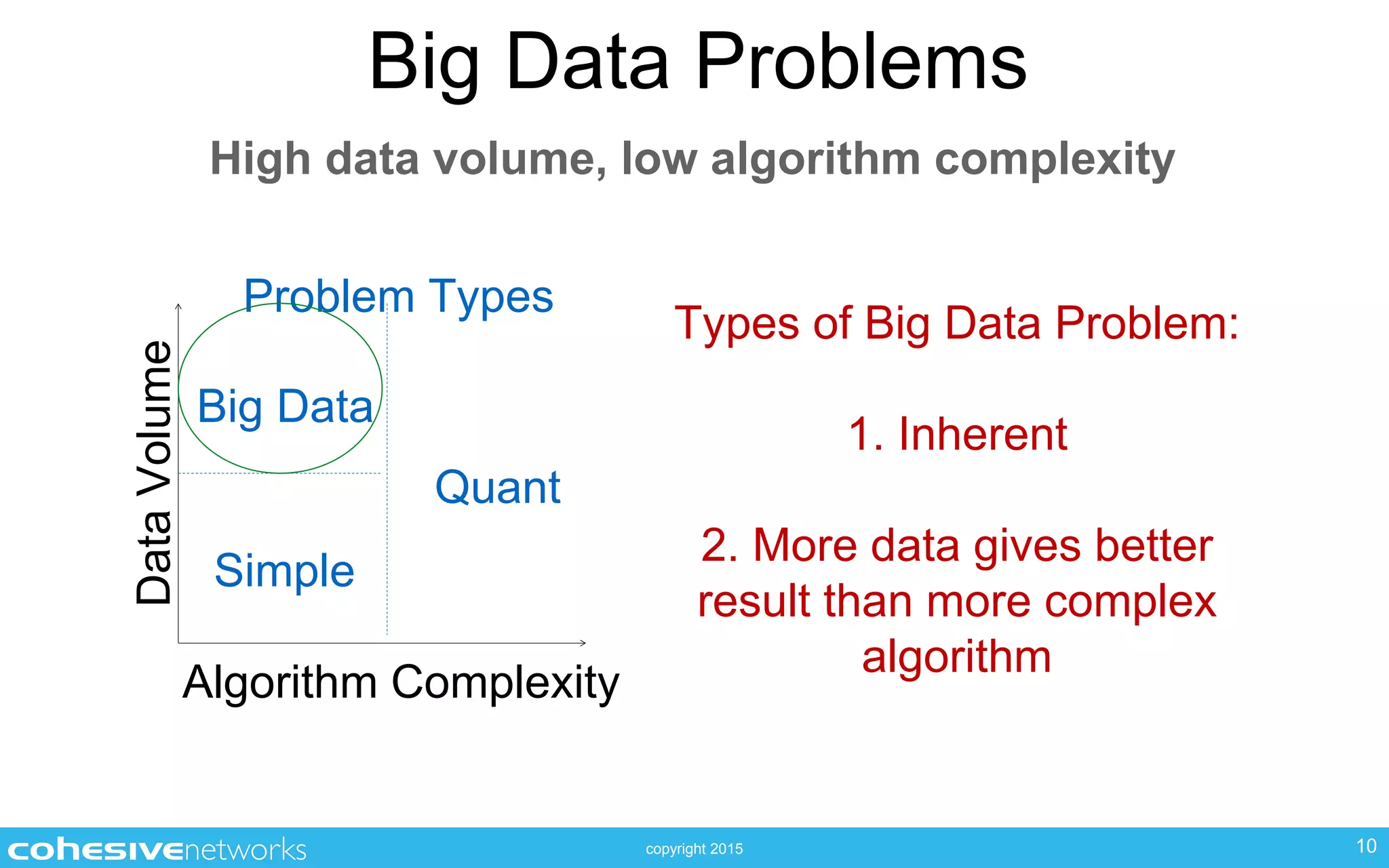

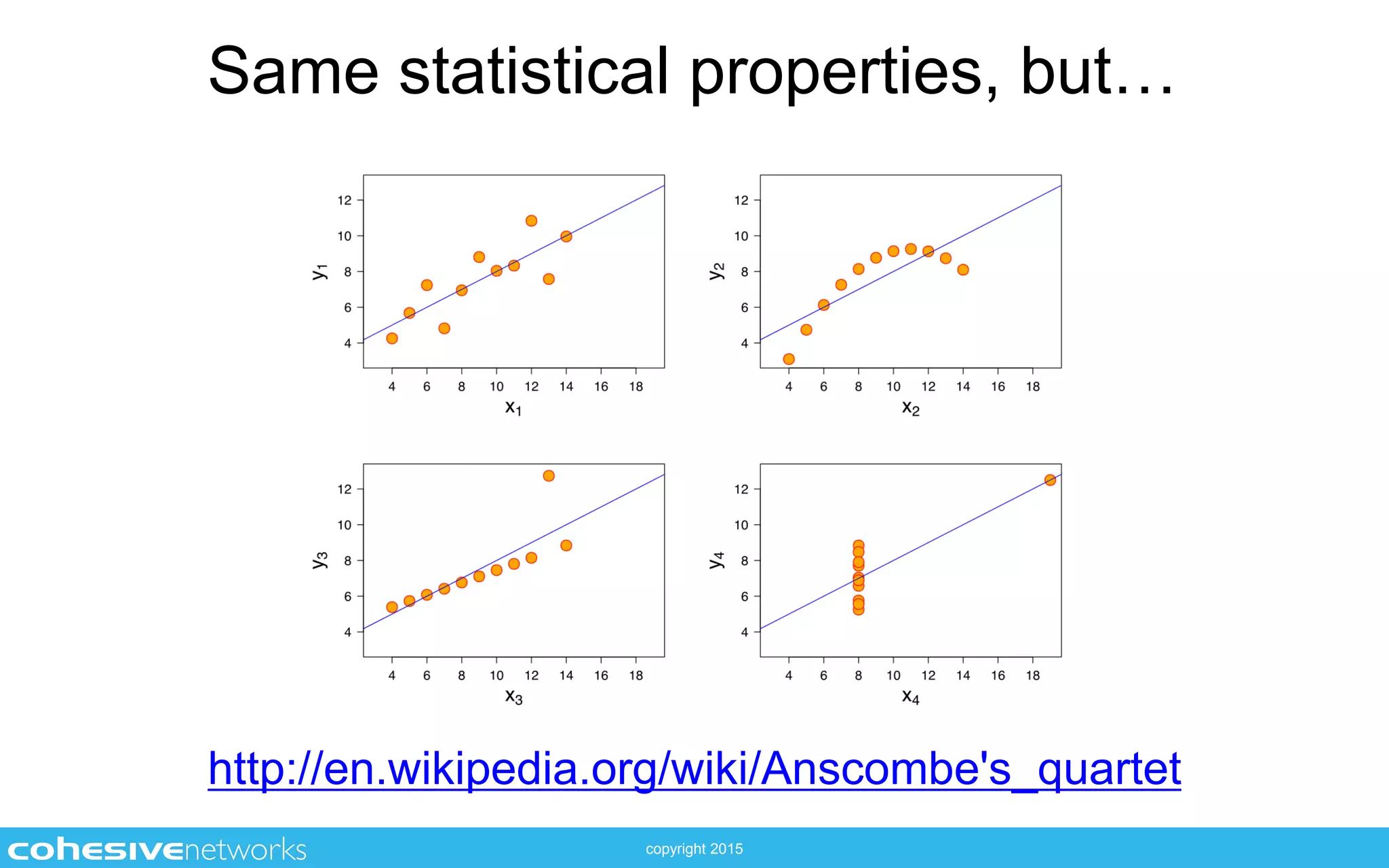

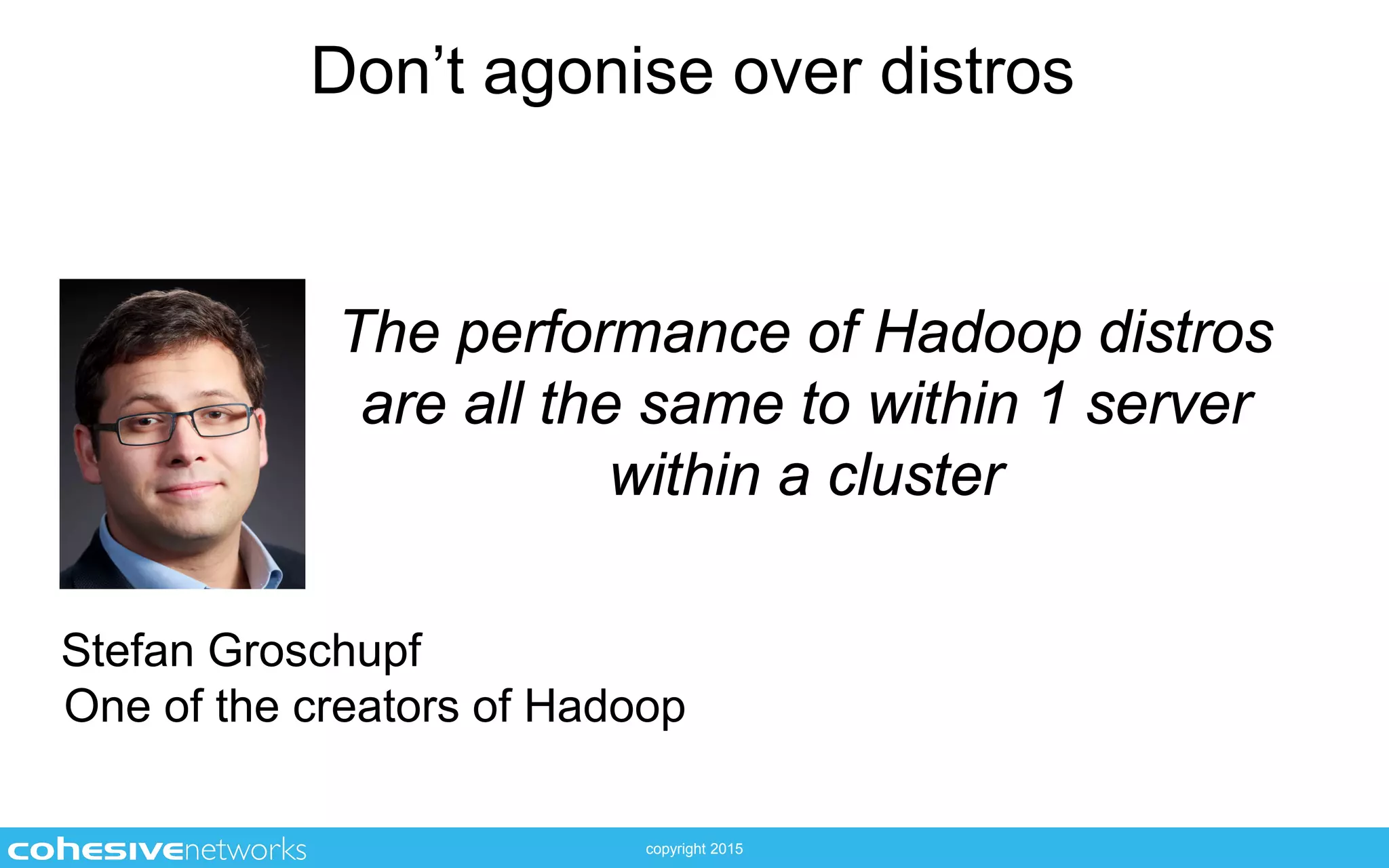

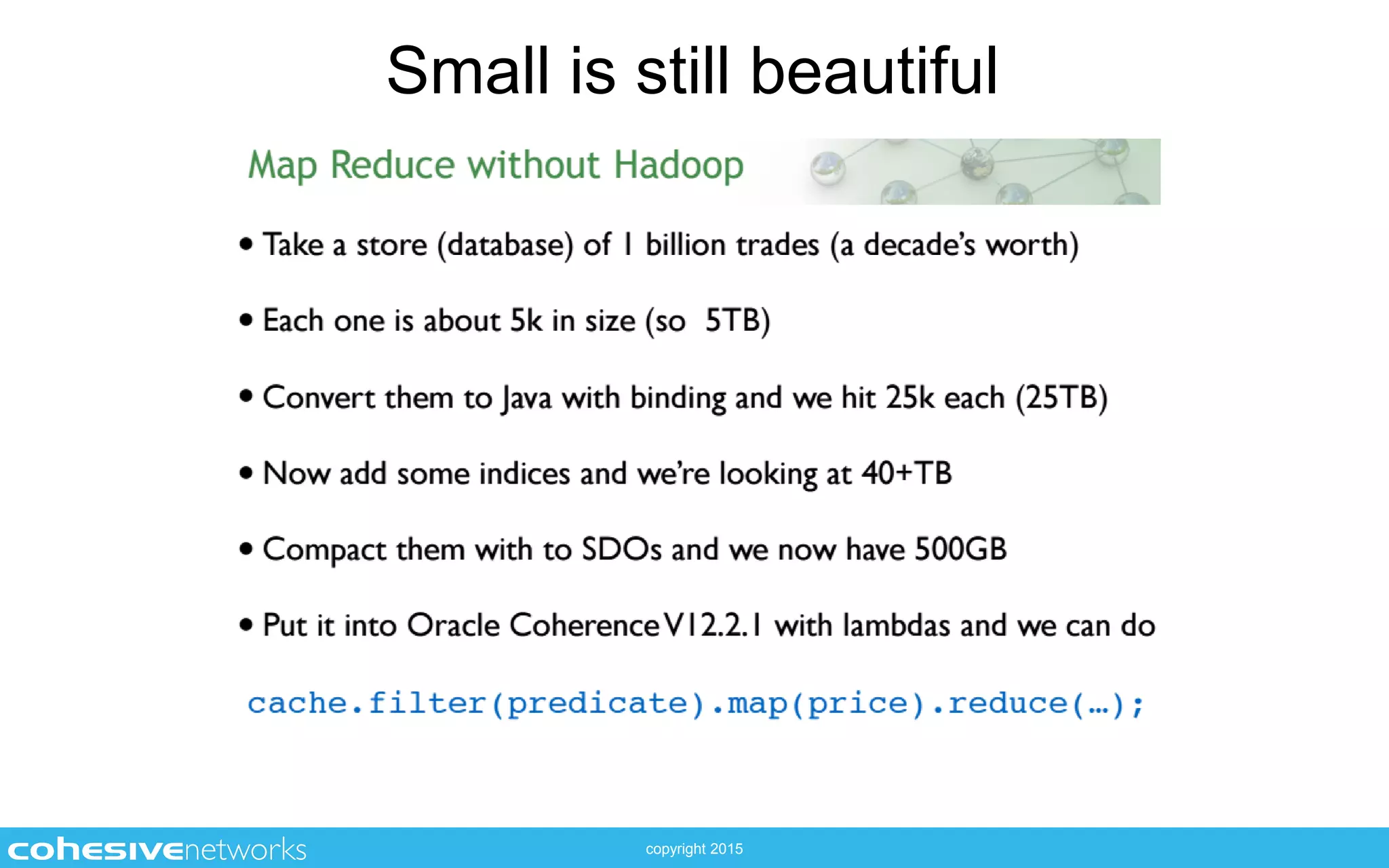

The document discusses the complexities and misconceptions surrounding big data, emphasizing the importance of understanding algorithms and data types. It sorts big data problems into categories by data volume and algorithm complexity. It also warns against over-reliance on opaque systems and stresses the need to know the specifics of your data and tools.