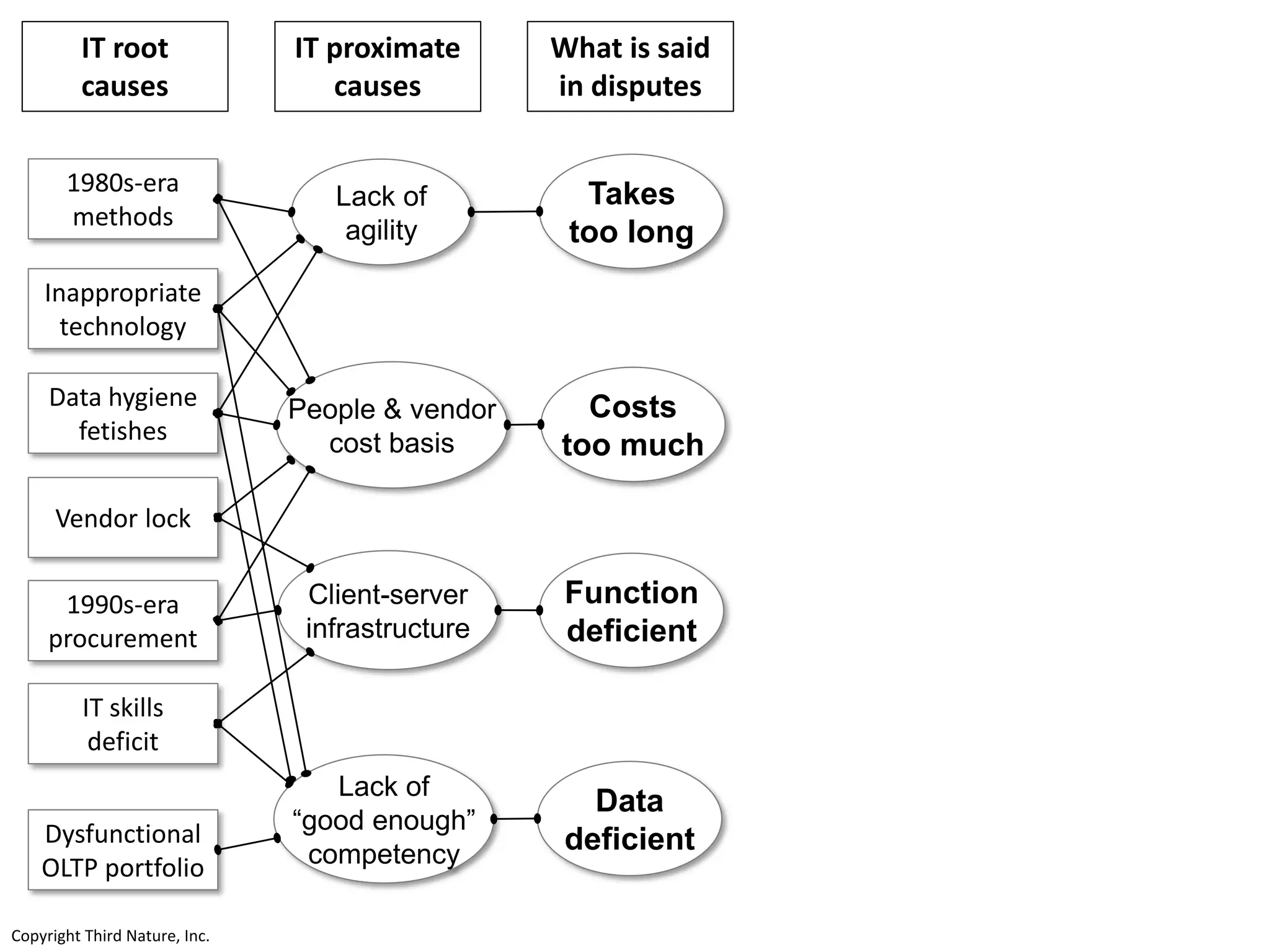

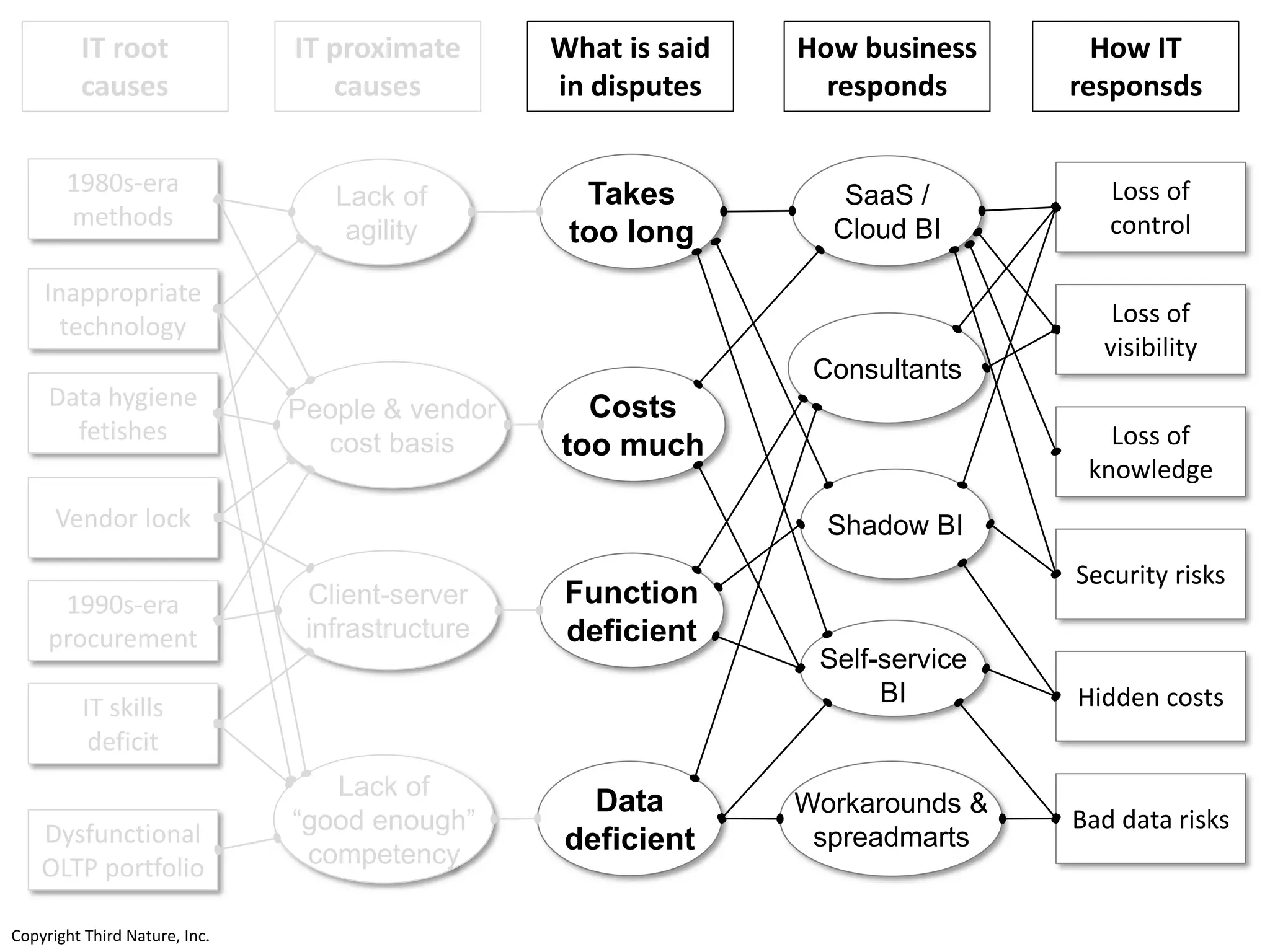

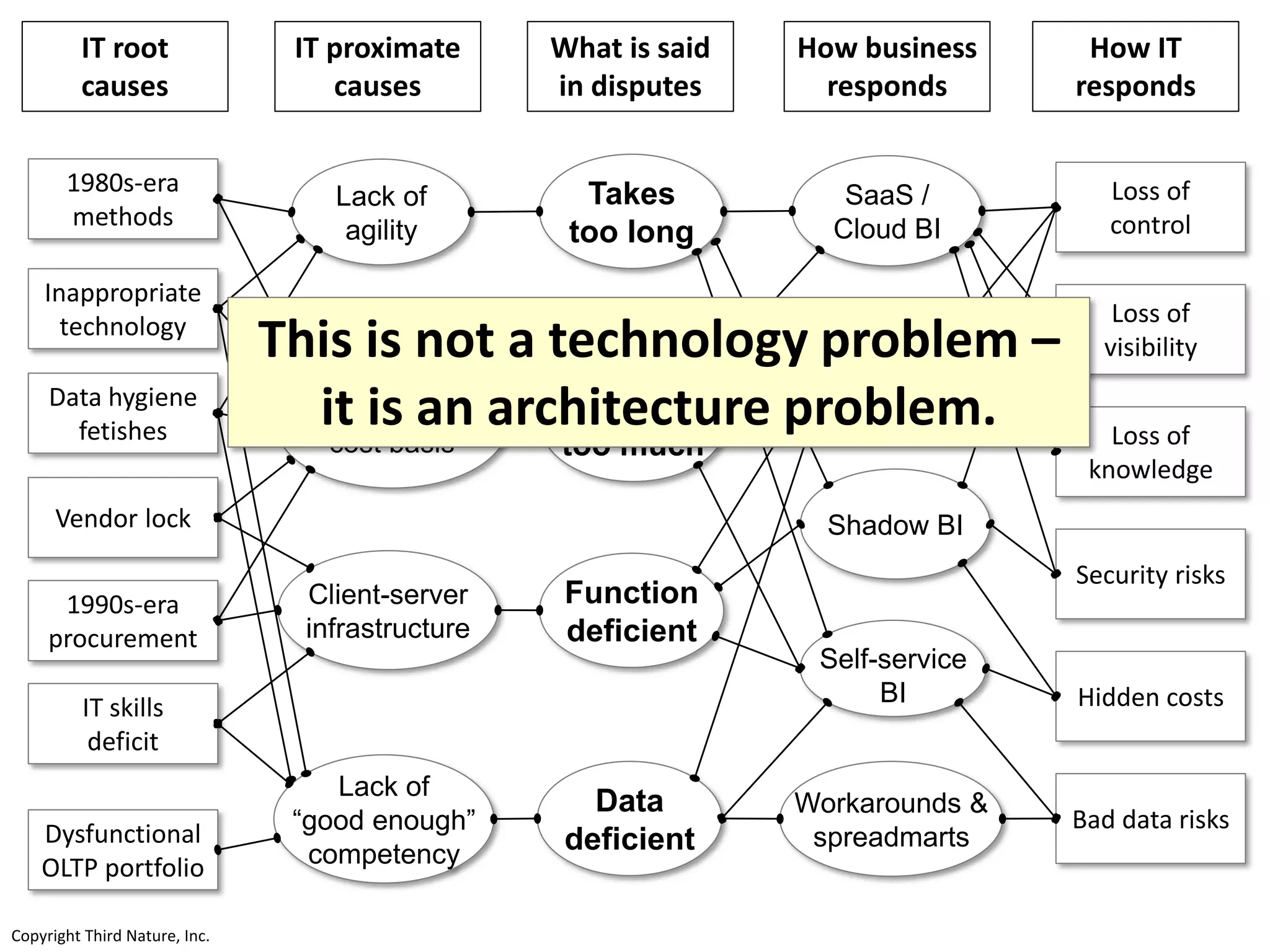

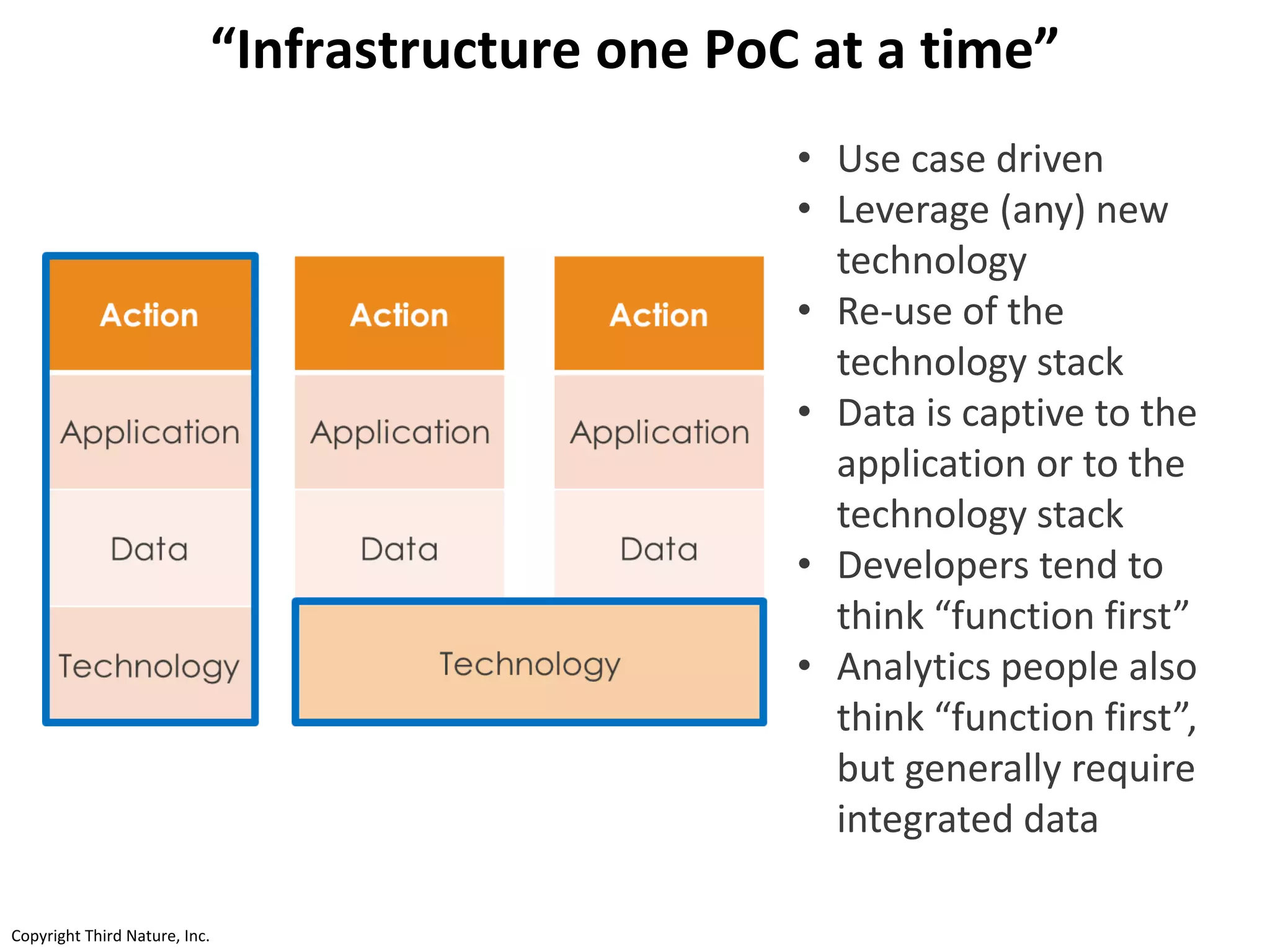

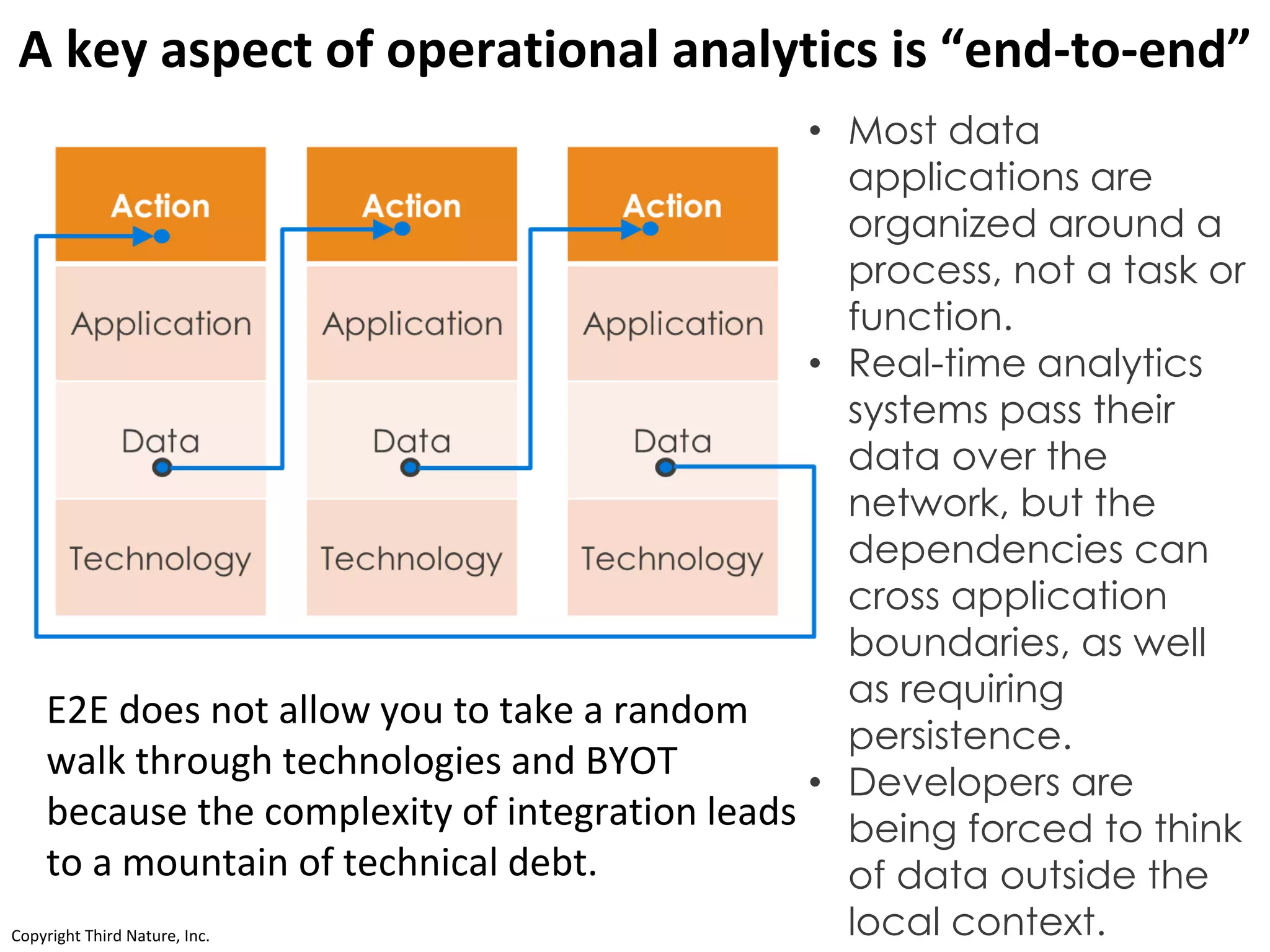

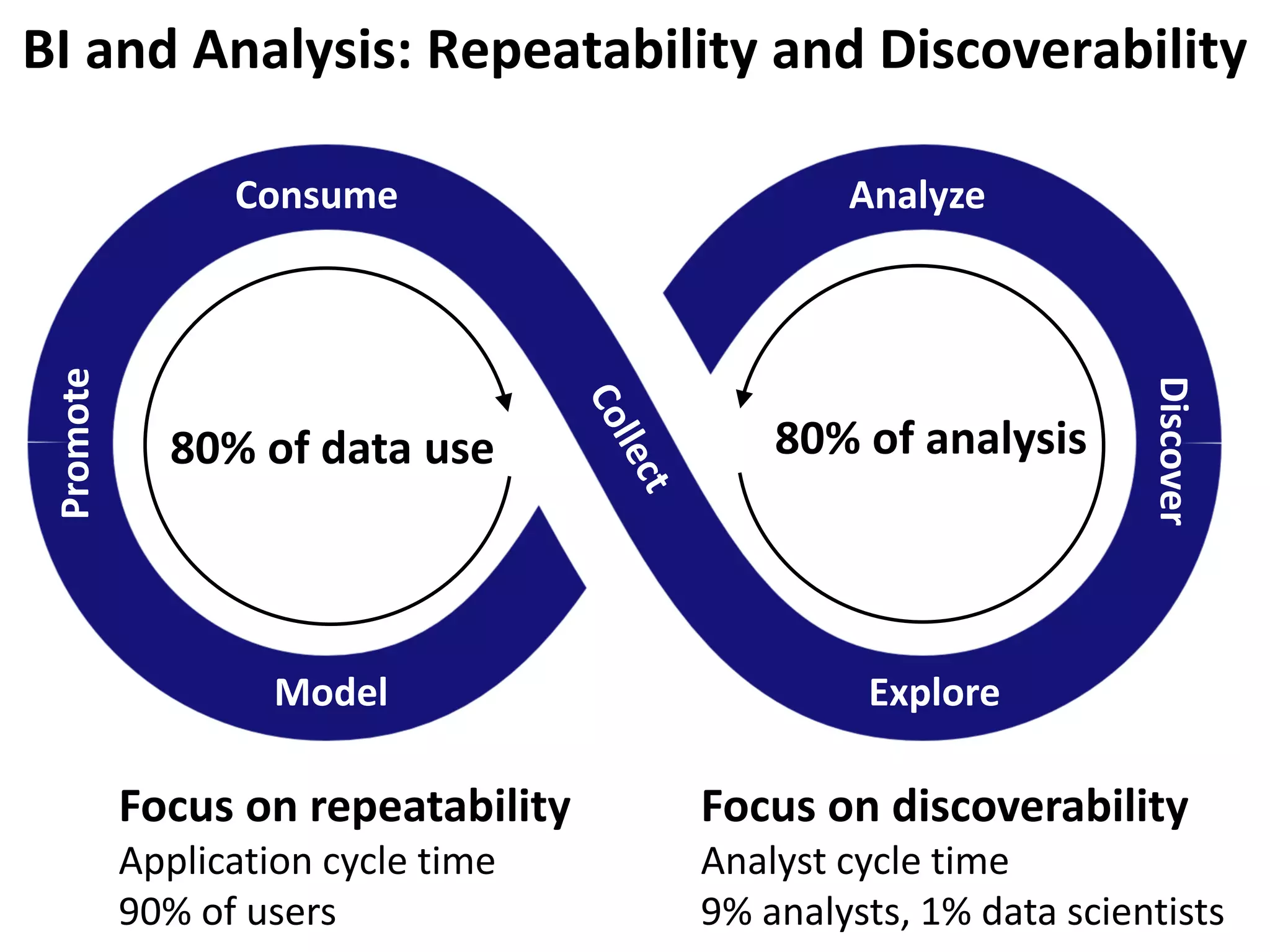

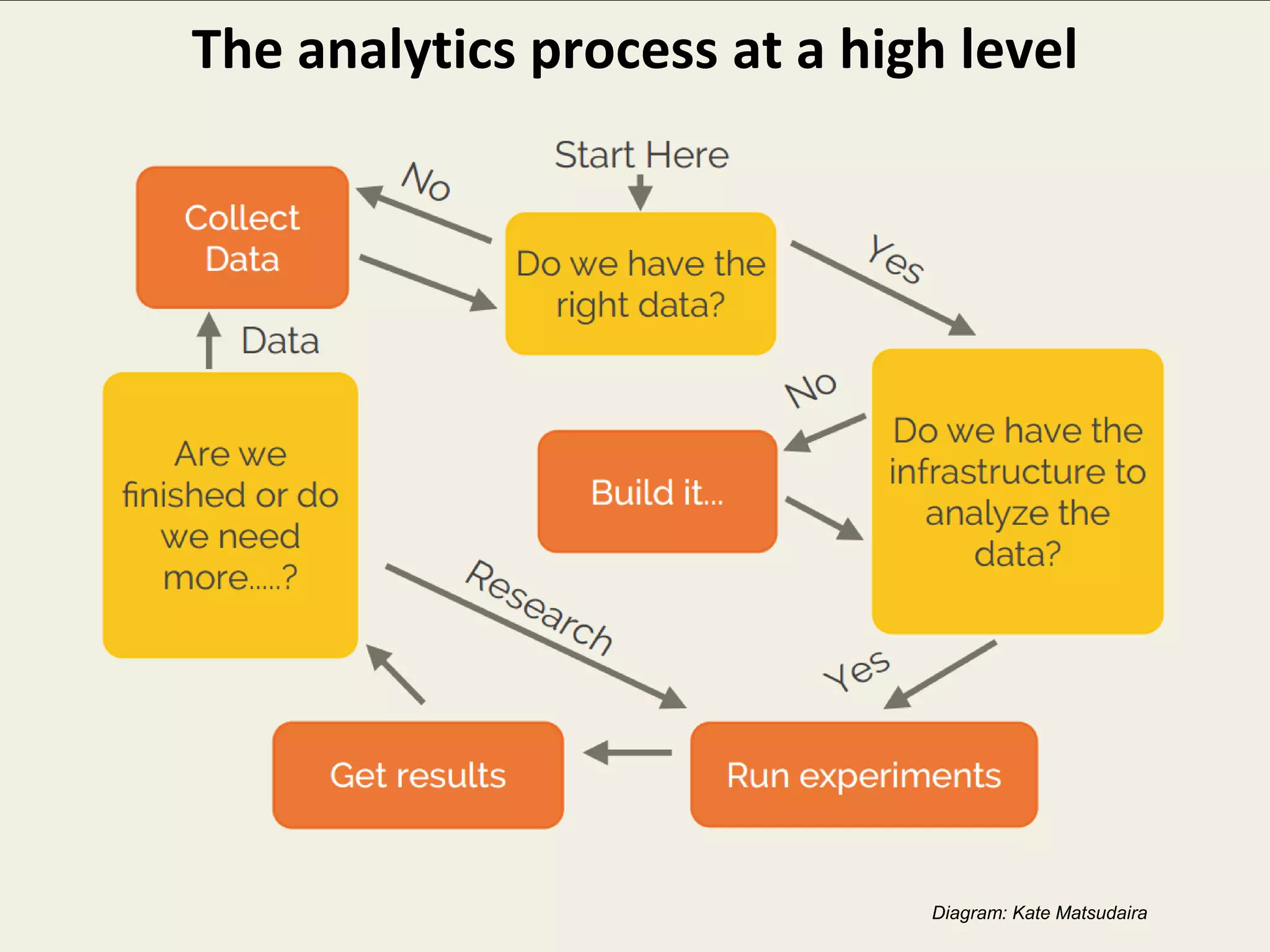

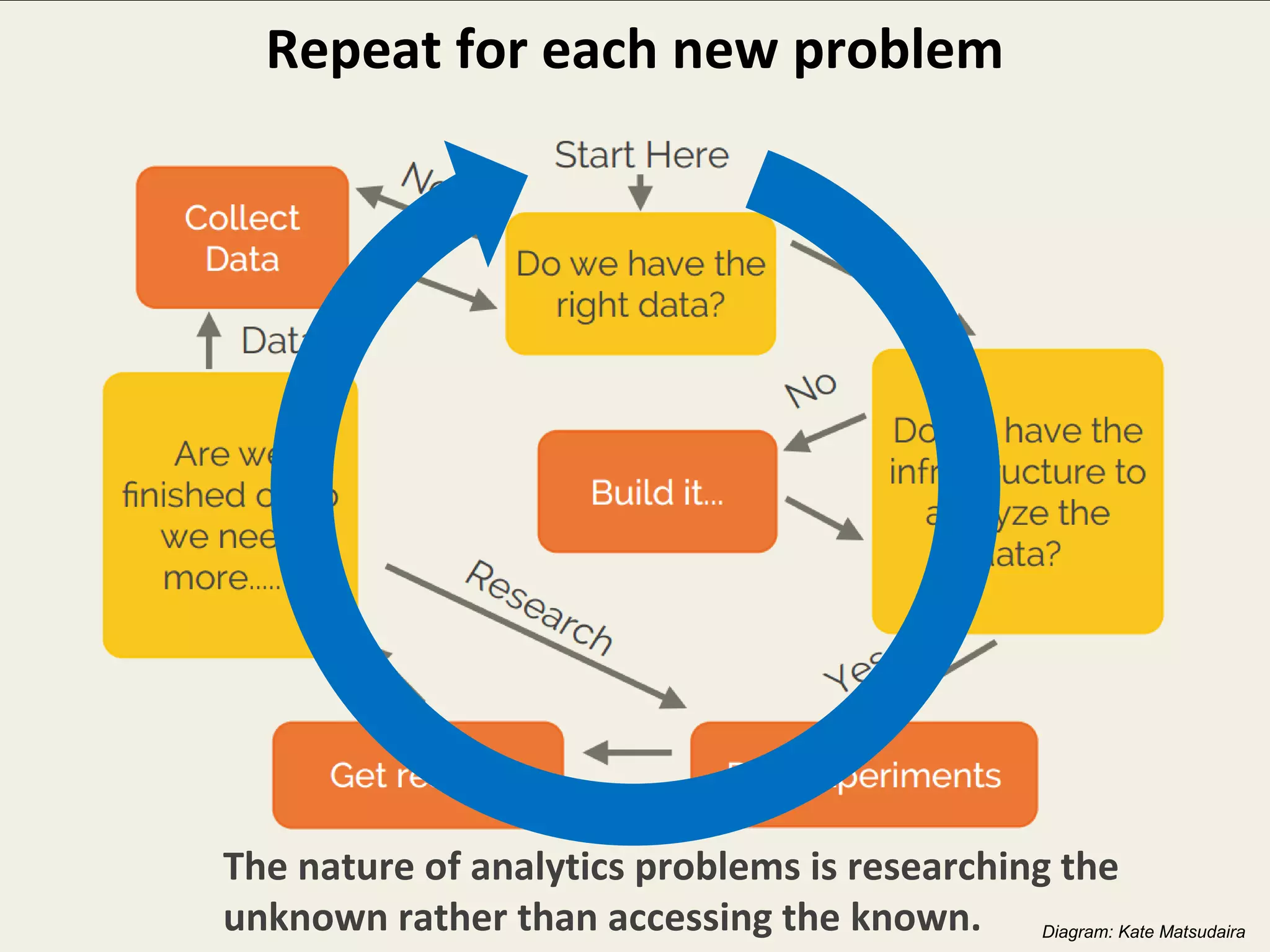

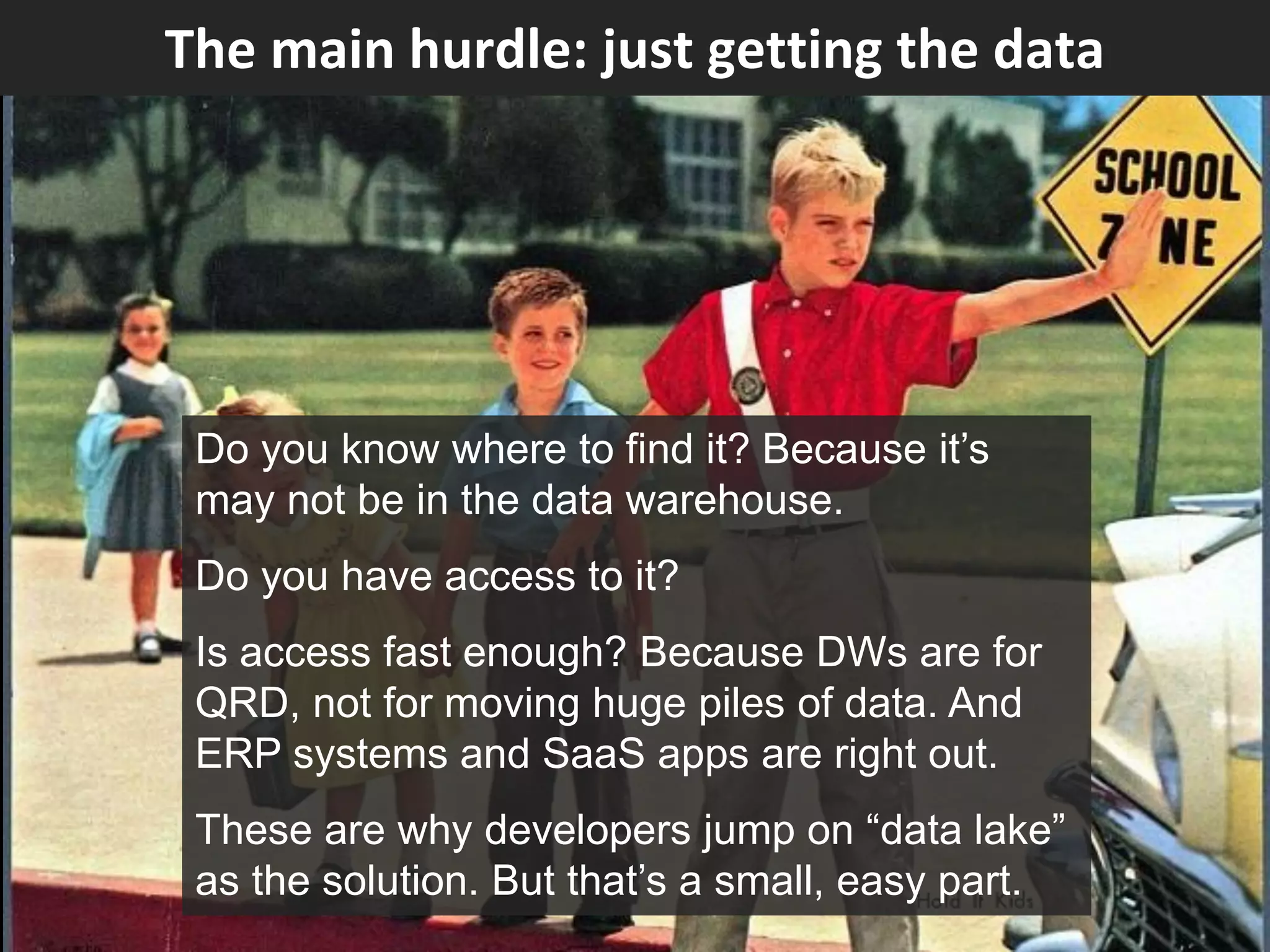

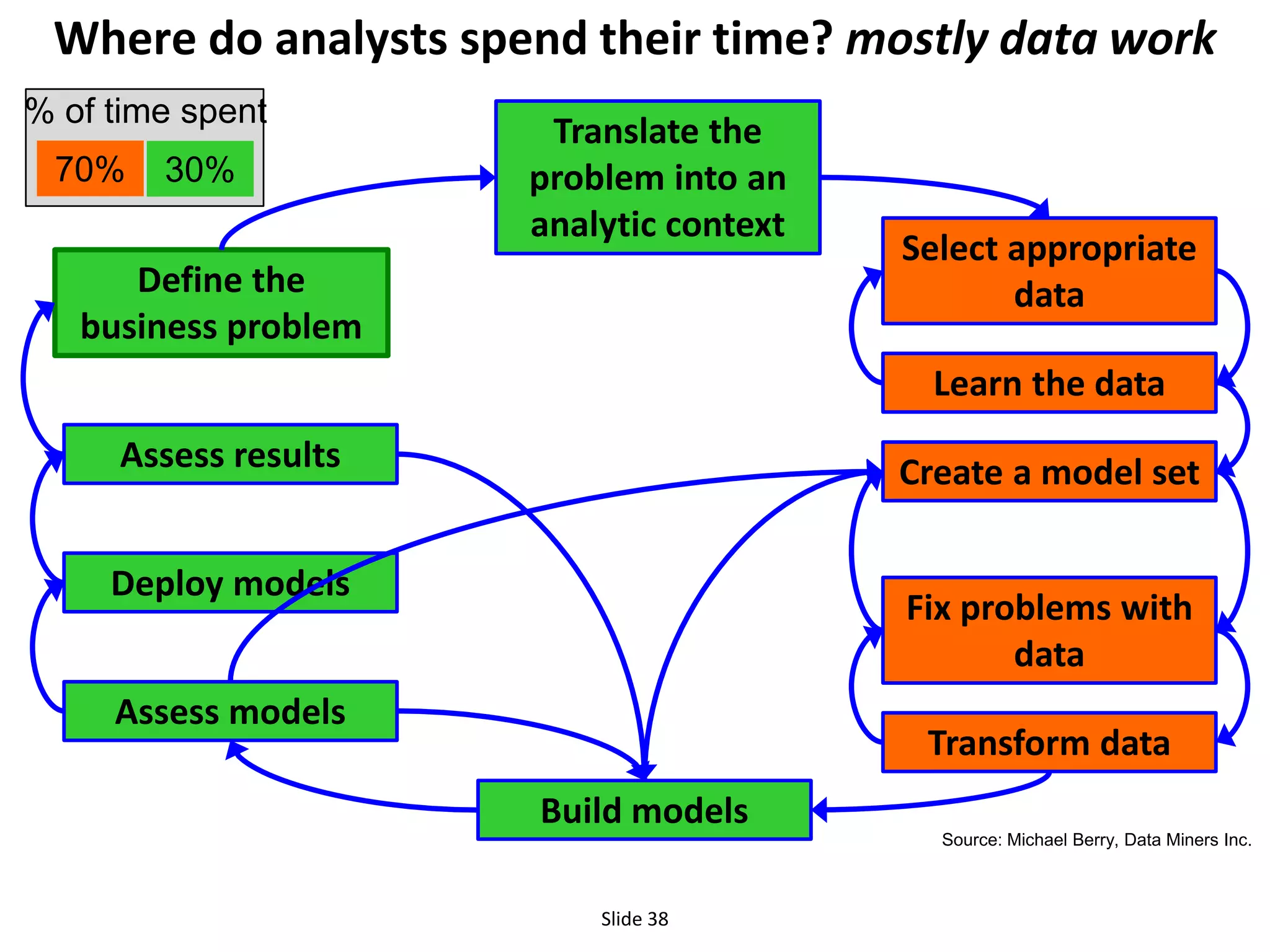

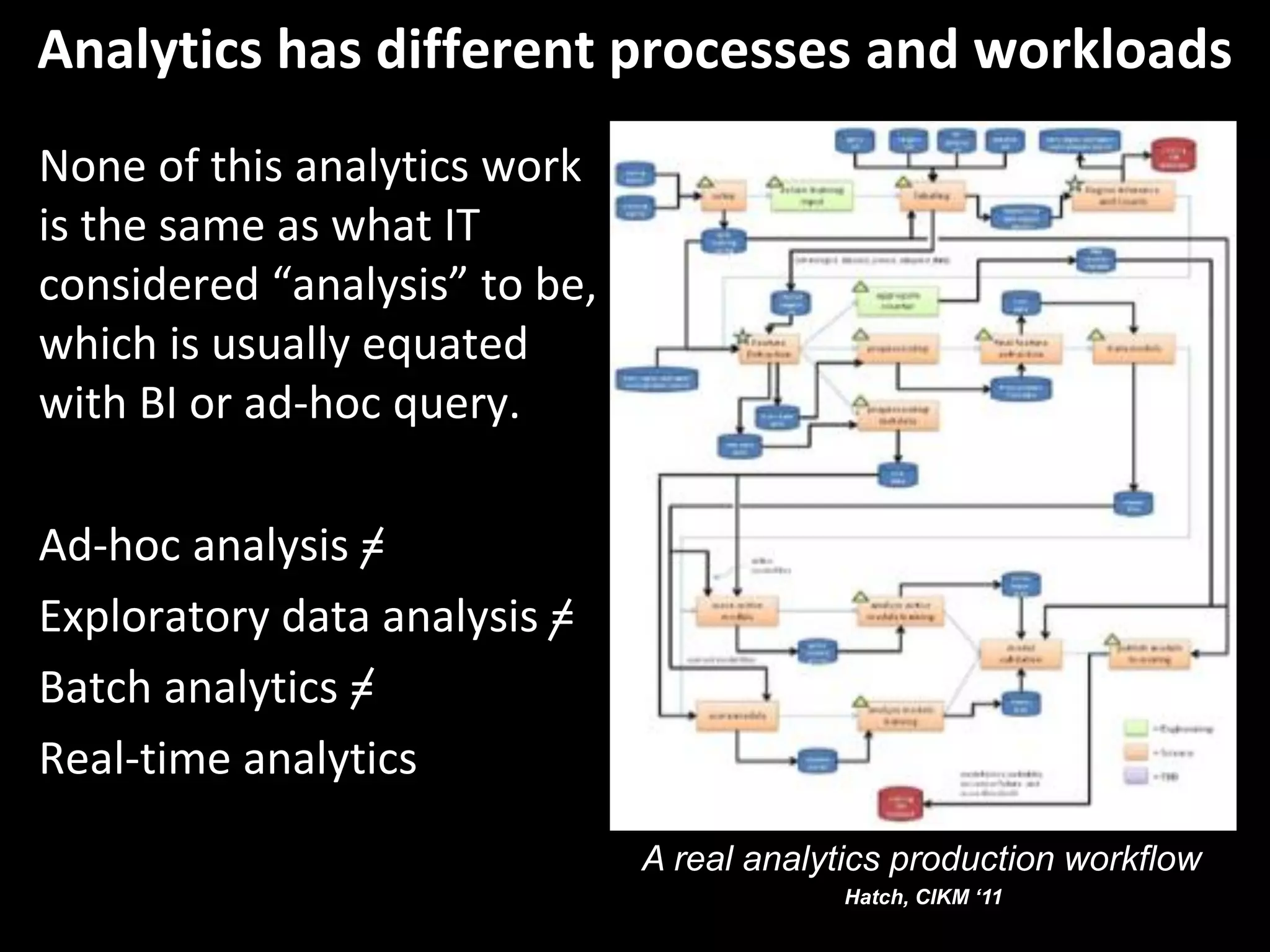

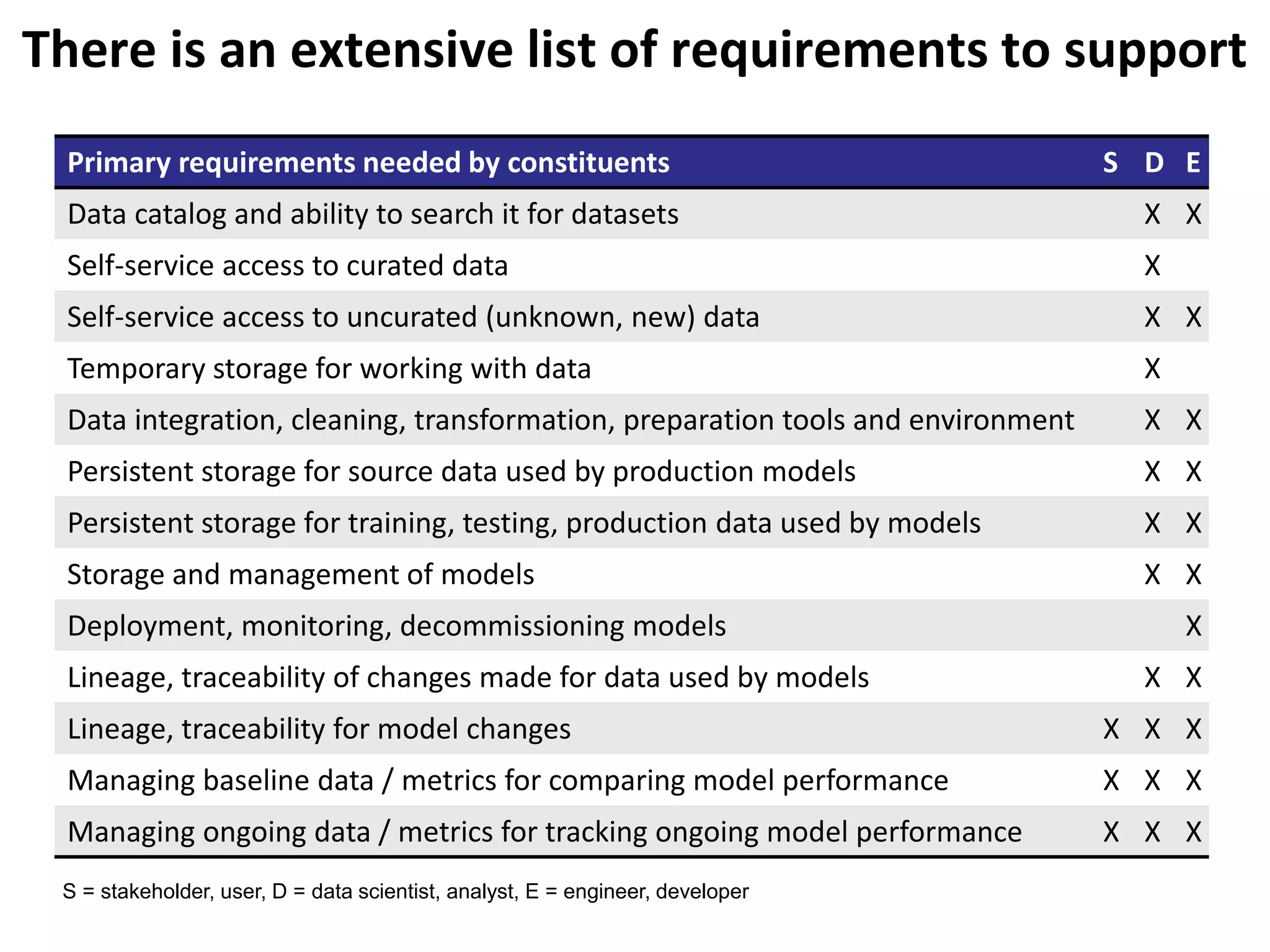

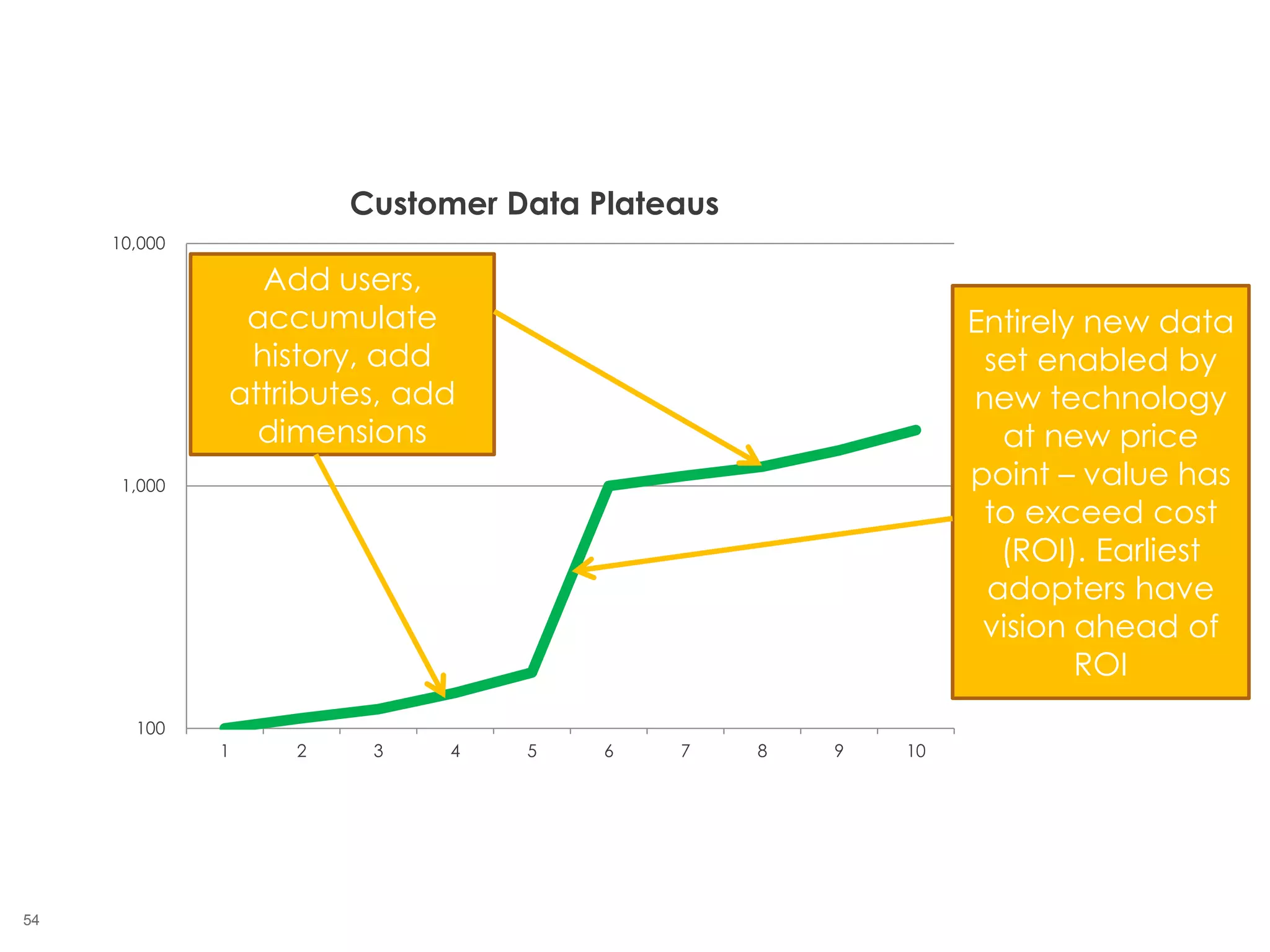

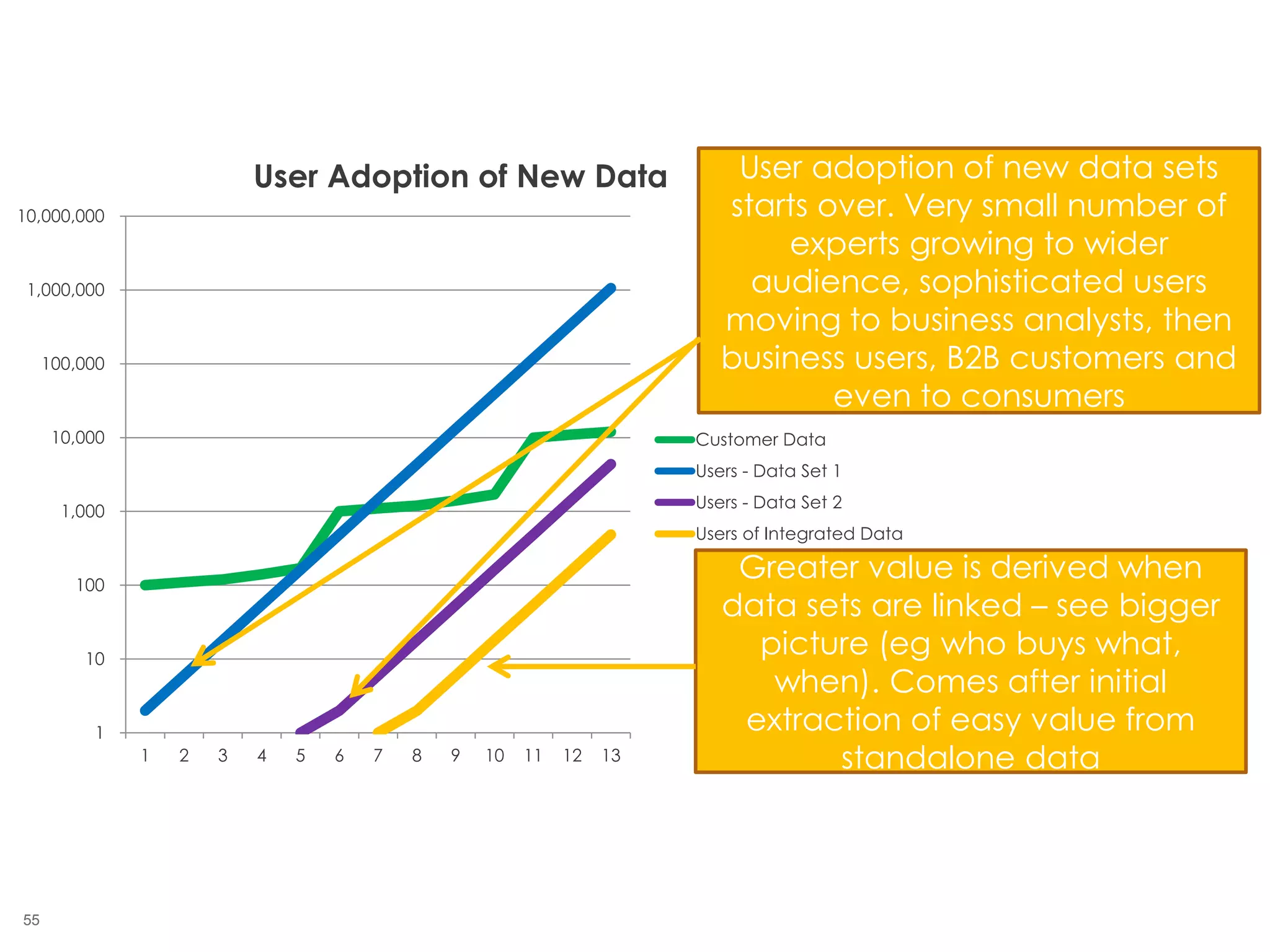

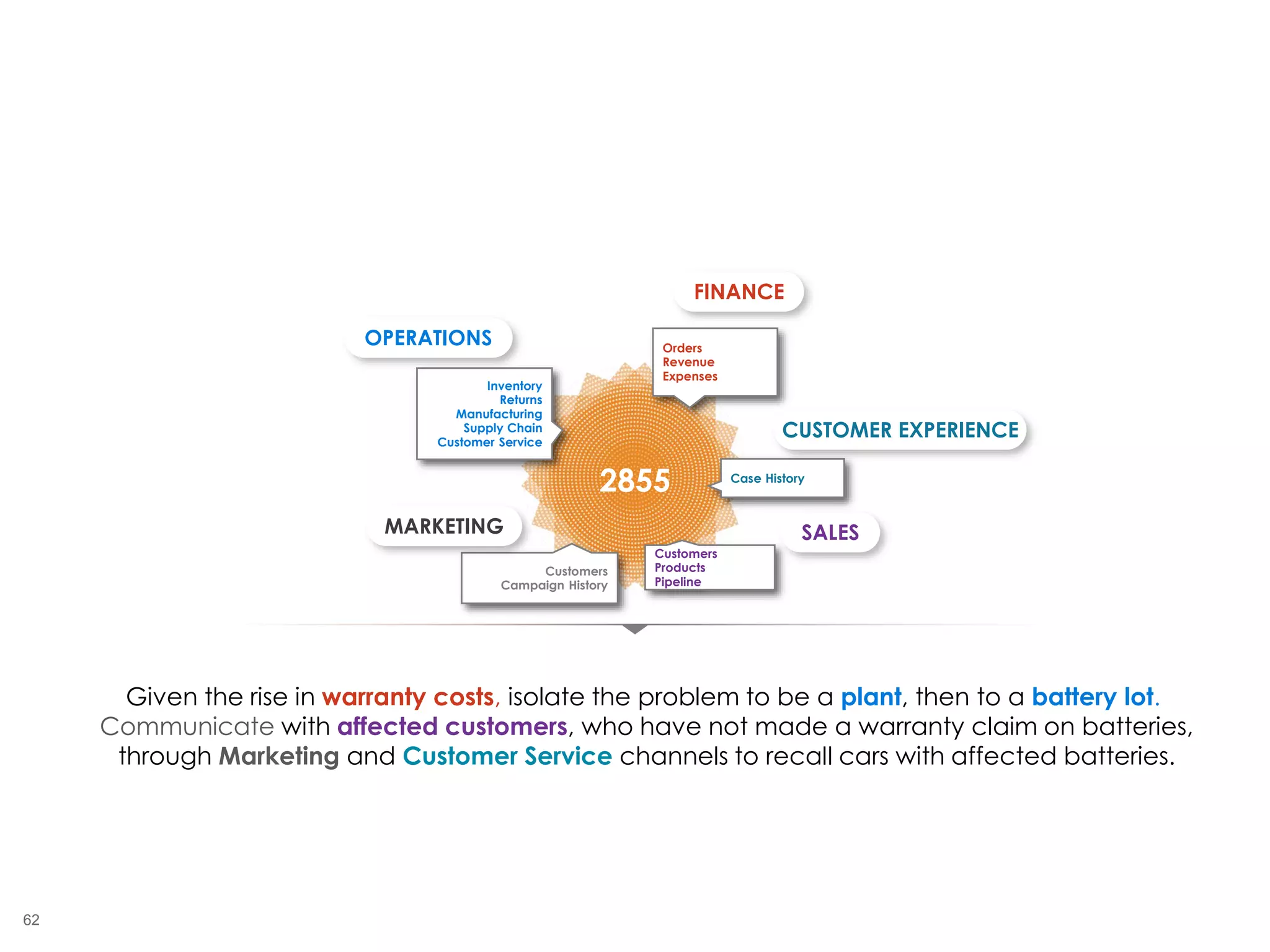

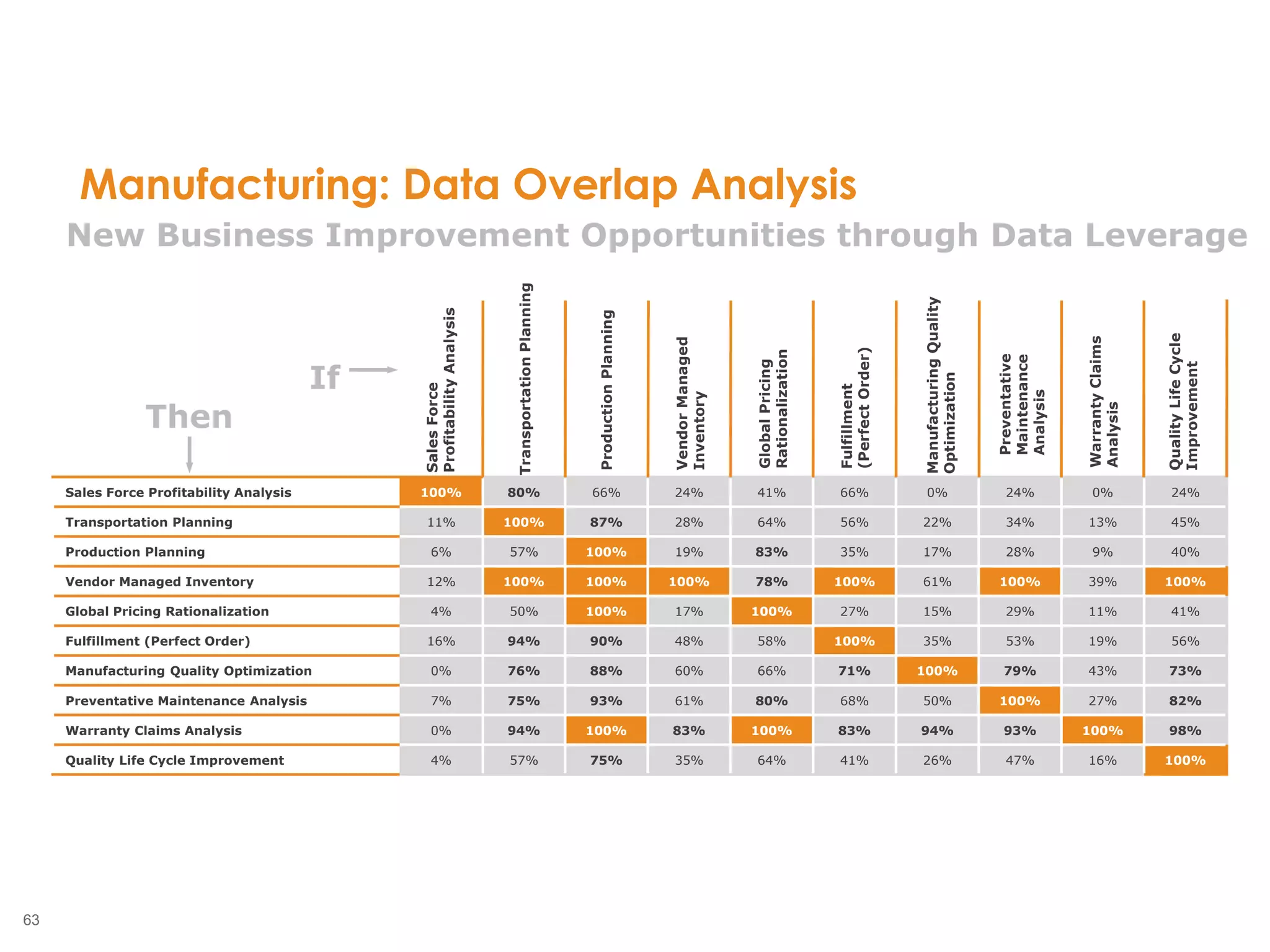

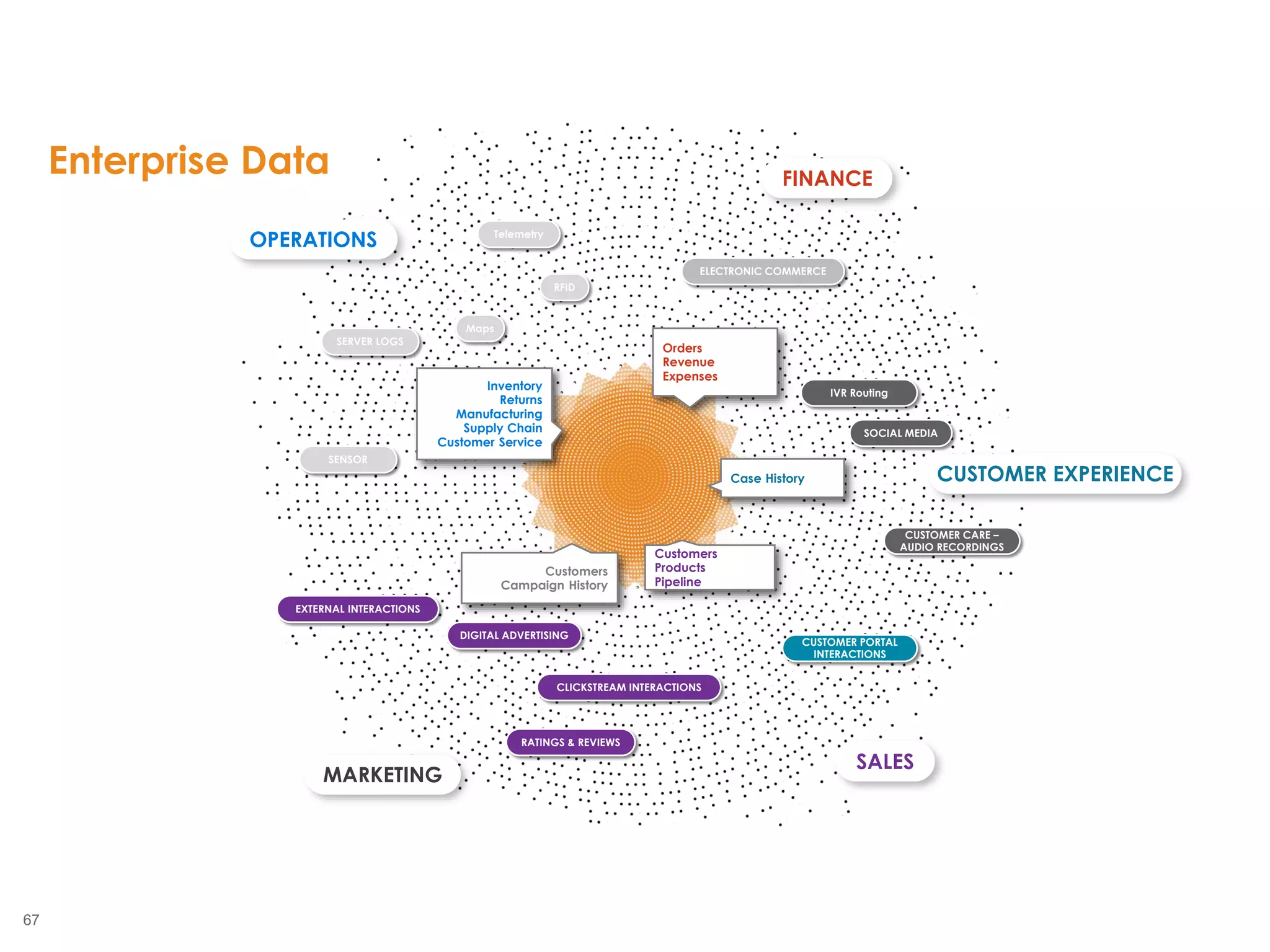

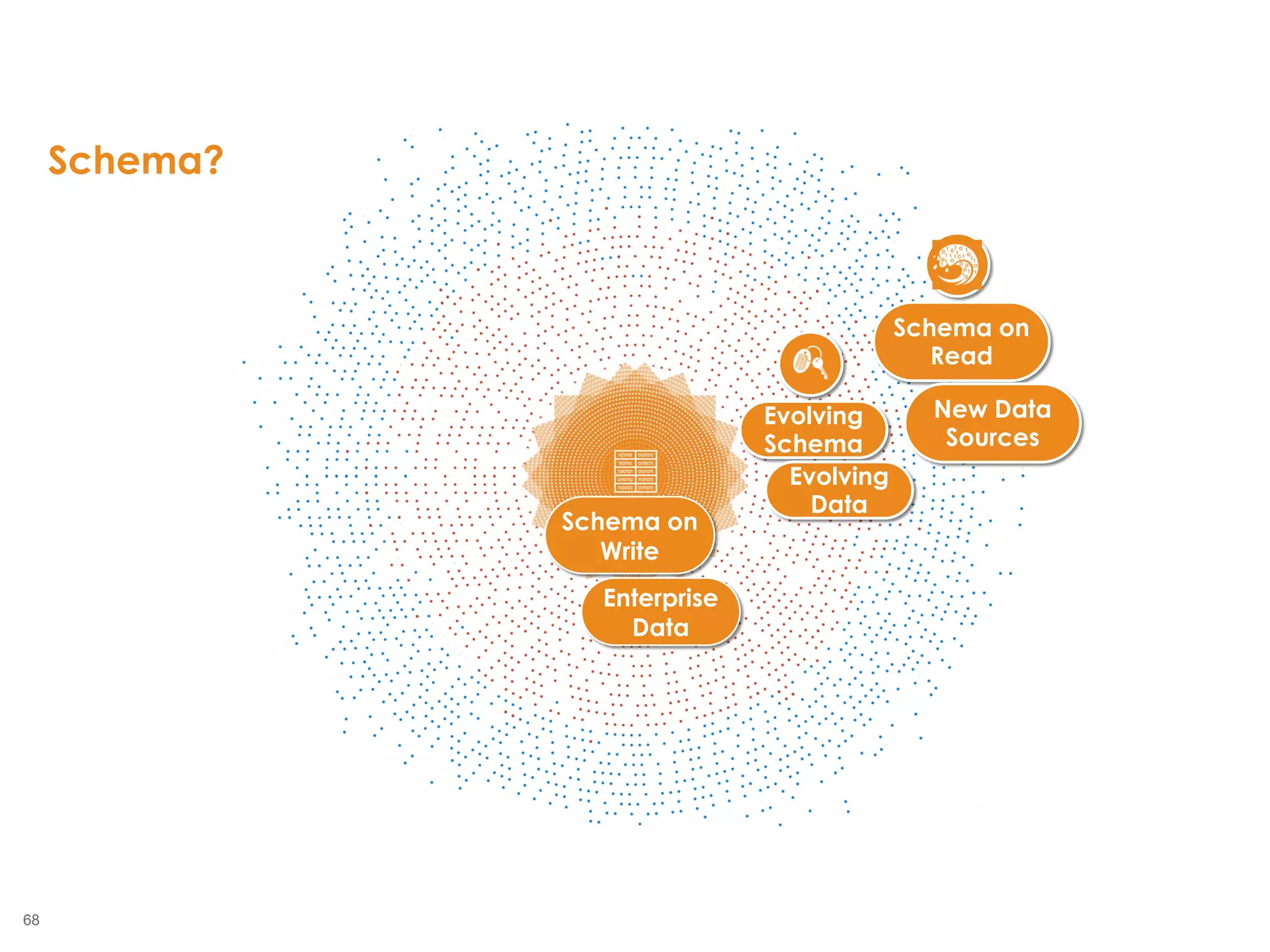

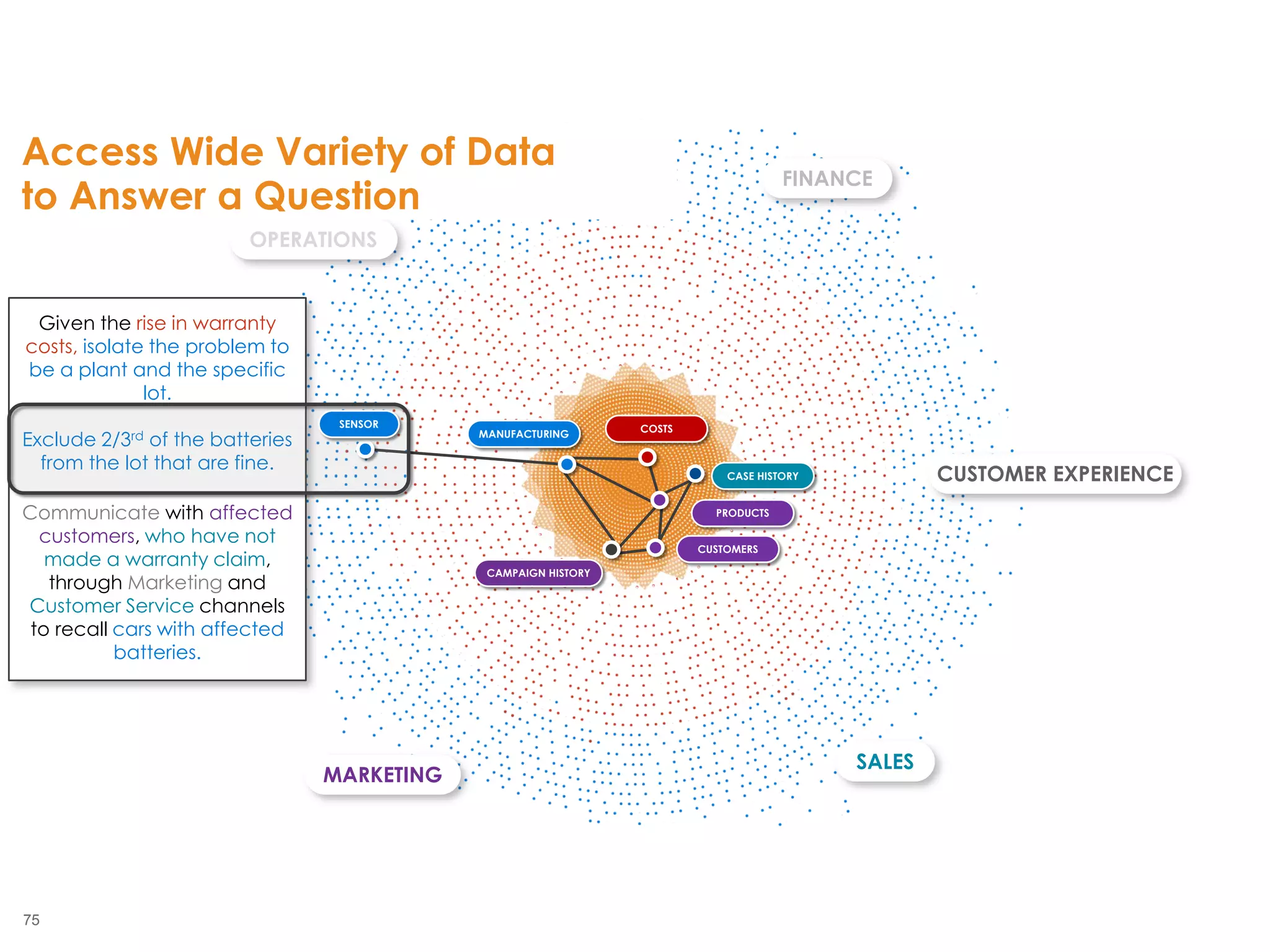

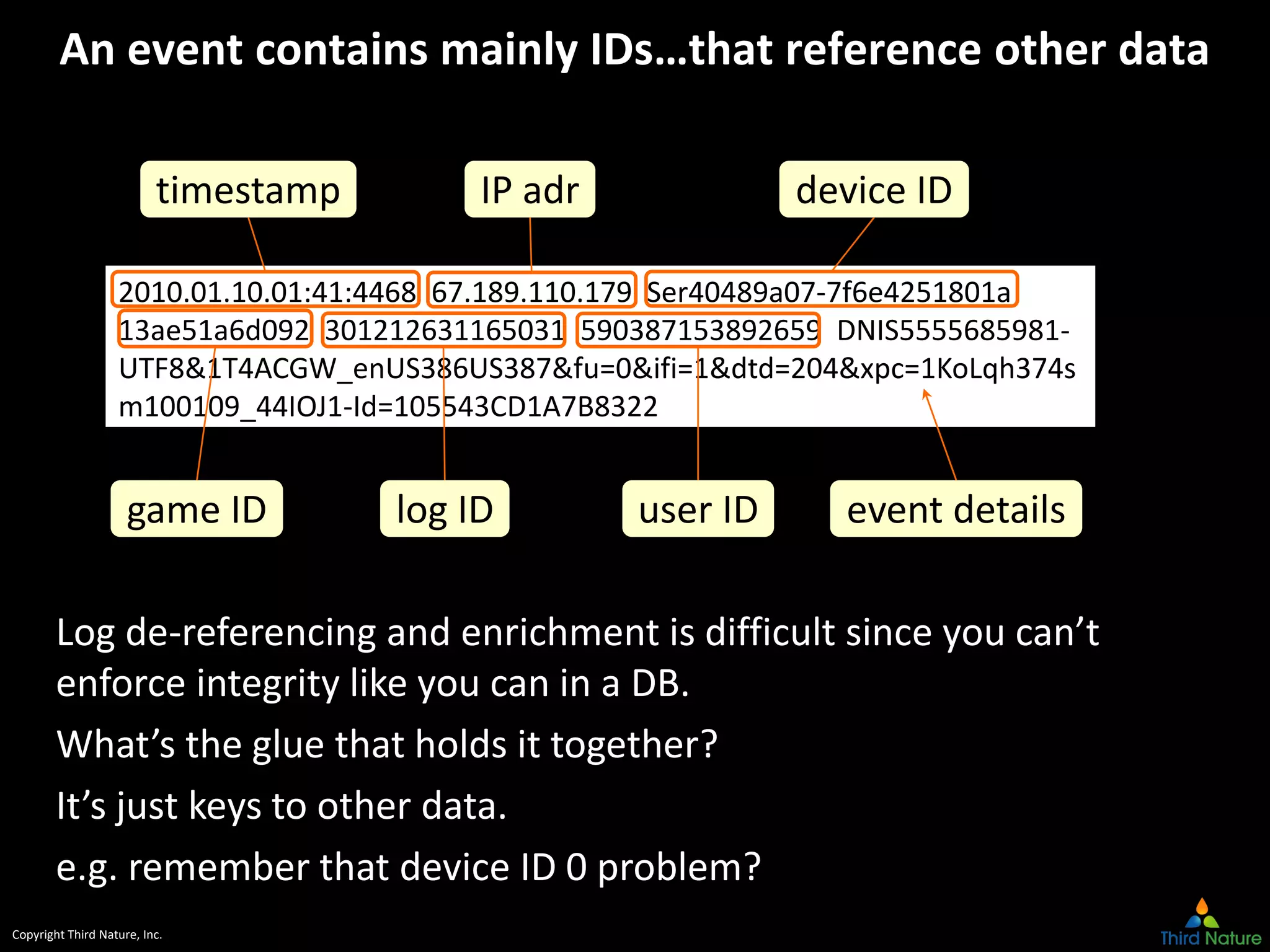

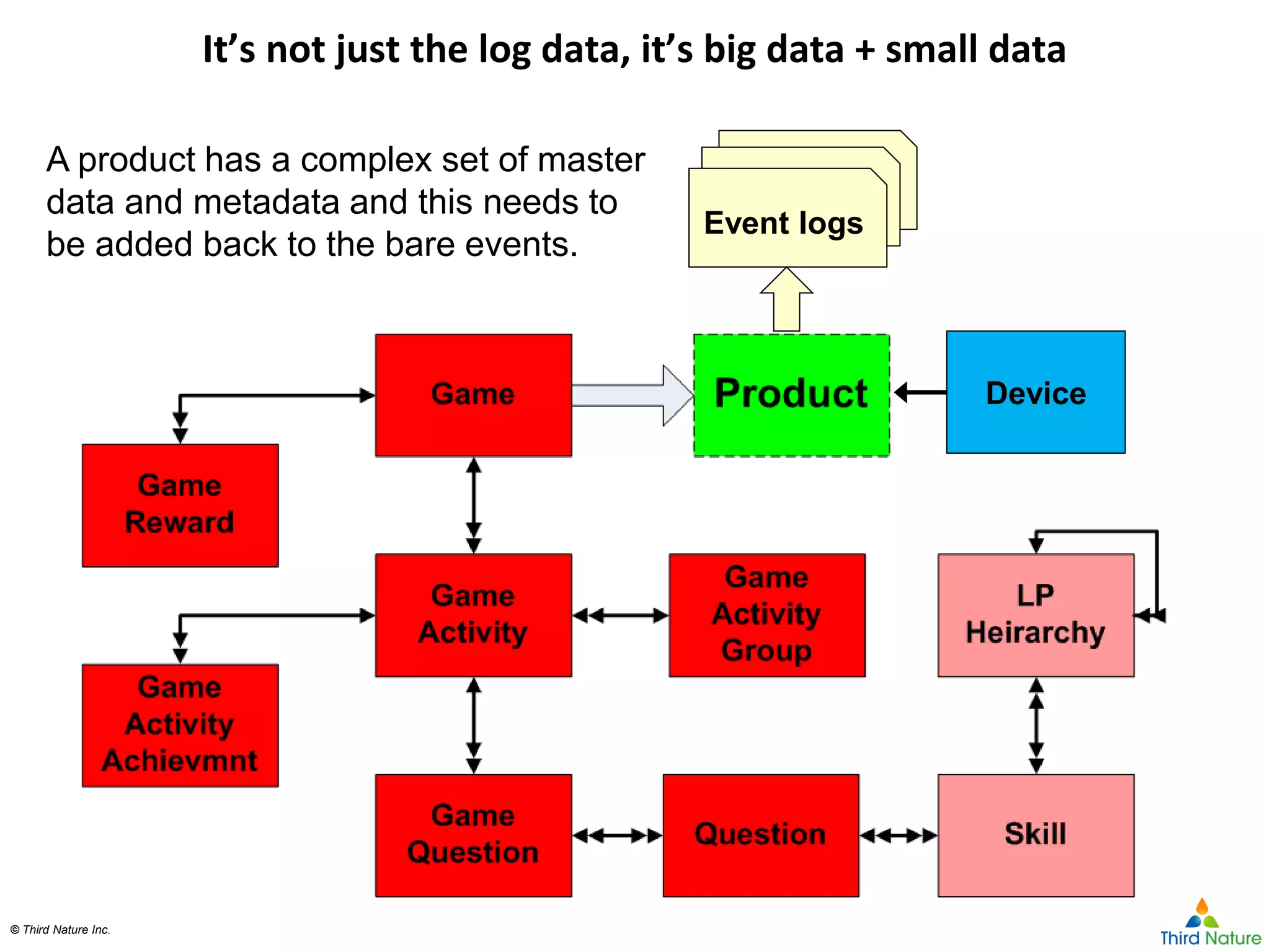

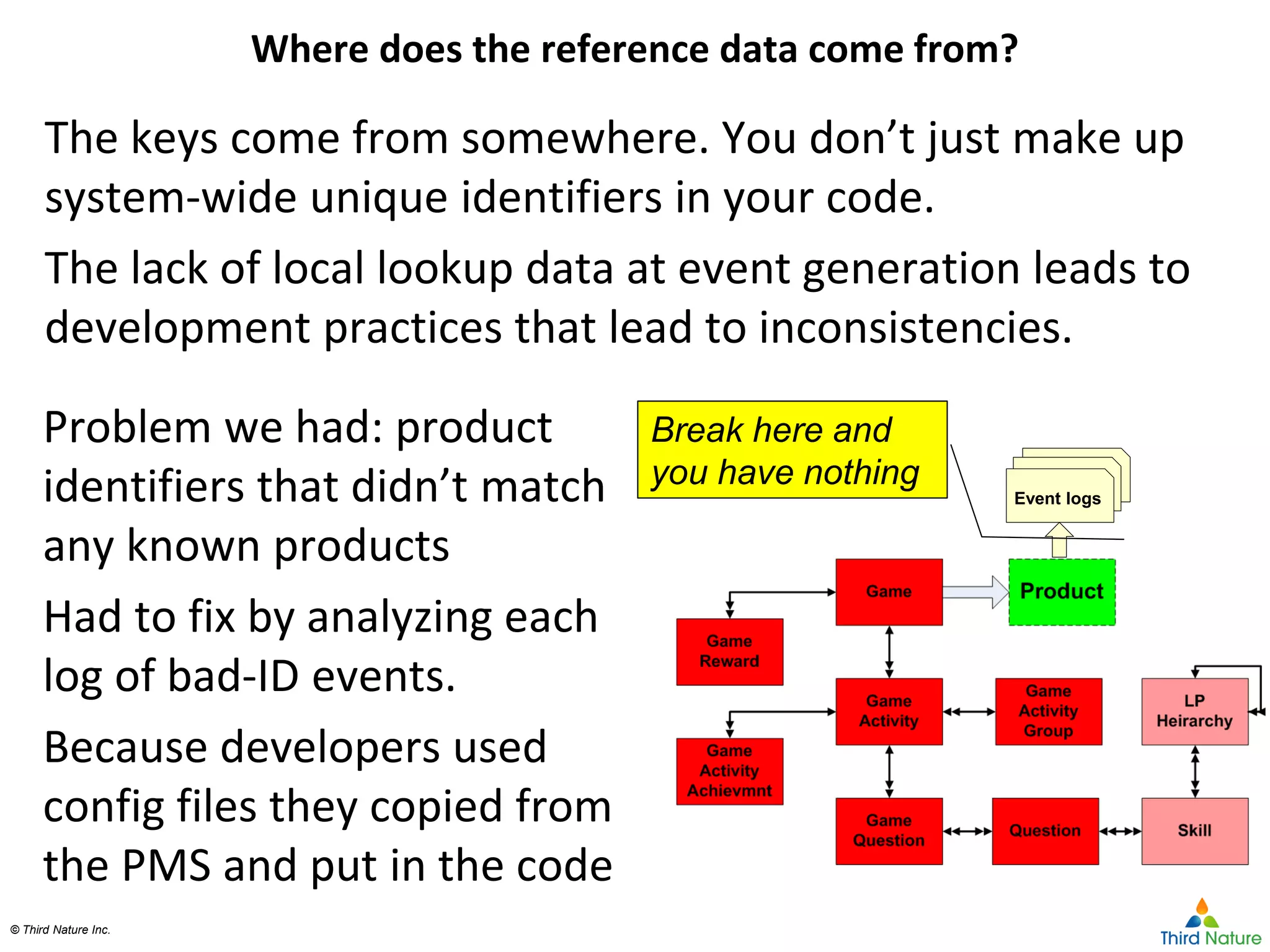

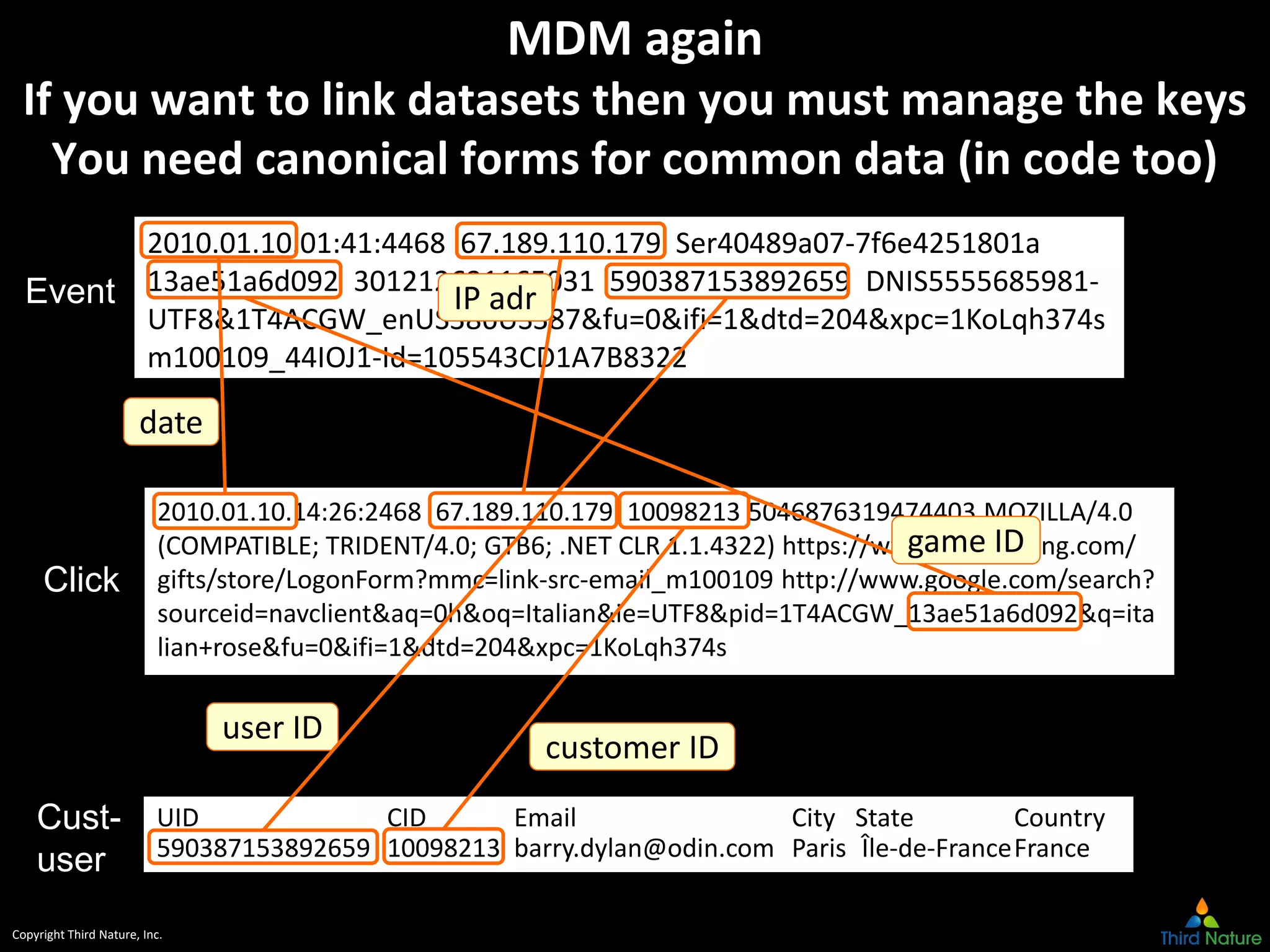

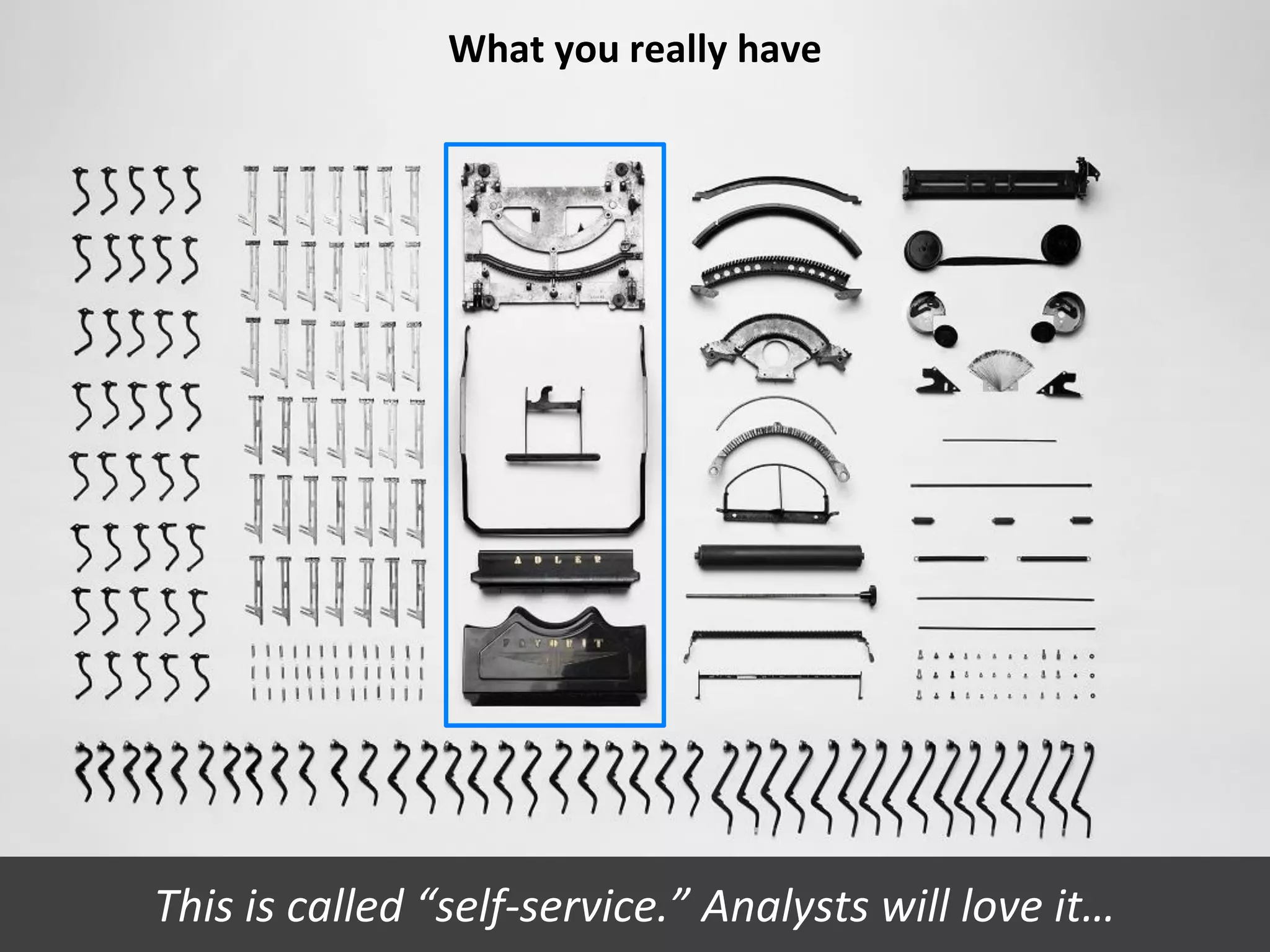

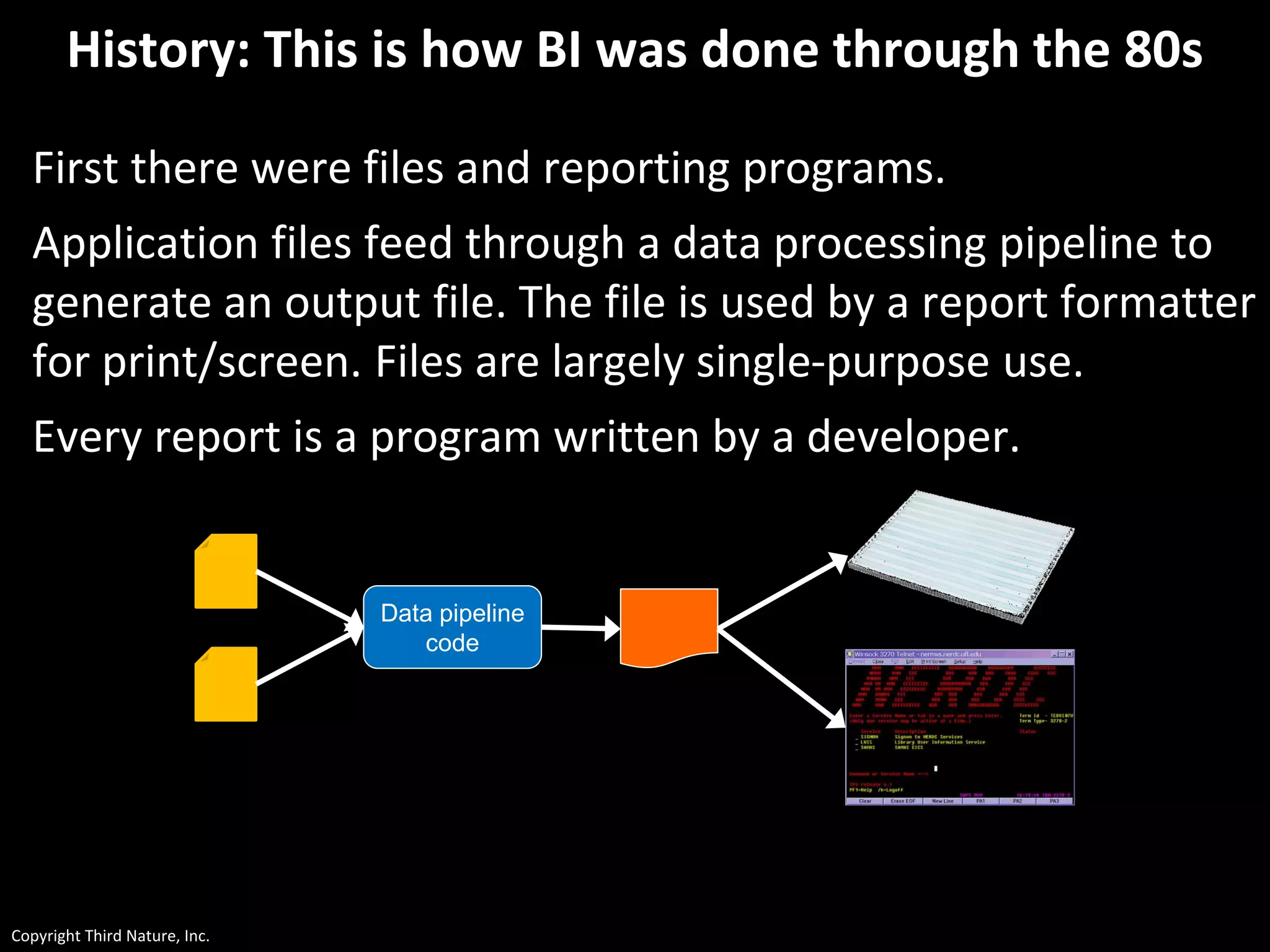

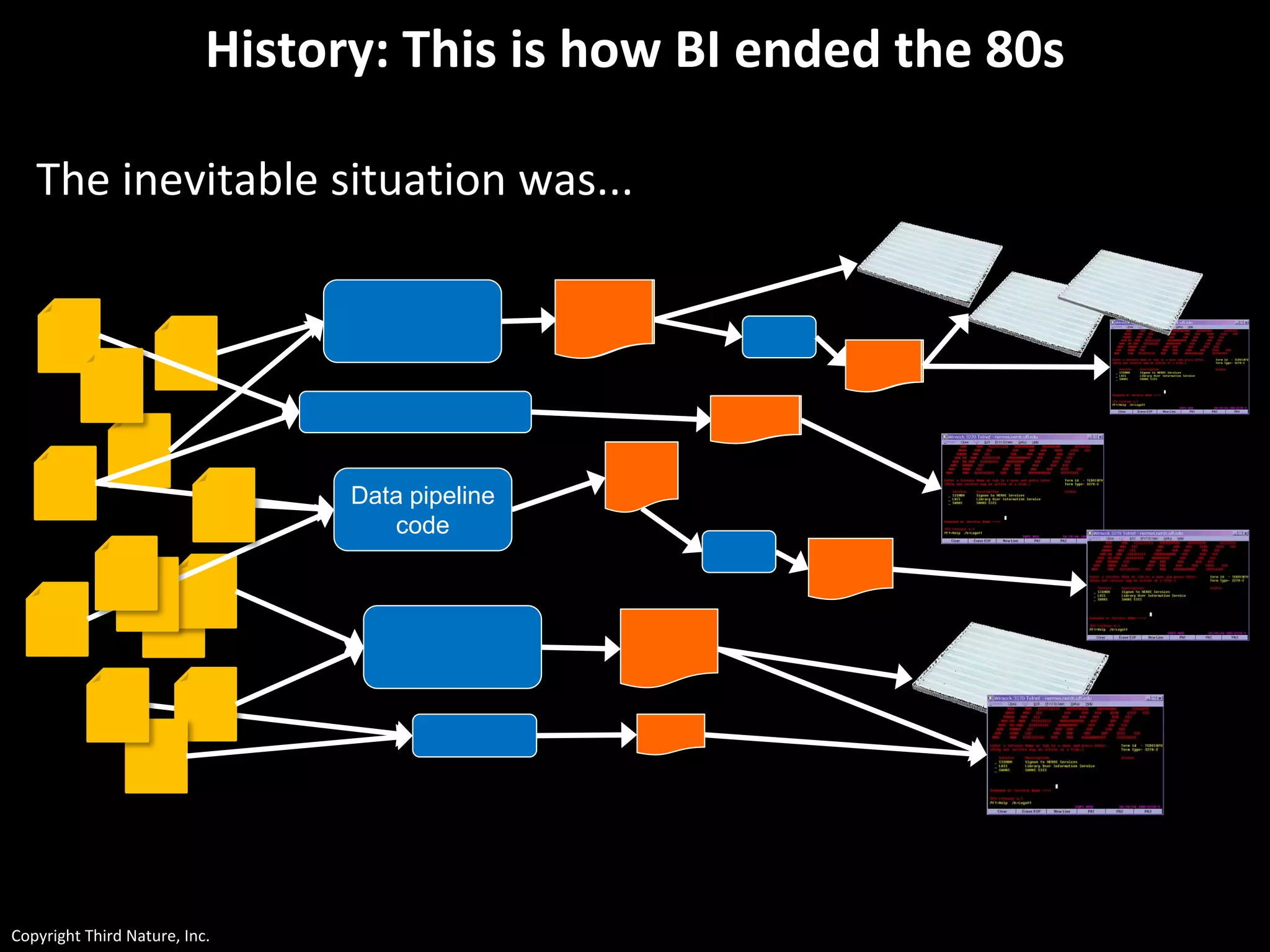

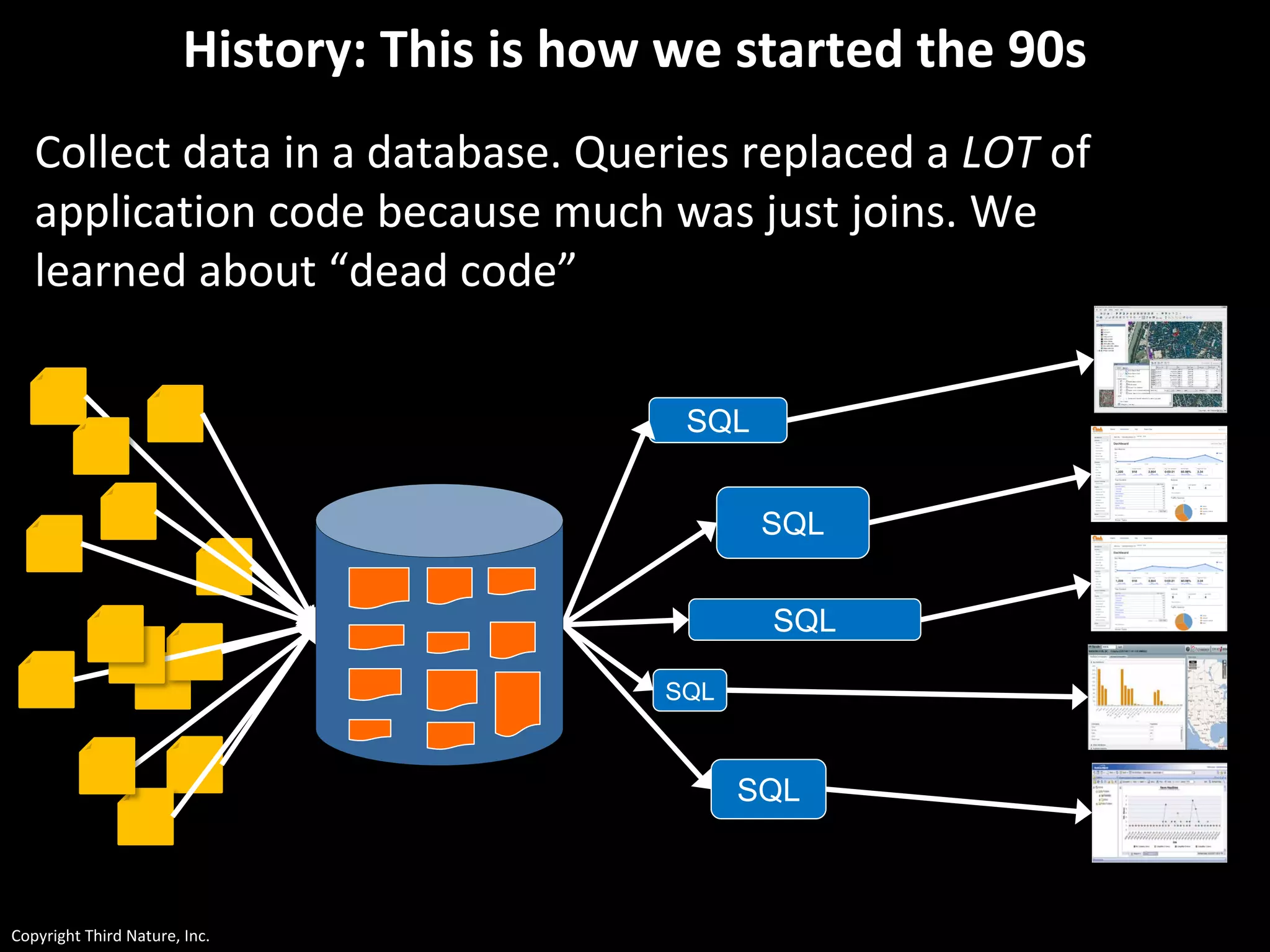

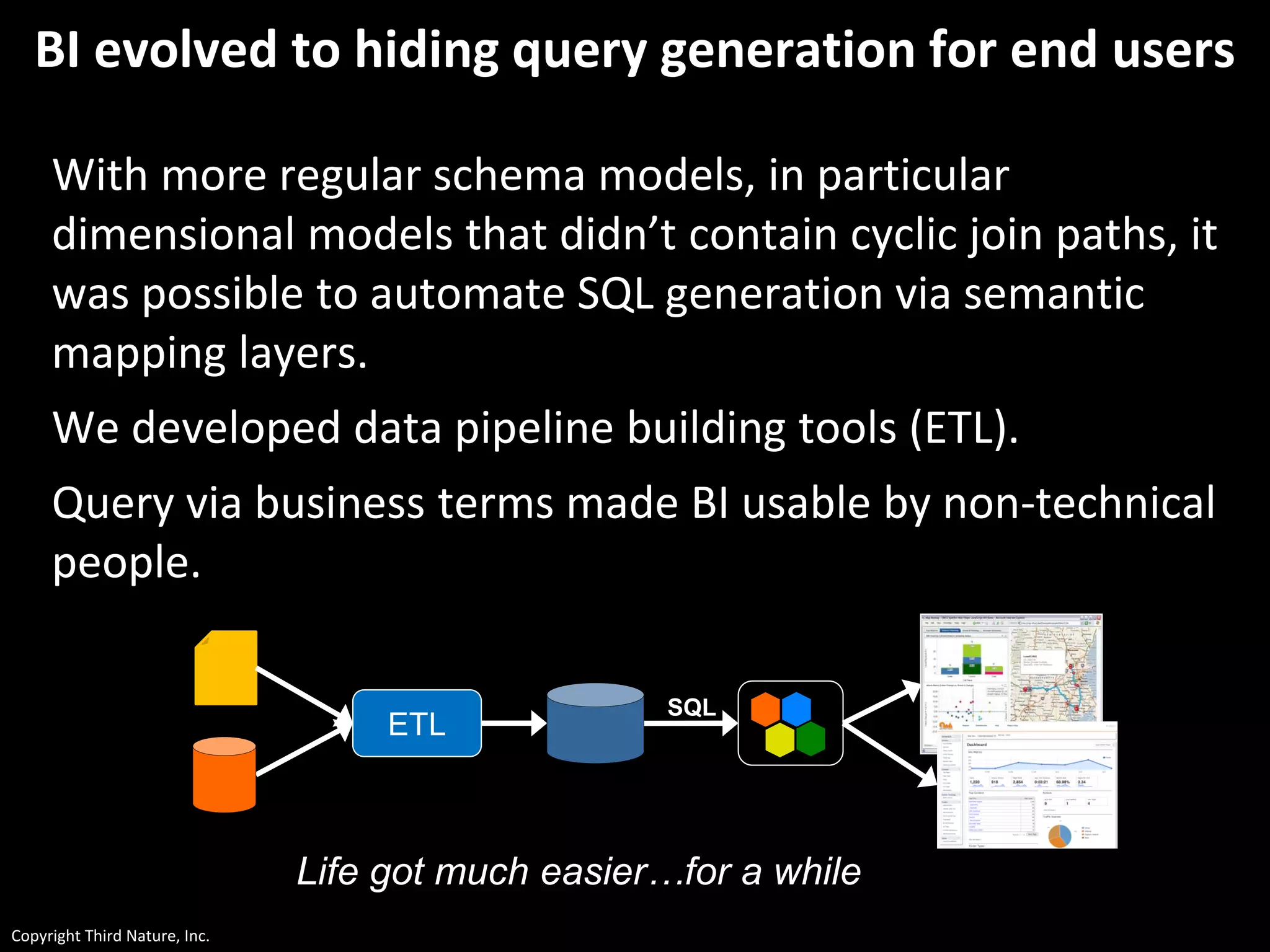

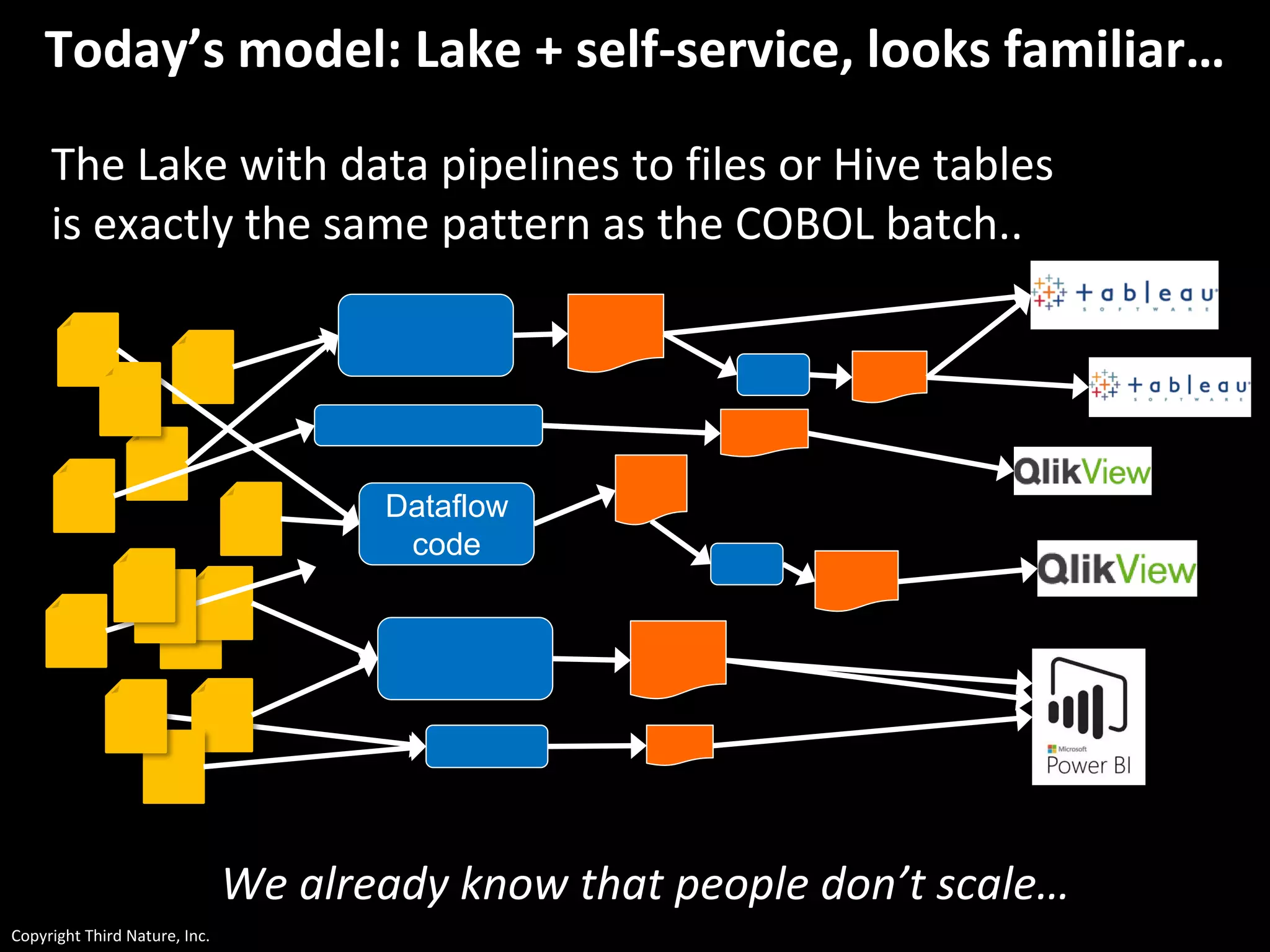

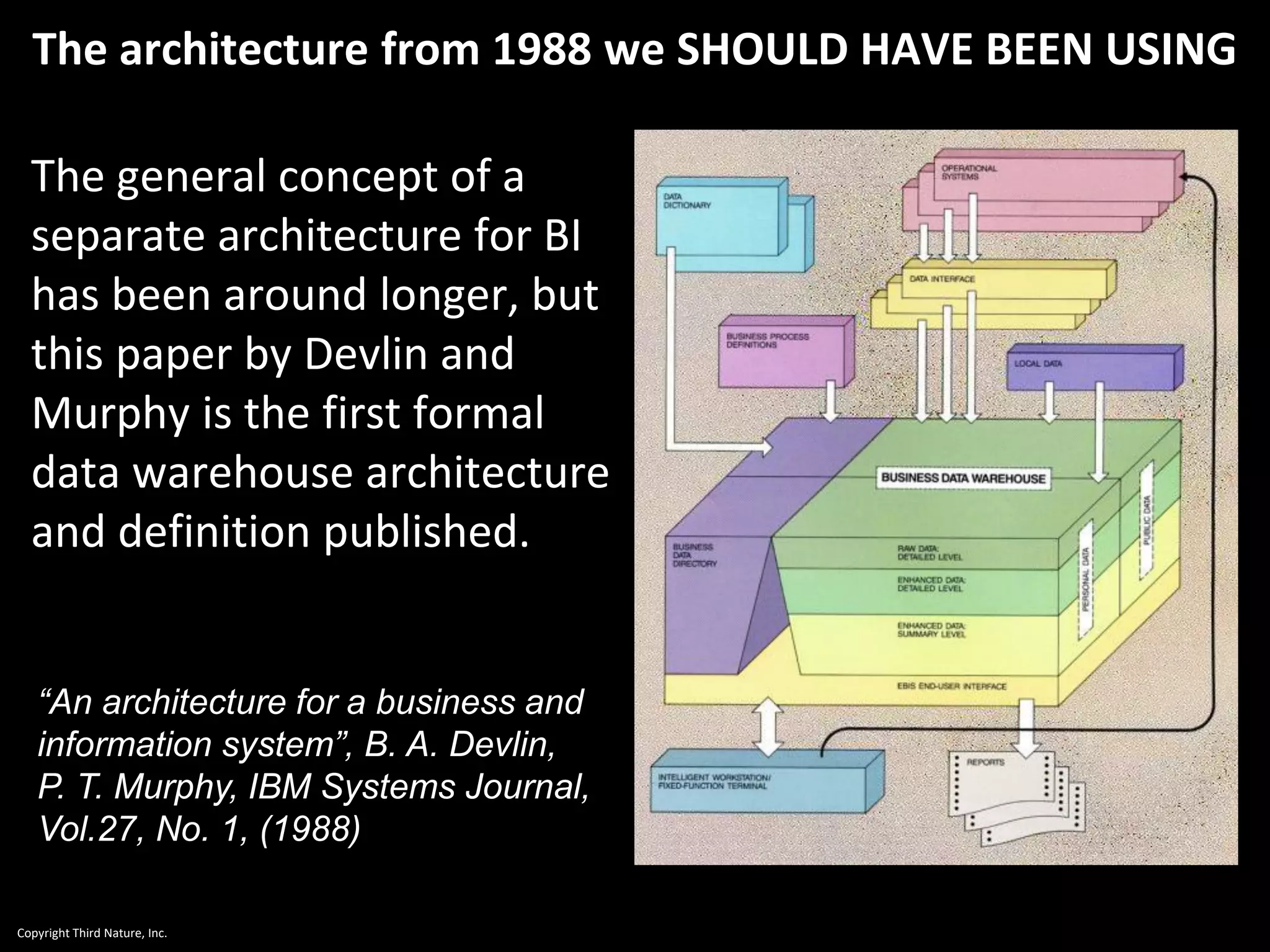

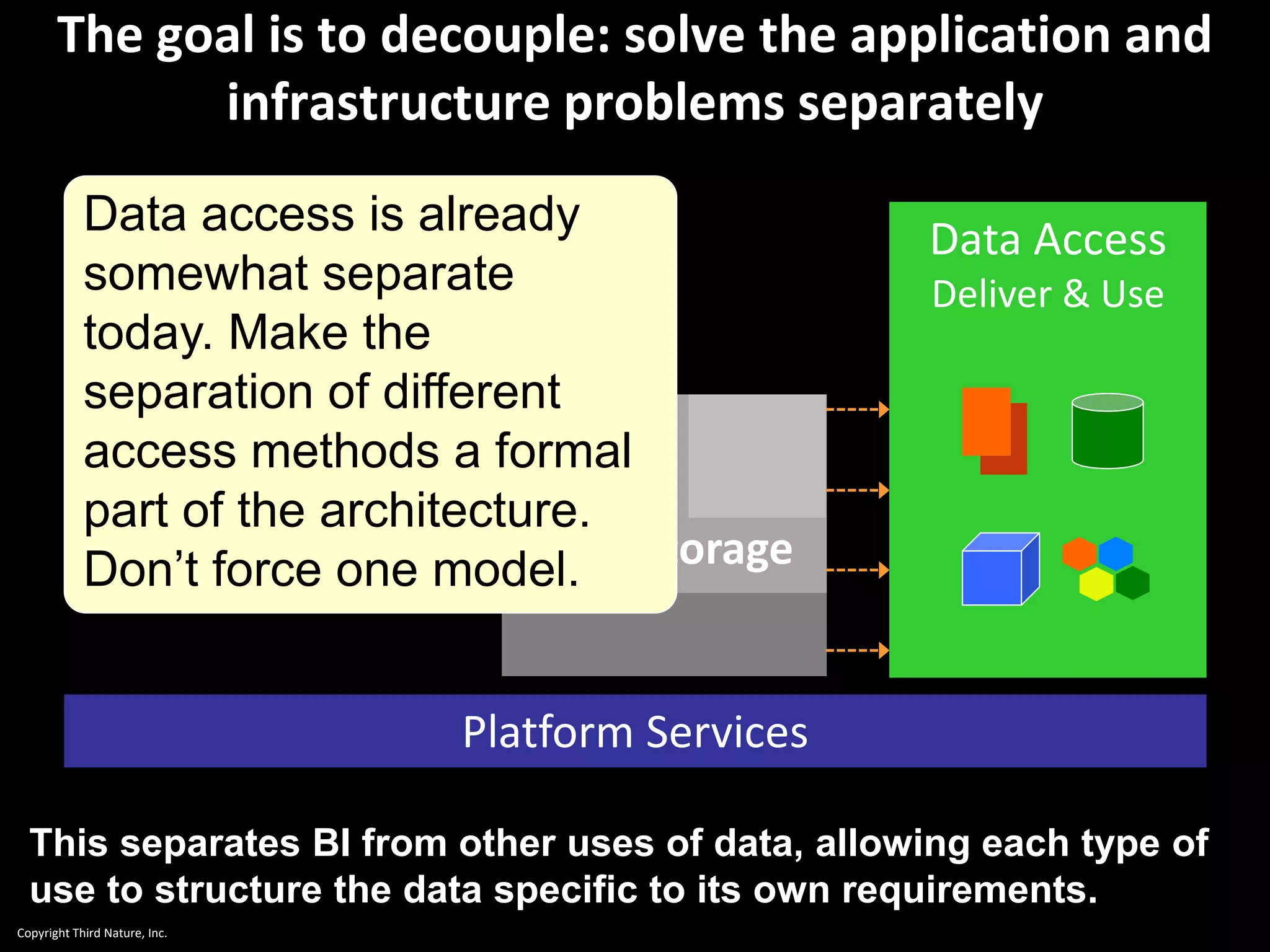

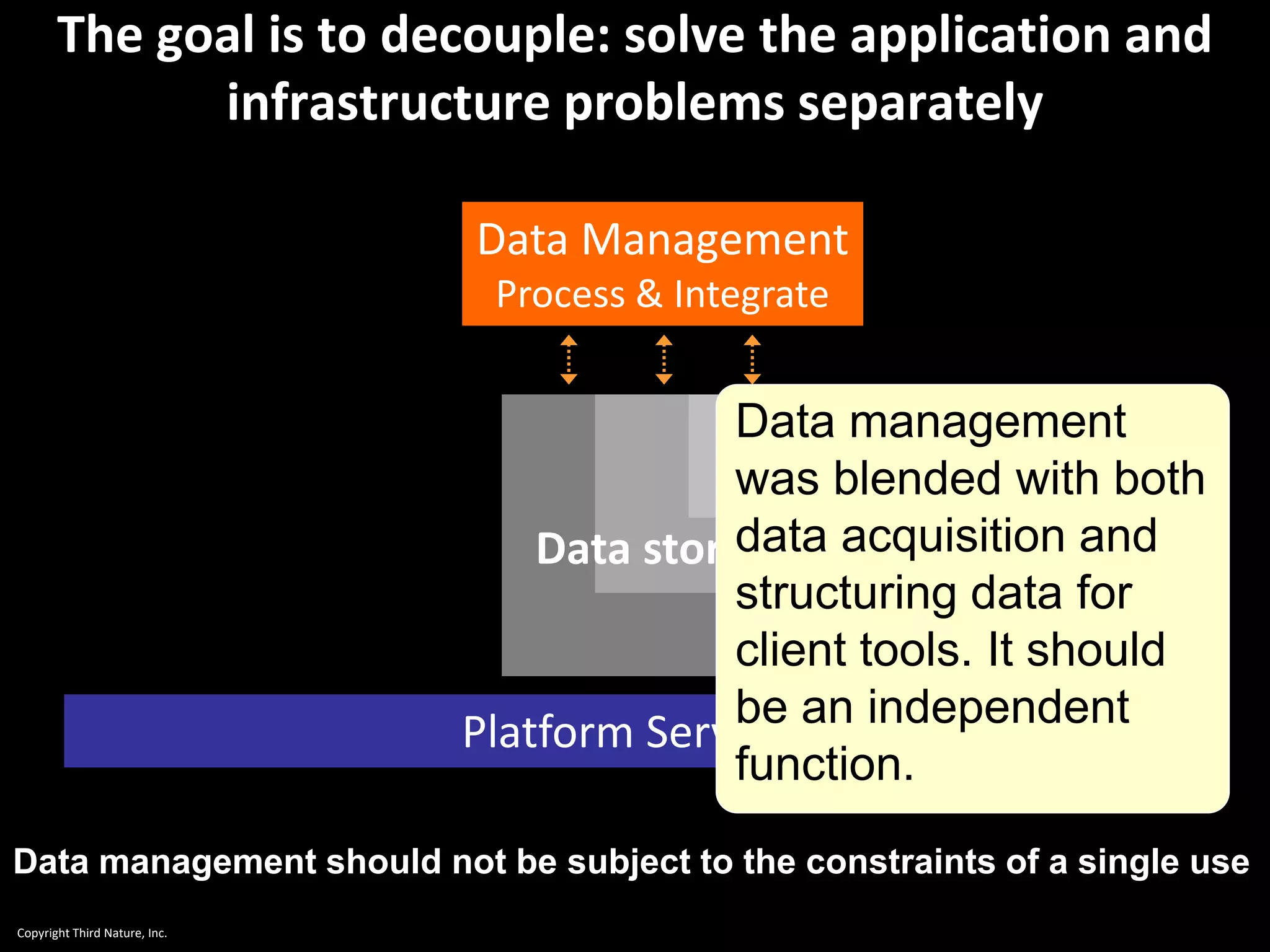

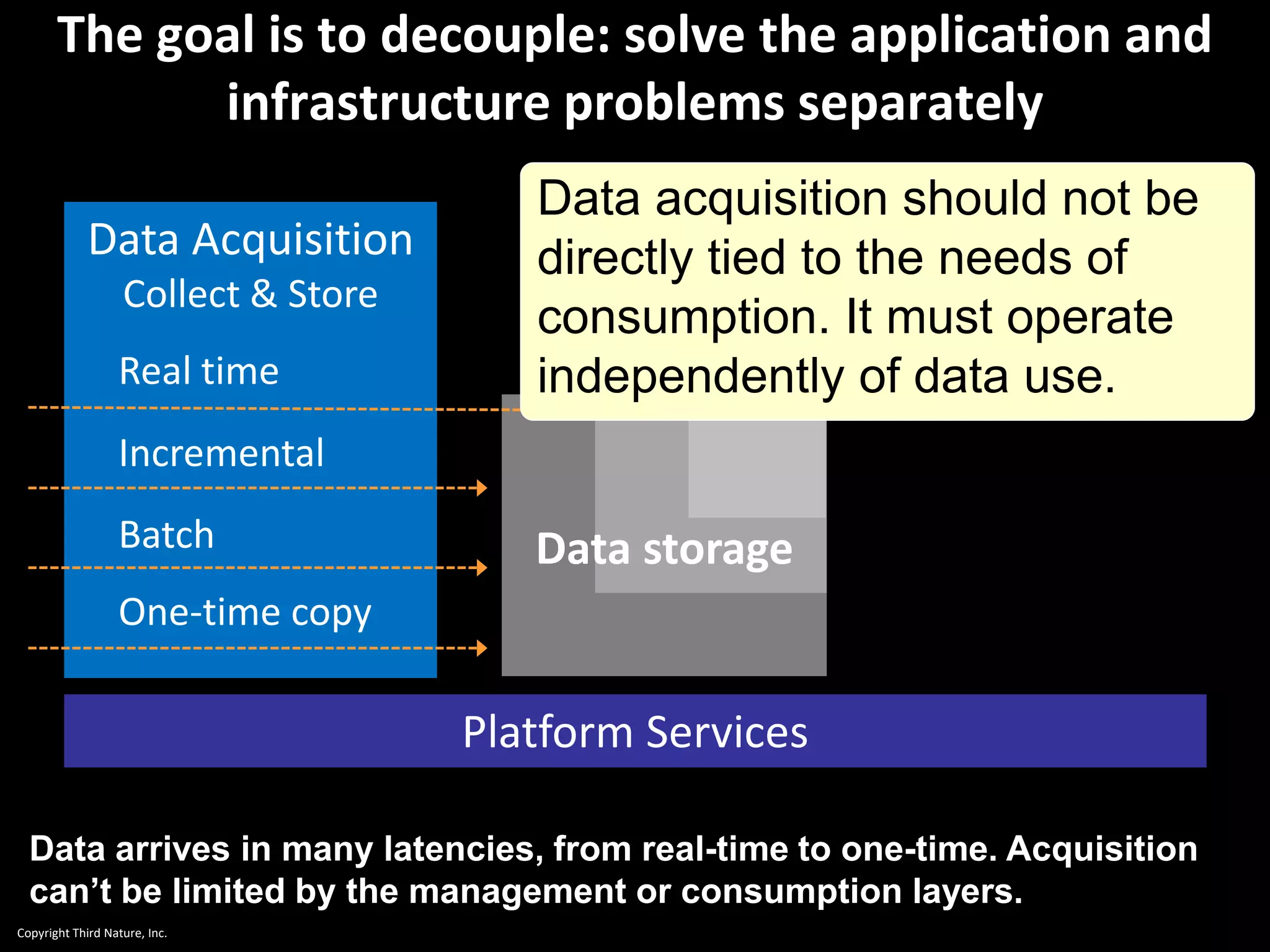

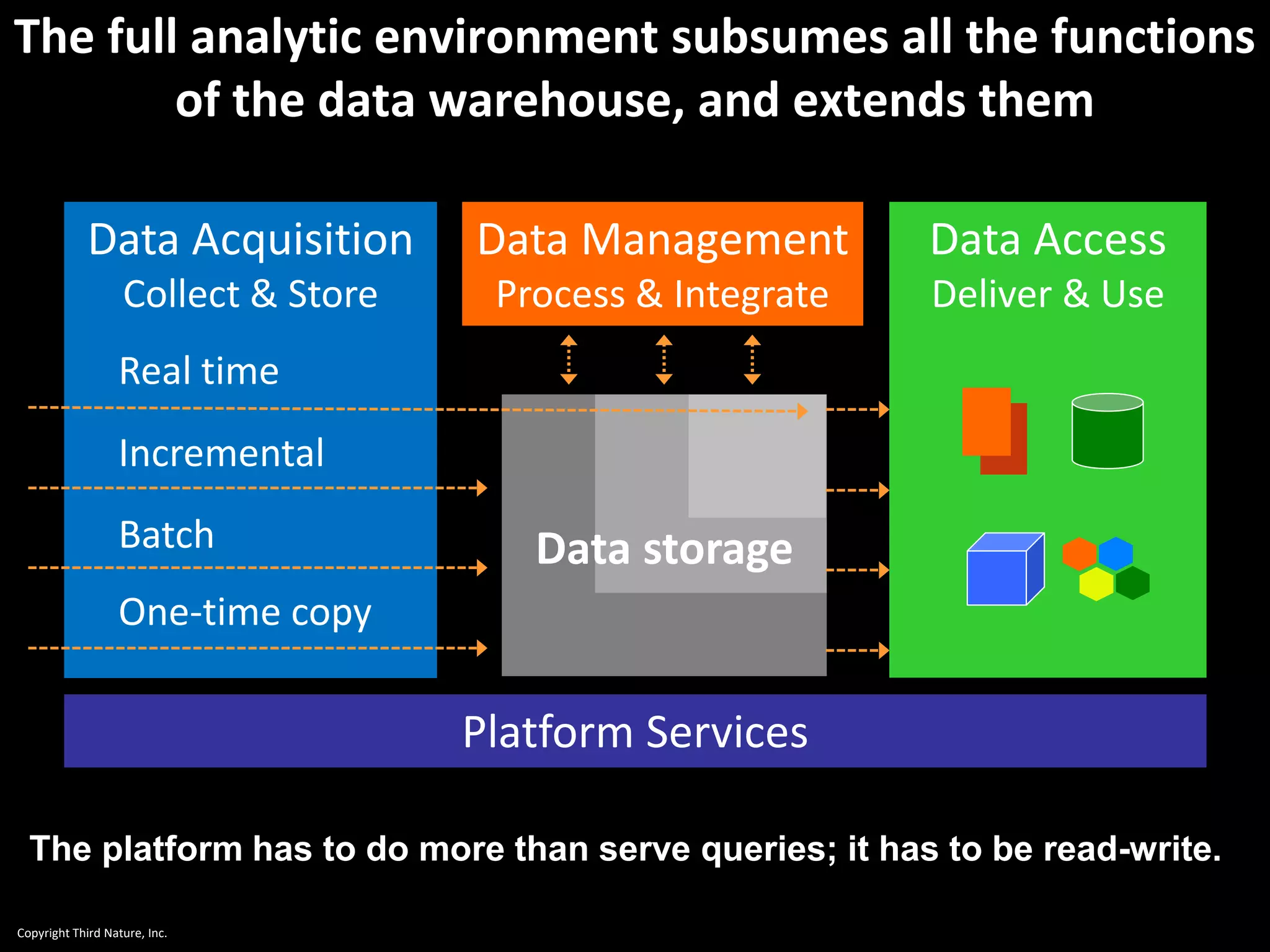

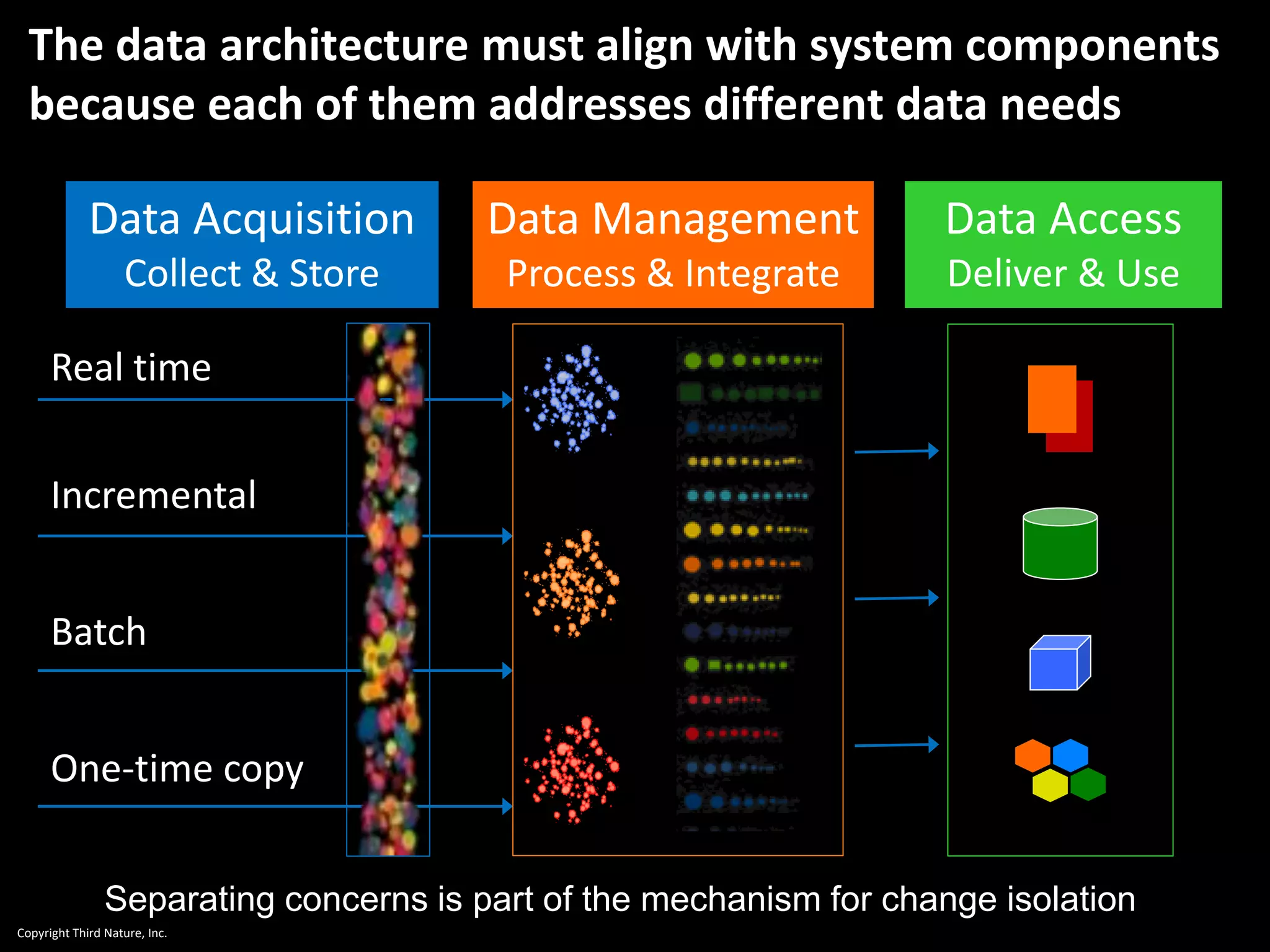

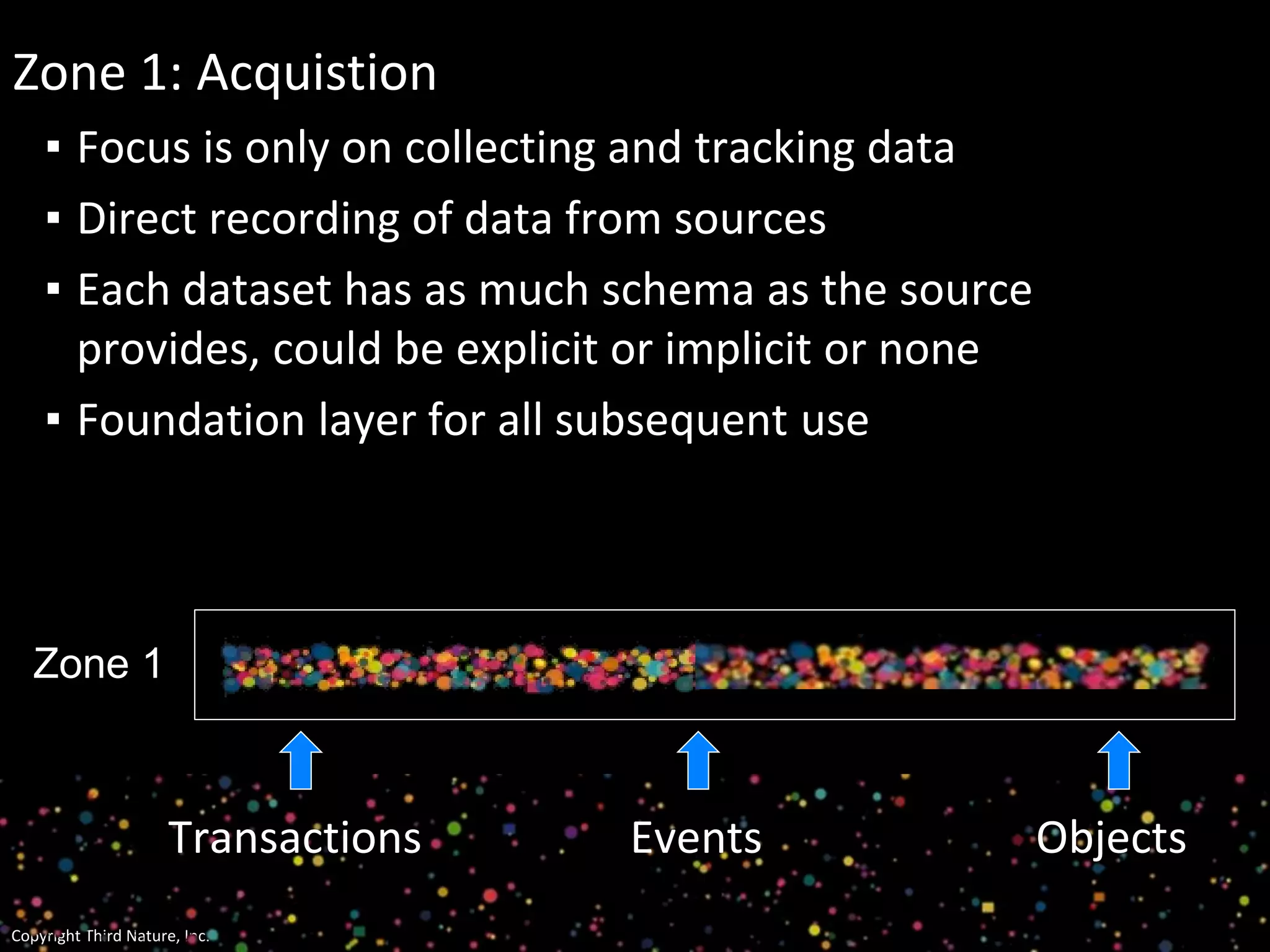

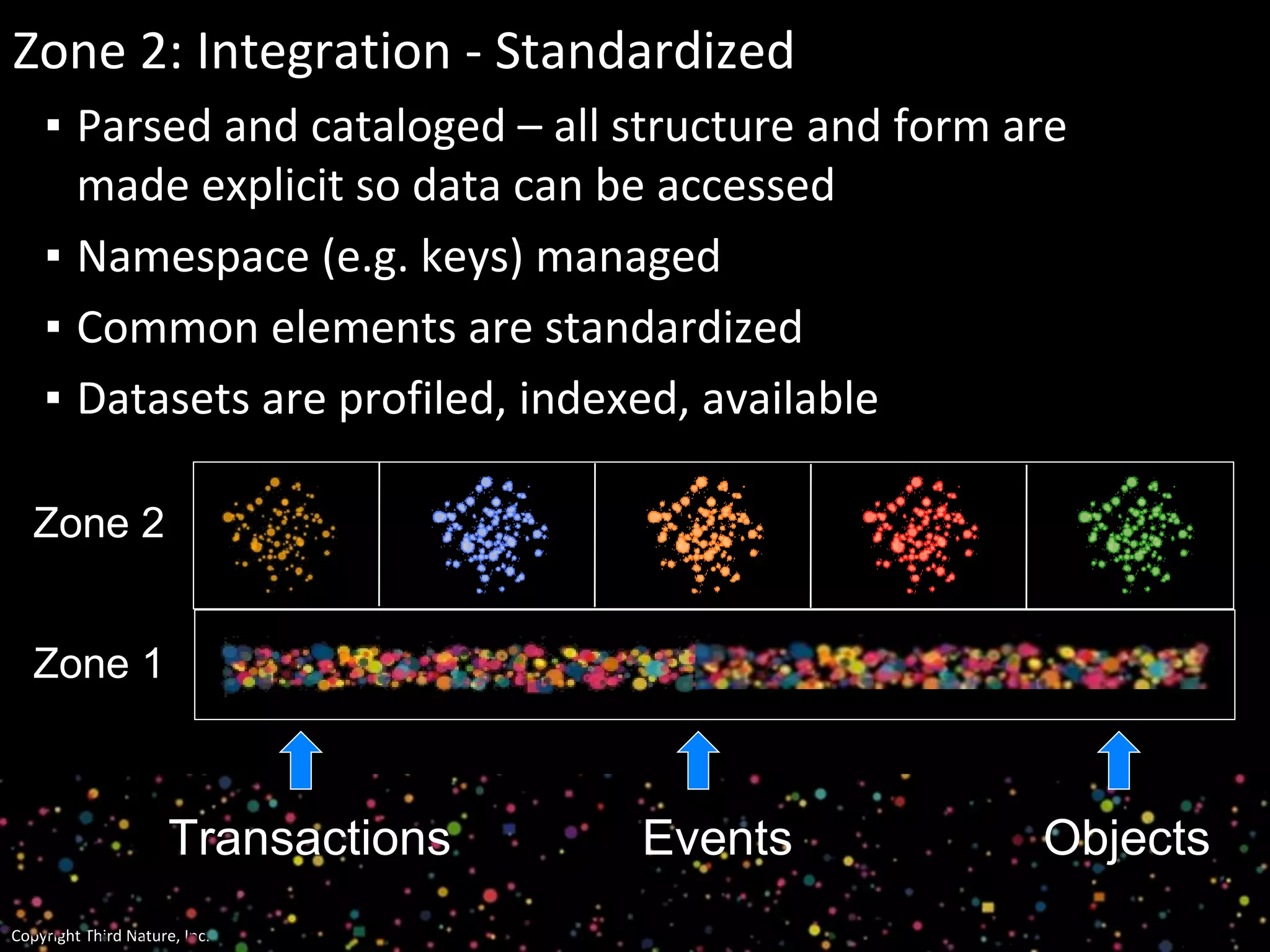

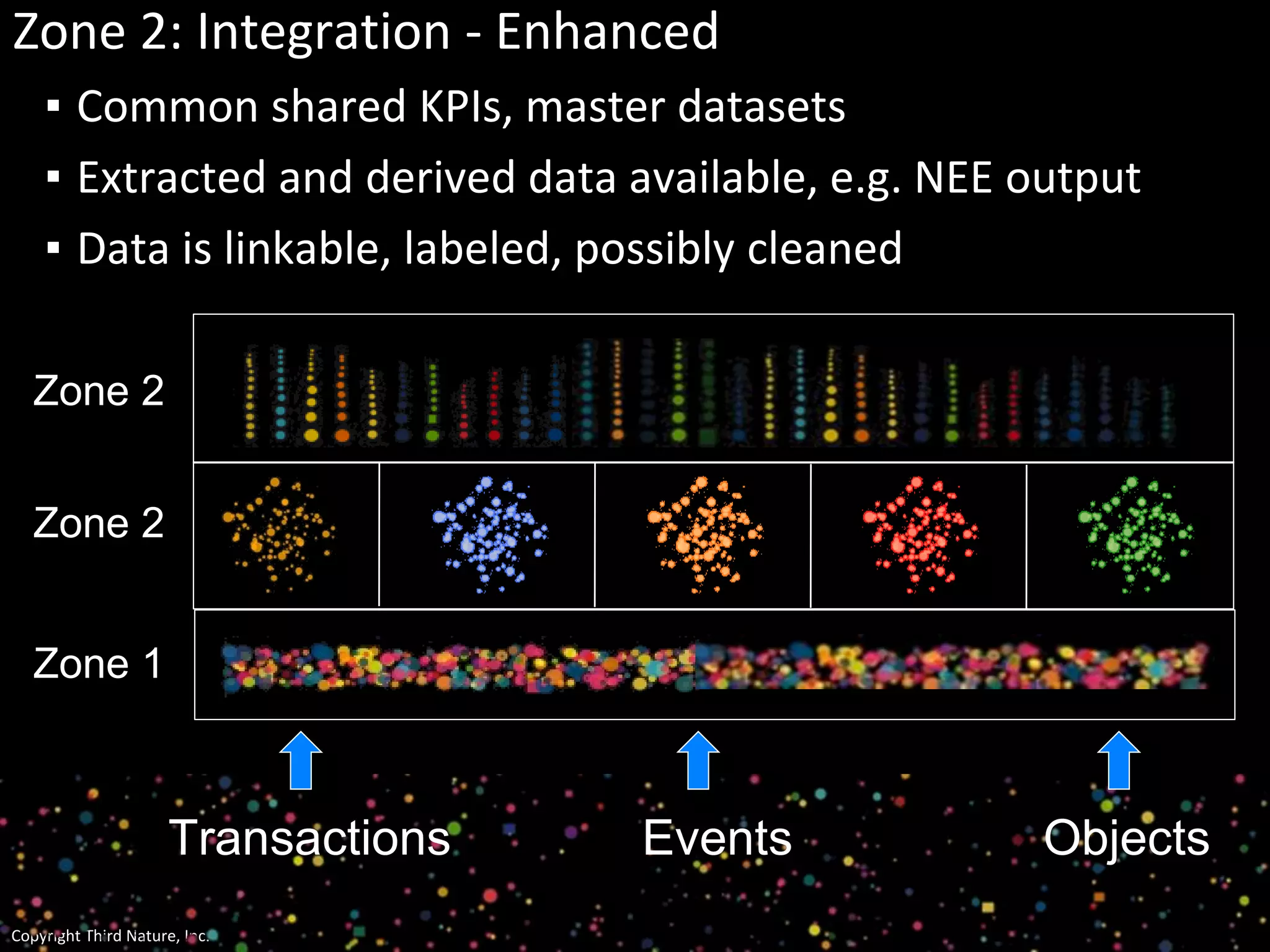

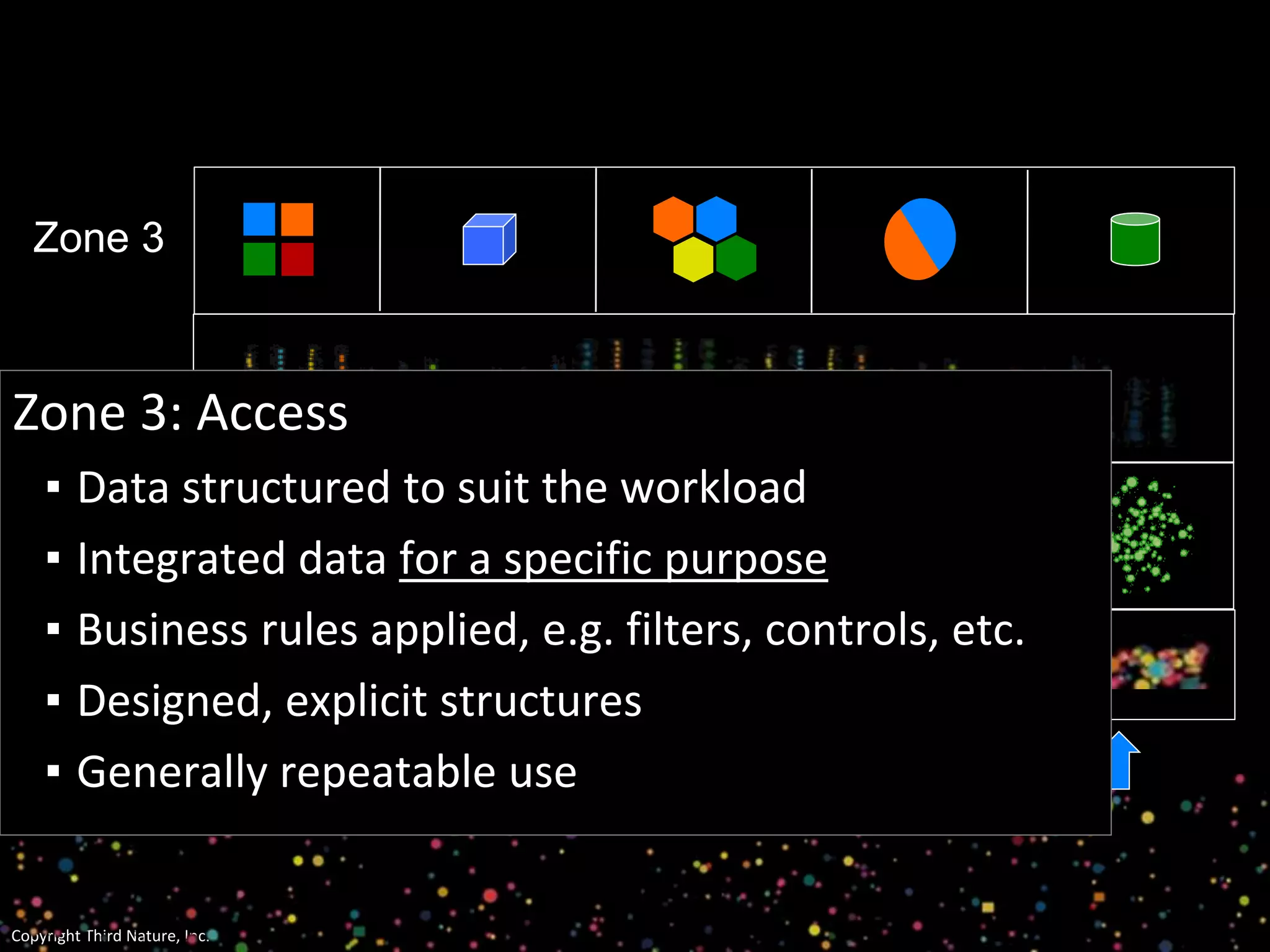

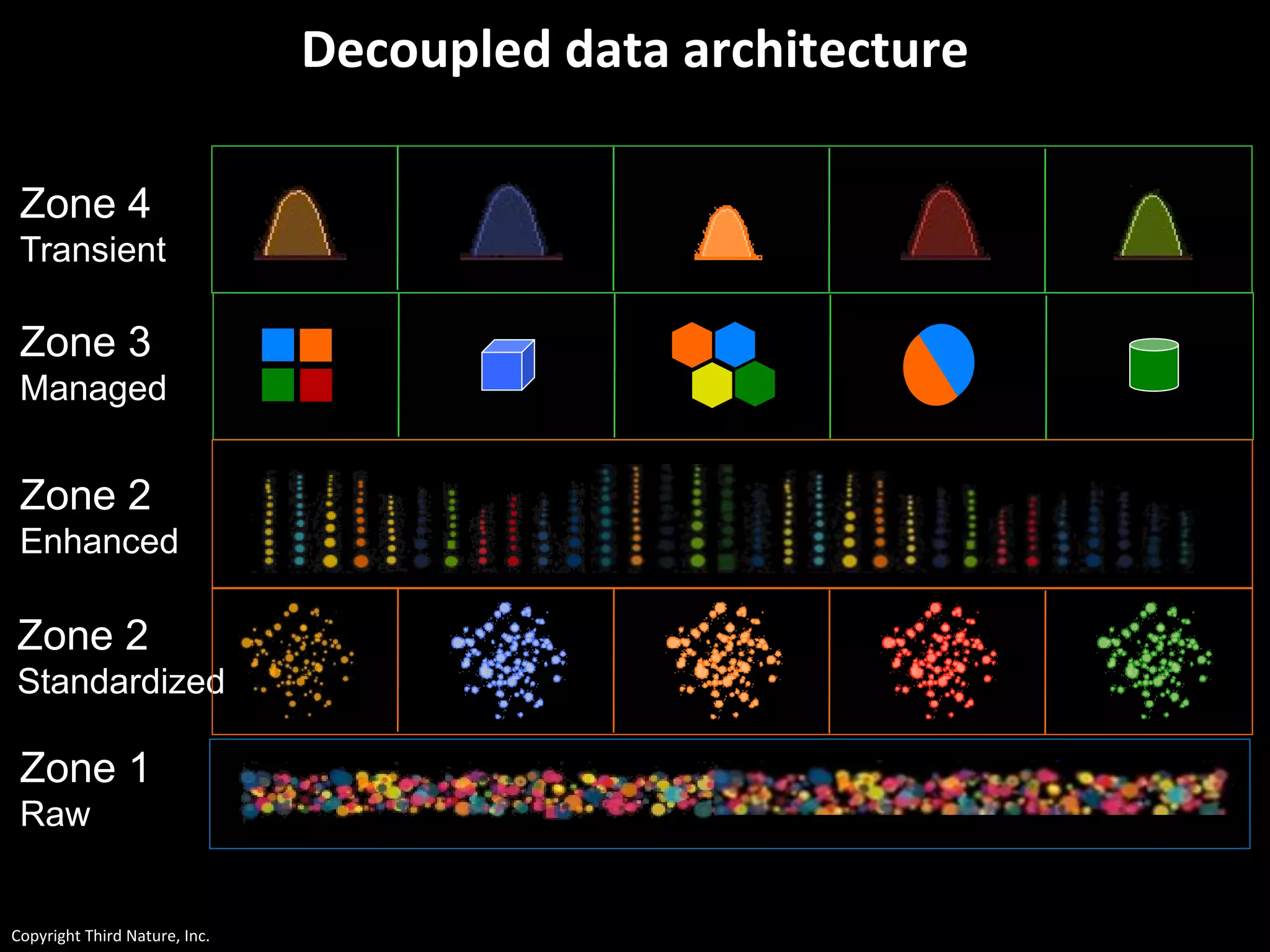

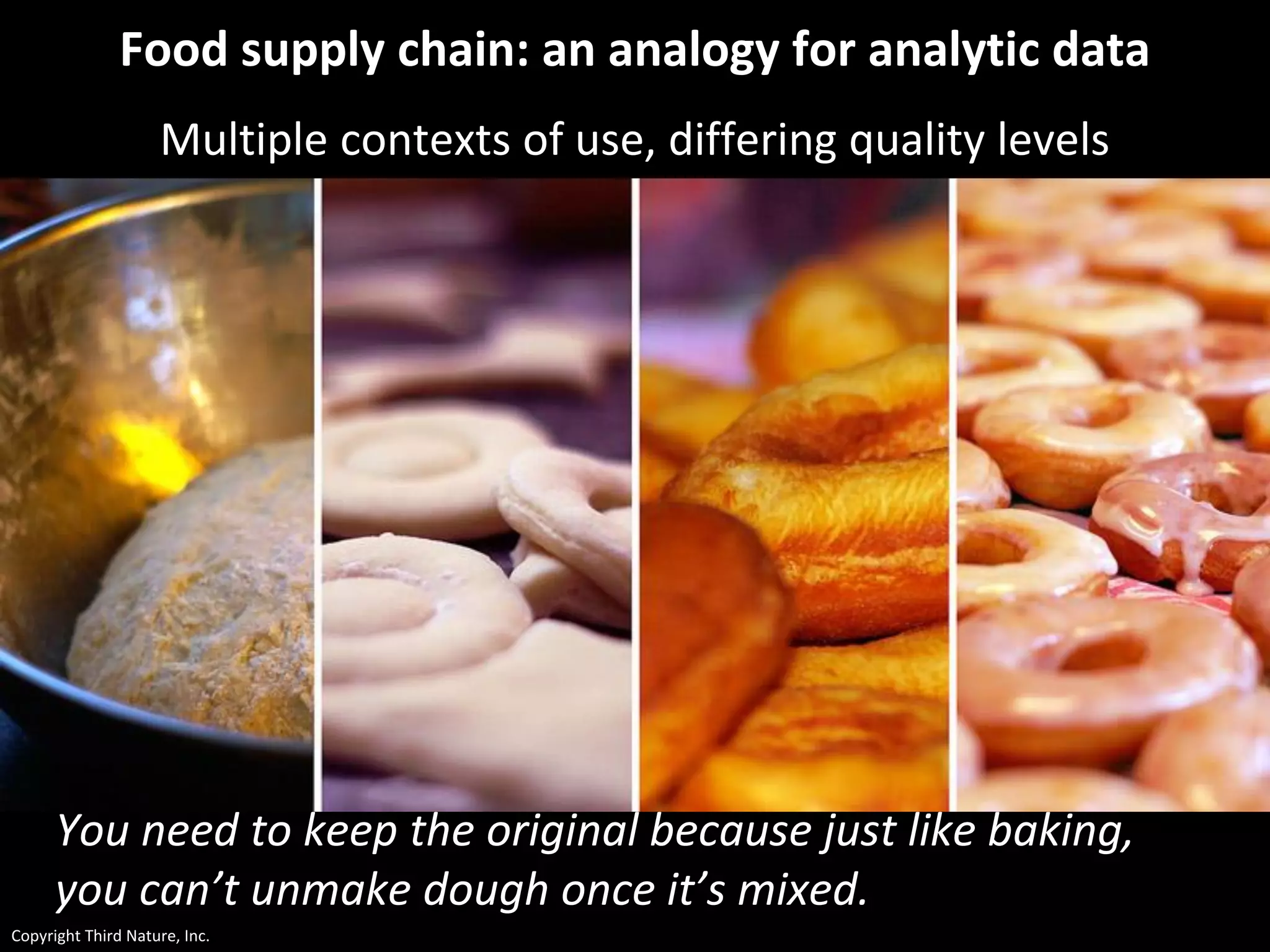

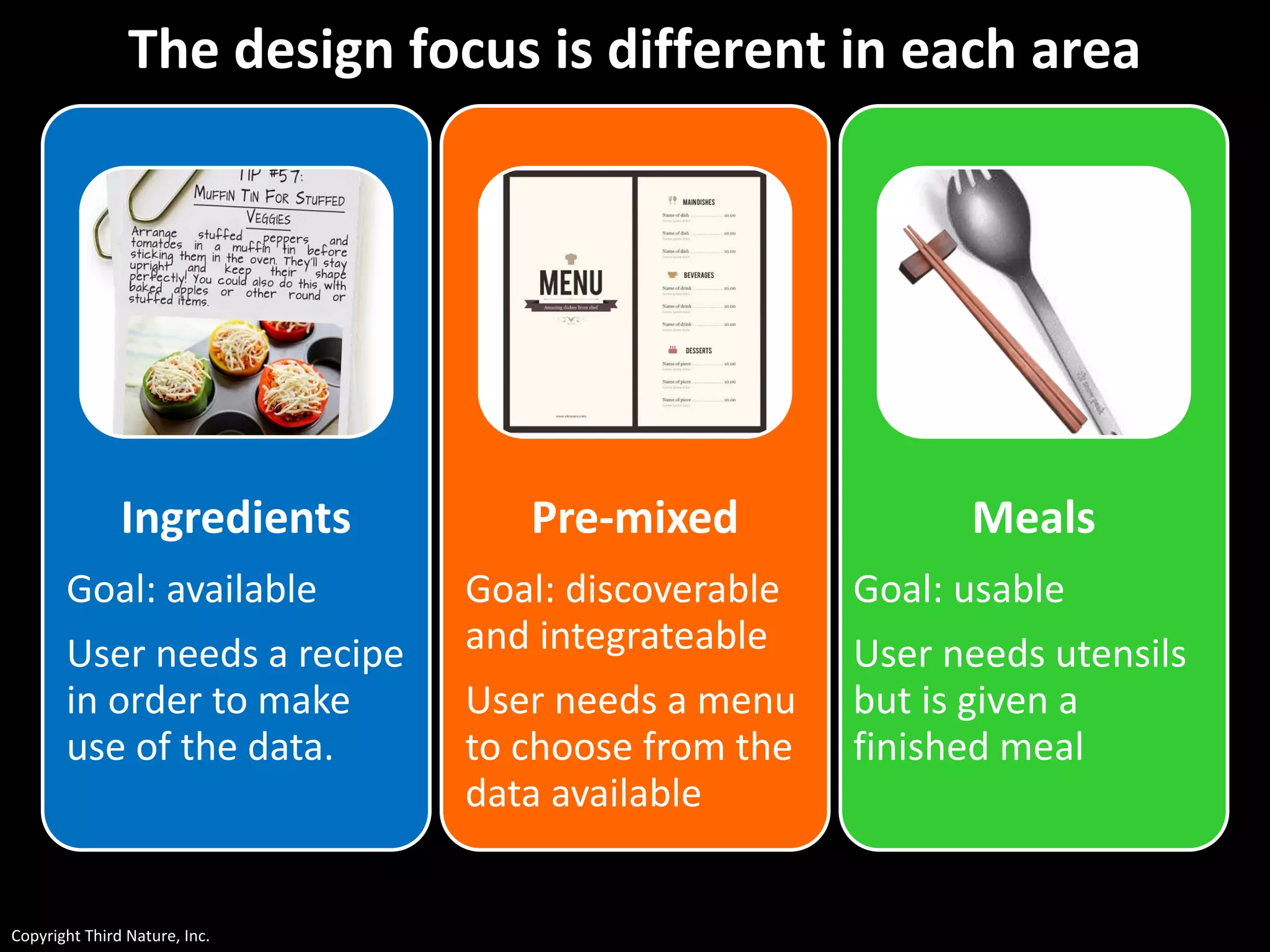

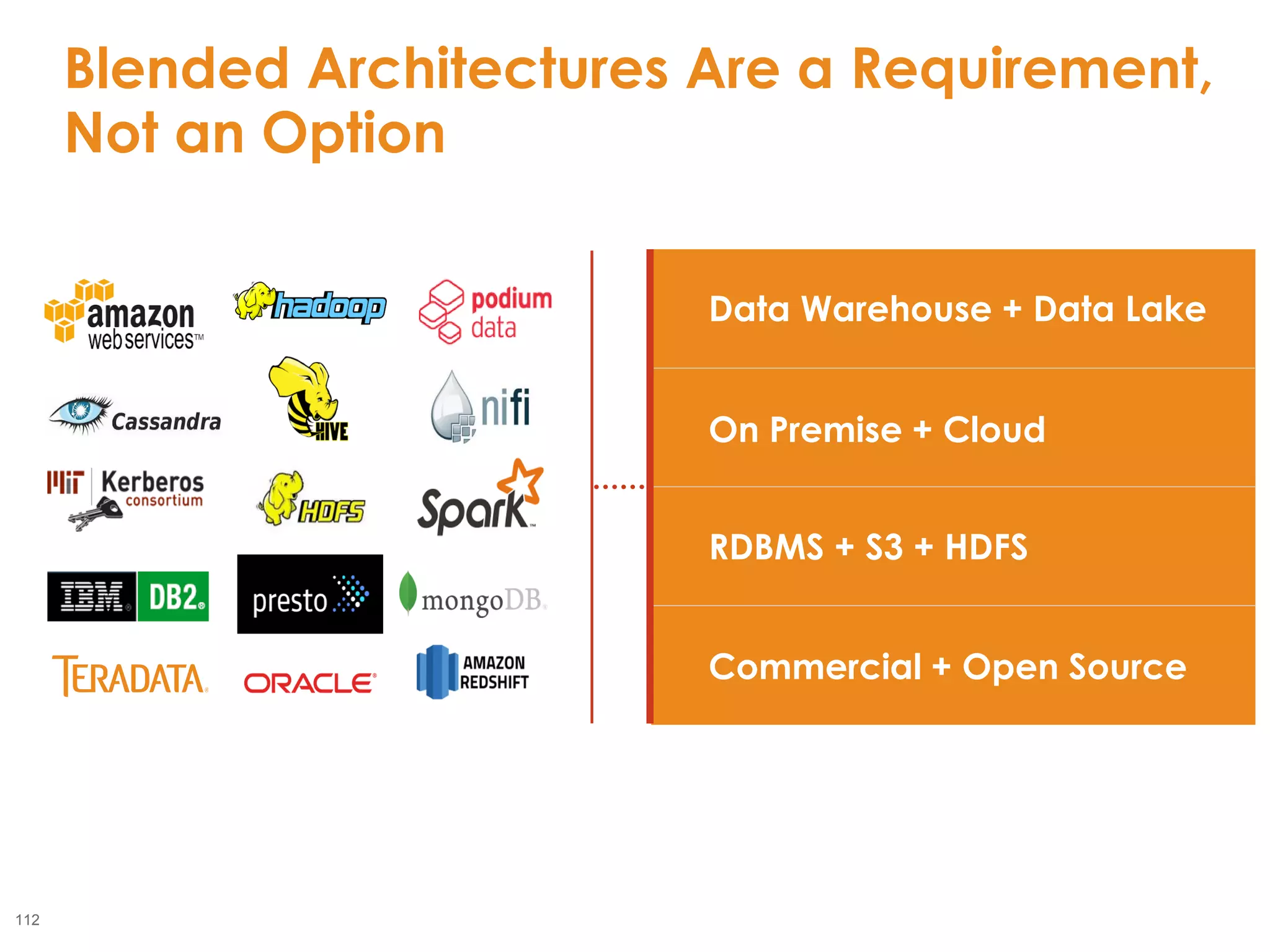

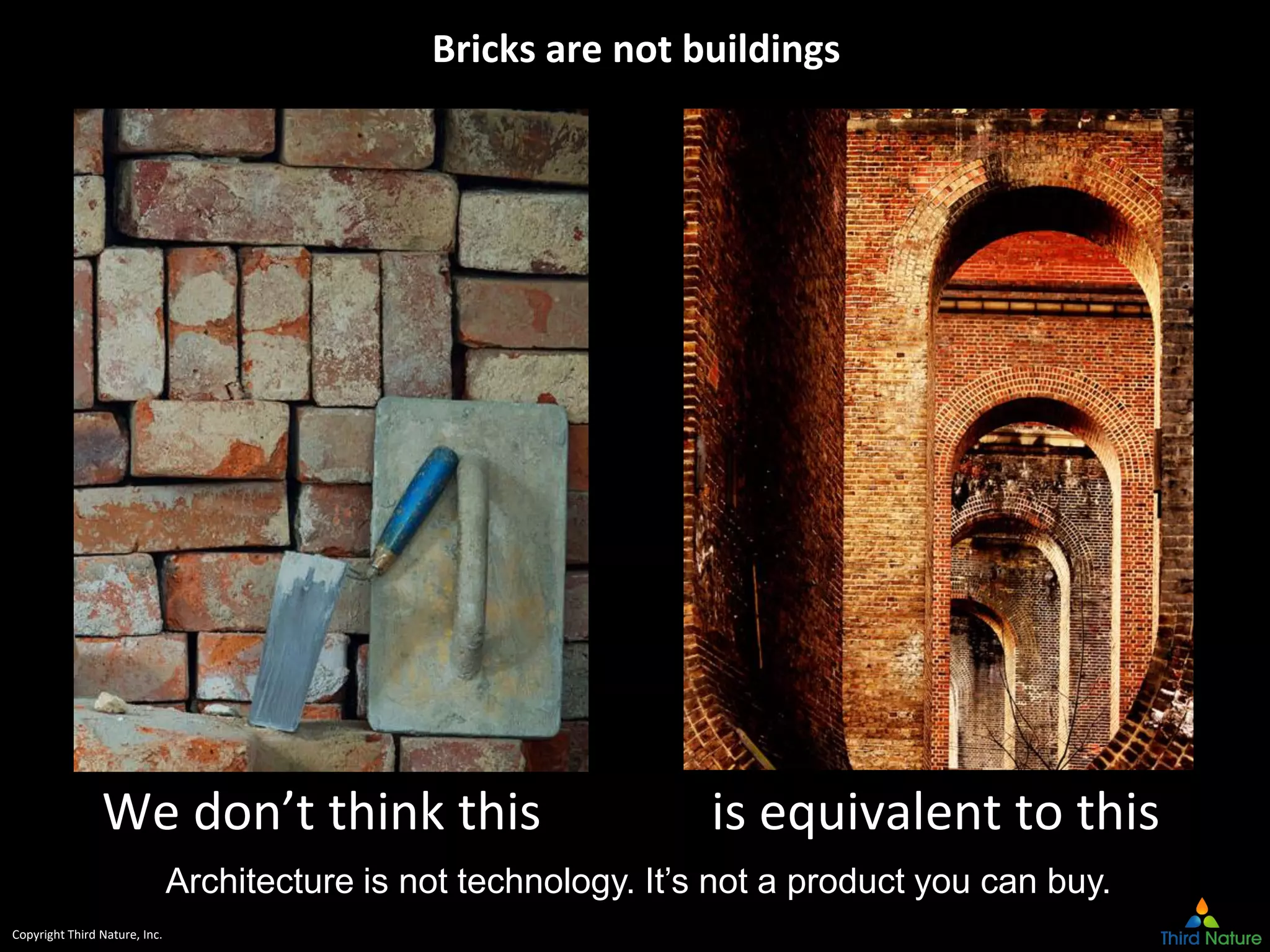

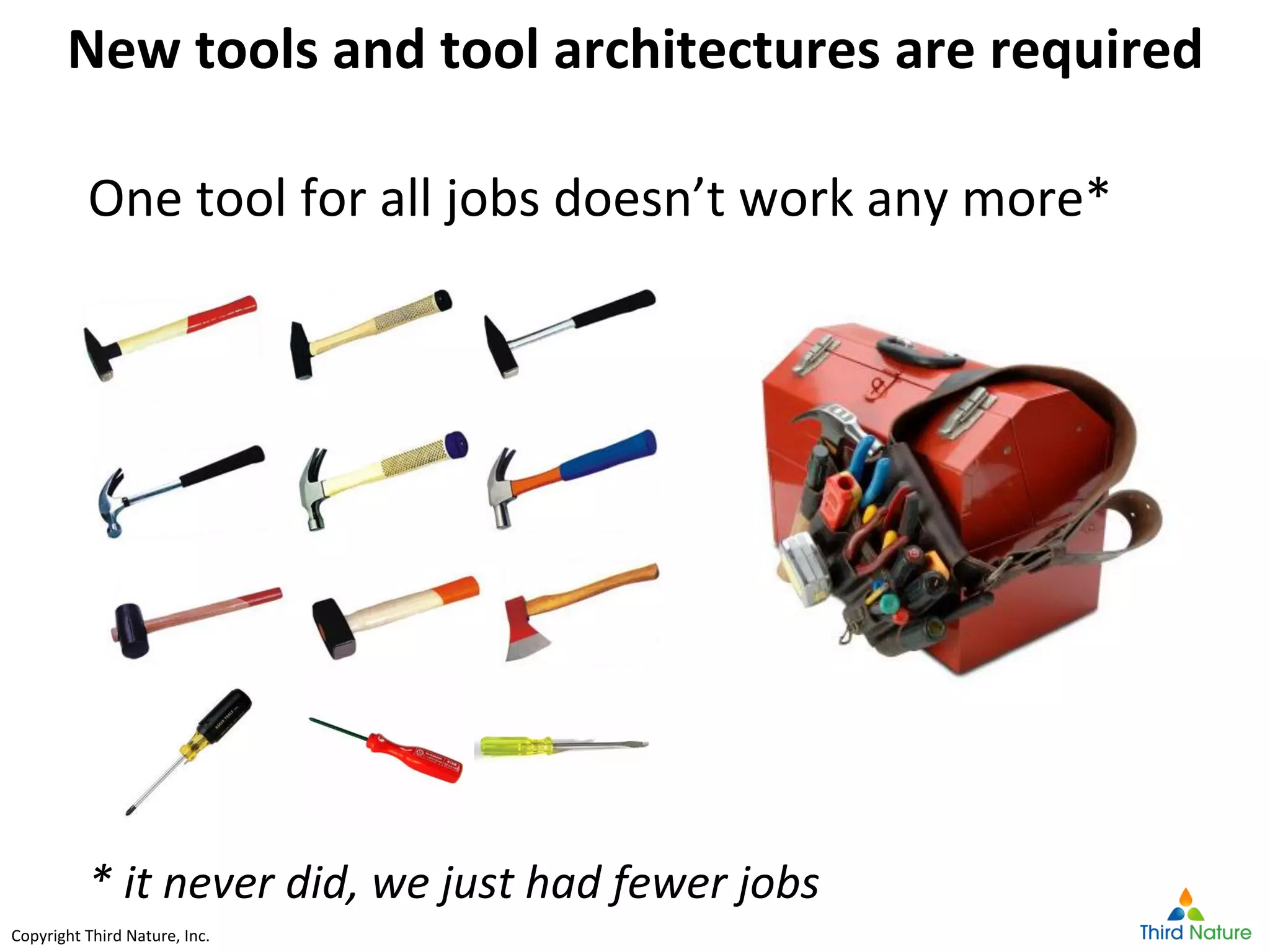

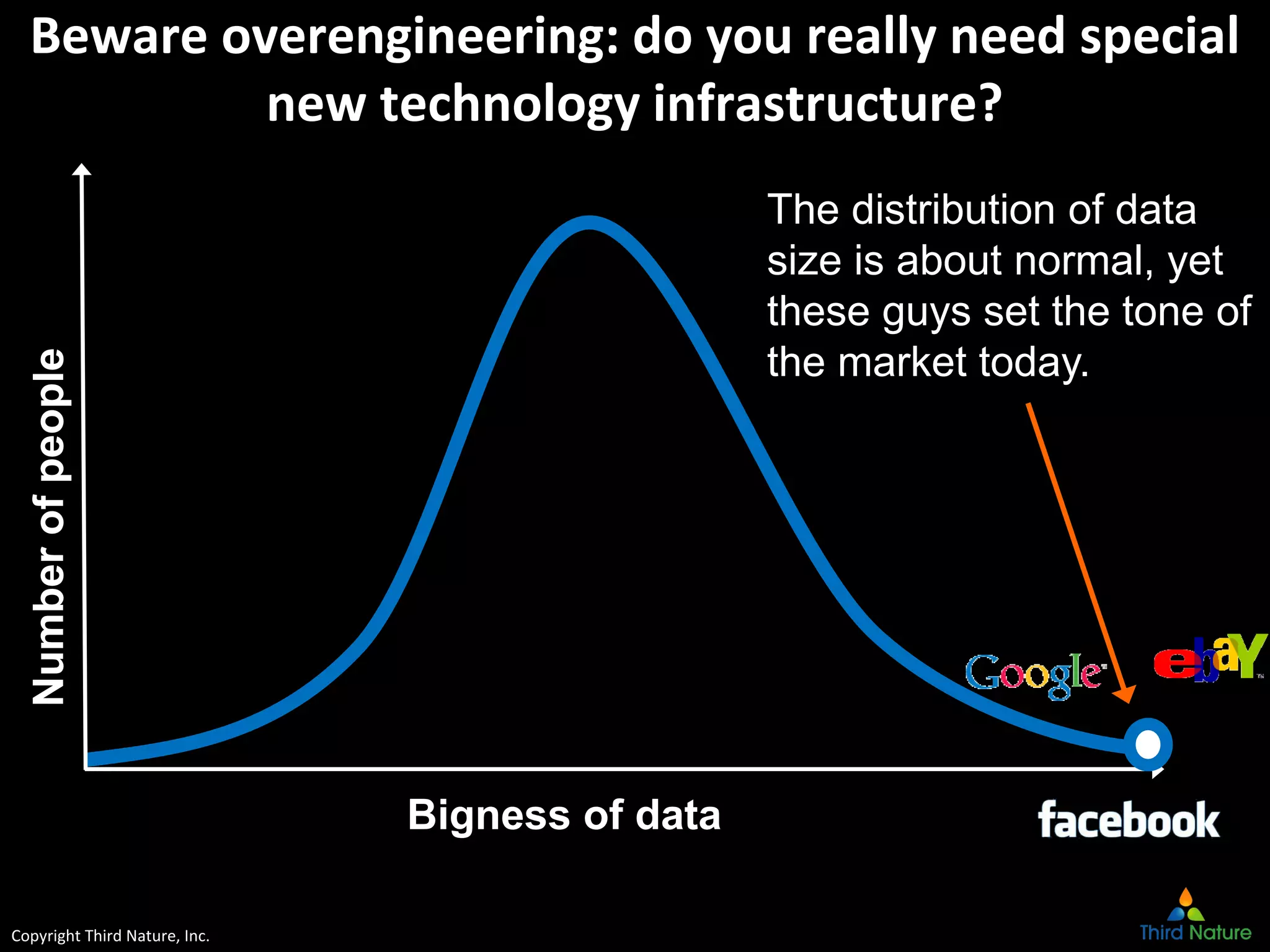

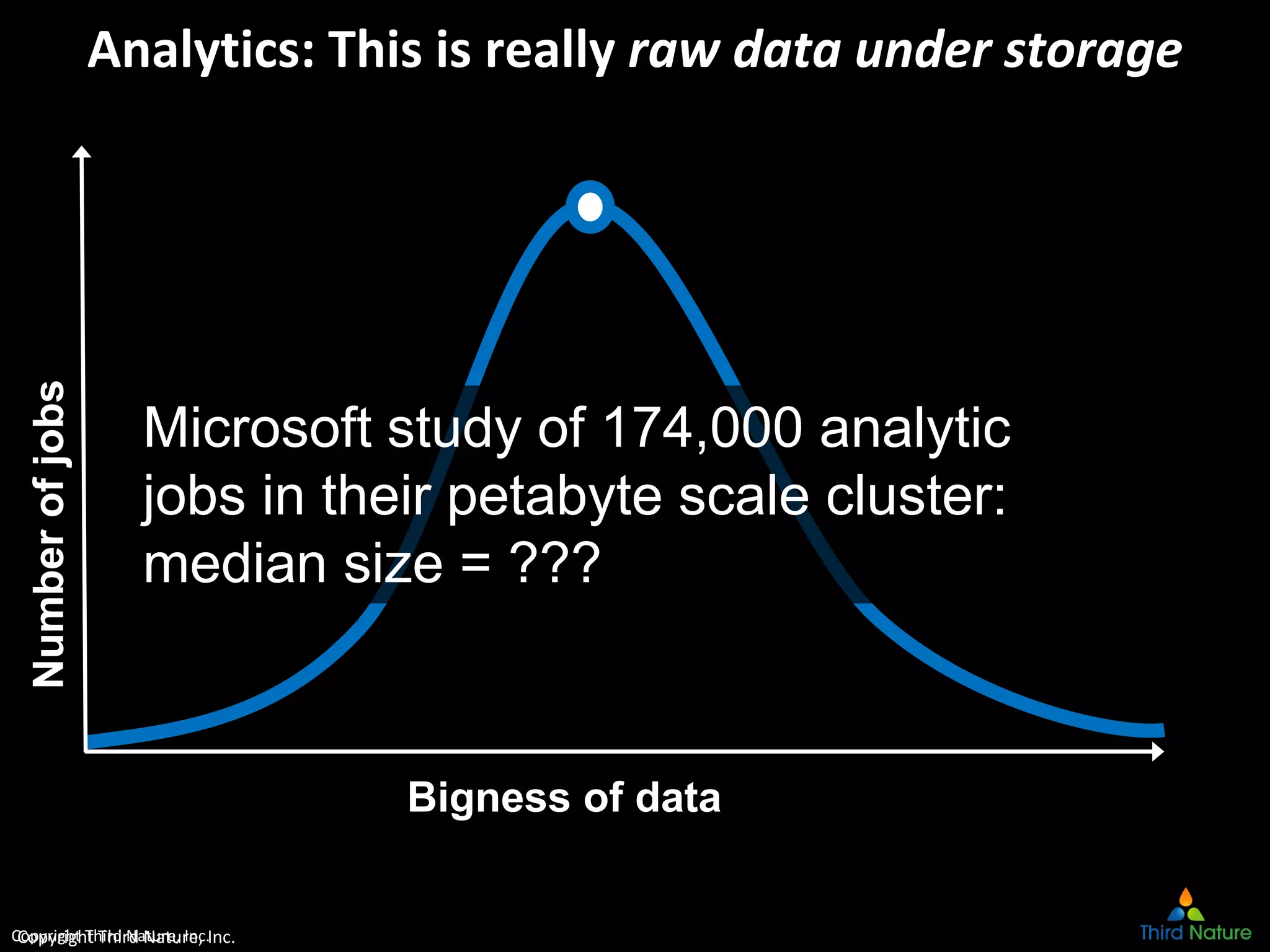

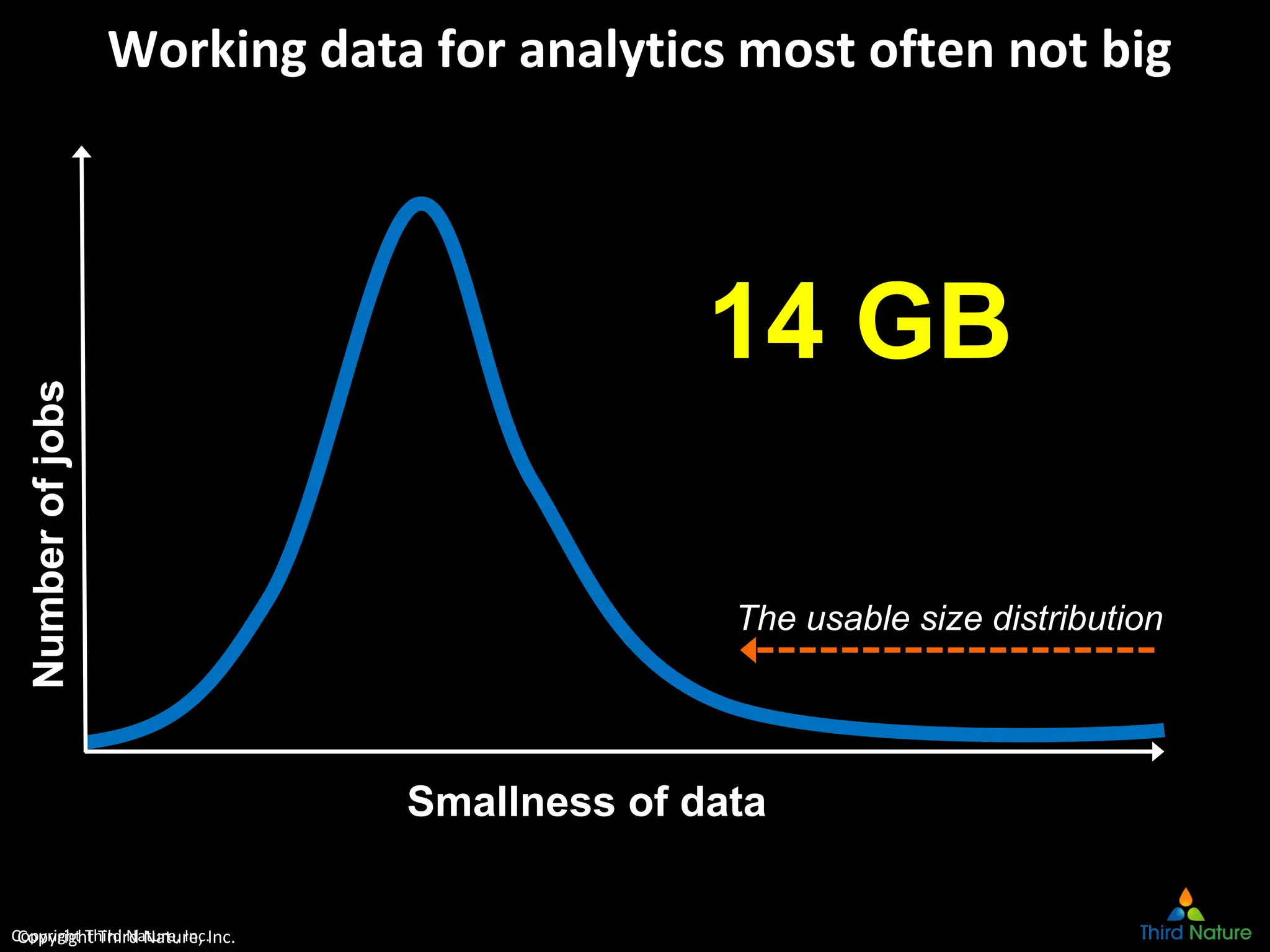

The document outlines the challenges enterprises face in data and analytics and identifies root causes such as outdated infrastructure, lack of agility, and inefficient processes. It emphasizes the need for a robust data architecture rather than just technological solutions, and stresses that an analytics strategy should begin with stakeholder needs. It also discusses the perspectives of the various constituencies involved in analytics, the complexities of managing data, and the necessity of a system of record for operational analytics.