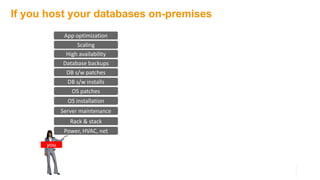

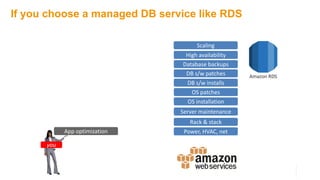

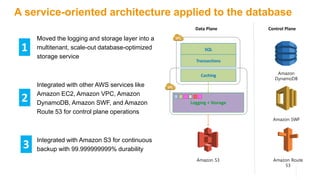

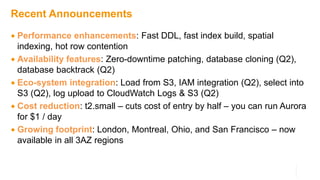

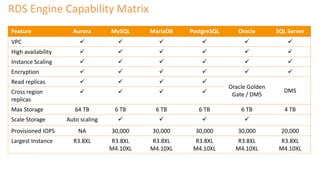

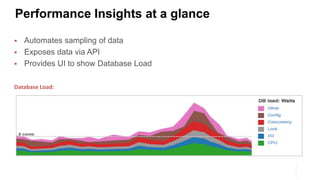

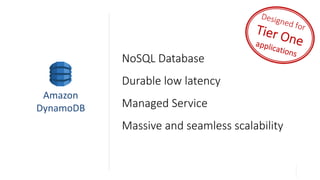

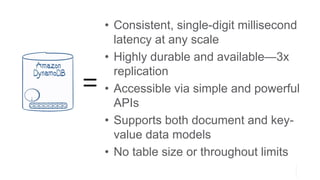

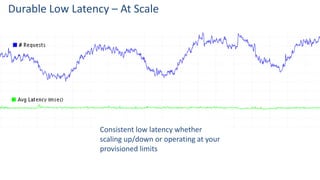

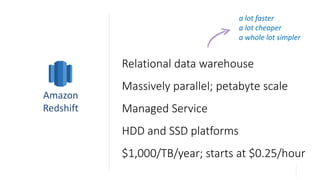

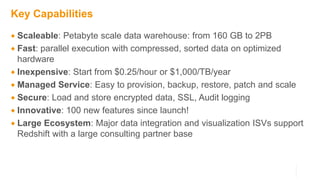

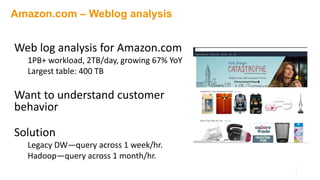

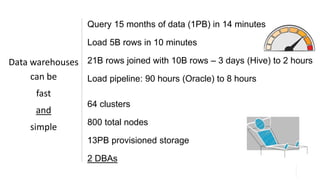

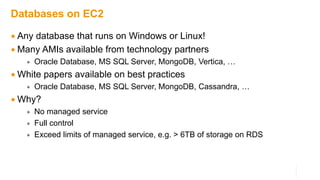

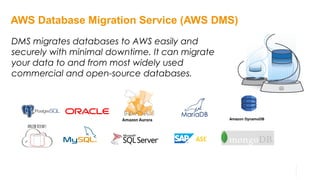

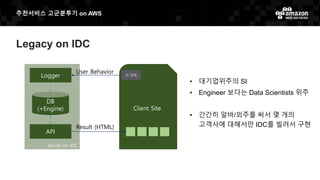

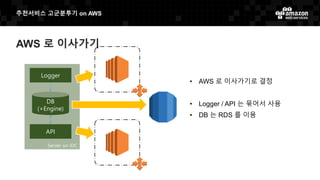

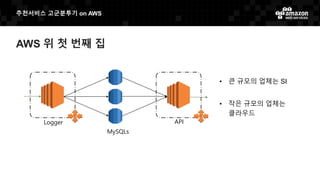

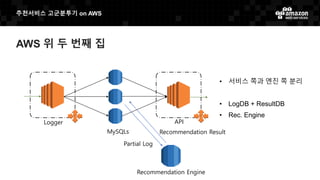

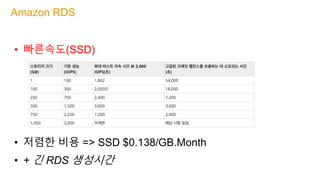

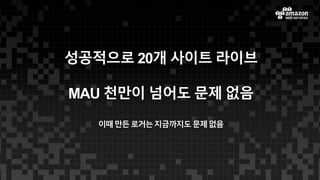

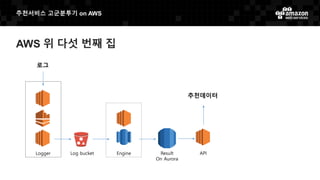

The document discusses Amazon Web Services' database solutions, highlighting services such as Amazon RDS, Amazon DynamoDB, Amazon Aurora, and Amazon Redshift. It emphasizes the advantages of managed database services, including lower operational overhead, scalability, and high availability, along with features such as rapid provisioning and automated backups. It also includes customer use cases that demonstrate improved performance and cost-effectiveness across various applications.