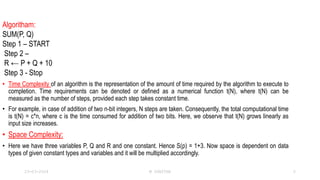

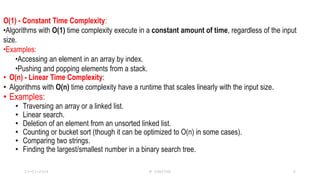

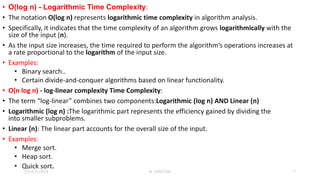

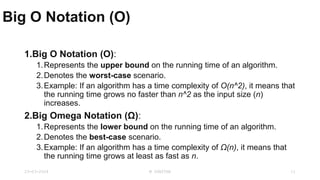

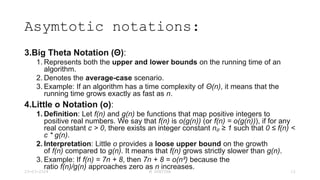

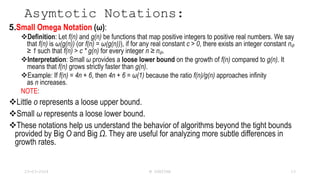

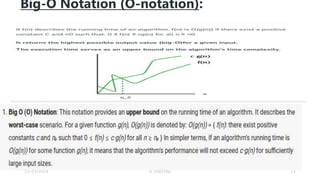

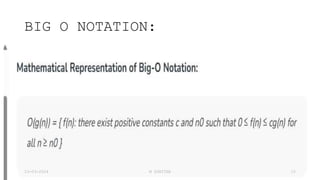

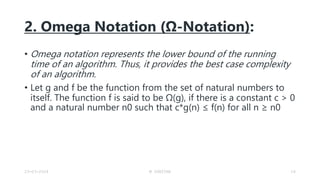

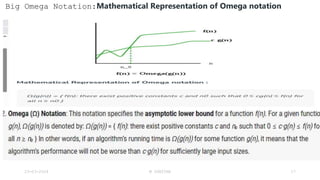

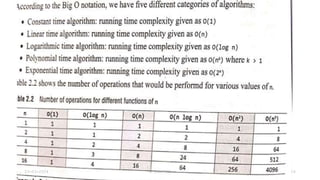

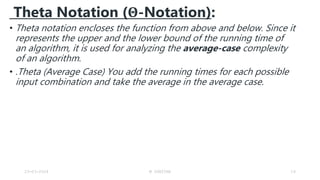

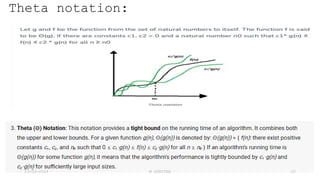

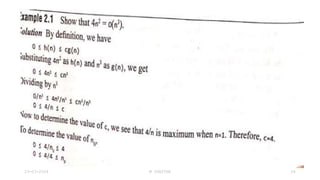

1. The document discusses the asymptotic notations used to analyze algorithm efficiency: Big O (an upper bound on growth), Omega (a lower bound), and Theta (a tight bound).

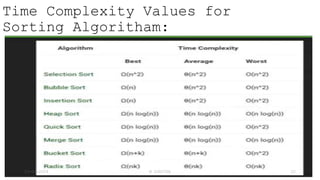

2. It provides examples of time complexity for common algorithms, such as searching and sorting.

3. Asymptotic notation describes how an algorithm's running time scales as the input size grows.
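
To make the scaling idea concrete, here is a minimal sketch that counts the steps taken by two searching algorithms as the input grows. It is an illustration and is not taken from the slides: the function names `linear_search_steps` and `binary_search_steps` are hypothetical, and the choice of searching for the last element of a sorted range is an assumption made so that the linear scan hits its worst case.

```python
def linear_search_steps(items, target):
    """Count the comparisons a left-to-right scan makes before it stops."""
    steps = 0
    for value in items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(items, target):
    """Count the probes binary search makes on a sorted, indexable sequence."""
    steps = 0
    lo, hi = 0, len(items) - 1
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1  # target can only be in the upper half
        else:
            hi = mid - 1  # target can only be in the lower half
    return steps


# Assumed setup: sorted input 0..n-1, searching for the last element.
for n in (1_000, 1_000_000):
    data = range(n)  # a range is sorted and supports indexing
    target = n - 1
    print(n, linear_search_steps(data, target), binary_search_steps(data, target))
```

In this setup the linear scan takes exactly n steps, which is O(n) growth, while binary search never needs more than floor(log2 n) + 1 probes, which is O(log n): at most 10 probes for n = 1,000 and at most 20 for n = 1,000,000.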