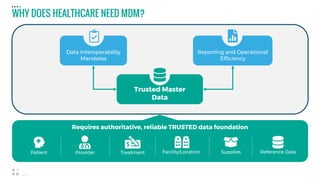

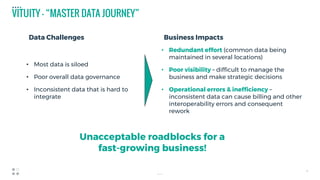

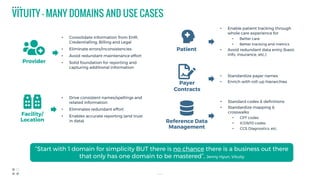

The document discusses healthcare's need for master data management (MDM) to create a single, trusted source of reference data across disparate systems. It notes that MDM hubs can standardize data under common governance rules, define common reference data, and avoid redundant data entry. The document also gives examples of healthcare domains that can benefit from MDM, such as providers, facilities, patients, and reference codes. Finally, it summarizes one healthcare organization's experience deploying MDM, starting with the provider and location domains, to consolidate inconsistent data across various systems and enable more accurate reporting.
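
To make the consolidation idea concrete, here is a minimal sketch of how provider records from several systems might be merged into one trusted record each. It is not taken from the document: the source system names, the `provider_id` key, the field names, and the name-formatting rule are all hypothetical, and a real MDM hub would use far richer matching and survivorship rules.

```python
from collections import defaultdict

# Hypothetical provider records as they might appear in three source systems.
records = [
    {"source": "billing", "provider_id": "P001", "name": "SMITH, JANE ", "specialty": "Cardiology"},
    {"source": "scheduling", "provider_id": "P001", "name": "Jane Smith", "specialty": ""},
    {"source": "credentialing", "provider_id": "P002", "name": "lee, sam", "specialty": "Oncology"},
]


def standardize_name(raw):
    """Assumed governance rule: store names as 'First Last' in title case."""
    raw = raw.strip()
    if "," in raw:
        # Convert 'LAST, FIRST' into 'FIRST LAST'.
        last, first = [part.strip() for part in raw.split(",", 1)]
        raw = f"{first} {last}"
    return " ".join(raw.split()).title()


def build_golden_records(source_records):
    """Group records by the shared key and keep the first non-empty value per field."""
    grouped = defaultdict(list)
    for rec in source_records:
        grouped[rec["provider_id"]].append(rec)

    golden = {}
    for provider_id, recs in grouped.items():
        merged = {
            "provider_id": provider_id,
            "sources": sorted(r["source"] for r in recs),
        }
        for field in ("name", "specialty"):
            values = [r[field] for r in recs if r[field].strip()]
            merged[field] = values[0] if values else None
        if merged["name"] is not None:
            merged["name"] = standardize_name(merged["name"])
        golden[provider_id] = merged
    return golden


golden = build_golden_records(records)
for record in golden.values():
    print(record)
```

In this sketch the two differently formatted entries for provider `P001` collapse into one record, so a report that counts providers would see two providers rather than three.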