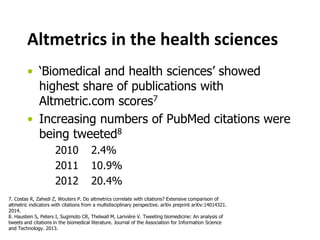

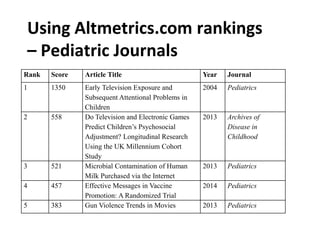

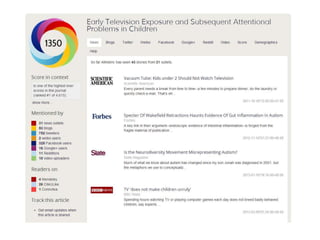

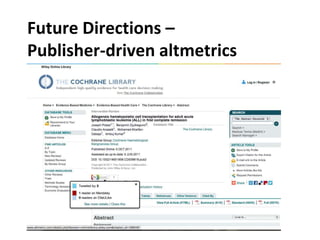

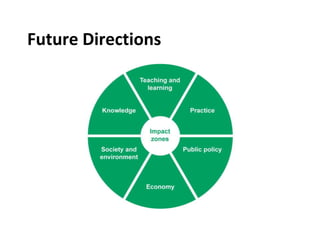

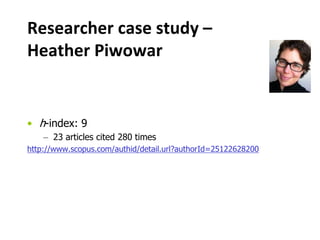

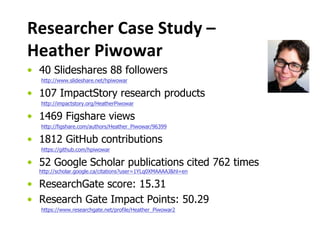

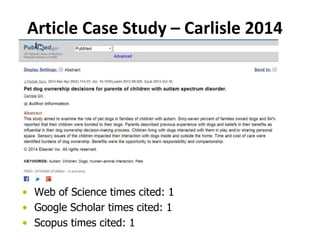

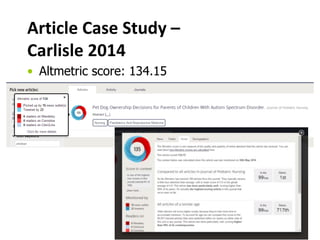

This document discusses altmetrics, which are alternative metrics for measuring research impact beyond citations. It provides examples of altmetric data sources like tweets, blogs, and news articles. The document also presents case studies of researchers and articles to demonstrate altmetric measurements. It discusses issues around gaming the system and outlines future directions for altmetrics, including increased transparency, standards development, and assessing correlations with other impact measures.

![Altmetrics…

[…] capture ways in which articles are disseminated throughout the expanding scholarly ecosystem, and reach beyond the scope of traditional trackers and filters.

[…] measure research impact by including references outside of traditional scholarly publishing.

1. Public Library of Science (PLOS). Altmetrics. [29 April 2014]. Available from http://article-level-metrics.plos.org/alt-metrics/

2. Baynes G. Scientometrics, bibliometrics, altmetrics: Some introductory advice for the lost and bemused. Insights. 2012;25(3):311-5. doi: 10.1629/2048-7754.25.3.311.](https://image.slidesharecdn.com/archealtmetricsjuly2014-140721142504-phpapp01/85/Altmetrics-for-research-impact-measurement-hcsm-3-320.jpg)

![What’s your impact?³

3. Emerald Group Publishing. Impact of Research [11 April 2014]. Available from: http://www.emeraldgrouppublishing.com/authors/impact/index.htm

http://blogs.lse.ac.uk/impactofsocialsciences/2011/07/14/publishers-measuring-impact/](https://image.slidesharecdn.com/archealtmetricsjuly2014-140721142504-phpapp01/85/Altmetrics-for-research-impact-measurement-hcsm-6-320.jpg)

![What is gaming?

• Alice has a new paper out. She asks those grad students of hers who blog to write about it.

• Alice has a new paper out. She believes that it contains important information for diabetes patients and so signs up to a ‘100 retweets for $$$’ service.

5. Case examples from: Adie, E. Gaming Altmetrics. [29 April 2014]. Available from http://www.altmetric.com/blog/gaming-altmetrics/](https://image.slidesharecdn.com/archealtmetricsjuly2014-140721142504-phpapp01/85/Altmetrics-for-research-impact-measurement-hcsm-19-320.jpg)

![Spectrum of social media self-promotion

6. Adie, E. Gaming Altmetrics. [29 April 2014]. Available from http://www.altmetric.com/blog/gaming-altmetrics/](https://image.slidesharecdn.com/archealtmetricsjuly2014-140721142504-phpapp01/85/Altmetrics-for-research-impact-measurement-hcsm-21-320.jpg)