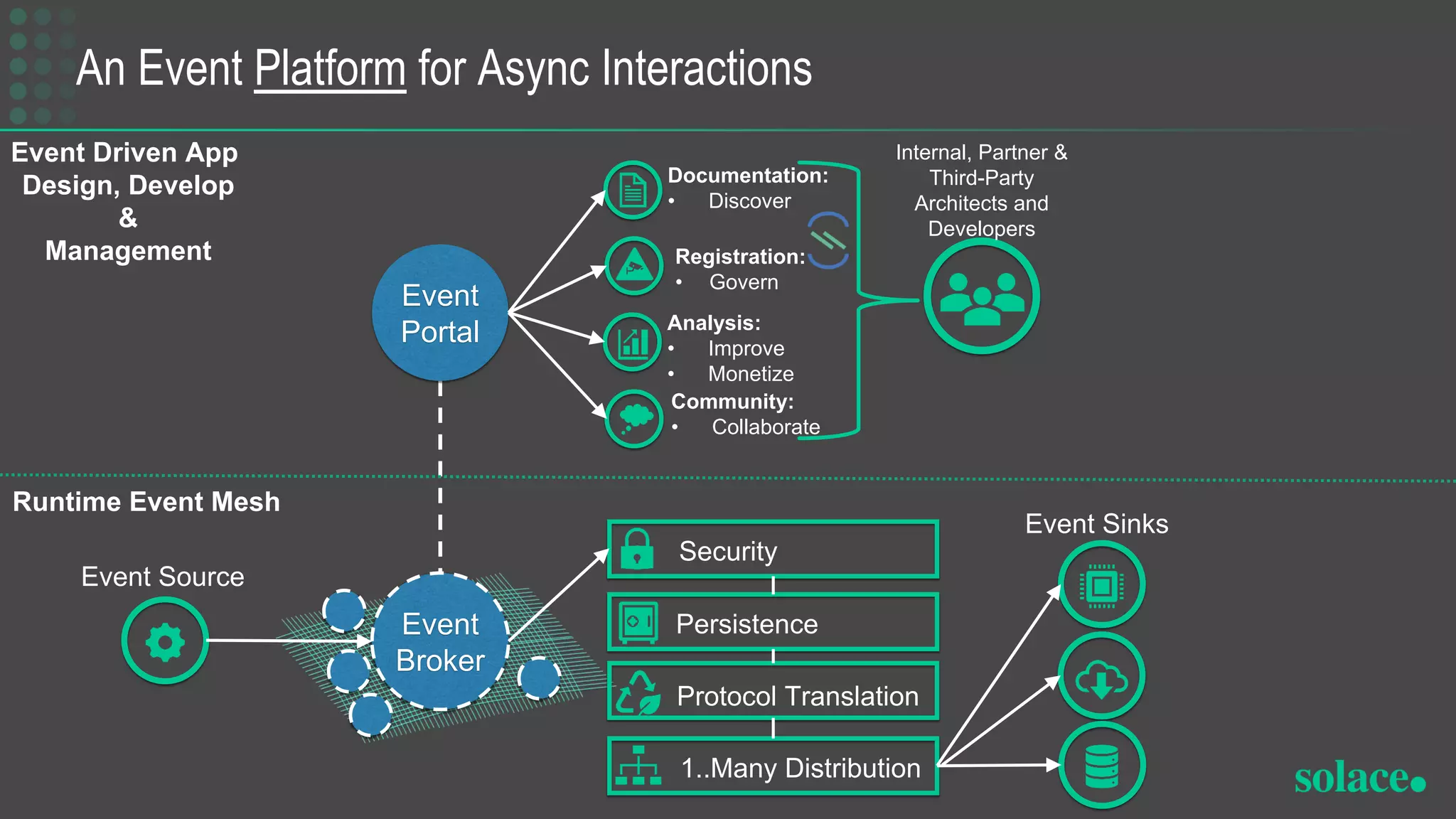

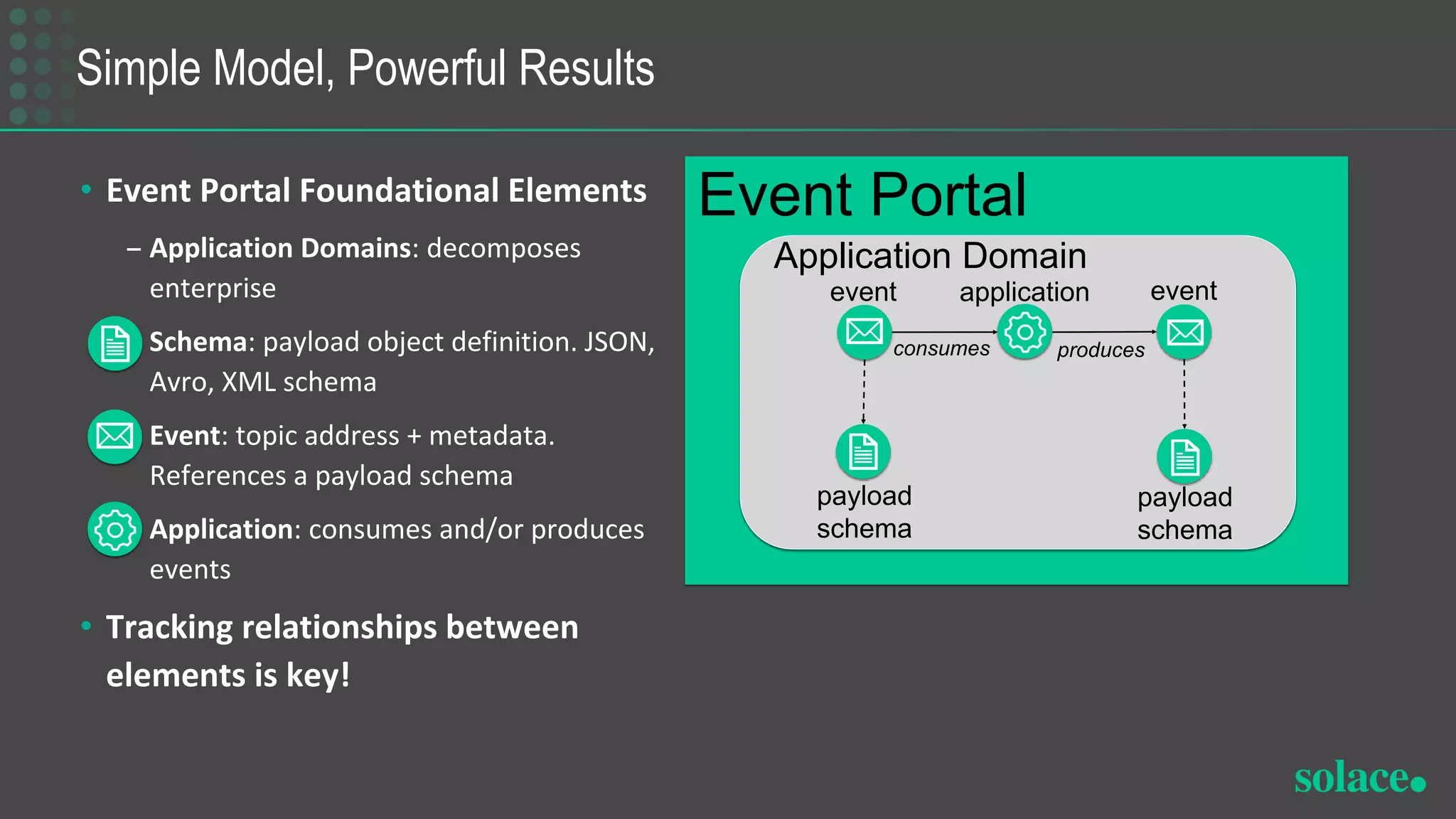

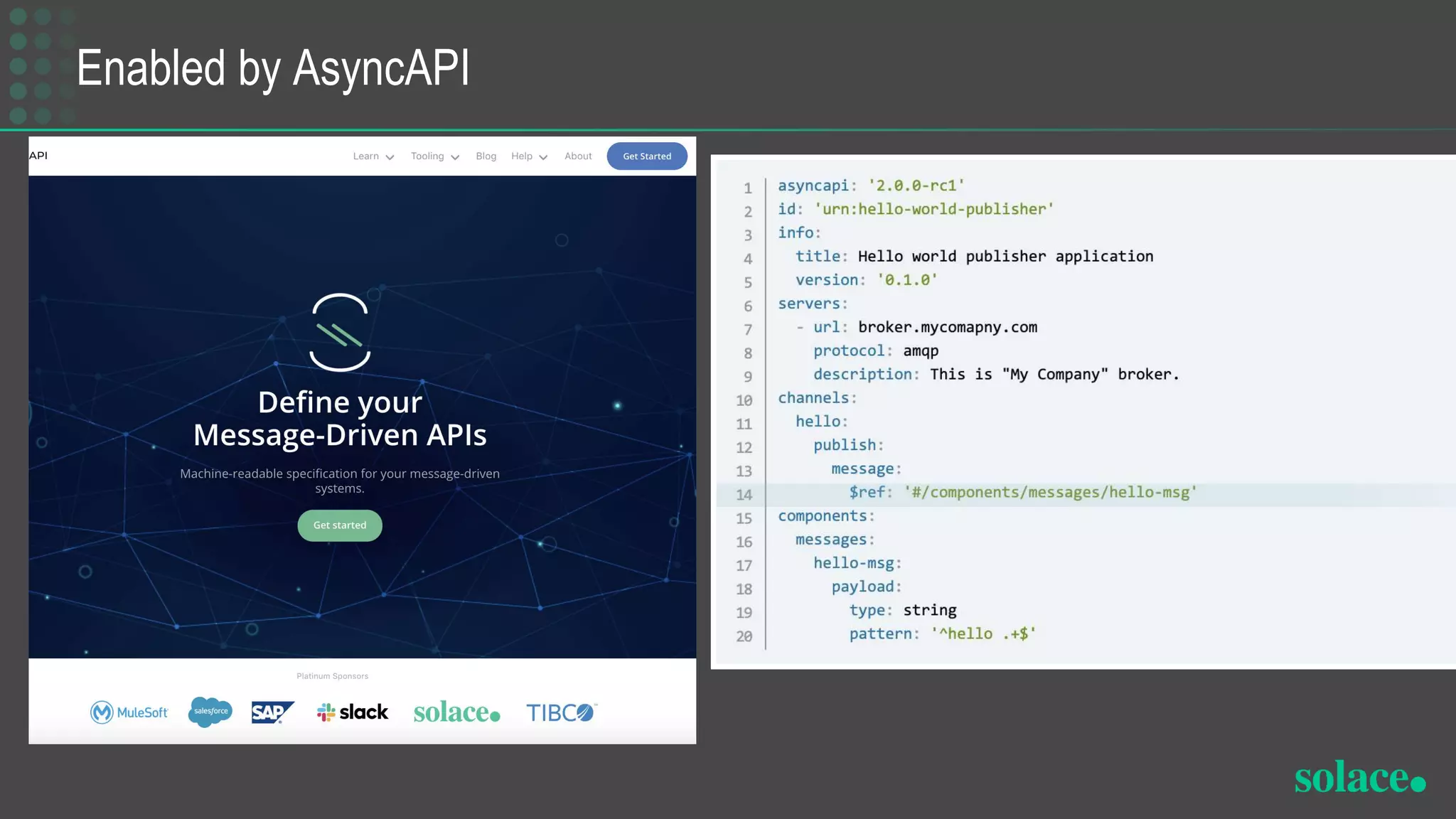

The document discusses why applications should adopt event-driven architecture to improve customer engagement and real-time responsiveness. It outlines the obstacles that previously held adoption back, such as complexity and cost, and introduces an event management platform that helps developers discover, govern, and collaborate on events. Key components include event schemas, topics, and a runtime event mesh that supports asynchronous interactions between applications.
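The summary names three components but not the platform's actual API. The sketch below is a hypothetical, single-process illustration of how they relate. A schema describes an event's required fields. A topic binds a name to one schema. The mesh validates each published payload against its topic's schema and delivers it asynchronously to every subscriber. `ToyEventMesh`, `EventSchema`, the topic name `orders/created`, and the field names are all invented for illustration. A real runtime event mesh routes events across distributed brokers rather than within one Python process.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable


@dataclass(frozen=True)
class EventSchema:
    """Hypothetical schema: a named, versioned set of required payload fields."""
    name: str
    version: int
    required_fields: frozenset[str]

    def validate(self, payload: dict) -> None:
        # Iterating a dict yields its keys, so this computes required - present.
        missing = self.required_fields.difference(payload)
        if missing:
            raise ValueError(
                f"{self.name} v{self.version}: missing fields {sorted(missing)}"
            )


# A subscriber is an async callable that receives one event payload.
Handler = Callable[[dict], Awaitable[None]]


class ToyEventMesh:
    """Hypothetical in-process stand-in for a runtime event mesh."""

    def __init__(self) -> None:
        self._schemas: dict[str, EventSchema] = {}
        self._subscribers: dict[str, list[Handler]] = {}

    def register_topic(self, topic: str, schema: EventSchema) -> None:
        # Governance step: every topic must declare the schema it carries.
        self._schemas[topic] = schema
        self._subscribers.setdefault(topic, [])

    def subscribe(self, topic: str, handler: Handler) -> None:
        if topic not in self._schemas:
            raise KeyError(f"unknown topic {topic!r}")
        self._subscribers[topic].append(handler)

    async def publish(self, topic: str, payload: dict) -> None:
        # Reject malformed events before they reach any consumer.
        self._schemas[topic].validate(payload)
        # Deliver to all subscribers concurrently; the publisher is not
        # coupled to who the consumers are.
        await asyncio.gather(*(handler(payload) for handler in self._subscribers[topic]))


async def main() -> list[dict]:
    mesh = ToyEventMesh()
    order_created = EventSchema("OrderCreated", 1, frozenset({"order_id", "customer_id"}))
    mesh.register_topic("orders/created", order_created)

    received: list[dict] = []

    async def notify_customer(event: dict) -> None:
        received.append(event)

    mesh.subscribe("orders/created", notify_customer)
    await mesh.publish("orders/created", {"order_id": "A-1", "customer_id": "C-9"})
    return received


if __name__ == "__main__":
    print(asyncio.run(main()))
```

In this sketch, validating against the schema at publish time keeps malformed events from reaching consumers. Subscribers know only the topic and its schema, never the publisher. That decoupling is the property the summary's discovery and governance features are meant to preserve as more teams share events.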