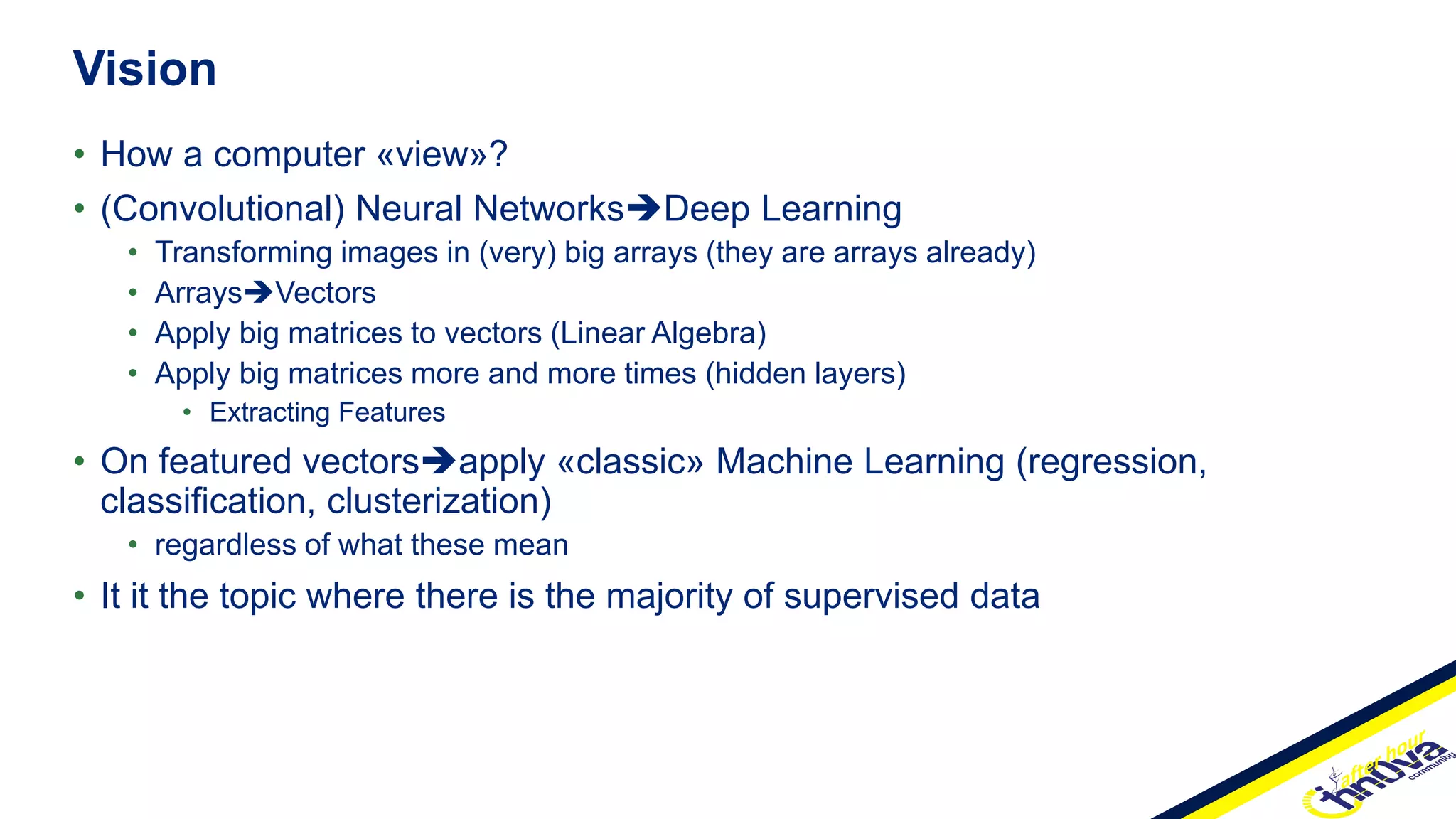

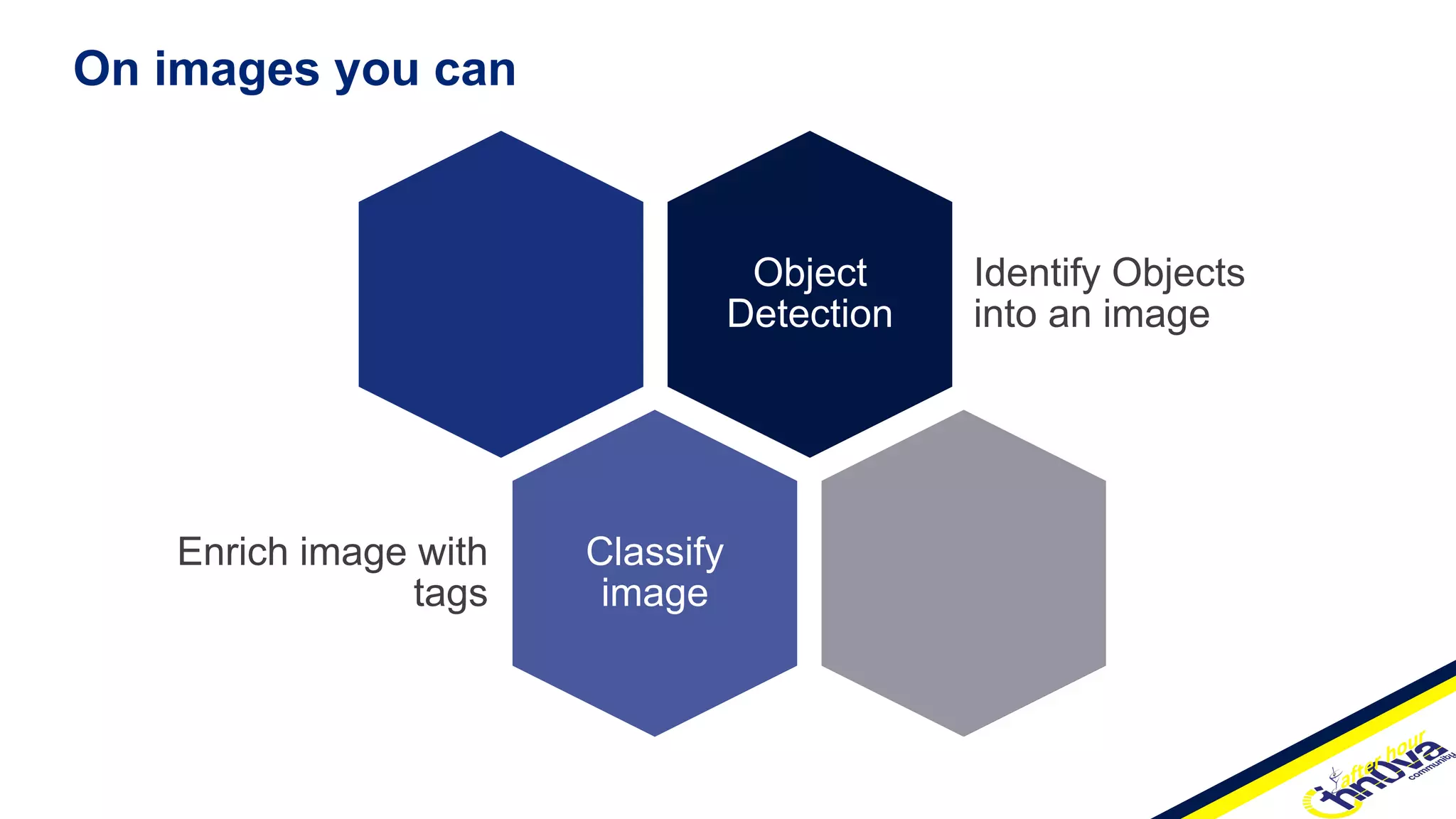

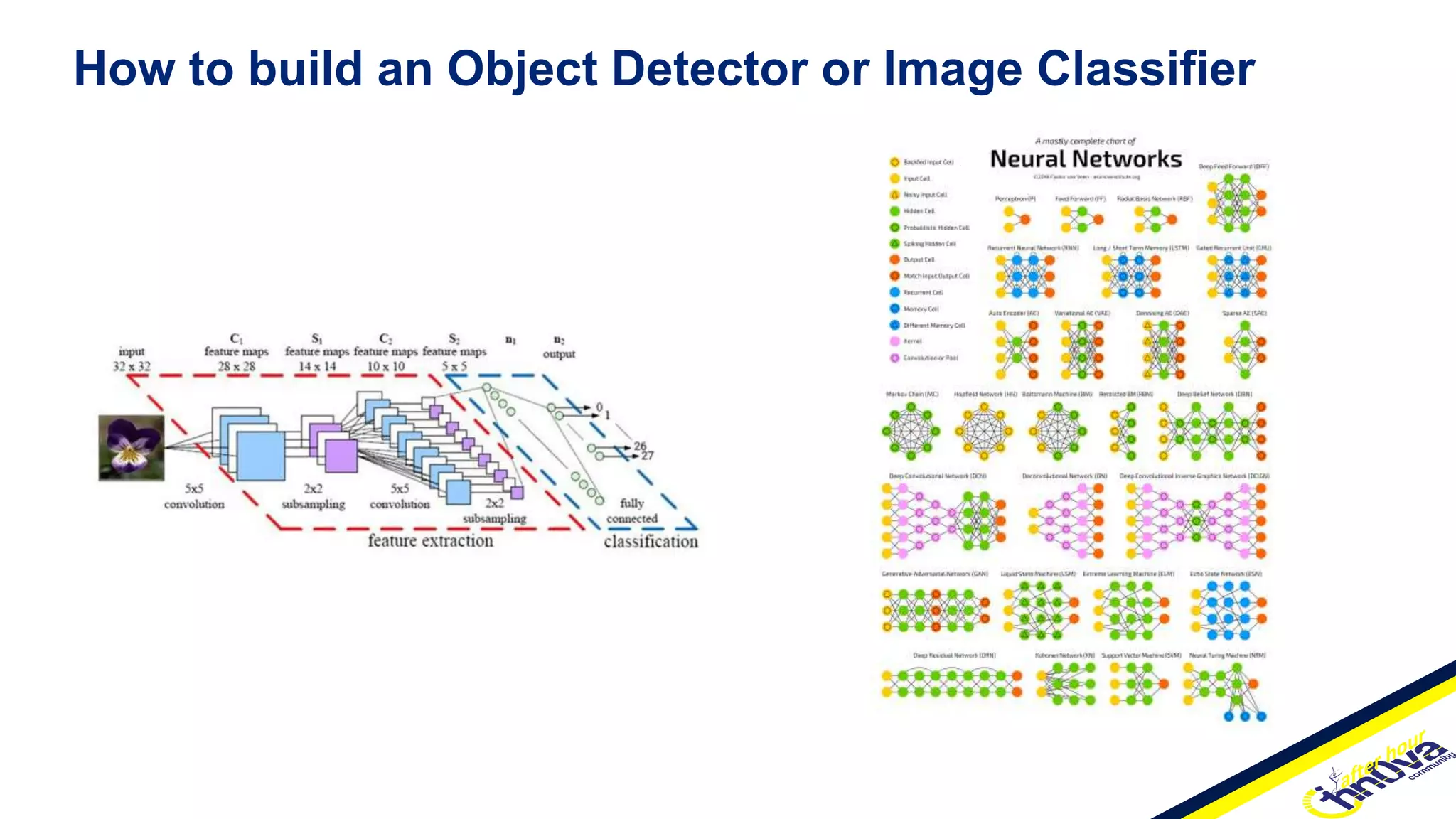

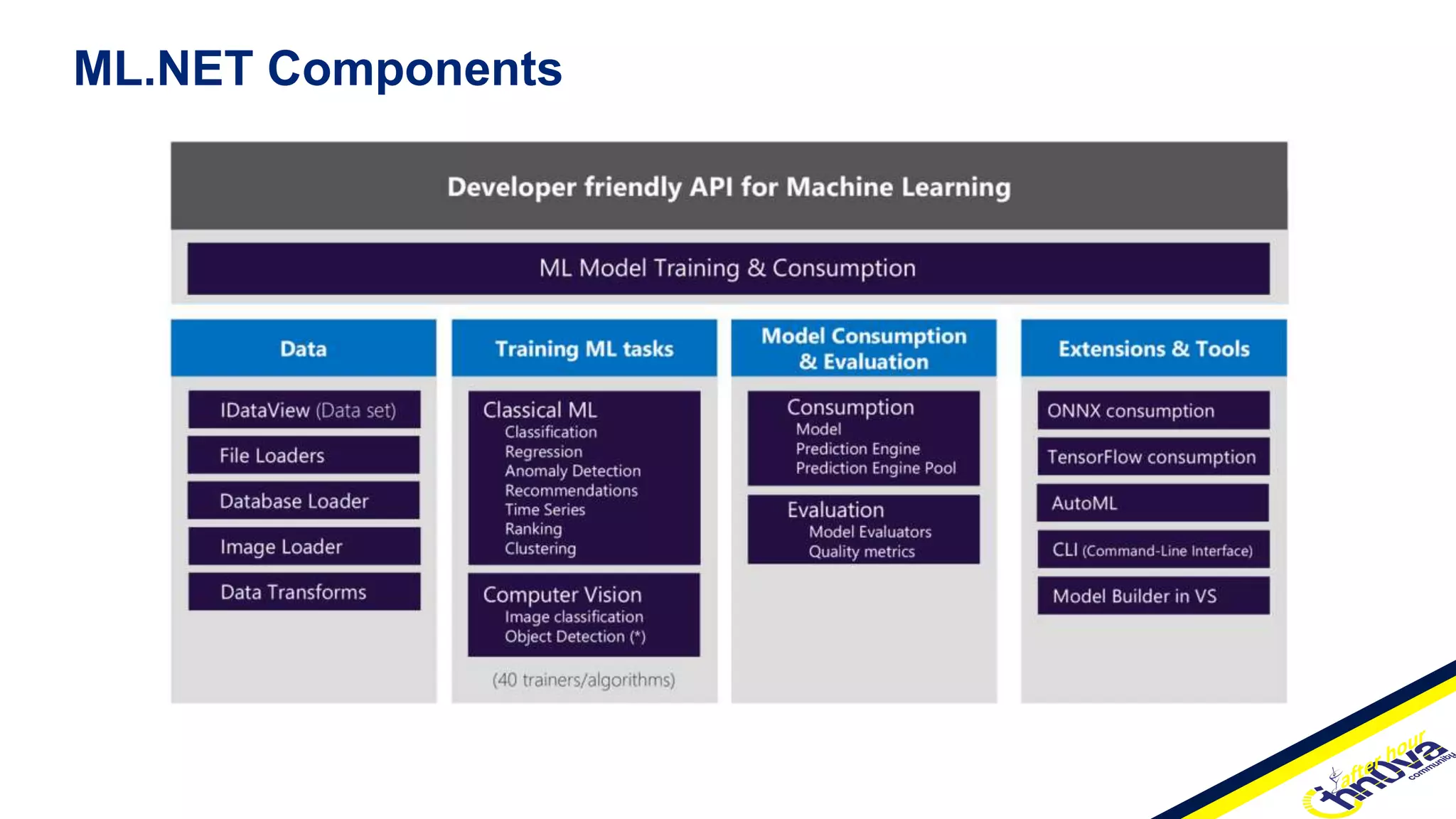

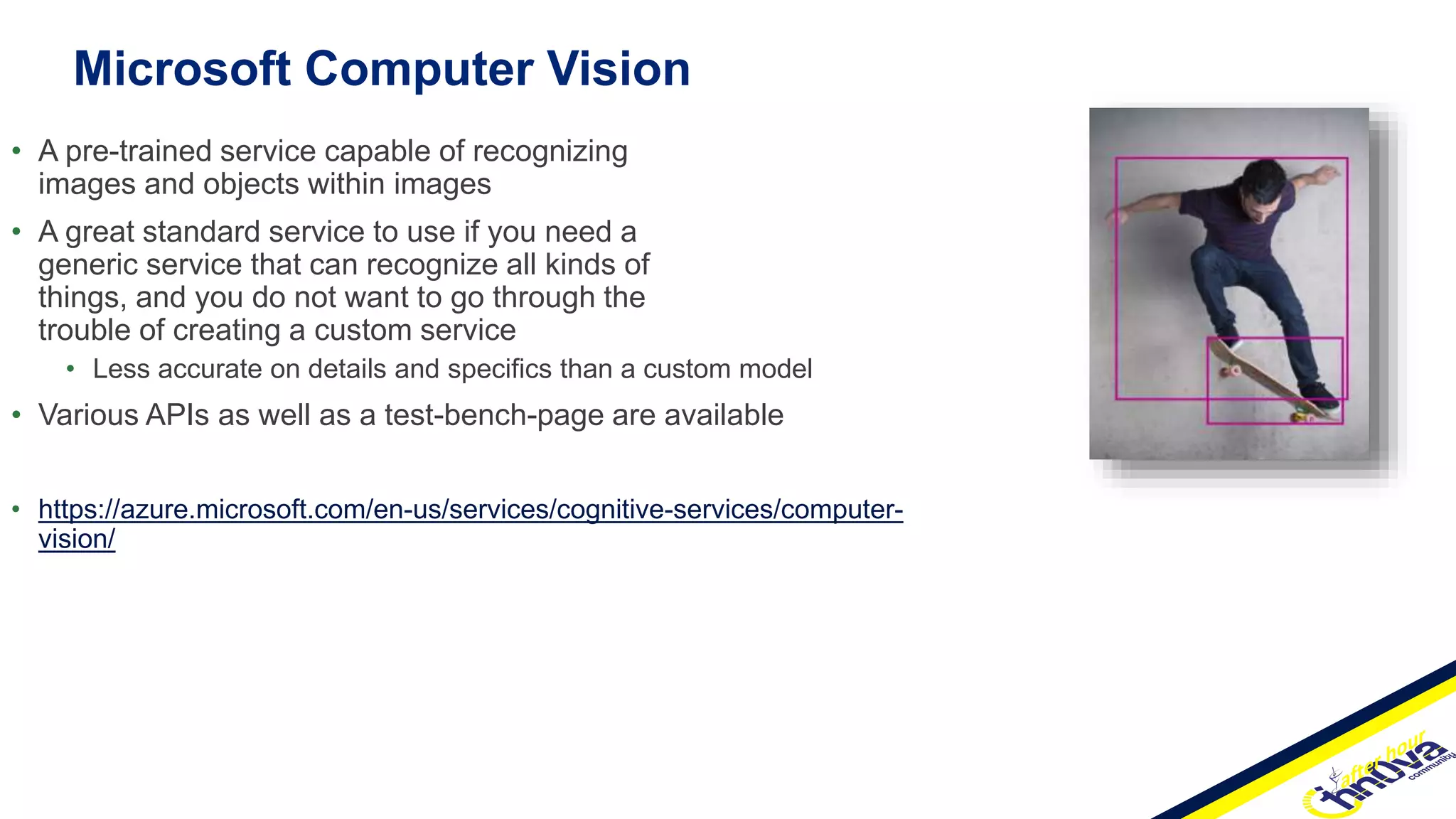

The document provides an overview of Marco Parenzan's work as a Senior Solution Architect focusing on Azure IoT and anomaly detection, emphasizing the integration of deep learning for image classification and object detection using various CNN models. It discusses methods for anomaly detection in time series data, including statistical profiling and machine learning approaches, and highlights Microsoft's ML.NET for building custom machine learning models within .NET applications. Additionally, it outlines several pre-trained services, such as Microsoft Computer Vision and Custom Vision, as well as frameworks like TensorFlow and PyTorch, underlining the evolution of data science solutions for .NET developers.

![Spectral Residual CNN (SrCnn)](https://image.slidesharecdn.com/2021-211121222942/75/Anomaly-Detection-with-Azure-and-NET-38-2048.jpg)

Spectral Residual CNN (SrCnn)

• Goal: monitor a time series continuously and raise timely alerts for potential incidents.

• The algorithm first computes the Fourier Transform of the original data. It then computes the spectral residual of the log amplitude of the transformed signal and applies the Inverse Fourier Transform to map the sequence back from the frequency domain to the time domain. The resulting sequence is called the saliency map. The anomaly score is the relative difference between each saliency-map value and its moving average; if the score at a timestep is above a threshold, the value at that timestep is flagged as an outlier.

• The SR algorithm has several parameters. To obtain a model with good performance, tune windowSize and threshold first, since these are the most important SR parameters. Then search for an appropriate judgementWindowSize no larger than windowSize. The remaining parameters can be left at their default values.

• Reference: Time-Series Anomaly Detection Service at Microsoft [https://arxiv.org/pdf/1906.03821.pdf]
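The SR steps described on this slide can be sketched in plain Python as follows. This is a minimal illustration under several assumptions, not ML.NET's SrCnn implementation and not the paper's reference code. The function names (`dft`, `saliency_map`, `sr_scores`, `detect_anomalies`) and parameter names are hypothetical. A naive O(n²) DFT stands in for an FFT to keep the sketch dependency-free. The whole input series is treated as a single window, so windowSize corresponds to `len(series)`. `averaging_window` (width of the moving average applied to the log amplitude), `judgement_window` (how many preceding saliency values are averaged) and `threshold` are loose analogues of the parameters named on the slide, with defaults chosen only for illustration. The referenced paper also extends the series with extrapolated points before the transform and trains a CNN on the saliency map; both steps are omitted here.

```python
import cmath
import math


def dft(values, inverse=False):
    """Naive O(n^2) discrete Fourier transform, used here in place of an FFT."""
    n = len(values)
    sign = 1 if inverse else -1
    out = [
        sum(values[t] * cmath.exp(sign * 2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]
    # The inverse transform carries the 1/n normalization.
    return [v / n for v in out] if inverse else out


def saliency_map(series, averaging_window=3, eps=1e-8):
    """Spectral residual saliency map of a real-valued series (hypothetical helper)."""
    spectrum = dft(series)
    phase = [cmath.phase(c) for c in spectrum]
    # Log amplitude; eps avoids log(0) for empty frequency bins.
    log_amp = [math.log(abs(c) + eps) for c in spectrum]
    n = len(log_amp)
    half = averaging_window // 2
    # Circular centered moving average of the log amplitude (the smooth "average spectrum").
    avg_log_amp = [
        sum(log_amp[(k + d) % n] for d in range(-half, half + 1)) / (2 * half + 1)
        for k in range(n)
    ]
    # Spectral residual: log amplitude minus its smoothed version.
    residual = [l - a for l, a in zip(log_amp, avg_log_amp)]
    # Back to the time domain with the original phase; the magnitude is the saliency.
    back = dft([cmath.exp(r + 1j * p) for r, p in zip(residual, phase)], inverse=True)
    return [abs(v) for v in back]


def sr_scores(series, averaging_window=3, judgement_window=21, eps=1e-8):
    """Relative difference between each saliency value and the mean of the preceding ones."""
    # The whole series is the window here, so judgement_window may not exceed its length.
    if judgement_window > len(series):
        raise ValueError("judgement_window must not exceed the window (series) length")
    sal = saliency_map(series, averaging_window)
    scores = []
    for i, s in enumerate(sal):
        if i < judgement_window:
            scores.append(0.0)  # warm-up: not enough preceding values to average yet
            continue
        local_mean = sum(sal[i - judgement_window:i]) / judgement_window
        scores.append((s - local_mean) / (local_mean + eps))
    return scores


def detect_anomalies(series, threshold=0.3, **kwargs):
    """Indices whose SR score exceeds the threshold."""
    return [i for i, s in enumerate(sr_scores(series, **kwargs)) if s > threshold]


if __name__ == "__main__":
    # A periodic signal with one injected spike at index 60.
    x = [math.sin(2 * math.pi * t / 20) for t in range(100)]
    x[60] += 10.0
    print(detect_anomalies(x))
```

With these defaults the injected spike at index 60 receives by far the highest score and is flagged. Other points may also cross the threshold, which is where tuning `threshold` and `judgement_window` (and, in ML.NET, windowSize) comes in, as the slide suggests.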