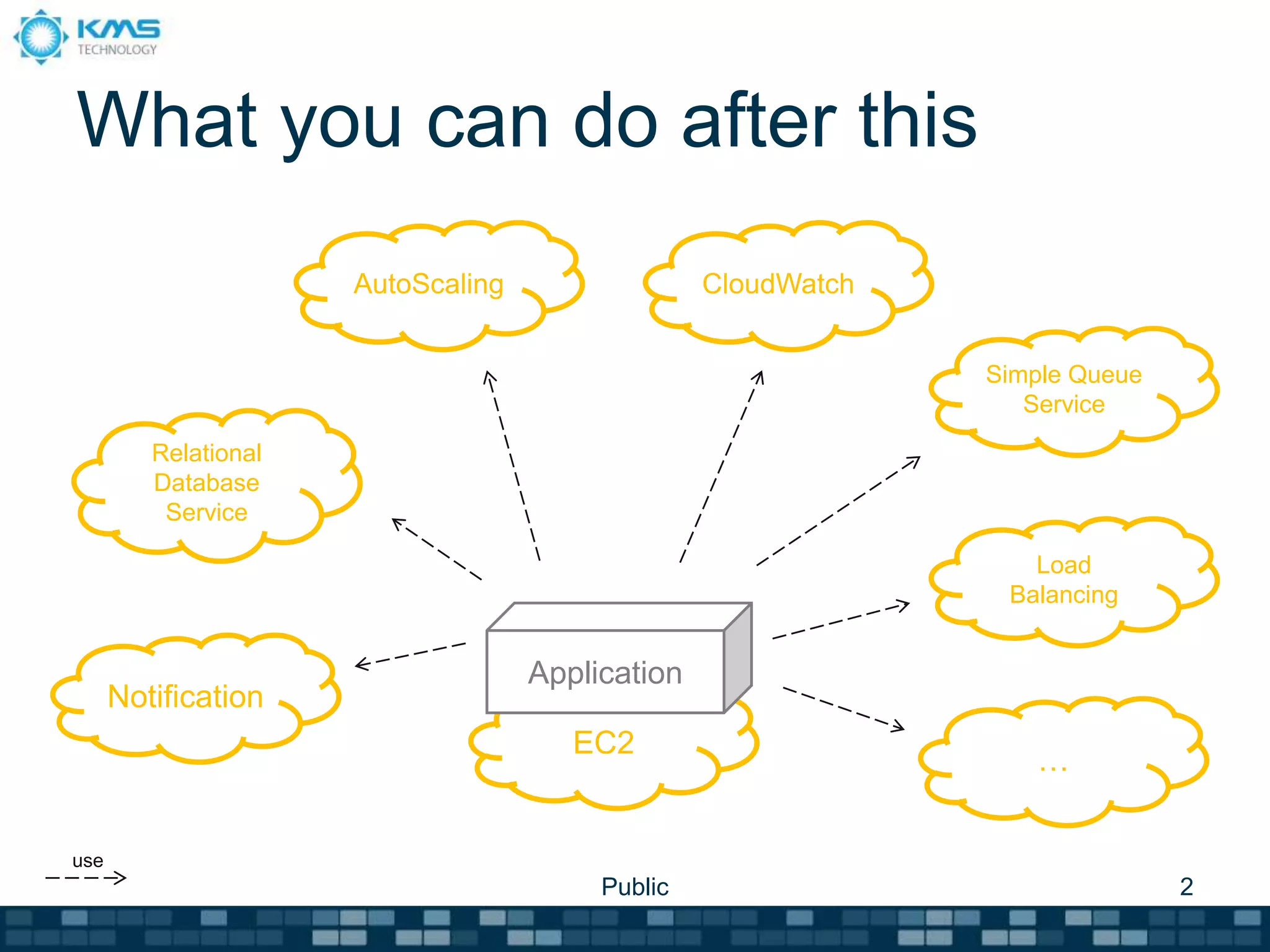

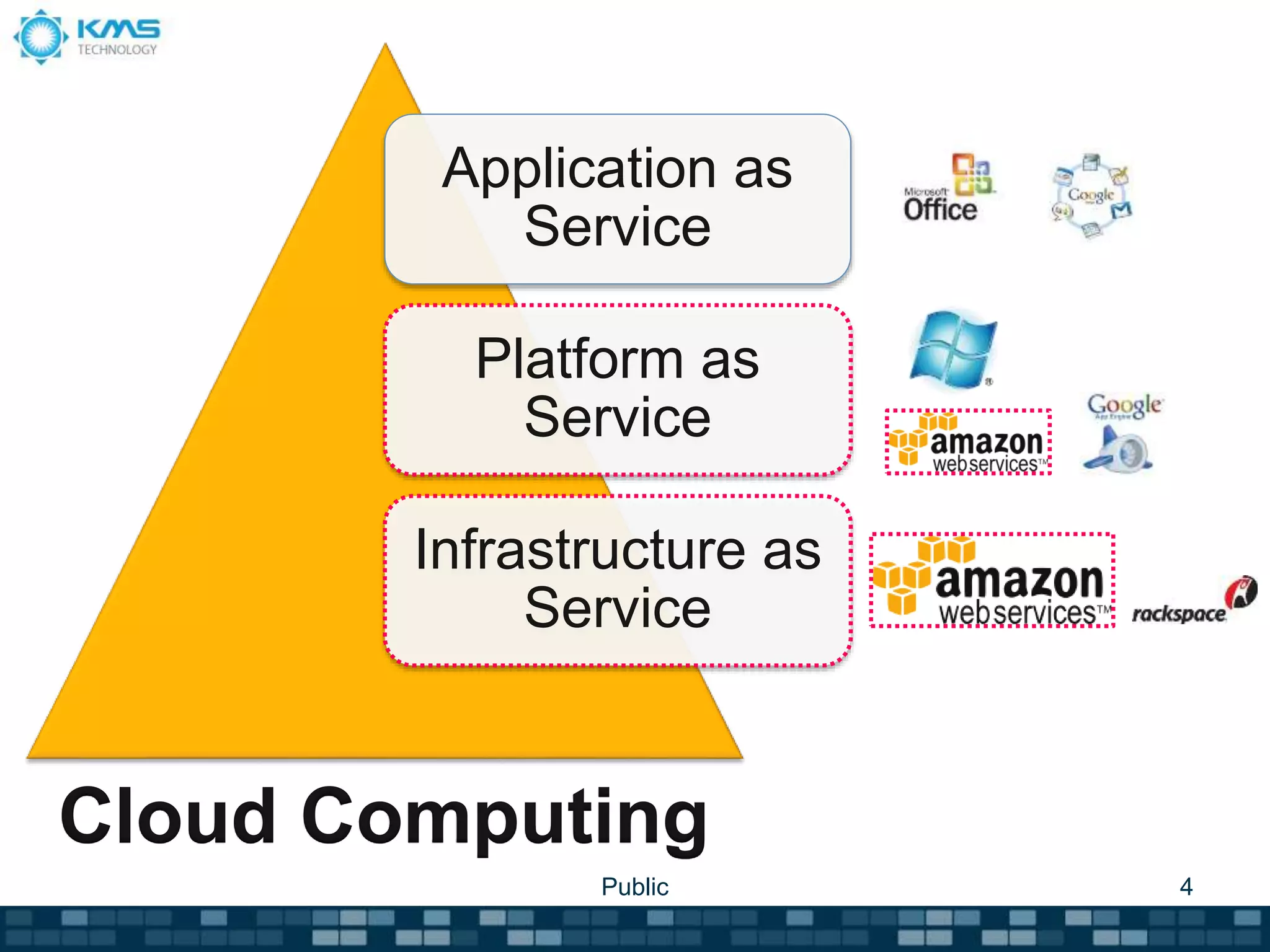

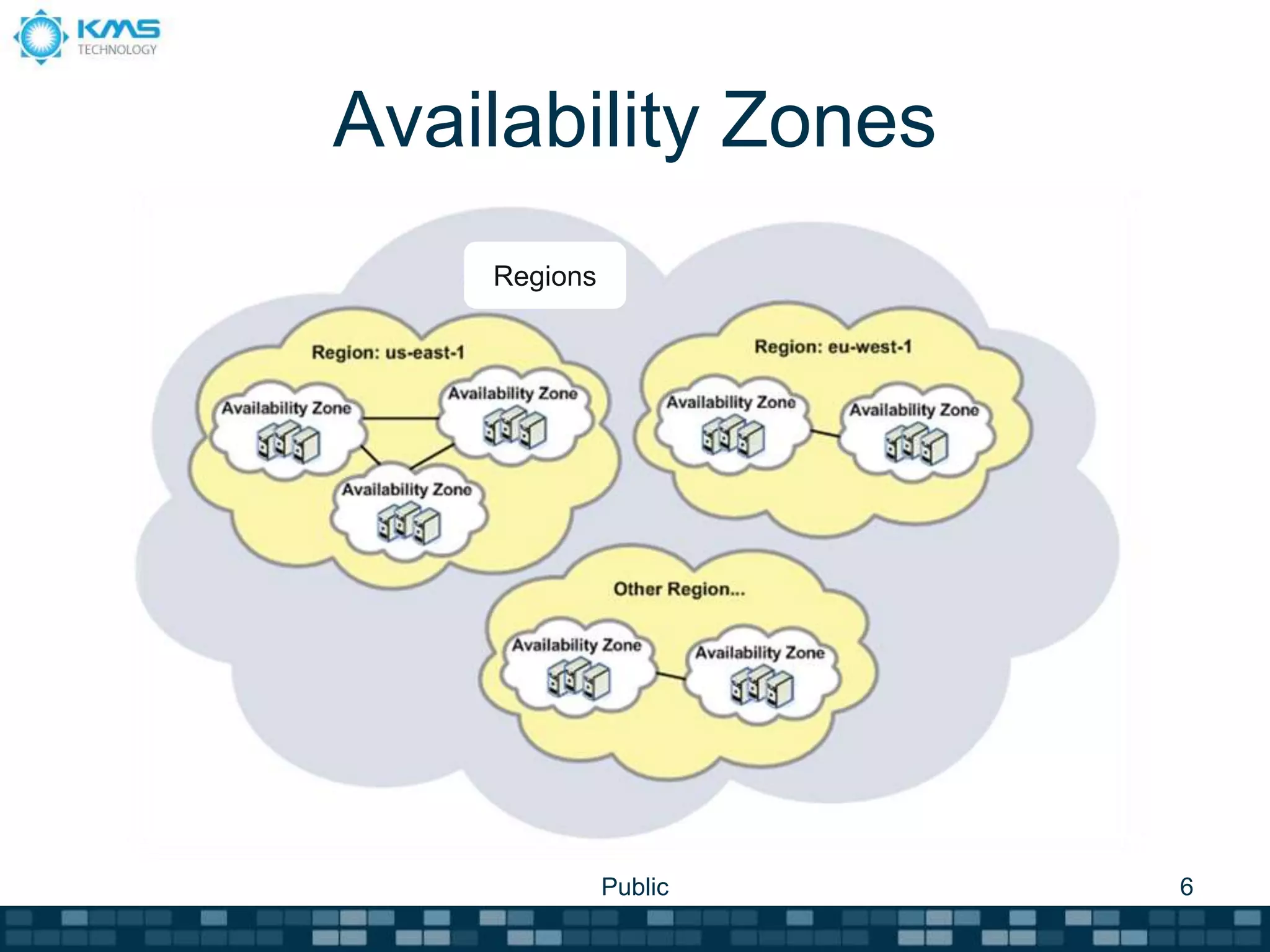

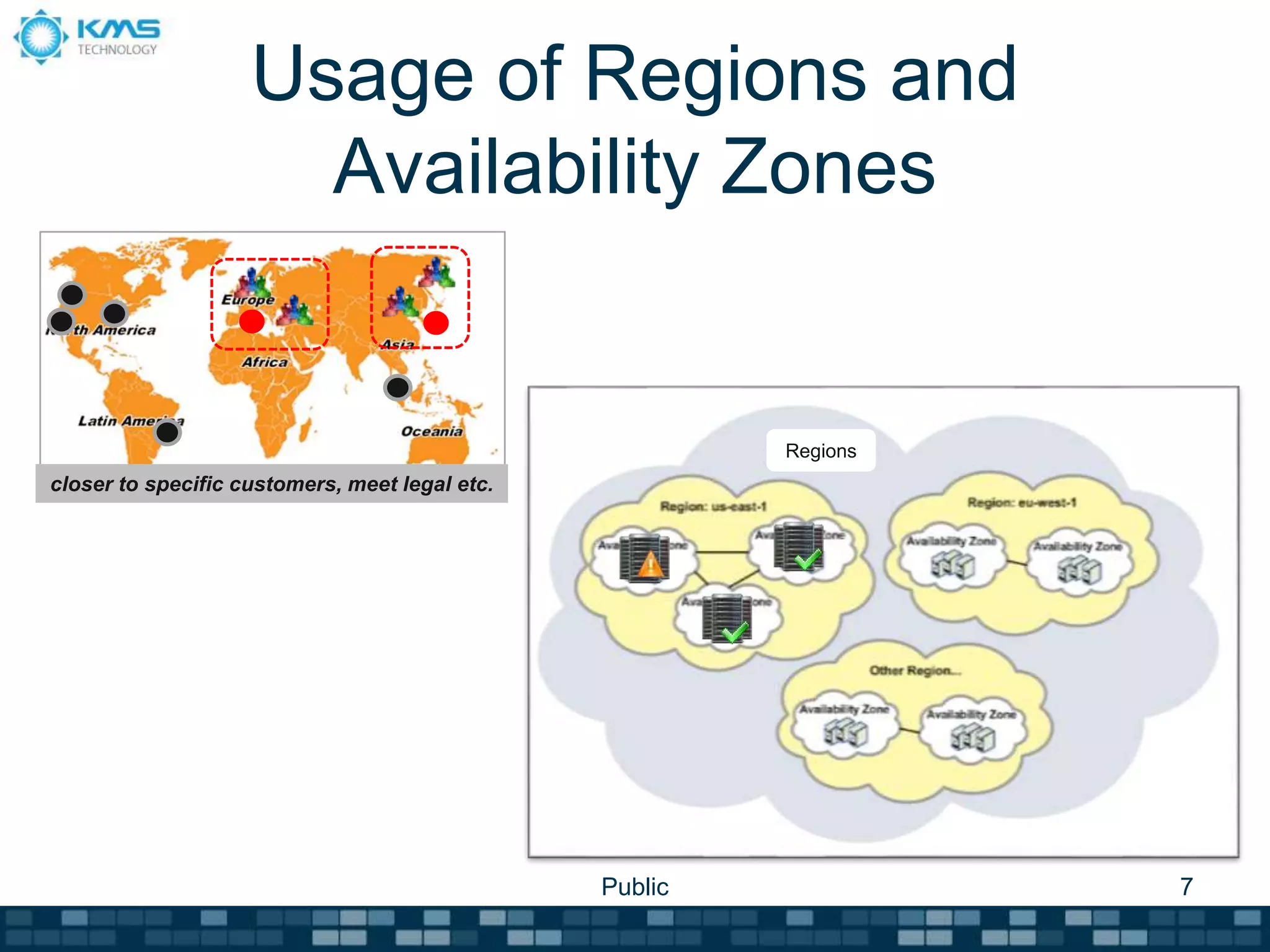

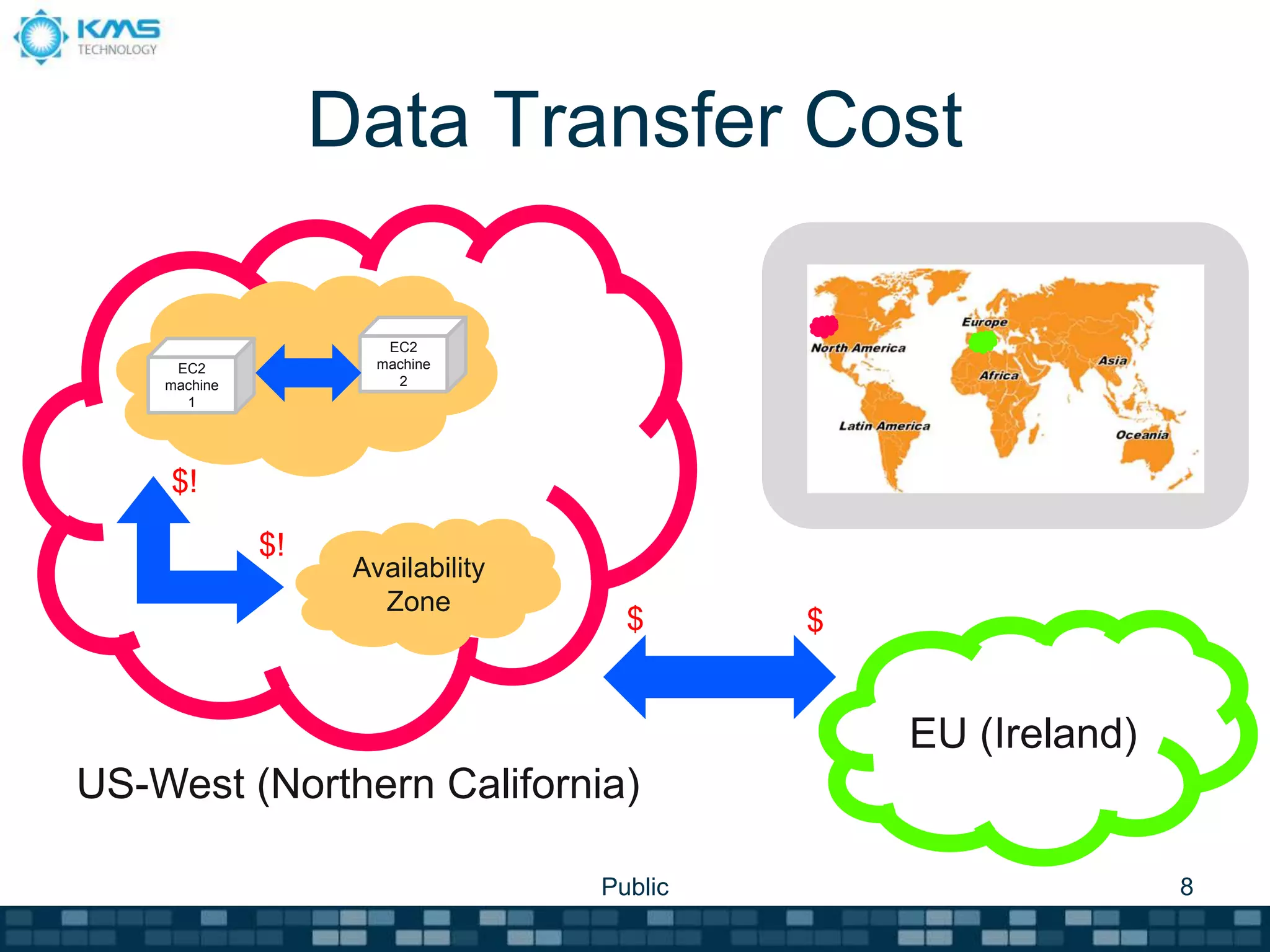

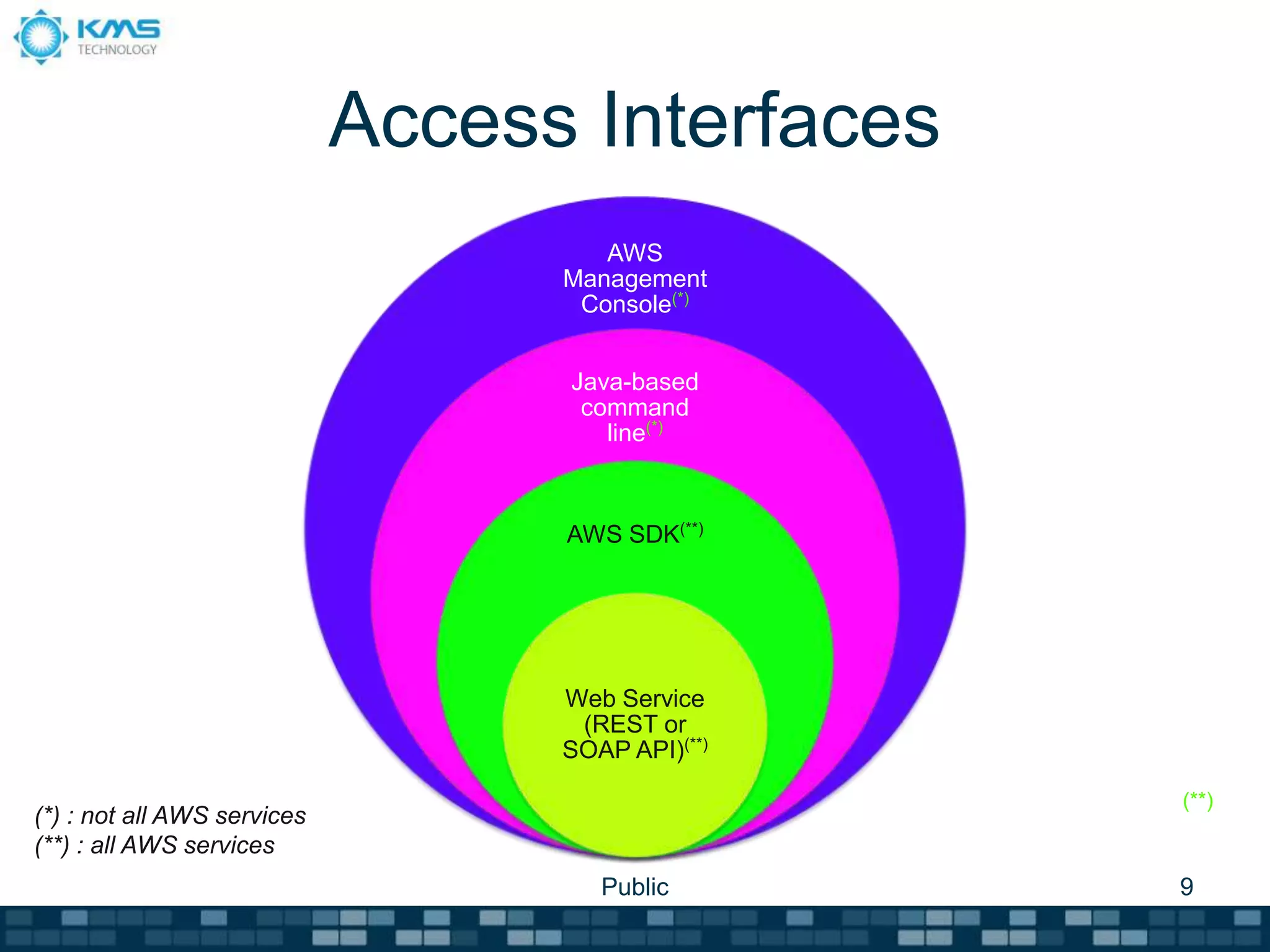

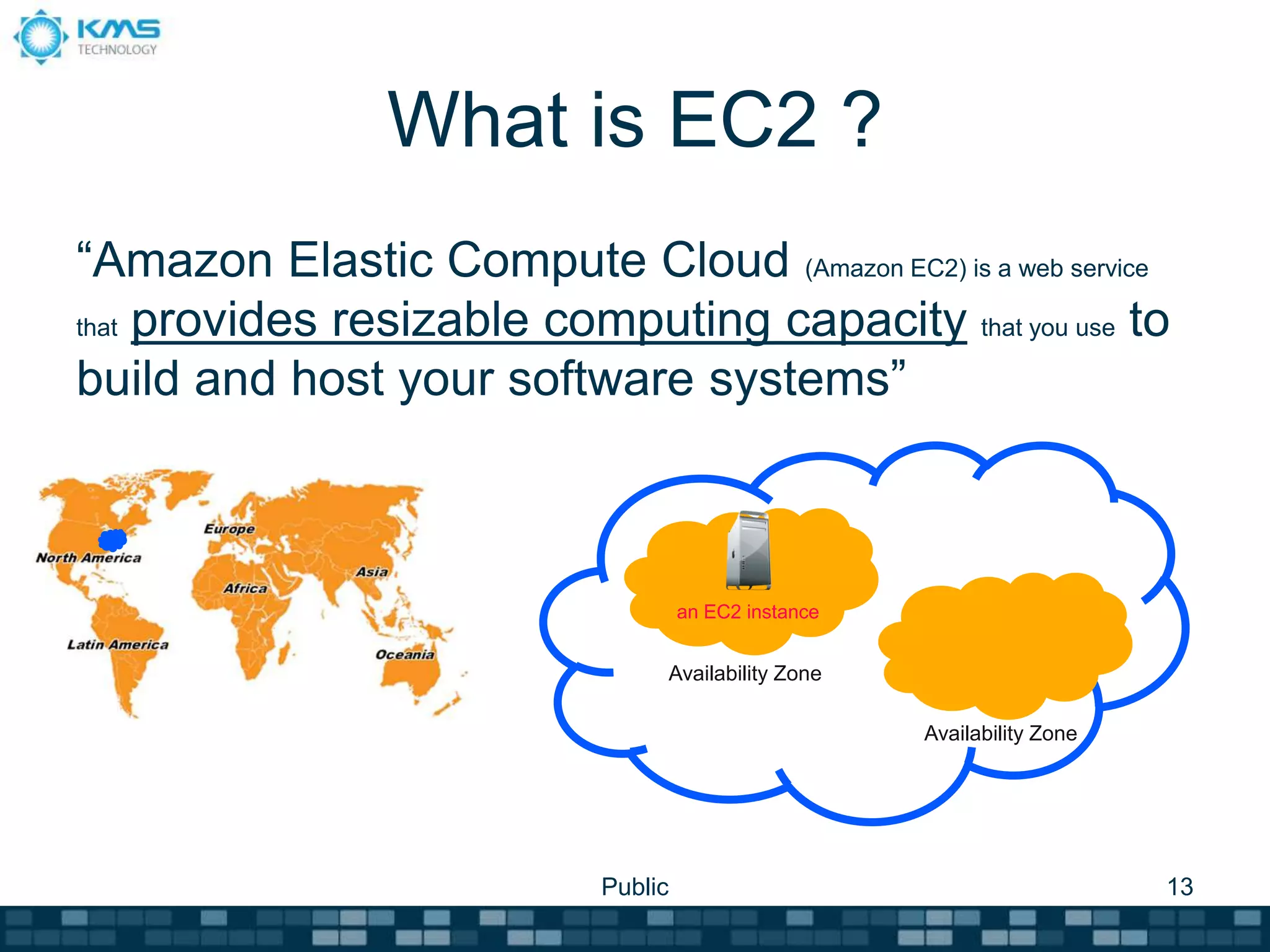

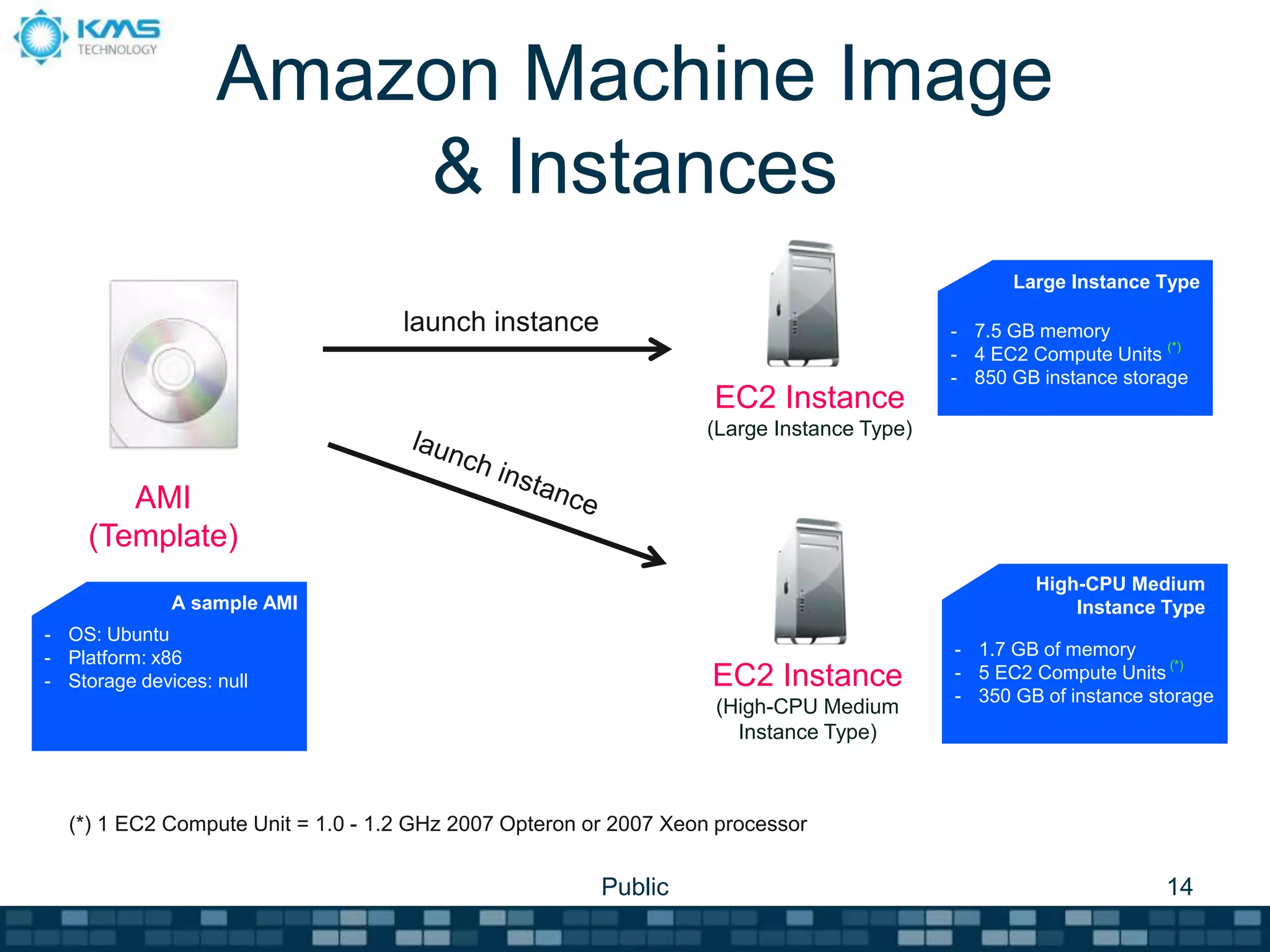

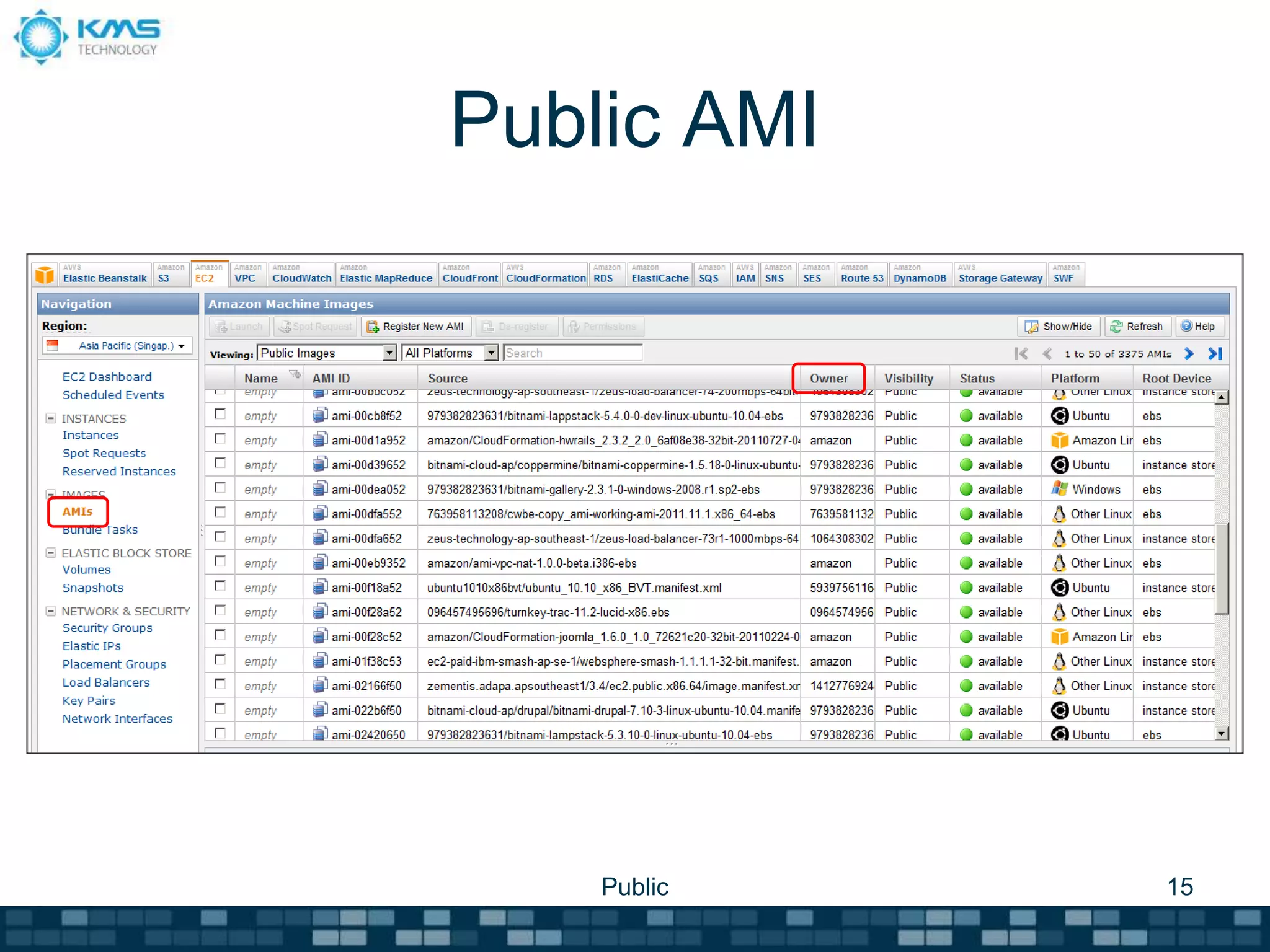

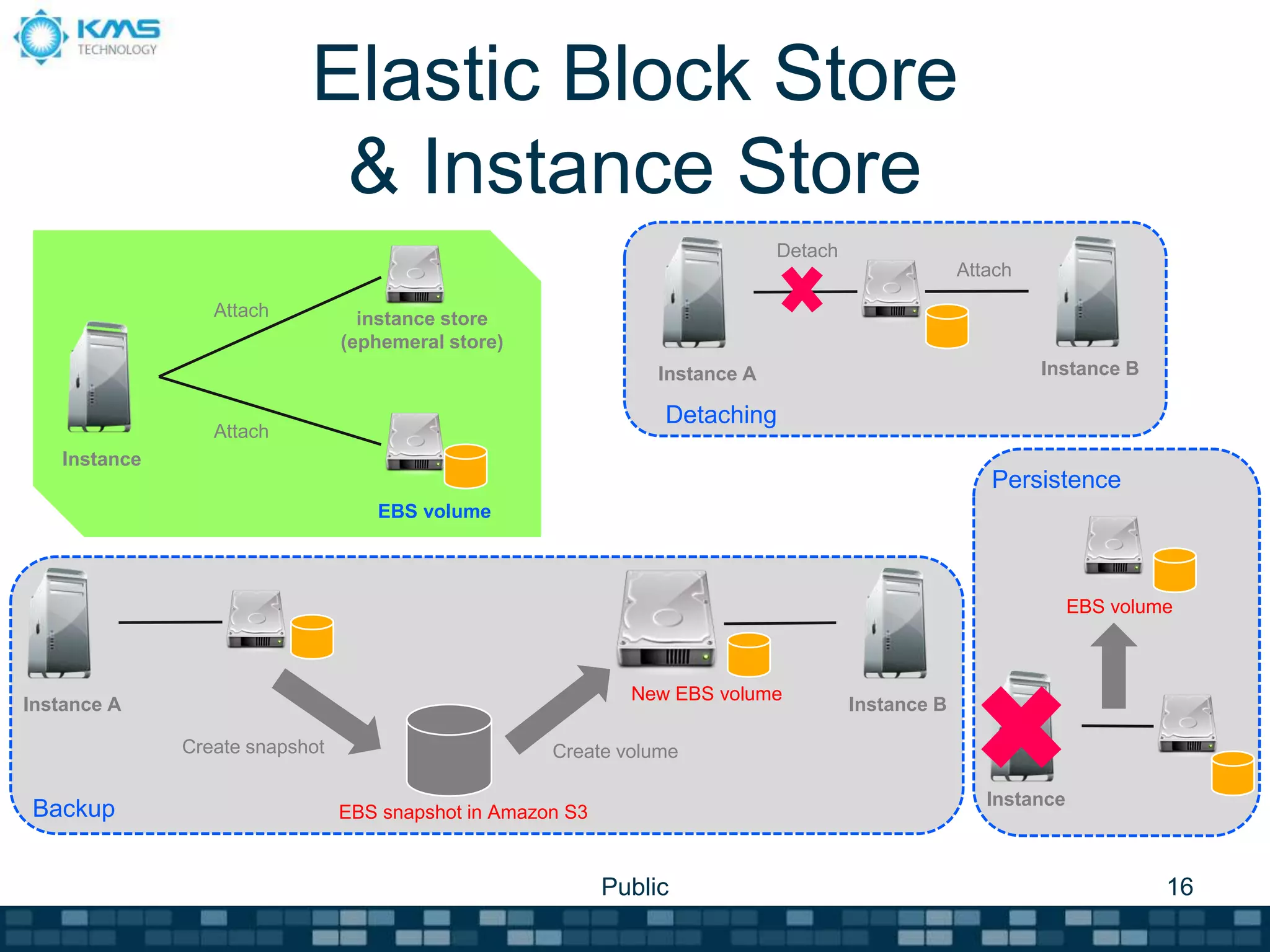

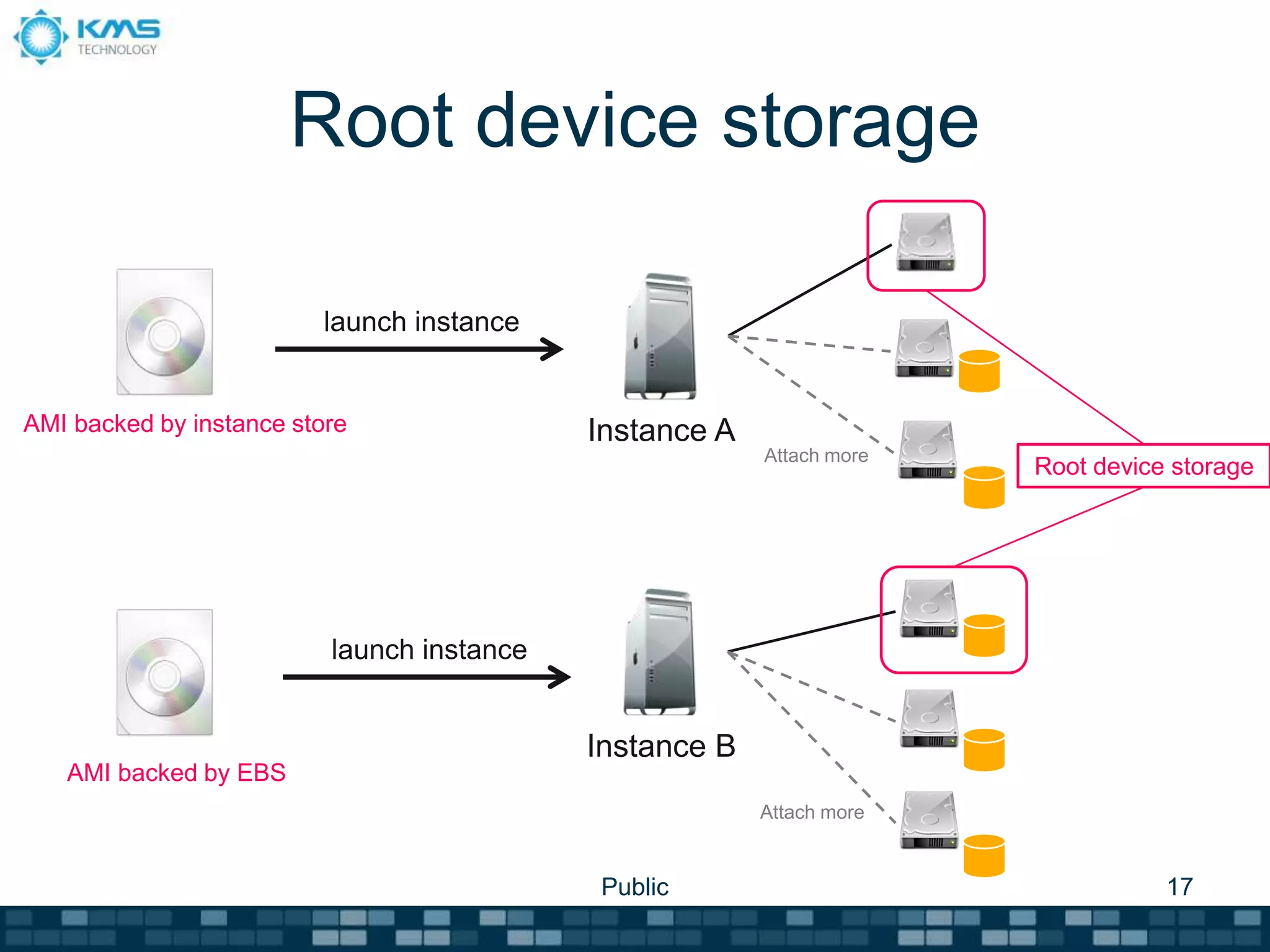

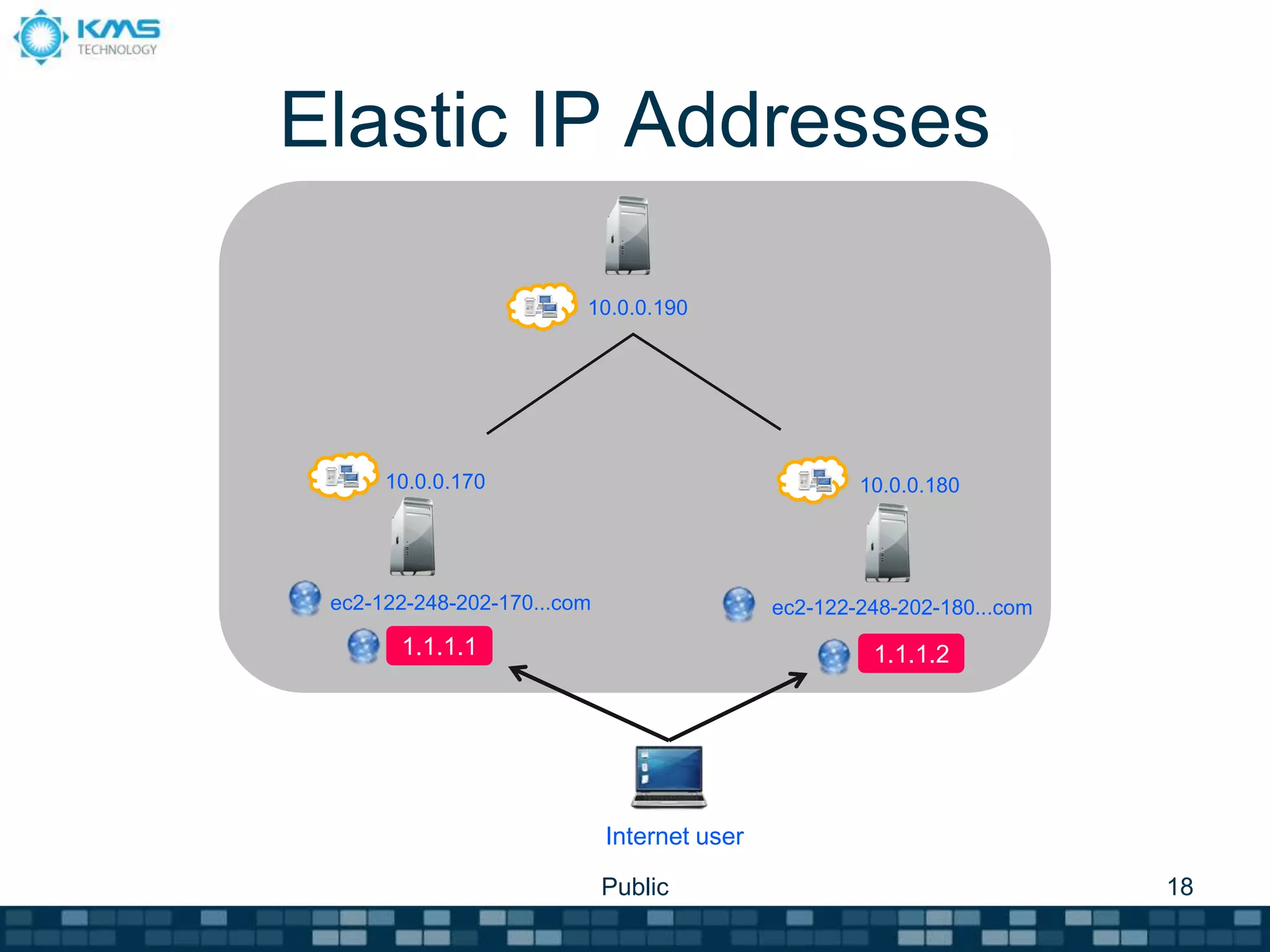

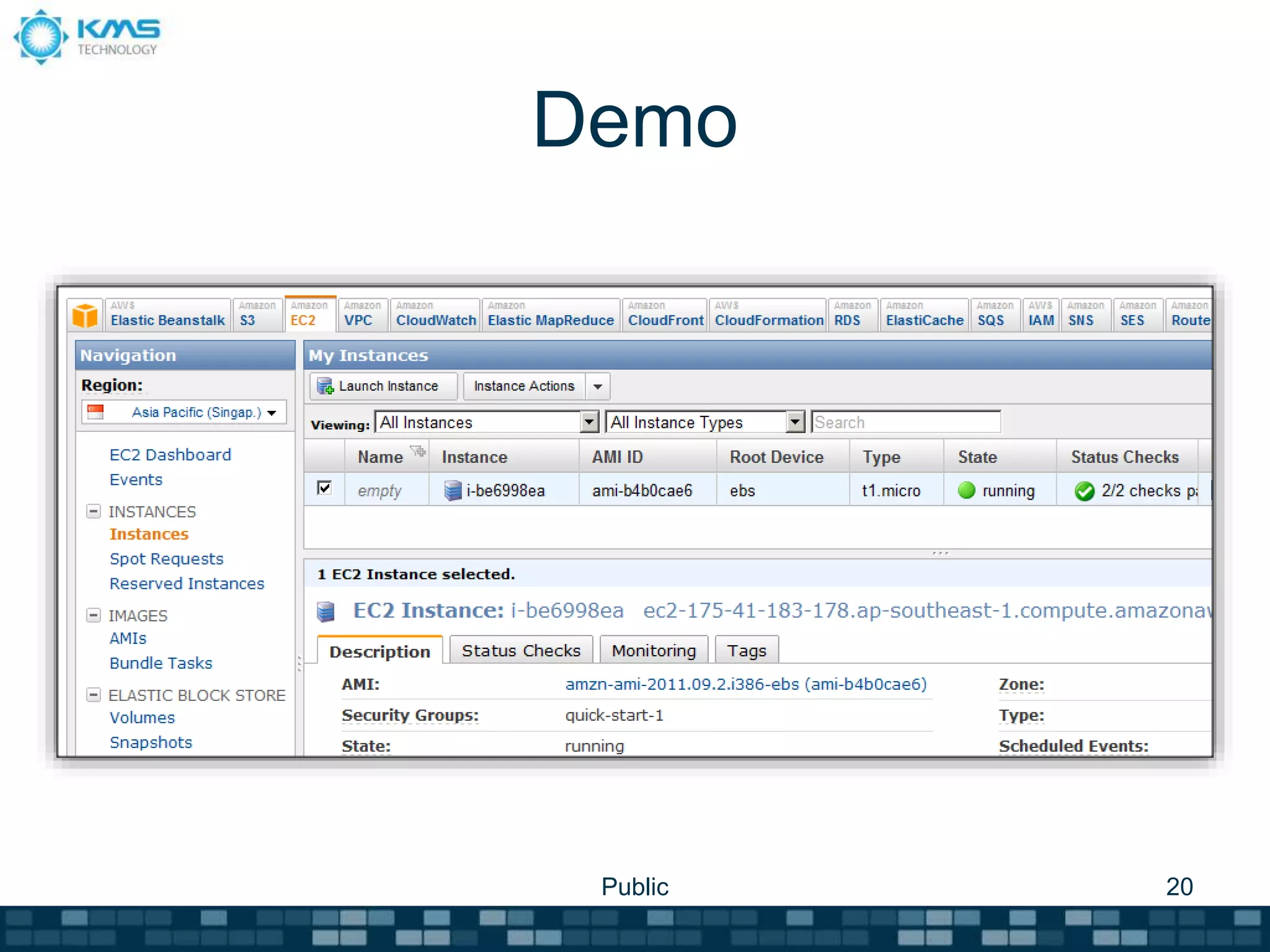

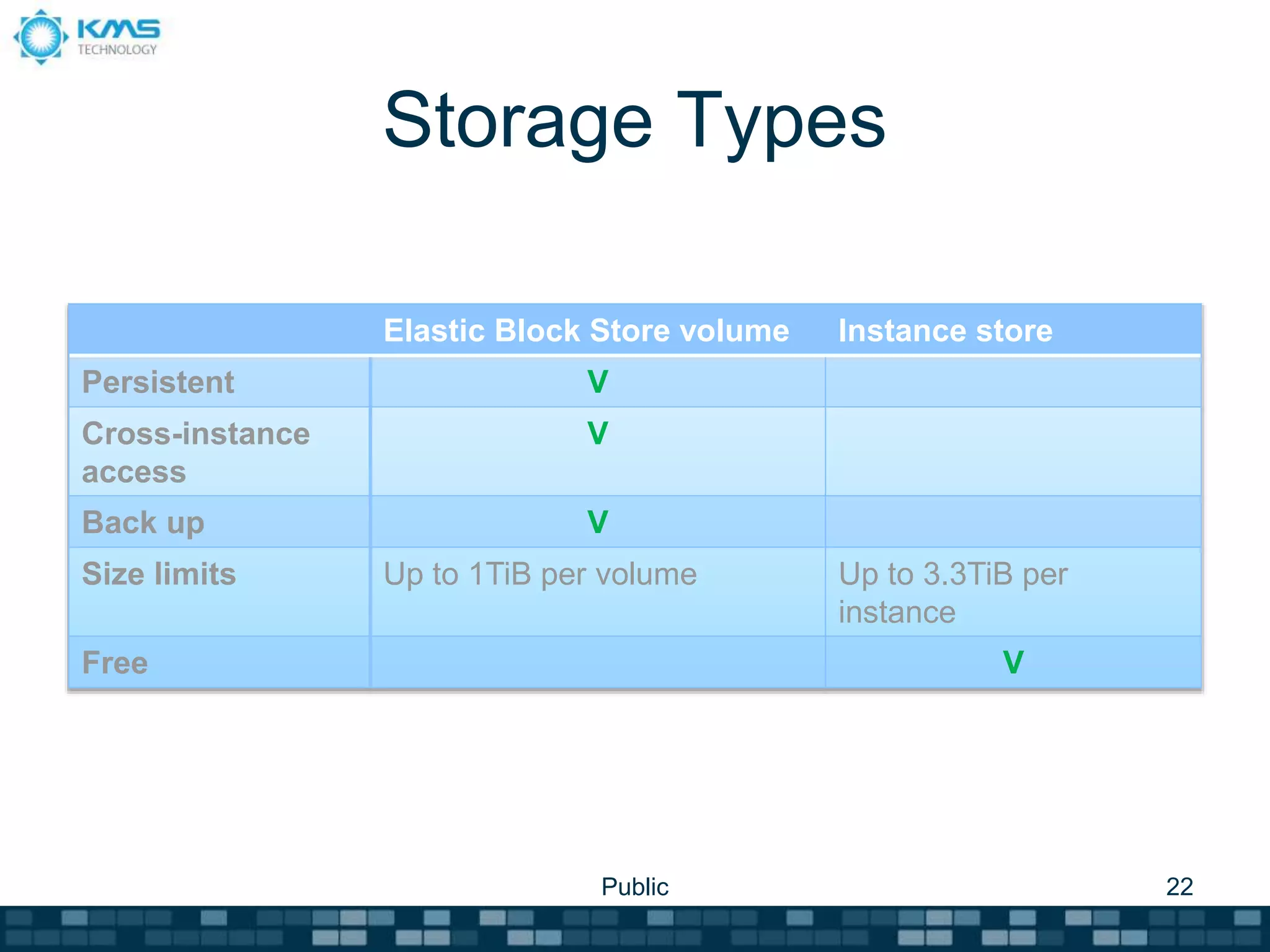

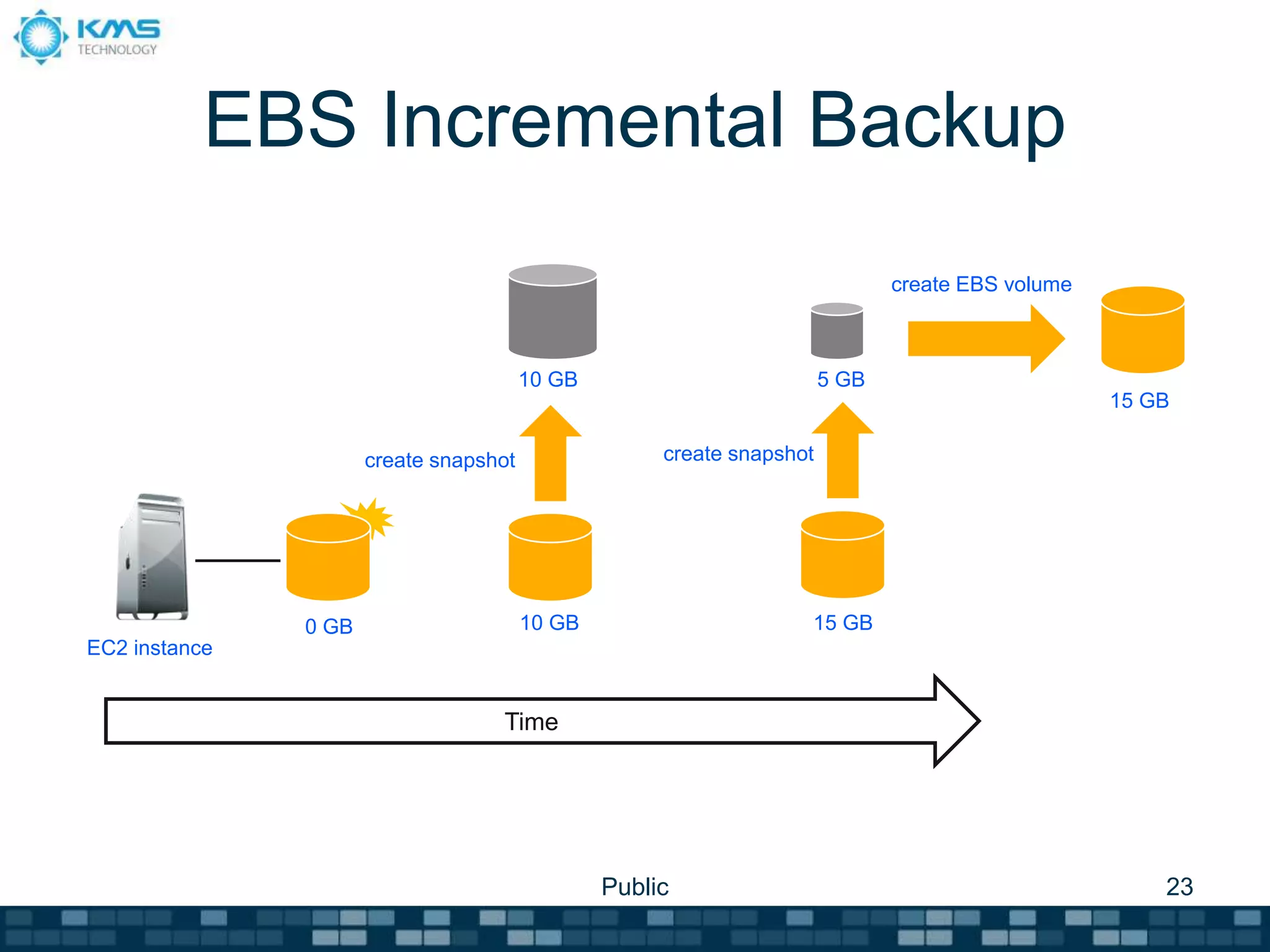

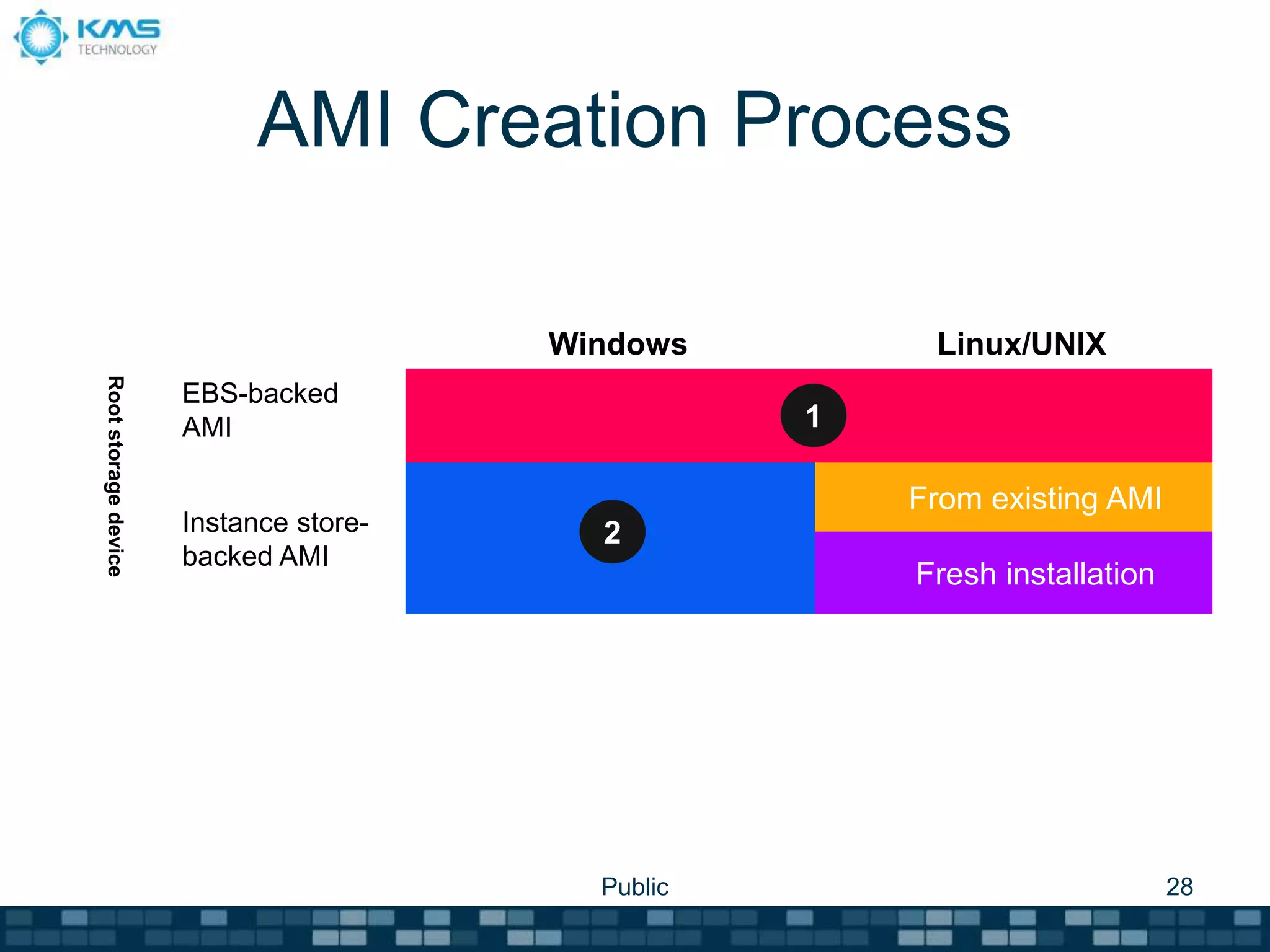

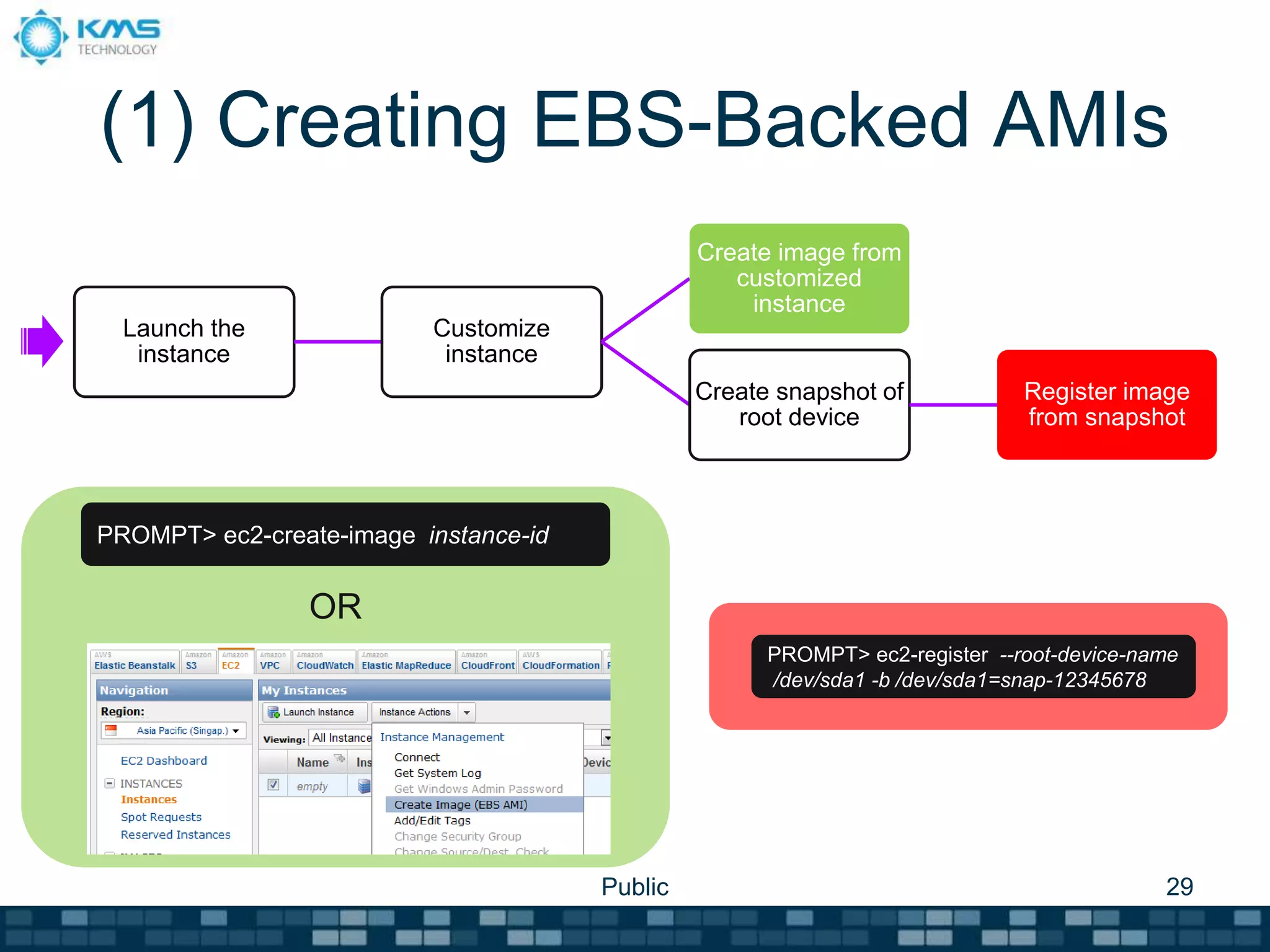

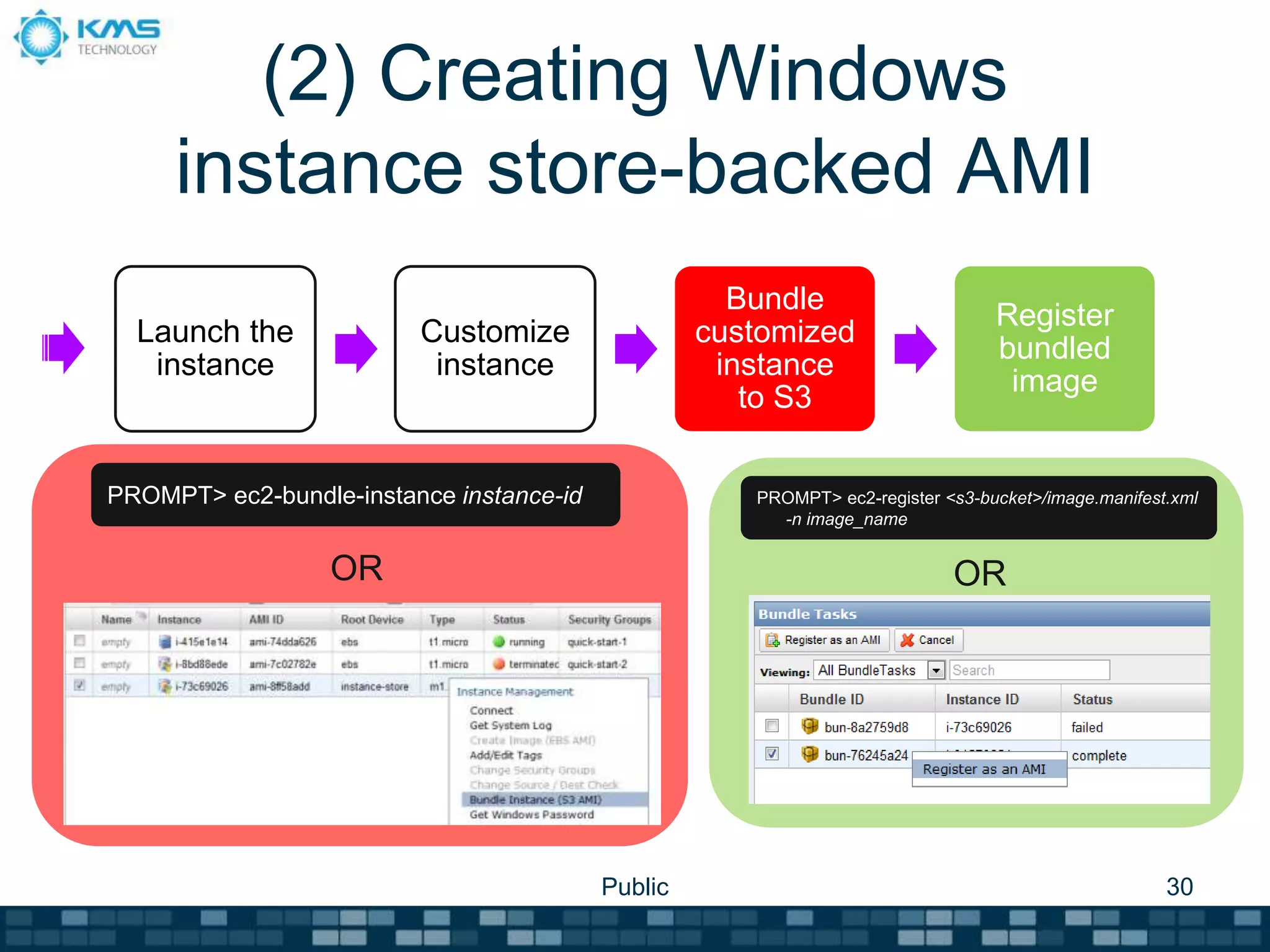

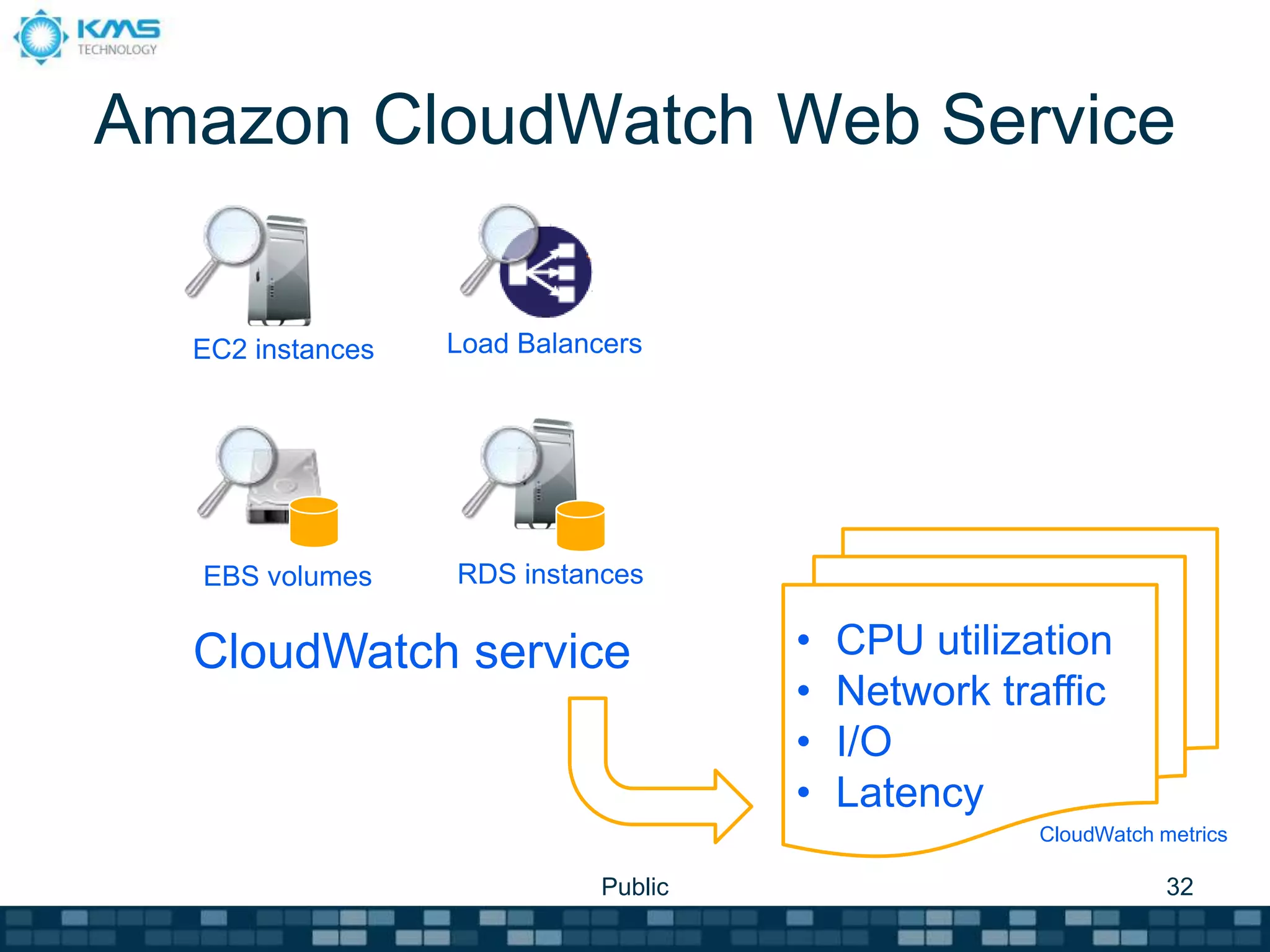

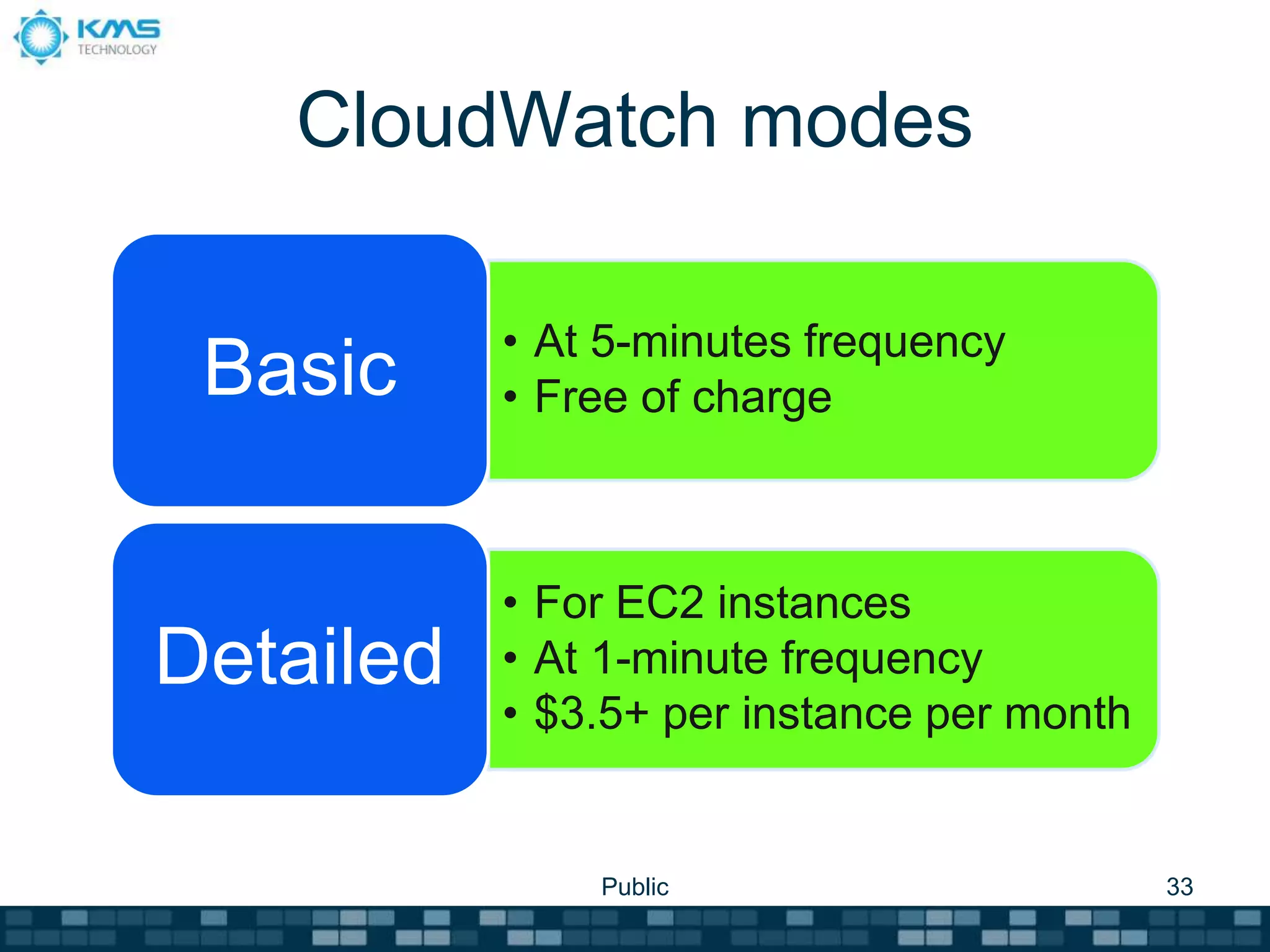

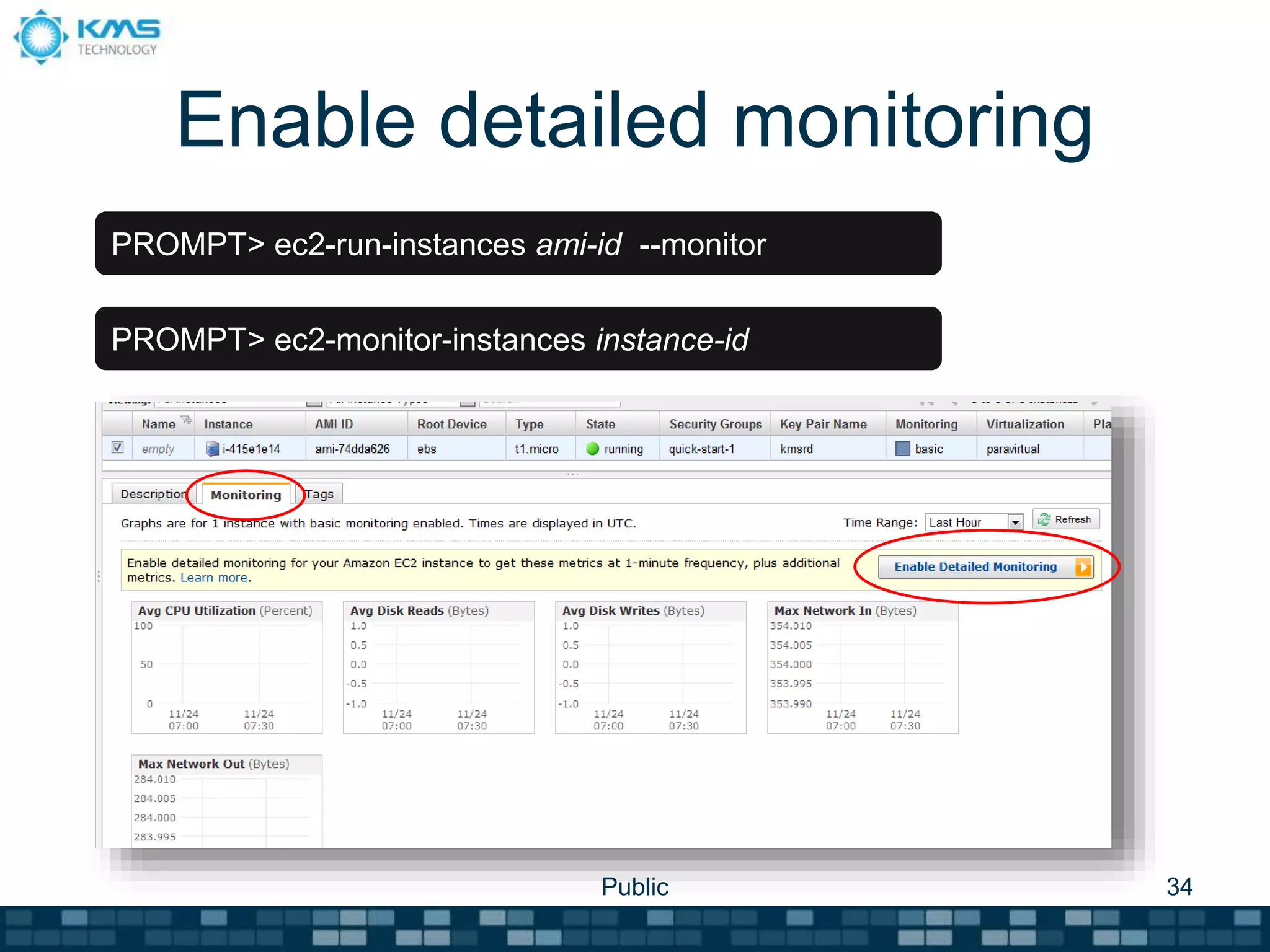

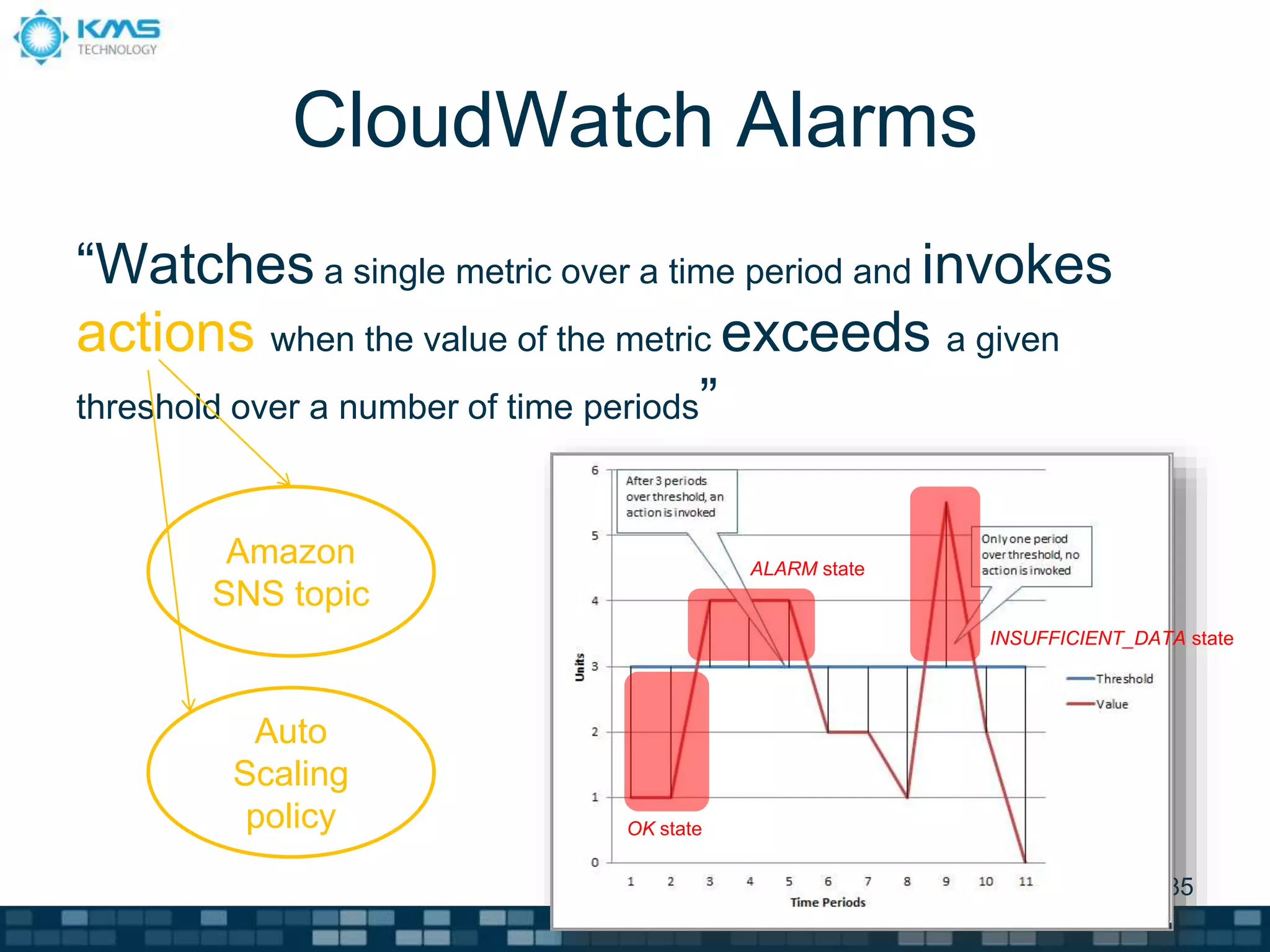

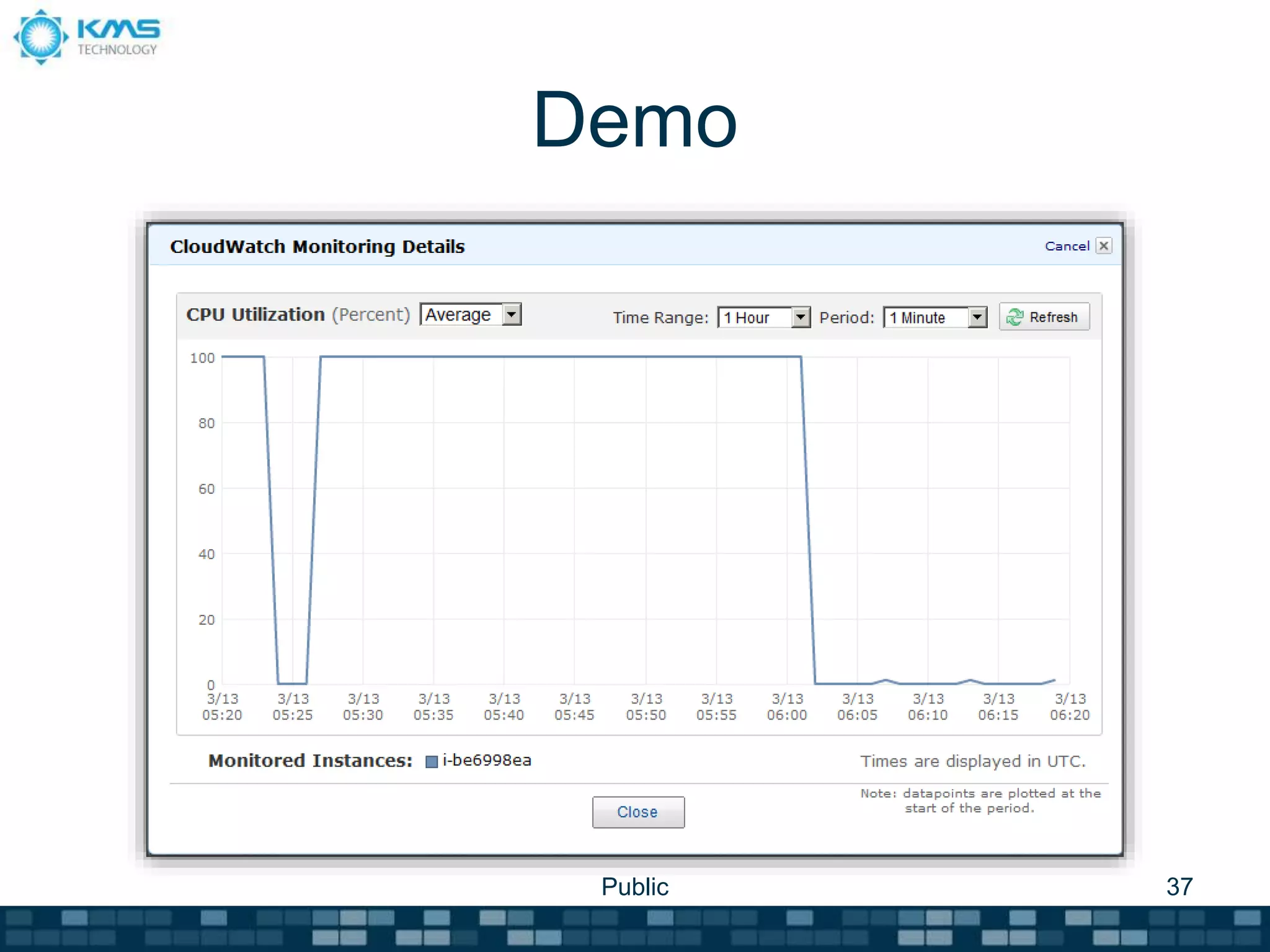

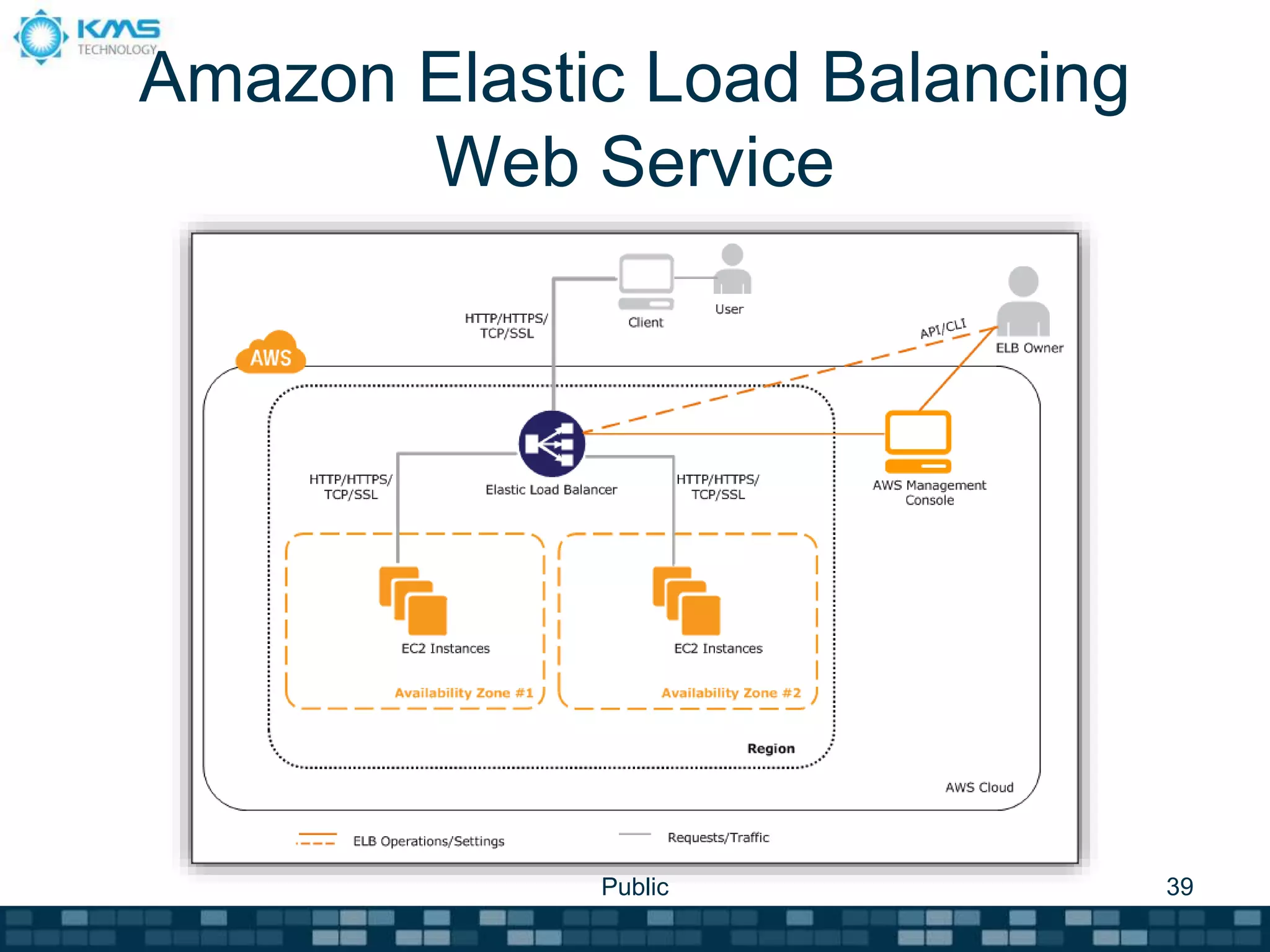

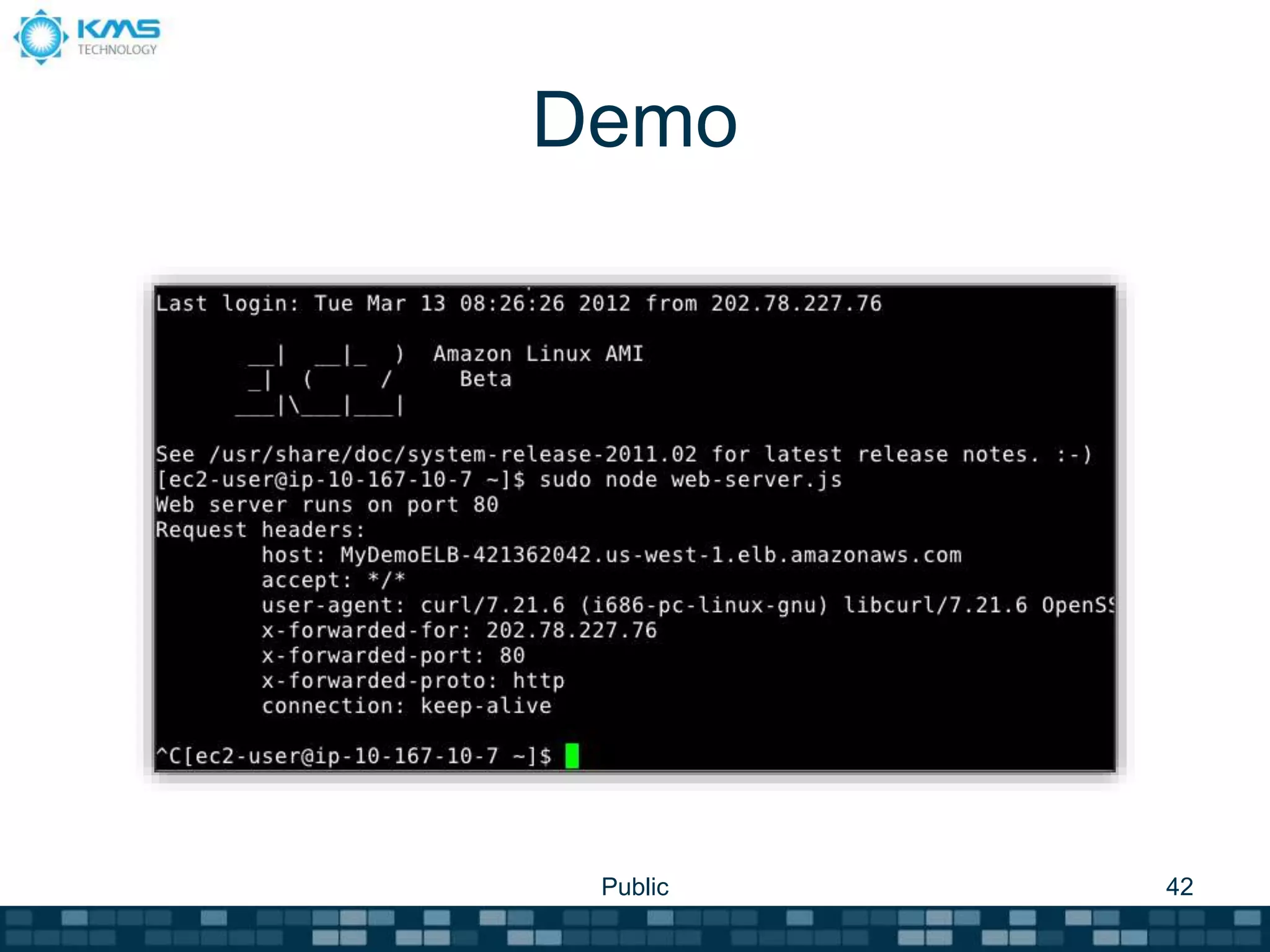

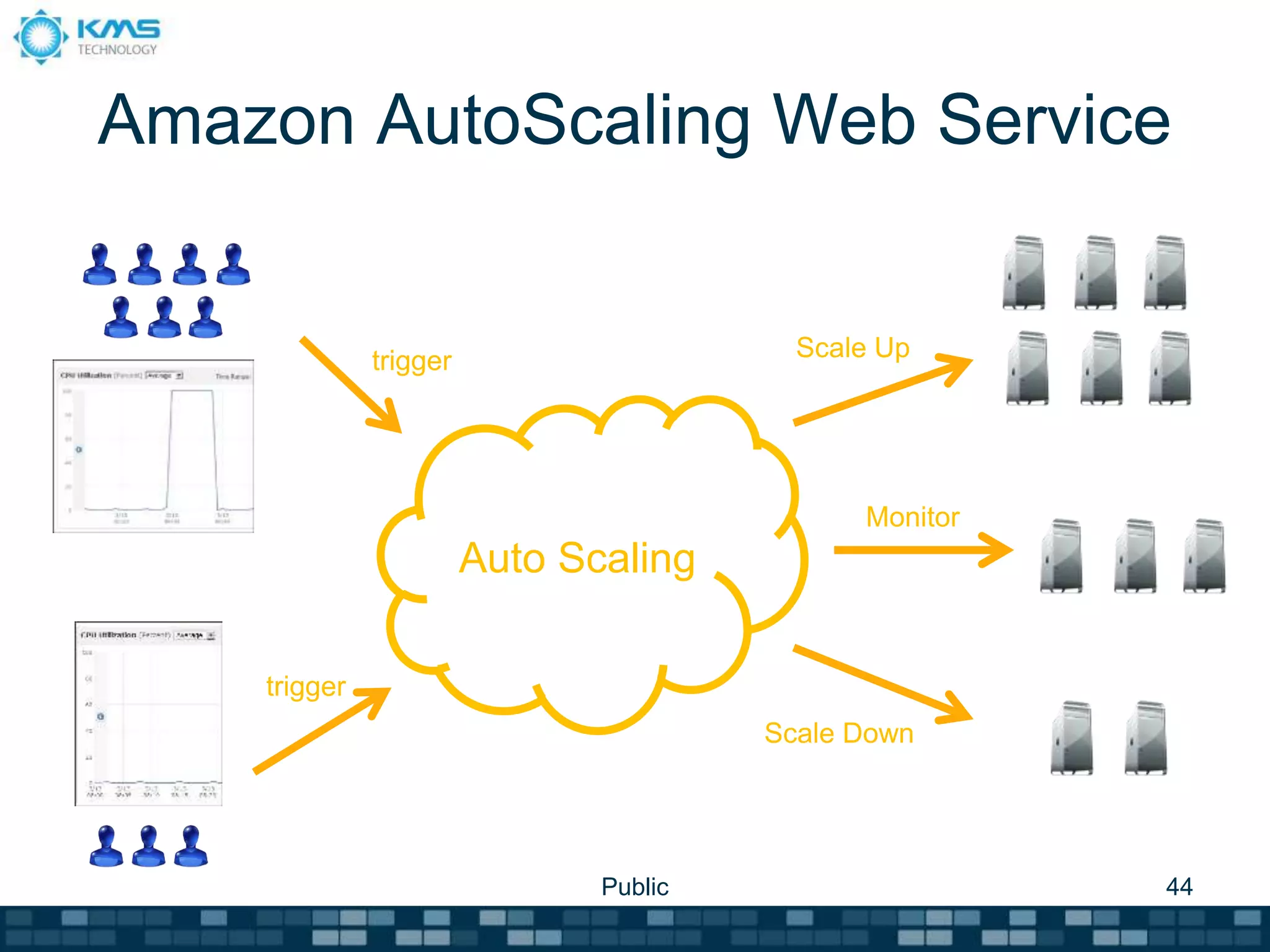

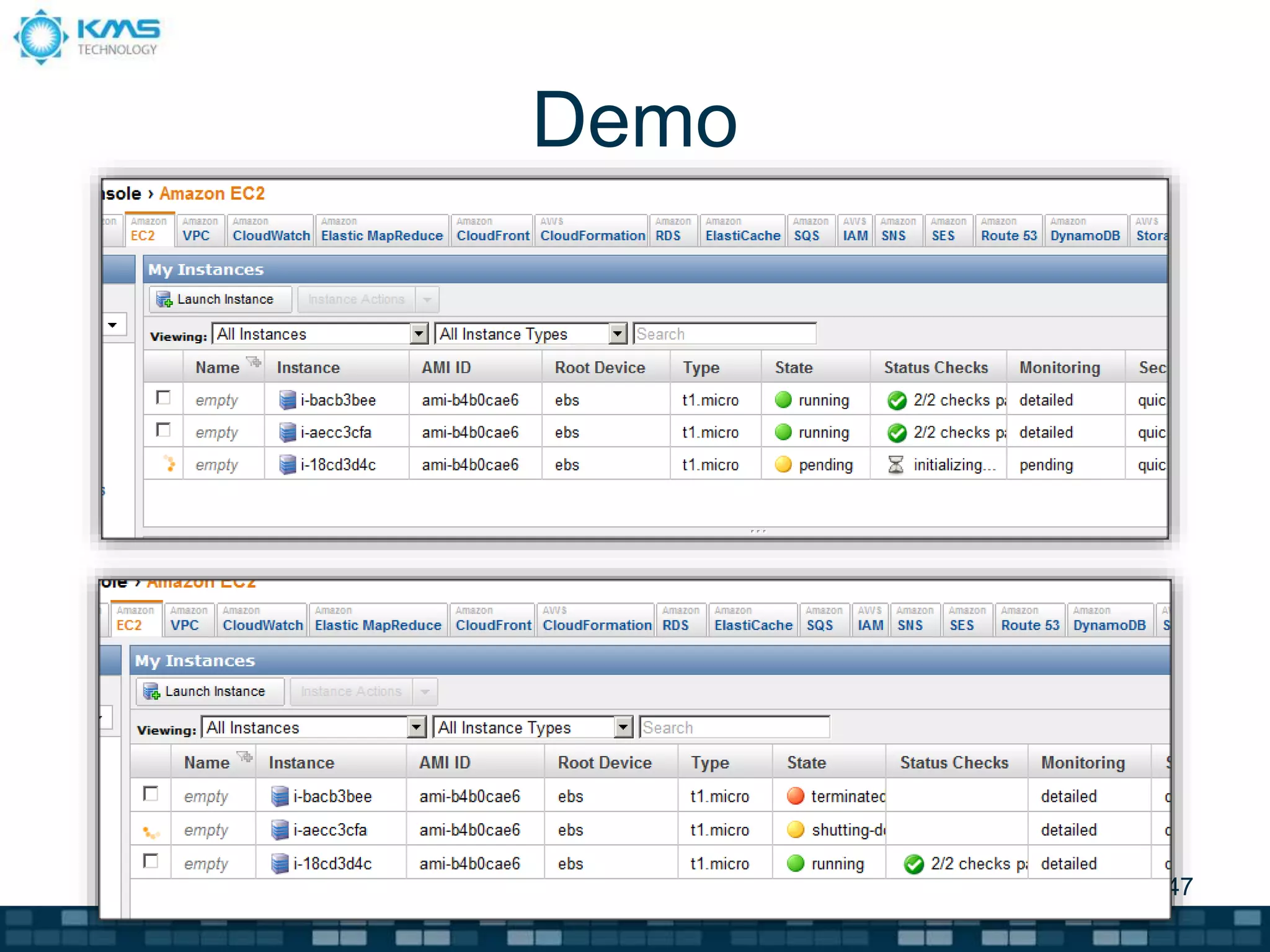

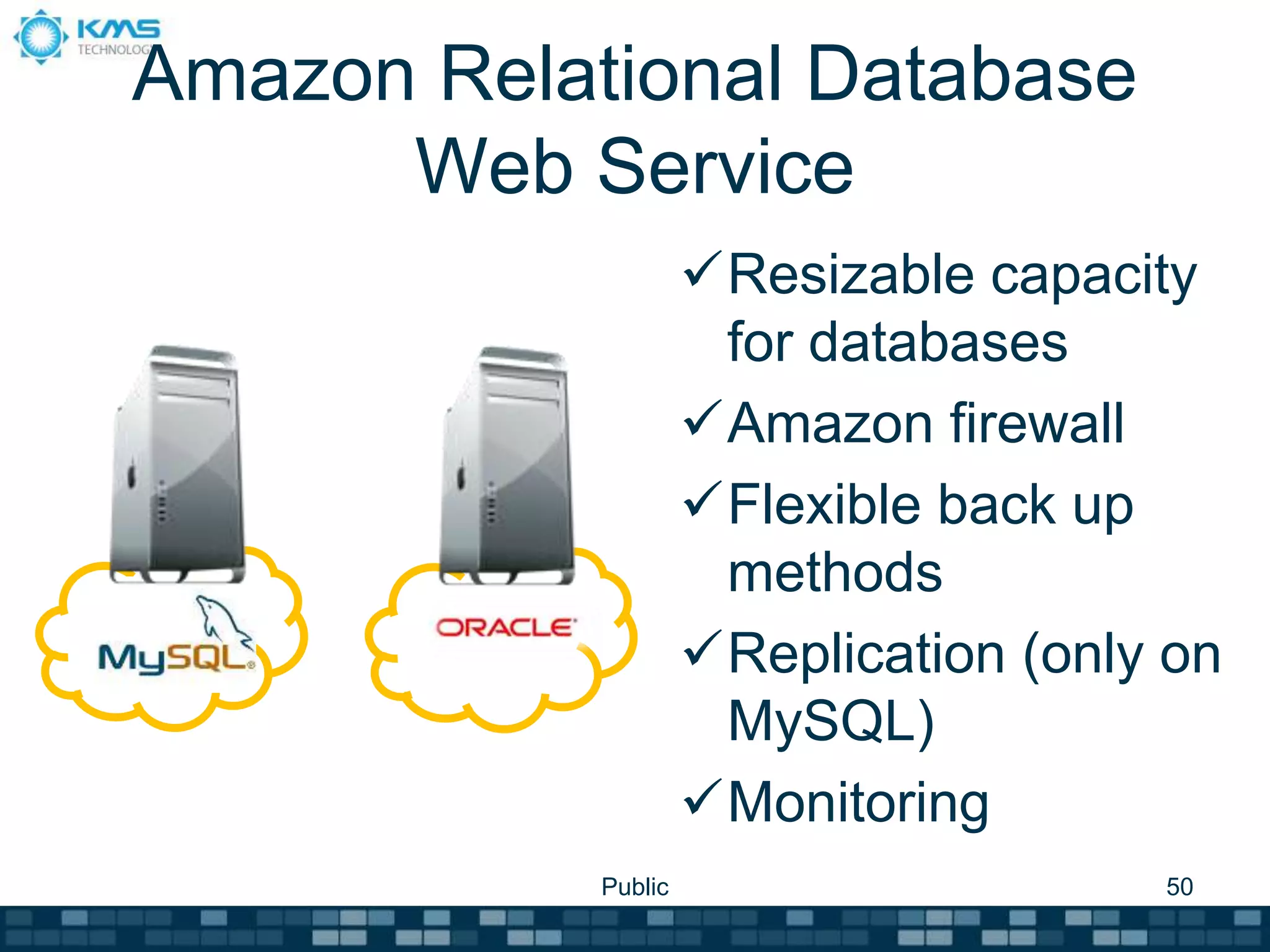

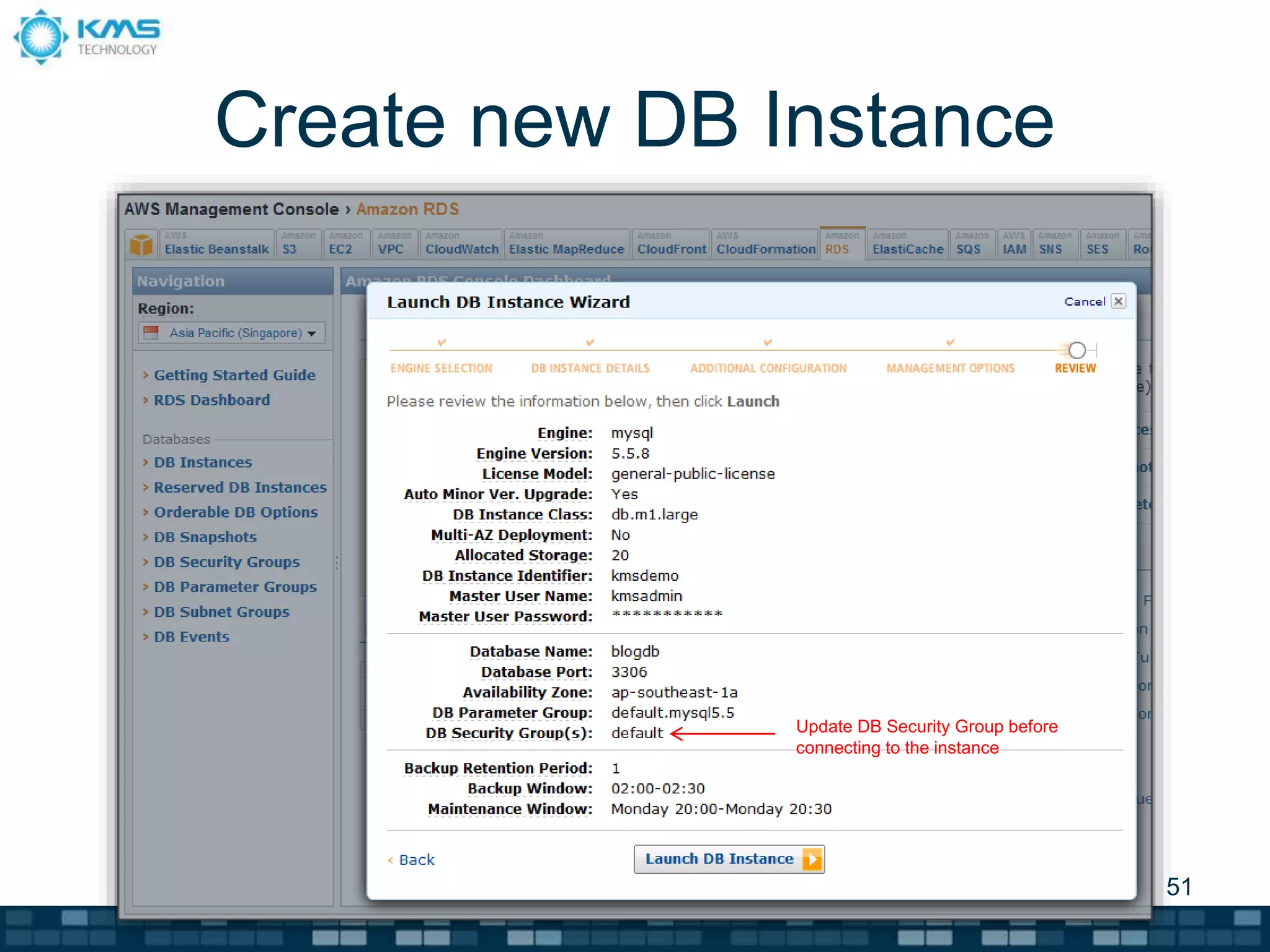

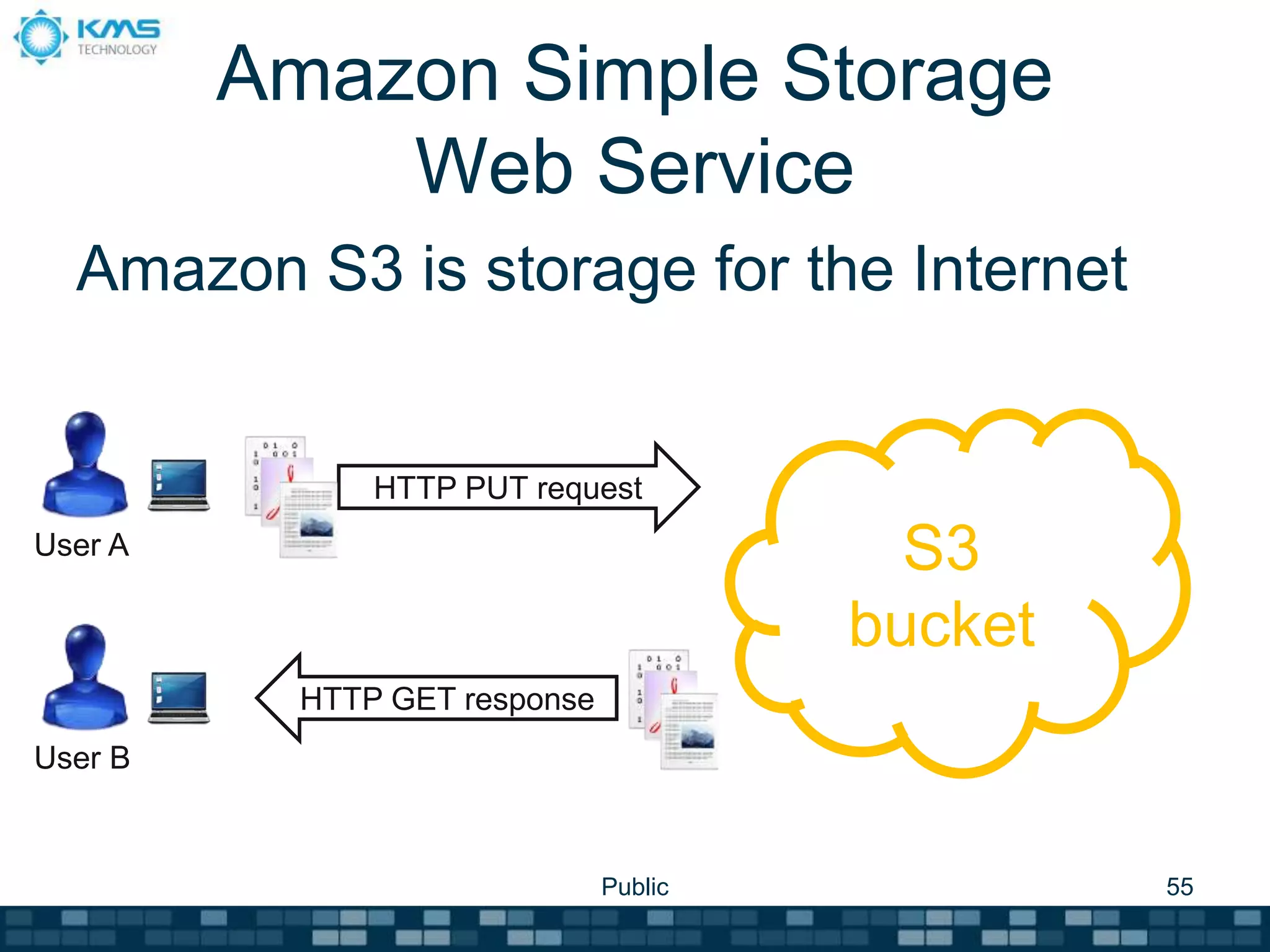

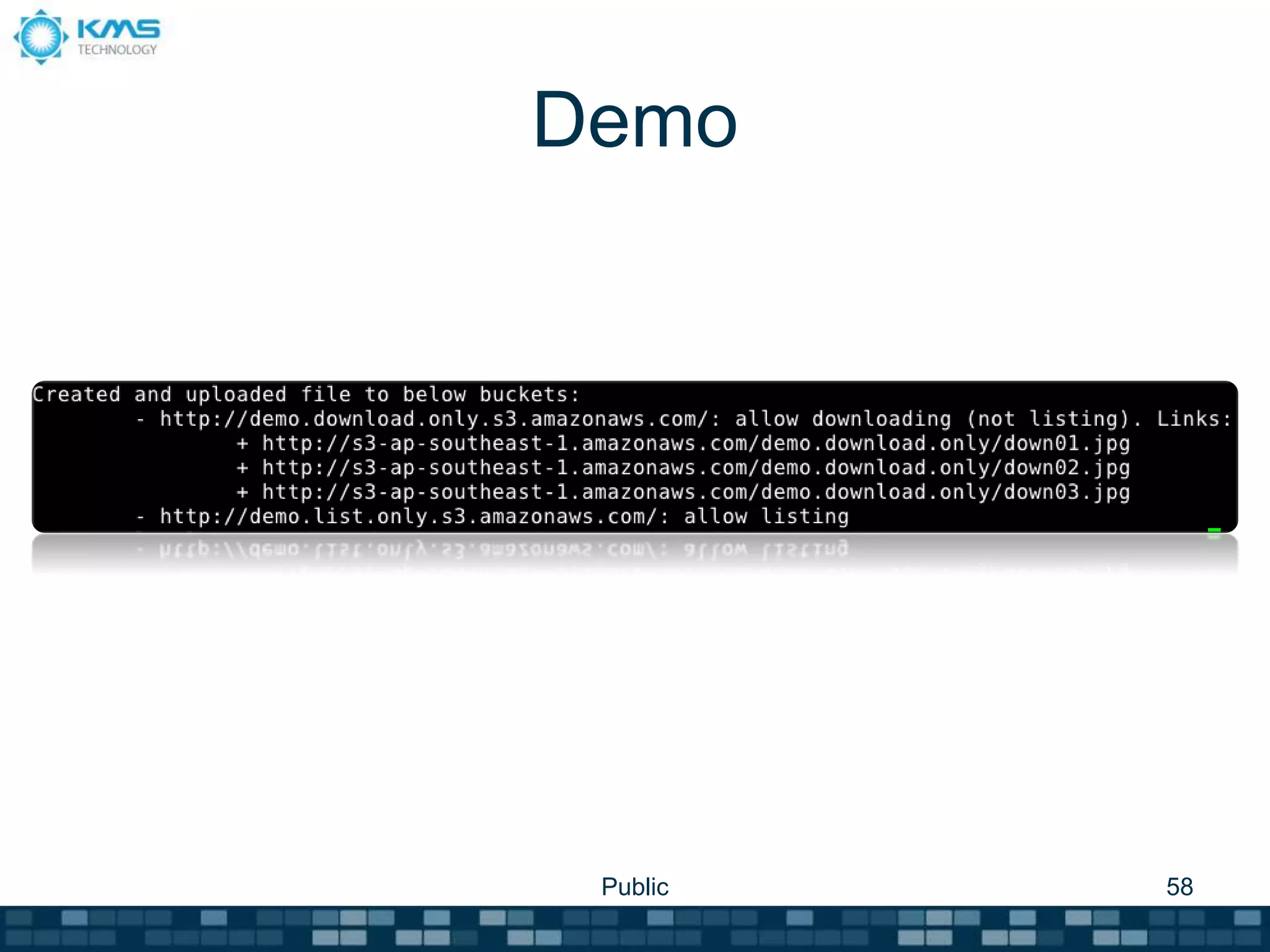

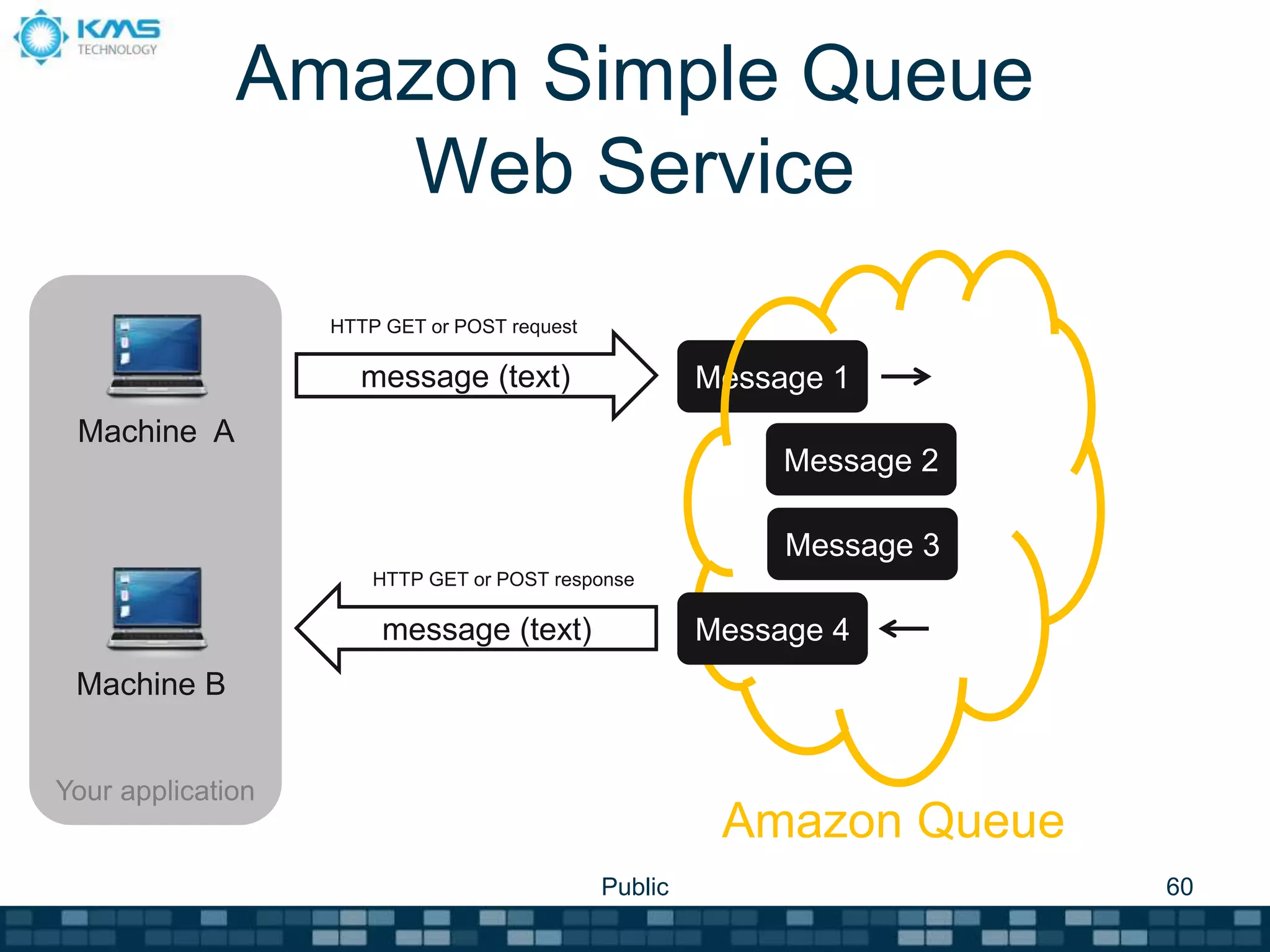

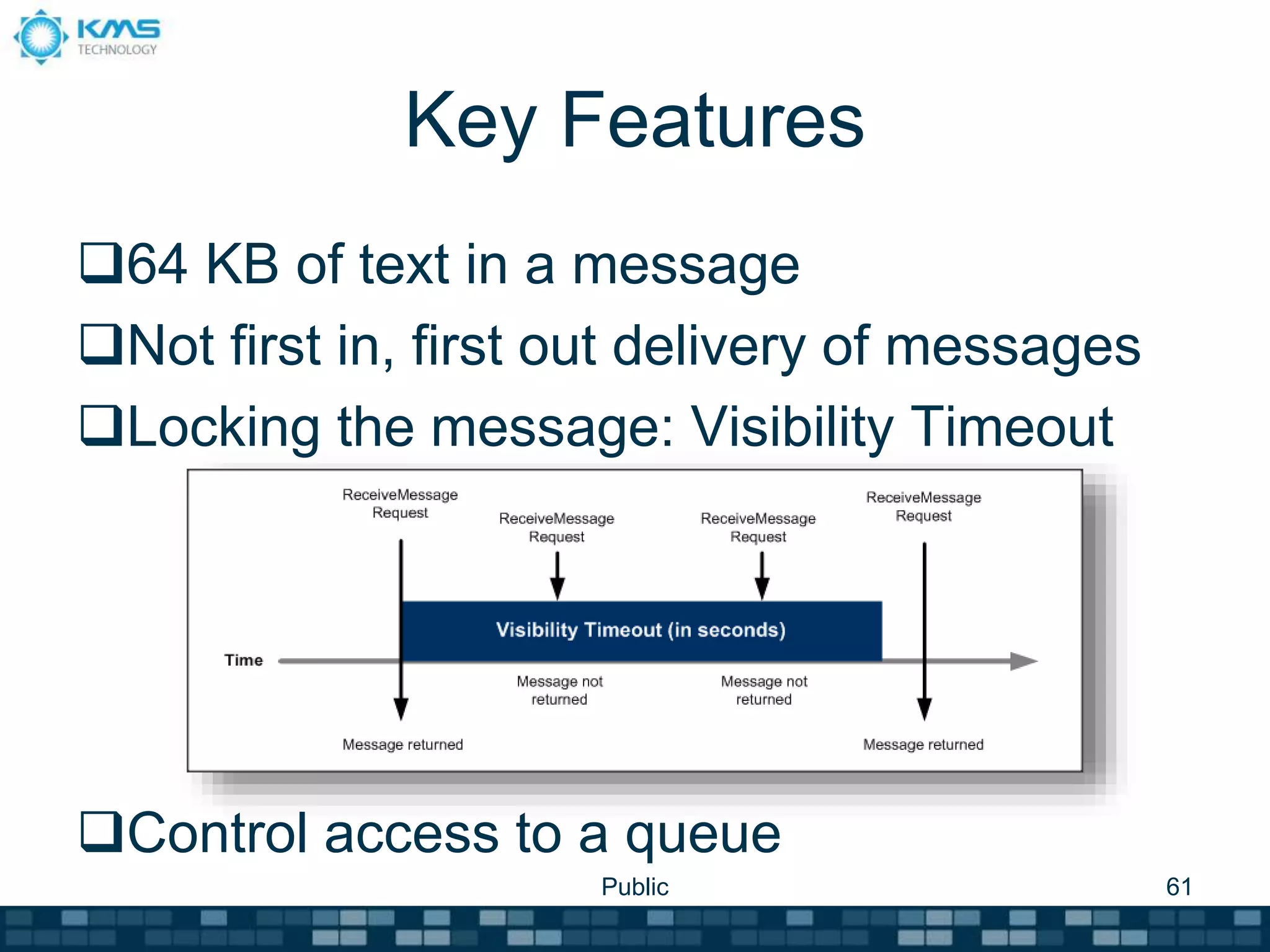

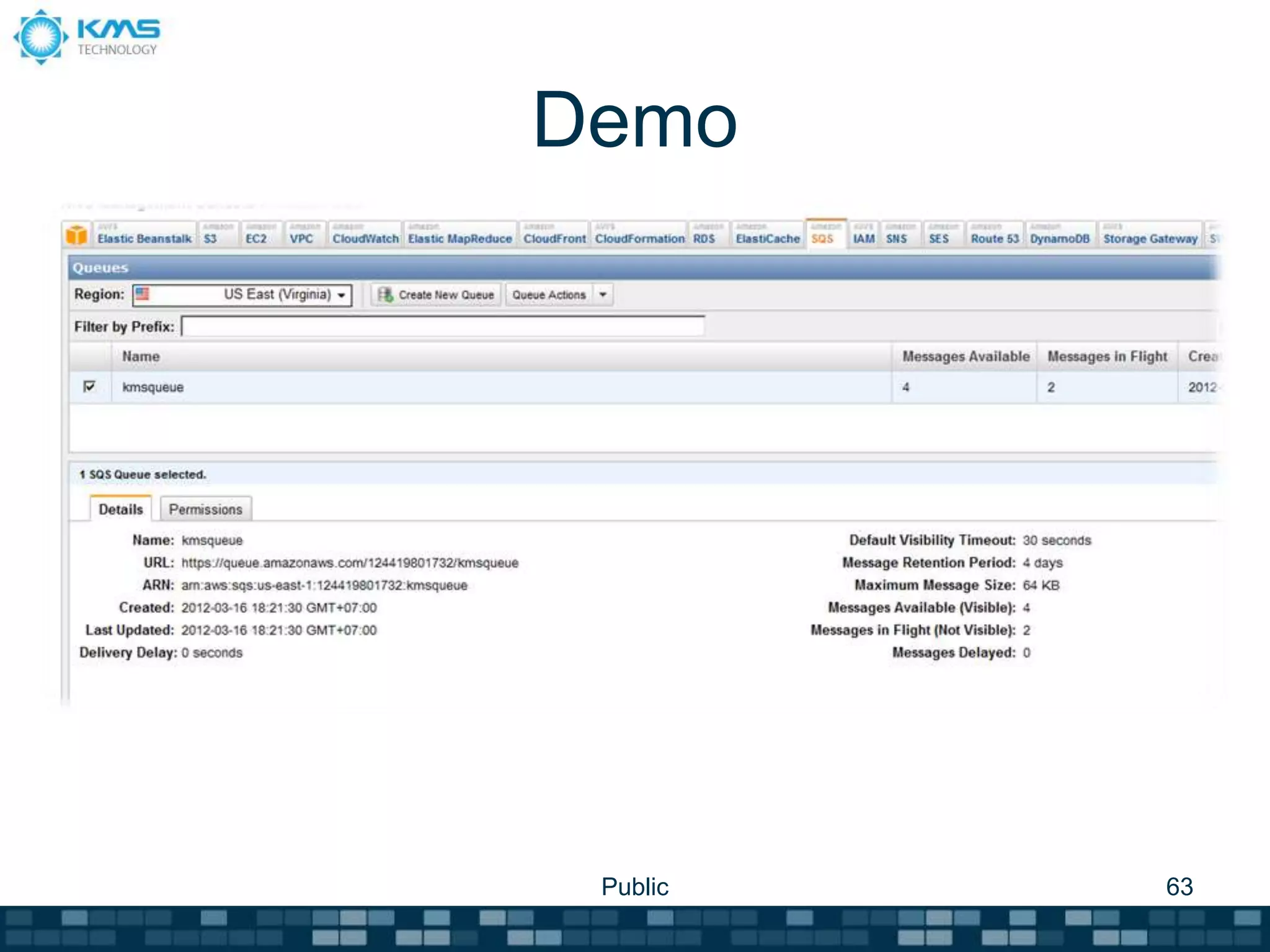

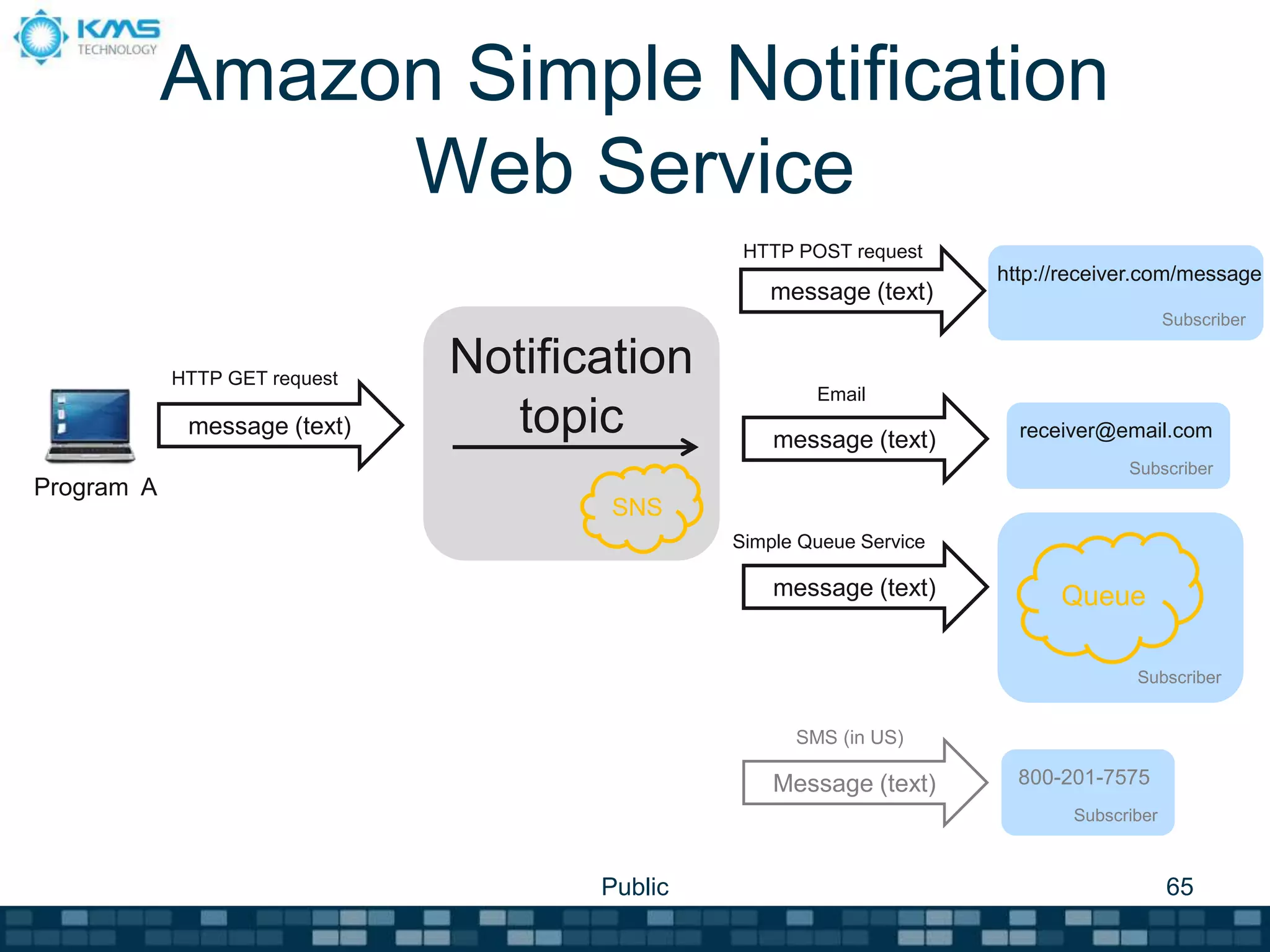

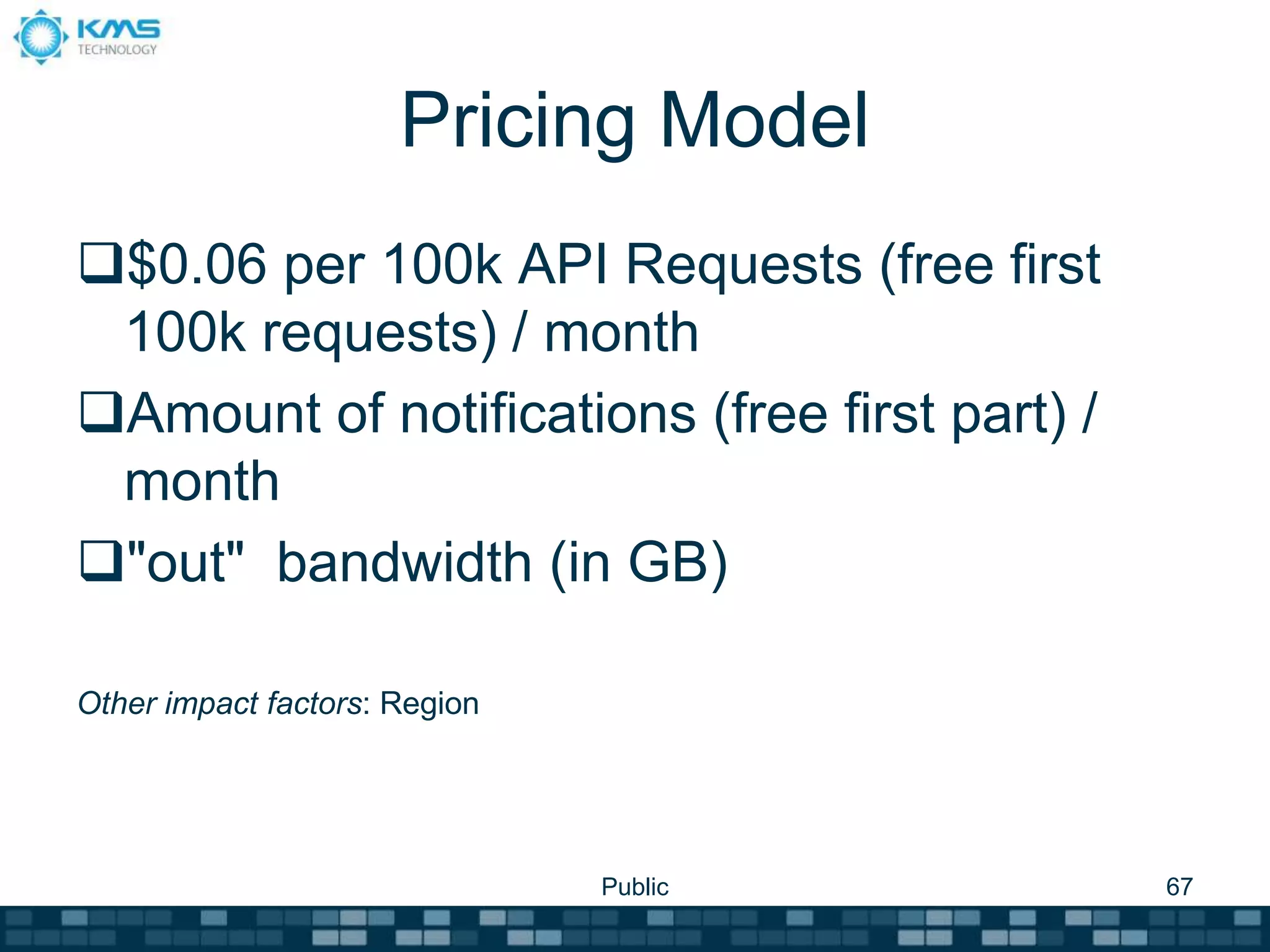

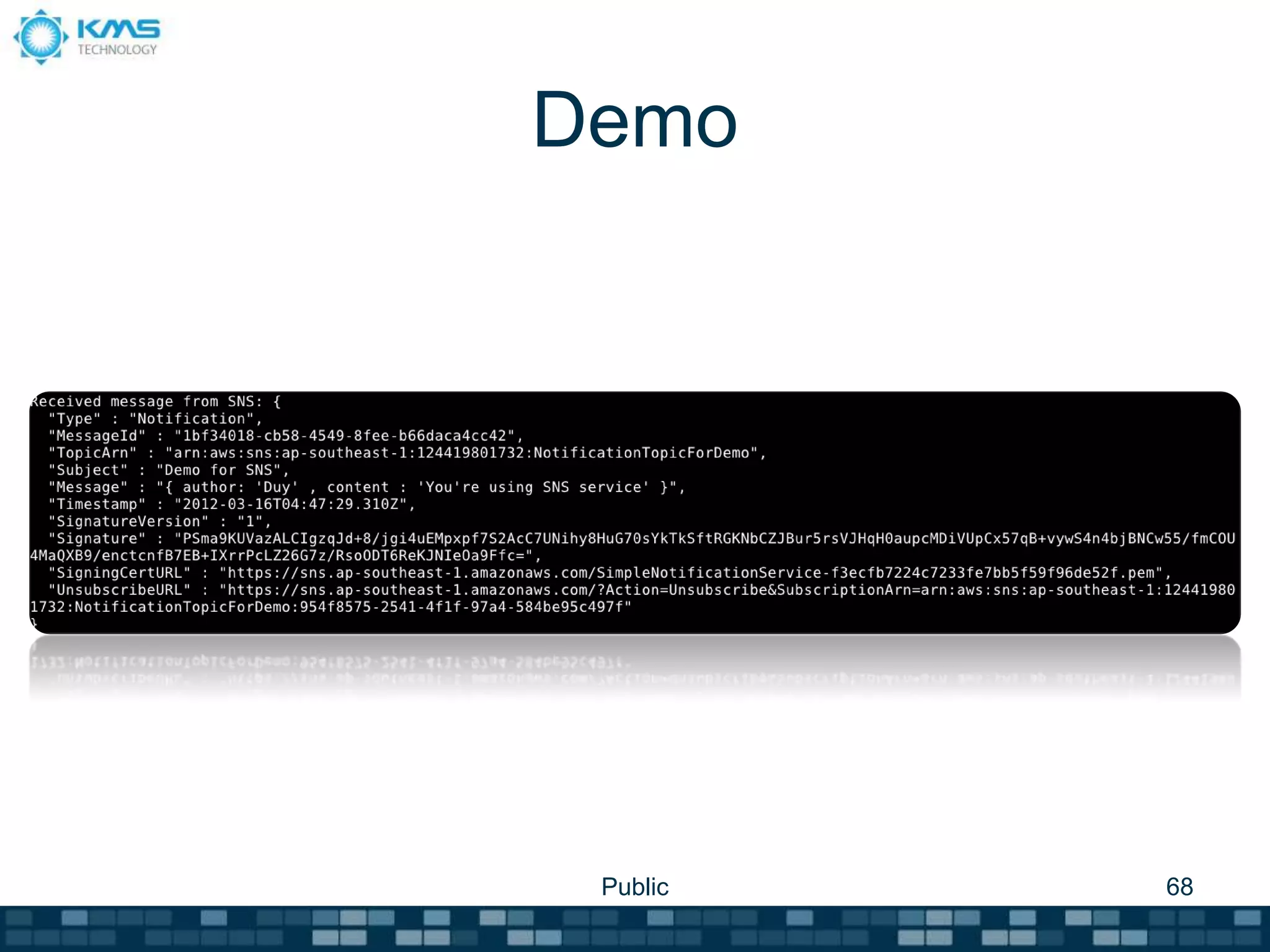

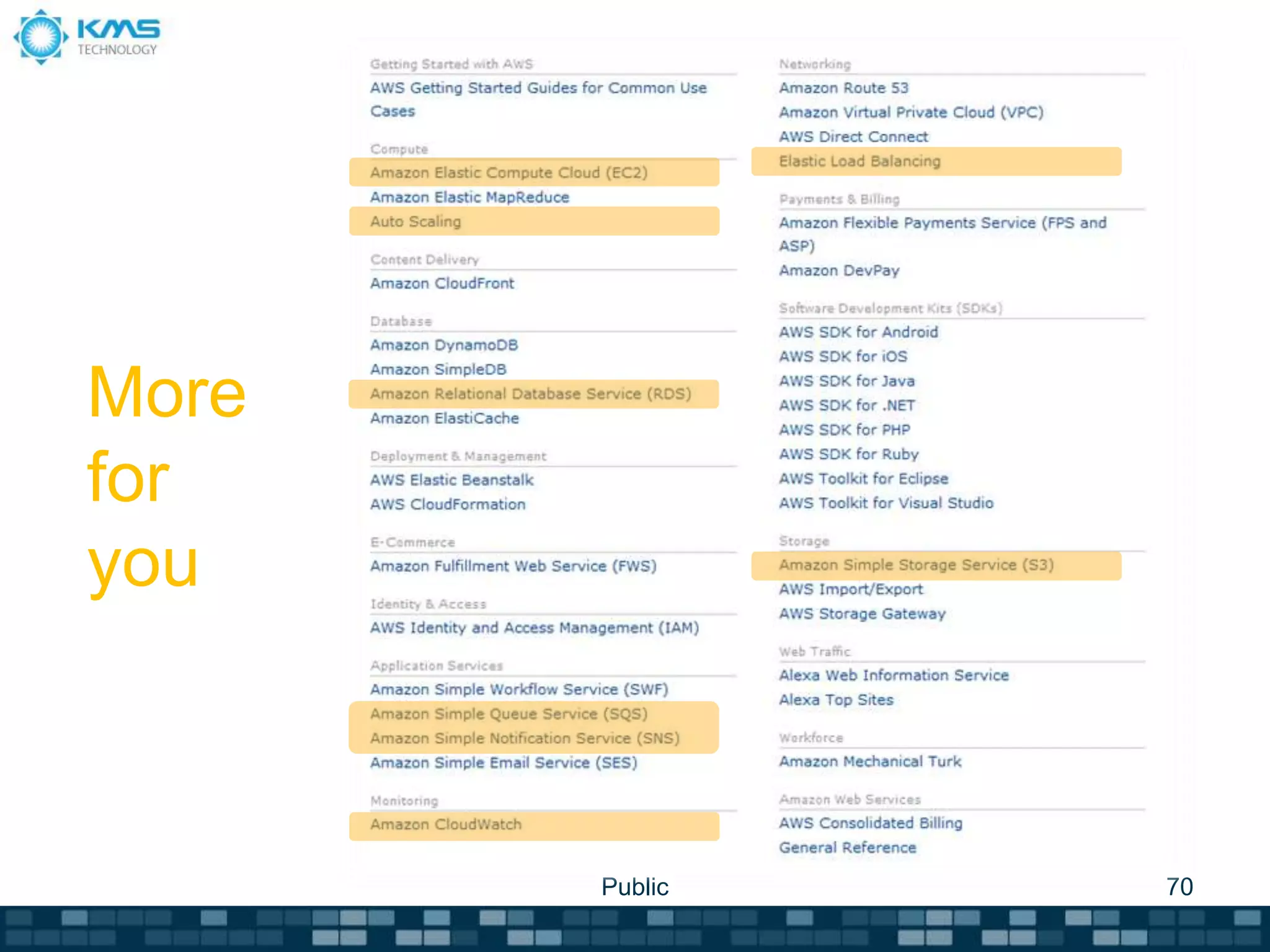

This document provides an overview of Amazon Web Services (AWS). It opens with an agenda listing Amazon Cloud Platform, Amazon Compute Services, and Amazon Services. It then covers individual AWS services in detail, including EC2, S3, RDS, SQS, SNS, CloudWatch, ELB, and Auto Scaling, describing the key features and pricing model of each and providing demos. The document aims to show users how to get started with AWS and make use of its cloud-based services.