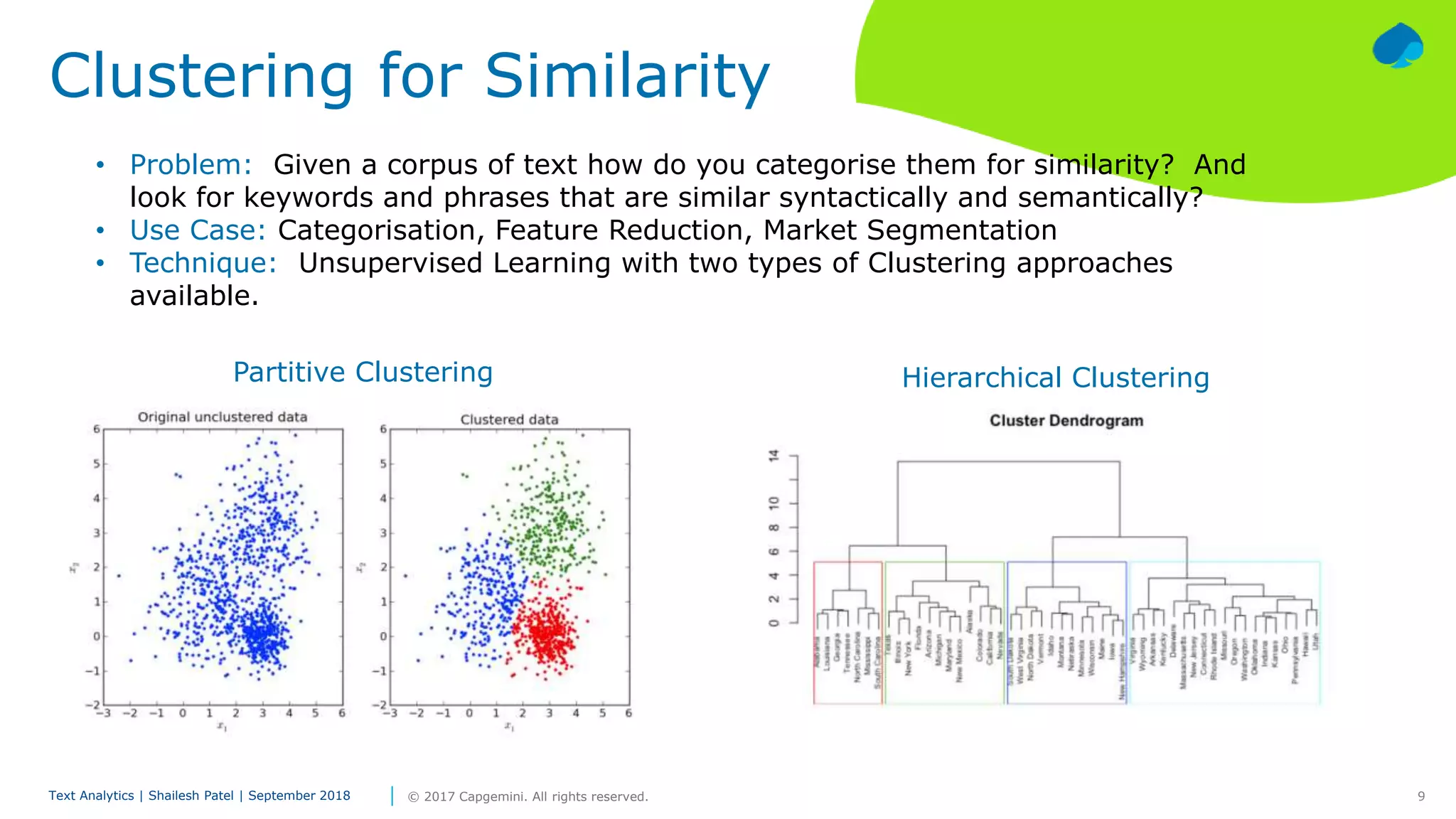

The document discusses text analytics and the approaches used for tasks such as text classification, clustering, topic modeling, semantic analysis, sentiment analysis, and text summarization. It notes that data production in 2020 is projected to be 44 times greater than in 2009, and that unstructured data represents 70-90% of captured data. It provides a high-level overview of these text analytics techniques.