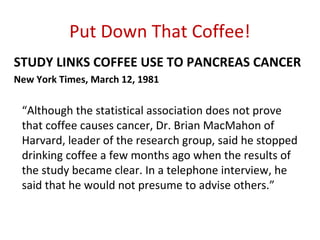

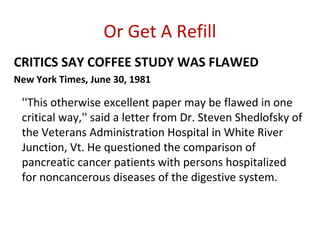

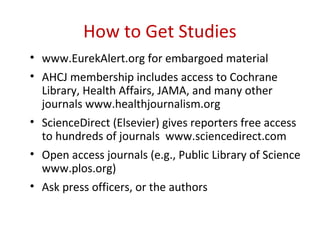

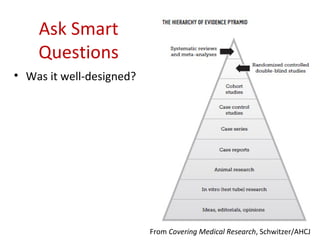

This document provides guidance on summarizing and reporting on medical studies accurately, without misrepresenting their results. It emphasizes reading the full study, asking the authors clarifying questions, considering limitations and biases, disclosing conflicts of interest, and consulting outside experts rather than relying only on the study's authors when evaluating results. The goal is to help readers make informed health decisions through coverage that reflects the evidence objectively and acknowledges uncertainty.