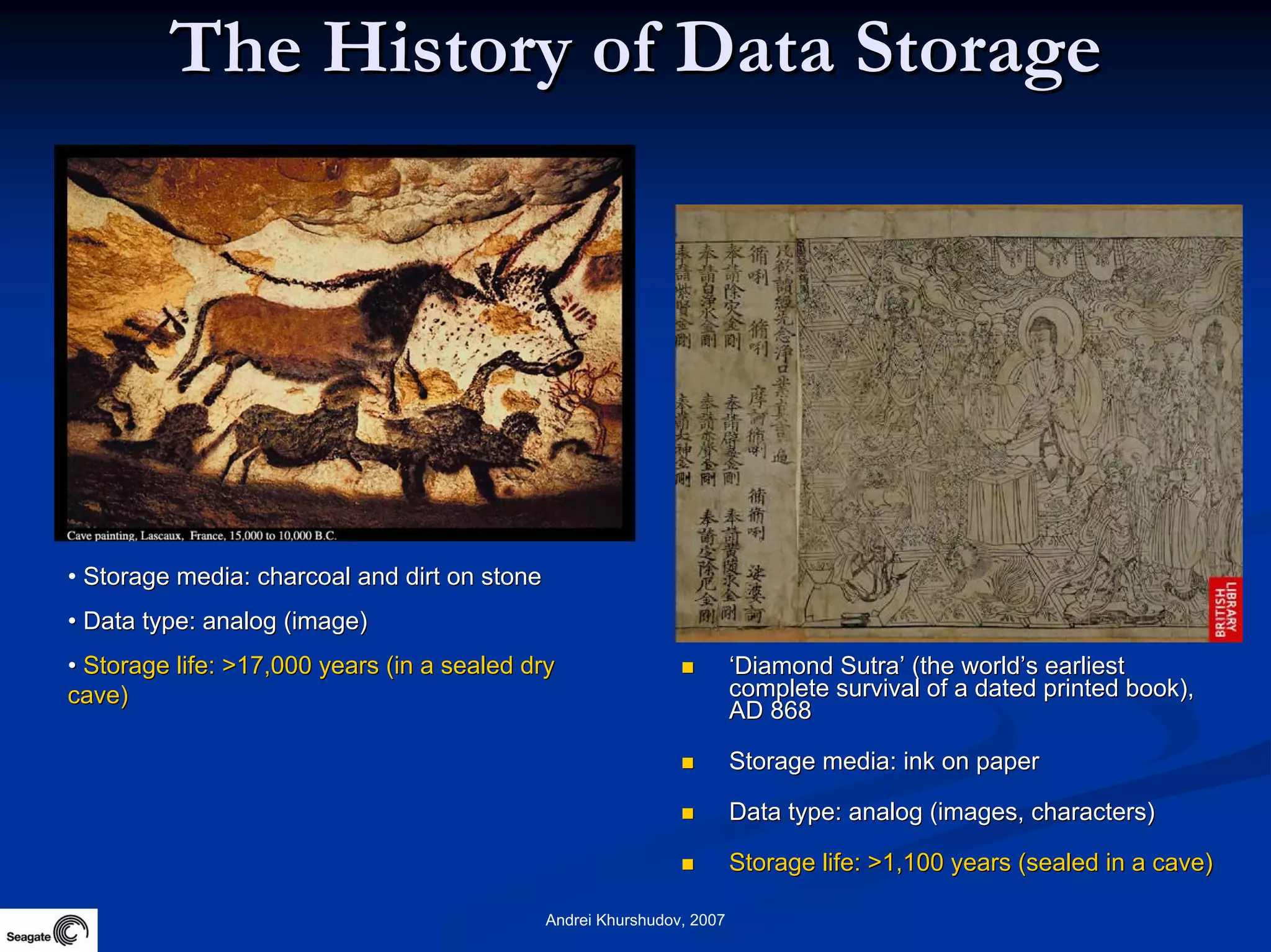

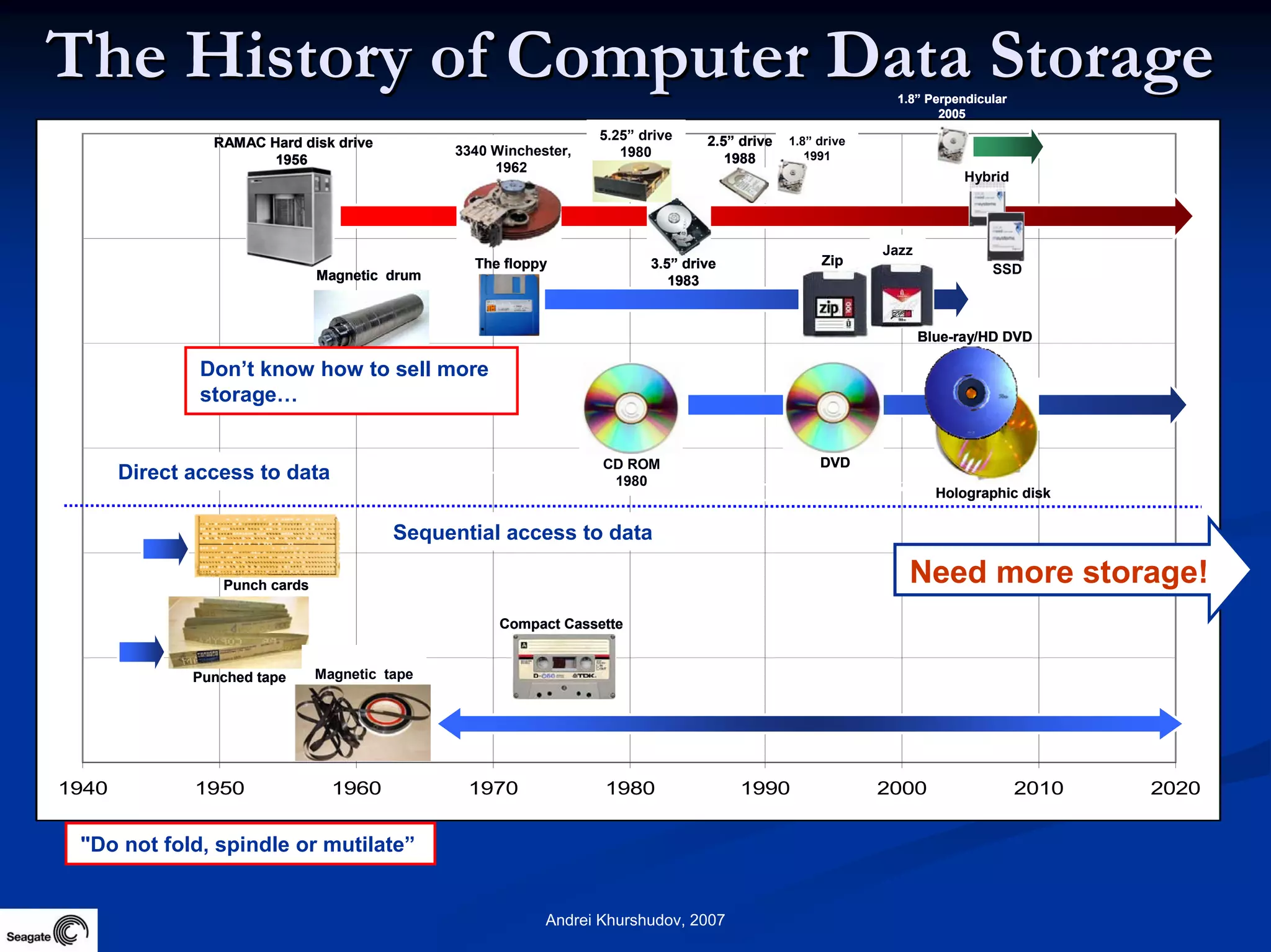

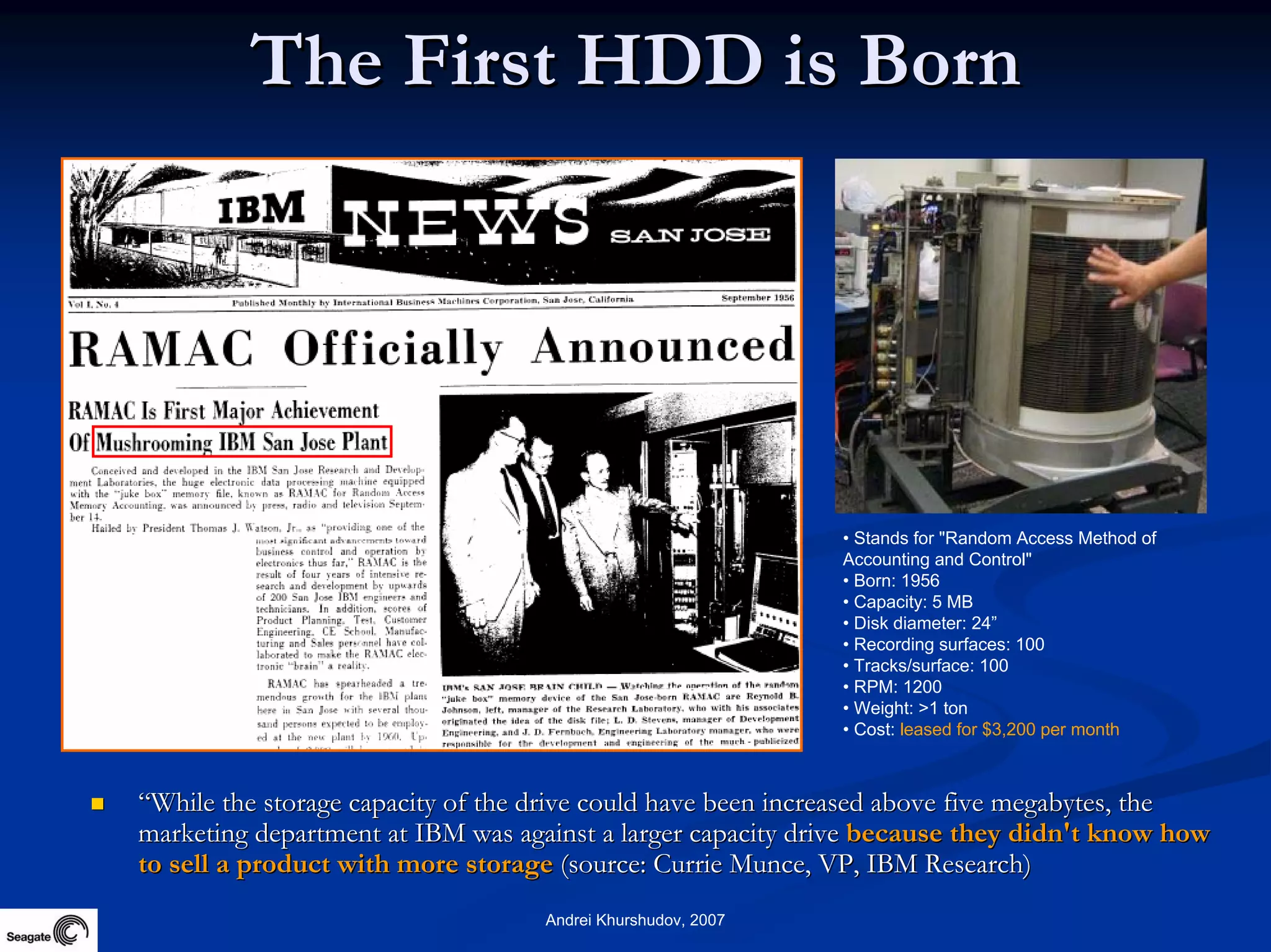

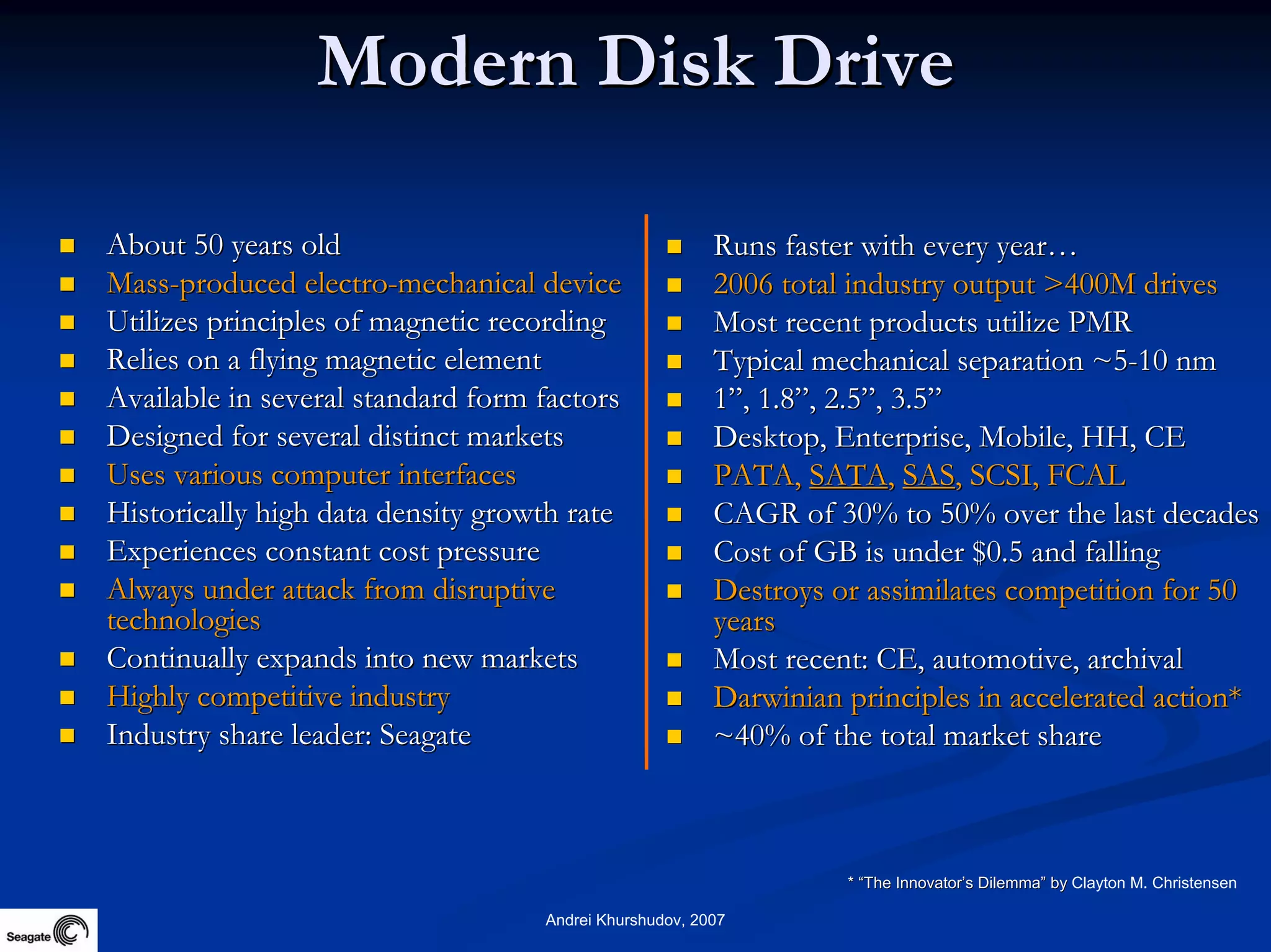

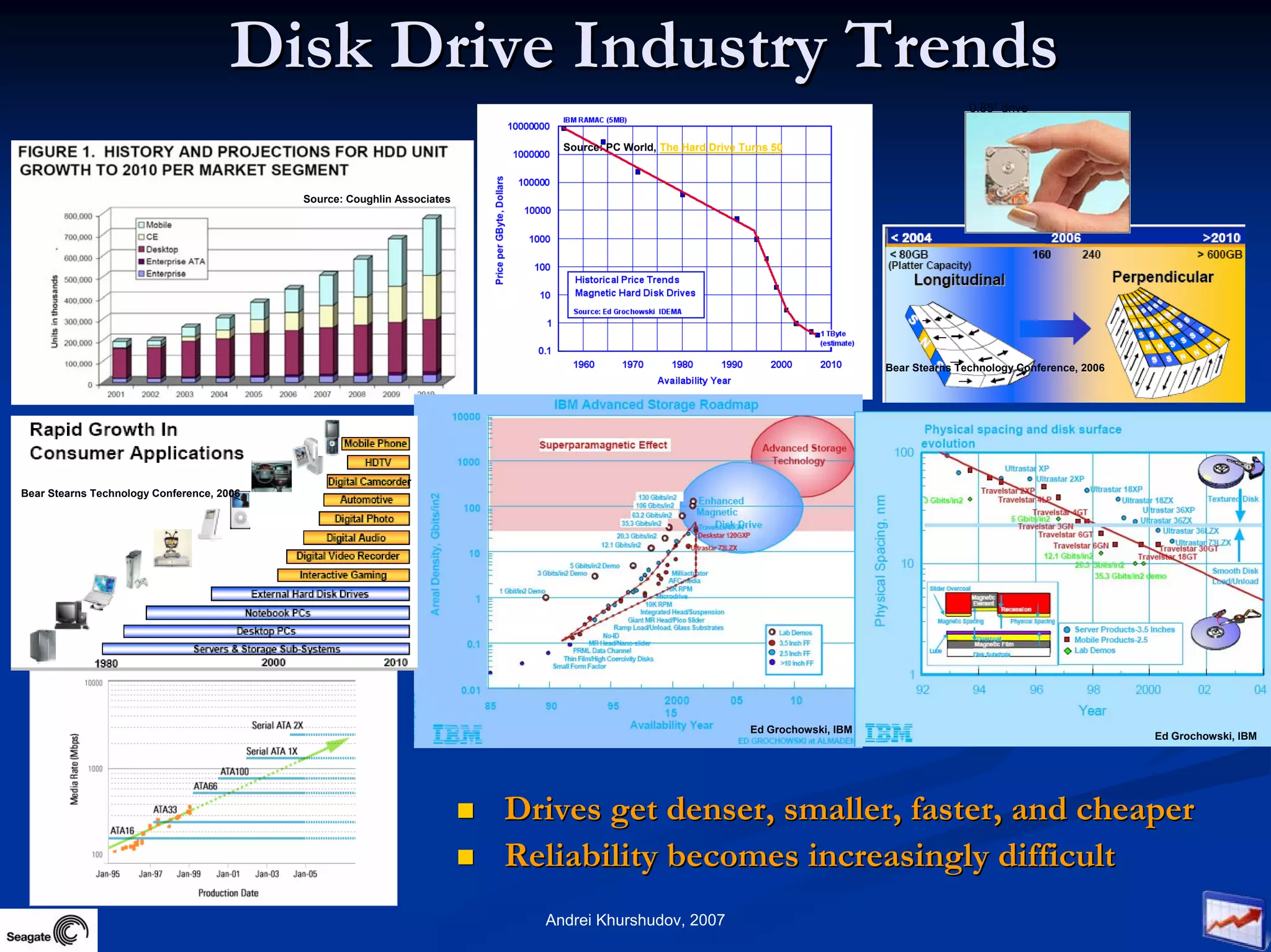

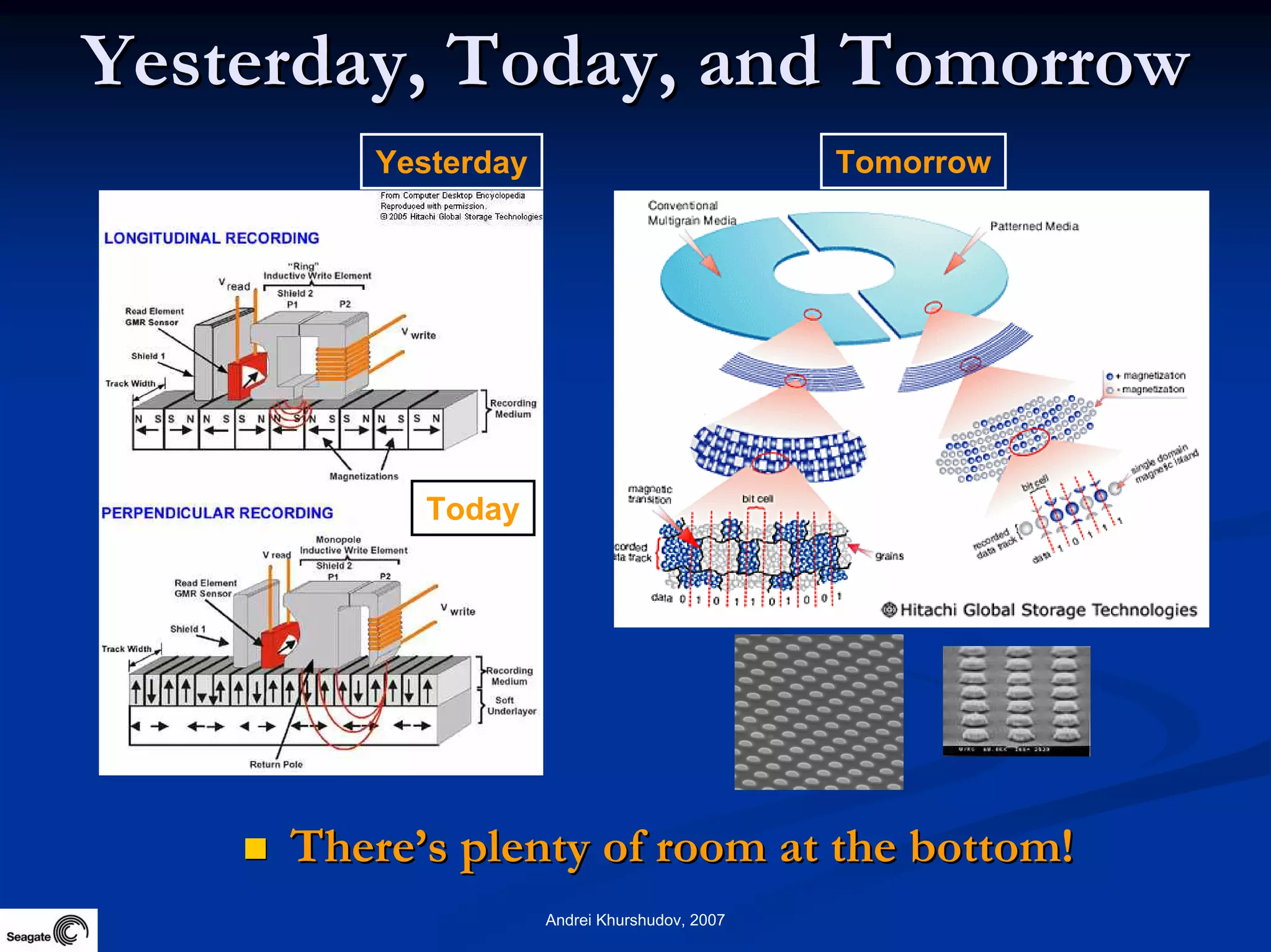

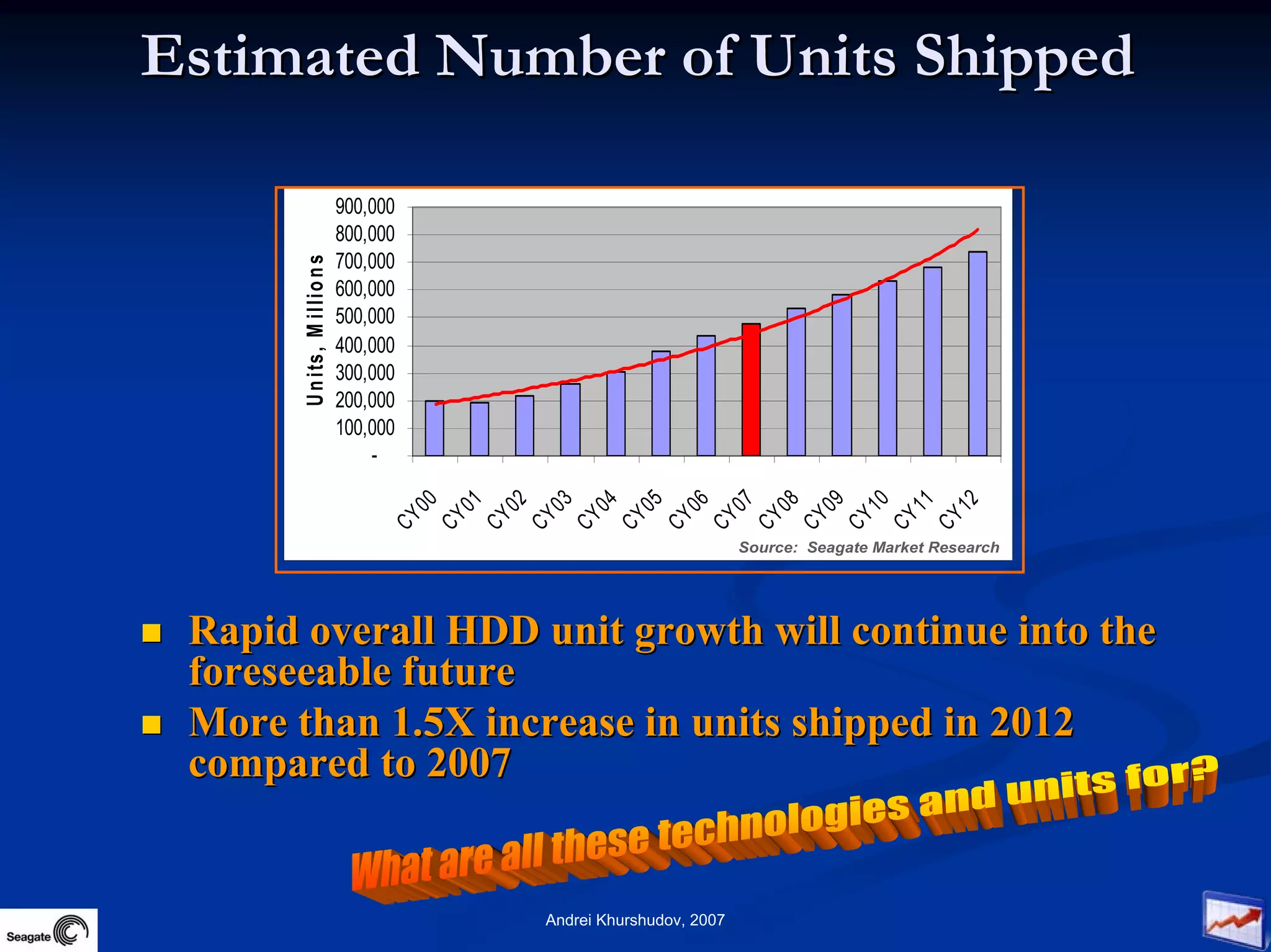

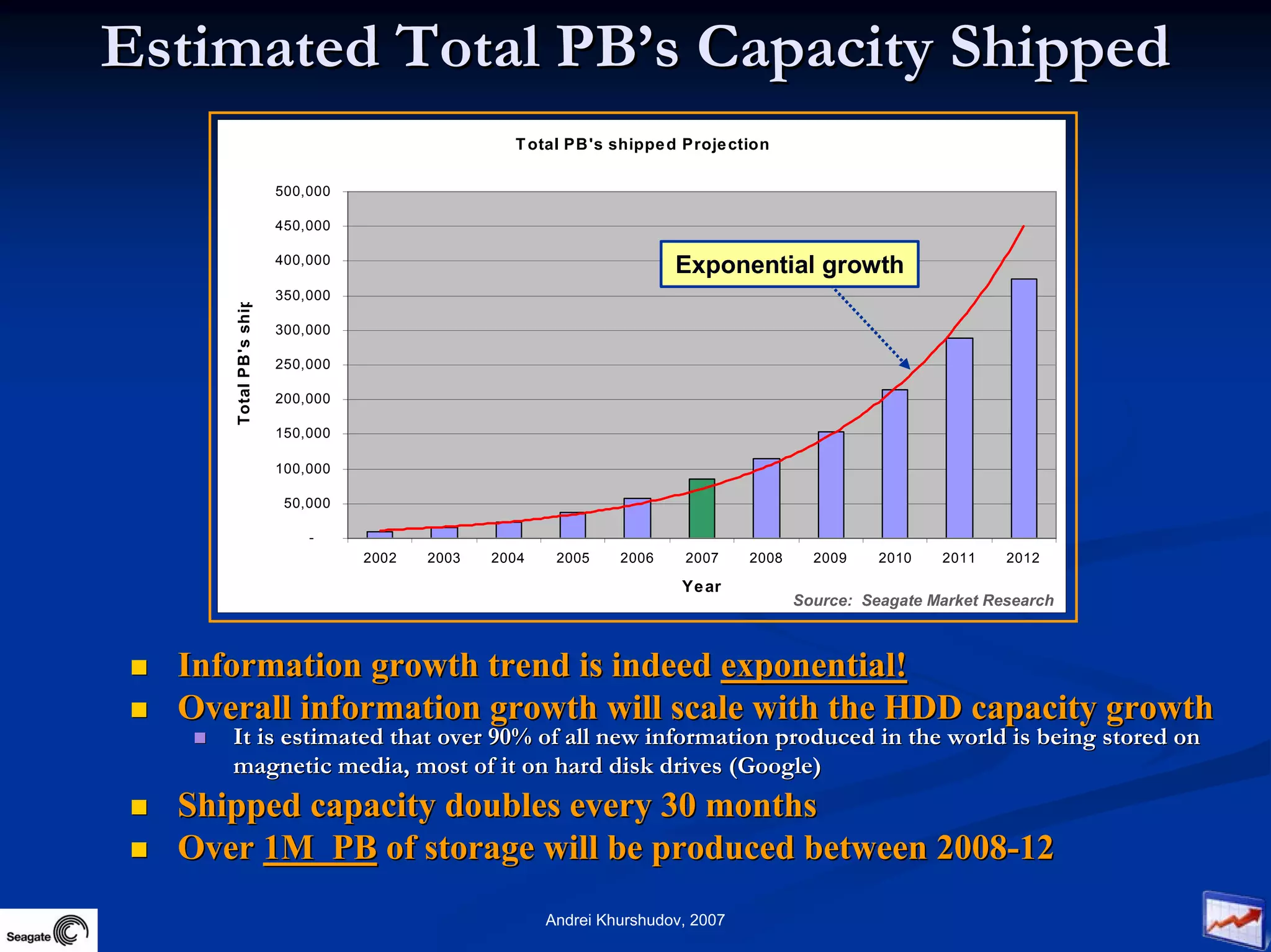

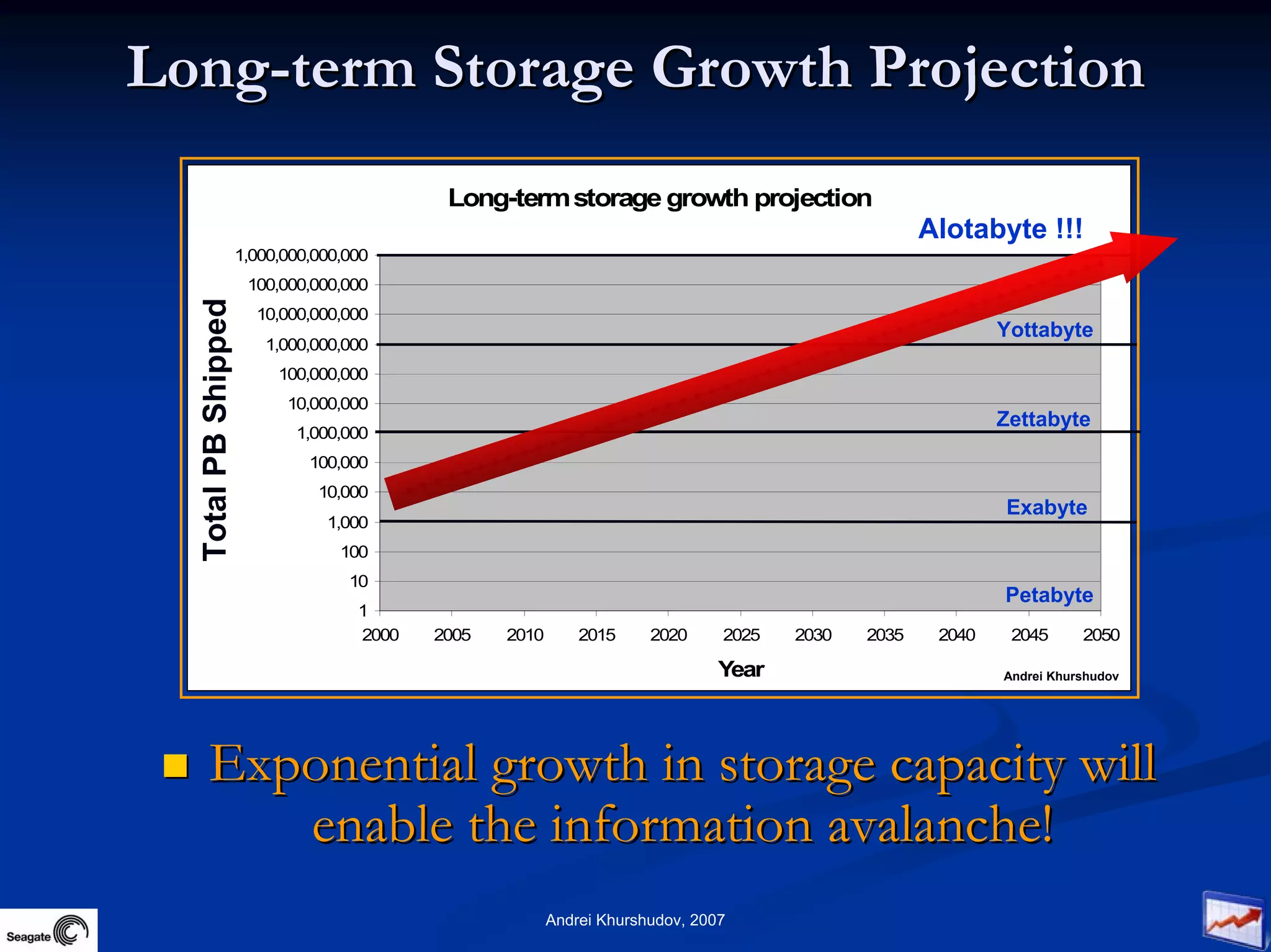

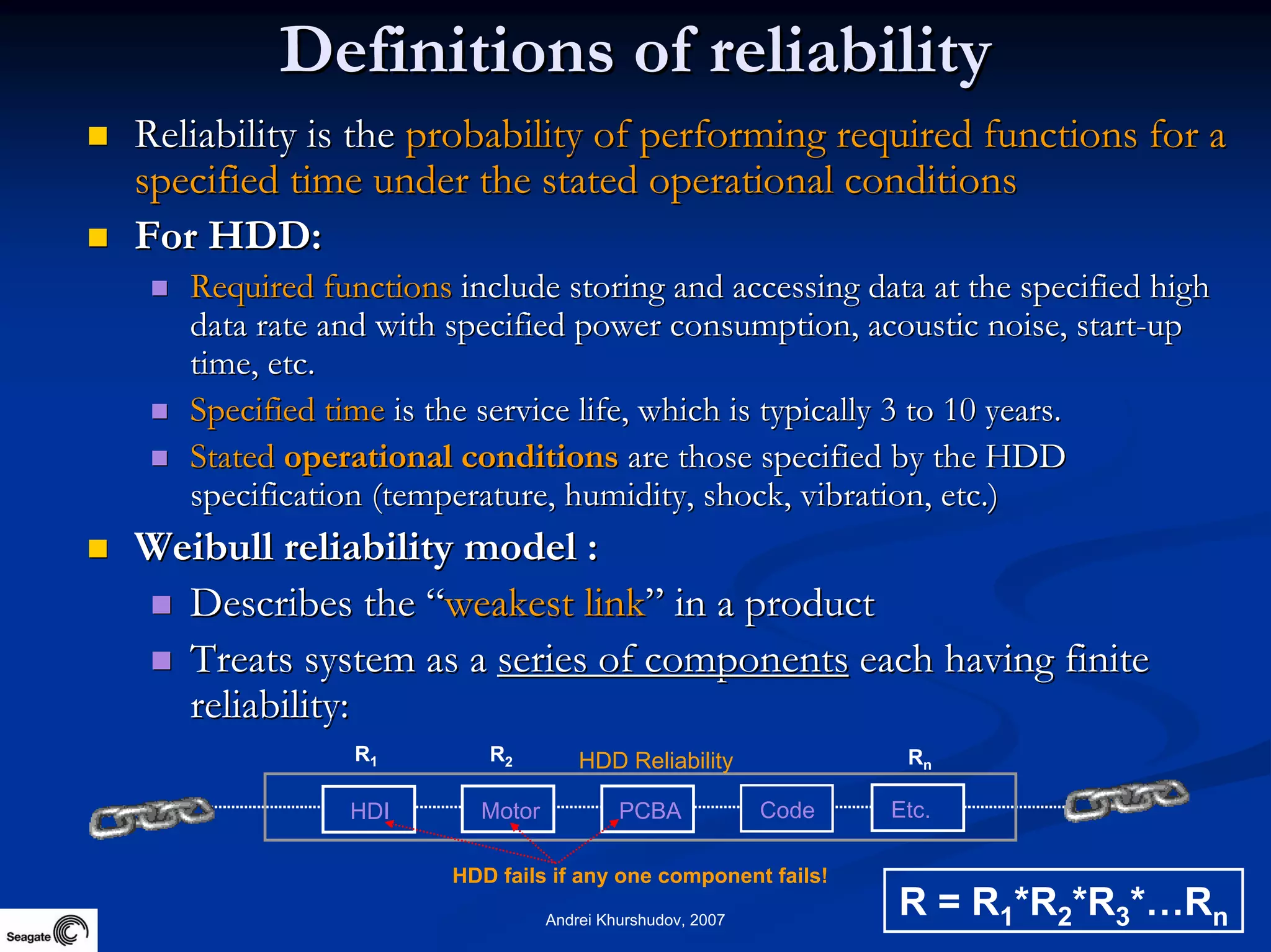

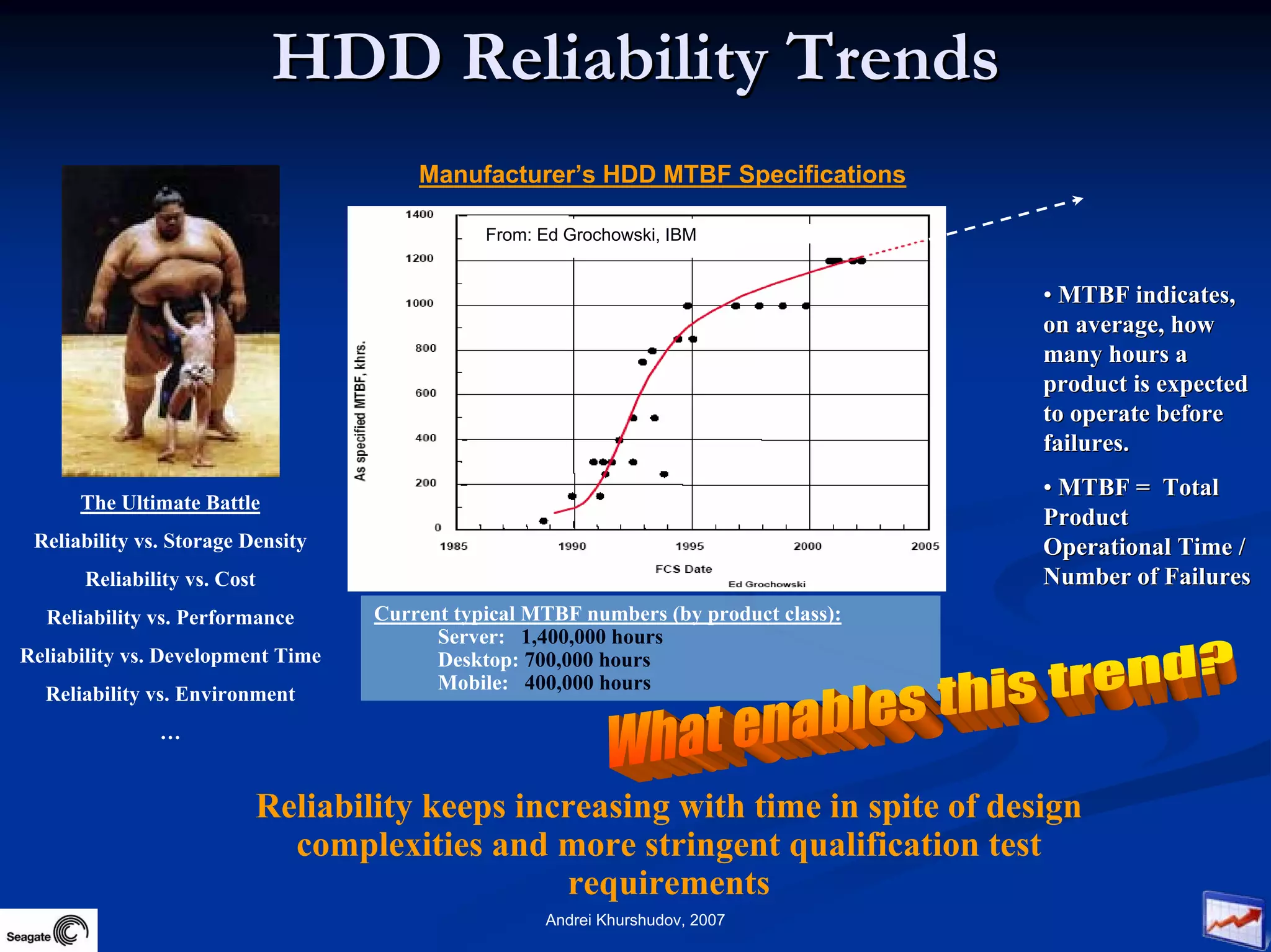

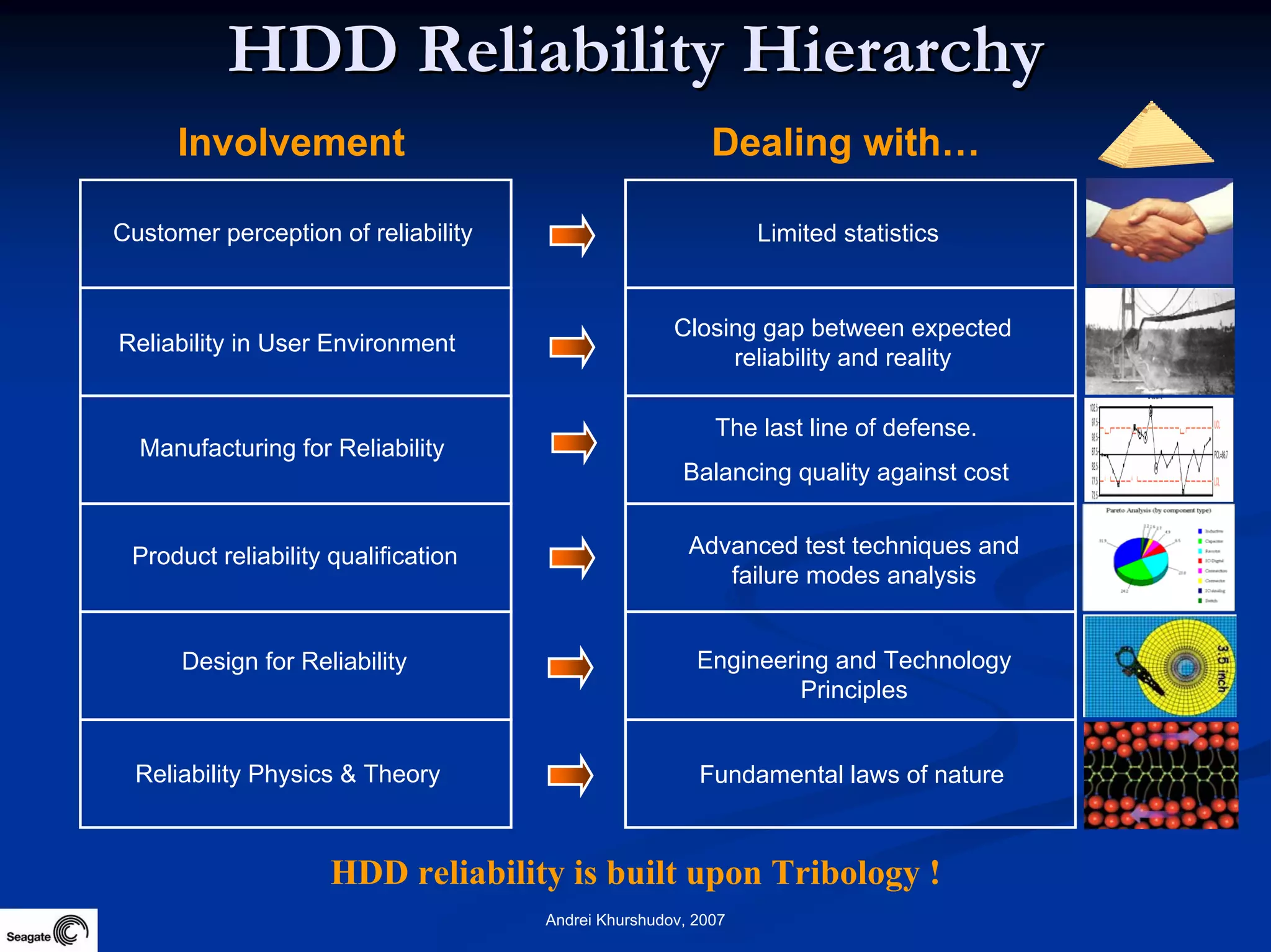

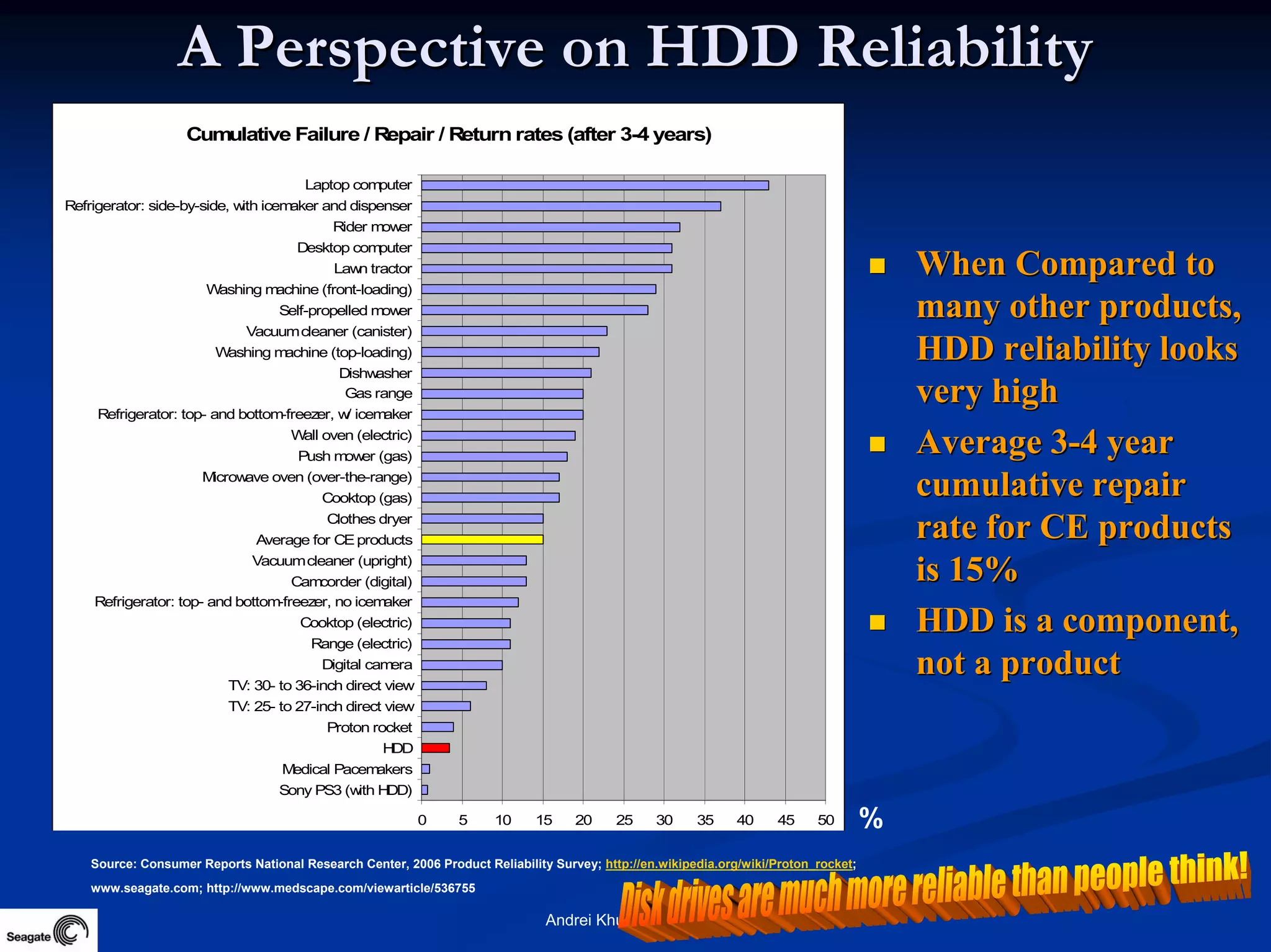

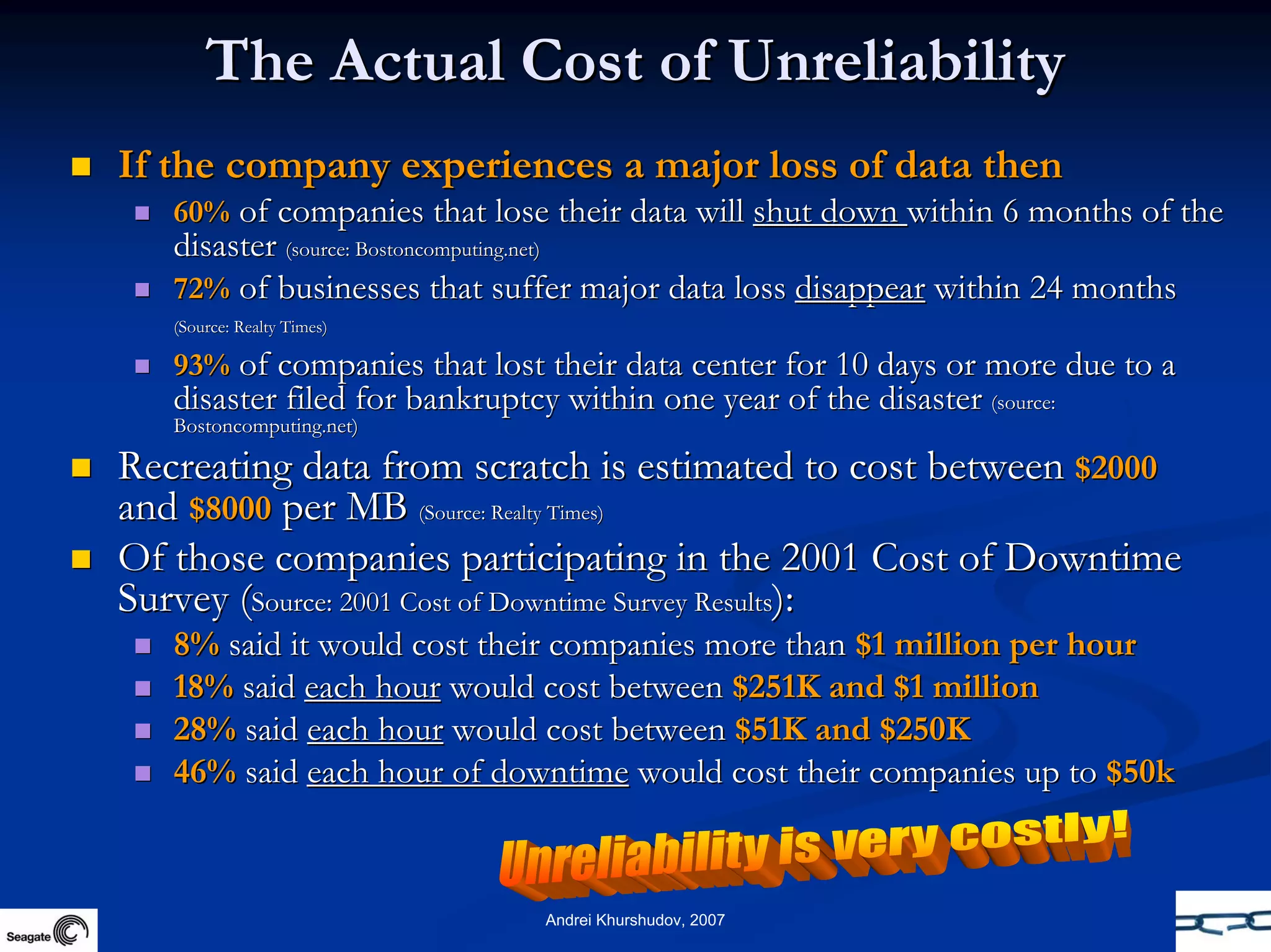

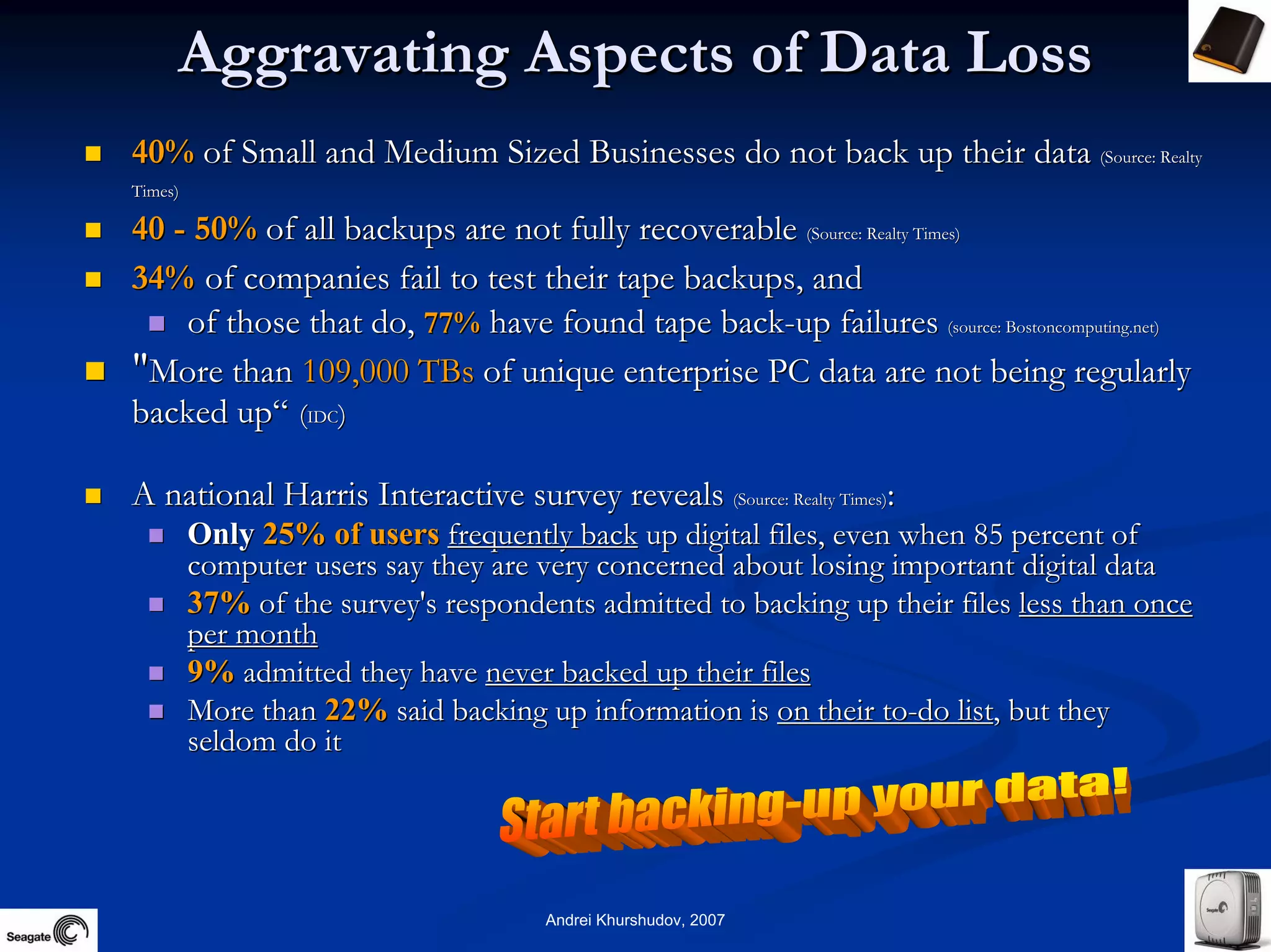

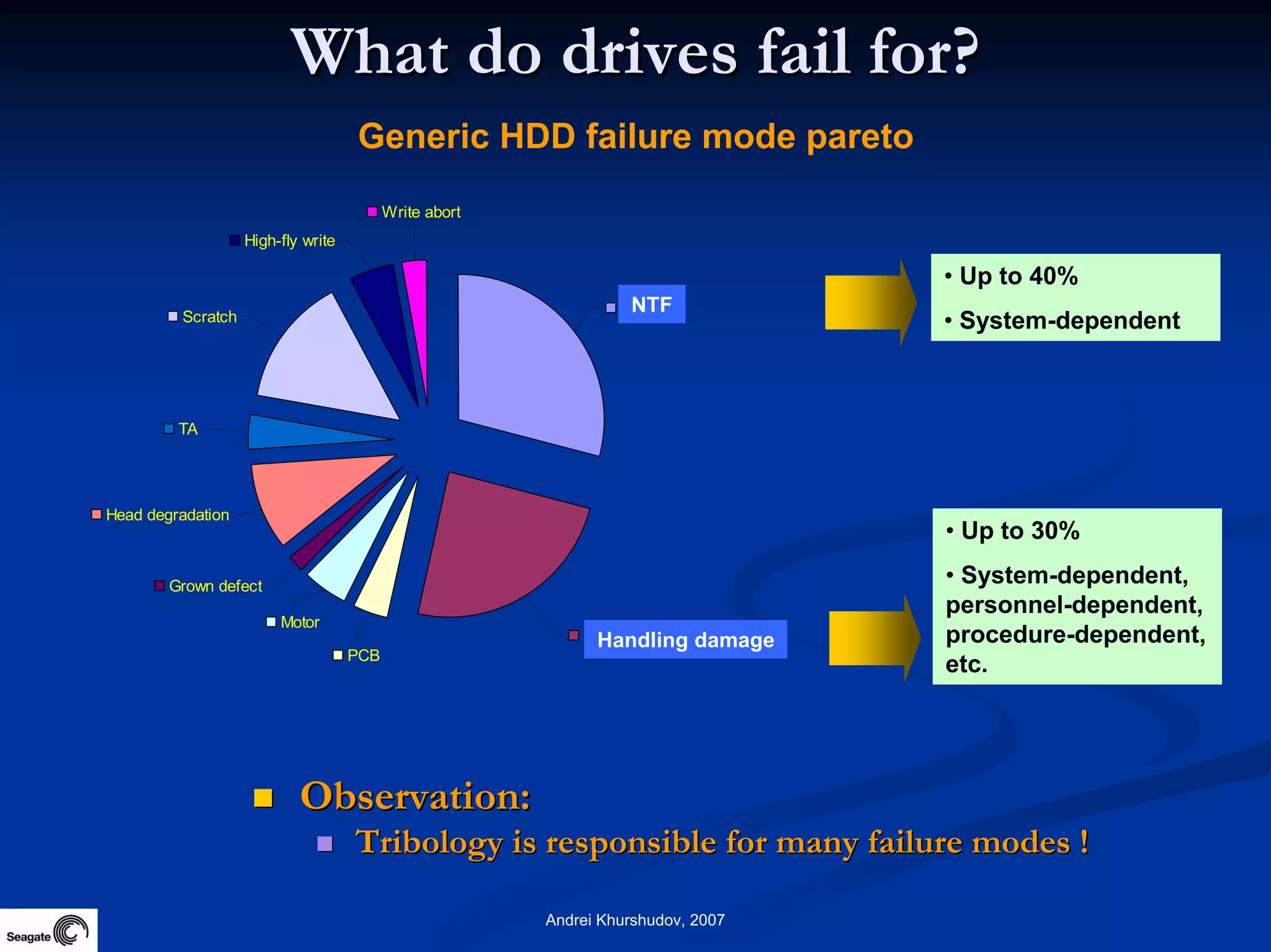

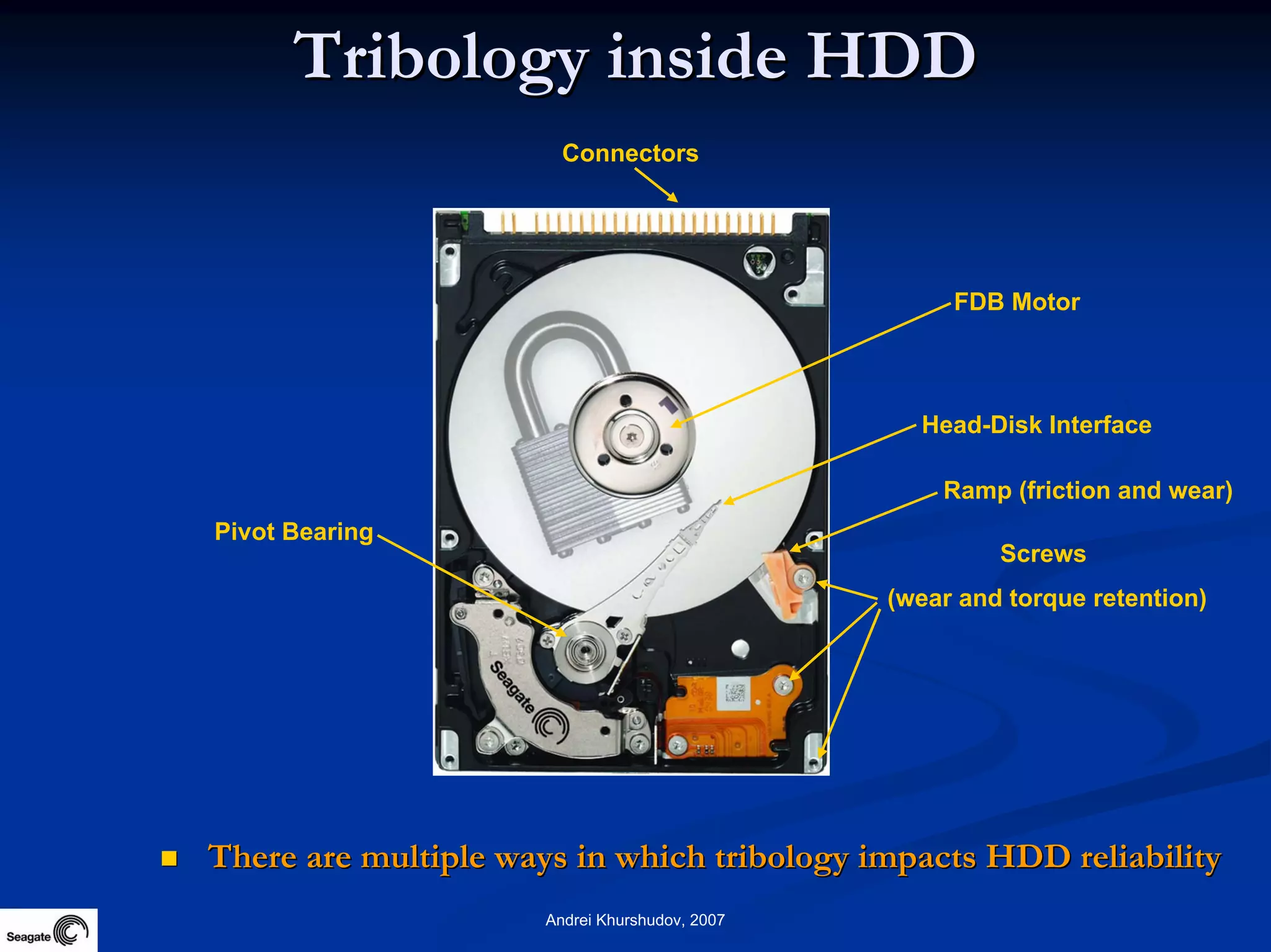

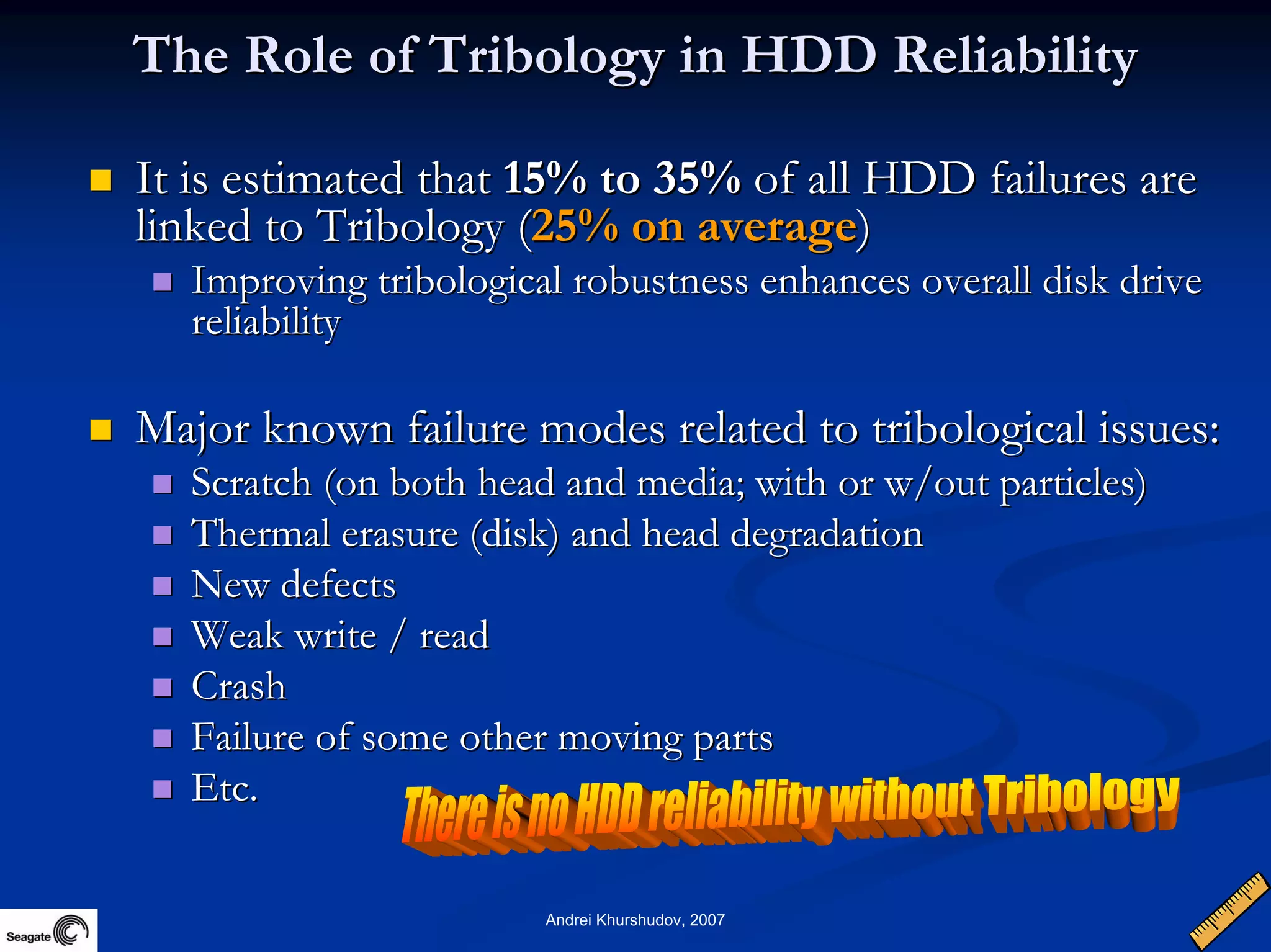

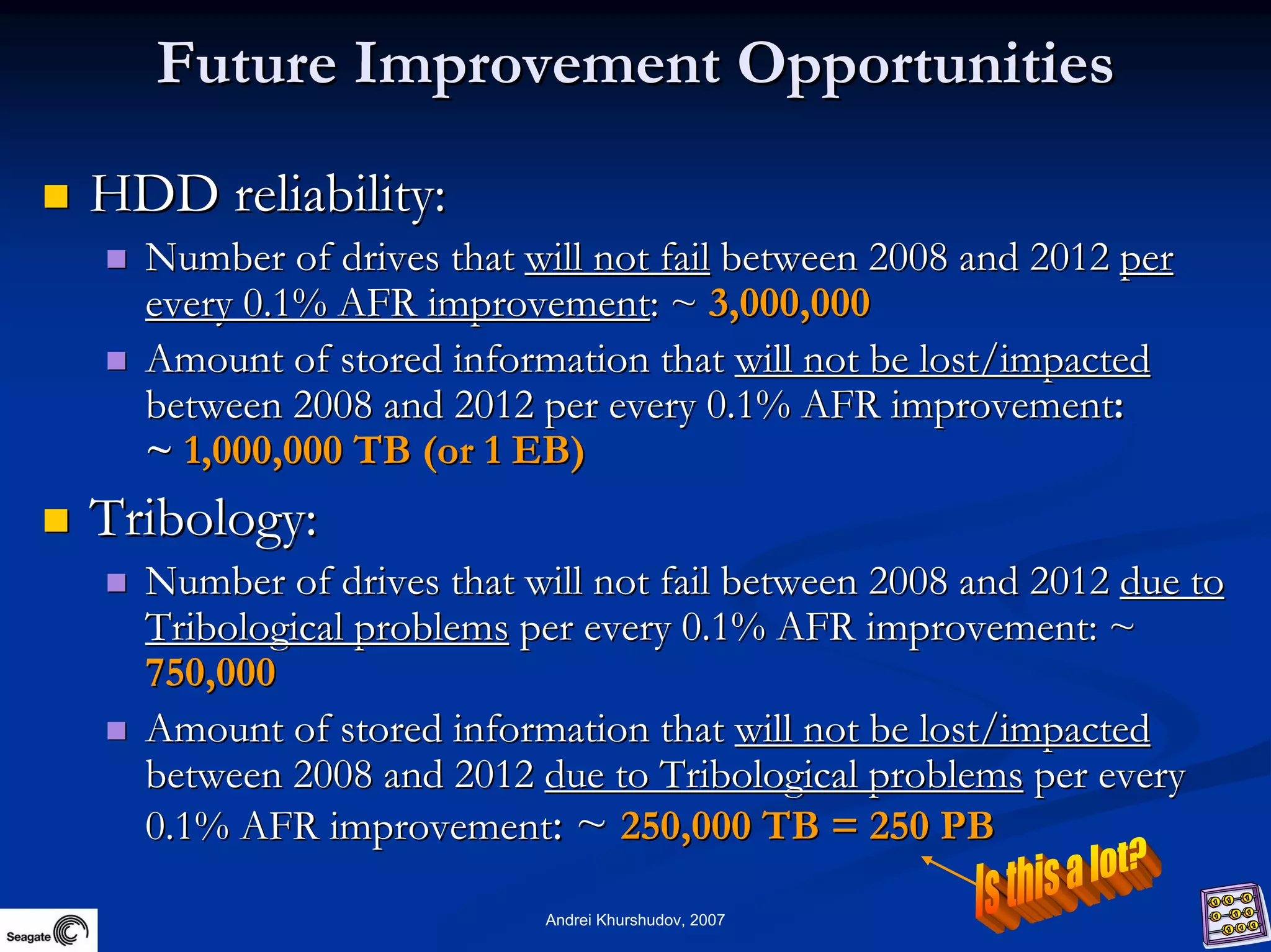

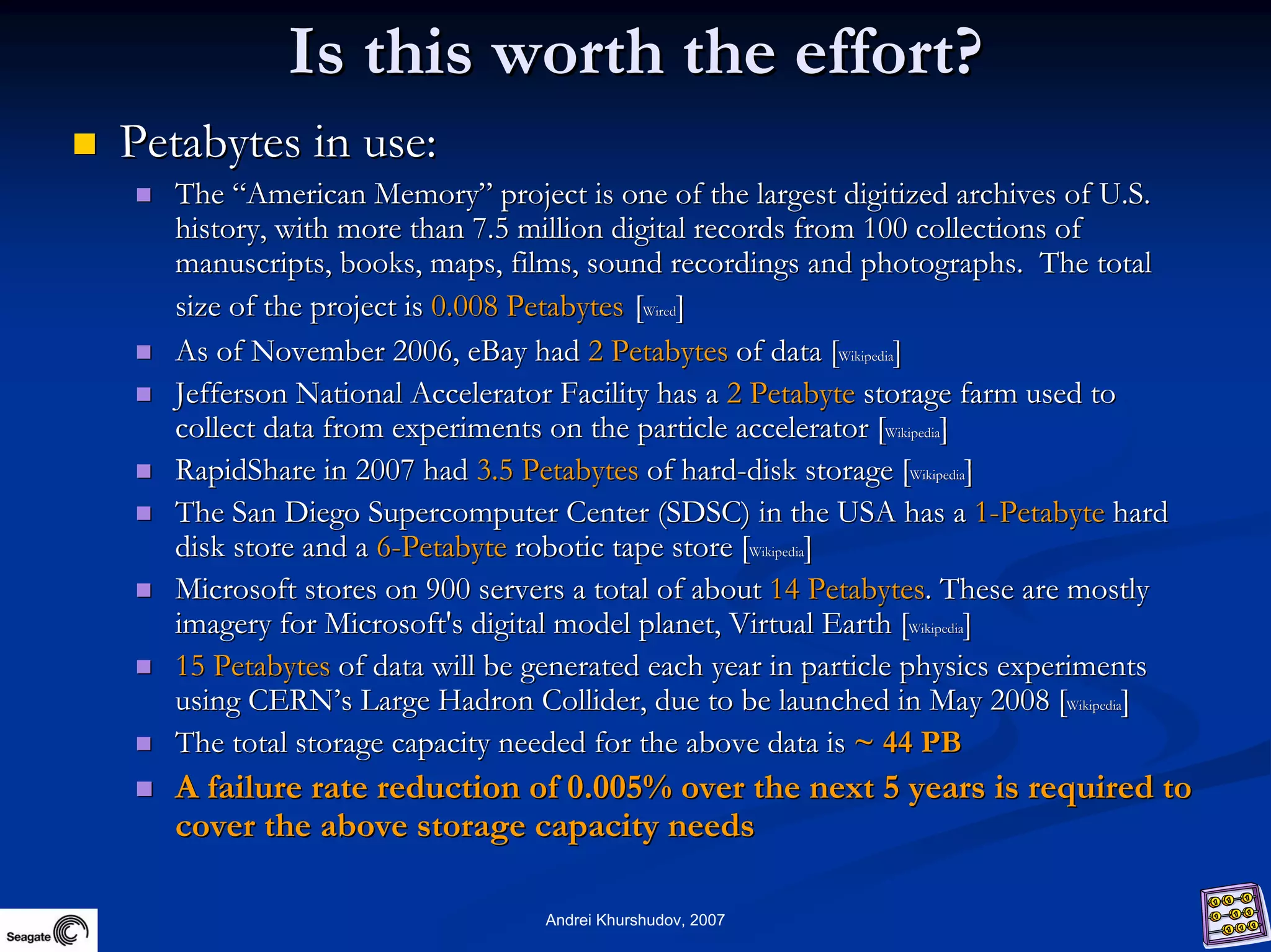

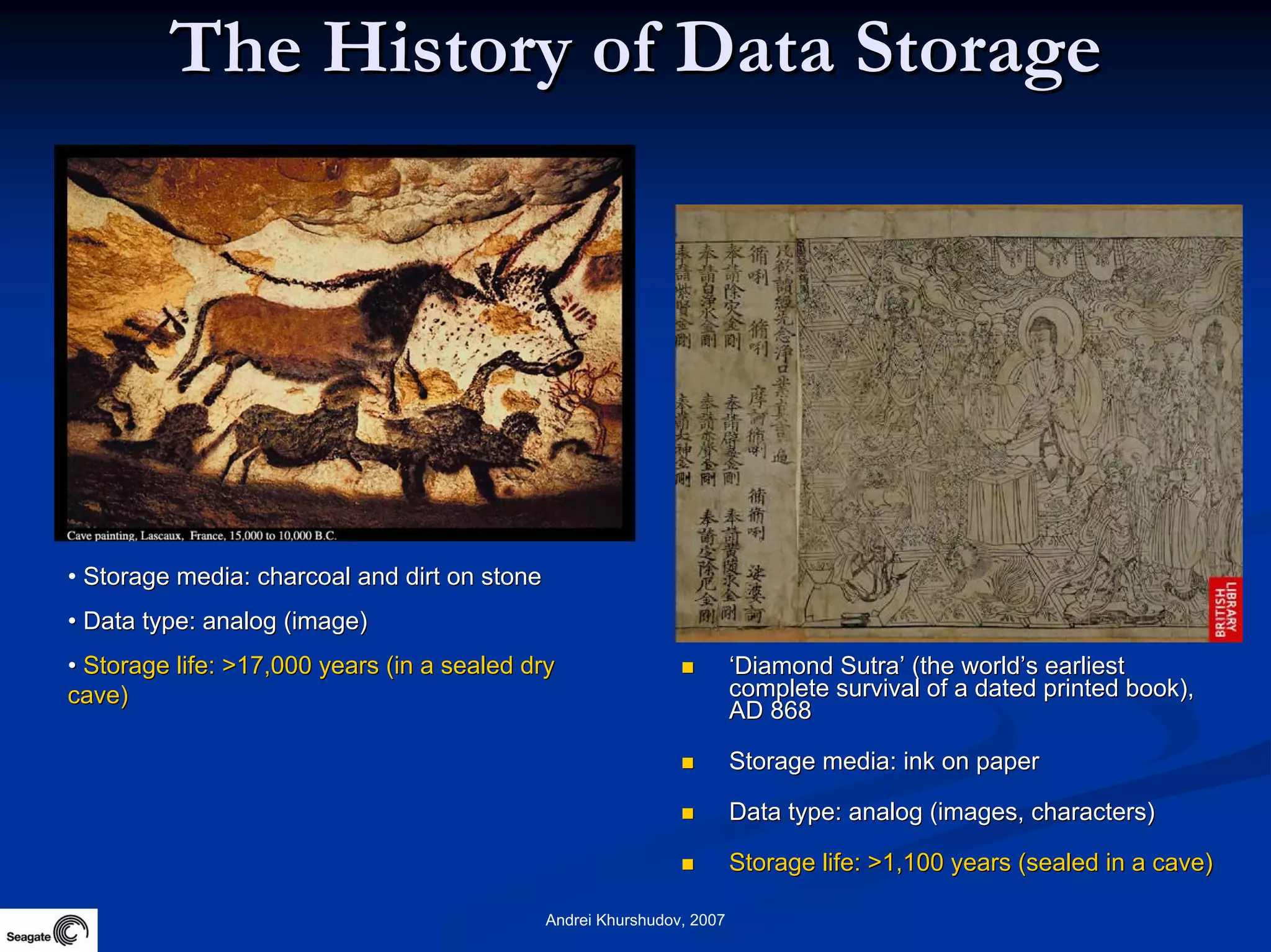

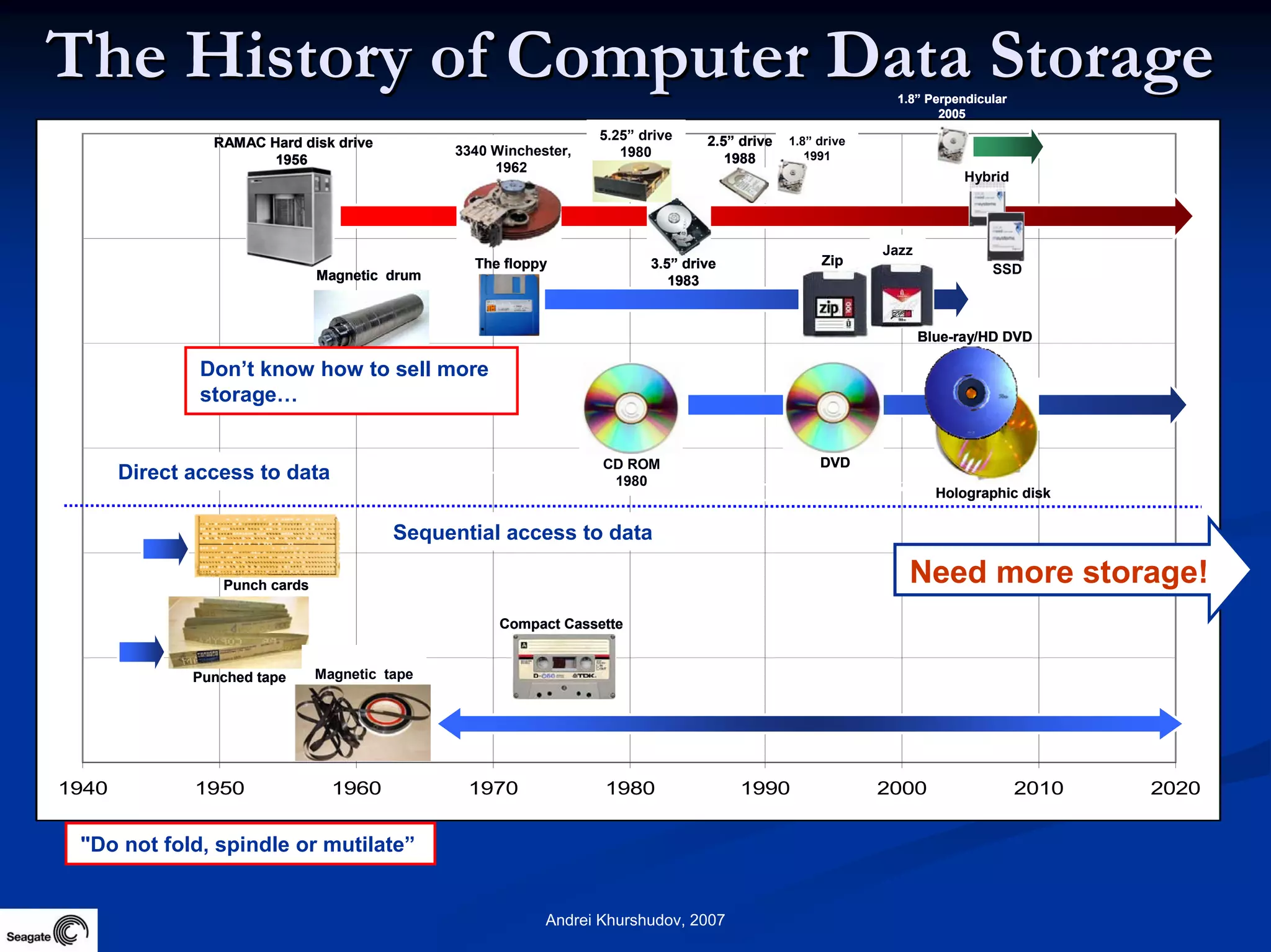

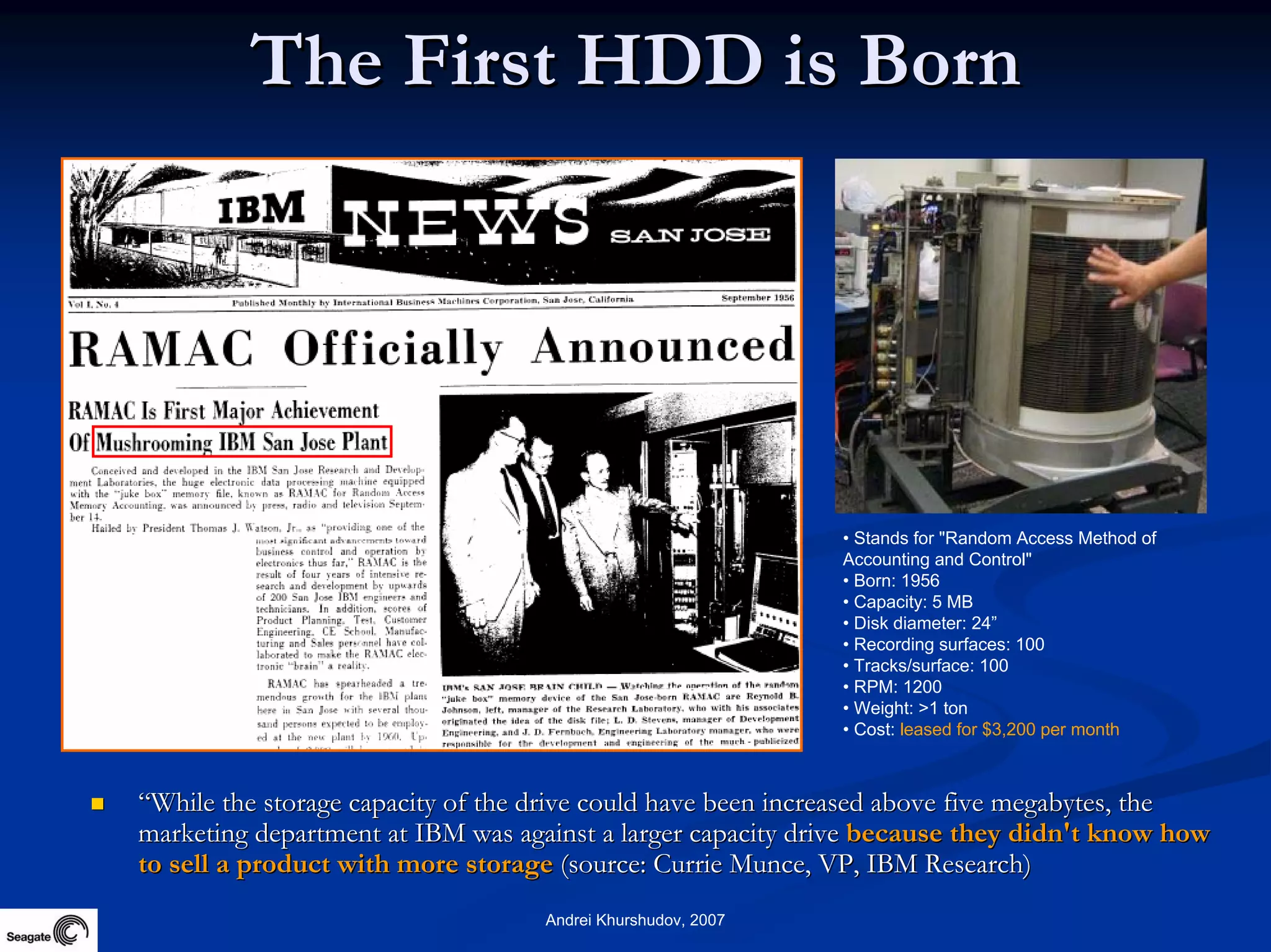

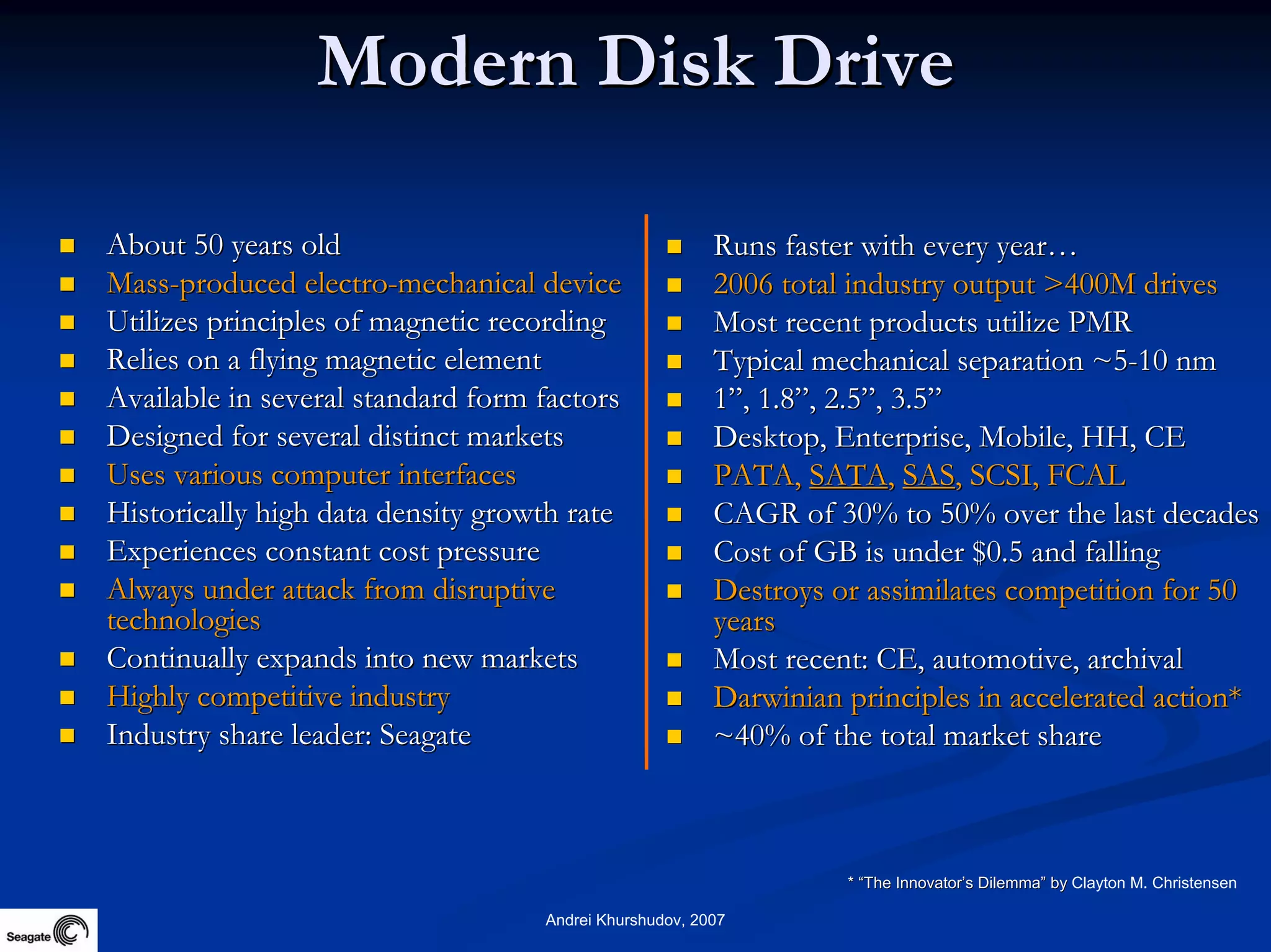

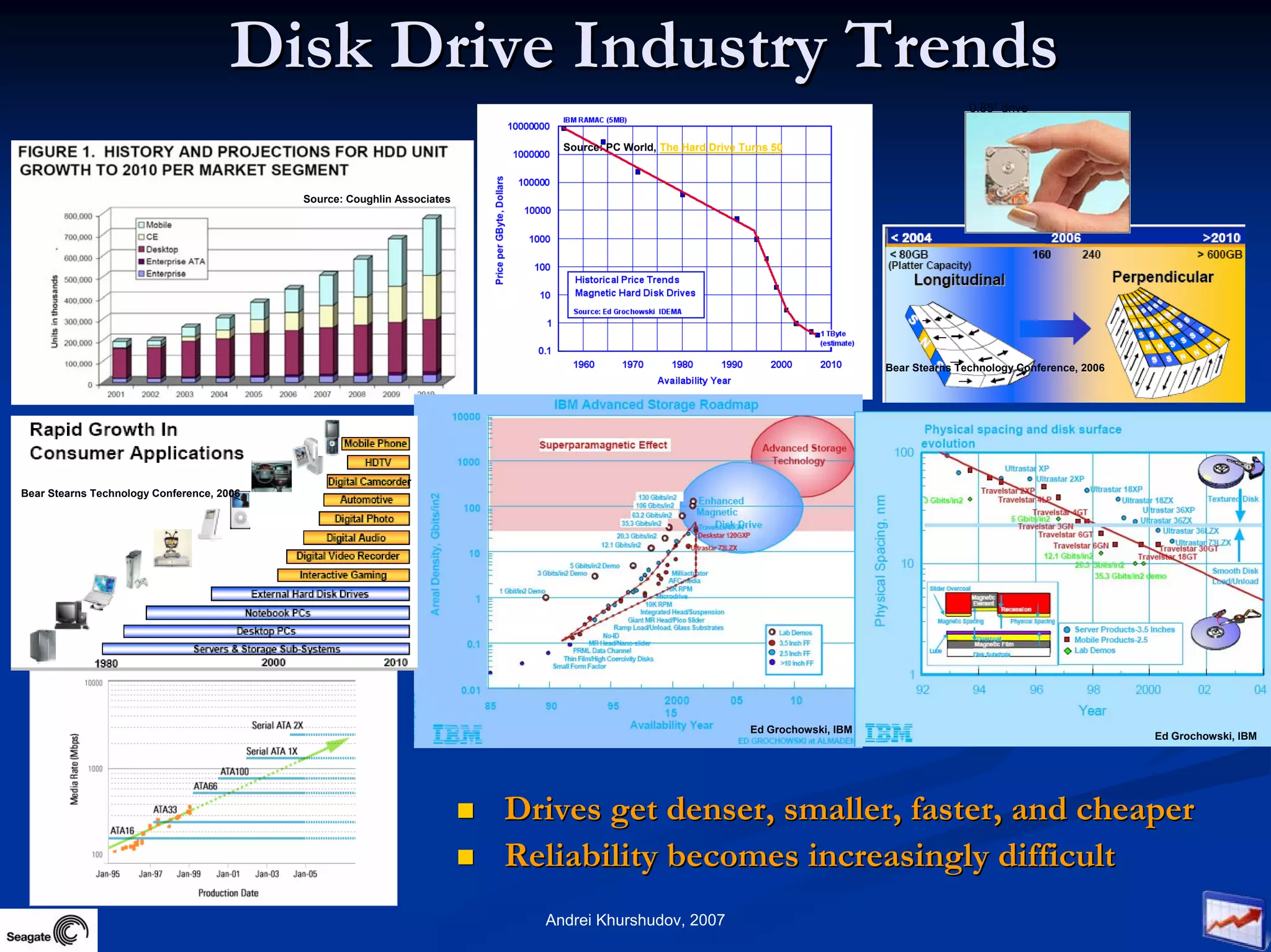

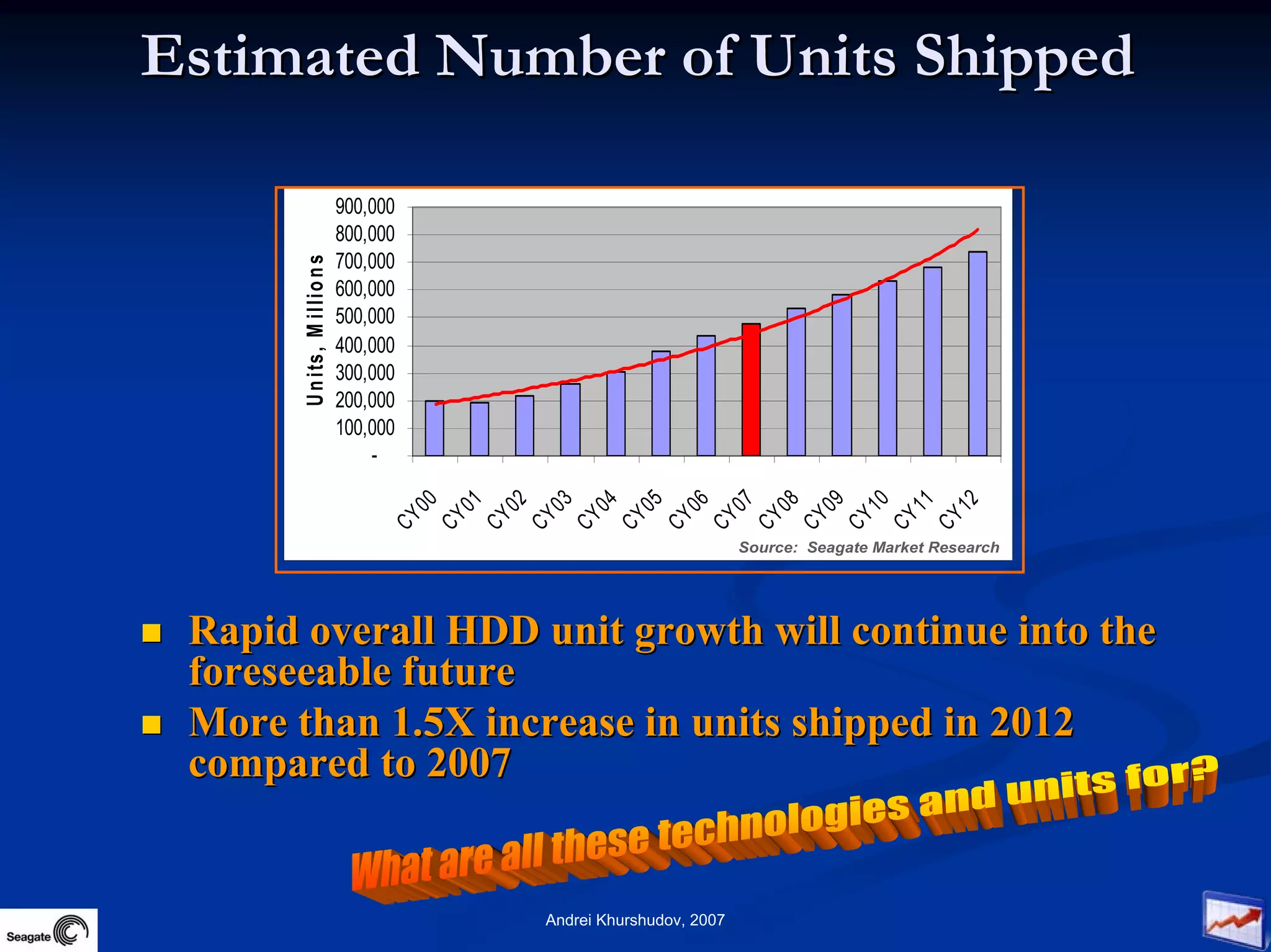

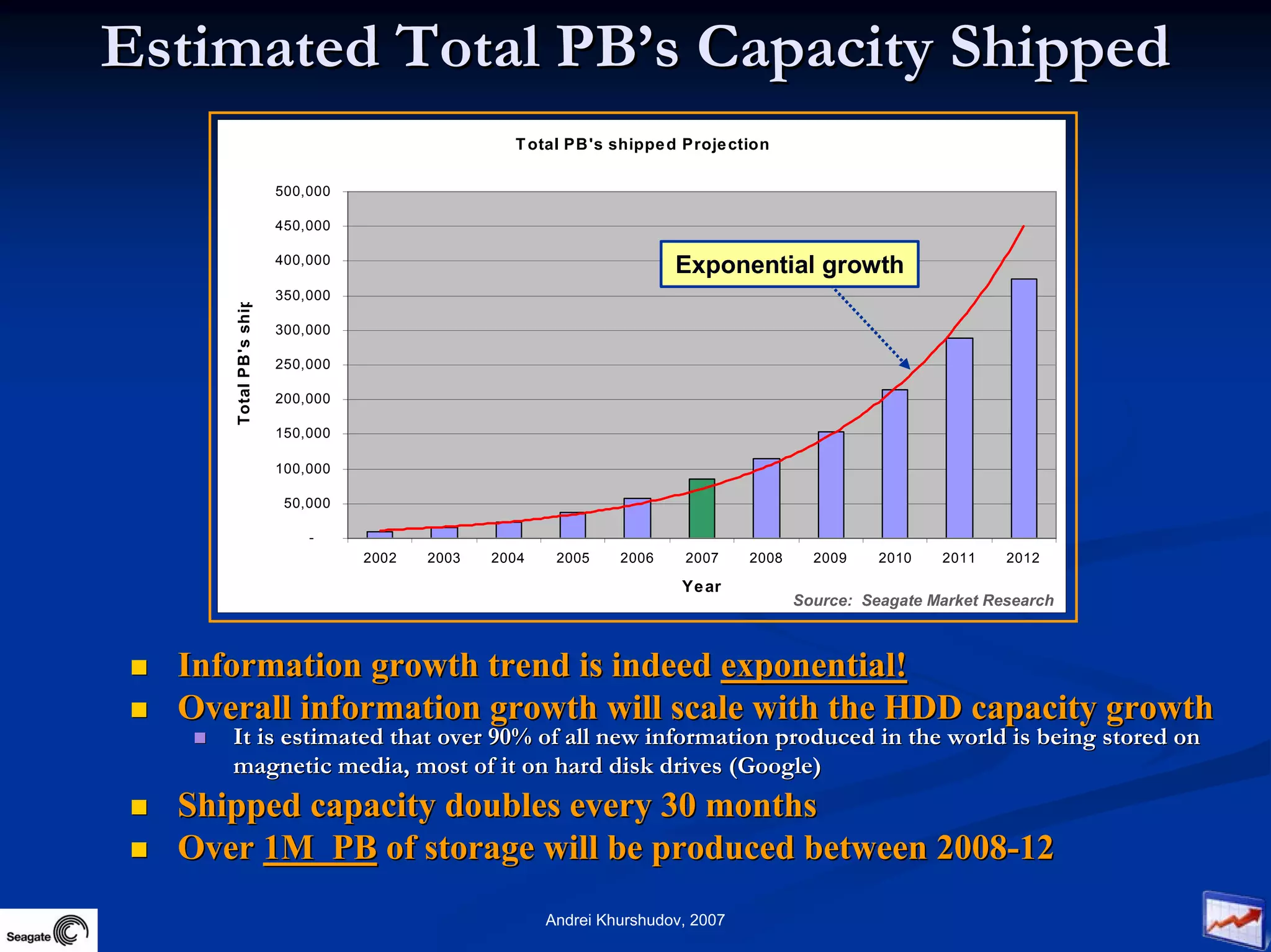

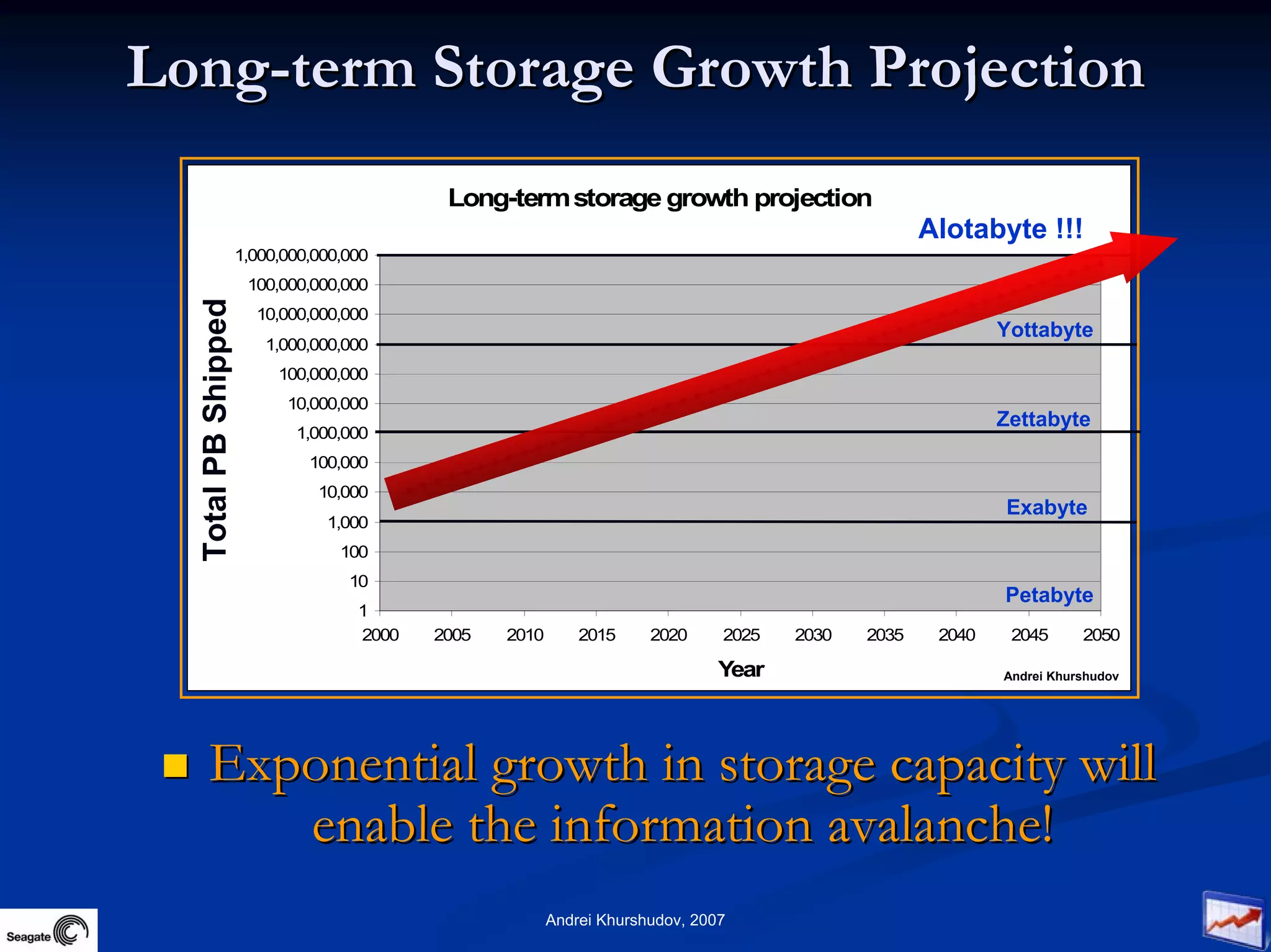

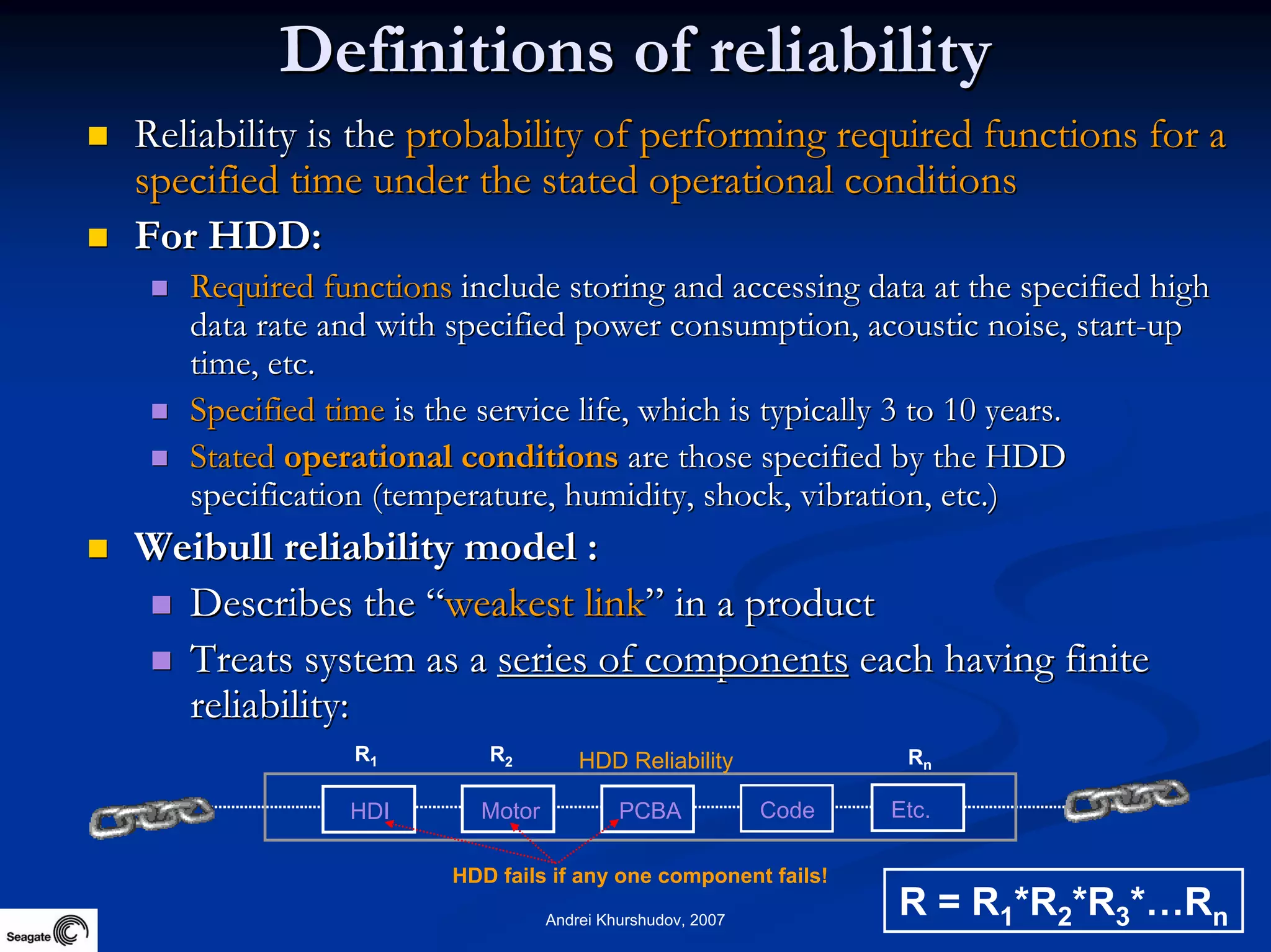

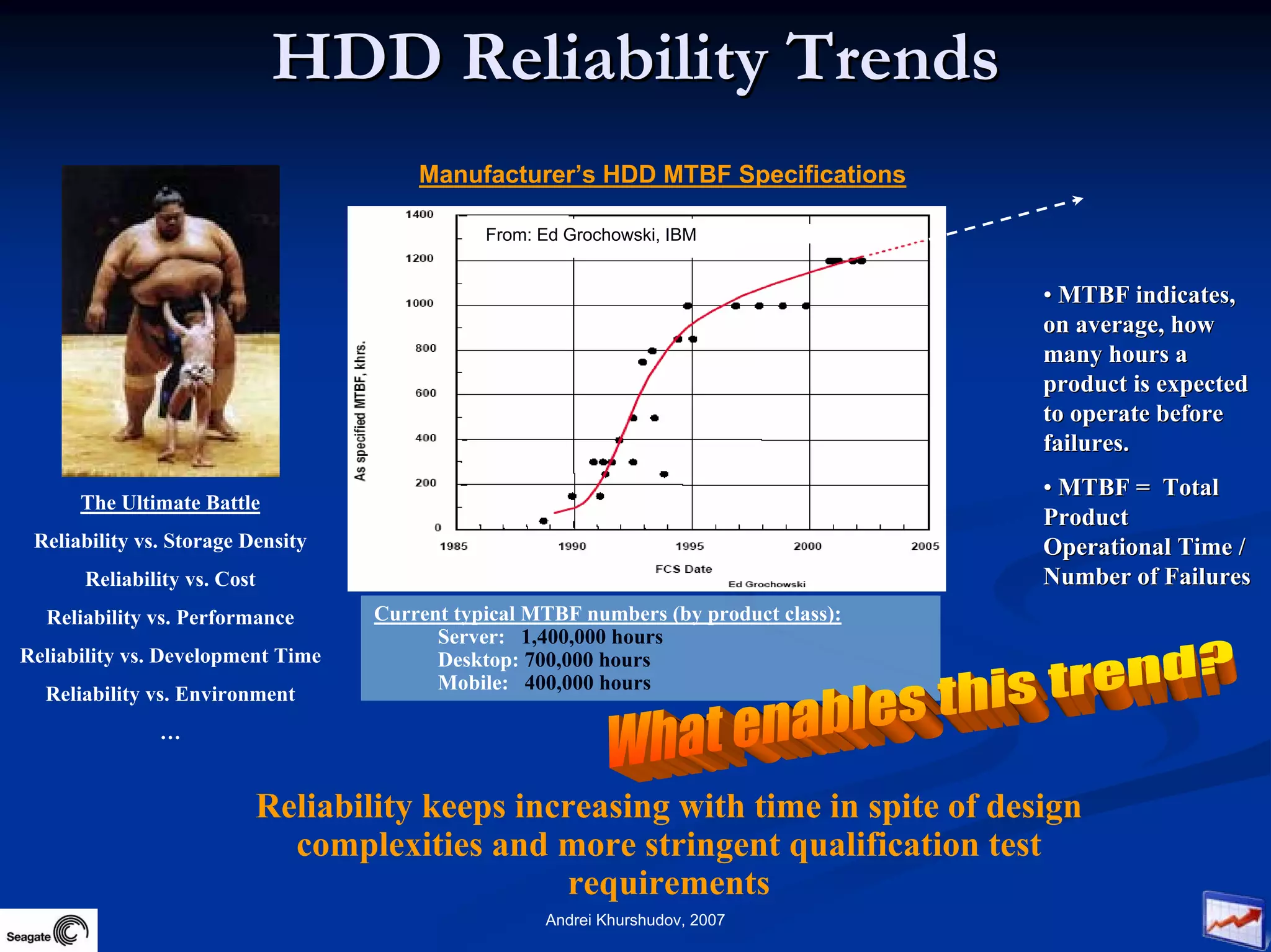

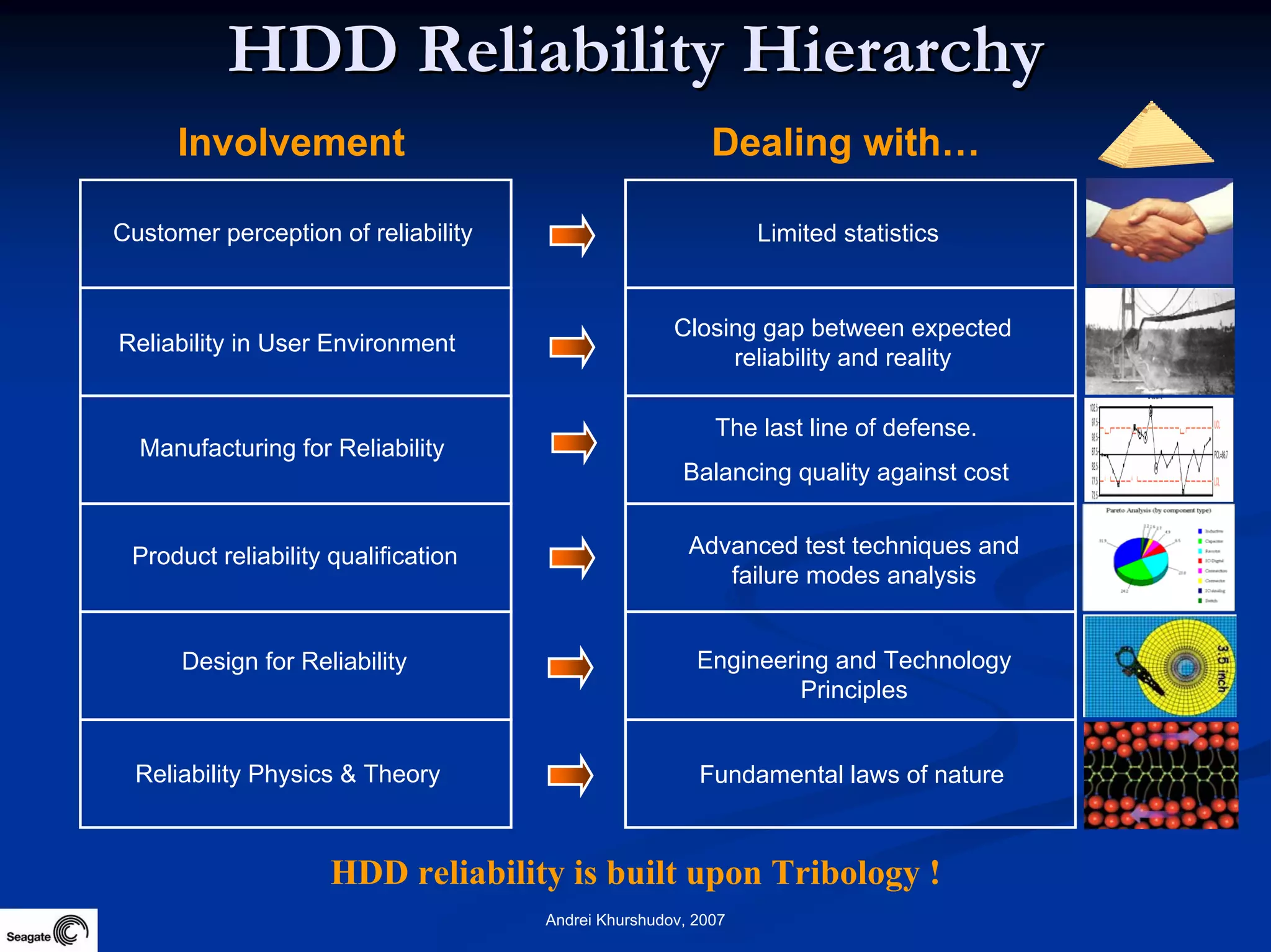

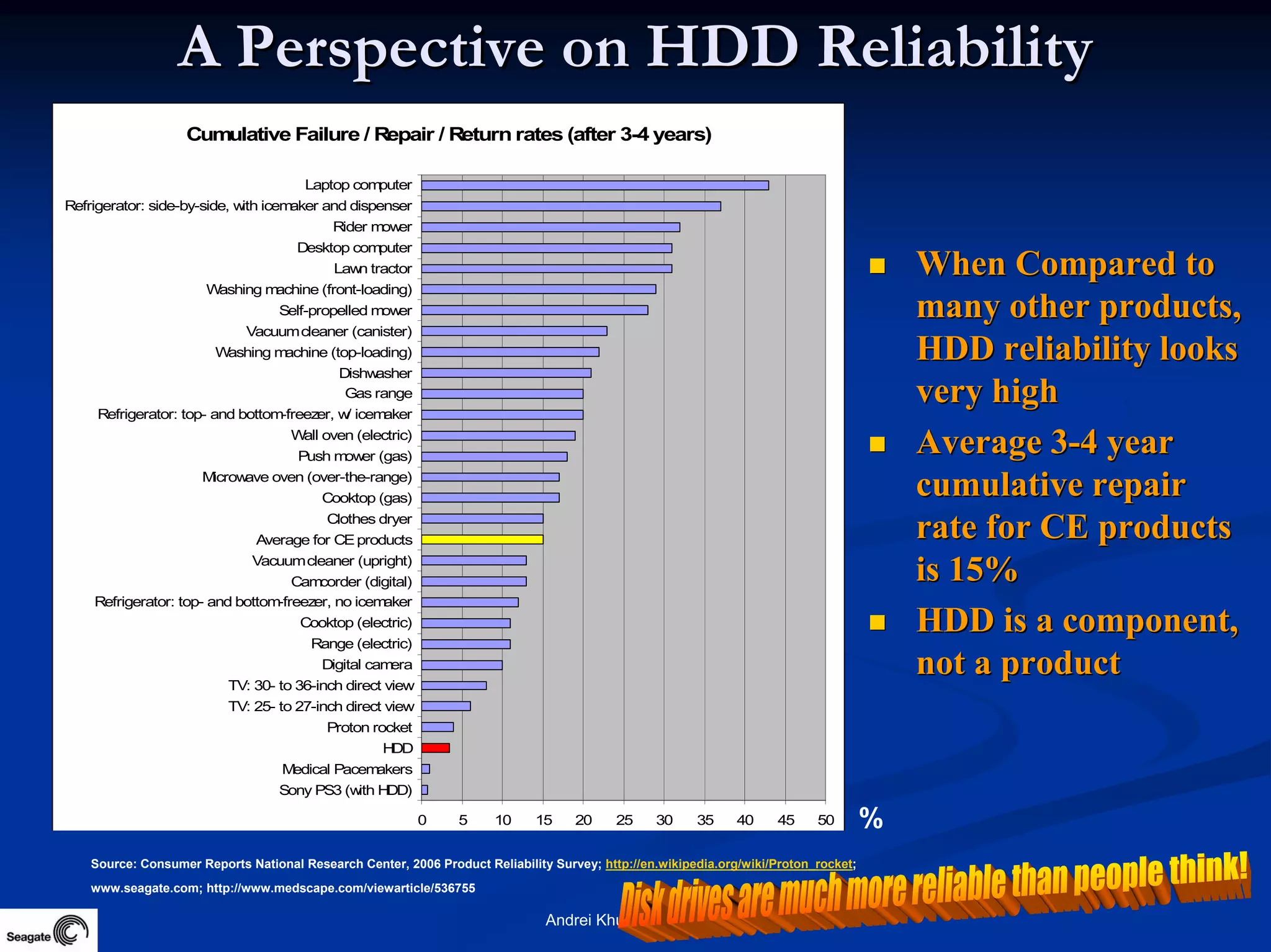

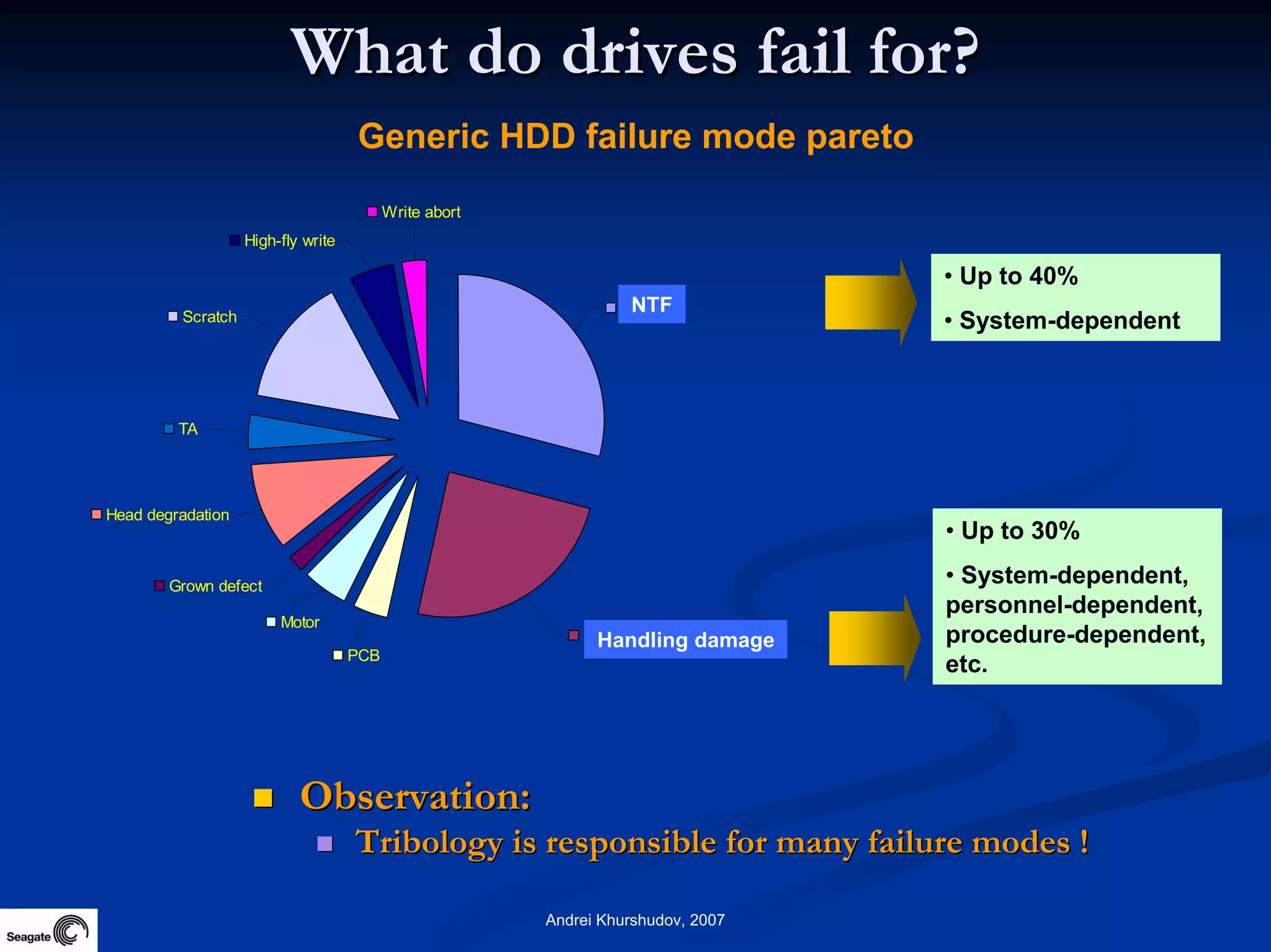

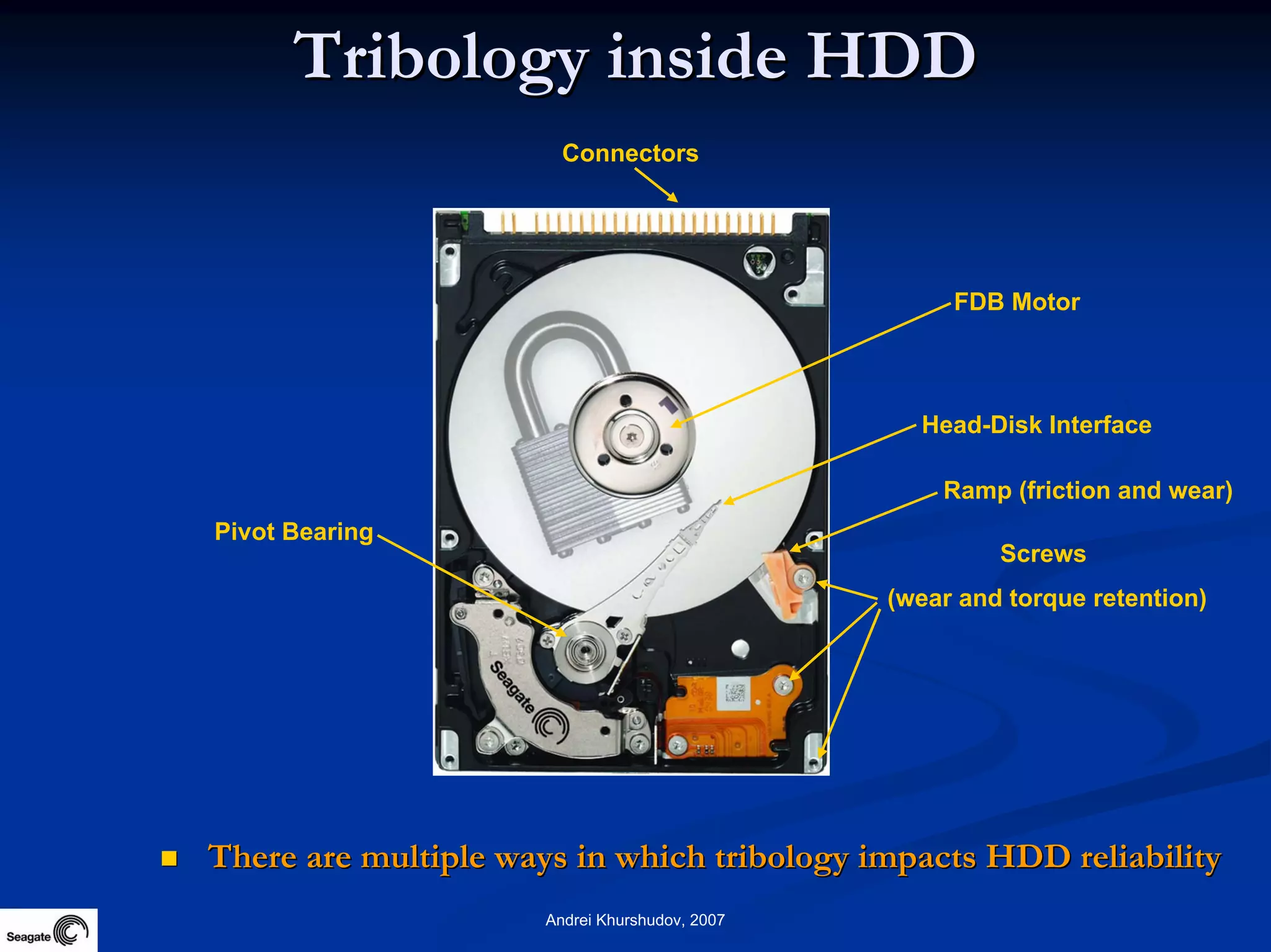

This document discusses the history and future of data storage. It begins with early examples of data storage on materials such as stone and paper, then traces the development of storage technologies from magnetic tapes and disks to modern solid-state drives. The document notes that storage capacity and data volume are both growing exponentially, and it cites projections of yottabytes of storage being produced by 2050. It also covers reliability, including the tribological factors that affect hard disk drive reliability.