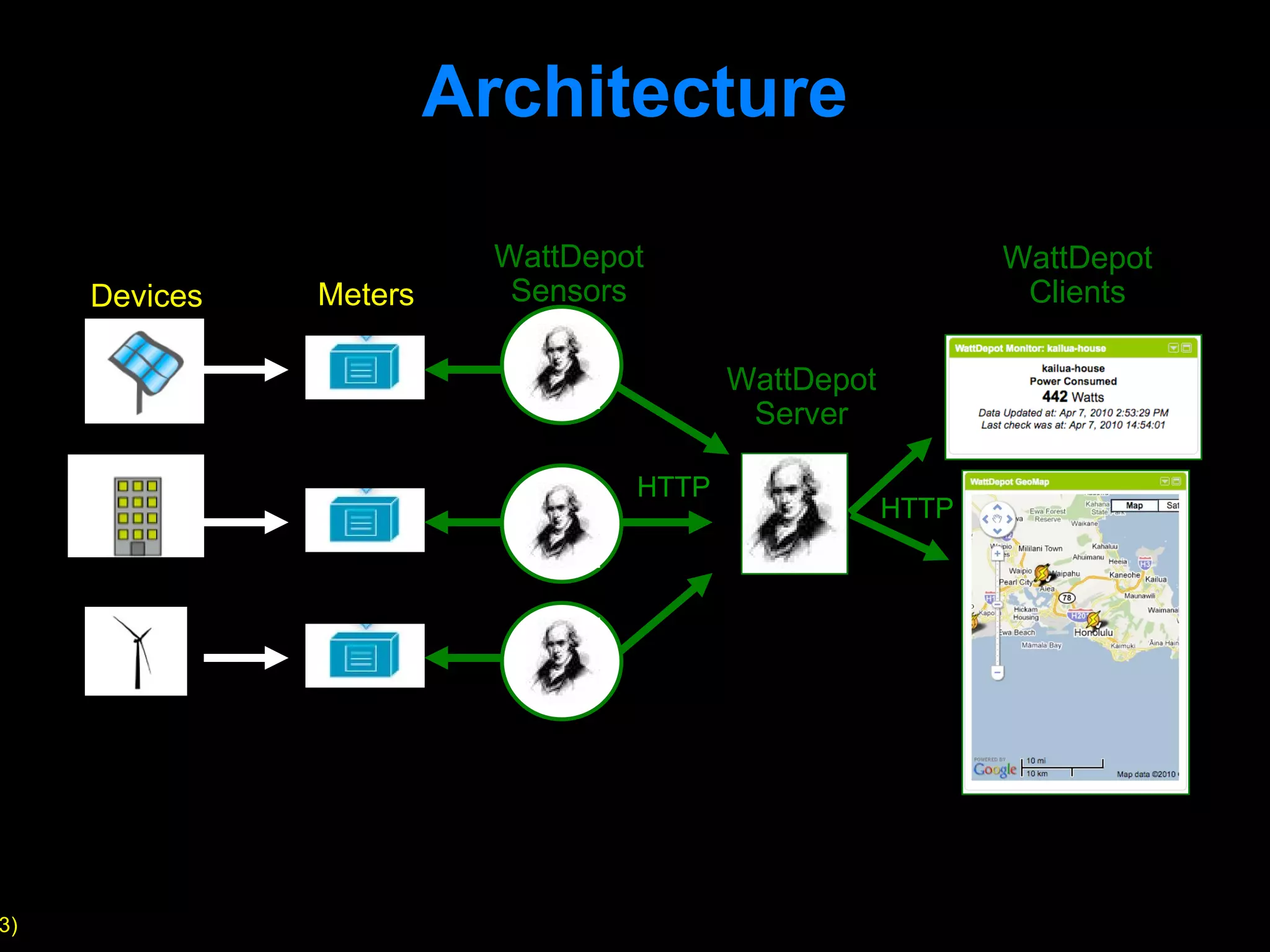

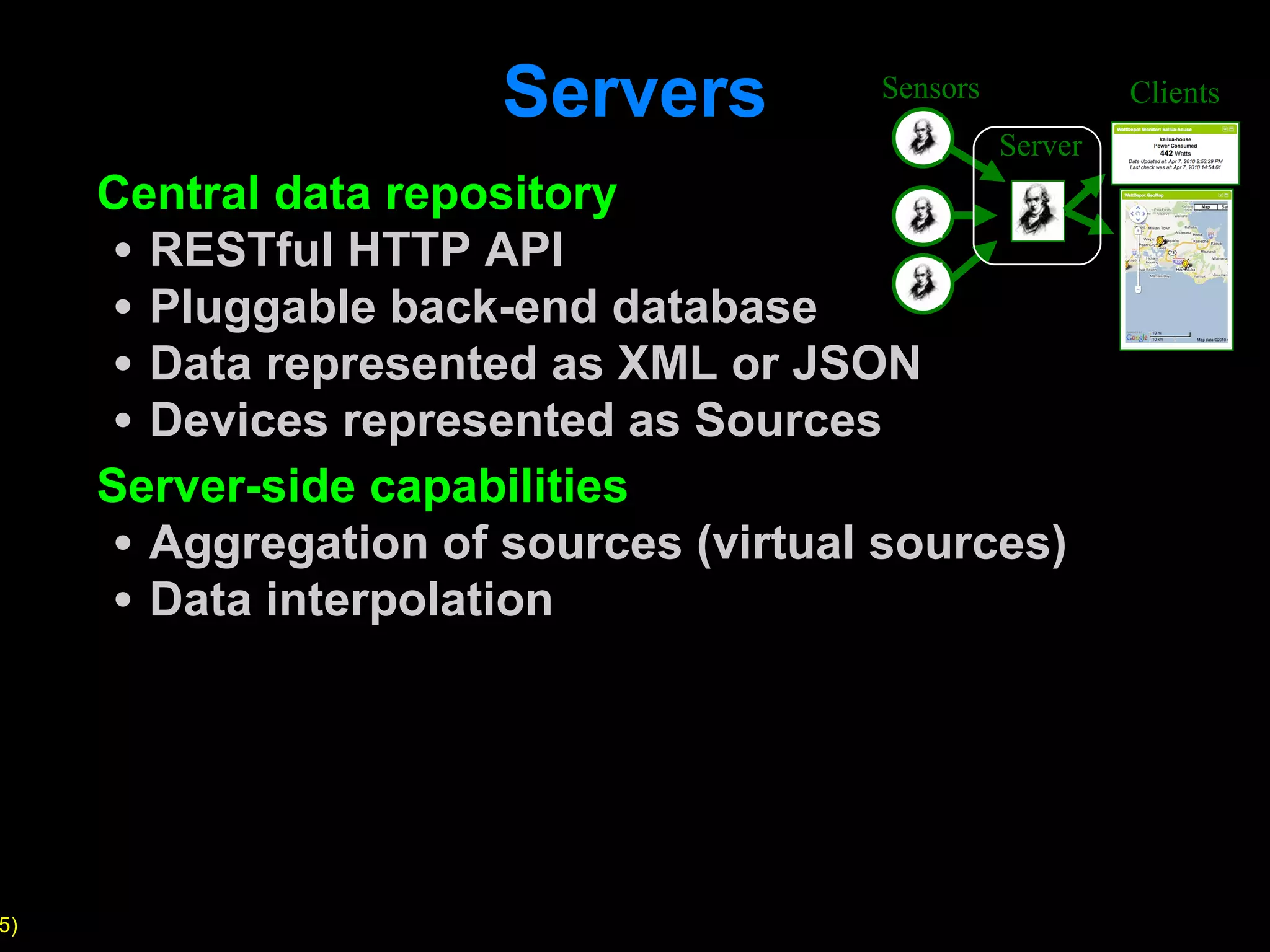

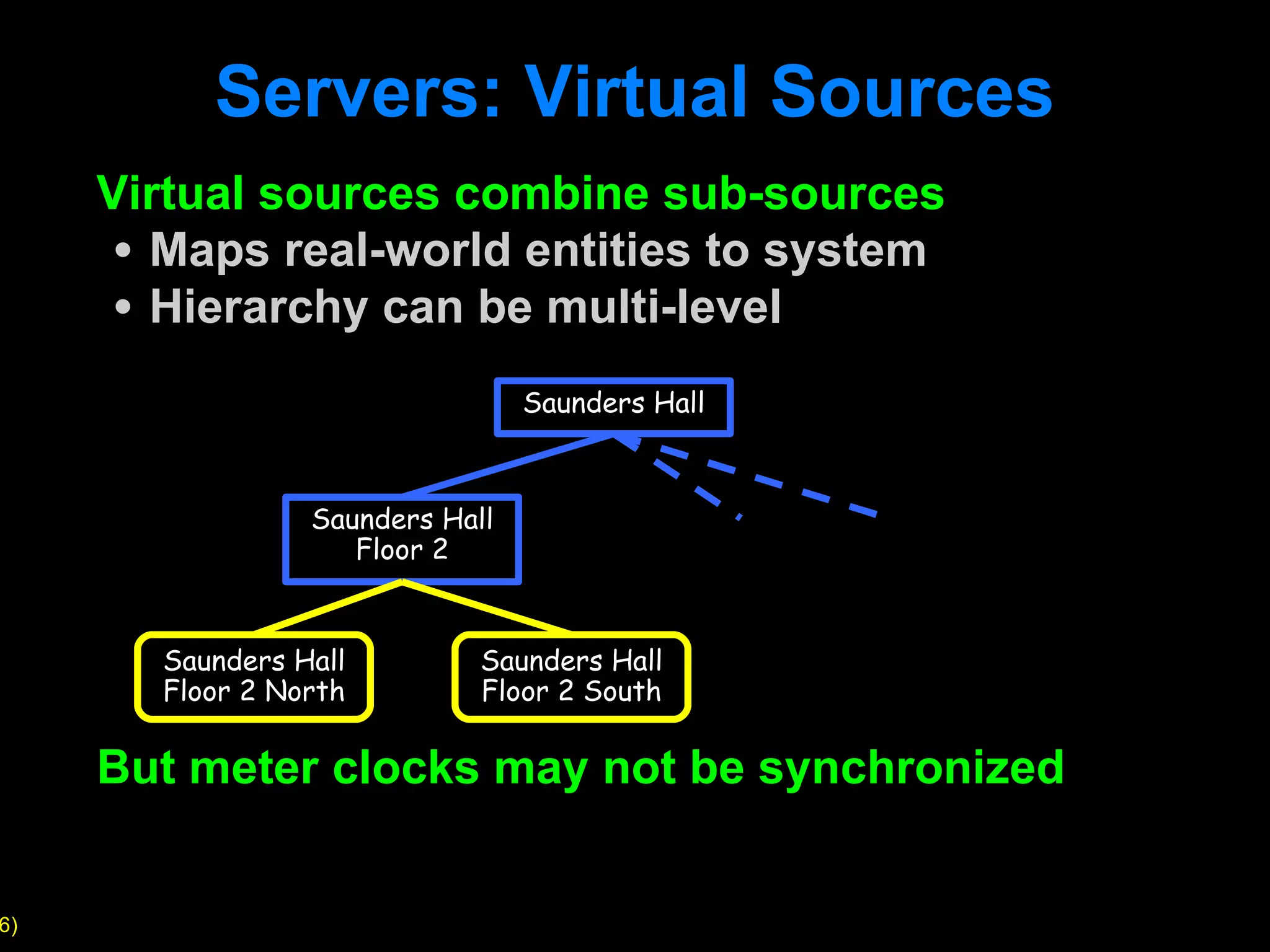

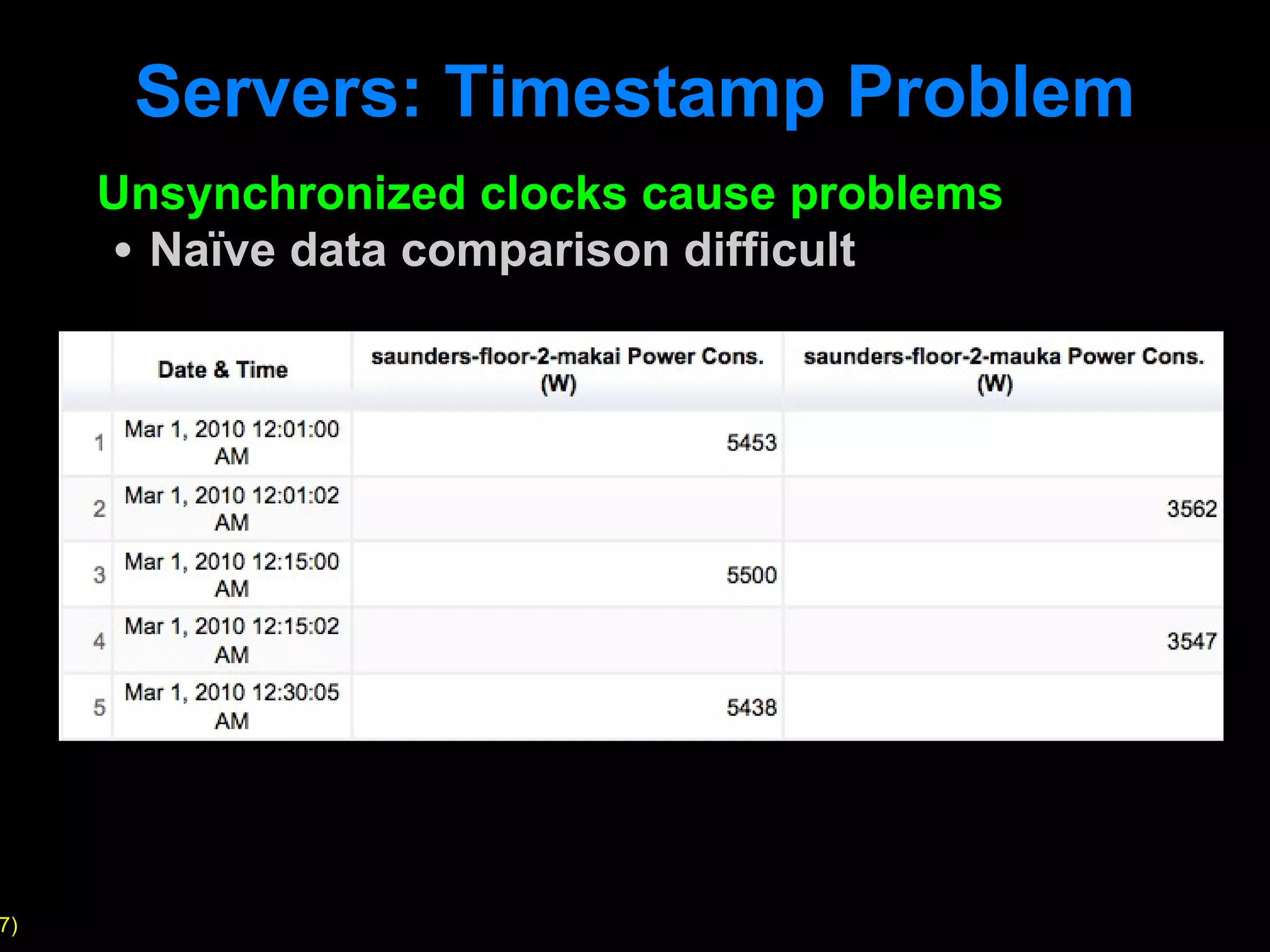

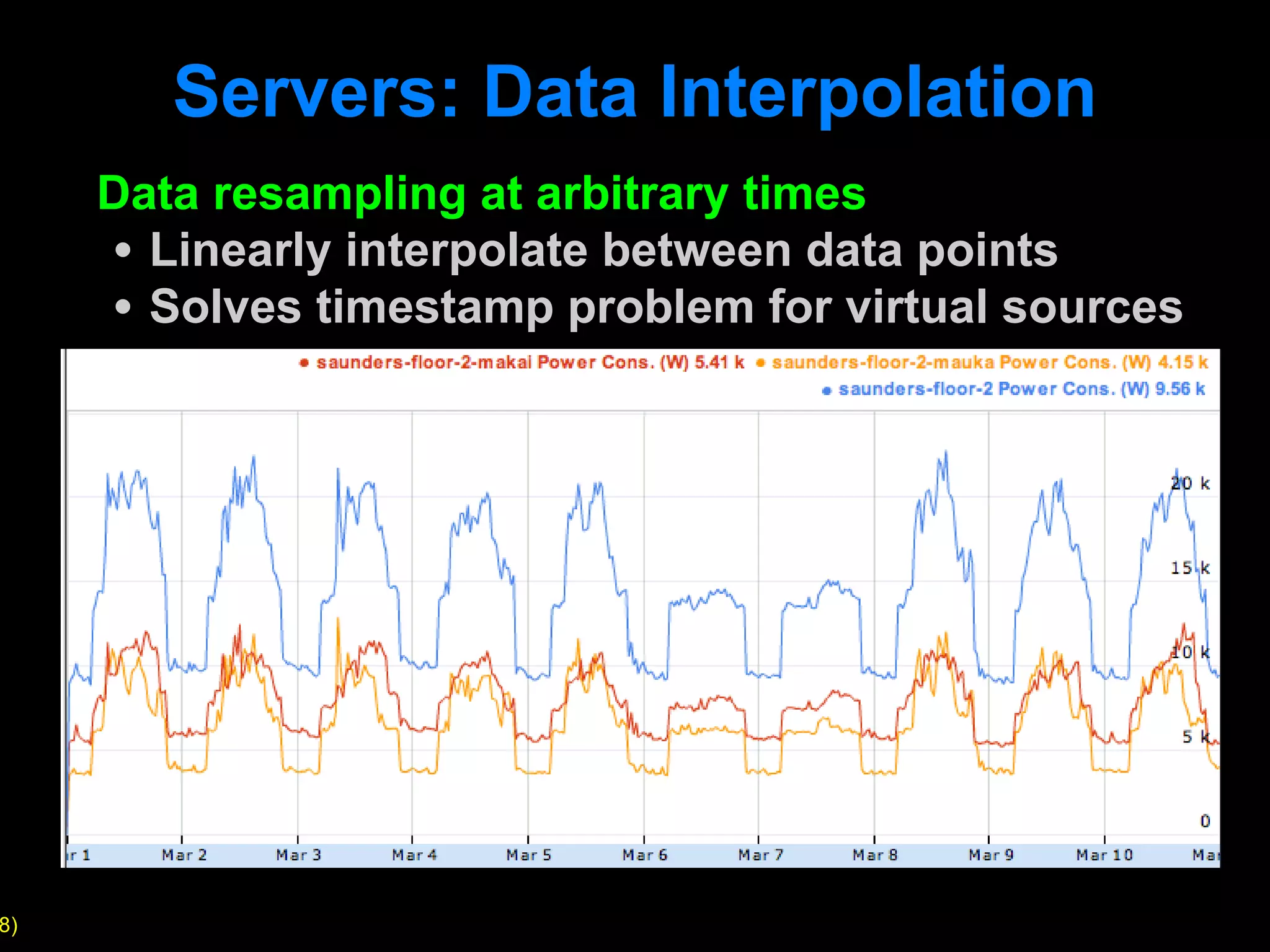

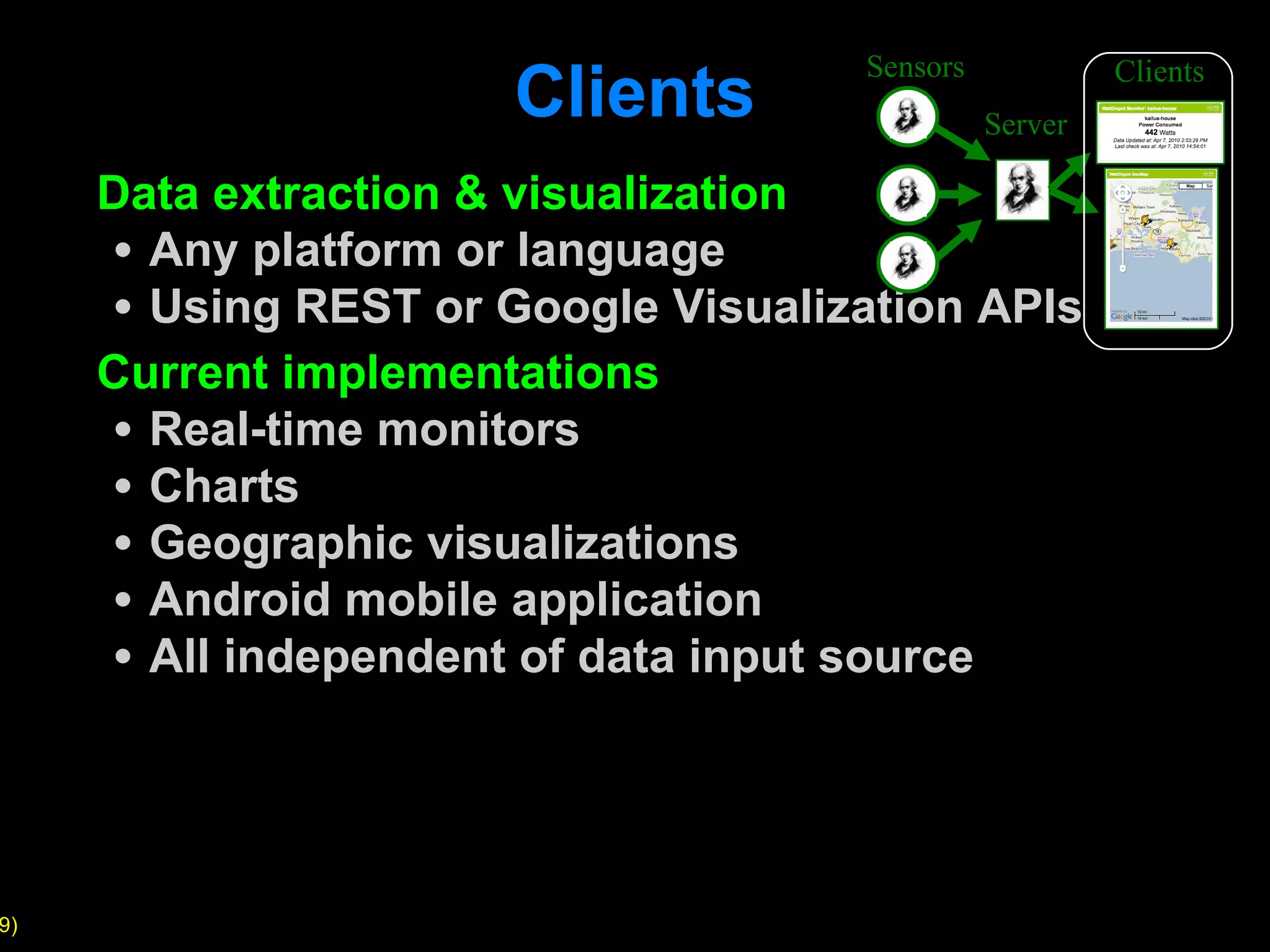

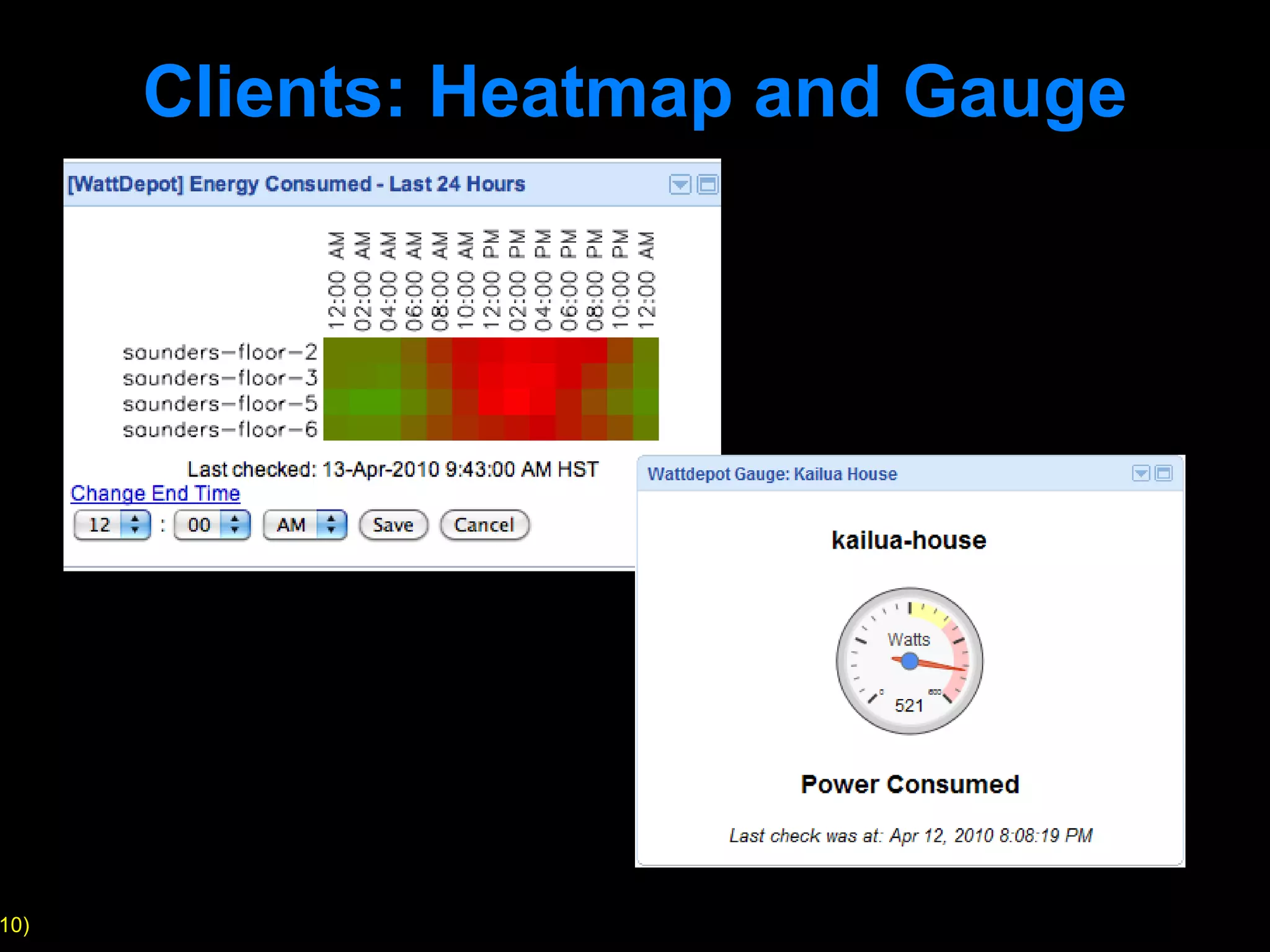

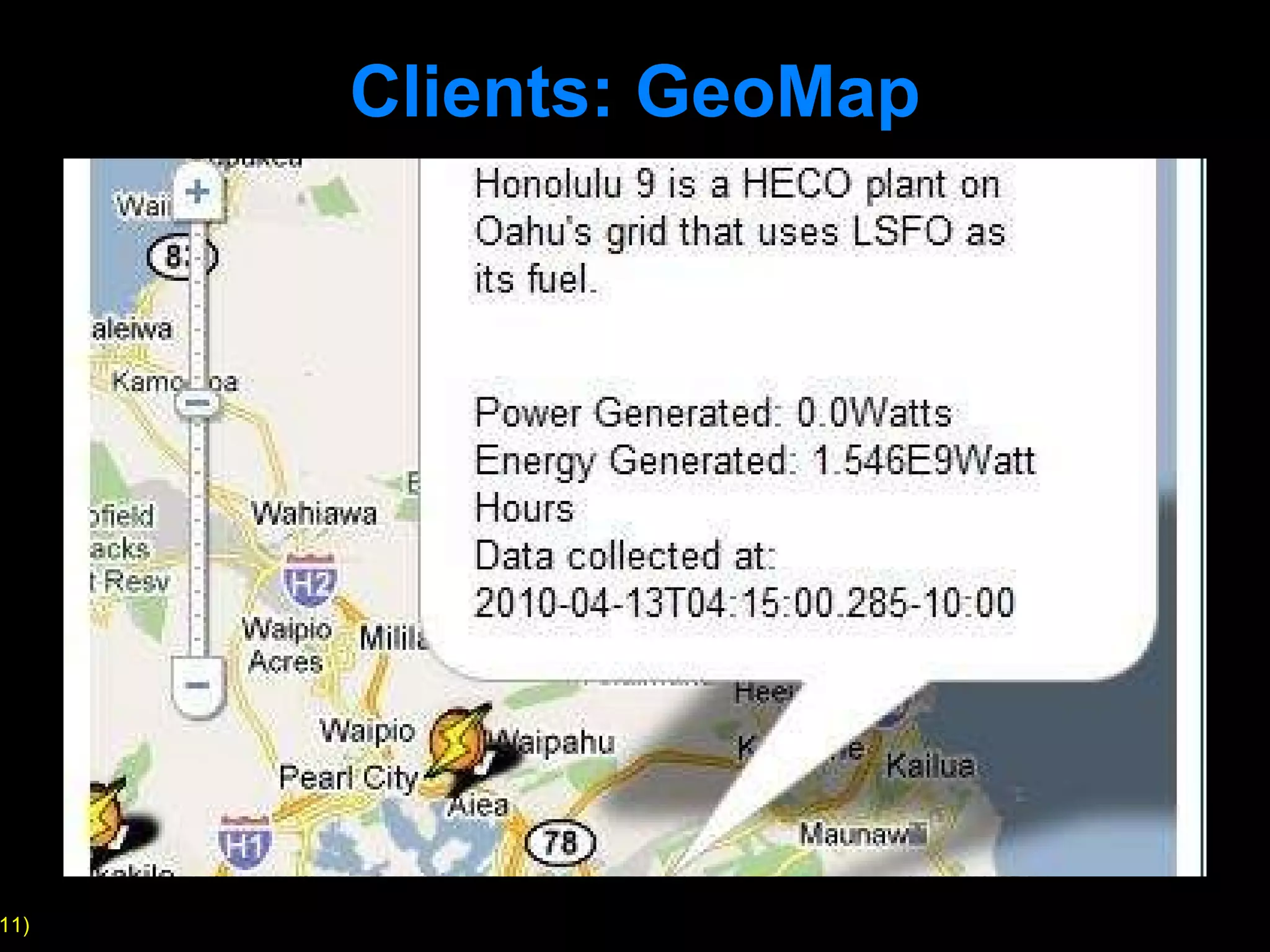

WattDepot is an open-source software ecosystem for collecting, storing, analyzing, and visualizing energy data, developed to support research on energy-use behavior. Its flexible architecture accommodates a variety of sensors and meters, collects their data over HTTP, and supports visualization on diverse platforms. Through accessible APIs, the system provides data aggregation, interpolation, and real-time monitoring.
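To make the interpolation and aggregation capabilities concrete, here is a minimal sketch in Python. It is not WattDepot's actual API: the `Reading` class and the `interpolate_power` and `aggregate_power` functions are hypothetical names introduced only for illustration, and linear interpolation between neighboring samples is an assumption about how a value might be estimated between sensor readings.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    """One hypothetical sensor sample: timestamp in seconds, power in watts."""
    timestamp: float
    power_w: float


def interpolate_power(readings, t):
    """Linearly interpolate power at time t from readings sorted by timestamp."""
    if not readings:
        raise ValueError("no readings available")
    times = [r.timestamp for r in readings]
    if t < times[0] or t > times[-1]:
        raise ValueError("requested time is outside the recorded range")
    i = bisect_left(times, t)
    if times[i] == t:
        # An actual sample exists at t, so no interpolation is needed.
        return readings[i].power_w
    before, after = readings[i - 1], readings[i]
    fraction = (t - before.timestamp) / (after.timestamp - before.timestamp)
    return before.power_w + fraction * (after.power_w - before.power_w)


def aggregate_power(sources, t):
    """Sum the interpolated power of several sources at the same instant."""
    return sum(interpolate_power(readings, t) for readings in sources)


# Two hypothetical meters that report at different intervals.
meter_a = [Reading(0, 100.0), Reading(60, 160.0)]
meter_b = [Reading(0, 50.0), Reading(30, 80.0), Reading(60, 50.0)]

print(interpolate_power(meter_a, 15))           # 115.0
print(aggregate_power([meter_a, meter_b], 15))  # 180.0
```

Because each source is interpolated independently before summing, meters that sample at different rates can still be combined into a single virtual source at any instant inside their shared time range.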