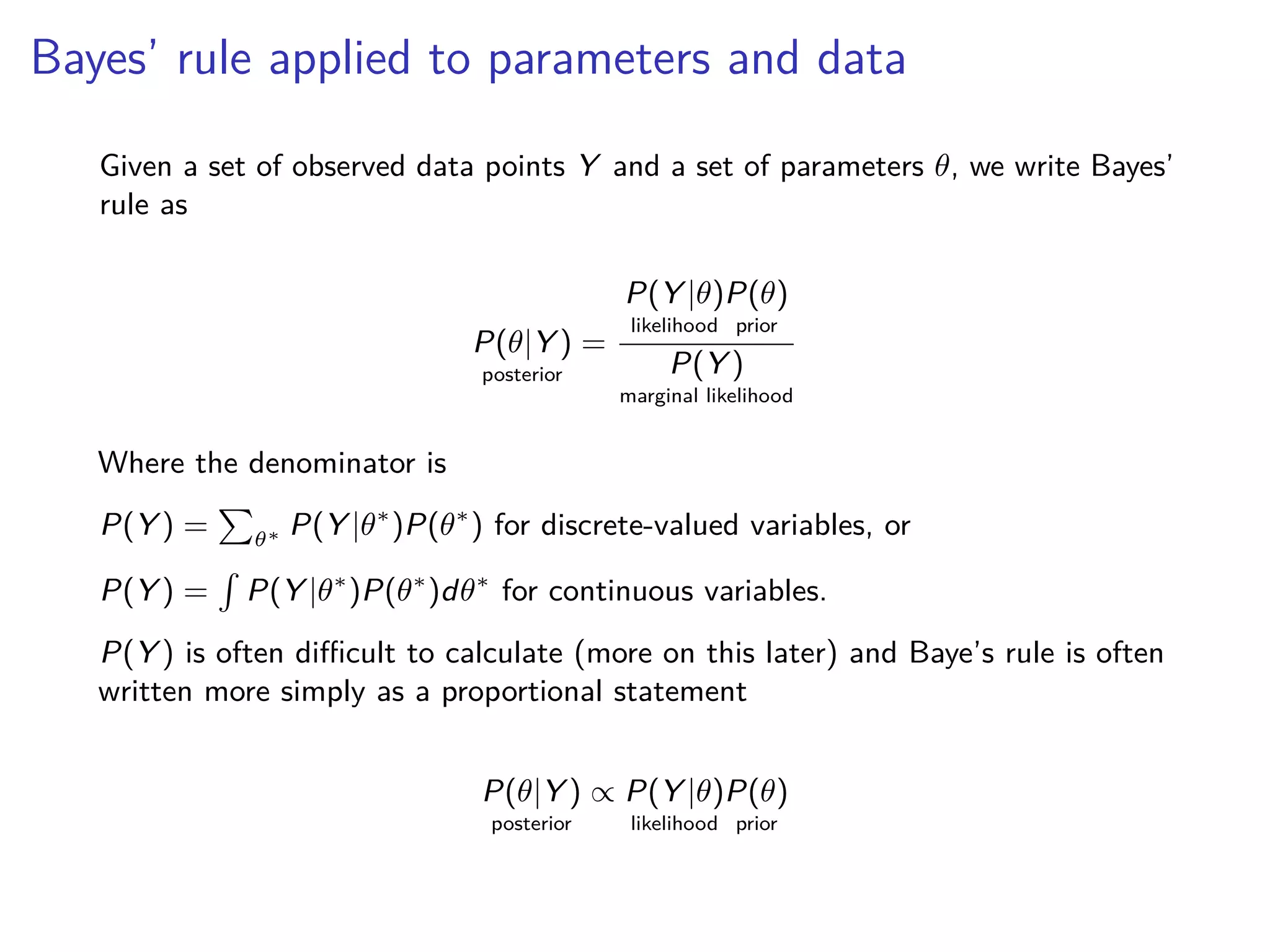

1) Bayesian statistics uses Bayes' rule for inference and decision making, while frequentist statistics does not.

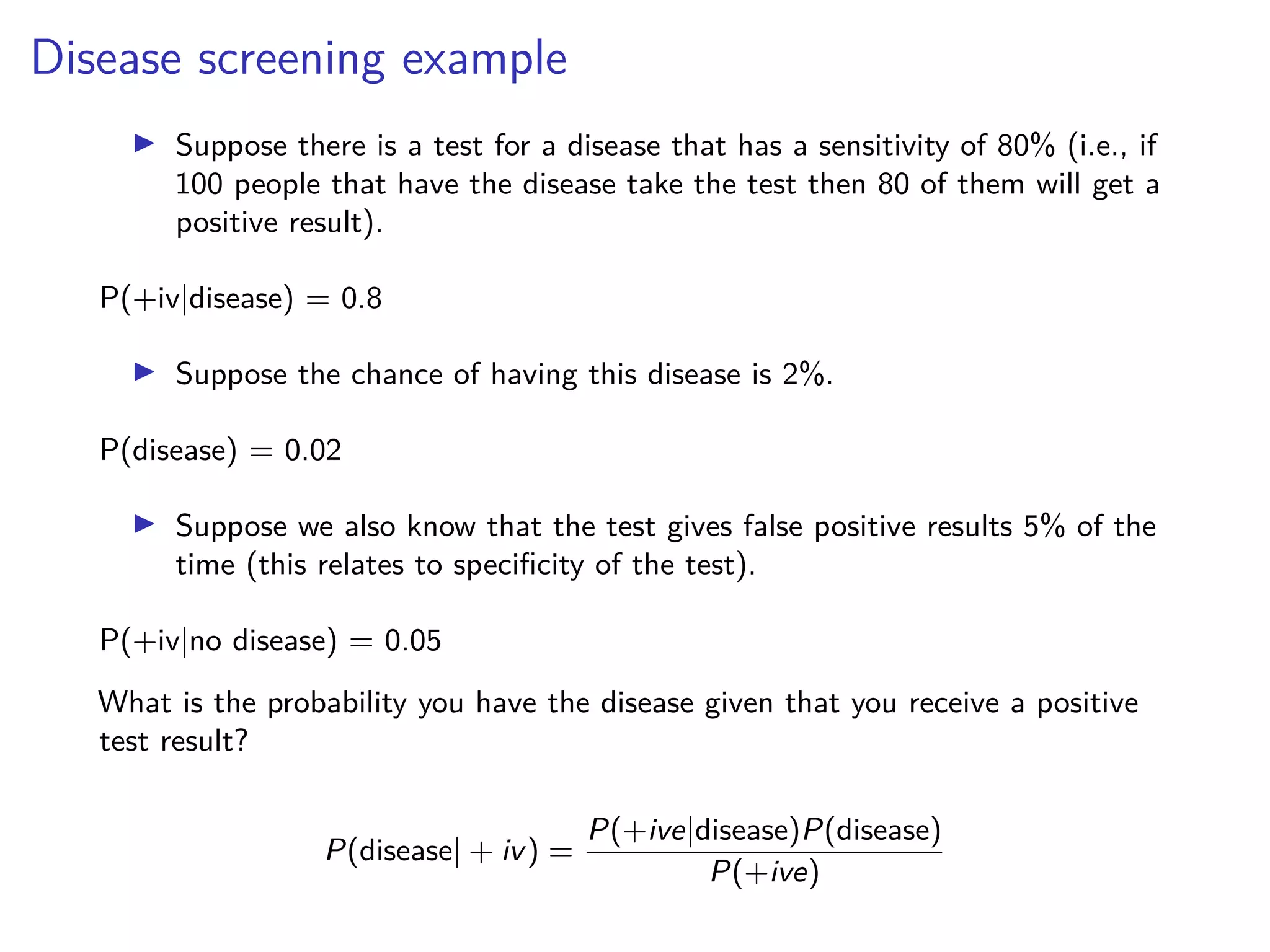

2) Bayes' rule allows you to calculate the probability of an event given additional information, such as calculating the probability of rain given that it is cloudy in the morning.

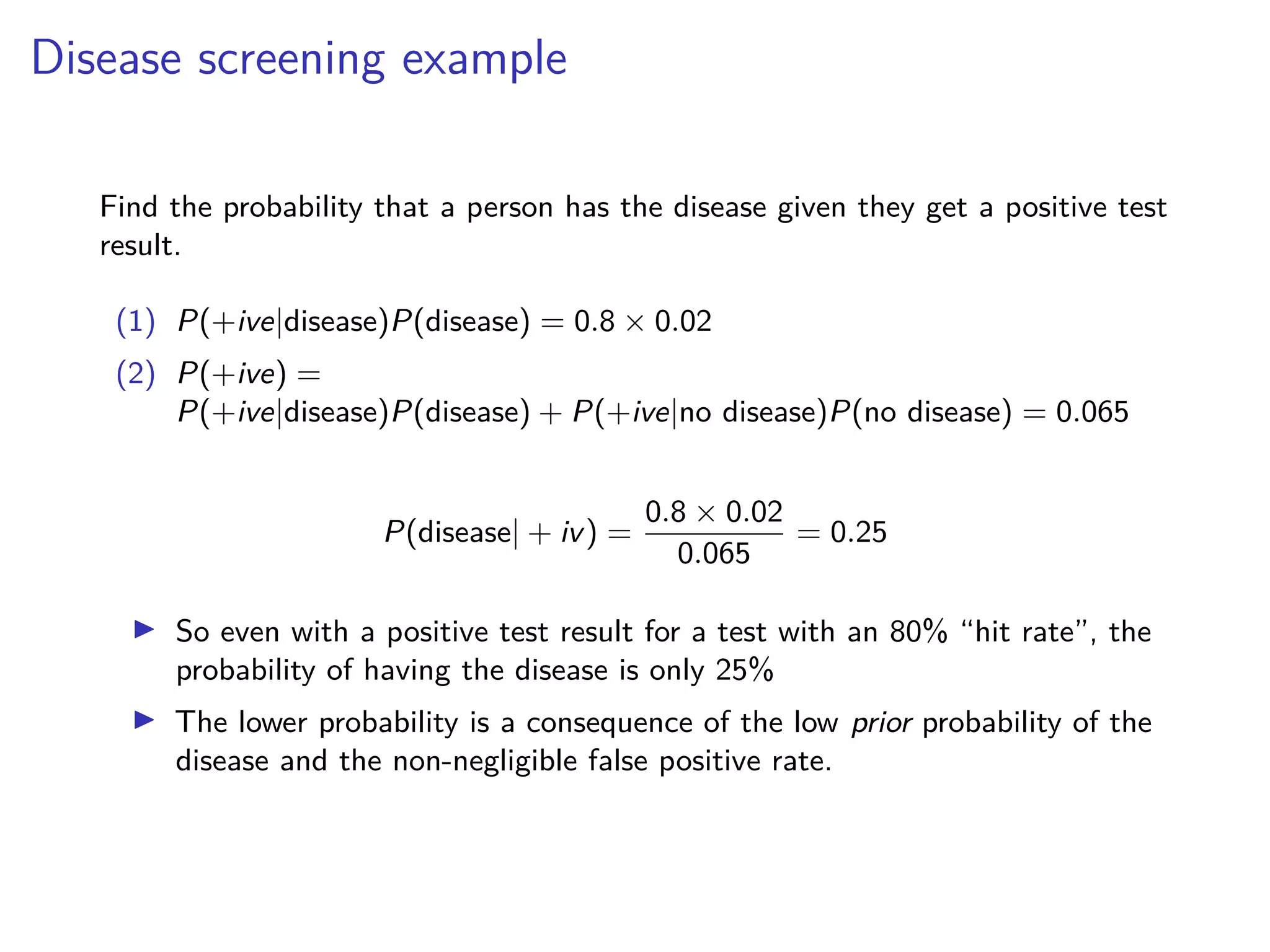

3) Even with a positive test result, the probability of having a disease can be low due to the low prior probability of the disease and potential for false positives.