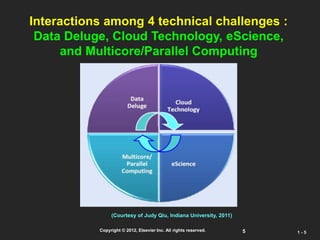

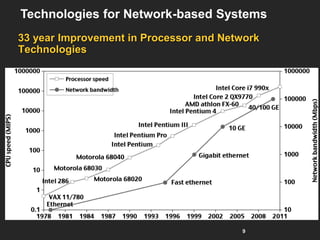

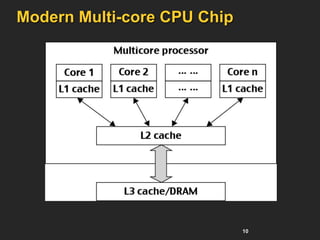

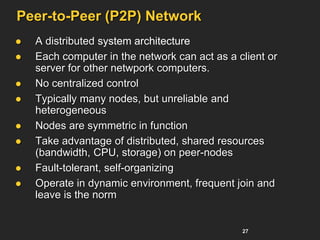

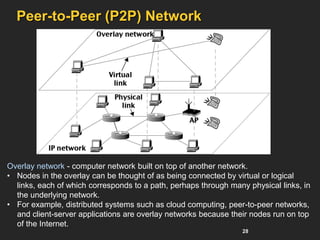

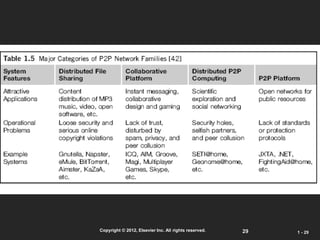

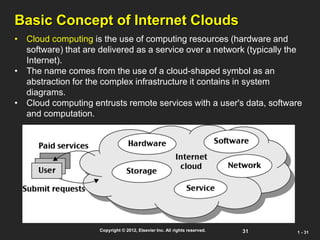

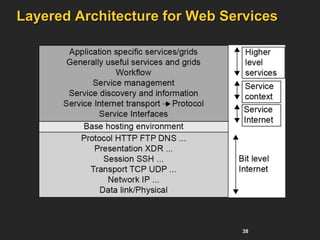

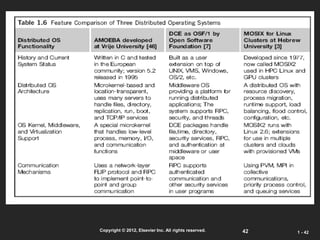

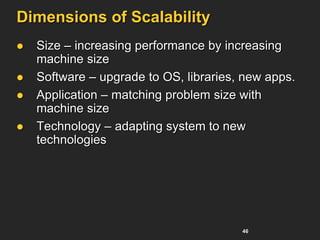

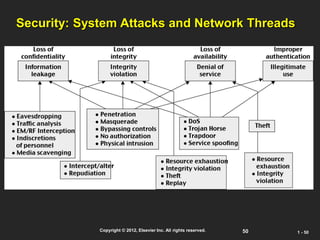

The document surveys the enabling technologies for distributed and cloud computing over the past 30 years. It describes how computing has evolved from centralized mainframes and supercomputers to today's distributed systems built on grids, peer-to-peer networks, and Internet clouds. It also discusses the interplay among the data deluge, cloud technologies, e-science, and parallel computing.