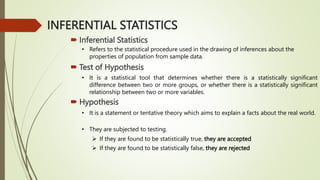

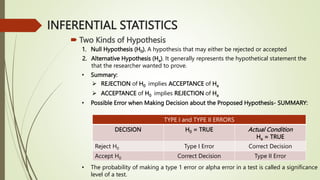

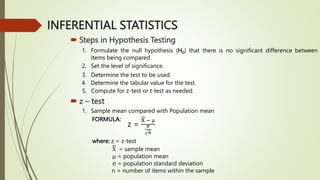

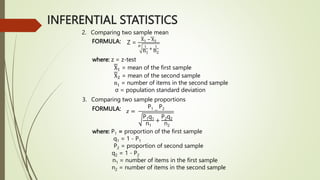

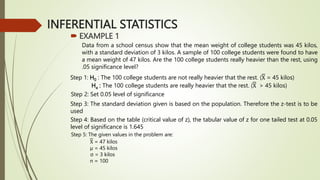

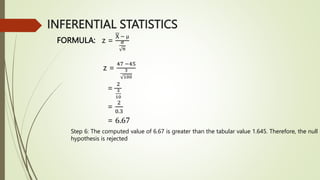

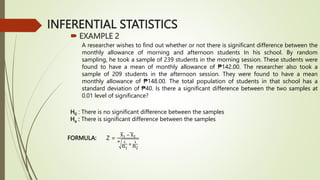

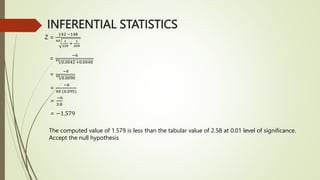

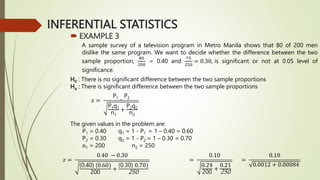

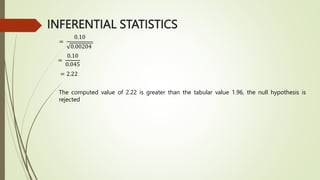

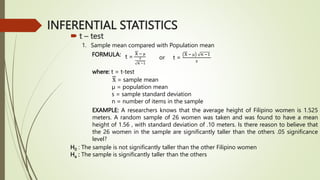

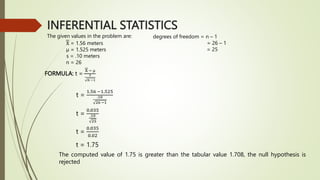

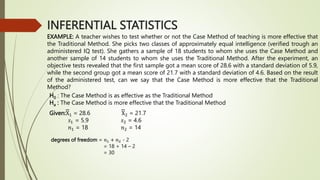

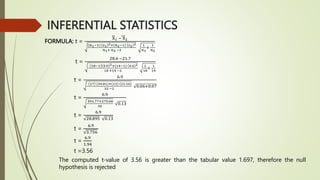

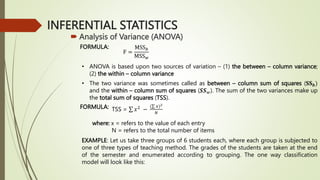

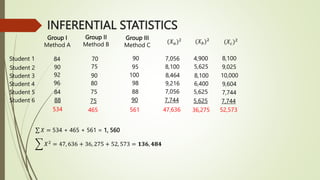

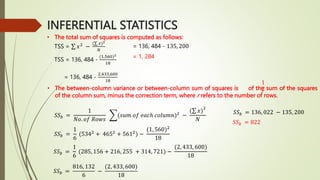

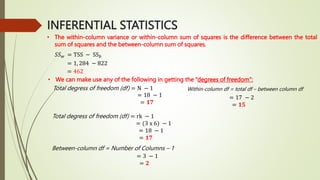

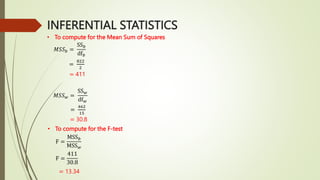

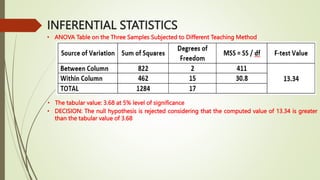

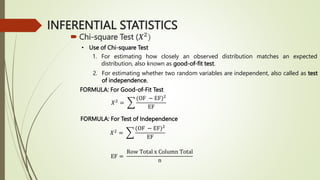

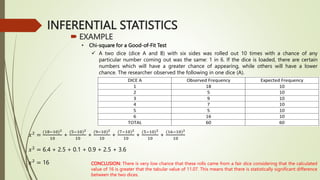

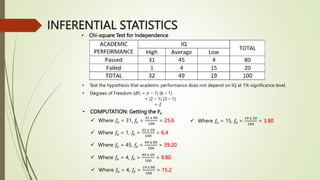

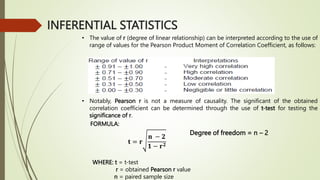

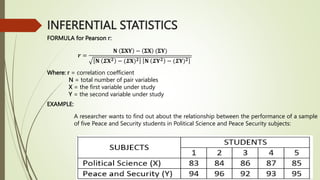

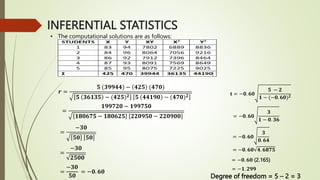

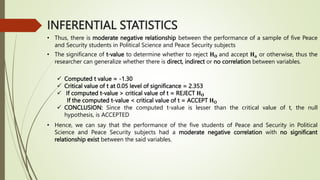

This document provides an overview of inferential statistics concepts including hypothesis testing, types of hypotheses, errors in hypothesis testing, steps in hypothesis testing, z-tests, t-tests, and analysis of variance (ANOVA). Key points covered are: hypothesis testing determines if there is a statistically significant difference between groups or a relationship between variables; the two types of hypotheses are the null and alternative; z-tests and t-tests are used to test hypotheses comparing means and proportions; and ANOVA analyzes variance between and within groups. Examples of each statistical test are provided.