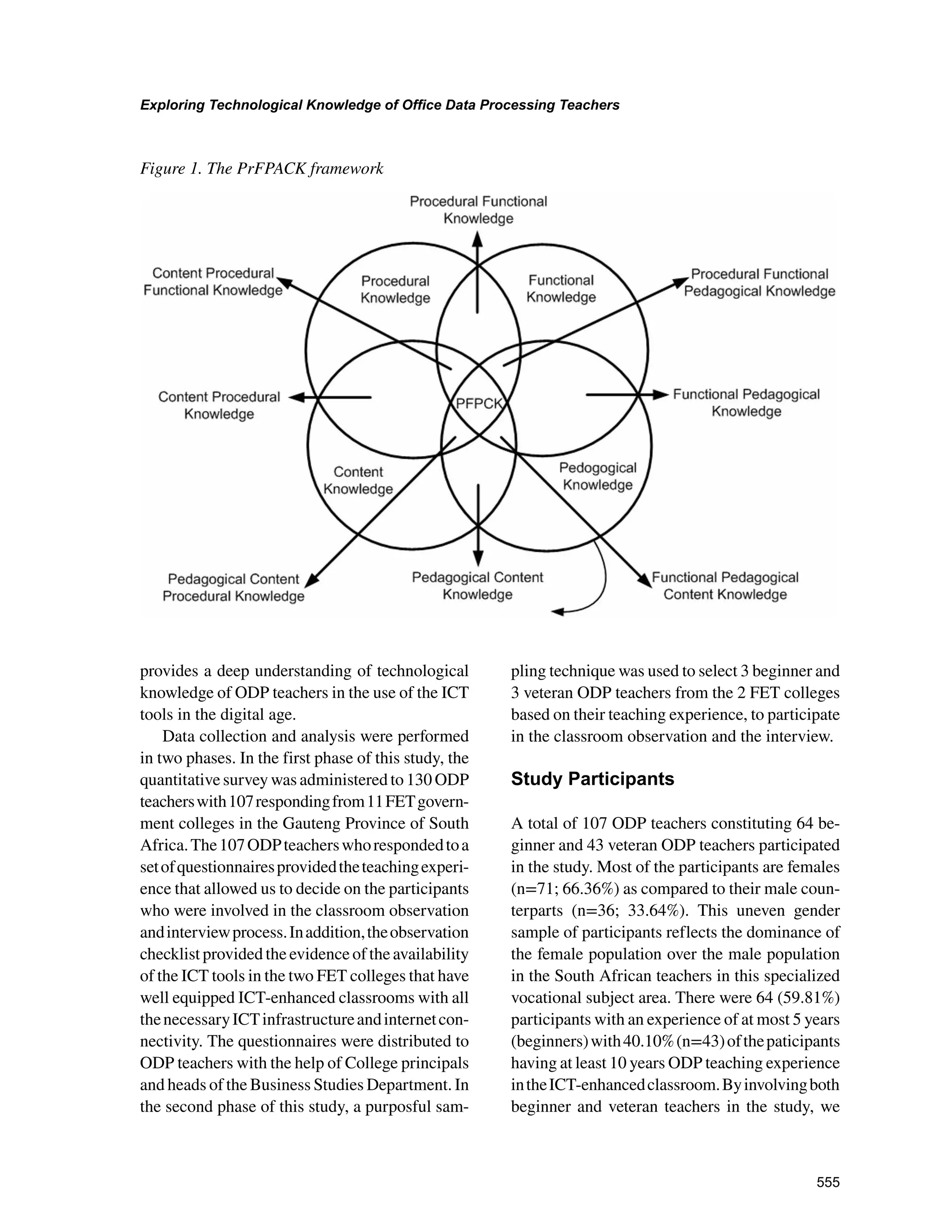

This study used factor analysis to explore the technological knowledge of beginner and veteran Office Data Processing (ODP) teachers at colleges in South Africa. The researchers developed a survey based on the Procedural Functional Pedagogical Content Knowledge (PrFPACK) framework, which is an extension of the Technological Pedagogical Content Knowledge (TPACK) framework. The survey collected data on teachers' knowledge of technologies like Microsoft Office, presentation software, and data projectors. The findings revealed that procedural functional content knowledge was the most important factor for ODP teachers' technological knowledge. The study provides insight into the technological knowledge required by teachers in technology-focused subjects.

![

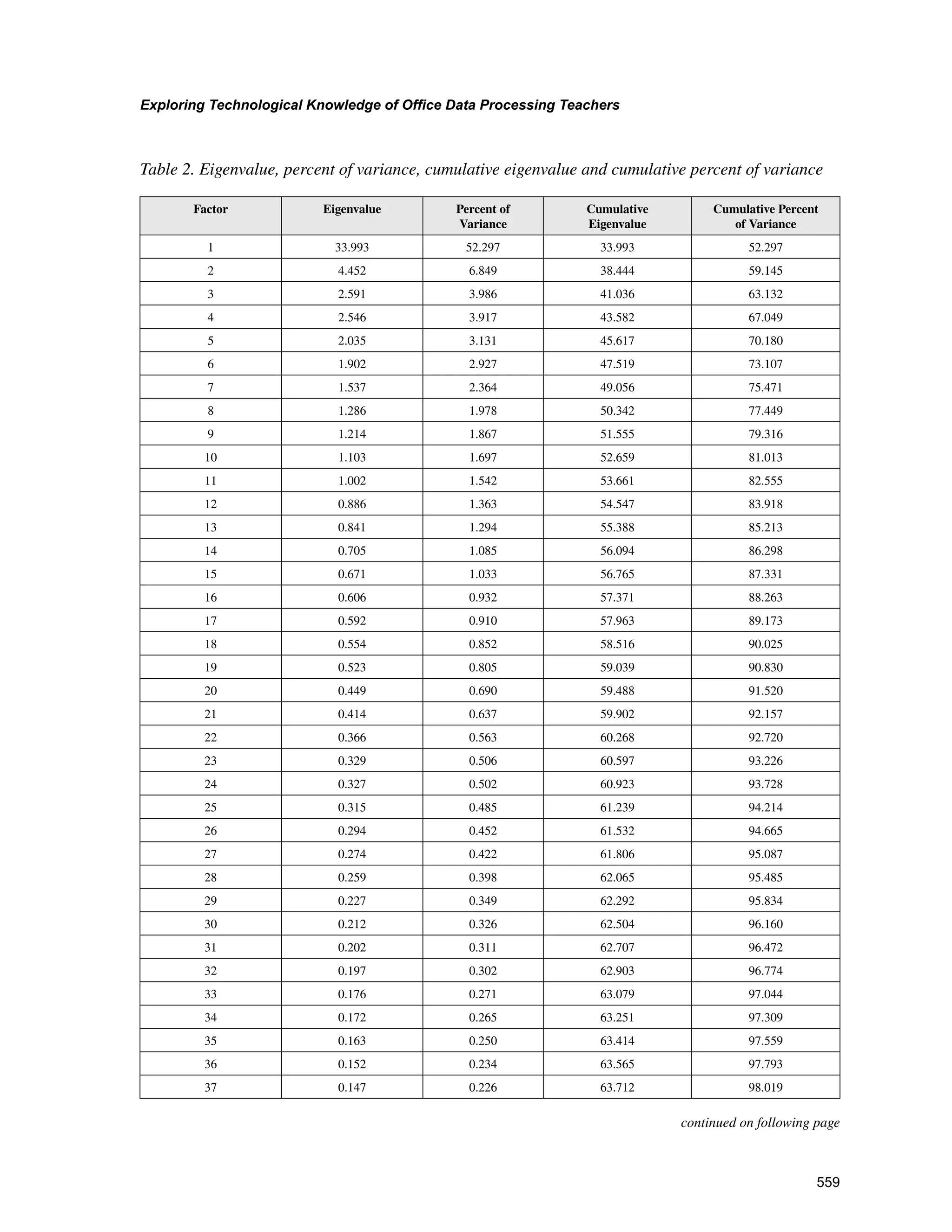

Exploring Technological Knowledge of Office Data Processing Teachers

INTRODUCTION

Helping beginner and veteran teachers develop technological knowledge and skills in how to use new technologies to teach is a critical component of teacher preparation in this digital age (National Council for Accreditation of Teacher Education [NCATE], 2010). Existing research indicates that a critical factor influencing beginner teachers’ adoption of technology is the quantity and quality of technological knowledge and experiences included in their teacher education program (Agyei & Voogt, 2011; Tondeur, Van Braak, Sang, Fisser & Ottenbreit-Leftwich, 2012). Today’s teachers should develop lessons that teach learners content knowledge and assist them to develop twenty-first century skills so that they can think effectively, actively solve problems, and be digitally literate. The preparation of teachers in the educational uses of technology in the current digital age appears to be a key component in almost every improvement plan for education and educational reform program (Davis & Falba, 2002). According to Gess-Newsome & Lederman (2003), while some issues in education take on the flavor of social and historical context, others, such as how to prepare beginner and experienced teachers to integrate technology for effective teaching and learning in the current digital age, remain almost ill-defined. Most importantly, research evidence shows that in spite of many efforts that researchers and educational institutions have invested over the years in preparing both beginner and experienced teachers in the educational uses of technology, pre-service (beginner in this study) and in-service (veteran in this study) teachers still lack appropriate skills and knowledge needed to be able to successfully use technology to teach (Uwameiye & Adegbenro, 2007). According to Meskill, Mossop, DiAngelo & Pasquate (2002), this is not necessarily the case, finding that new teachers appear to be affected by the existing culture of the teaching profession. While beginner teachers may be more conversant with technology in their daily lives than veteran teachers, they are not exposed to ideas about how to integrate technology in classroom settings.

Although some research reported that teachers’ experience in teaching did not influence their use of information communication technology (ICT) in teaching (Niederhauser & Stoddart, 2001), more research showed that teaching experience influenced the successful use of ICT in classrooms (Williams, 2003; Gorder, 2008; Cubukcuoglu, 2013; Ndibalema, 2014). In particular, Gorder (2008) reported that teacher experience is significantly correlated with the actual use of technology. Lau and Sim (2008) conducted a study on the extent of ICT adoption among 250 secondary school teachers in Malaysia. Their findings revealed that experienced teachers use computer technology in the classroom more frequently than beginner teachers. This result implies that teachers’ ICT knowledge and skills in relation to the successful implementation of ICT as a pedagogical tool (Pierson, 2001) is complex and not a clear predictor of ICT integration in teaching and learning. In addition, Kumar & Kumar (2003) argue that lack of adequate training and experience is one of the main reasons why teachers do not use technology in their teaching.

The Further Education and Training (FET) colleges in South Africa currently have greater access to educational technologies than has been the case in the past. In addition, much investment has been made in the acquisition of ICT infrastructure in these colleges where vocational and technology-based subjects are being offered for the purpose of artisan skills development. However, very little is known about what forms of technological knowledge and skills are needed by Office Data Processing (ODP) teachers for effective teaching and learning. It is in this sense that in this study we explored the technological knowledge of ODP teachers at FET colleges in South Africa. We explored

the technological knowledge of ODP teachers](https://image.slidesharecdn.com/9fa76cad-d82e-4899-af61-921f328b50bc-170203105159/75/Exploring-Technological-Knowledge-of-Office-Data-Processing-Teachers_-Using-Factor-Analytic-Methods-4-2048.jpg)

![

in the specific domains such as Microsoft Word program, spreadsheet application, audio typing, PowerPoint presentation, Interactive Teaching Box (ITB), Web technology, and data projector application. The teaching of these applications in FET colleges is in line with the new South Africa’s National Certificate Vocational (NCV) curriculum standard and the ICT White Paper Policy of the Department of Basic Education (2007). Moreover, ODP requires a particular procedure and a set of tasks that should be executed within a given period of time.

This study promises to contribute towards a better understanding of the framework that can be used to unpack the technological knowledge of teachers. A framework for understanding and unpacking the knowledge of teachers who teach with the help of technology needs to have a technology dimension. The overarching research questions that we pursued in this study are enunciated as follows:

(a) What is the nature of the technological knowledge of beginner and veteran ODP teachers in the use of ICT as a pedagogical tool?

(b) Are there any significant differences in technological knowledge between beginner and veteran ODP teachers with respect to their teaching experience?

We proceed by giving the background of the study in the next section to examine the technological pedagogical content knowledge (TPACK) framework that undergirds this study, explain technological knowledge, and examine the Procedural Functional Pedagogical Content Knowledge (PrFPACK) framework. These literature review sections are followed by the discussion of the chosen methodology, and the presentation and discussion of findings.

BACKGROUND

The knowledge related to the effective use of educational technologies has become widely recognized as an important aspect of a knowledge base of educators in the digital age (ISTE [Institute for Science and Technology Education], 2008; NCATE, 2010; Partnership for 21st Century Skills, 2003). The concept of knowledge as applied to Science, Engineering and Technology (SET) covers declarative knowledge (know that), functional knowledge (know-how) and procedural knowledge (skills) (Ferris, 2009; Nissen, 2006). Most of the literature discussing different types of knowledge of teaching and learning dwells extensively on cognitive and affective domains of knowledge (Shulman, 1986; Biggs, 1999). These types of knowledge are often associated with surface learning concerning the representation of facts rather than assimilation of the significance of the facts into a construct that guides an appropriate action (procedural knowledge). The essential types of knowledge that can enhance effective teaching and learning in an ICT-enhanced classroom have been identified in the literature to include pedagogical, declarative, functional and procedural knowledge as applied to SET (Nissen, 2006).

Pedagogical knowledge refers to the knowledge of methods and strategies employed by teachers in the process of teaching and learning. This knowledge includes the fundamental knowledge of classroom management, sequential lesson preparation, student motivation, assessment and evaluation. Declarative knowledge is defined by Ryle (1958) as “know that”, which is a form of knowledge that is associated with representations of facts rather than assimilation of facts into constructs that guide effective actions. Some researchers also refer to this concept as content knowledge (Shulman, 1986; Shavelson, Ruiz-Primo, Li & Ayala, 2003).

Functional knowledge is defined by Ryle

(1958) as “know-how”, or having the ability to](https://image.slidesharecdn.com/9fa76cad-d82e-4899-af61-921f328b50bc-170203105159/75/Exploring-Technological-Knowledge-of-Office-Data-Processing-Teachers_-Using-Factor-Analytic-Methods-5-2048.jpg)

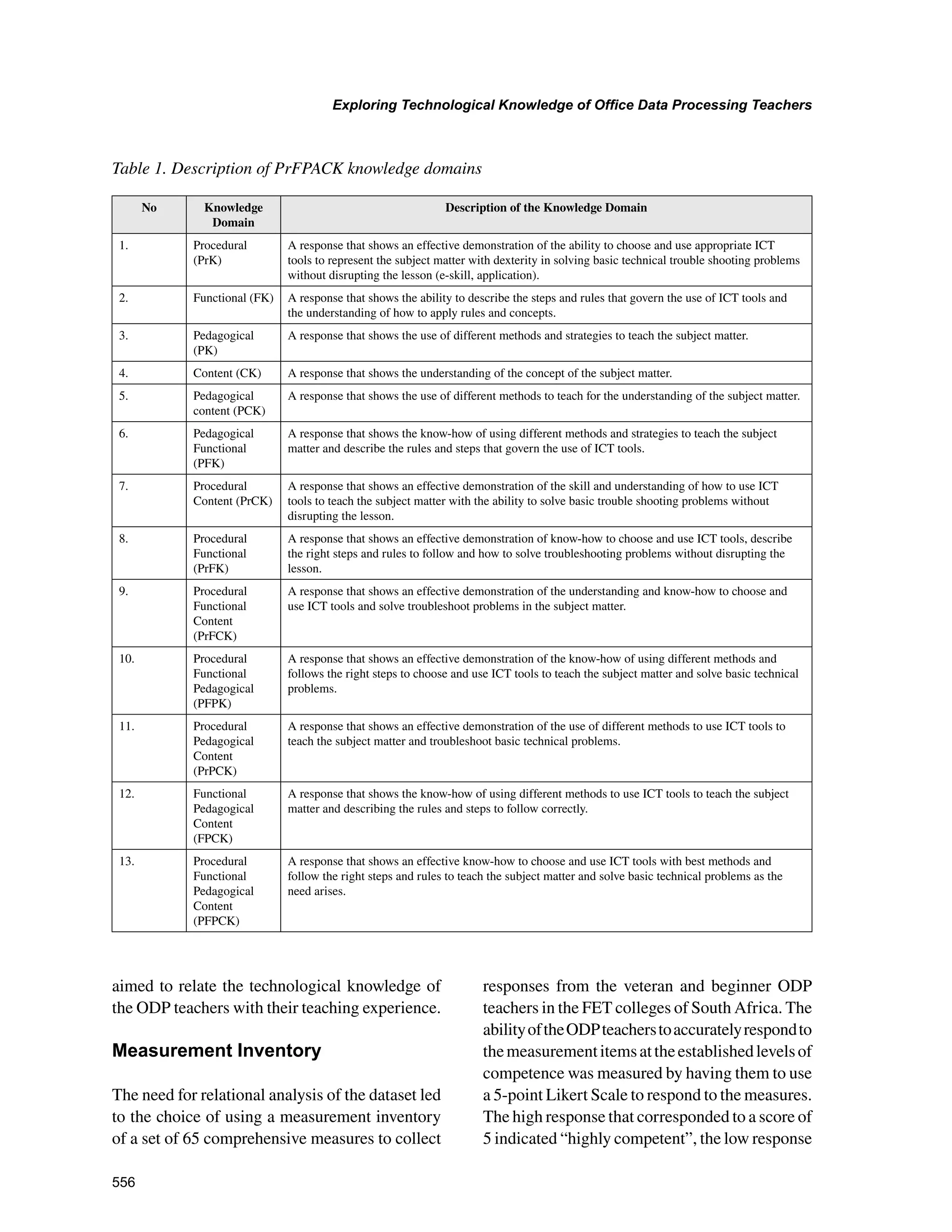

![

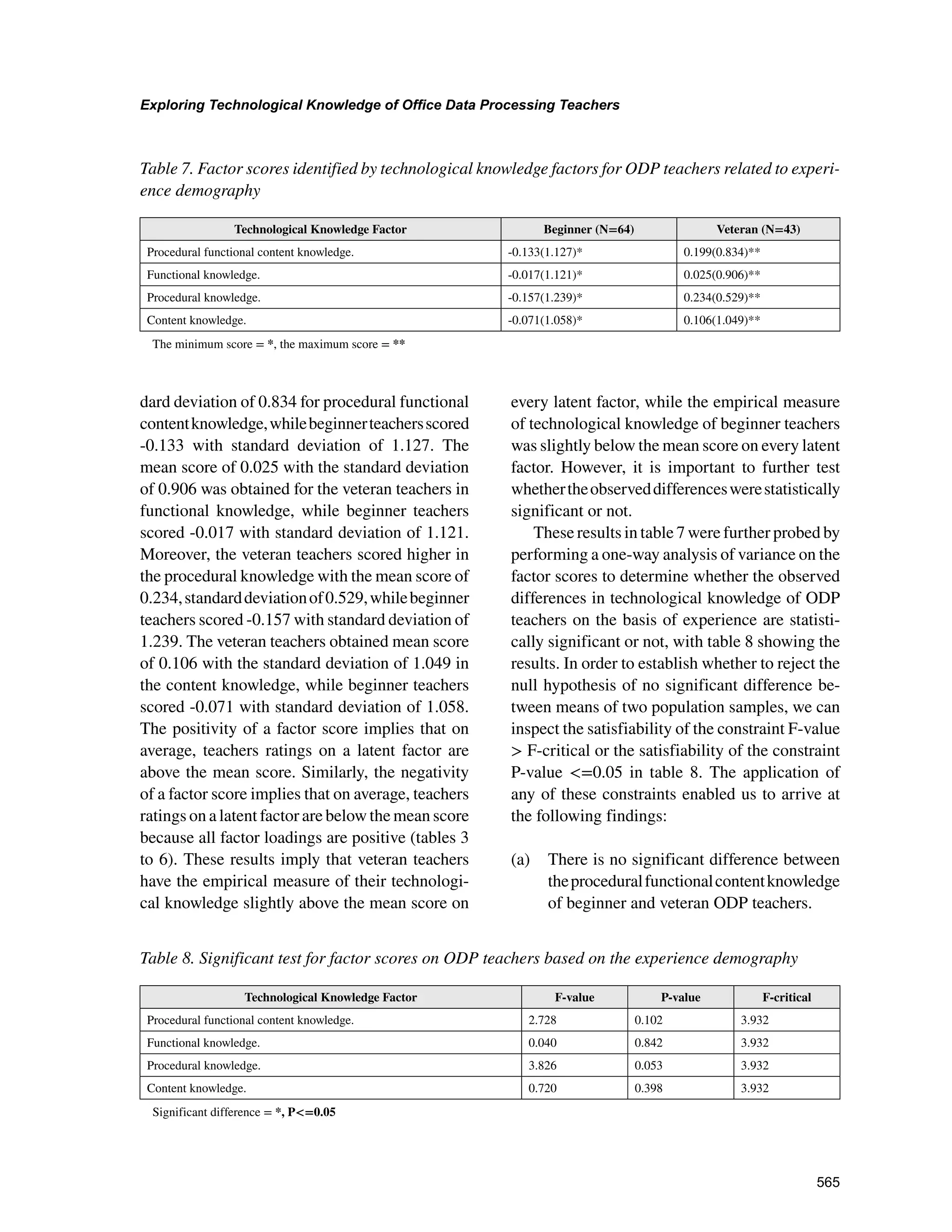

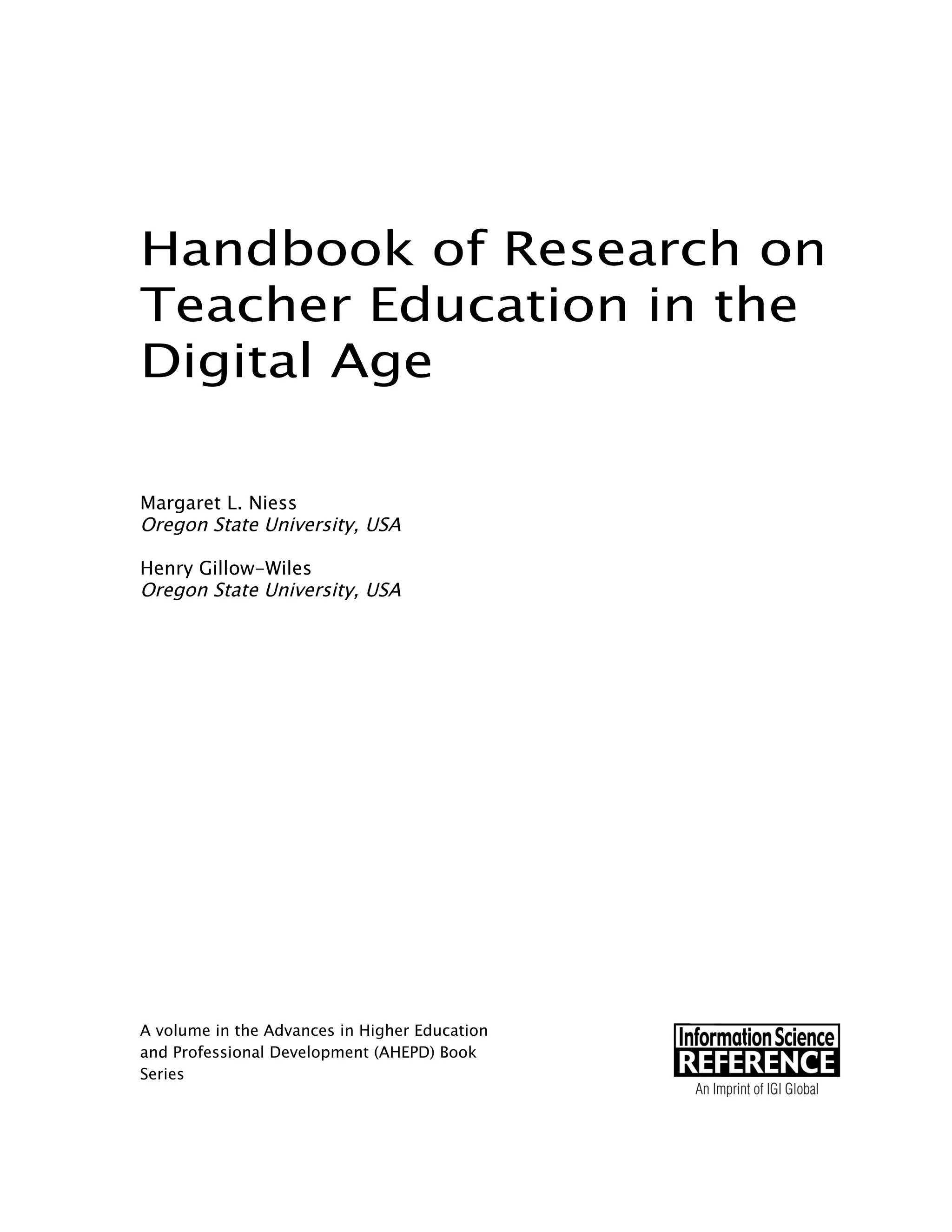

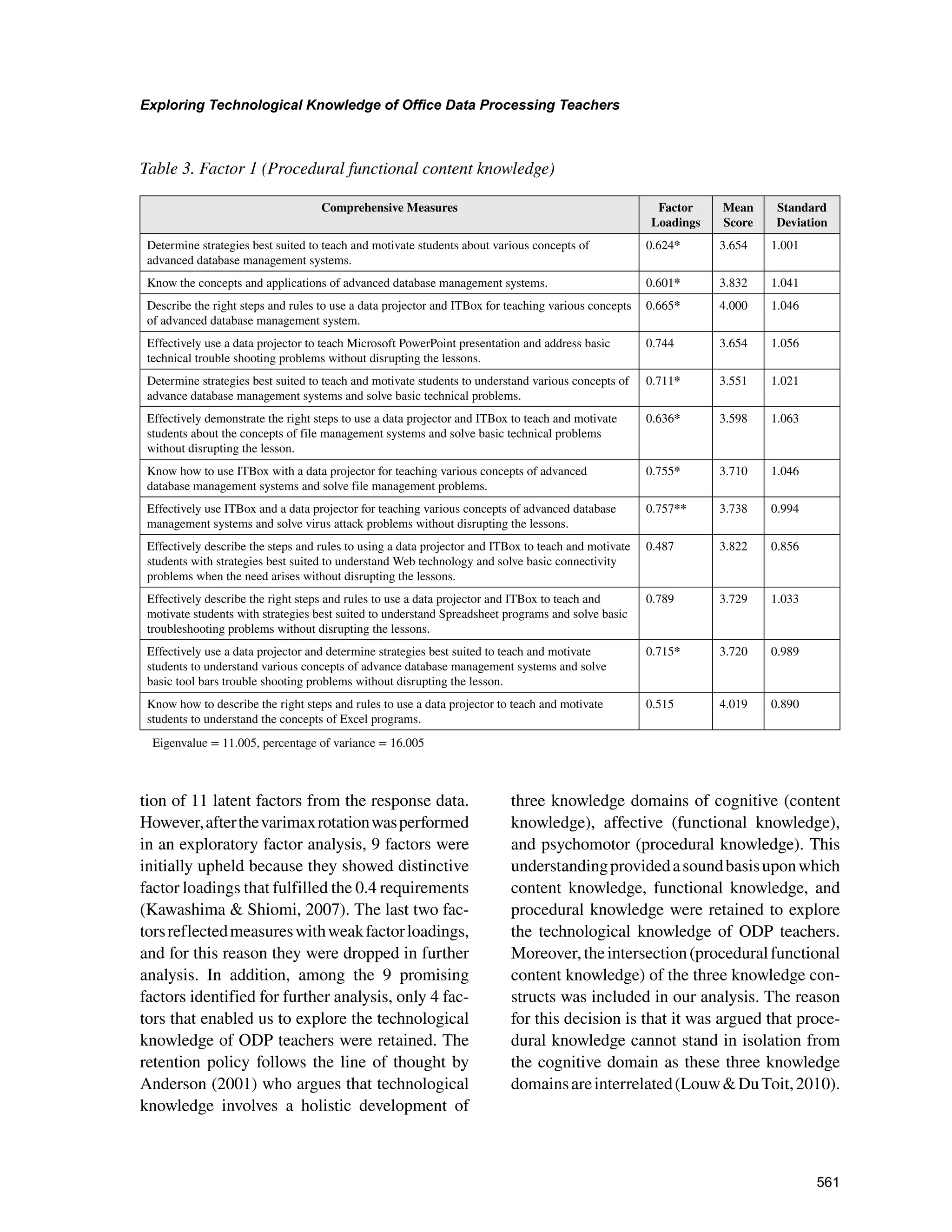

The surrogate method was used to name the 4 latent factors for technological knowledge exploration as discovered from the exploratory factor analysis. According to this surrogate method, the name of a latent factor corresponds to the name of the single comprehensive measure that loaded most highly amongst all semantically related comprehensive measures of the factor. The loadings of semantically related comprehensive measures are marked with an asterisk (*) and the measure that loaded most highly amongst the semantically related comprehensive measures is marked with a double asterisk (**). The terms “weak”, “moderate” and “strong” refer, respectively, to factor loading values in the intervals [0.3, 0.5], [0.5, 0.75] and [0.75, 1.0] (Liu et al., 2003).
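The naming rule and the loading bands described here can be sketched in a few lines of Python. This is only an illustration, not the authors' procedure: the loadings and asterisk markings are taken from Table 4 (Factor 2), while the short labels, the `Measure` class, and the function names are hypothetical. Because the quoted intervals share their endpoints, the sketch assumes that a boundary value (0.5 or 0.75) falls into the higher band.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Measure:
    label: str      # hypothetical short label, not the survey wording
    loading: float  # factor loading as reported in Table 4
    related: bool   # True if the loading is marked * or ** in Table 4


def strength(loading: float) -> str:
    """Map a loading to the weak/moderate/strong bands.

    Assumption: values on a shared boundary go to the higher band.
    """
    size = abs(loading)
    if size >= 0.75:
        return "strong"
    if size >= 0.5:
        return "moderate"
    if size >= 0.3:
        return "weak"
    return "below threshold"


def surrogate(measures: Sequence[Measure]) -> Measure:
    """Return the semantically related measure with the highest loading."""
    related = [m for m in measures if m.related]
    return max(related, key=lambda m: abs(m.loading))


# Factor 2 loadings in Table 4 order; labels are abbreviations for illustration.
factor2 = [
    Measure("spreadsheets: motivation strategies", 0.724, True),
    Measure("spreadsheets: concepts and application", 0.636, True),
    Measure("projector: formatting worksheets", 0.752, True),
    Measure("projector and ITBox: worksheet printing", 0.511, True),
    Measure("file management: teaching strategies", 0.552, True),
    Measure("ITBox and projector: mail merge", 0.629, True),
    Measure("projector: keyboard customization", 0.629, True),
    Measure("ITBox with projector: keyboard customization", 0.619, True),
    Measure("ITBox with projector: worksheet formatting", 0.743, True),
    Measure("projector: keyboard customization and toolbar", 0.634, False),
    Measure("projector: word processing concepts", 0.675, False),
    Measure("projector and ITBox: spreadsheet programs", 0.709, True),
]

chosen = surrogate(factor2)
print(chosen.label, chosen.loading, strength(chosen.loading))
```

Under these assumptions the selected measure is the one marked with a double asterisk in Table 4 (loading 0.752), which falls in the "strong" band.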

The most important factor, accounting for 16.00% of variance, is factor 1 presented in Table 3. There were 12 measures in this factor with their loadings ranging from 0.487 to 0.757. The mean scores of the measures of this factor ranged from 3.551 to 4.019, meaning the ODP teachers generally responded well above the middle point of 3. The majority of the measures semantically referred to the use of technology to teach database management systems. In particular, the measure with the highest loading (0.757) that belongs to the knowledge category of procedural functional

Table 4. Factor 2 (Functional knowledge)

| Comprehensive Measures | Factor Loadings | Mean Score | Standard Deviation |
| --- | --- | --- | --- |
| Determine strategies best suited to motivate students to understand various concepts of spreadsheet programs. | 0.724* | 4.112 | 0.805 |
| Know various concepts and application of spreadsheet programs, including Microsoft Excel. | 0.636* | 4.224 | 0.793 |
| Know how to describe the right steps and rules to use a data projector to teach the concepts of formatting worksheets. | 0.752** | 3.972 | 0.956 |
| Effectively use a data projector and Interactive Teaching Box (ITBox) to teach Worksheets Printing and solve all basic printing problems when the need arises. | 0.511* | 3.850 | 0.909 |
| Determine strategies best suited to teach students to understand various concepts of file management programs. | 0.552* | 3.841 | 0.943 |
| Effectively demonstrate the right steps to use ITBox and a data projector to motivate students to understand the concepts of Mail merge and solve basic troubleshooting problems when the need arises. | 0.629* | 3.963 | 0.951 |
| Know how to effectively describe the right steps and procedures to use a data projector to teach various concepts of Keyboard customization and solve basic technical problems when the need arises. | 0.629* | 3.841 | 1.029 |
| Know how to describe the right steps to use ITBox with a data projector to teach various concepts of Keyboard customization. | 0.619* | 3.841 | 1.083 |
| Know how to effectively use ITBox with a data projector to teach and demonstrate various concepts of Worksheet formatting and solve basic troubleshooting problems when the need arises without disrupting the lessons. | 0.743* | 3.841 | 0.992 |
| Effectively use a data projector and determine strategies best suited to motivate students to understand various concepts of Keyboard customization and Toolbar and solve basic troubleshooting problems when the need arises without disrupting the lessons. | 0.634 | 4.009 | 0.906 |
| Know how to describe the right steps and rules to use a data projector to teach and motivate students with strategies to understand Microsoft word processing concepts and solve tool bar access troubleshooting problems without disrupting the lesson. | 0.675 | 3.925 | 0.929 |
| Effectively describe the right steps and strategies best suited to use a data projector and ITBox to teach and motivate students to understand various concepts of spreadsheet programs and solve troubleshooting problems without disrupting the lesson. | 0.709* | 3.981 | 0.879 |

Eigenvalue = 8.433, percentage of variance = 12.974](https://image.slidesharecdn.com/9fa76cad-d82e-4899-af61-921f328b50bc-170203105159/75/Exploring-Technological-Knowledge-of-Office-Data-Processing-Teachers_-Using-Factor-Analytic-Methods-17-2048.jpg)
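The eigenvalue and the percentage of variance reported under Table 4 are linked by a simple ratio when the analysis is run on standardized items, because the total variance then equals the number of items. The sketch below shows that arithmetic. It rests on assumptions not stated in this excerpt: that the extraction used a correlation matrix, and that the survey had 65 items, a count inferred here from the two reported figures rather than taken from the text. The function names are hypothetical.

```python
def percent_variance(eigenvalue: float, n_items: int) -> float:
    """Percentage of total variance explained by one factor.

    Assumes standardized items, so total variance equals n_items.
    """
    return 100.0 * eigenvalue / n_items


def implied_item_count(eigenvalue: float, pct_variance: float) -> float:
    """Number of items implied by an eigenvalue and its variance share."""
    return 100.0 * eigenvalue / pct_variance


# Values reported under Table 4 for Factor 2.
EIGENVALUE = 8.433
PCT_VARIANCE = 12.974

n_items = round(implied_item_count(EIGENVALUE, PCT_VARIANCE))  # inferred, not stated
print(n_items)
print(round(percent_variance(EIGENVALUE, n_items), 3))
```

With the reported values the implied count rounds to 65 items, and feeding that count back in reproduces the reported 12.974%, so the two figures are at least mutually consistent under these assumptions.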