1. The document discusses various AI techniques and problems. It defines an AI technique as a method that exploits knowledge represented in a way that captures generalizations, can be understood by people, can be easily modified, and can be used in many situations.

2. It provides examples of common AI problems like tic-tac-toe, the water jug problem, various puzzles, and language understanding.

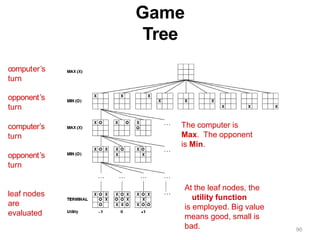

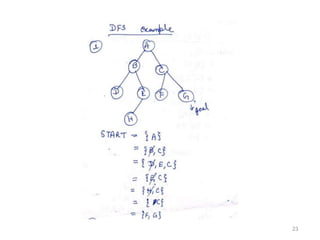

3. It then discusses problem solving and representation, defining key concepts such as states, the state space, operators, and initial and goal states. It outlines the general problem-solving steps and the state space representation.
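To make the state space vocabulary concrete, here is a minimal sketch that casts the water jug problem as states, operators, an initial state, and a goal test, then searches it breadth-first. The jug capacities (4 and 3) and the target amount (2 in the first jug) are assumptions taken from the classic textbook formulation, not values stated in this summary, and the names `successors`, `is_goal`, and `solve` are illustrative only.

```python
from collections import deque

# Assumed problem parameters (classic formulation, not given in the summary).
CAP_A, CAP_B, TARGET = 4, 3, 2

def successors(state):
    """Apply every operator to a state (a, b) and return the resulting states."""
    a, b = state
    pour_ab = min(a, CAP_B - b)  # amount that can move from jug A to jug B
    pour_ba = min(b, CAP_A - a)  # amount that can move from jug B to jug A
    candidates = [
        (CAP_A, b),                  # fill A
        (a, CAP_B),                  # fill B
        (0, b),                      # empty A
        (a, 0),                      # empty B
        (a - pour_ab, b + pour_ab),  # pour A into B
        (a + pour_ba, b - pour_ba),  # pour B into A
    ]
    return [s for s in candidates if s != state]

def is_goal(state):
    """Goal test: jug A holds exactly TARGET units."""
    return state[0] == TARGET

def solve(initial=(0, 0)):
    """Breadth-first search from the initial state; returns a shortest path."""
    parent = {initial: None}  # also serves as the visited set
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if is_goal(state):
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None  # goal unreachable

path = solve()
print(path)
```

Under these assumed capacities the shortest solution takes six moves, so the returned path contains seven states, starting at `(0, 0)`.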

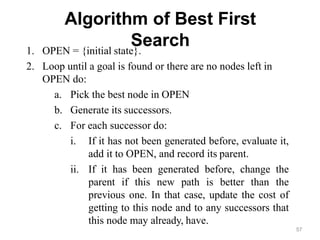

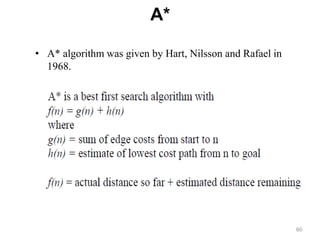

![A* algorithm](https://image.slidesharecdn.com/unit2-230108151158-963fdd52/85/unit-2-pptx-63-320.jpg)

A* algorithm:

1. Initialize: set OPEN = {s}, CLOSED = { }, g(s) = 0, f(s) = h(s).
2. Fail: if OPEN = { }, terminate with failure.
3. Select: select the minimum-cost state, n, from OPEN. Save n in CLOSED.
4. Terminate: if n ∈ G, terminate with success and return f(n).
5. Expand: for each successor, m, of n:
   - If m ∉ [OPEN ∪ CLOSED]: set g(m) = g(n) + C(n, m), set f(m) = g(m) + h(m), and insert m in OPEN.
   - If m ∈ [OPEN ∪ CLOSED]: set g(m) = min{g(m), g(n) + C(n, m)} and set f(m) = g(m) + h(m).
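The A* steps on the slide above can be sketched in Python roughly as follows. This is a minimal illustration, not the deck's own code: the function name `a_star`, the `successors` callback, and the small example graph and heuristic table are all hypothetical. One behaviour is an assumption added here, since the slide text ends before saying what happens next: when a cheaper path is found to a state already in CLOSED, the sketch moves that state back to OPEN.

```python
def a_star(start, goals, successors, h):
    """Return the cost f(n) of the first goal state selected, or None on failure.

    successors(n) yields (m, cost) pairs; h(n) is the heuristic estimate.
    """
    # Step 1, Initialize: OPEN = {s}, CLOSED = {}, g(s) = 0, f(s) = h(s).
    open_set, closed = {start}, set()
    g = {start: 0}
    f = {start: h(start)}

    # Step 2, Fail: stop when OPEN is empty.
    while open_set:
        # Step 3, Select: take the state with minimum f from OPEN, save it in CLOSED.
        n = min(open_set, key=f.get)
        open_set.remove(n)
        closed.add(n)

        # Step 4, Terminate: success if n is a goal state.
        if n in goals:
            return f[n]

        # Step 5, Expand: update every successor m of n.
        for m, cost in successors(n):
            new_g = g[n] + cost
            if m not in open_set and m not in closed:
                g[m] = new_g
                f[m] = new_g + h(m)
                open_set.add(m)
            elif new_g < g[m]:
                # g(m) = min{g(m), g(n) + C(n, m)}
                g[m] = new_g
                f[m] = new_g + h(m)
                if m in closed:
                    # Assumption (not on the slide): re-open an improved state.
                    closed.remove(m)
                    open_set.add(m)
    return None

# Hypothetical example graph: node -> list of (successor, edge cost).
graph = {
    "S": [("A", 1), ("B", 4)],
    "A": [("B", 2), ("G", 6)],
    "B": [("G", 3)],
    "G": [],
}
# Hypothetical admissible heuristic values.
heuristic = {"S": 5, "A": 4, "B": 3, "G": 0}

best_cost = a_star("S", {"G"}, lambda n: graph[n], heuristic.get)
print(best_cost)  # 6, via S -> A -> B -> G
```

Selecting the minimum with `min` over a set keeps the code close to the slide's wording; a priority queue such as `heapq` would be the usual choice for larger graphs.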