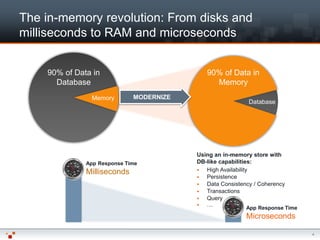

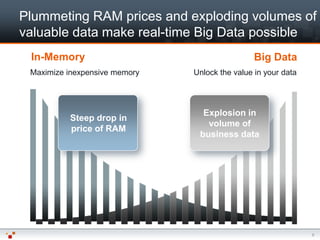

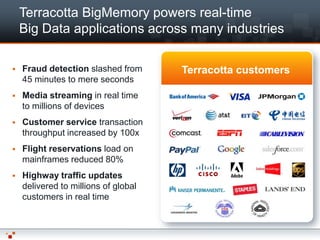

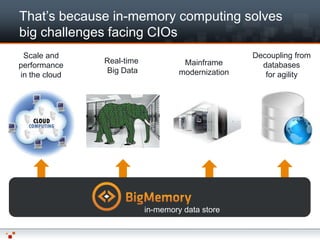

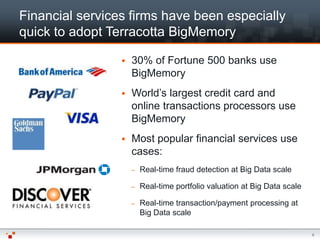

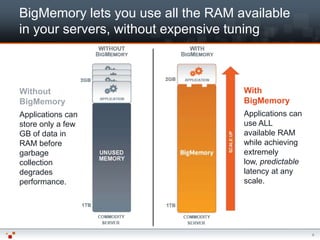

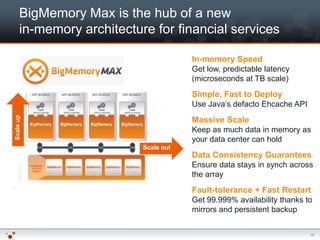

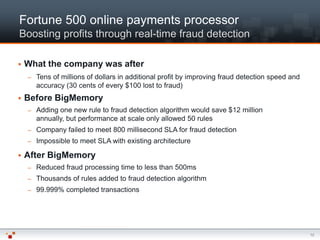

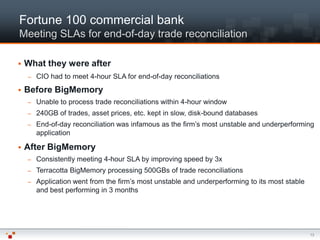

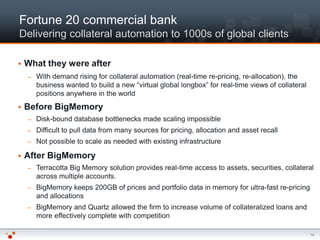

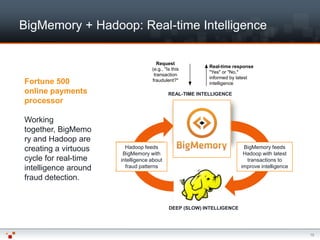

The document discusses the advantages of Terracotta's BigMemory in addressing big data challenges in financial services, focusing on real-time data processing for fraud detection, transaction processing, and risk management. It highlights how BigMemory enhances application speed, scalability, and reliability, enabling firms to meet stringent performance SLAs. Several case studies illustrate substantial improvements in operational efficiency and profitability achieved by companies using BigMemory.