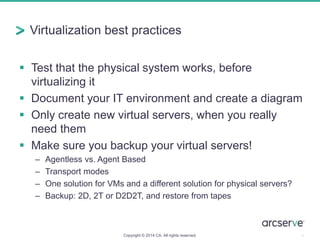

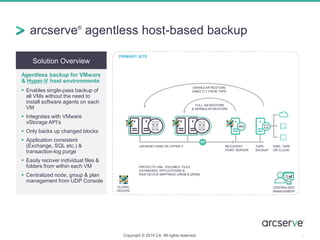

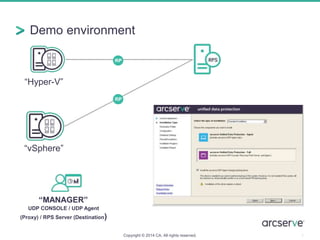

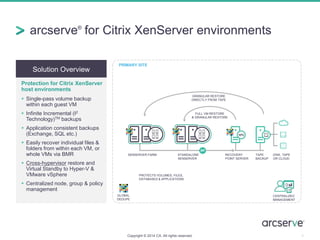

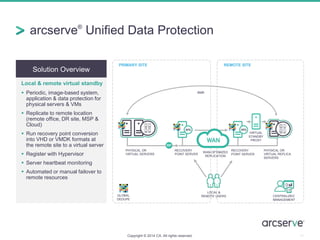

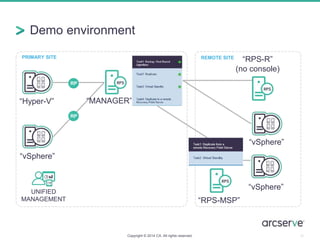

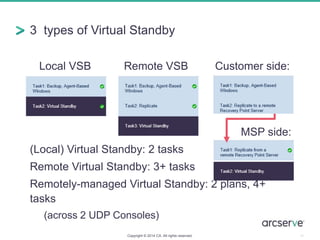

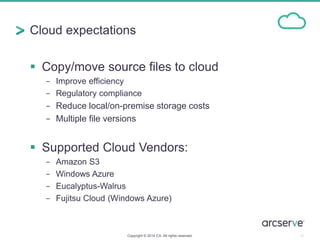

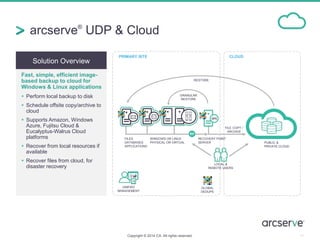

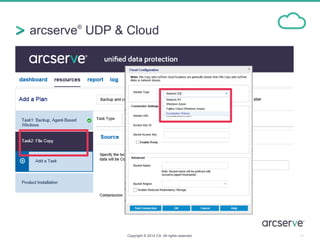

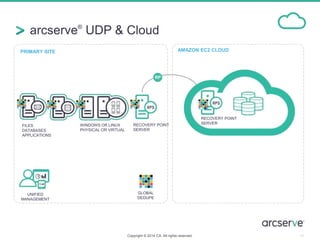

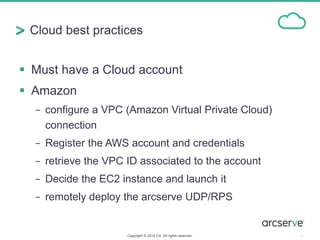

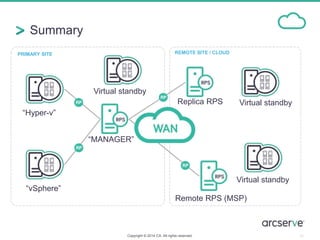

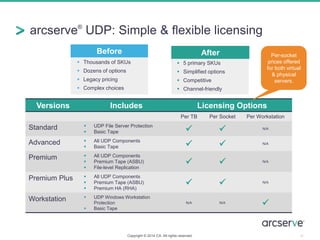

This document summarizes an upcoming presentation and live demo covering virtualization and cloud computing solutions, along with licensing and support options, for CA Technologies' Unified Data Protection product. The presentation will cover:

- virtualization best practices
- agentless backup for VMware and Hyper-V
- virtual standby capabilities
- backup to public and private clouds
- simplified licensing and support offerings

A demo environment will show backup, replication, and recovery of virtual and physical servers across on-premises and cloud locations.