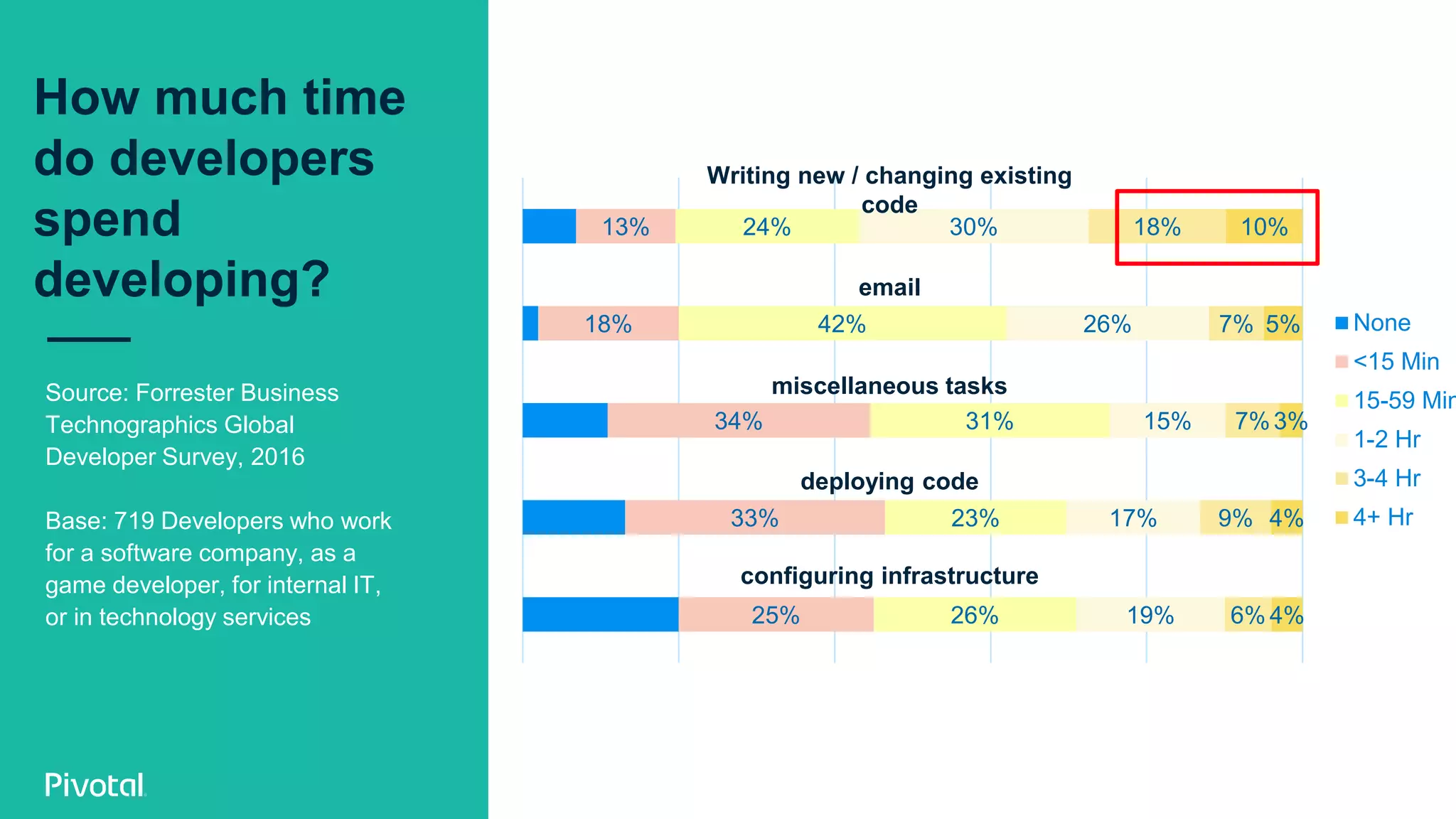

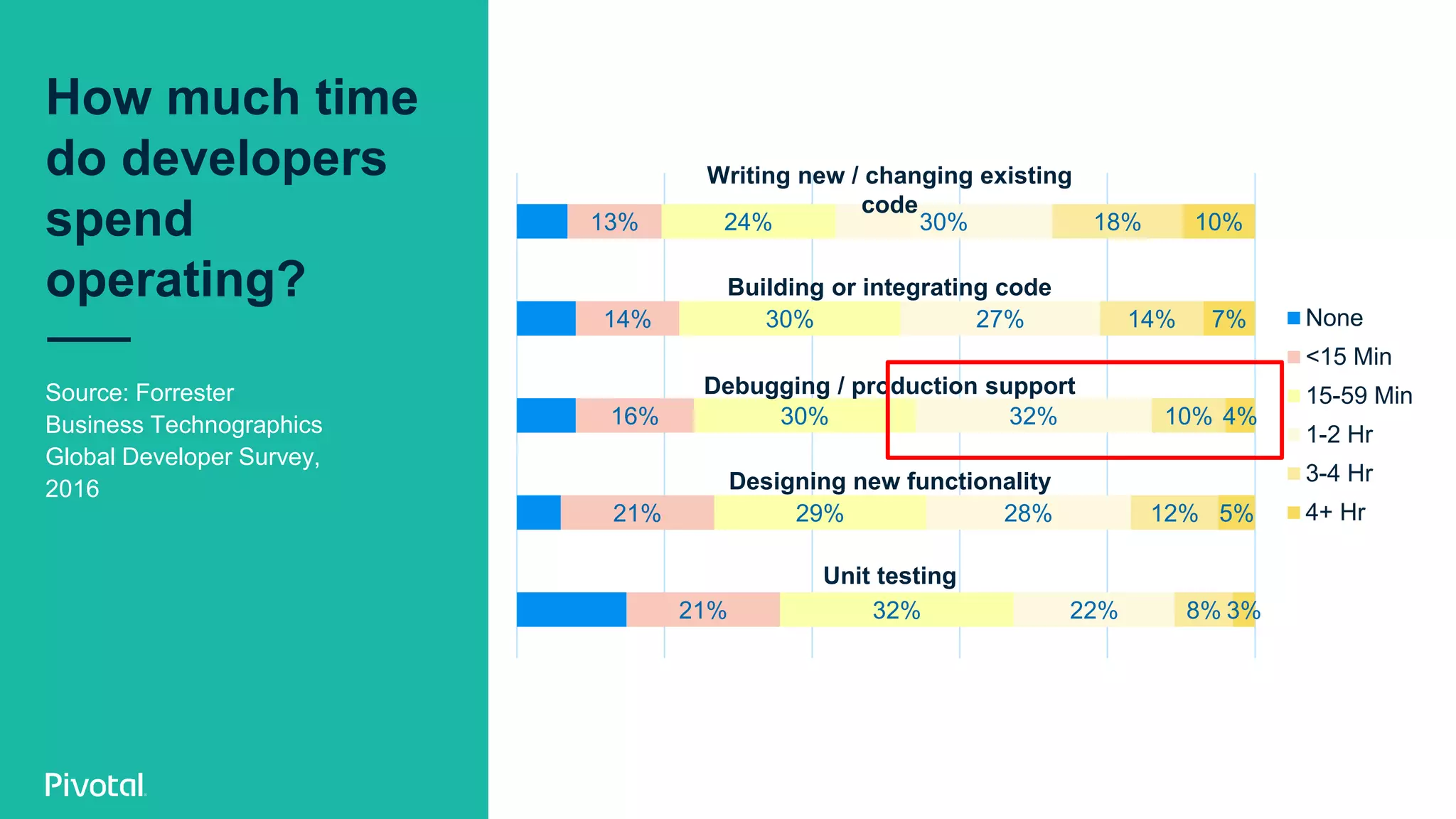

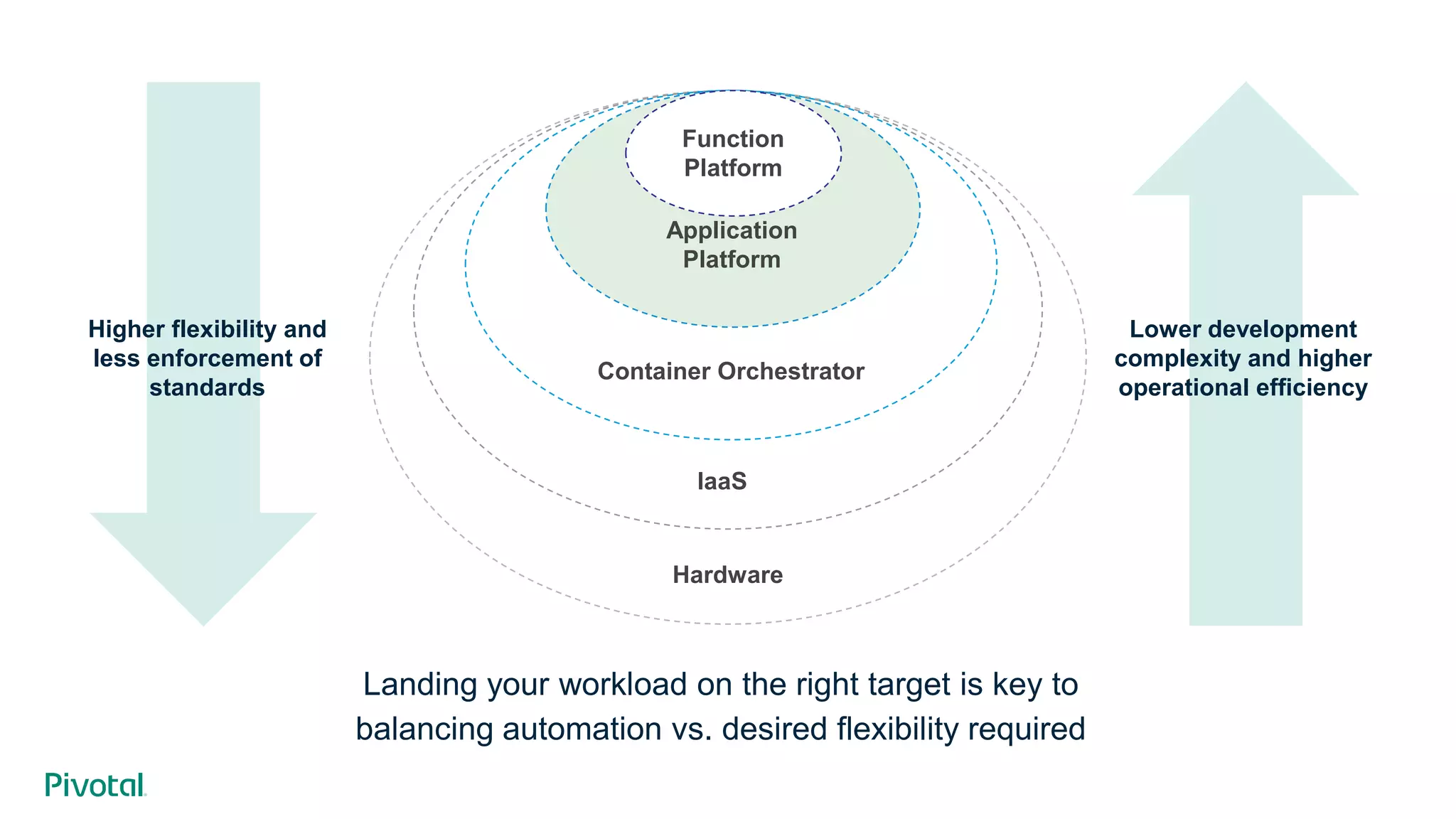

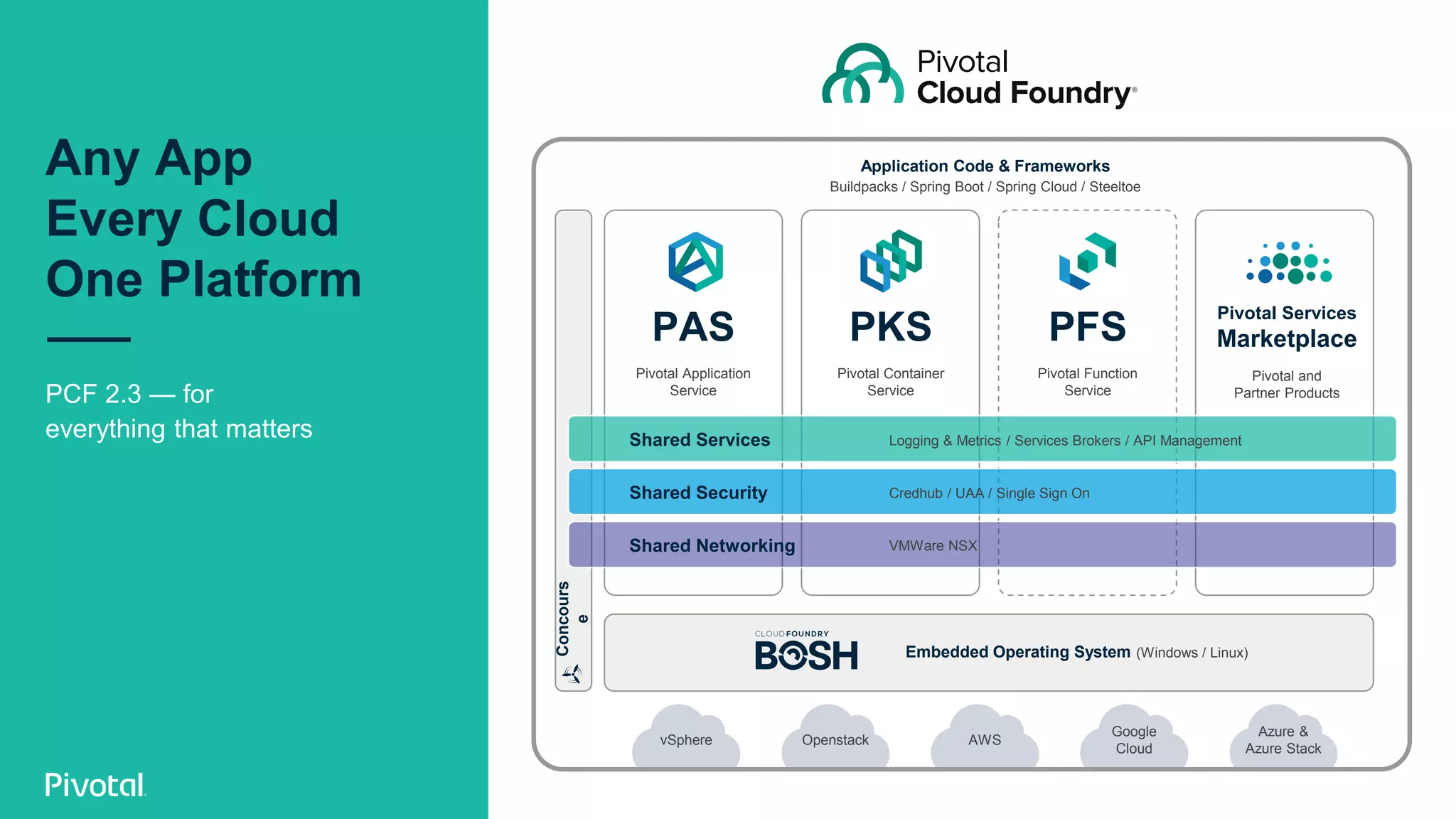

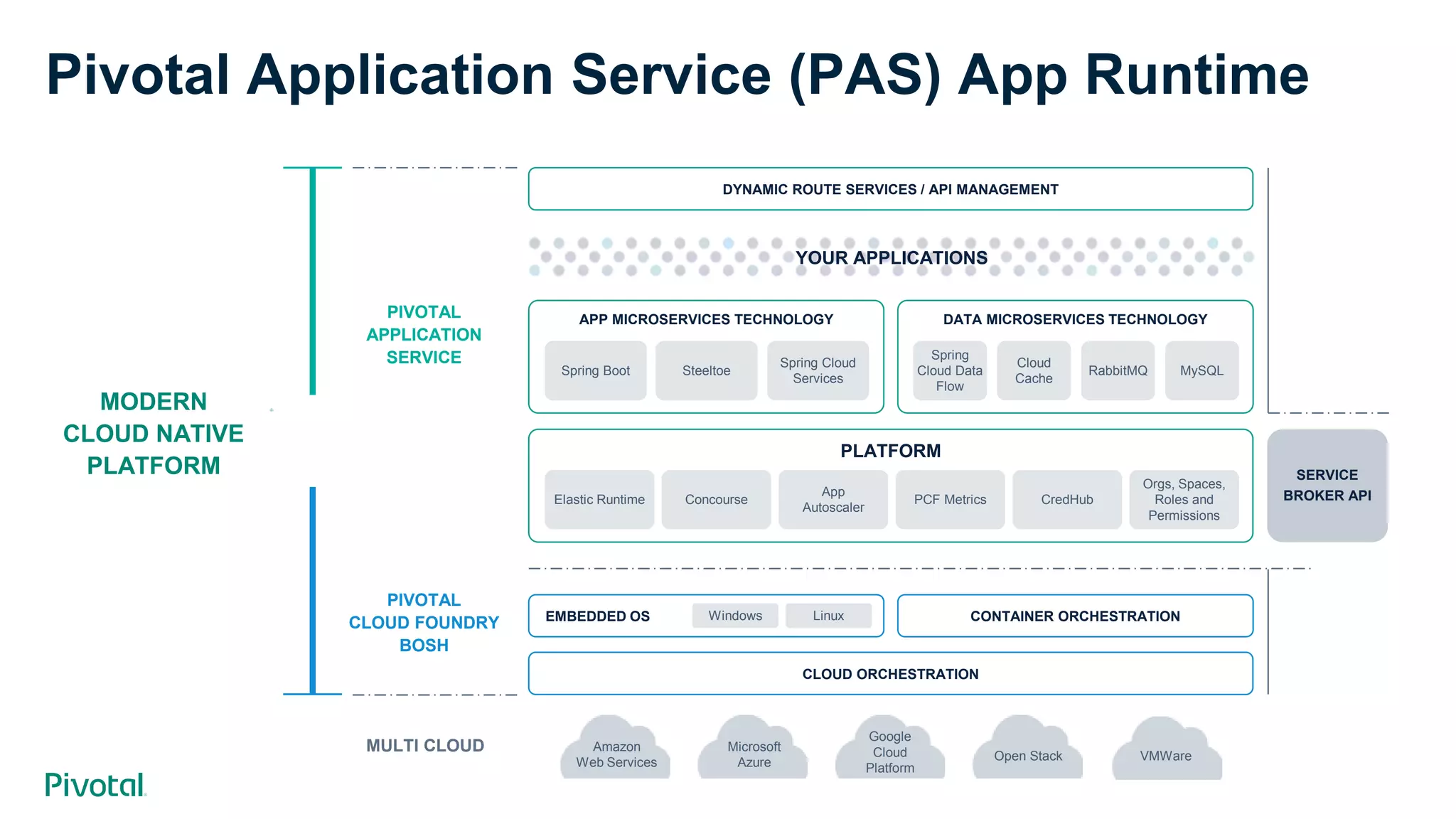

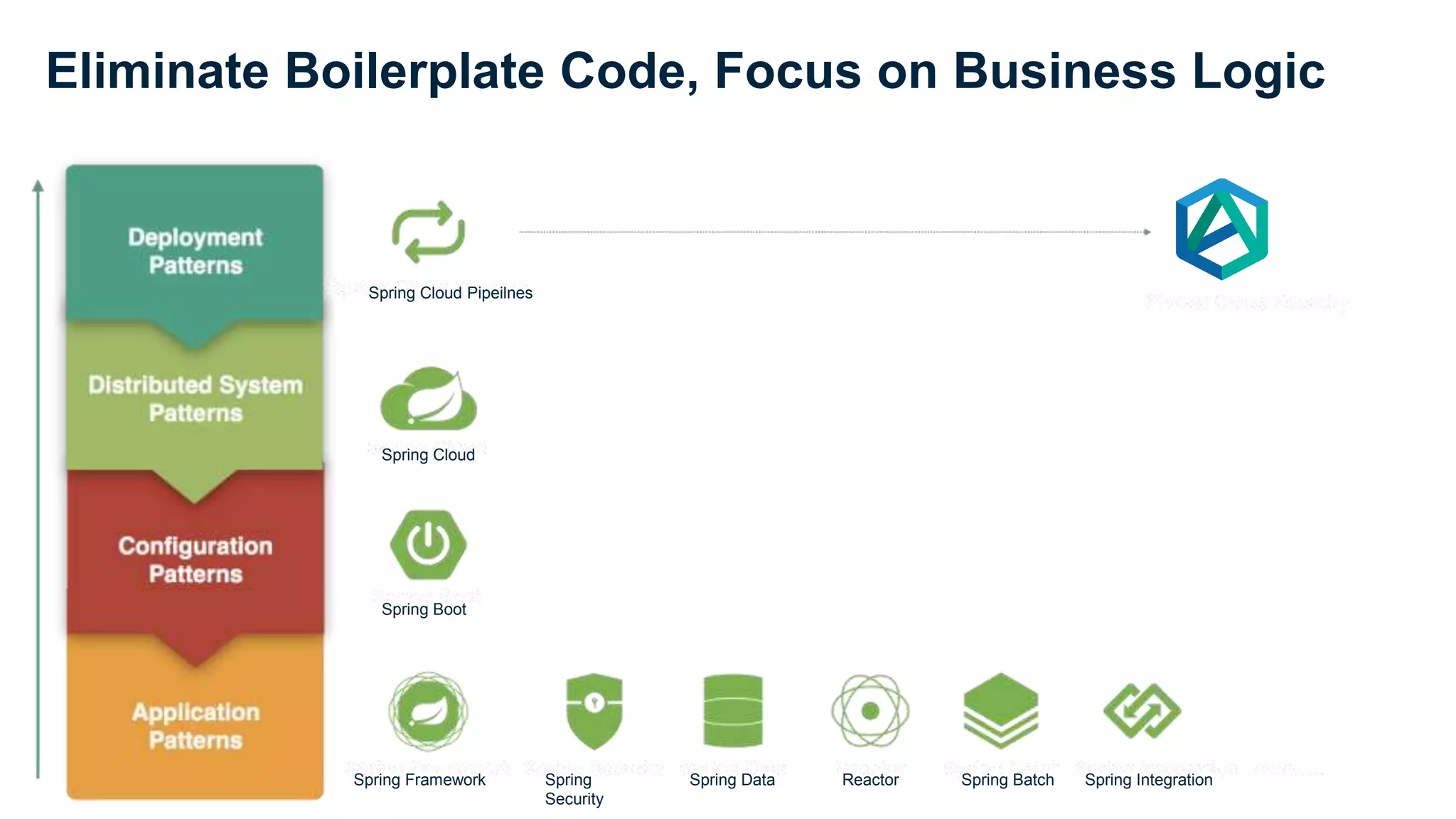

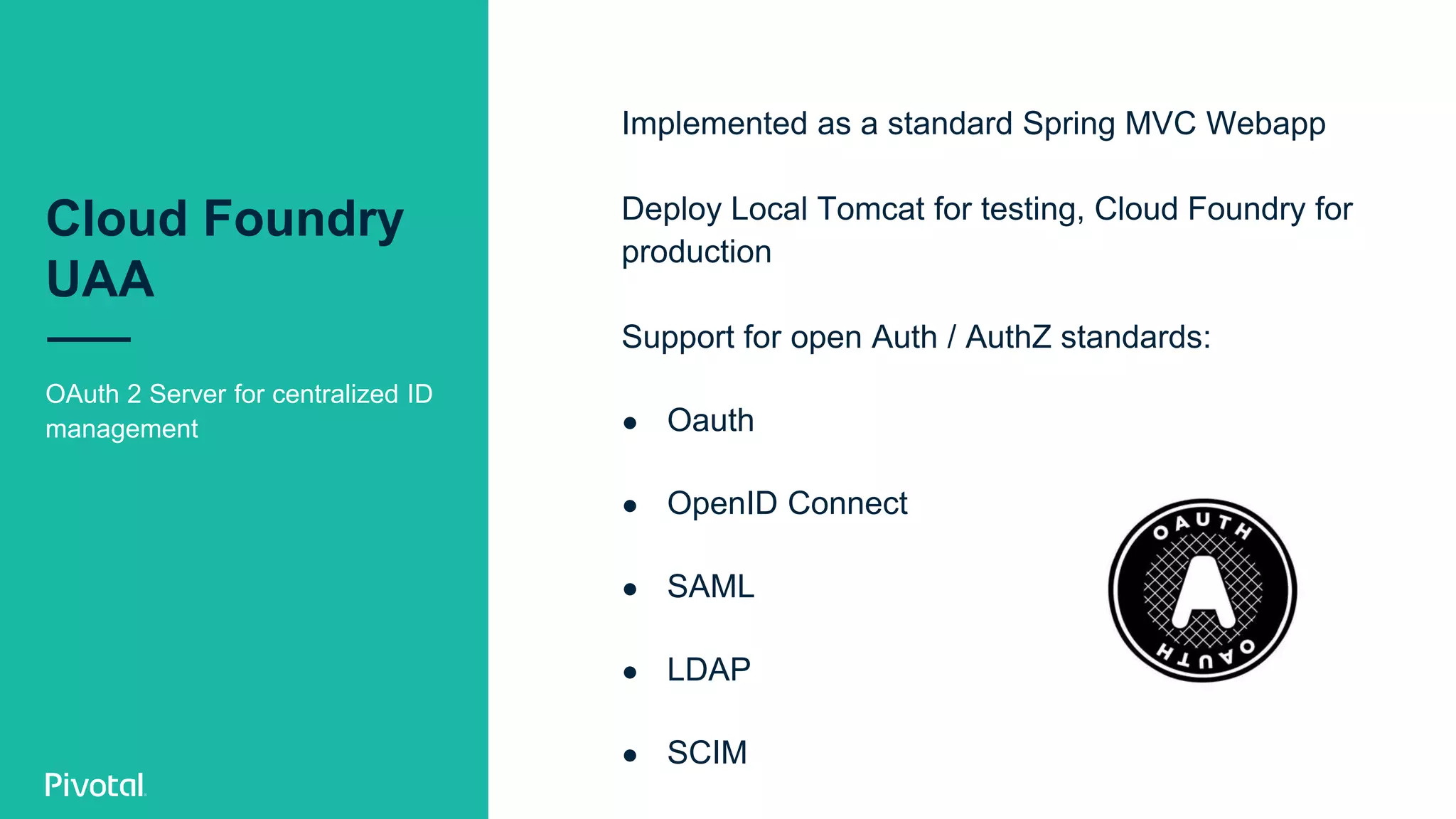

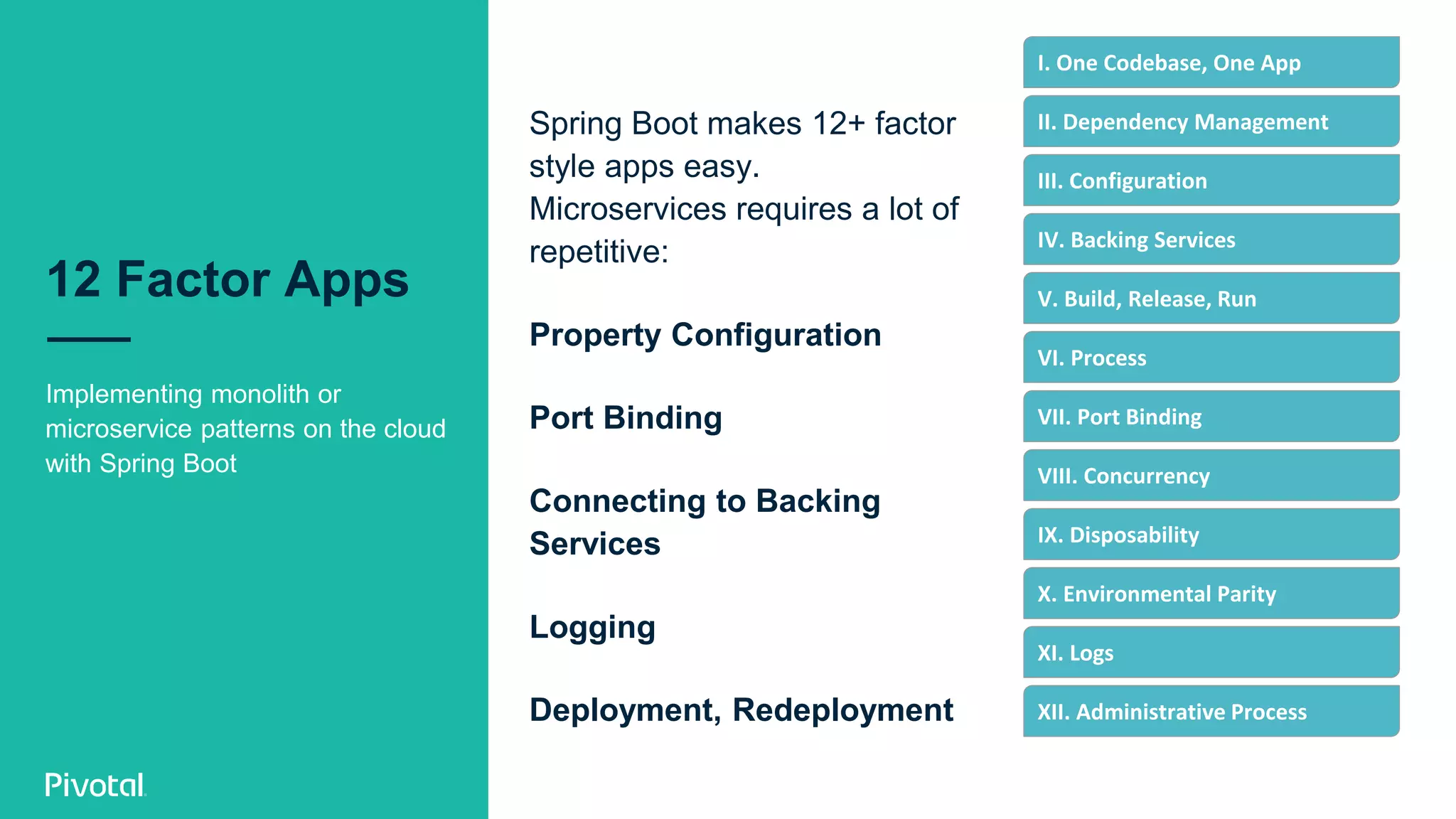

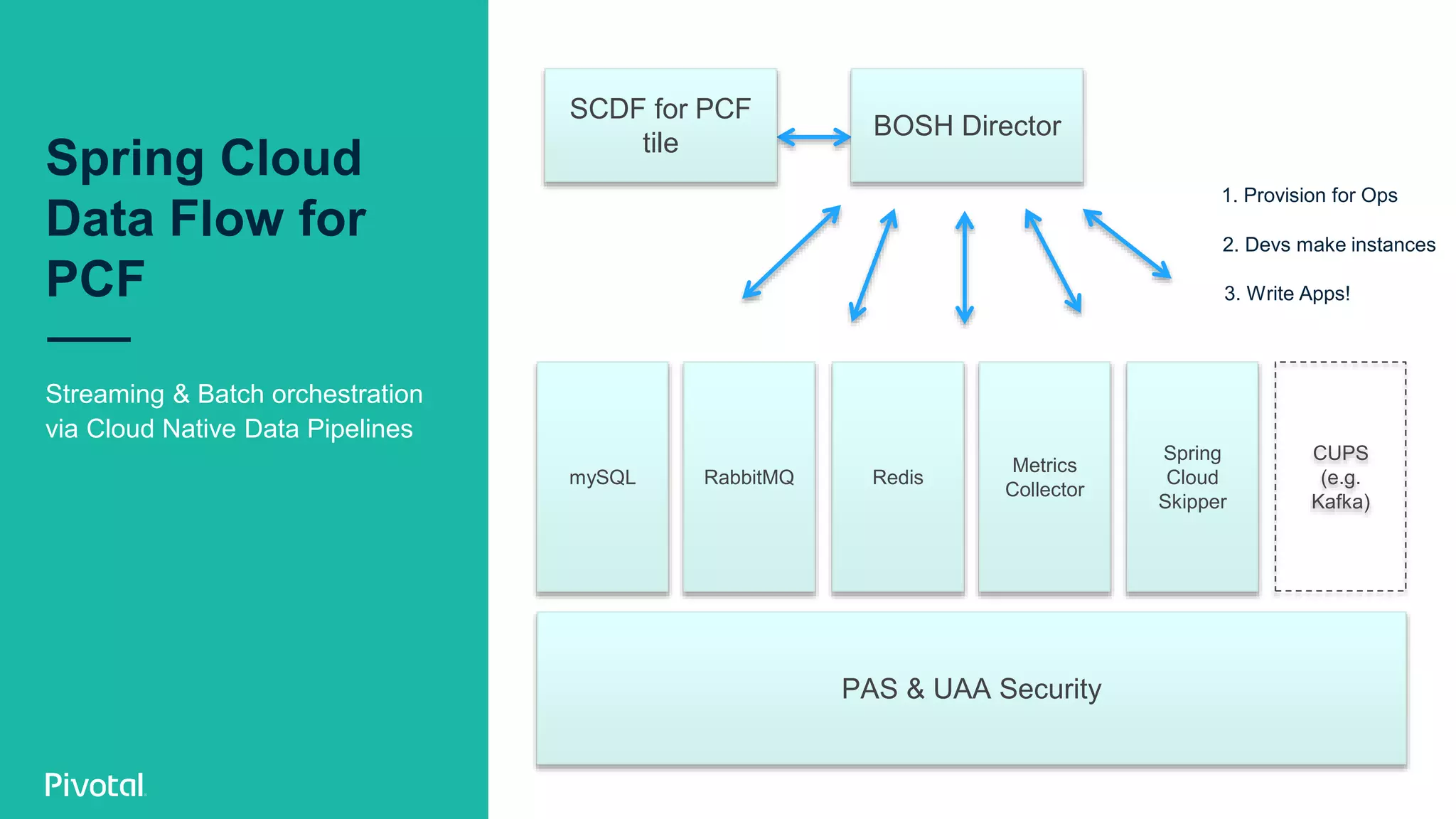

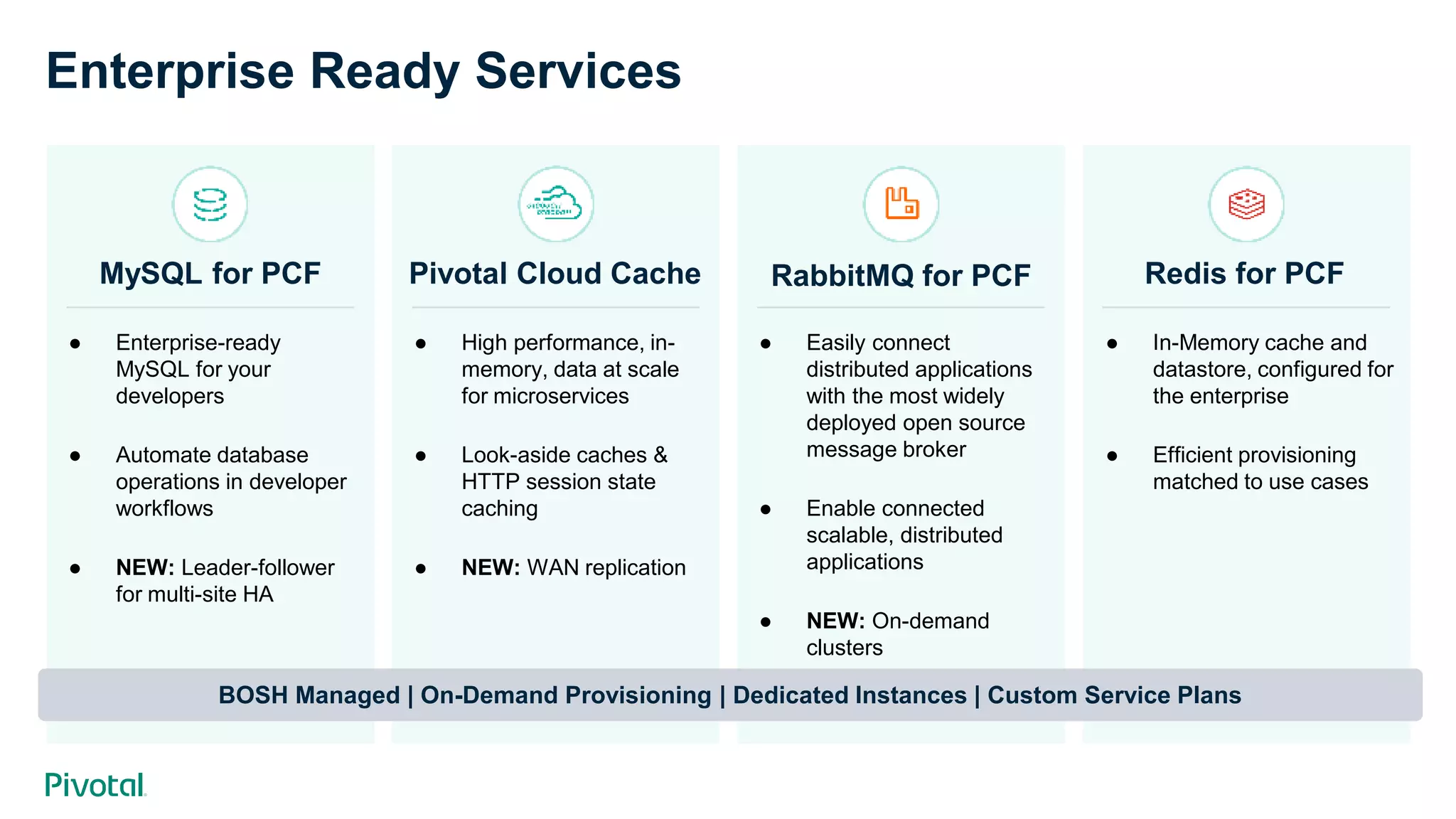

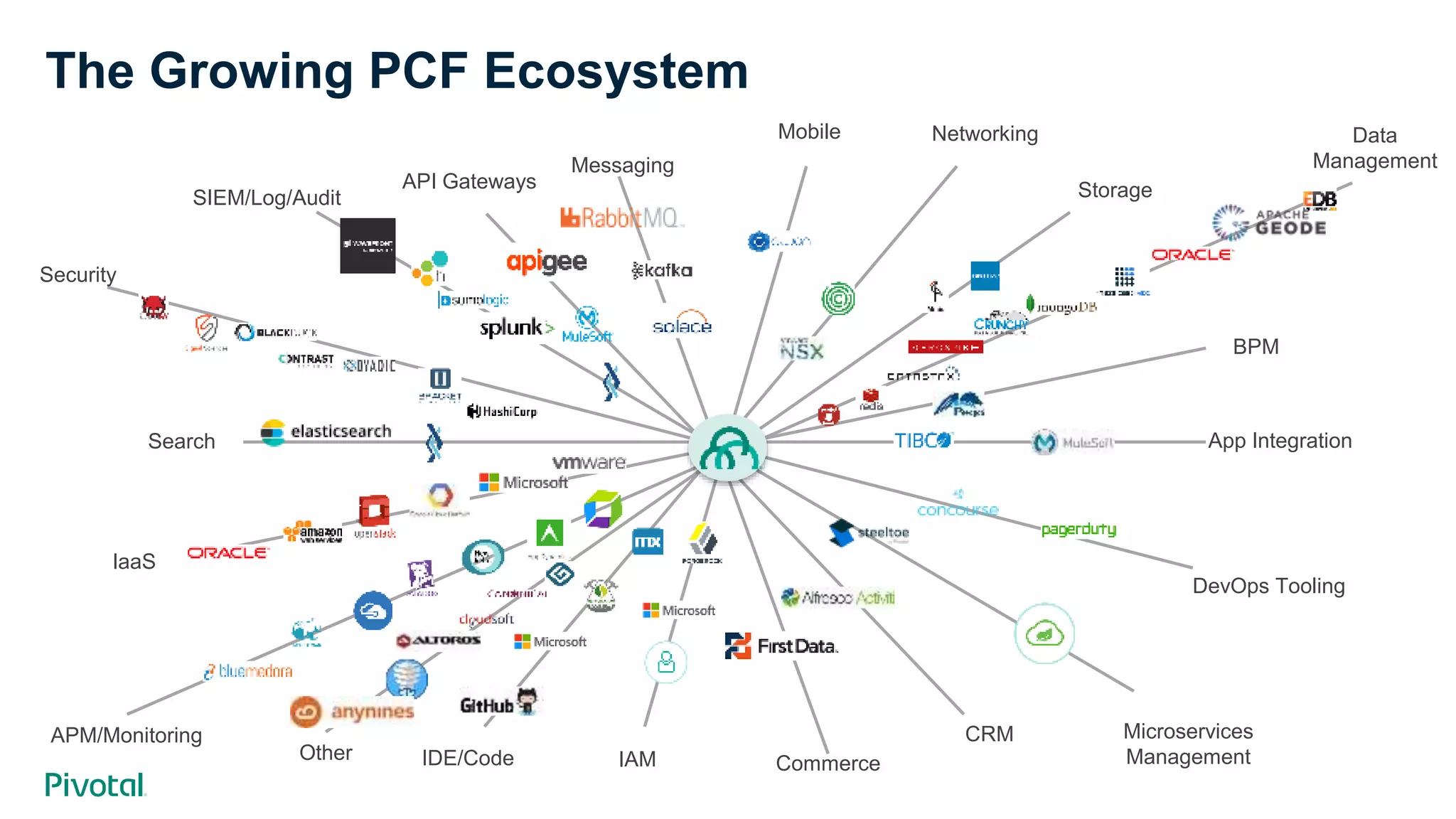

The document provides an overview of Pivotal's Spring and Pivotal Application Service (PAS), emphasizing market-leading support and a robust ecosystem for developing cloud-native applications. It covers how developers divide their time between coding and operations, the services available to Spring apps, and the advantages of using Spring frameworks to build microservices. It closes with next steps for engaging with and exploring Pivotal's offerings.