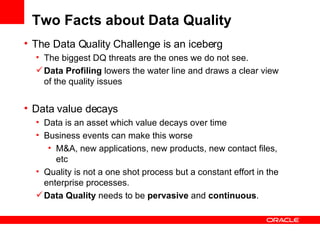

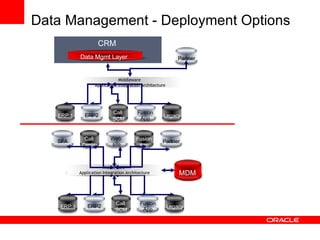

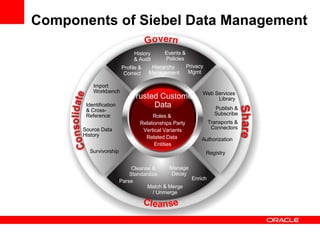

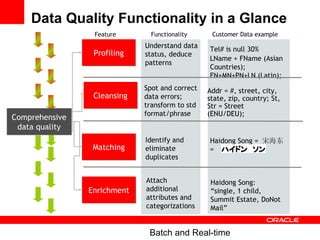

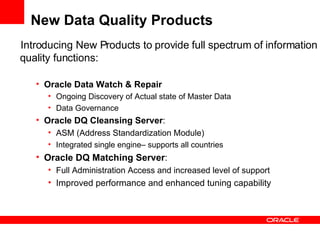

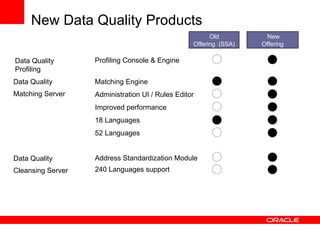

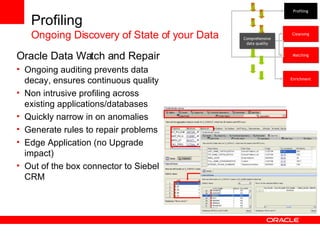

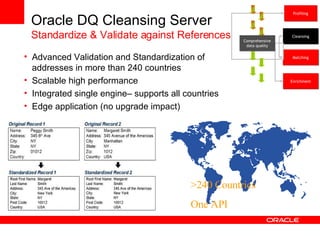

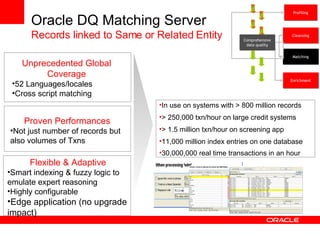

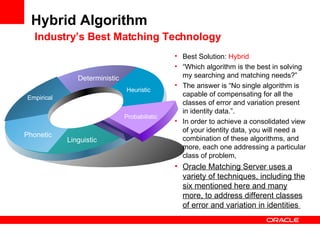

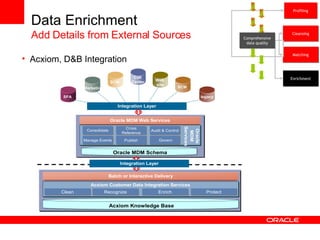

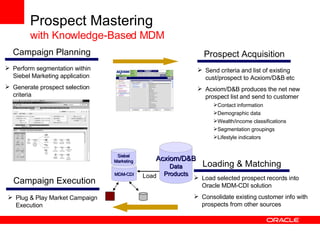

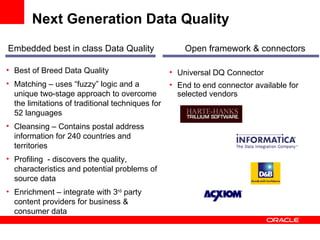

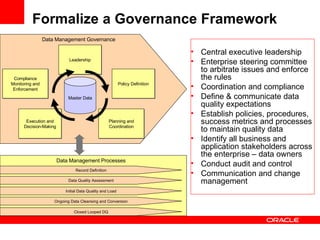

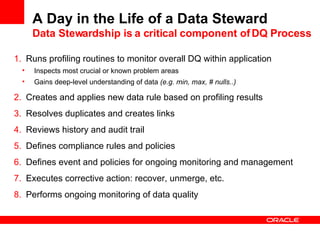

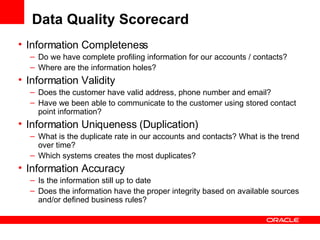

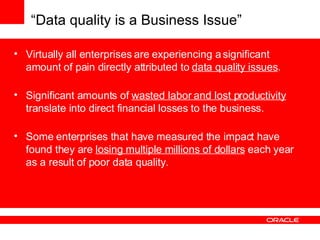

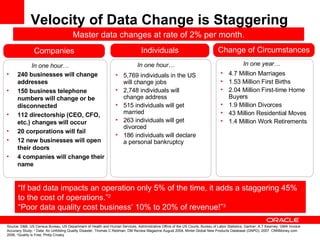

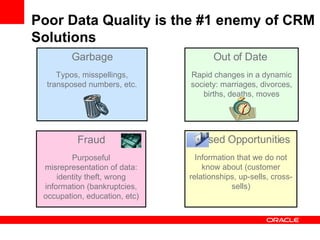

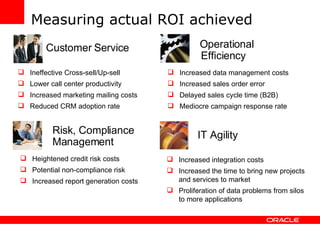

Poor data quality costs businesses significantly through wasted labor, lost productivity, and direct financial losses. Because addresses, phone numbers, jobs, and relationships change quickly, master data changes at a rate of around 2% per month. Siebel's data management solution and new data quality products from Oracle help address these issues through comprehensive data profiling, cleansing, matching, and enrichment capabilities. Ongoing data stewardship, meaning regular monitoring and governance, is needed to keep master data quality high.
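To put the 2% monthly figure in annual terms, here is a rough back-of-the-envelope sketch. It assumes the monthly rate is constant and applies independently each month; that assumption, and the helper name `fraction_changed`, are illustrative and not taken from the slides.

```python
def fraction_changed(monthly_rate: float, months: int) -> float:
    """Fraction of records changed at least once after `months`.

    Assumes (hypothetically) a constant, independent monthly change rate.
    """
    # Probability a record is still unchanged after `months` is (1 - r)^months.
    return 1.0 - (1.0 - monthly_rate) ** months


annual = fraction_changed(0.02, 12)
print(f"{annual:.1%}")  # prints 21.5%
```

Under these assumptions, roughly a fifth of master records would be out of date after a year without maintenance, which is why one-off cleansing alone is unlikely to be enough.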

![Example of Customer Data Quality Issue: A Simple Customer Table Sample](https://image.slidesharecdn.com/s300136oow08sound-dq-for-crm-1225440782407066-8/85/Sound-Data-Quality-for-CRM-9-320.jpg)

| Name | Address | City | State | Zip | Phone | Email |
| --- | --- | --- | --- | --- | --- | --- |
| Bob Williams | 36 Jones Avenue | Newton | MA | 02106 | 617 555 000 | [email_address] |
| Robert Williams | 36 Jones Av. | | MA | 02106 | 617555000 | |
| Burkes, Mike and Ilda | 38 Jones av. | Nweton | MA | 02106 | 617-532-9550 | [email_address] |
| Jason Bourne, Bourne & Cie. | 76 East 51 st | Newton | MA | | 617-536-5480 | 6175541329 |
| … | … | … | … | … | … | … |

Issues illustrated: mis-fielded data, matching records, typos, mixed business and contact names, multiple names, non-standard formats, missing data.
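The standardization and matching steps hinted at by this sample can be sketched in a few lines of Python. This is a minimal illustration, not how Siebel or Oracle's data quality products work: the abbreviation map, the function names, and the choice of matching key (normalized address, zip, and phone) are all assumptions made for the example.

```python
import re
from collections import defaultdict

# Hypothetical abbreviation map; a real cleansing tool would use a much larger dictionary.
ABBREVIATIONS = {"av": "avenue", "ave": "avenue"}


def normalize_phone(raw: str) -> str:
    """Keep digits only, so '617 555 000' and '617555000' compare equal."""
    return re.sub(r"\D", "", raw)


def normalize_address(raw: str) -> str:
    """Lowercase, drop punctuation, and expand known abbreviations."""
    tokens = re.sub(r"[^\w\s]", "", raw.lower()).split()
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


# Three rows taken from the sample table on the slide.
records = [
    {"name": "Bob Williams", "address": "36 Jones Avenue", "zip": "02106", "phone": "617 555 000"},
    {"name": "Robert Williams", "address": "36 Jones Av.", "zip": "02106", "phone": "617555000"},
    {"name": "Burkes, Mike and Ilda", "address": "38 Jones av.", "zip": "02106", "phone": "617-532-9550"},
]


def find_candidate_duplicates(rows):
    """Group rows by a normalized (address, zip, phone) key; return groups with more than one name."""
    groups = defaultdict(list)
    for row in rows:
        key = (normalize_address(row["address"]), row["zip"], normalize_phone(row["phone"]))
        groups[key].append(row["name"])
    return [names for names in groups.values() if len(names) > 1]


duplicates = find_candidate_duplicates(records)
print(duplicates)  # [['Bob Williams', 'Robert Williams']]
```

Exact-key matching like this only catches duplicates that standardization makes identical. Typos such as "Nweton" or name variants would need fuzzy matching, which is beyond this sketch.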