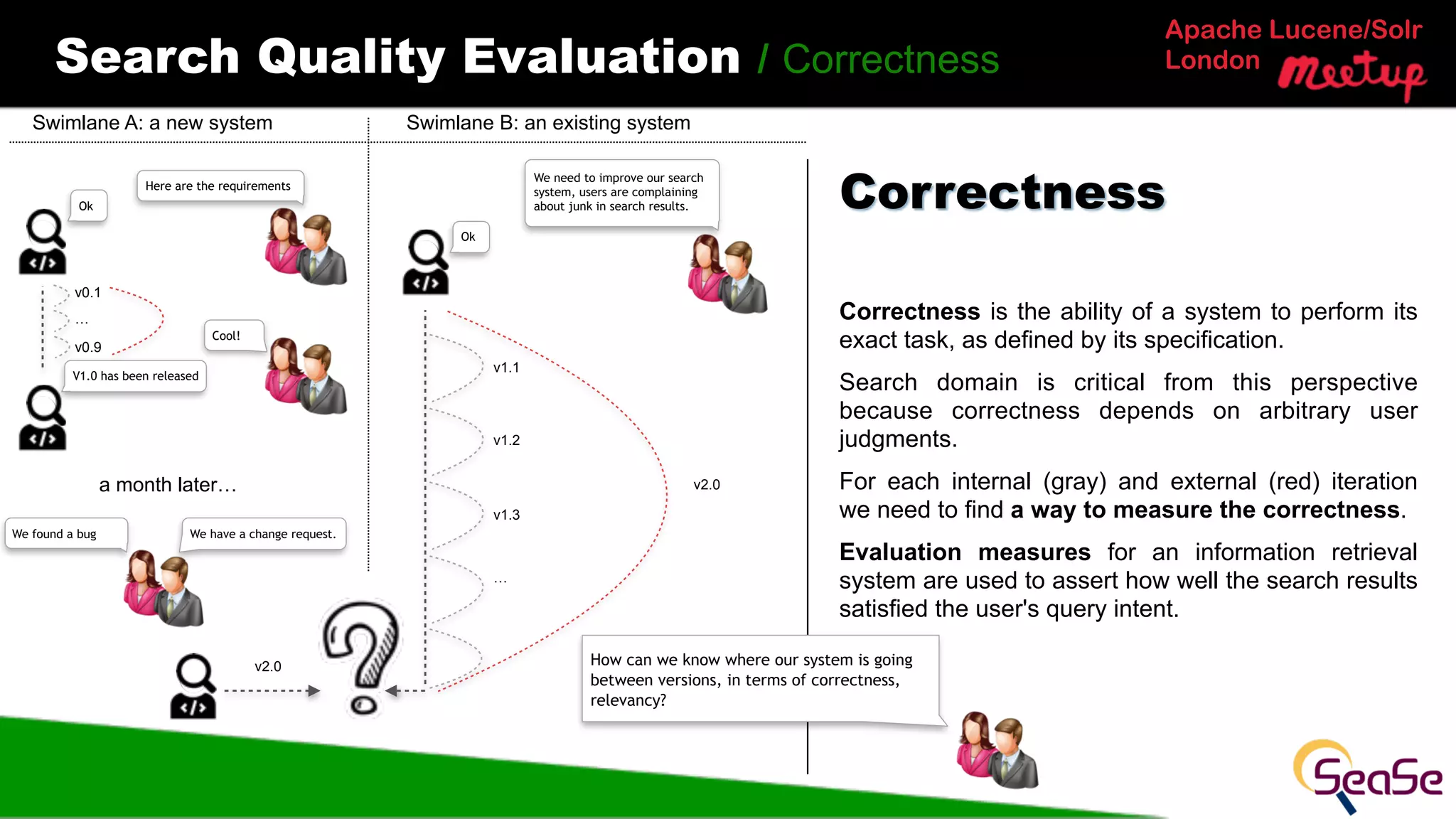

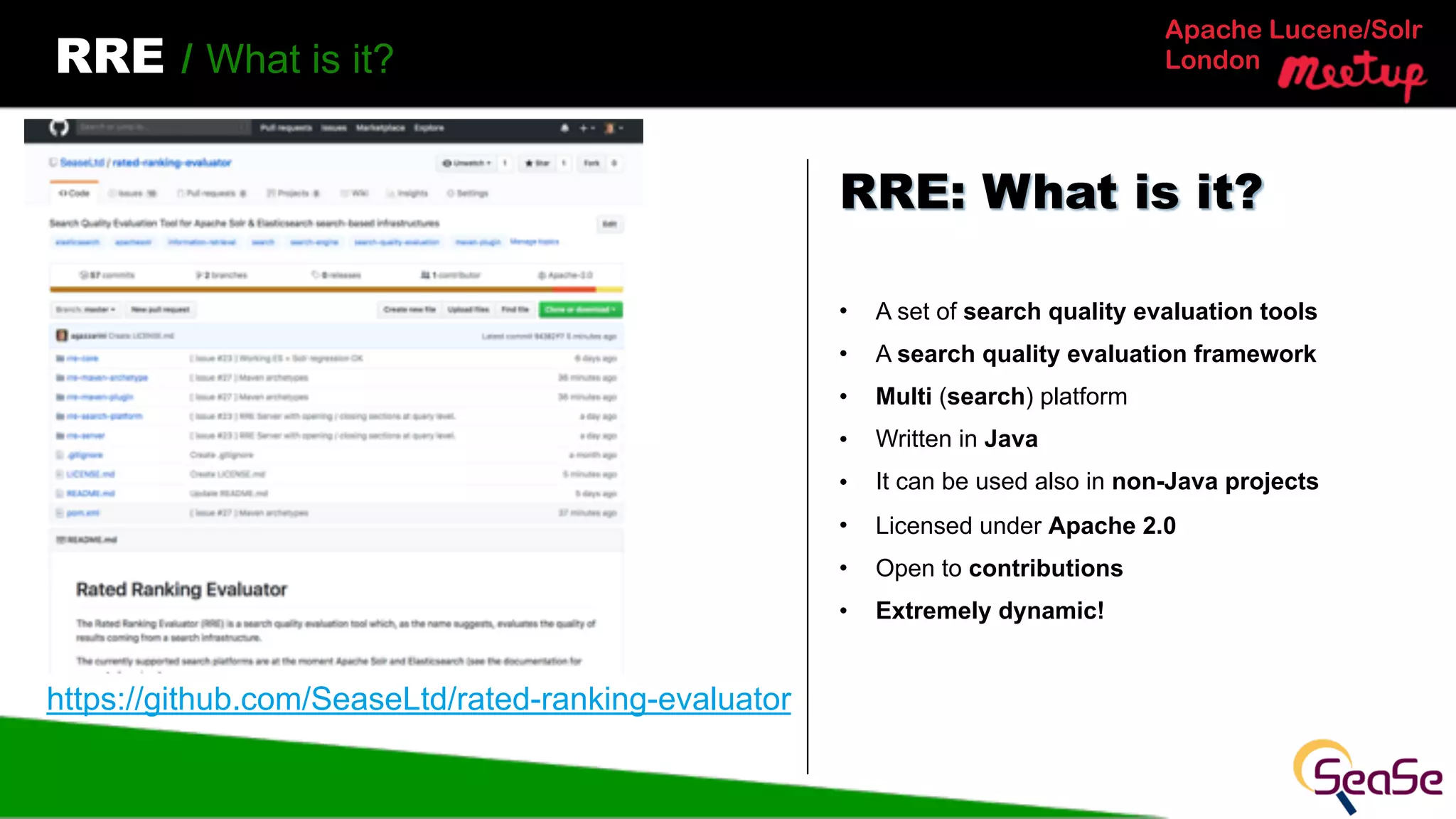

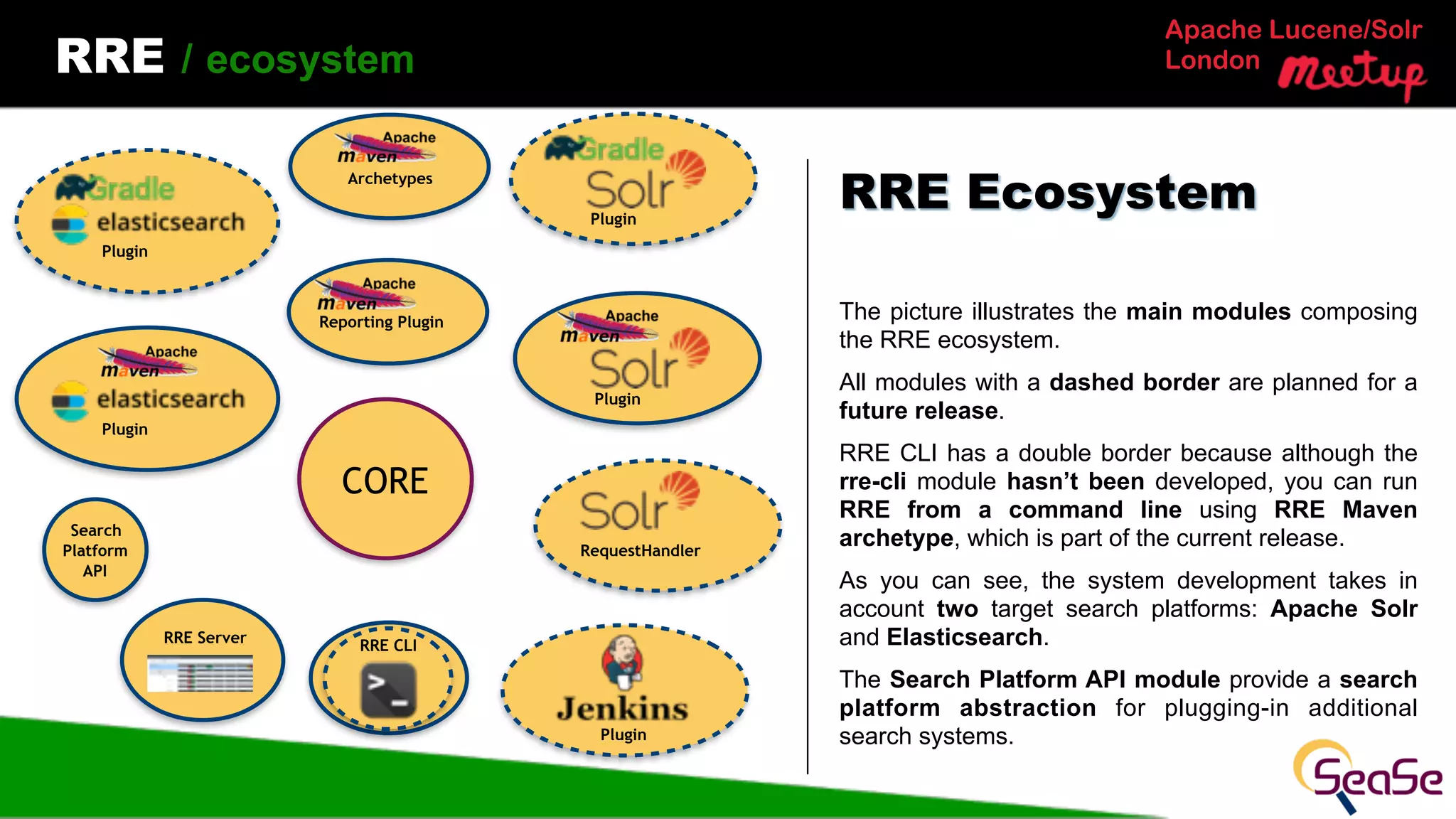

The document discusses search quality evaluation from a developer's perspective, specifically focusing on a framework called Rated Ranking Evaluator (RRE) used for assessing search systems. It emphasizes the importance of evaluating both internal and external factors impacting search system performance, detailing various evaluation measures and the architecture of the RRE tool. Future developments include enhancements for integrating RRE into continuous integration systems and improving user feedback mechanisms.

![Apache Lucene/Solr London

RRE / Maven (Solr | Elasticsearch) Archetype

Very useful if

• you’re starting from scratch

• you don’t use Java as your main programming language.

The RRE Maven archetype generates a Maven project skeleton with all required folders and configuration. Each folder contains a README and sample content.

The skeleton can be used as the basis for your Java project.

It can also be used as is, just for running the quality evaluation (in which case your main project can live somewhere else).

Maven Archetype

> mvn archetype:generate …

[INFO] Scanning for projects...

[INFO]

[INFO] ------------------------------------------------------------------------

[INFO] Building Maven Stub Project (No POM) 1

[INFO] ------------------------------------------------------------------------

[INFO] ………

[INFO] BUILD SUCCESS](https://image.slidesharecdn.com/rre-solr-meetup-london-180628083958/75/Search-Quality-Evaluation-a-Developer-Perspective-25-2048.jpg)

![Future Works / Solr Rank Eval API

The RRE core will be used to implement a RequestHandler that exposes a Ranking Evaluation endpoint.

This would provide the same functionality introduced in Elasticsearch 6.2 [1], with some differences:

• rich tree data model

• metrics framework

Here it doesn’t make much sense to provide comparisons between versions.

As part of the same module we will have a SearchComponent for evaluating a single query interaction.

[1] https://www.elastic.co/guide/en/elasticsearch/reference/6.2/search-rank-eval.html

Rank Eval API

/rank_eval + RRE RequestHandler

?q=something&evaluate=true + RRE SearchComponent](https://image.slidesharecdn.com/rre-solr-meetup-london-180628083958/75/Search-Quality-Evaluation-a-Developer-Perspective-32-2048.jpg)
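To make "evaluating a single query interaction" more concrete, here is a minimal sketch of what such an evaluation could compute for one query: precision at k over a ranked result list and a set of relevance judgments. This is not RRE's code or the planned Solr API. The function name `precision_at_k`, its parameters, the rating scale, and the sample data are all assumptions made for illustration.

```python
from typing import Dict, List


def precision_at_k(
    ranked_ids: List[str],
    ratings: Dict[str, int],
    k: int = 10,
    relevant_threshold: int = 1,
) -> float:
    """Return the share of the top-k positions filled by relevant documents.

    Unrated documents count as not relevant. The denominator is always k,
    so a result list shorter than k is penalised.
    """
    if k <= 0:
        raise ValueError("k must be a positive integer")
    top_k = ranked_ids[:k]
    relevant = sum(
        1 for doc_id in top_k if ratings.get(doc_id, 0) >= relevant_threshold
    )
    return relevant / k


# Hypothetical judgments and ranking for one query (illustrative data only).
judgments = {"doc1": 3, "doc2": 0, "doc4": 2}
ranking = ["doc1", "doc2", "doc3", "doc4"]

print(precision_at_k(ranking, judgments, k=4))  # 0.5
```

In this sketch two of the four returned documents (`doc1` and `doc4`) meet the relevance threshold, hence 0.5. A real evaluation endpoint would presumably obtain the ranking from the search engine and the judgments from a ratings file, and could apply other metrics in the same way.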