Scott Aaronson MIT Freewill Presentation

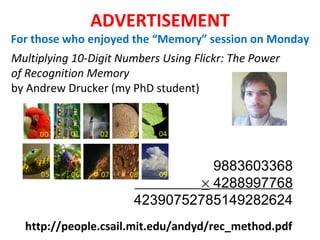

- 1. ADVERTISEMENT For those who enjoyed the “Memory” session on Monday: Multiplying 10-Digit Numbers Using Flickr: The Power of Recognition Memory, by Andrew Drucker (my PhD student) http://people.csail.mit.edu/andyd/rec_method.pdf 9883603368 × 4288997768 = 42390752785149282624

- 2. A SCIENTIFICALLY-SUPPORTABLE NOTION OF FREE WILL IN ONLY 6 CONTROVERSIAL STEPS The Looniest Talk I’ve Ever Given In My Life Scott Aaronson Associate Professor Without Tenure (!), MIT

- 3. Introduction I’ll present a perspective about free will, quantum mechanics, and time that I’ve never seen before Compatibilist? Determinist? Automaton? No problem! You can listen to the talk too I’ll place a much higher premium on being original and interesting than on being right Thanks

- 4. This talk will assume what David Deutsch calls the “momentous dichotomy”: Either a given technology is possible, or else there’s some principled reason why it’s not possible. Example application: Quantum computing

- 5. Conventional wisdom: “Free will is a hopelessly muddled concept. If something isn’t deterministic, then logically, it must be random —but a radioactive nucleus obviously doesn’t have free will!” But the leap from “indeterminism” to “randomness” here is total nonsense! In computer science, we deal all the time with processes that are neither deterministic nor random… Nondeterministic Finite Automaton

- 6. x := x + 5; // Determinism x := random(1…10); // Randomness x := input(); // “Free will” Hopelessly-Muddled? Free will Determinism We can easily imagine “external inputs” to the giant video game we all live in: the problem is just where such inputs could fit into the actual laws of physics!
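
The three pseudocode instructions on this slide can be written as a small runnable Python sketch. The function names and the `read_input` callback are illustrative choices, not part of the talk. The point is only that the third kind of step takes its value from outside the program, with no probability distribution assumed.

```python
import random

def deterministic_step(x):
    # x := x + 5  (the next value is fixed by the current one)
    return x + 5

def random_step(rng):
    # x := random(1…10)  (drawn from a known probability distribution)
    return rng.randint(1, 10)

def external_input_step(read_input):
    # x := input()  (supplied from outside; the program assumes no distribution)
    return int(read_input())
```

At a console, `external_input_step(input)` reads the value from whoever is typing. Passing a stub such as `lambda: "7"` makes the same step testable.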

- 10. How can we define free will in a way that’s amenable to scientific investigation? 1. For inspiration, I turned to computer science’s Prophet: I propose to consider the question, “Can machines think?” … The original question, “Can machines think?” I believe to be too meaningless to deserve discussion. A. M. Turing, “Computing Machinery and Intelligence,” Mind, 1950 So Turing immediately replaced it with a different question: “Are there imaginable digital computers which would do well in the imitation game?”

- 11. In this talk, I’ll propose a similar “replacement” for the problem of free will People mean many different things by “free will”: - Legal or moral responsibility - The feeling of being in control - “Metaphysical freedom” But arguably, one necessary condition for “free will” is (partial) unpredictability —not by a hypothetical Laplace demon, but by actual or conceivable technologies (DNA testing, brain scanning…) The Envelope Argument: If, after you said anything, you could open a sealed envelope and read what you just said, that would come pretty close to an “empirical refutation of free will” !

- 12. Discussion Obviously, many of your actions are predictable, and the fact that they’re predictable doesn’t make them “unfree”! In general, the better someone knows you, the better they can predict you … but even people who’ve been married for decades can occasionally surprise each other! (Otherwise, they would’ve effectively “melded” into a single person) If someone could predict ALL your actions, it seems to me that you’d be “unmasked as an automaton,” much more effectively than any philosophical argument could unmask you But how do we formalize the notion of “predicting your actions”? After all, if your actions were perfectly random, then in the sense relevant for us, they’d also be perfectly predictable! I’ll solve that problem using a “Prediction Game”

- 13. The Prediction Game: Setup Phase It’s the year 3000. You enter the brain-scanning machine. The machine records all the neural data it can, without killing you (hardest part of this whole setup to formalize!) The machine outputs a self-contained “model” of you (running on a classical computer, a quantum computer, or whatever)

- 14. The Prediction Game: Testing Phase Q #34: Which physicist would you least want to be stranded at sea with: Paul Davies, Sean Carroll, or Max Tegmark? “Max Tegmark” Q #35: Multiverse: for or against? FEEDBACK LOOP

- 15. The Prediction Game: Scoring Phase The Questions: $Q_1,\ldots,Q_n$ Your Answers: $A_1,\ldots,A_n$ Predictor’s Guessed Distributions: $D_1,\ldots,D_n$ We’ll say the predictor “succeeds” if: $\Pr_{D_1}[A_1] \cdots \Pr_{D_n}[A_n] \ge C / 2^{B}$, where $C$ = some small constant (like 0.01), $B$ = the number of bits in the shortest computer program that outputs $A_i$ given $Q_1,\ldots,Q_i$ and $D_1,\ldots,D_i$ as input, for all $i \in \{1,\ldots,n\}$

- 16. Justification Beautiful Result from Theory of Algorithmic Randomness (paraphrase): Assume you can’t compute anything that’s Turing-uncomputable. Then the inequality from the last slide can be satisfied with non-negligible probability, in the limit $n \to \infty$, if and only if you’re indeed choosing your answers randomly according to the predictor’s claimed distributions $D_1,\ldots,D_n$. Note: $B$ is itself an uncomputable quantity! Can falsify a claimed Predictor by computing upper bounds on $B$, but never prove absolutely that a Predictor works. (But the same issue arises for separate reasons, and even arises in QM itself!) If you don’t like the uncomputable element, can replace $B$ by the number of bits in the shortest efficient program Crucial Point In retrospect, looking back on your entire sequence of answers $A_1,\ldots,A_n$, the predictor could always decompose the sequence into (1) a part that has a small Turing-machine description and (2) a part that’s “algorithmically random.” But when it’s forced to guess your answers one by one, it might see a third, “fundamentally unpredictable” component.

- 17. So, can the Prediction Game be won? An “aspirational question” that could play a similar role for neuroscience as the Turing Test plays for AI! Argument for “yes”: All information relevant for cognition seems macroscopic and classical. Even if quantum effects are present, they should get “washed out as noise” But this is by no means obvious! Consider the following… 2. Falsifiable Hypothesis (H): The behavior of (say) a mammalian brain, on a ~10s timescale, can be (and often is) sensitive to molecular-level events

- 18. If you believe Hypothesis H, then there would appear to be a fundamental obstacle to winning the Prediction Game… 3. The No-Cloning Theorem: There’s no general procedure to copy an unknown quantum state, even approximately “Penrose Lite”: No speculations here about the brain as quantum computer, noncomputable QG effects in microtubules, objective state-vector reduction, etc… just the standard No-Cloning Theorem!

- 19. Simple 1-Qubit Model Situation [Figure: a single qubit with two measurement bases, one labeled VANILLA / CHOCOLATE and the other BOXERS / BRIEFS]

- 20. But can the No-Cloning Theorem actually be used to get quantum states that are both unclonable and “functional”? Recent work in quantum computing theory illustrates that the answer is yes… 4. Putting Teeth on the No-Cloning Theorem Quantum Money (Wiesner 1969, A. 2009, Farhi et al. 2010, A.-Christiano 2011…): Quantum state $|\psi\rangle$ that a bank can prepare, people can verify as legitimate, but counterfeiters can’t copy Quantum Copy-Protected Software (A. 2009): Quantum state $|\psi_f\rangle$ that a software company can prepare, a customer can use to compute some function f, but a pirate can’t use to create more states that also let f be computed While these proposals raise separate issues (e.g., computational complexity), they’re analogous to what we want in one important respect: if you don’t know how the state $|\psi\rangle$ or $|\psi_f\rangle$ was prepared, then you can copy it, but only with exponentially-small success probability (just like if you were trying to guess the outputs by chance!)

- 21. Suppose the Prediction Game can’t be won, even by a being with unlimited computational power who knows the dynamical laws of physics (but is constrained by QM). Then such a being’s knowledge must involve Knightian uncertainty either about the initial state of the universe (say, at the big bang), or about “indexical” questions (e.g., “our” location within the universe or the Everett multiverse) For otherwise, the being could win the Prediction Game! 5. Knightian Uncertainty In economics, Knightian uncertainty means uncertainty that one can’t even accurately quantify using probabilities. There are formal tools to manipulate such uncertainty (e.g., Dempster-Shafer theory) Poetically, we could think of this Knightian uncertainty about initial conditions as “a place for free will (or something like it) to hide in a law-governed world”!

- 22. “Look, suppose I believed the Prediction Game was unwinnable. Even so, why would that have anything to do with free will? Even if I don’t know the initial state $|\psi_0\rangle$, there still is such a state, and combined with the dynamical laws, it still probabilistically determines the future!” 6. A Radical Speculation About Time If the Prediction Game was unwinnable, then it would seem just as logically coherent to speak about our decisions determining the initial state, as about the initial state determining our decisions! “Backwards-in-time causation”, but crucially, not of a sort that can lead to grandfather paradoxes

- 23. [Figure: qubits |0⟩ |0⟩ |0⟩ |1⟩ |0⟩ |0⟩ |0⟩ |0⟩ on the INITIAL HYPERSURFACE (AT THE BIG BANG?); two MACROSCOPIC AMPLIFICATION events, one labeled “Bob asks Alice on a date” (state = |1⟩) and one labeled “Alice says yes” (state = |+⟩)] There’s a “dual description” of the whole spacetime history that lives on an initial hypersurface only, and that has no explicit time parameter, just a partially-ordered set of “decisions” about what the quantum state on the initial hypersurface ought to be. A decision about particle A’s initial state gets made “before” a decision about particle B’s initial state, if and only if, in the spacetime history, A’s amplification to macroscopic scale occurs in the causal past of B’s amplification to macroscopic scale

- 24. Are there independent reasons, arising from quantum gravity, to find such a picture attractive? (Now comes the speculative part of the talk!) “The Black Hole Free Will Problem”: You jump into a black hole. While falling toward the singularity, you decide to wave. According to black hole complementarity, there’s a “dual description” living on the event horizon. But how does the event horizon “know” your decision? Could a superintelligent predictor, by collecting the Hawking radiation, reconstruct your decision without having ever seen either “your” past or “your” future? The account of free will I’m suggesting can not only accommodate a dual description living one dimension lower; in some sense, it demands such a description

- 26. Conclusions I admit: the idea that the Prediction Game can’t be won (because of, e.g., quantum mechanics and Knightian uncertainty about the initial state) strikes me as science fiction On the other hand, the idea that the Prediction Game can be won also strikes me as science fiction! (For then how could you ever know you were “you,” rather than one of countless simulations being run by various Predictors?) By Deutsch’s “Momentous Dichotomy,” one of these two science-fiction scenarios has to be right! Crucially, which scenario is right is not just a metaphysical conundrum, but something that physics, CS, neurobiology, and other fields can very plausibly make progress on

Editor's Notes

- I’ll start with a brief ad. For those of you who enjoyed Kathleen McDermott and Henry Roediger’s talks on Monday – as I certainly did! – my grad student, Andy Drucker, recently did this amazing project where he taught himself to multiply huge numbers in his head, using only a stock photo collection to help him jog his memory. By associating each digit in each position with a different image, he was able to multiply THESE ten-digit numbers, on his first try, in a mere seven hours. In fact, you can do arbitrary Turing machine computations using this technique. Anyone can easily learn it. If you want to know more, you can read Andy’s paper at this URL – or ask me for it later.

- OK, the title of my talk is “A Scientifically-Supportable Notion of Free Will In ONLY SIX Controversial Steps: The Looniest Talk I’ve Ever Given In My Life.” Yeah, I’m Scott Aaronson, and I’m a theoretical computer scientist at MIT without tenure -- after this talk, probably forever.

- I want to explain a certain perspective about free will, quantum mechanics, and time that seems to lead to a lot of interesting scientific questions, but that I’ve never seen before. In this talk, I’m gonna place a MUCH higher premium on being original and interesting than on being right! Look, I got tired of being right all the time – it got boring. I fully understand the force of the arguments (which Sean Carroll recently summarized in his blog) that free will is just NOT something we should look for in fundamental physics. But even if you believe that, obviously it’s worth seeing how strong a case can be mustered against that view. So, if you’re a compatibilist – that is, someone who thinks free will is compatible with determinism -- and/or a determinist, or even an automaton, that’s no problem! You can listen to the talk too. In fact, I think compatibilists might even find what I have to say surprisingly “compatible” with their own views.

- As a preliminary, this entire talk is gonna be based on something that David Deutsch, in his new book “The Beginning of Infinity,” called the “momentous dichotomy”: “Either a given technology is possible, or else there’s some principled reason why it’s not possible.” That is, whether we’re talking about warp drive, or AI, or cryonics, or time machines, or a cure for seasickness, either it can be done, given enough time, money, etc., or else it can’t be done. But if it can’t be, then there has to be some REASON why it can’t – which might be the Second Law, or no superluminal signalling, or P!=NP, or some other principle of math or physics that rules the thing out. This sounds almost tautological, but it has all sorts of implications that people find hard to swallow! One example that’s close to home for me is quantum computing. I’m constantly getting into arguments with people who want to believe BOTH that scalable quantum computers are a fantasy – totally impossible, even in principle – but ALSO that there’s nothing wrong with our current understanding of quantum mechanics. But that puts them in a really tight spot. They want to be scientifically conservative, but it’s like, dude! Something has to give.

- So, what’s the conventional wisdom about free will among scientifically-minded people? People say, it’s a hopelessly-muddled concept. Because there are only two possibilities: either something can be deterministic, or else it can be governed by chance. But in neither case is it “free.” A roulette wheel or a radioactive atom is random, but we wouldn’t say those things have free will. I’m sorry---whatever else you believe, this leap from indeterminism to randomness is bullshit. In computer science, we deal all the time with processes that are neither deterministic nor random. An example is a nondeterministic finite automaton: if you’re in state s2 and you see symbol a, you could stay where you are or you could transition to state s3. But we don’t put probabilities on these two arrows: we just say that either could happen. Even more basic, when we design an algorithm, we don’t know which input it’s going to get, we usually don’t even know a probability distribution over inputs. All we know is, we want the thing to work for ANY input.
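
The nondeterministic finite automaton mentioned here can be sketched in a few lines of Python. Only the `s2`/`a` transition (stay in `s2` or move to `s3`) comes from the talk. The other table entries and the function names are made up for illustration. The simulation tracks the set of states the machine could be in and attaches no probabilities to the choices.

```python
# Transition relation: (state, symbol) -> set of possible next states.
# Only the ("s2", "a") entry comes from the talk; the rest is hypothetical.
DELTA = {
    ("s1", "a"): {"s2"},
    ("s2", "a"): {"s2", "s3"},  # either could happen; no probabilities attached
    ("s3", "b"): {"s3"},
}

def reachable_states(start, word, delta=DELTA):
    """Return every state the automaton could be in after reading `word`."""
    current = {start}
    for symbol in word:
        following = set()
        for state in current:
            following |= delta.get((state, symbol), set())
        current = following
    return current

def accepts(start, word, accepting, delta=DELTA):
    """Accept if at least one possible run ends in an accepting state."""
    return bool(reachable_states(start, word, delta) & set(accepting))
```

Calling `reachable_states("s2", "a")` returns both `s2` and `s3`: the model says what could happen without saying how likely either branch is.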

- The distinction seems clear in the context of video games. From the perspective of someone who lived inside Super Mario World, Mario has free will, whereas the goombas he stomps on don’t have it. Or in terms of programming, here’s a deterministic instruction, here’s a probabilistic one, and here’s an instruction that corresponds to the operation of “free will.”

- Alan Turing, Peace Be Upon Him. In his famous paper “Computing Machinery and Intelligence,” published in 1950, he says, “I propose to consider the question ‘Can machines think?’” Then a few pages later, he rejects the question as too meaningless to deserve discussion! So he replaces it by a different question --- one that’s not quite the same, but has the enormous advantage of leading to an actual research program. Are there imaginable digital computers which would do well in the imitation game, or what we now call the Turing Test? That is, computers that could simulate a human so well that a human judge couldn’t tell the difference?

- Legal or moral responsibility. The feeling of being in control. “Metaphysical freedom”---this one often gets mixed up with theological questions. For the first two, one can give reasonable arguments that it could make sense to talk about them even in a completely deterministic universe (and philosophers like Daniel Dennett have done so). The third one, I take it as given that even if it exists, science could never be in a position to demonstrate such a thing. The sense of free will that we have some empirical handle on, the sense that will interest me, is *partial unpredictability*. Here, I don’t mean unpredictability by some hypothetical Laplace demon, but unpredictability by actual or conceivable technologies. Like DNA testing, or brain scanning, or stuff like that. One could argue that partial unpredictability is a necessary condition for having free will even if it’s not a sufficient condition (sort of a converse to how people argue that passing the Turing Test is a sufficient condition for intelligence but not a necessary one). The argument for this is what I’ll call the “Envelope Argument”:

- If you’re being chased by a bear, someone who’s watching you might be able to predict perfectly well that you’re gonna run for your life – but it’s still your free choice to run. You still could’ve chosen otherwise. I’m told that even people who’ve been married for decades… To do so, I’ll define a certain “Prediction Game”

- The Prediction Game consists of three phases. The first is the Setup Phase. Let’s imagine it’s the year 3000. You enter the super-advanced brain-scanning machine. Using nanobots, or whatever, the machine records everything it can about your pattern of neural connections, the synaptic strengths, etc.---and does it without killing you. Ironically, the not killing you is actually the hardest part of the entire game to formalize! For example, suppose the machine chopped your head off, cut your brain into slices, imaged the slices, and then built a complete working model of you in a computer. Would that be “killing” you, or just transferring you into a better physical substrate? Well, I don’t know! One answer would just be that when we say “don’t kill you,” we mean it in the ordinary sense that we know when we see! That is, while going into the machine might affect you in various subtle ways, you should be able to walk out of the machine living and breathing essentially as you were before. If you don’t like that, I have a variant of the Prediction Game where the machine can go right ahead and kill you, slicing up your brain for analysis however it wants. However, it then needs to output not just one but MANY identical models of you, any one of which can fool someone who knows you extremely well (say, your wife or husband) into thinking it’s you. (We’ll assume, for the purposes of the thought experiment, that your wife or husband wasn’t aware you decided to do this!) Leaving that aside: after more-or-less non-invasively scanning your brain, the machine outputs a self-contained “model” of you, running on a classical computer, a quantum computer, or whatever. If desired, this model can include details about the rest of your body, your clothing, the motion of the air in the room that you’re in … which by the way, we’re going to imagine as a very very isolated, very empty and controlled room in which you’re now going to be asked questions. 
That brings us to phase two, the testing phase.

- In the testing phase, you get asked a series of multiple-choice questions. For example: You give an answer – in this case, Max Tegmark. I apologize, Max -- it’s not me, it’s him. Meanwhile, the model outputs, not necessarily a *single* answer, but a probability distribution over the answers. In this case, we can see that it did pretty well: it gave Max the highest probability.

- Finally we come to the scoring phase. Let Q1,…,Qn be the list of questions that were asked. Let A1,…,An be your answers. Finally, let D1,…,Dn be the distributions that the predictor guessed. We’ll say the predictor “succeeds” if a certain inequality holds, which basically says that the predictor is “surprised” by your answers about exactly as often as it expects to be surprised. So, the product of the probabilities of all your actual answers, according to the guessed distributions, must be greater than or equal to some small constant (say, 1/100), divided by 2 to the power of the number of bits B in the shortest computer program to simulate your responses (in some Turing-universal programming language).
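
The scoring rule just described can be sketched in Python. This is a minimal sketch, not a definitive implementation: the function name, the dict representation of each guessed distribution, and the `program_bits` parameter are assumptions. Since the true B is uncomputable, `program_bits` has to be a stand-in such as an upper bound. The check is done in log space so long answer sequences do not underflow.

```python
import math

def predictor_succeeds(answers, distributions, program_bits, c=0.01):
    """Check  prod_i Pr_{D_i}[A_i] >= C / 2^B  using base-2 logarithms.

    answers:       the answers A_1..A_n actually given
    distributions: dicts D_1..D_n mapping each possible answer to its guessed probability
    program_bits:  stand-in for B (the true B is uncomputable; pass an upper bound)
    """
    log_likelihood = 0.0
    for answer, dist in zip(answers, distributions):
        p = dist.get(answer, 0.0)
        if p <= 0.0:
            return False  # the predictor ruled out something that happened
        log_likelihood += math.log2(p)
    return log_likelihood >= math.log2(c) - program_bits
```

With an upper bound in place of B, the threshold can only get easier to meet. A `False` result therefore still rules out the claimed predictor, while a `True` result is not conclusive.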

- Now, there’s a beautiful result from the theory of algorithmic randomness, which says that, as long as you don’t have uncomputable powers – and specifically, the ability to solve the halting problem -- the ONLY way this inequality can be satisfied with any non-negligible probability, in the limit as n gets large, is if you’re indeed choosing your answers randomly according to the Predictor’s claimed distributions. It’s ironic that some people, like Roger Penrose, talk about the brain solving uncomputable problems like the halting problem. By contrast, I take it as obvious that the brain isn’t solving the halting problem, and actually need to assume as much so my definition works out. One drawback of the definition is that the Kolmogorov complexity is itself an uncomputable function. On the other hand, one can certainly compute upper bounds on the Kolmogorov complexity, which will often be enough to *rule out* a claimed Predictor, although not to prove the Predictor is working. But notice that you can never prove beyond doubt that a Predictor is working anyway! For even if it correctly predicted your answers to a million questions, maybe there are other questions on which it couldn’t predict your answers. In any case, if you don’t like the uncomputable element, you could easily replace Kolmogorov complexity here by *polynomial-time bounded* Kolmogorov complexity. That’s computable, although it’s conjectured to take exponential time.
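
One common way to get a computable upper bound on Kolmogorov complexity is to run an off-the-shelf compressor. The sketch below uses Python's `zlib` for that purpose. This is an illustration of the general idea, not the talk's method: the function name is hypothetical, the bound ignores the constant-size decompressor, and it treats the answer string on its own rather than conditioned on the questions and guessed distributions.

```python
import zlib

def complexity_upper_bound_bits(data: bytes) -> int:
    """Bits in a zlib-compressed encoding of `data`.

    Up to an additive constant for the fixed decompressor, this bounds the
    Kolmogorov complexity of `data` from above. It is never a lower bound.
    """
    return 8 * len(zlib.compress(data, 9))
```

A highly regular answer sequence such as `b"ab" * 5000` compresses to far fewer bits than its raw length. A small bound of that kind is what could be used to rule out a predictor that had treated the sequence as patternless.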

- So without further ado: CAN the prediction game be won? ~10s timescale: the timescale relevant for the Prediction Game Sensitive to individual events: in some chaotic manner. The hypothesis is that, by altering the quantum states of some crucial molecules in the brain, while leaving the brain’s overall *macroscopic* configuration unaltered, you could initiate a cascade of events that first changes the configurations of larger groups of molecules, which then affects whether some neuron fires or doesn’t fire, which would then affect the firing pattern of some larger group of neurons, which would finally cause you to say one thing and not another. Whether something like this could be true, or IS true, seems to me like a profound empirical question for neuroscience, biology, chemistry, physics. I don’t want to presume to spout about matters far from my expertise. I’ll just confine myself to two points. First, it seems far from obvious whether this hypothesis is true. There are strong intuitions that one could advance on either side of the question. Second, there’s nothing about this question that should make it fundamentally unanswerable. One could imagine experimental evidence mounting for the hypothesis, and one could also imagine evidence mounting against it.

- The point I want to make is that, IF you believe Hypothesis H… No-Cloning Theorem: There’s no general procedure to copy an unknown state, even approximately – or, what amounts to the same thing, there’s no way to measure a quantum state to unlimited accuracy. This idea of appealing to the No-Cloning Theorem in the context of brain prediction, I like to call “Penrose Lite.” It affirms Roger Penrose’s hope that the actual content of modern physics would have something to say about the brain, but then it rejects almost the entire rest of his program. Notice that there are no uncomputable quantum gravity effects acting in microtubules, for that matter no coherent quantum computing of any kind being postulated to occur in the brain, no gravitationally-induced objective state vector reduction (or really any particular stance about the measurement problem), and certainly no (I would say) mistaken appeals to Gödel’s Incompleteness Theorem. There’s just the No-Cloning Theorem, together with this hypothesis about chaotic amplification of small effects that could take place in the brain.
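
For reference, the exact-copying case of the No-Cloning Theorem has a short standard derivation, sketched below. The symbols $U$, $|\psi\rangle$ and $|\varphi\rangle$ are notation introduced for this sketch. The "even approximately" version stated above needs a separate, quantitative argument that is not shown here.

```latex
\begin{align*}
&\text{Suppose a unitary } U \text{ satisfies }
  U\bigl(|\psi\rangle \otimes |0\rangle\bigr) = |\psi\rangle \otimes |\psi\rangle
  \text{ for every state } |\psi\rangle. \\
&\text{Unitaries preserve inner products, so for any two states }
  |\psi\rangle, |\varphi\rangle: \\
&\langle \psi | \varphi \rangle \, \langle 0 | 0 \rangle
  = \langle \psi | \varphi \rangle^{2}
  \;\Longrightarrow\;
  \langle \psi | \varphi \rangle \in \{0, 1\}.
\end{align*}
```

So a single procedure can copy two states only if they are identical or orthogonal, which rules out a cloner that works on every unknown state.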

- As a simple example, you could imagine a qubit that can be measured in two different orthogonal bases – in one basis to find out whether you prefer chocolate or vanilla, or in a conjugate basis to learn whether you prefer boxers or briefs. Given either question, you can make a measurement to learn the answer to that question. What you can’t do is copy the qubit---or more generally, create ANY second system that encodes the answer to either of the two questions.
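
The one-qubit example can be illustrated with a small Born-rule calculation. This sketch assumes the textbook choice of conjugate bases, the computational basis and the Hadamard basis, which the talk does not specify. The assignment of labels to basis vectors and the function name are also arbitrary choices made for illustration.

```python
import math

S = 1 / math.sqrt(2)

# Assumed encoding: real amplitudes (a, b) for the state a|0> + b|1>.
BASES = {
    "flavor": {"vanilla": (1.0, 0.0), "chocolate": (0.0, 1.0)},
    "underwear": {"boxers": (S, S), "briefs": (S, -S)},
}

def outcome_probabilities(state, basis_name):
    """Born rule for real amplitudes: P(outcome) = <outcome|state>^2."""
    probs = {}
    for label, (a, b) in BASES[basis_name].items():
        amplitude = a * state[0] + b * state[1]
        probs[label] = amplitude ** 2
    return probs
```

Under these assumptions, a qubit prepared as "chocolate" answers the flavor question with certainty, but `outcome_probabilities((0.0, 1.0), "underwear")` gives 0.5 for each outcome. Whichever question is asked gets an answer, yet the single qubit never carries definite answers to both at once.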

- You might say, but that’s a silly example. Can we actually put some teeth on the No-Cloning Theorem, and use it to get quantum states that we can actually DO something useful with, but that we can’t use to produce more quantum states that let us do the same useful thing? Recent results in quantum computing theory have illustrated very strongly that the answer to that question is yes. In fact, it was in the context of these results that I first started thinking about the clonability or otherwise of the brain. (Or maybe it was thinking about clonability of the brain that led me to think about these other things?) The first set of results concerns quantum money: a dollar bill (say) that would have some qubits attached to it, and which therefore couldn’t be counterfeited according to the laws of physics. This is actually an old idea, going back to Stephen Wiesner around 1969. Wiesner proposed a quantum money scheme that was provably secure, but it had the drawback that the only one who knows how to verify the money as legitimate is the bank that printed it. Recently, Ed Farhi, Peter Shor, myself, and a number of others have been revisiting Wiesner’s idea. Our goal has been to find a quantum money scheme where ANYONE can verify the money (but it’s still hard to counterfeit). Here, we need to assume not only the validity of quantum mechanics, but also various computational complexity assumptions. The most recent and probably most satisfactory of these schemes was proposed by myself and Paul Christiano. A related idea is quantum copy-protected software: a quantum state psi_f that… Again, we have evidence that this is indeed possible for certain types of functions f (e.g., a function that recognizes a hidden password).

- The fifth ingredient is something called “Knightian uncertainty.” Some people would argue that the recent financial collapse provided a good illustration of this! While the idea sounds fuzzy, there are actually formal tools for dealing with Knightian uncertainty, of which the best-known is probably the Dempster-Shafer theory of belief functions. These tools let us make sense of assertions like, “I think there’s more than a 40% chance that Obama will get reelected and less than an 80% chance, but I’m unwilling to quantify further than that.” Now, suppose the Prediction Game could not be won, even by a Predictor with unlimited computational power (but who’s constrained to measuring your brain in ways consistent with QM). Then I claim that that Predictor would HAVE to be in a situation of Knightian uncertainty about the relevant initial conditions – which could either mean the state of the universe at the big bang, or indexical questions: where are WE within the inflating universe, or which branch of the Everett wavefunction are WE in? My argument is simply that, if the Predictor didn’t have such uncertainty, then it WOULD be able to win the Prediction Game with high probability, contrary to assumption! In other words, after the game was over, the Predictor could always look back and say: well, I didn’t predict this person’s brain perfectly, but that’s to be expected: the brain involves a (known) stochastic process, so NOTHING can predict it perfectly. I did as well as I could given the circumstances. I gave probabilities that, knowing everything I know now about this stochastic system, I couldn’t have improved on. But if the Prediction Game is unwinnable, then that’s not the situation at all! The Predictor will look back on the game and think, “damn! I could’ve done better, knowing what I know now. There *were* regularities in the sequence of answers; I just failed to detect those regularities.”
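
The "between 40% and 80%" assertion can be sketched with Dempster-Shafer belief functions. The mass assignment below is an illustrative assumption chosen to reproduce roughly that interval, and the helper names are hypothetical. Belief gives the lower bound on a hypothesis, and plausibility gives the upper bound.

```python
def belief(mass, hypothesis):
    """Total mass committed to subsets of `hypothesis` (lower bound)."""
    return sum(m for focal, m in mass.items() if focal <= hypothesis)

def plausibility(mass, hypothesis):
    """Total mass that does not contradict `hypothesis` (upper bound)."""
    return sum(m for focal, m in mass.items() if focal & hypothesis)

WIN, LOSE = "reelected", "not reelected"

# Hypothetical mass function; the numbers are chosen only for illustration.
mass = {
    frozenset({WIN}): 0.4,        # evidence pointing squarely at reelection
    frozenset({LOSE}): 0.2,       # evidence pointing squarely against it
    frozenset({WIN, LOSE}): 0.4,  # mass left uncommitted between the two
}
```

Here `belief(mass, frozenset({WIN}))` is 0.4 and `plausibility(mass, frozenset({WIN}))` is 0.8. The uncommitted 0.4 is what keeps the state of knowledge from collapsing to a single probability.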

- At this point, I need to address an obvious objection. People will say: look… This leads to a radical speculation about time, finally bringing things around to the subject of this conference! I submit that, IF the Prediction Game was unwinnable, … … not of a sort that can lead to Grandfather Paradoxes. Why? Because here we’re postulating that this retrocausation can only take place for quantum states that no one could have possibly measured anyway since the beginning of the universe, because of the No-Cloning Theorem. So although there’s retrocausation, it’s still a one-way or directional process. You can imagine, if you like, that there are qubits all over the world today which have been in states of Knightian uncertainty since the Big Bang. Maybe we should call them “willbits.” By making a decision, you can retroactively determine the quantum state of one of these willbits. But then once you determine it, that’s it! There’s no going back.

- We’re led to the following picture of the world: there’s a quantum state of the universe living on some initial hypersurface. Maybe it’s at the Big Bang, or maybe it’s even a different initial hypersurface, say a lightlike one. Together with the Hamiltonian, this quantum state completely determines the future evolution of the universe. However, the initial state ITSELF is not completely determined---at least, not yet. There are qubits living on the initial hypersurface for which we can’t reasonably ascribe a pure state or even a mixed state. We can only say we don’t know their state: we have Knightian uncertainty. Now, the time evolution propagates forward, and things happen – we get stars, galaxies, etc. – and in particular, many of these qubits about which we initially had Knightian uncertainty get amplified to a macroscopic scale. They decohere. And at that point, a decision has to be made about them. So the entire spacetime history can be seen as a process that determines, in retrospect, what its own initial state was! You might reasonably ask: why focus on an initial hypersurface here? Why not some future spacelike hypersurface? Well, we could. That would work just as well. But as many people at this conference have expounded, there DOES seem to have been a state to our past that was very, very special, by virtue of having low entropy! And because of that, it’s much more natural to us to talk about time flowing forward from the initial state than about its flowing backward – except, I’m claiming, in a few special circumstances where it actually makes more sense to talk in terms of retrocausation.

- This seems like a weird picture. We can ask: are there independent reasons… Now comes the speculative part of the talk, where I’m REALLY gonna go out of my depth. Let me describe a slight variant of the traditional problem of free will and determinism, which I’ll call the “Black Hole Free Will Problem.” Here, the story is, you jump into a black hole. When you’re well past the event horizon, but before you hit the singularity, you decide to wave your arm. Here, can you see him waving? ..dual description living on the event horizon. But that seems incredibly hard to reconcile with the idea of free will. How does the event horizon “know” your decision? To put the point more sharply: imagine a superintelligent Predictor who’s sitting outside the event horizon, scooping up all the Hawking radiation as it comes out. Now, assuming the modern belief that the black hole doesn’t destroy any information, in principle, this Predictor who’s outside the event horizon could reconstitute your entire quantum state, and would therefore be able to know that, as you were falling toward the singularity, you waved your arm. But the Predictor has never even met you! Or better, he’s only met you in the rather sorry form of Hawking radiation. He didn’t see the state of the universe BEFORE you waved, and work forward from that to predict what you would do. He also didn’t see the state of the universe AFTER you waved, and work backward to figure out what you did. Instead, he only ever saw this quantum state which is supposed to be somehow “dual” to you, but which seems incredibly hard to identify with you or your choices. The point I want to make is that the account of free will I’m suggesting can not only accommodate this dual description of you that lives one dimension lower – in some sense, it DEMANDS that there exist such a description!
Because it says that, if you think clearly about the puzzle of free will versus determinism, then you HAVE to look at this dual description that lives on an initial hypersurface. Indeed, it says that the puzzle of free will versus determinism arises precisely BECAUSE we’re incorrectly looking for the answer to it in ordinary (3+1)-dimensional spacetime, rather than in this lower-dimensional dual description that encodes the same information. As I said, this was the speculative part of the talk.

- The principle that James Randi’s million dollars is safe. A crucial question is, why couldn’t you reconceptualize time in the way I’m suggesting even in a purely *classical* universe? The answer is that you could – BUT if you don’t have the No-Cloning Theorem, or some other source of unclonability, then you’ll need to abandon at least one of these two principles. Imagine, for example, that someone writes a classical book that stores the entire state of your brain. We’d then need to imagine that your decision affects not only the current state of your brain, not only the initial state of the universe that evolves into your brain, but that it also affects what’s written in the book. That’s essentially paranormal. What quantum mechanics lets us do (and maybe some people would find this ironic) is *avoid* the paranormal implication.

- I admit: the idea that the Prediction Game can’t be won strikes me as science fiction, even setting aside my proposed explanation of how the world might be in such a case, in terms of a dual description with no time in it, which obviously is a further speculation. On the other hand, the idea that the Prediction Game CAN be won ALSO strikes me as science fiction! The problem is NOT that some inner voice tells me I have a soul whose leaps of imagination could never be predicted by a machine. It’s nothing of the kind. For me, the difficulty is much more basic: if there were all these simulated copies of me running around, how could I localize myself in the world for the purpose of doing science? How could I ever know whether I was the “real” me or one of the simulations? If there are two identical copies of me, do I now have twice as much consciousness? Does it matter if only one of the copies is being “run,” and the other one’s just a backup? I’d now have to worry about paradoxes of decision theory, like Newcomb’s Paradox, where I have to do something that seems obviously irrational, like take only a small fraction of the money that I’m offered – because some superintelligent Predictor has already infallibly predicted what I’m gonna do, and if he predicted that I’m gonna take ALL the money, then he won’t offer me any of it. In all these cases, it seems simpler, more elegant, to conjecture that perfect prediction is impossible, that there’s some fundamental reason why these copies or simulations of me can’t exist. For me, the most crucial point is that the question of which scenario is right is not just a metaphysical conundrum. Rather, it’s essentially an ordinary scientific question. It’s something that physics, CS, neurobiology, chemistry, and so on could very plausibly make progress on, by better understanding the physical substrate of the brain and to what extent its behavior can actually be predicted.