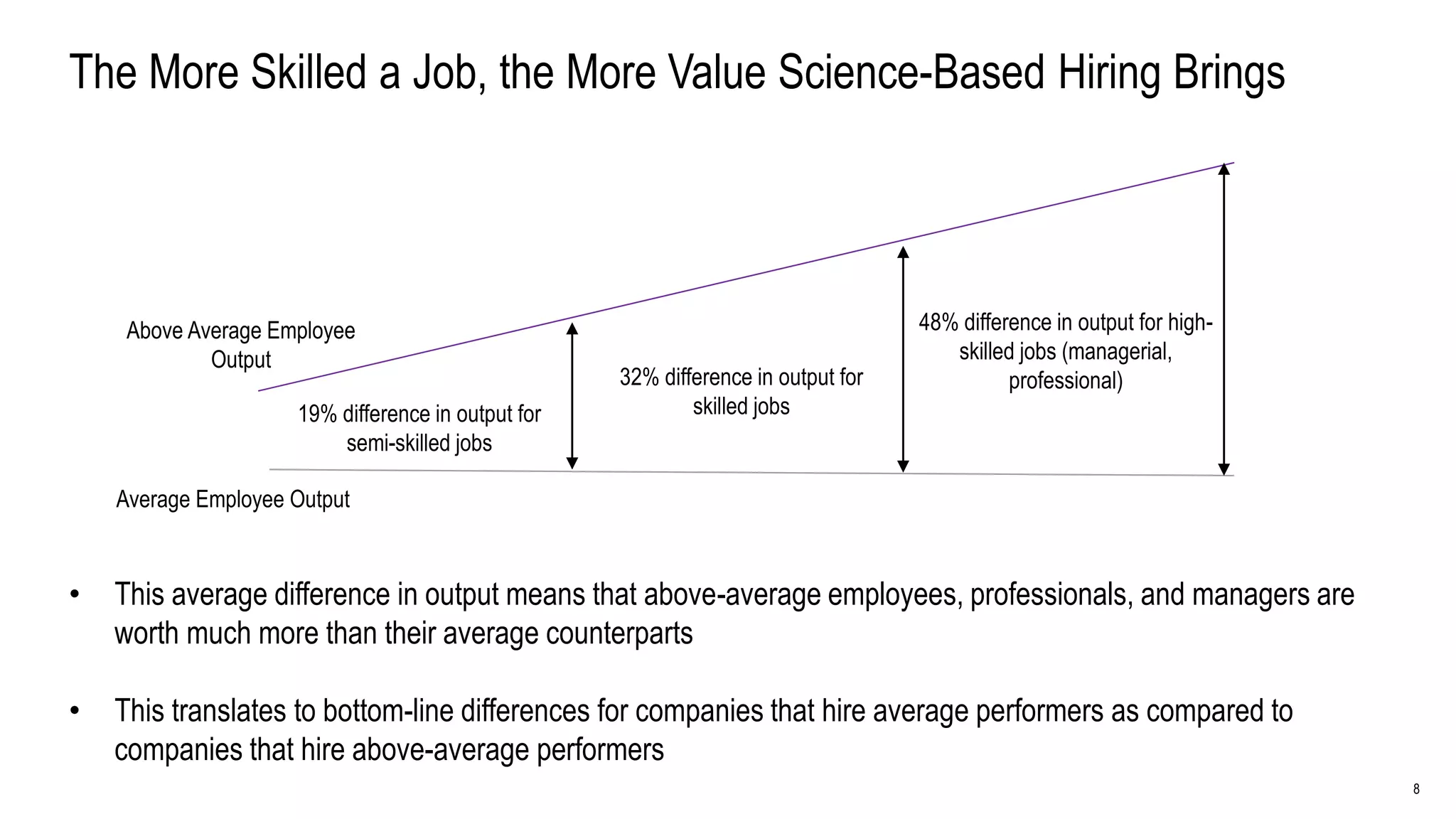

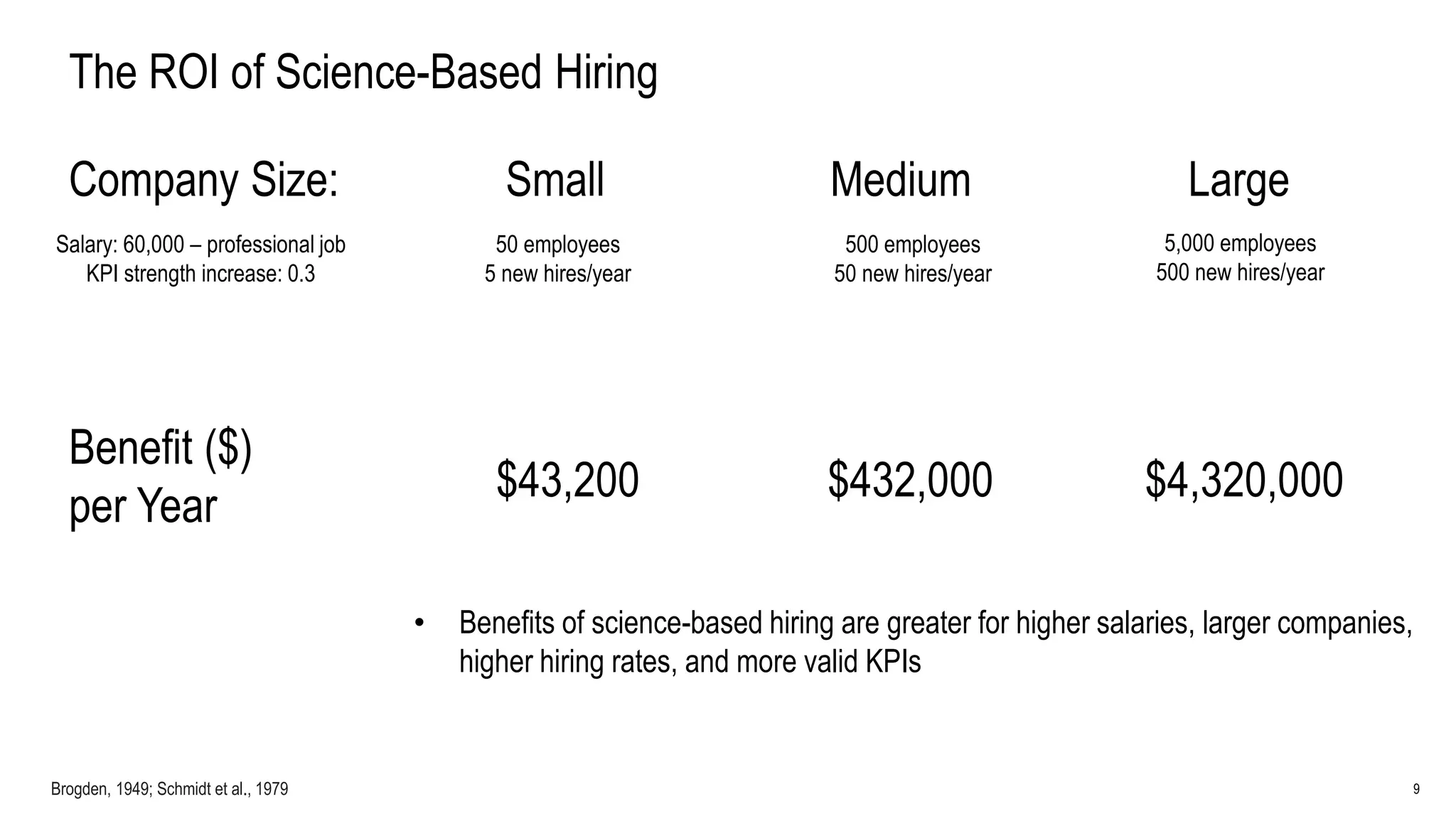

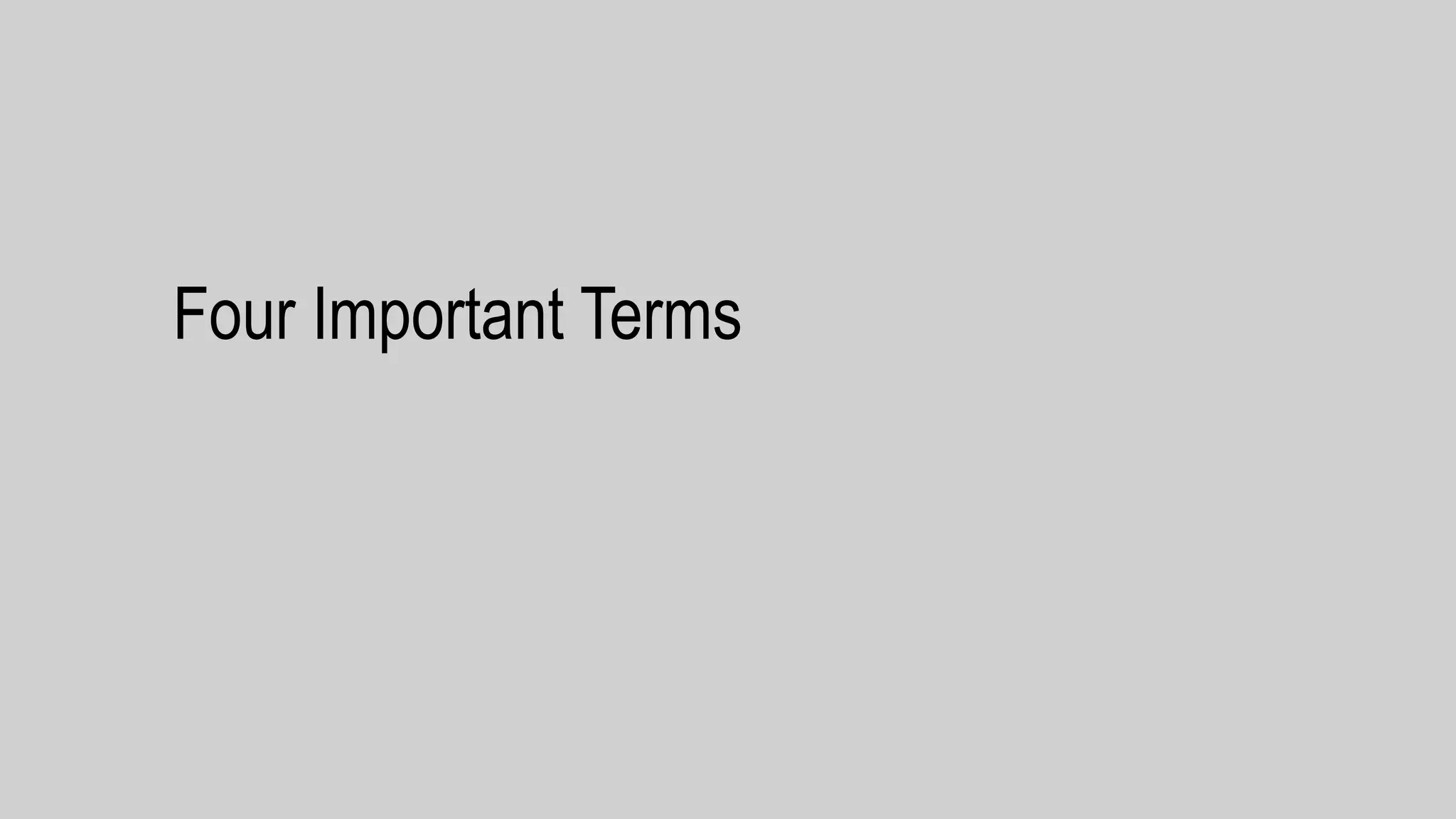

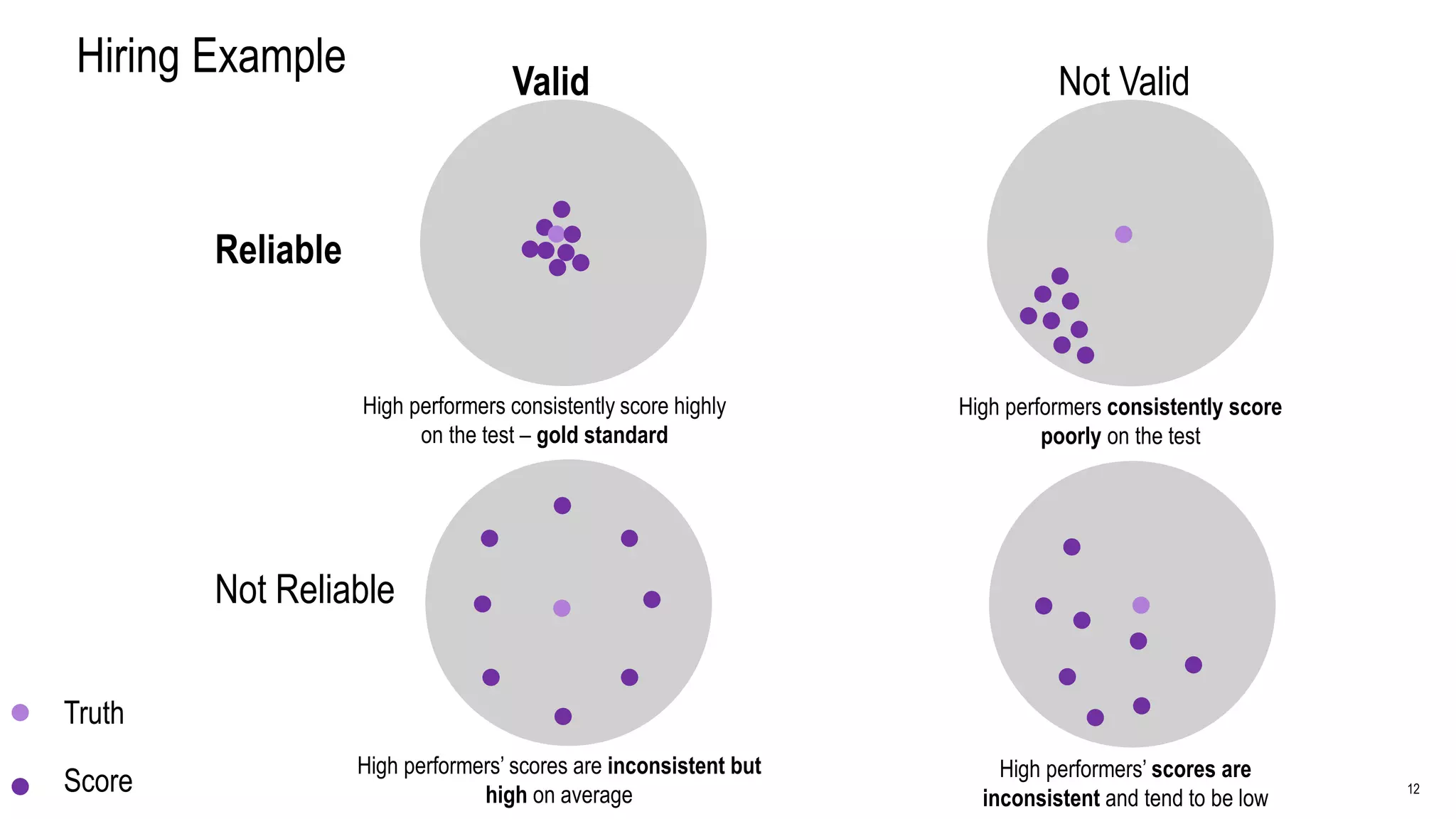

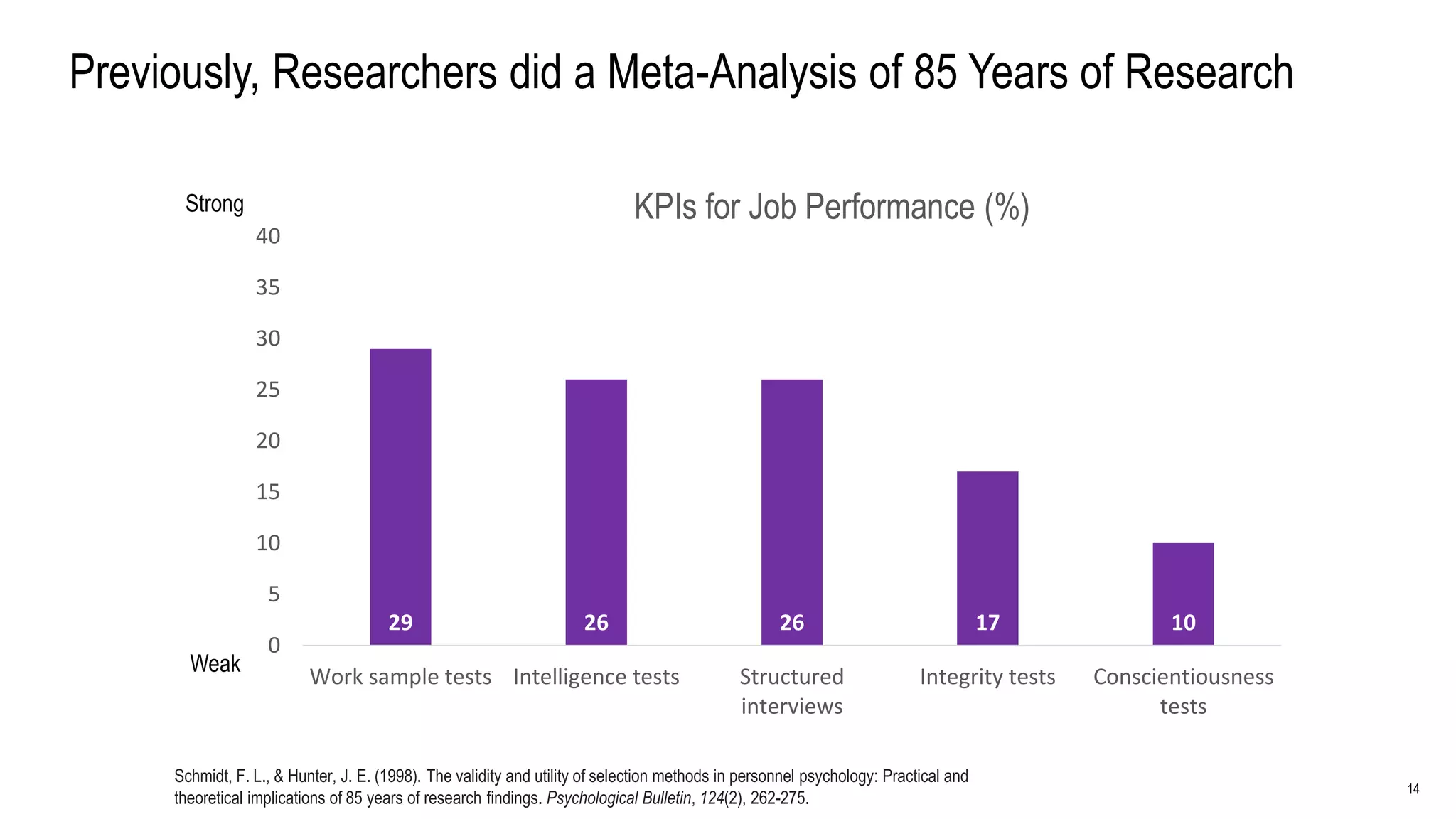

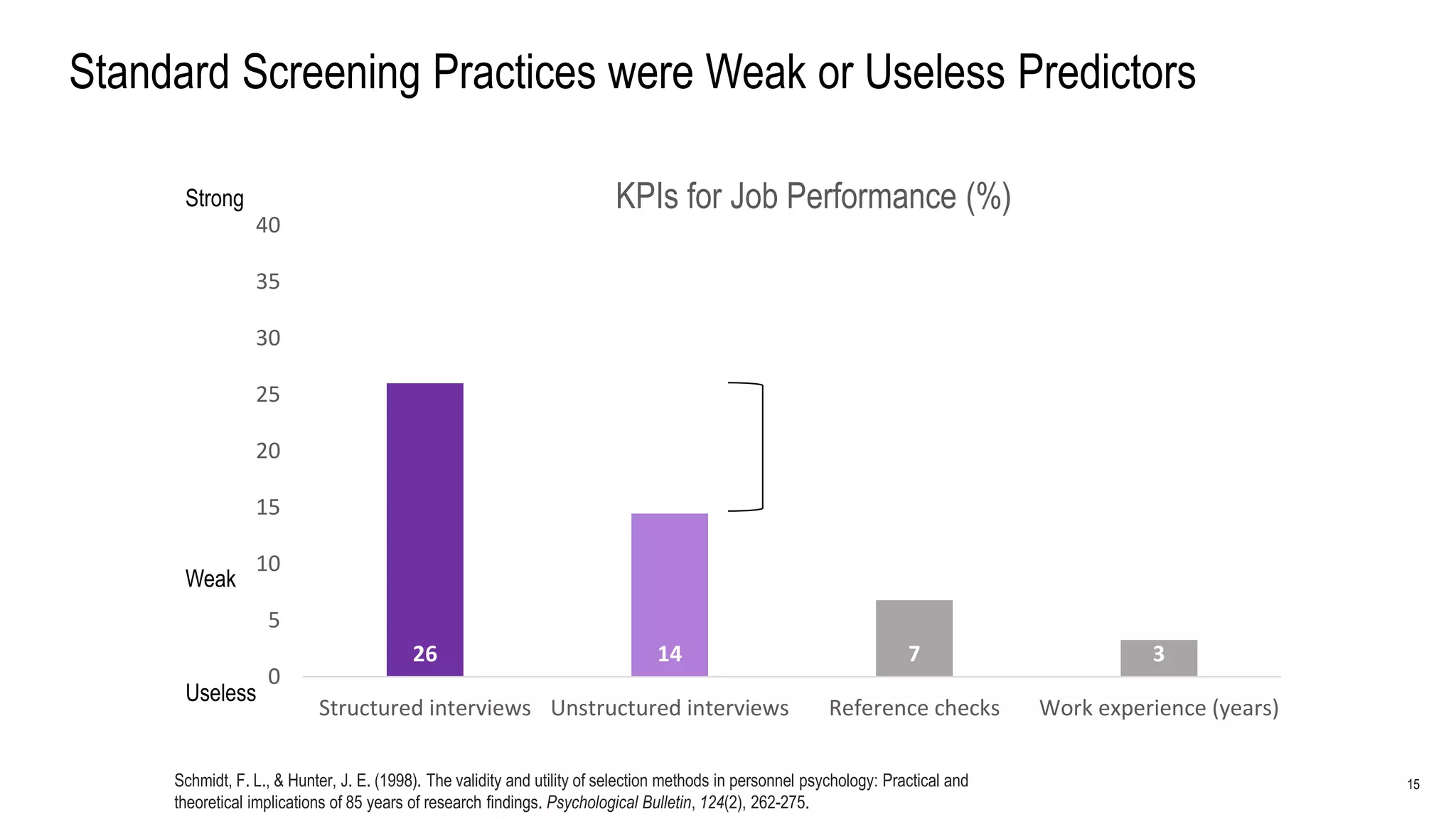

The document reviews a century of hiring research, emphasizing the importance of using science-based hiring methods to enhance employee performance and organizational efficiency. It identifies General Mental Ability (GMA) as the strongest predictor of job performance, advocating for its combination with integrity tests and structured interviews for improved outcomes. Additionally, it highlights the economic benefits of adopting these best practices, particularly in high-skilled job environments.

![24

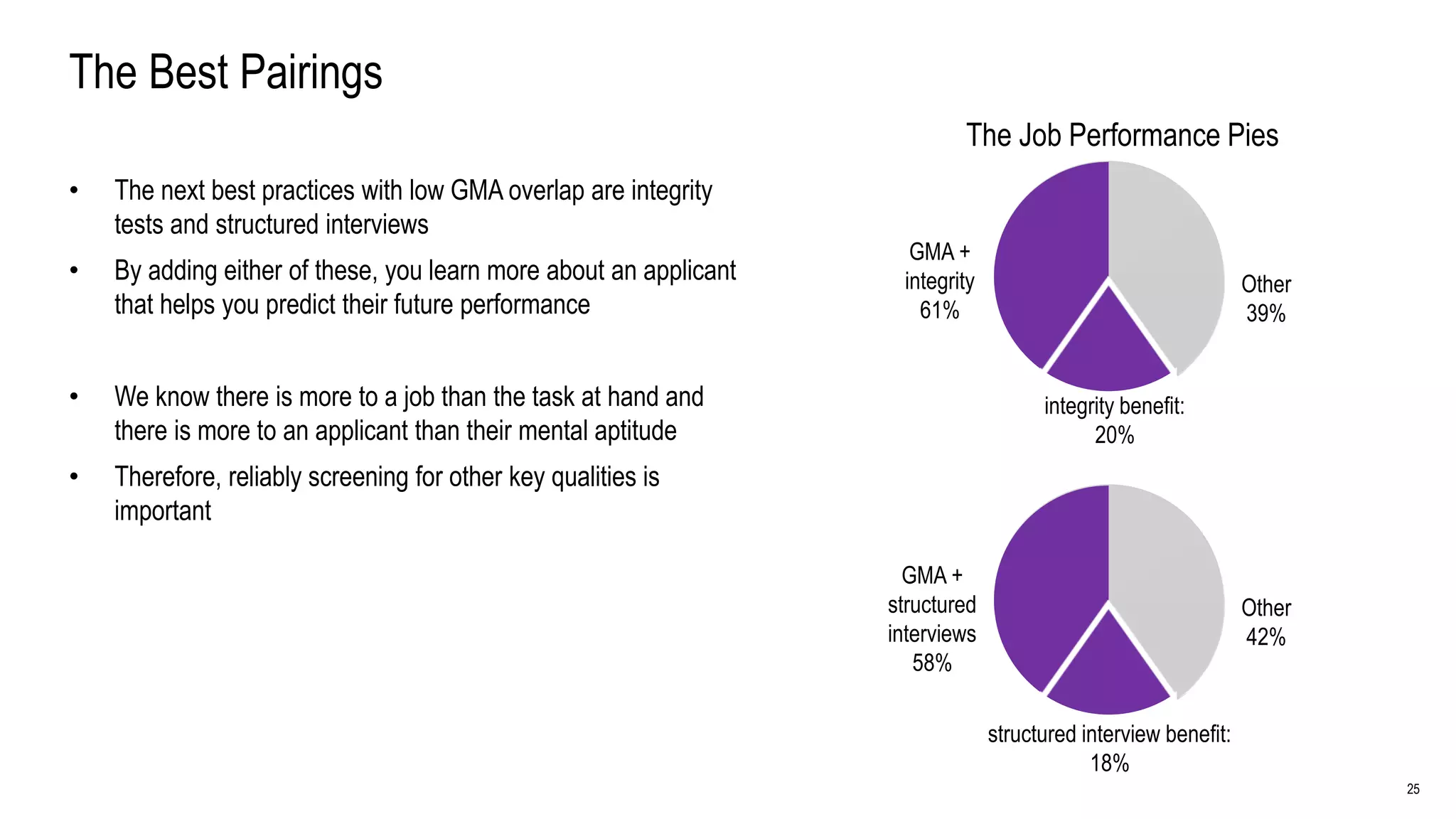

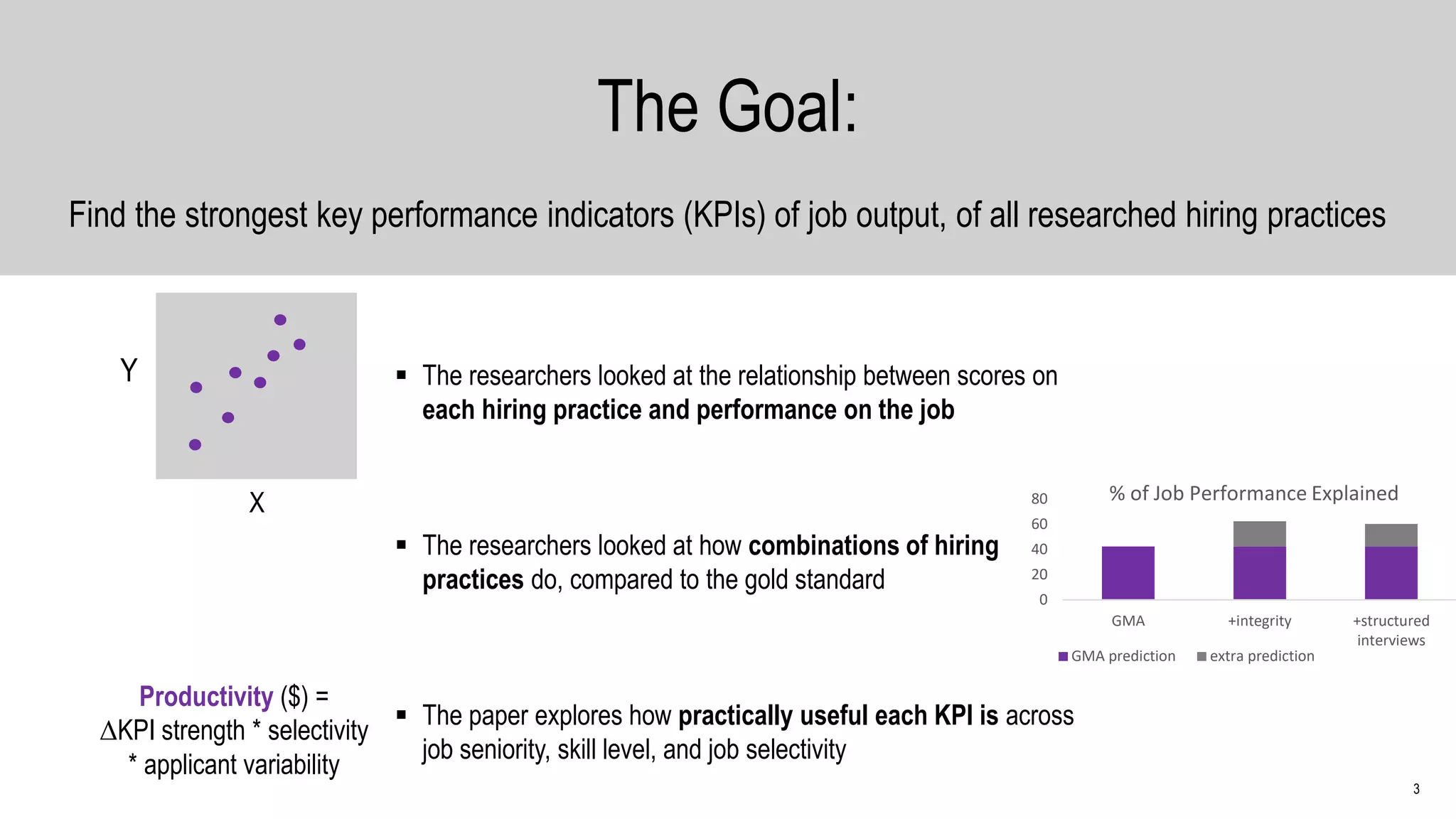

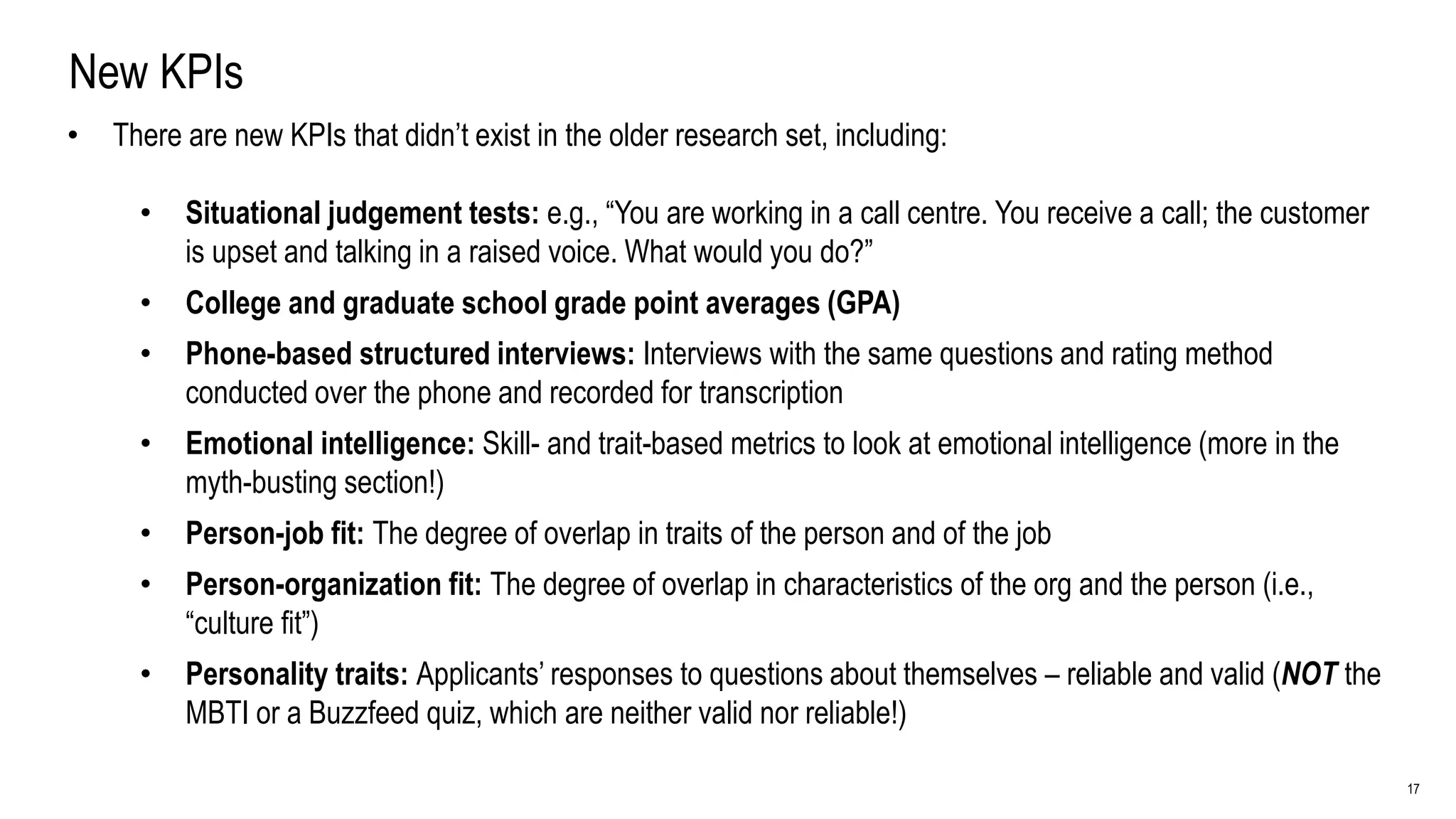

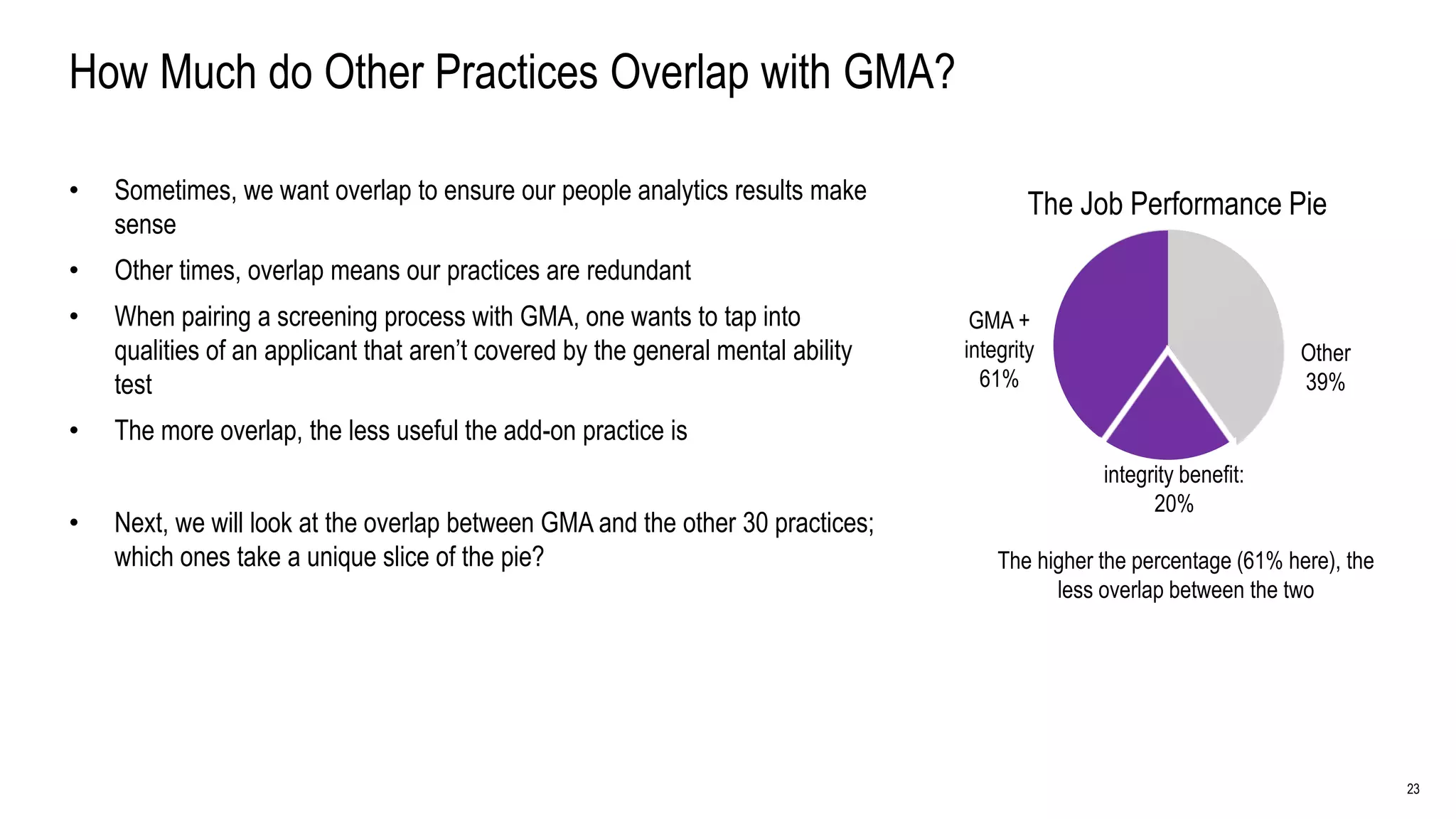

The Added Benefit of Non-GMA Practices GMA +

[practice] Other

[practice] benefit

Ranking Practice

[Practice] Benefit

(correlation)

[Practice] Benefit

(pie slice: %)

1 integrity 0.13 20

2 structured interviews 0.117 18

3 unstructured interviews 0.087 13

4 interests 0.062 10

5 structured phone interview 0.057 9

6 conscientiousness 0.053 8

7 reference checks 0.05 8

8 openness to experience 0.039 6

9 biographical data 0.036 6

10 job experience (years) 0.032 5

11 trait-based emotional intelligence 0.029 5

12 person-organization fit 0.024 4

13 situational judgement test (knowledge) 0.015 2

14 person-job fit 0.014 2

15 assessment centers 0.013 2

16 T&E point method 0.009 1

17 GPA 0.009 1

18 years of education 0.008 1

19 extraversion 0.006 1

20 peer ratings 0.006 1

21 skill-based emotional intelligence 0.004 0

22 agreeableness 0.002 0

23 work sample tests 0.002 0

24 situational judgement test (behavior) 0.001 0

Zero benefit correlation or pie slice %:

• emotional stability

• Graphology

• job shadowing

• past achievements description

• job knowledge tests

• age](https://image.slidesharecdn.com/science-basedhiring-180215230125/75/Science-Based-Hiring-An-Actionable-Guide-24-2048.jpg)