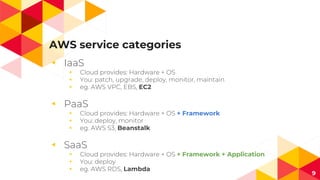

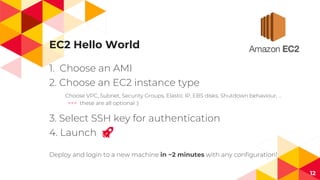

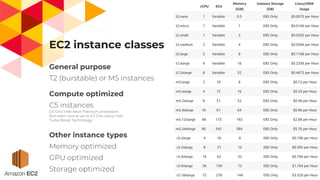

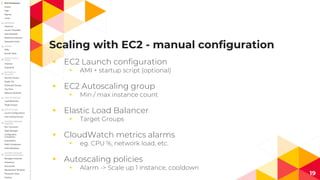

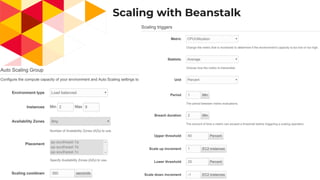

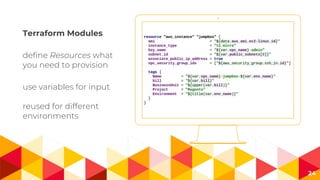

The document discusses scaling on AWS, covering AWS services, infrastructure as code, and practical experience deploying and managing resources. It highlights the advantages of AWS, including the ability to scale resources automatically, and compares the IaaS, PaaS, and SaaS service categories. It emphasizes using tools such as Terraform to provision and manage cloud resources efficiently.
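
To make the idea of automatic scaling concrete, here is a minimal sketch of a proportional scaling rule, similar in spirit to target tracking. It is an illustration under stated assumptions, not AWS's actual algorithm: the function name `desired_capacity` and its parameters are hypothetical, and a real scaling policy also involves cooldowns, metric aggregation, and warm-up periods that are omitted here.

```python
import math


def desired_capacity(current: int, metric: float, target: float,
                     min_size: int, max_size: int) -> int:
    """Return an instance count that would bring the metric back toward the target.

    Hypothetical helper: scales the current count in proportion to how far
    the observed metric (e.g. average CPU %) is from the target value,
    then clamps the result to the group's size limits.
    """
    if target <= 0:
        raise ValueError("target must be positive")
    # If load per instance is 1.5x the target, we need about 1.5x the instances.
    raw = math.ceil(current * metric / target)
    # Never go below the minimum or above the maximum group size.
    return max(min_size, min(max_size, raw))


if __name__ == "__main__":
    # 4 instances at 90% average CPU with a 60% target
    print(desired_capacity(4, 90.0, 60.0, min_size=1, max_size=10))
```

The clamp to `min_size` and `max_size` reflects the usual practice of bounding a scaling group so that a metric spike cannot provision an unbounded number of instances.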