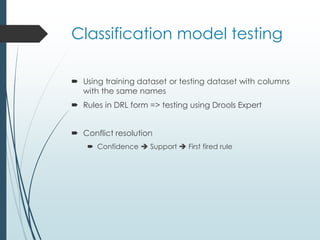

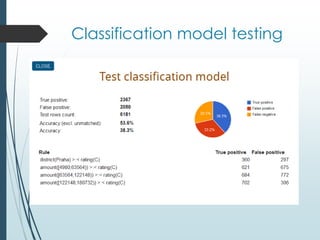

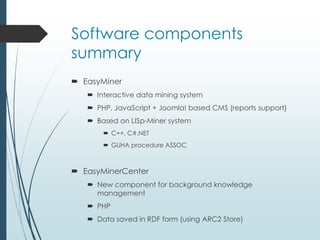

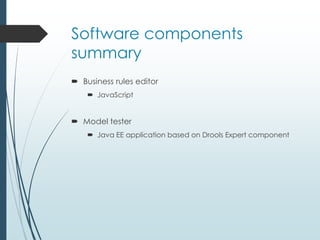

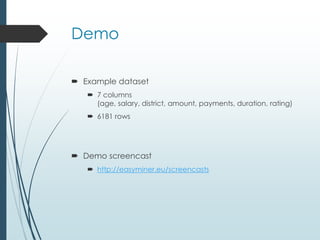

The document describes EasyMiner, an interactive tool that lets users both mine association rules from data automatically and select and edit rules manually. The tool supports data preparation, association rule mining, selection of rules for a classification model, testing and editing of that model, and integration of the rules into a knowledge base. Future work aims to improve rule pruning and integration with background knowledge.
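The summary does not say which mining or classification algorithms EasyMiner uses. As a hedged illustration of the "mine rules, then select them for a classification model" steps, here is a minimal brute-force sketch that assumes a CBA-style approach. It mines class association rules (antecedent → class label) from a hypothetical toy dataset, keeps the rules that meet support and confidence thresholds, and orders them by confidence, then support, then antecedent length. It then classifies a record using the first rule that matches. The dataset, function names, and thresholds are all assumptions for illustration and are not EasyMiner's API.

```python
from itertools import combinations

# Hypothetical toy dataset: each row is (set of attribute=value items, class label).
ROWS = [
    ({"outlook=sunny", "windy=no"}, "play=yes"),
    ({"outlook=sunny", "windy=yes"}, "play=no"),
    ({"outlook=rainy", "windy=yes"}, "play=no"),
    ({"outlook=overcast", "windy=no"}, "play=yes"),
    ({"outlook=rainy", "windy=no"}, "play=yes"),
]


def mine_class_rules(rows, min_support=0.2, min_confidence=0.6, max_len=2):
    """Brute-force mining of rules 'antecedent -> class label' (illustrative only)."""
    n = len(rows)
    items = sorted(set().union(*(r for r, _ in rows)))
    labels = sorted({c for _, c in rows})
    rules = []
    for k in range(1, max_len + 1):
        for antecedent in combinations(items, k):
            ant = frozenset(antecedent)
            # Class labels of all rows whose items contain the antecedent.
            covered = [c for r, c in rows if ant <= r]
            if not covered:
                continue
            for label in labels:
                hits = covered.count(label)
                support = hits / n                # fraction of all rows matching ant and label
                confidence = hits / len(covered)  # fraction of covered rows with this label
                if support >= min_support and confidence >= min_confidence:
                    rules.append((ant, label, support, confidence))
    # CBA-style ordering: higher confidence, then higher support, then shorter antecedent.
    rules.sort(key=lambda rule: (-rule[3], -rule[2], len(rule[0])))
    return rules


def classify(rules, items, default):
    """Return the label of the first (highest-ranked) rule whose antecedent matches."""
    for ant, label, _, _ in rules:
        if ant <= items:
            return label
    return default


if __name__ == "__main__":
    rules = mine_class_rules(ROWS)
    for ant, label, sup, conf in rules:
        print(sorted(ant), "->", label, f"support={sup:.2f} confidence={conf:.2f}")
    print(classify(rules, {"outlook=sunny", "windy=yes"}, default="play=yes"))
```

In an interactive tool, the manual-selection step would correspond to letting the user inspect, edit, or drop entries of `rules` before calling `classify`. Testing the model would then mean measuring how often `classify` reproduces the known labels on held-out rows.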