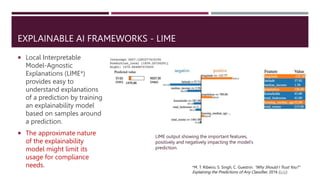

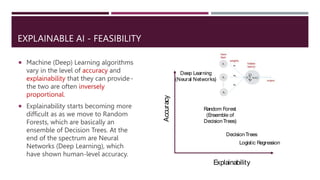

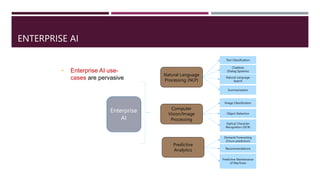

The document discusses the importance of ethical AI in enterprises, emphasizing transparent and fair AI practices to build trust and mitigate risks. It highlights regulatory challenges due to the lack of standardization and different definitions of responsible AI across organizations. Additionally, it covers the complexities of explainable AI, fairness, bias, and the need for robust data practices to ensure equitable outcomes.

![RESPONSIBLE AI](https://image.slidesharecdn.com/icdsaiagenaidebmalya-230913073223-0b477a72/85/Regulating-Generative-AI-LLMOps-pipelines-with-Transparency-4-320.jpg)

“Ethical AI, also known as responsible AI, is the practice of using AI with good intention to empower employees and businesses, and fairly impact customers and society. Ethical AI enables companies to engender trust and scale AI with confidence.” [1]

Failing to operationalize Ethical AI can not only expose enterprises to reputational, regulatory, and legal risks, but also lead to wasted resources, inefficiencies in product development, and even an inability to use data to train AI models. [2]

[1] R. Porter. Beyond the promise: implementing Ethical AI, 2020 (link)

[2] R. Blackman. A Practical Guide to Building Ethical AI, 2020 (link)