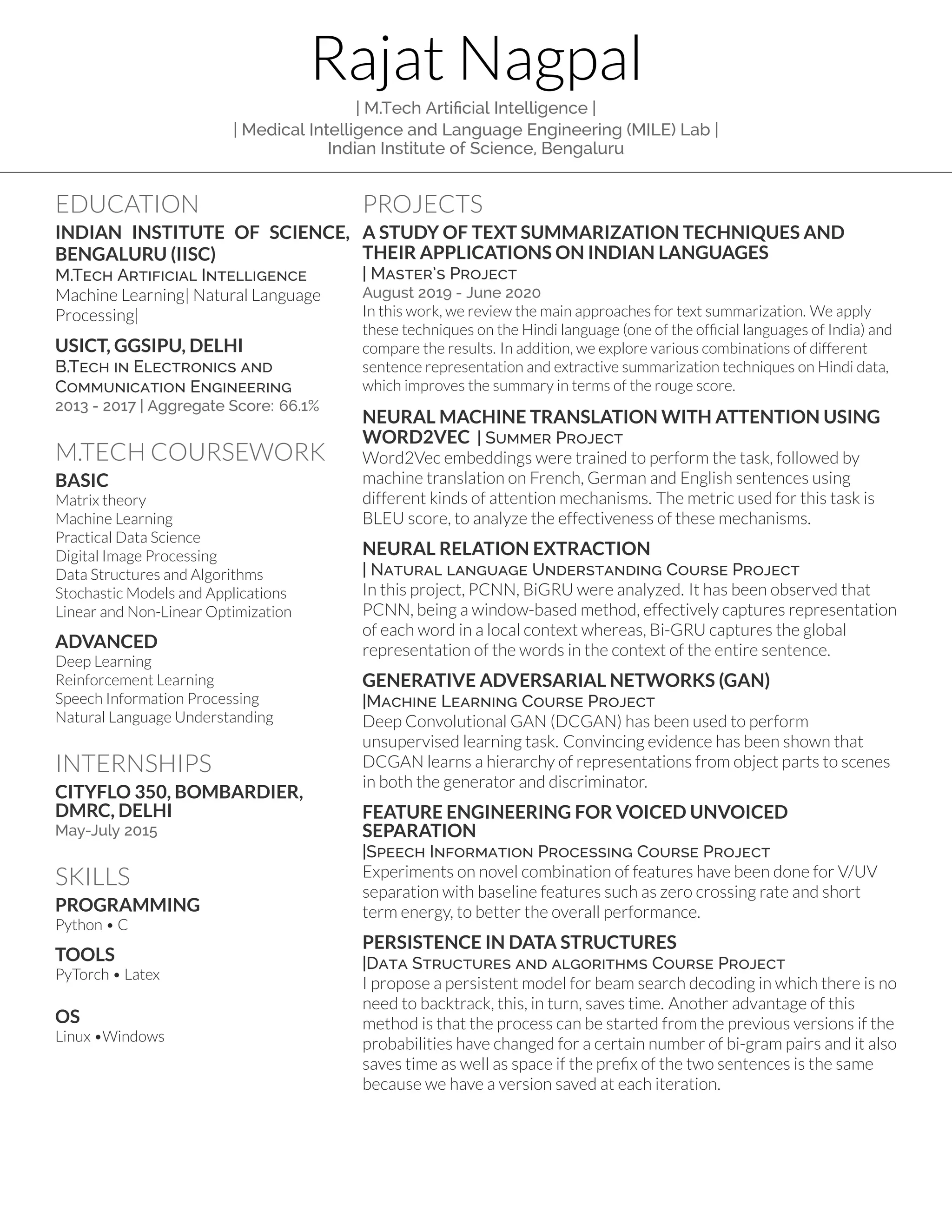

Rajat Nagpal received his M.Tech in Artificial Intelligence from the Indian Institute of Science, Bengaluru, in 2020. His areas of focus included machine learning, natural language processing, deep learning, and speech processing. He carried out several projects on text summarization, machine translation, relation extraction, and generative adversarial networks, among other topics. His skills include Python, C, PyTorch, and Linux, and he has work experience with Cityflo 350 and DMRC.