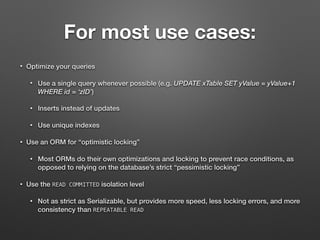

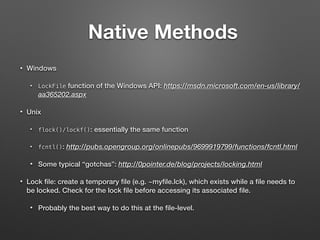

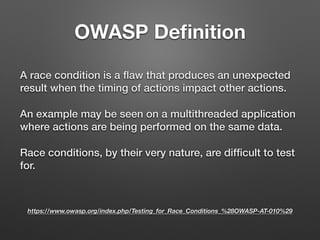

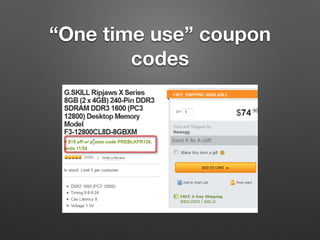

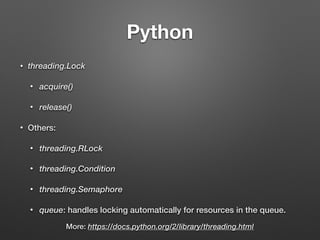

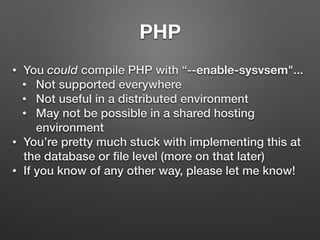

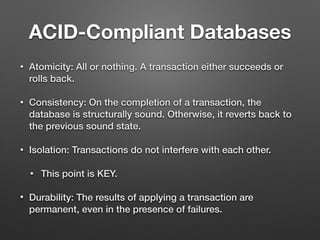

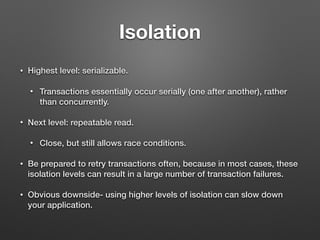

The document discusses race conditions in web applications, defining them as flaws in which the timing of concurrent actions produces unexpected results. It introduces 'race-the-web' (RTW), a tool for automating race condition discovery, and compares its speed and effectiveness with those of Burp Intruder. It also covers defenses against race conditions, including locking mechanisms and transaction isolation settings across different programming environments.
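To make the locking defense concrete, here is a minimal Python sketch of a check-then-act operation guarded by a lock. The `Coupon` class and the `race` helper are hypothetical names chosen for illustration and do not come from the slides; the sketch only assumes that many requests try to redeem the same single-use item at the same moment.

```python
import threading


class Coupon:
    """Hypothetical single-use coupon; redeem() is a check-then-act sequence."""

    def __init__(self):
        self.redeemed = False
        self._lock = threading.Lock()

    def redeem(self):
        # Holding the lock makes the check and the update one atomic step,
        # so two threads can never both see redeemed == False.
        with self._lock:
            if self.redeemed:
                return False
            self.redeemed = True
            return True


def race(coupon, workers=20):
    """Fire `workers` concurrent redeem attempts; return how many succeeded."""
    results = []
    barrier = threading.Barrier(workers)

    def attempt():
        barrier.wait()  # release all threads together to maximize contention
        results.append(coupon.redeem())

    threads = [threading.Thread(target=attempt) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results.count(True)
```

Calling `race(Coupon())` should report exactly one successful redemption however the threads interleave. Without the `with self._lock:` line, the check and the update become separate steps and more than one thread may pass the check. An in-process lock like this only protects a single process; state shared across servers needs a database-level or distributed mechanism instead.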

![MySQL (cont’d)

• System variable:

SET GLOBAL tx_isolation='SERIALIZABLE';

• Command-line option at mysqld startup:

--transaction-isolation=SERIALIZABLE

• Option file:

[mysqld]

transaction-isolation = SERIALIZABLE

• Command-line, BEFORE starting a transaction:

SET TRANSACTION ISOLATION LEVEL SERIALIZABLE;

More Info: https://dev.mysql.com/doc/refman/5.5/en/set-transaction.html](https://image.slidesharecdn.com/racingtheweb-hackfest-170614195953/85/Racing-The-Web-Hackfest-2016-50-320.jpg)
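As a rough illustration of the per-transaction option from the slide, the sketch below issues `SET TRANSACTION ISOLATION LEVEL SERIALIZABLE` before a unit of work, using only the generic Python DB-API calls `cursor()`, `execute()`, `commit()` and `rollback()`. The `run_serializable` helper, the `RecordingConnection` stand-in and the `coupons` table are assumptions made for this example, not part of the slides. A real MySQL driver connection would replace the stand-in, and the sketch assumes no transaction is already open when the helper is called.

```python
class RecordingCursor:
    """Stand-in cursor that records SQL instead of talking to a server."""

    def __init__(self, log):
        self.log = log

    def execute(self, sql, params=()):
        self.log.append(sql)

    def close(self):
        pass


class RecordingConnection:
    """Stand-in for a DB-API connection (hypothetical, for demonstration)."""

    def __init__(self):
        self.log = []

    def cursor(self):
        return RecordingCursor(self.log)

    def commit(self):
        self.log.append("COMMIT")

    def rollback(self):
        self.log.append("ROLLBACK")


def run_serializable(conn, work):
    """Run work(cursor) in one transaction at SERIALIZABLE isolation."""
    cur = conn.cursor()
    try:
        # Must be issued BEFORE the transaction starts; it applies only
        # to the next transaction on this connection.
        cur.execute("SET TRANSACTION ISOLATION LEVEL SERIALIZABLE")
        result = work(cur)
        conn.commit()
        return result
    except Exception:
        conn.rollback()  # undo partial work on any failure
        raise
    finally:
        cur.close()


def redeem(cur):
    # Hypothetical table and columns, shown only to give the transaction a body.
    cur.execute("UPDATE coupons SET used = 1 WHERE id = %s AND used = 0", (1,))


conn = RecordingConnection()
run_serializable(conn, redeem)
```

After the call, `conn.log` holds the isolation statement first, then the `UPDATE`, then `COMMIT`. Under SERIALIZABLE, MySQL may abort one of two conflicting transactions with a deadlock or lock-wait error, so real callers would typically retry the unit of work when that happens.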