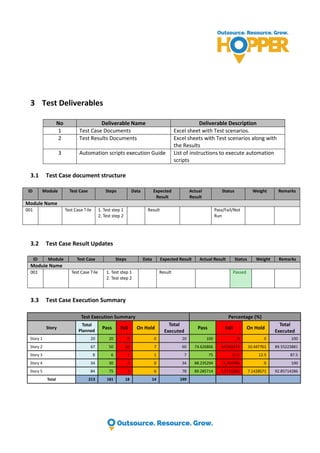

This case study describes a QA engagement for a machine vision mobile platform. The engagement aimed to improve product quality, reduce returns, and increase customer ROI. It combined comprehensive testing, test automation, and integration with Jira, through which issues were identified and resolved. Testing achieved high coverage, and no field issues were reported after shipment, showing the effectiveness of the QA process implemented by the Hopper QA team.