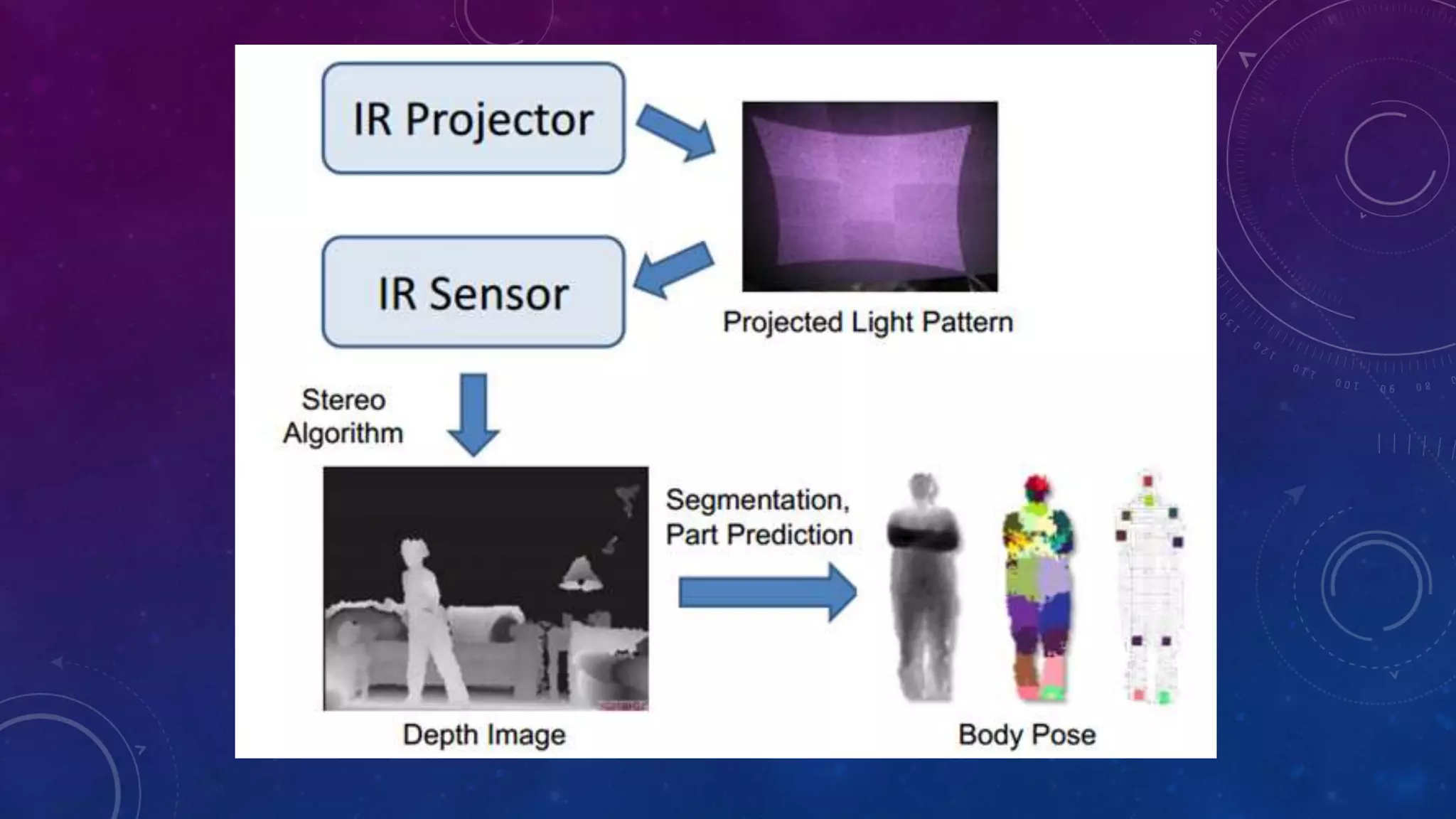

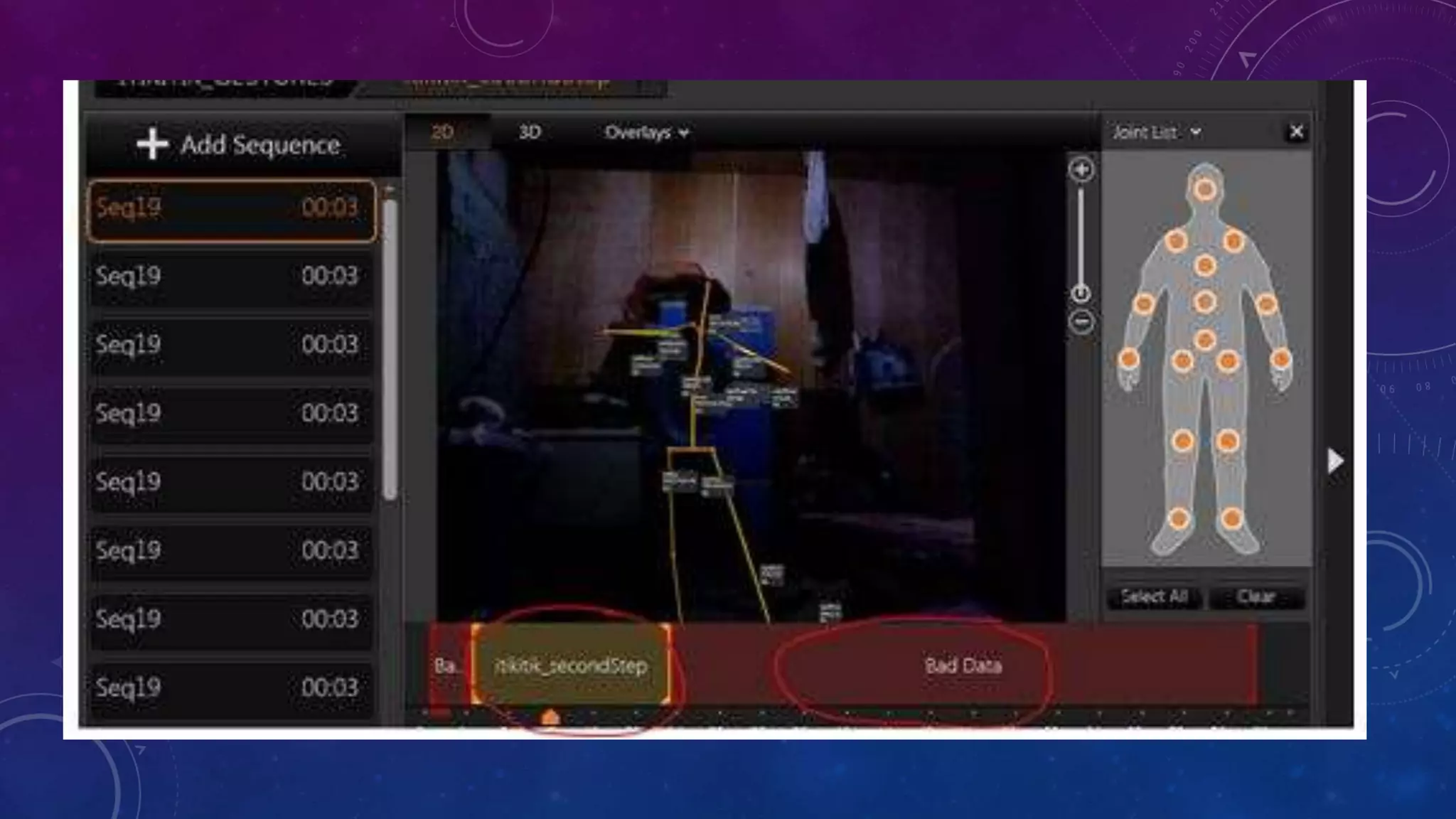

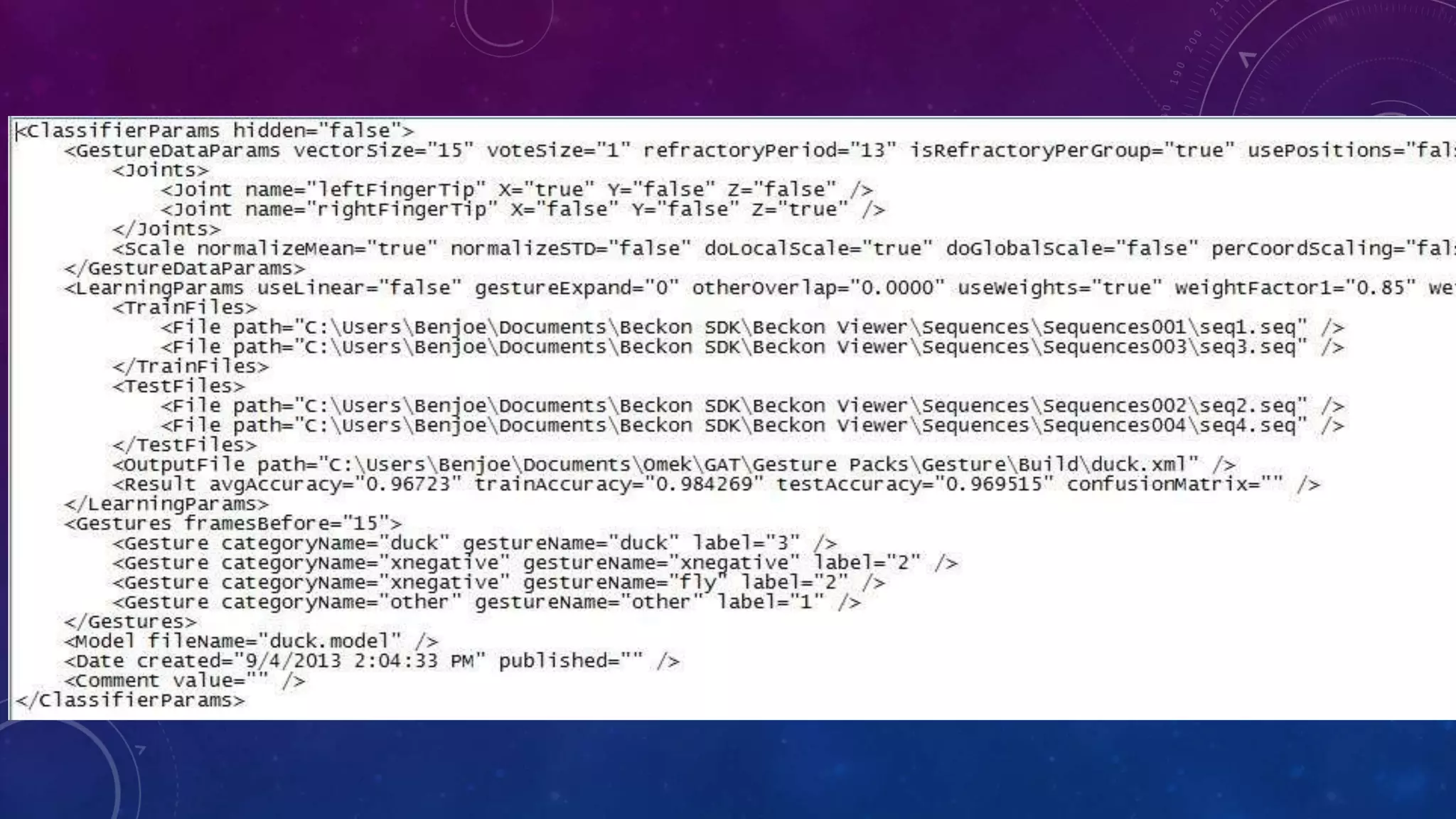

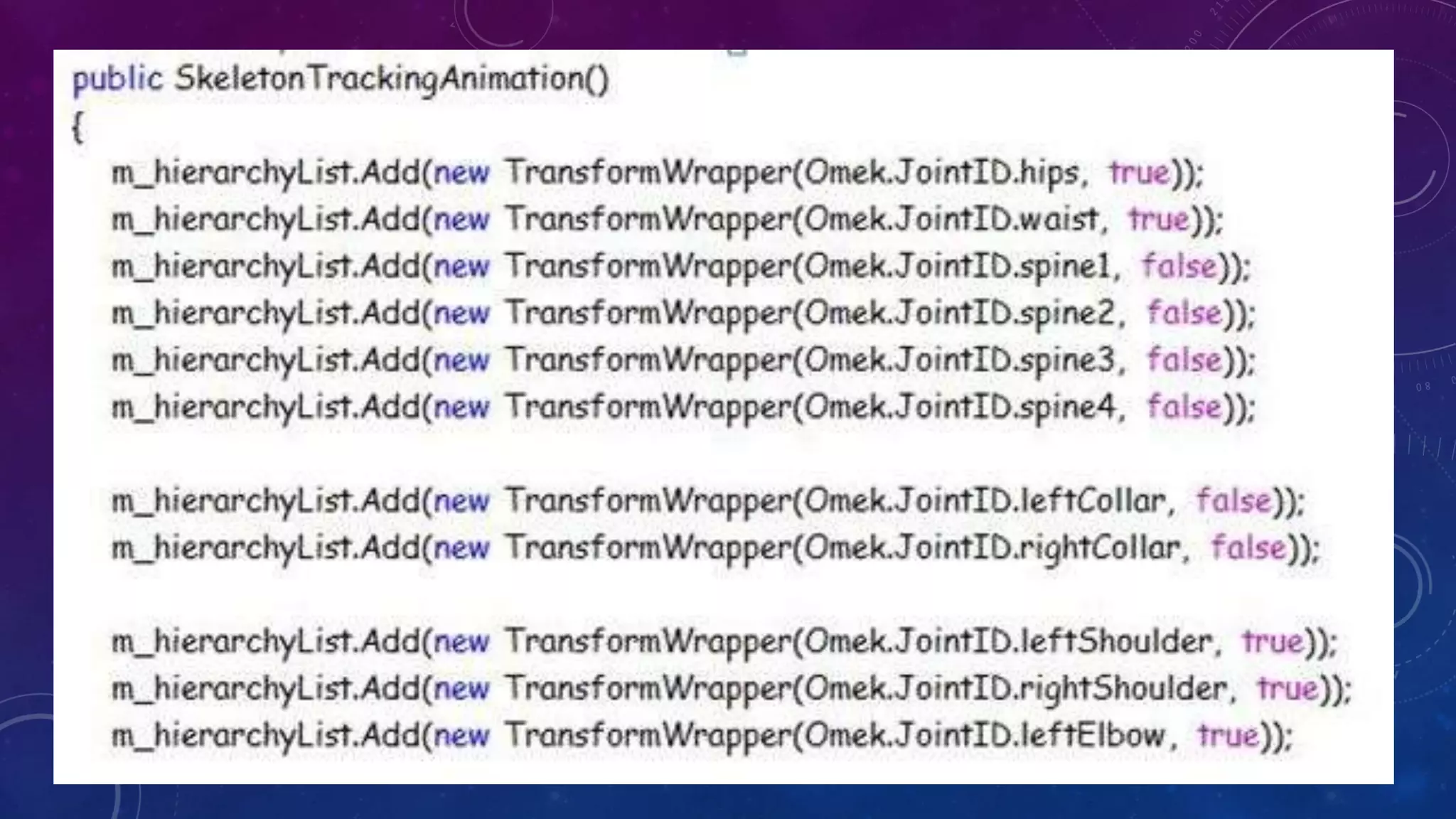

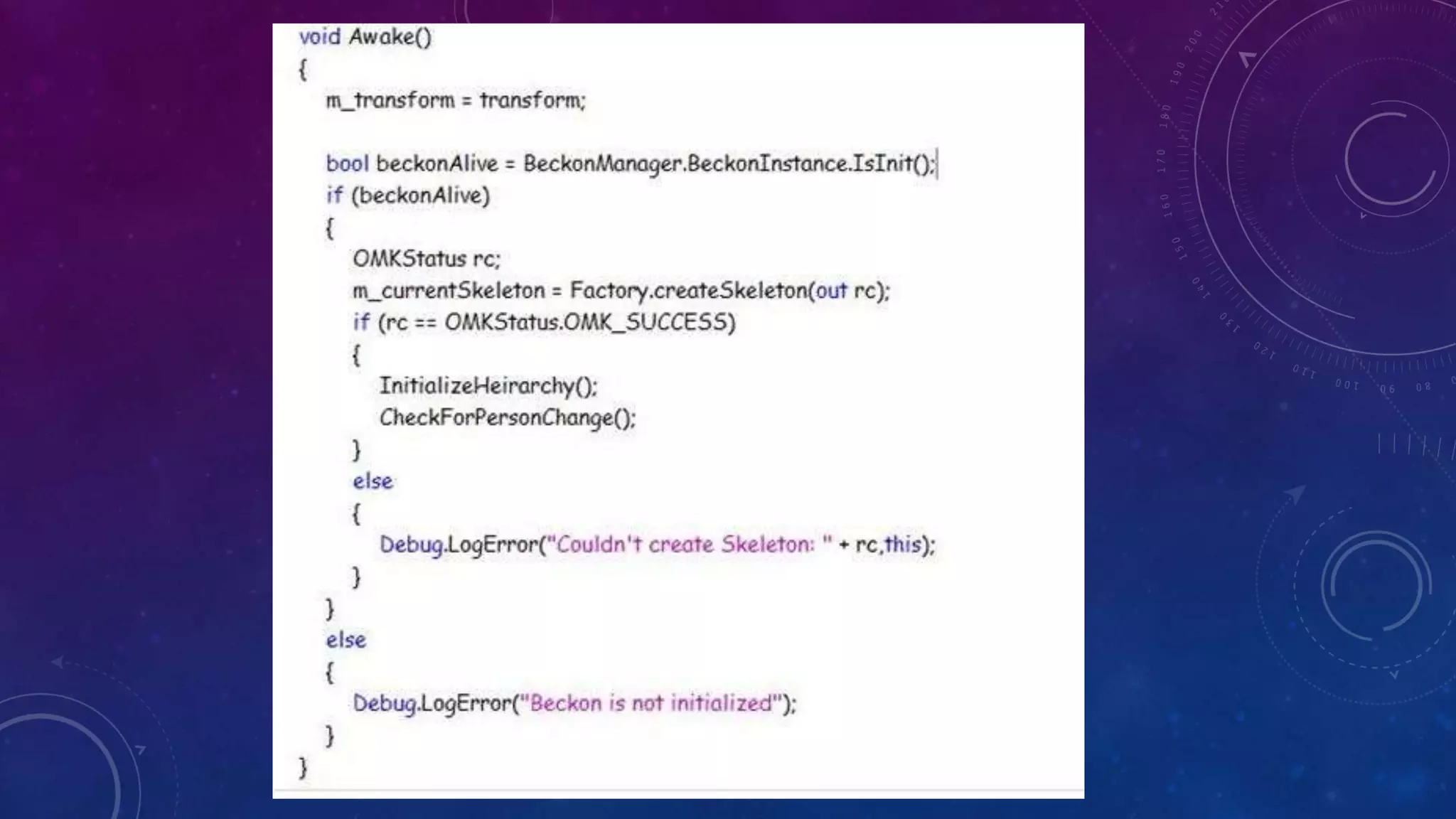

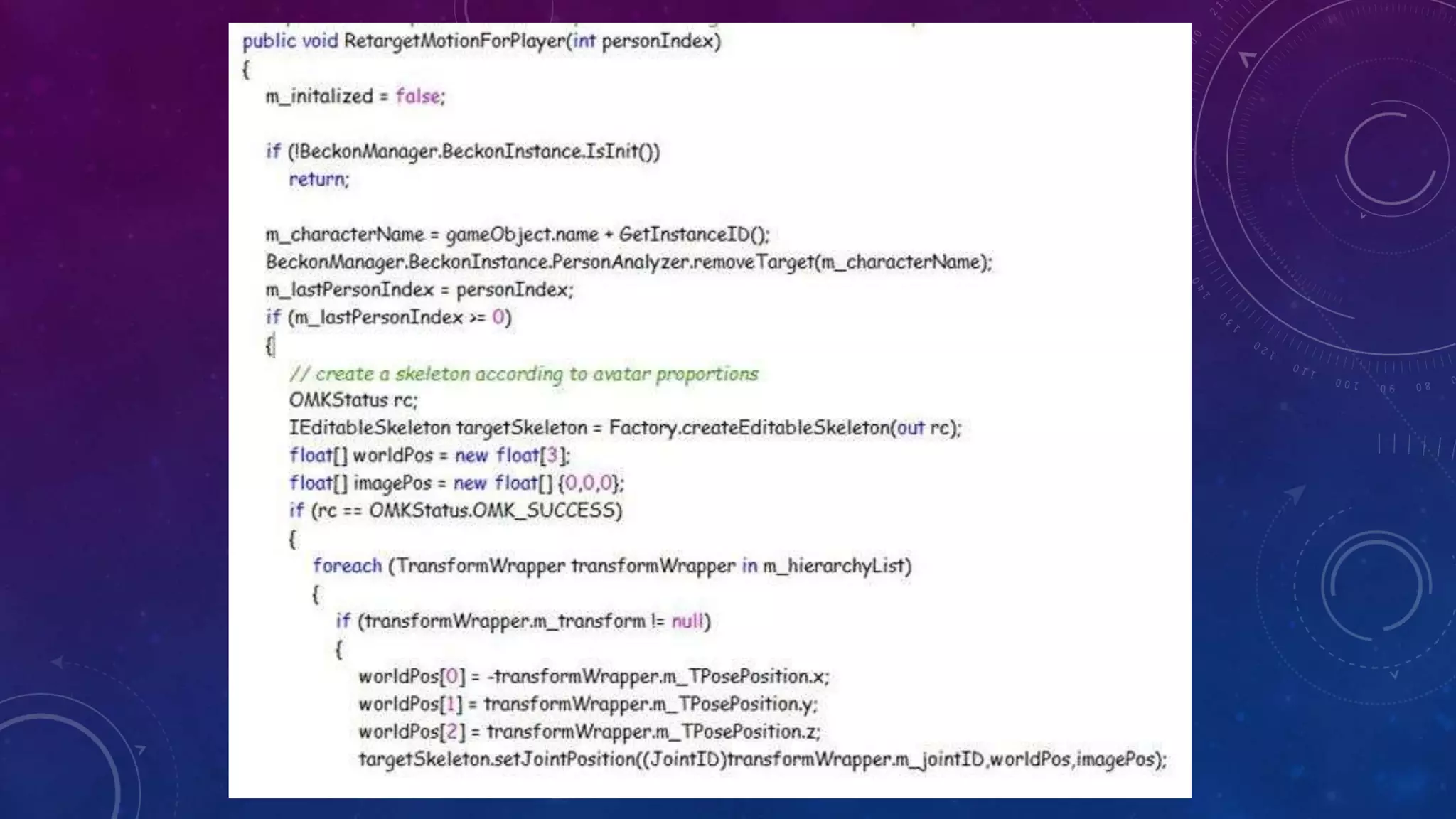

The document covers the Kinect system, in particular how it captures depth images using infrared sensing and time-of-flight cameras, and how it estimates body pose from those images. It describes gesture recognition by pattern-matching algorithms and the use of the Iterative Closest Point (ICP) algorithm for geometric alignment. It also outlines how game avatars are initialized and then animated from the player's movements.
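The summary mentions ICP for geometric alignment without giving details. Below is a minimal sketch of the standard point-to-point ICP loop. It alternates between pairing each point with its nearest neighbour in the target and solving for the best rigid transform for those pairs. It assumes 2D point sets so the best-fit rotation has a closed form; Kinect depth data is 3D, where that step is usually solved with an SVD. The function names (`nearest`, `best_rigid_2d`, `transform`, `icp_2d`) and the sample points are hypothetical and only serve as illustration.

```python
import math

Point = tuple[float, float]


def nearest(p: Point, targets: list[Point]) -> Point:
    """Closest target point to p by brute-force search (fine for small clouds)."""
    return min(targets, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)


def best_rigid_2d(src: list[Point], dst: list[Point]) -> tuple[float, float, float]:
    """Least-squares rotation angle and translation mapping src[i] onto dst[i]."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    cross = dot = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - sx, py - sy, qx - dx, qy - dy
        cross += ax * by - ay * bx  # sine component of the optimal rotation
        dot += ax * bx + ay * by    # cosine component of the optimal rotation
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation carries the rotated source centroid onto the target centroid.
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def transform(theta: float, tx: float, ty: float, pts: list[Point]) -> list[Point]:
    """Rotate every point by theta about the origin, then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in pts]


def icp_2d(source: list[Point], target: list[Point], max_iter: int = 50, tol: float = 1e-12):
    """Align source to target; returns (theta, tx, ty, mean squared error)."""
    current = list(source)
    theta_tot, tx_tot, ty_tot = 0.0, 0.0, 0.0
    prev_err = err = math.inf
    for _ in range(max_iter):
        # 1. Correspondence step: pair each point with its nearest target point.
        matches = [nearest(p, target) for p in current]
        # 2. Alignment step: best rigid transform for those pairs.
        theta, tx, ty = best_rigid_2d(current, matches)
        current = transform(theta, tx, ty, current)
        # Compose this step with the running total: R_s (R_t p + t_t) + t_s.
        c, s = math.cos(theta), math.sin(theta)
        theta_tot += theta
        tx_tot, ty_tot = c * tx_tot - s * ty_tot + tx, s * tx_tot + c * ty_tot + ty
        err = sum((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2
                  for p, q in zip(current, matches)) / len(current)
        # 3. Stop once the error no longer improves meaningfully.
        if prev_err - err < tol:
            break
        prev_err = err
    return theta_tot, tx_tot, ty_tot, err


# Hypothetical example: an L-shaped target and a slightly rotated, shifted copy of it.
target = [(0, 0), (1, 0), (2, 0), (3, 0), (0, 1), (0, 2), (0, 3), (3, 1)]
source = transform(0.05, 0.1, -0.05, target)
theta, tx, ty, err = icp_2d(source, target)
aligned = transform(theta, tx, ty, source)
```

ICP only converges to the correct alignment when the initial offset is small relative to the point spacing, because the nearest-neighbour pairing must start out mostly right. In the example the perturbation is well under half the spacing, so the recovered angle is the inverse rotation, about -0.05 rad. Real pipelines replace the brute-force `nearest` with a k-d tree and often reject outlier pairs.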