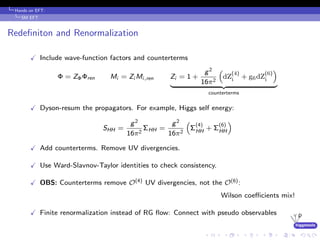

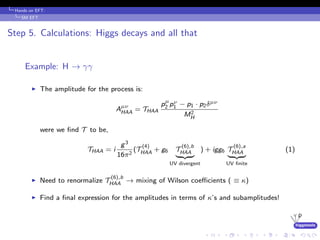

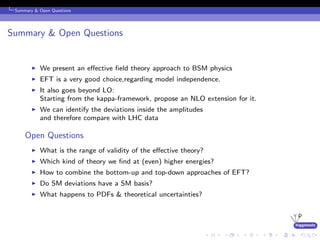

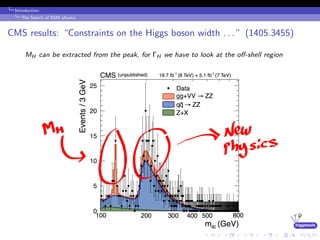

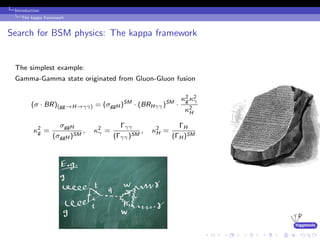

This document discusses using effective field theory (EFT) to search for new physics beyond the Standard Model. It introduces the kappa framework, which parametrizes deviations from the Standard Model in a way that is not gauge invariant. EFT provides an alternative that is consistent with quantum field theory. The document outlines how to build a basis of higher-dimensional operators in the Standard Model EFT and how to carry out calculations at next-to-leading order (NLO), including the renormalization of ultraviolet divergences. Open questions remain about the range of validity of the EFT, its possible high-energy completions, and how to combine the bottom-up and top-down approaches.

$$e^{i S_{\text{eff}}[\phi](\mu)} = \int \mathcal{D}\Phi \; e^{i S_{\text{UV}}[\phi,\Phi](\mu)}$$

Use RG-flow to study the resulting theory in its low-energy regime
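
As a hedged illustration of what the RG step involves: after the heavy fields are integrated out at a matching scale $\Lambda$, the coefficients $c_i$ of the resulting effective operators run down to a lower scale $\mu$. The symbols $c_i$, $\gamma_{ij}$ (anomalous-dimension matrix) and $\Lambda$ are standard notation assumed here, not taken from the slides, and $\gamma_{ij}$ is treated as constant, which holds only at leading-logarithmic accuracy.

```latex
\mu \frac{d c_i(\mu)}{d\mu} = \frac{1}{16\pi^2}\,\gamma_{ij}\,c_j(\mu)
\quad\Longrightarrow\quad
c_i(\mu) \simeq c_i(\Lambda) - \frac{\gamma_{ij}}{16\pi^2}\,c_j(\Lambda)\,\ln\frac{\Lambda}{\mu}
```

The off-diagonal entries of $\gamma_{ij}$ mix operators, so a coefficient that vanishes at $\Lambda$ can still be generated at the low scale.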

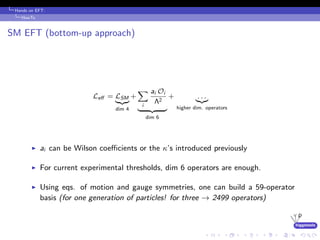

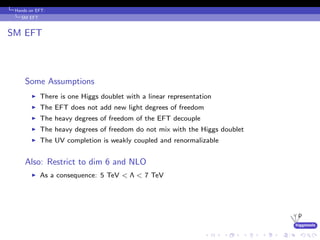

In the bottom-up approach (model independent):

Start from a low-energy known theory (the SM).

Add operators consistent with the symmetries

(recall Wilson: only dim > 4 makes sense)

Calculate (pseudo-)observables and compare with experiments
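
The bottom-up steps above can be sketched with the usual form of the SM EFT expansion. The notation ($\Lambda$ for the new-physics scale, $c_i$ for the Wilson coefficients, $\mathcal{O}_i^{(6)}$ for the dimension-6 operators, $\sigma_i^{\text{int}}$ for the interference of each operator with the SM amplitude) is assumed here rather than taken from the slides, and the single dimension-5 operator, which violates lepton number, is left out.

```latex
\mathcal{L}_{\text{EFT}} = \mathcal{L}_{\text{SM}}
  + \sum_i \frac{c_i}{\Lambda^2}\,\mathcal{O}_i^{(6)}
  + \mathcal{O}\!\left(\Lambda^{-4}\right),
\qquad
\sigma = \sigma_{\text{SM}}
  + \sum_i \frac{c_i}{\Lambda^2}\,\sigma_i^{\text{int}}
  + \mathcal{O}\!\left(\Lambda^{-4}\right)
```

Comparing a prediction of this form with a measured (pseudo-)observable then constrains the combinations $c_i/\Lambda^2$, without committing to any specific high-energy model.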