The document is a technical report on neural networks prepared by Chiradip Bhattacharya for his Bachelor of Technology in Computer Science and Engineering at Techno India, Salt Lake. It covers the fundamentals of artificial neural networks, their historical background, architecture, learning processes, and various applications in fields such as medicine and business. The report concludes with acknowledgments, a table of contents, and references, emphasizing the significance and versatility of neural networks in solving complex computational problems.

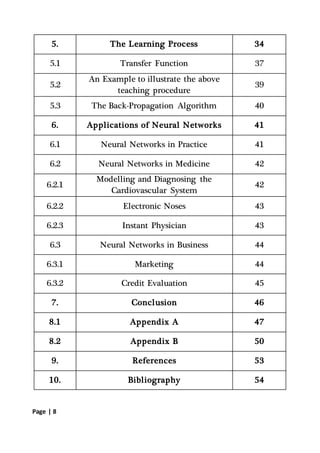

![Page | 5

Please feel free to contact me with any query or point that you would

like me to clarify. I welcome any suggestions you may have after reviewing

the report.

Thanking you,

Yours sincerely,

[CHIRADIP BHATTACHARYA]

Department of Computer Science and Engineering

Section – A

Roll Number - 13000216109

ACKNOWLEDGEMENT

I would like to take this opportunity to express my deepest gratitude

and sincere thanks to my project mentor, Mrs. Paulomi Mukherjee

for providing me with the guidelines of the project and allowing me

to explore a whole new domain of knowledge through this report.

I would also like to thank Prof. Tapan Chowdhury and the other

faculty members of the department for their guidance.

Lastly, I would like to thank my parents and friends who boosted

my morale when I most needed it.](https://image.slidesharecdn.com/neuralnetworksreport-181103113126/85/Neural-networks-report-5-320.jpg)

![Page | 52

feedforward neural network was integrated with the AMT and was

trained using back-propagation to assist the marketing control of

airline seat allocations. The adaptive neural approach was amenable

to rule expression. Additionally, the application's environment

changed rapidly and constantly, which required a continuously

adaptive solution. The system is used to monitor and recommend

booking advice for each departure. Such information has a direct

impact on the profitability of an airline and can provide a

technological advantage for users of the system. [Hutchison &

Stephens, 1987]

While it is significant that neural networks have been applied to this

problem, it is also important to see that this intelligent technology

can be integrated with expert systems and other approaches to make

a functional system. Neural networks were used to discover the

influence of undefined interactions among the various variables. While

these interactions were not explicitly defined, the neural system

used them to develop useful conclusions. It is also noteworthy

that neural networks can influence the bottom line.

6.3.2 Credit Evaluation

The HNC company, founded by Robert Hecht-Nielsen, has

developed several neural network applications. One of them is the

Credit Scoring system, which increased the profitability of the

existing model by up to 27%. The HNC neural systems were also

applied to mortgage screening. A neural network automated

mortgage insurance underwriting system was developed by the](https://image.slidesharecdn.com/neuralnetworksreport-181103113126/85/Neural-networks-report-52-320.jpg)