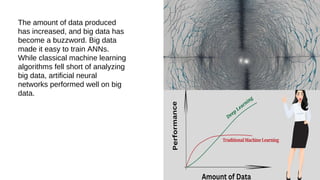

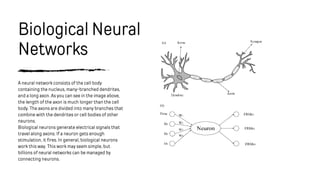

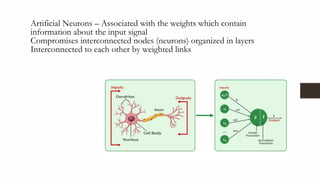

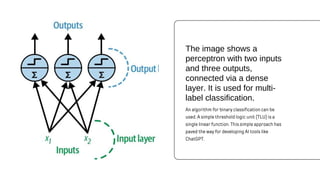

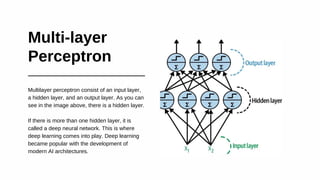

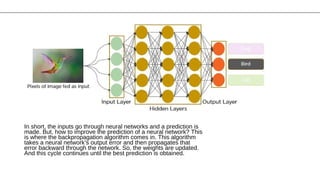

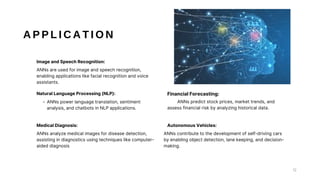

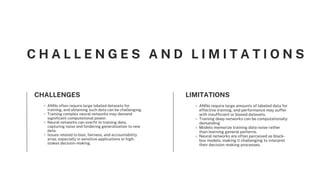

Artificial neural networks (ANNs) are machine learning models inspired by biological neural networks. They follow principles of neuronal organization and learn from examples to make predictions. An ANN consists of an input layer, one or more hidden layers, and an output layer. ANNs are often used for applications such as image recognition, natural language processing, medical diagnosis, and autonomous vehicles. While they can perform well on large datasets, they also face challenges including overfitting, large data and computational requirements, and a lack of transparency.
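The layered structure described above can be illustrated with a minimal sketch in plain Python. It is not taken from the slides: the function names (`dense`, `forward`, `predict`) are hypothetical, and the weights are hand-picked rather than learned from examples, chosen so that a network with two inputs, one hidden layer of two neurons, and one output neuron computes XOR.

```python
import math


def sigmoid(z):
    """Squash a real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Assumption: these parameters are set by hand for illustration.
# A real ANN would learn them from training examples.
HIDDEN_W = [[20.0, 20.0], [-20.0, -20.0]]  # neuron 1 ~ OR, neuron 2 ~ NAND
HIDDEN_B = [-10.0, 30.0]
OUTPUT_W = [[20.0, 20.0]]                  # output neuron ~ AND of the two
OUTPUT_B = [-30.0]


def forward(x):
    """Input layer -> hidden layer -> output layer."""
    hidden = dense(x, HIDDEN_W, HIDDEN_B)
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]


def predict(x):
    """Threshold the output activation at 0.5 to get a class label."""
    return 1 if forward(x) >= 0.5 else 0


if __name__ == "__main__":
    for sample in ([0, 0], [0, 1], [1, 0], [1, 1]):
        print(sample, predict(sample))
```

The hidden layer matters here: XOR is not linearly separable, so a single output neuron applied directly to the inputs could not represent it. In practice the weights would be fitted by a training procedure such as gradient descent with backpropagation, which this sketch leaves out.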