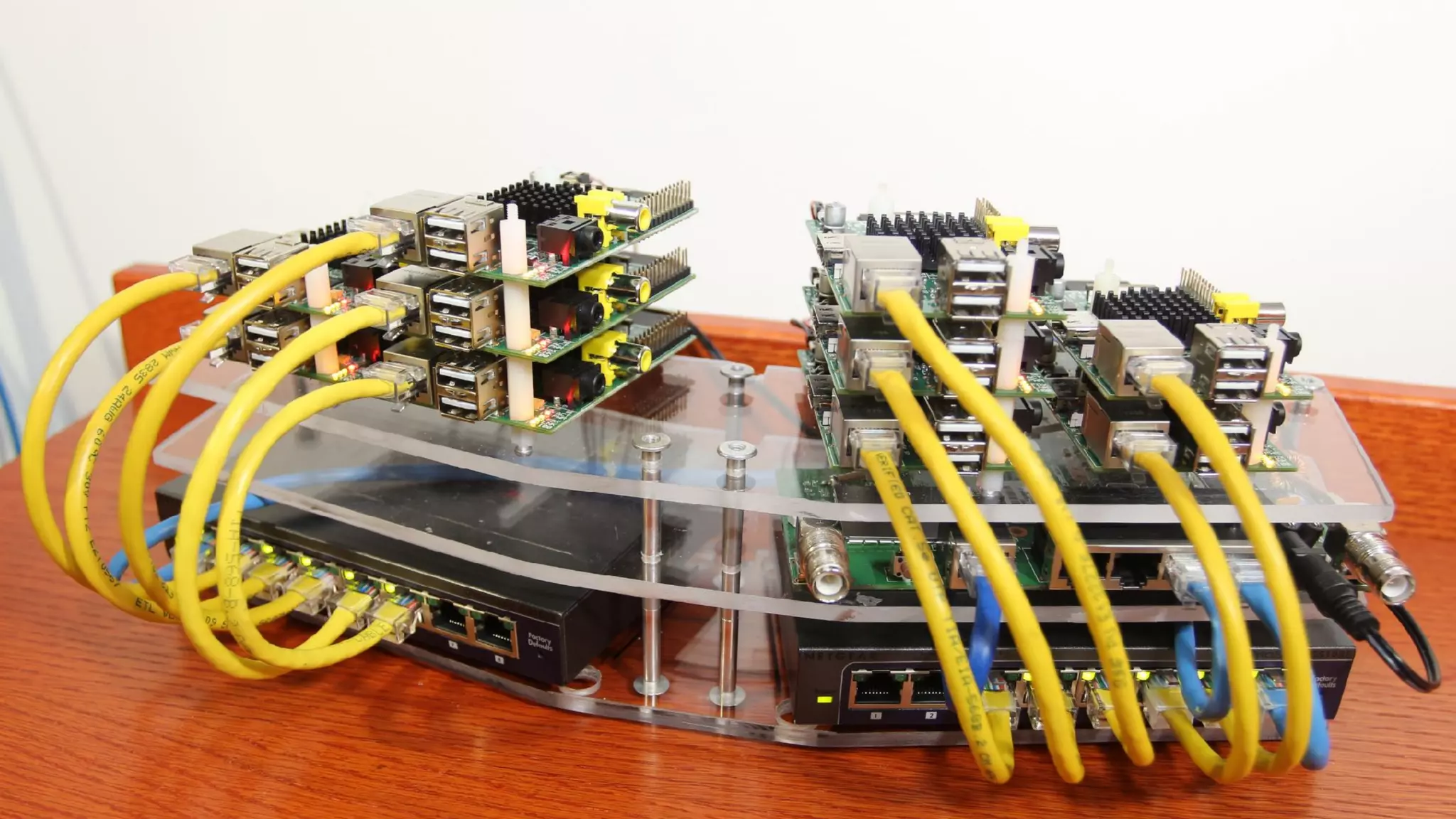

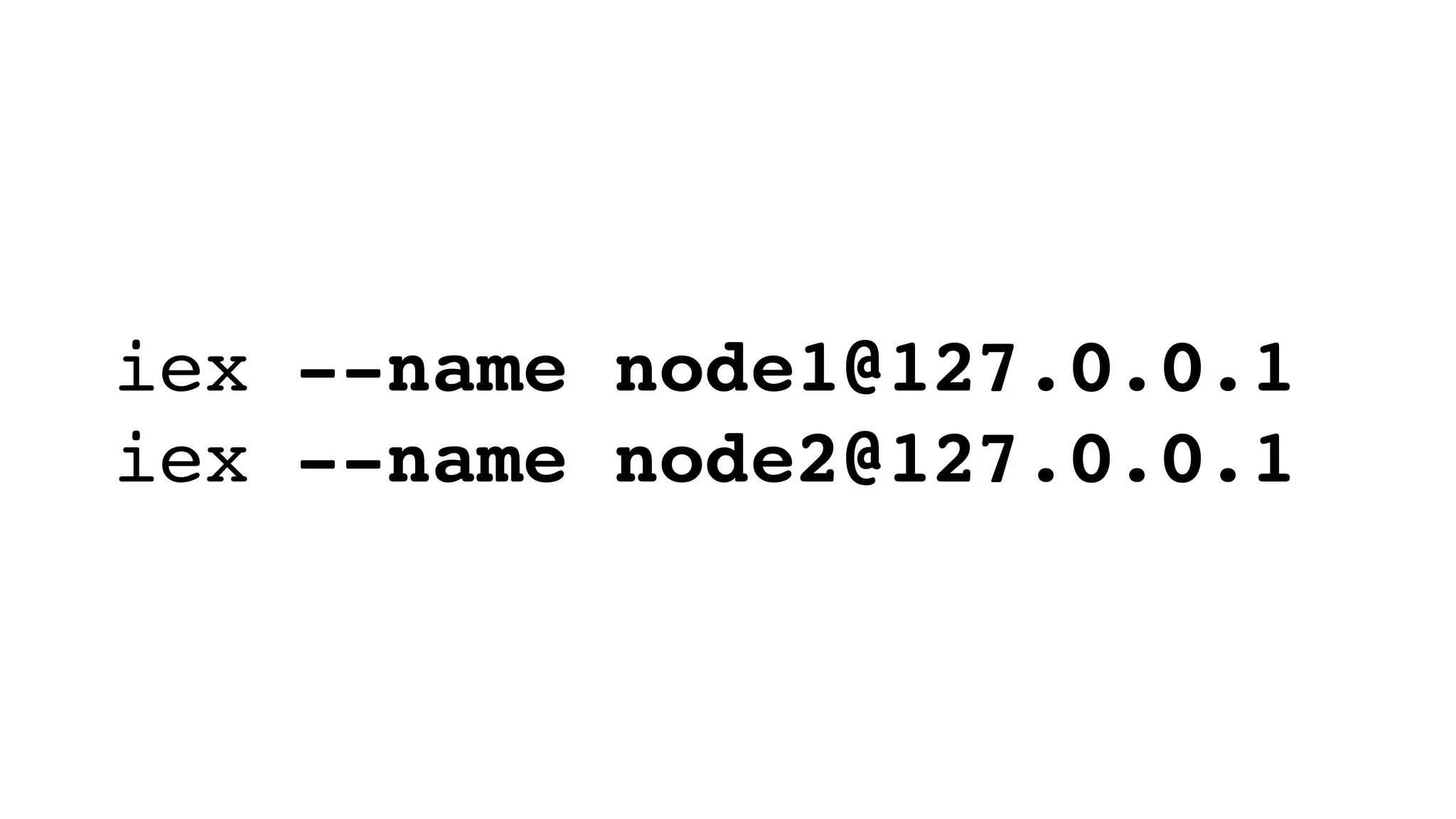

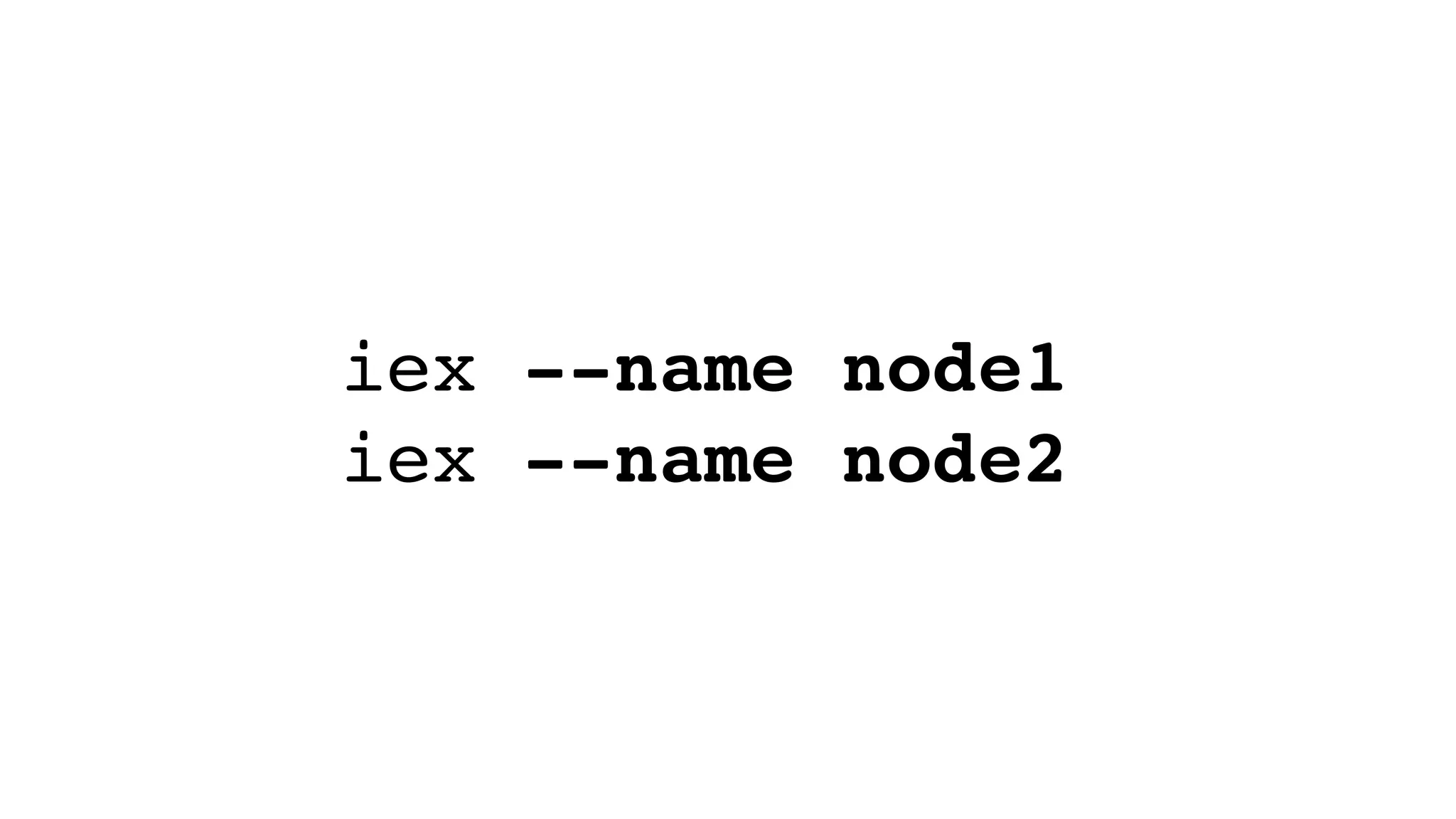

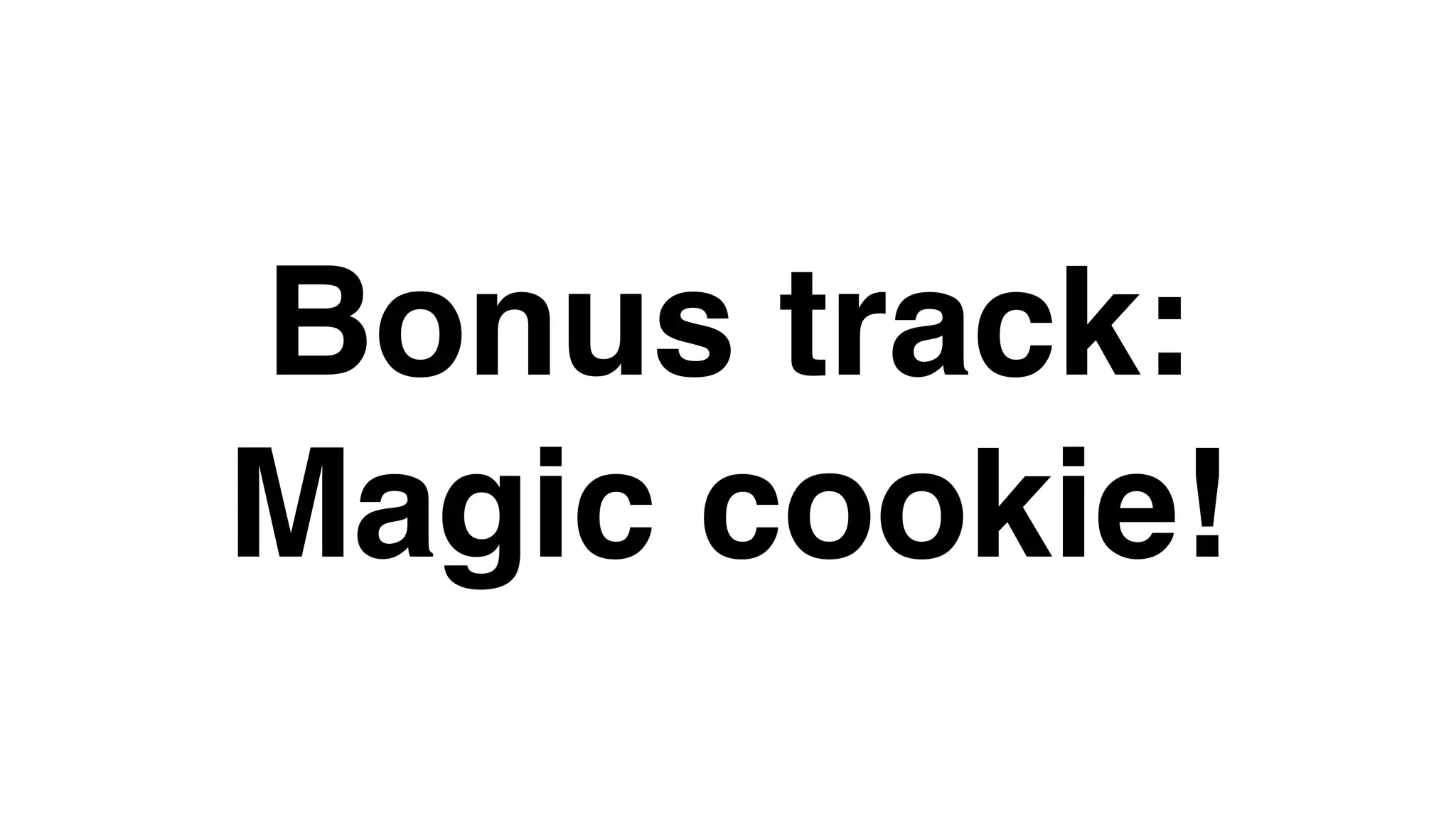

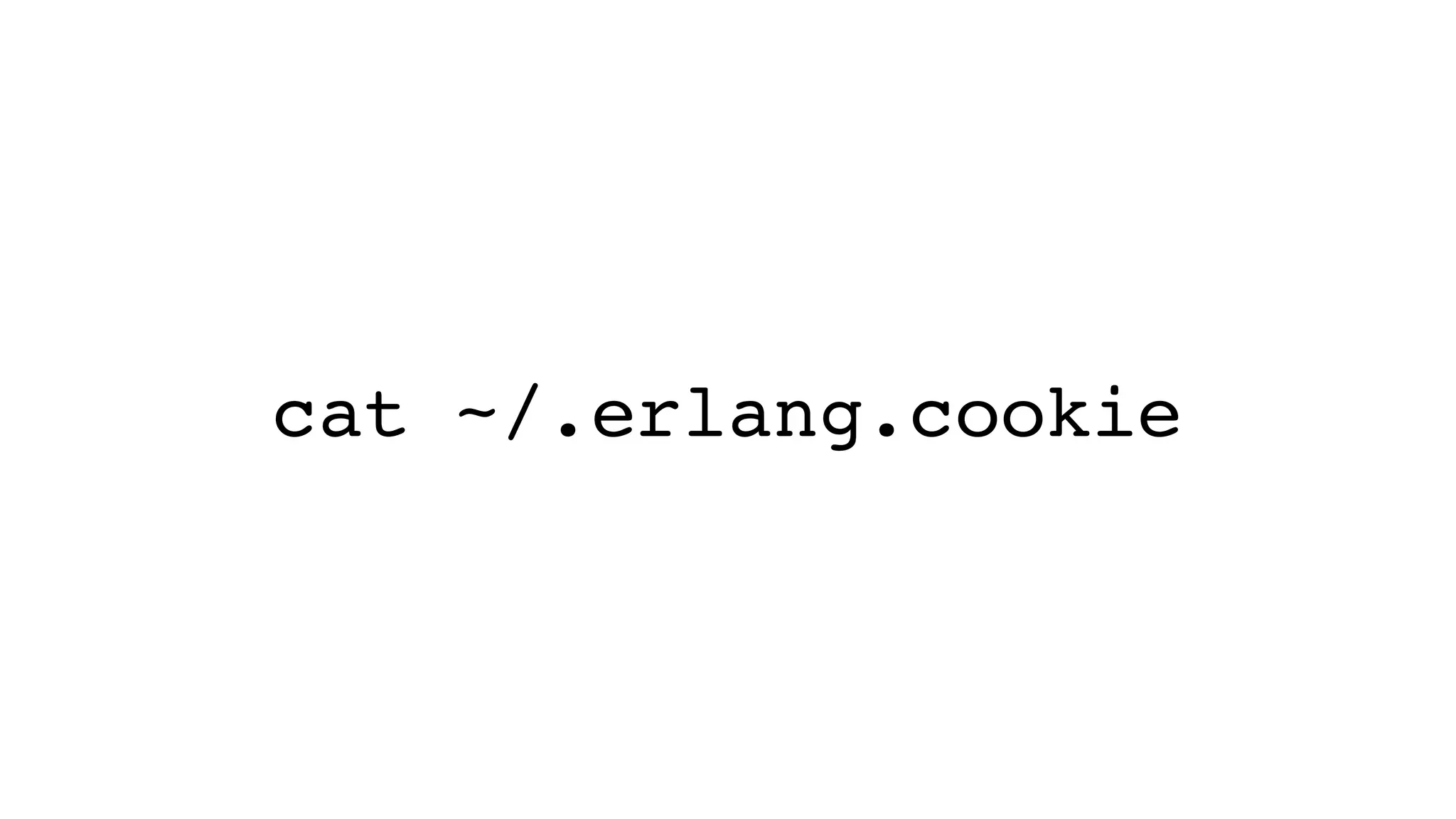

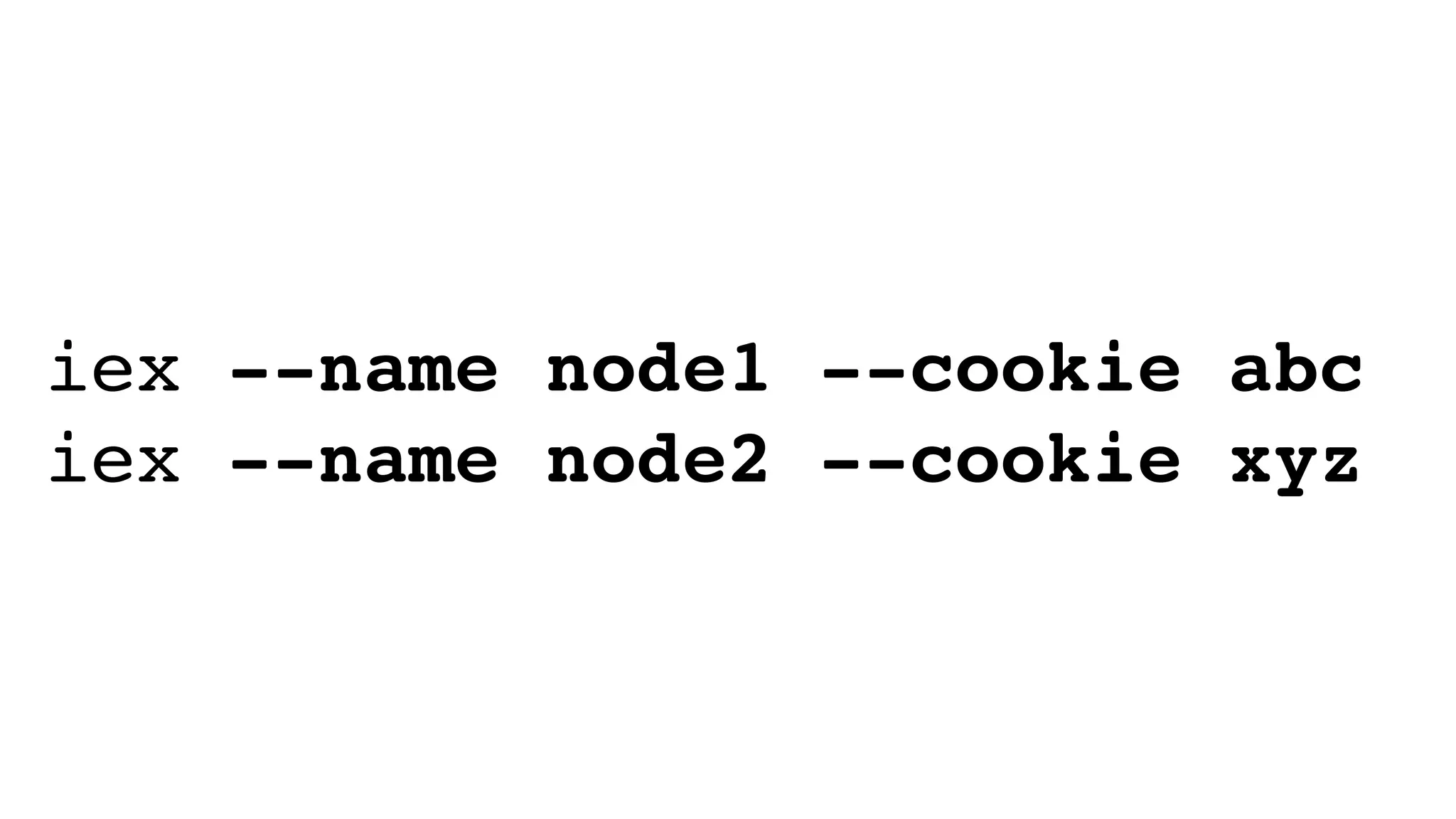

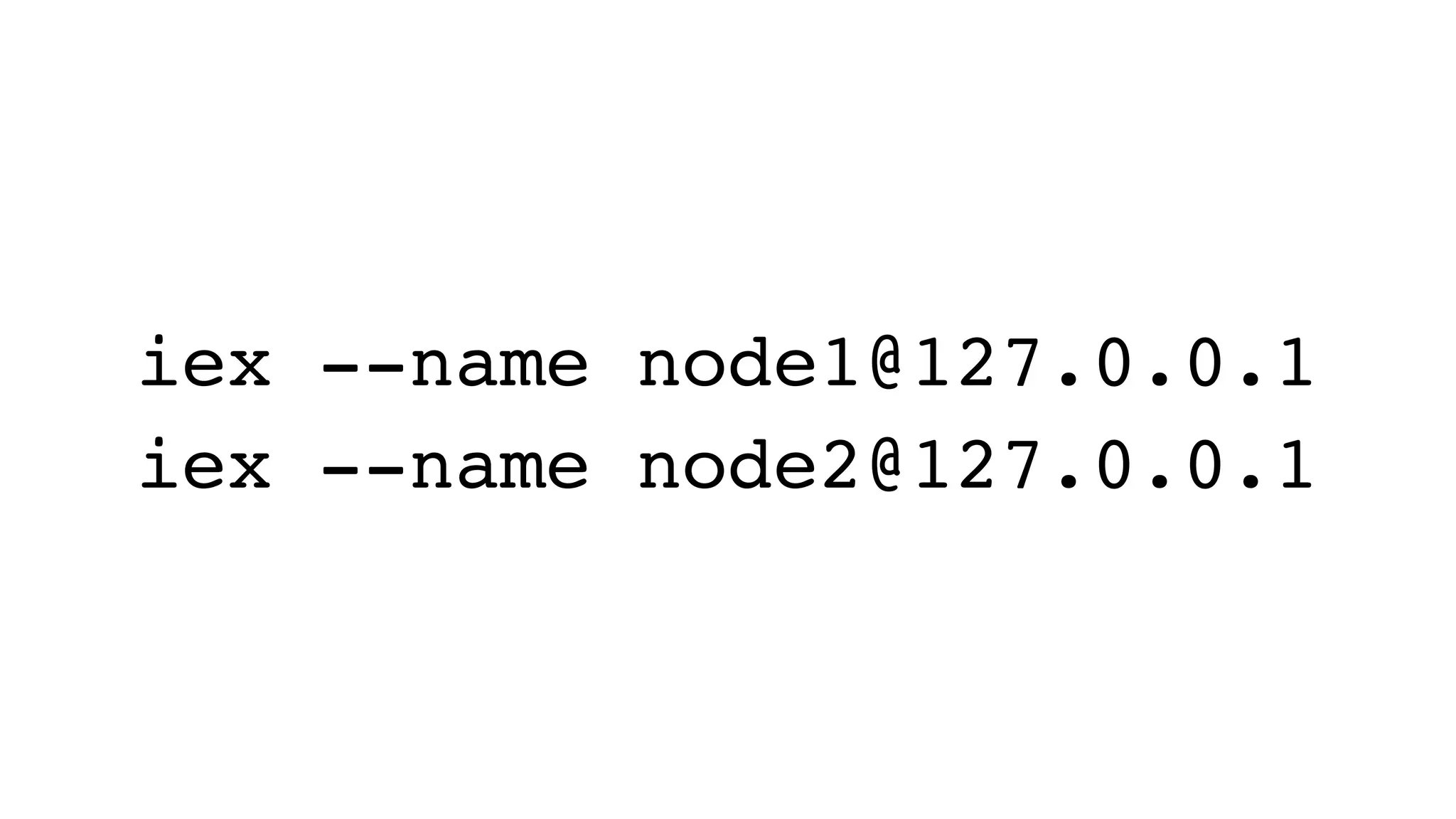

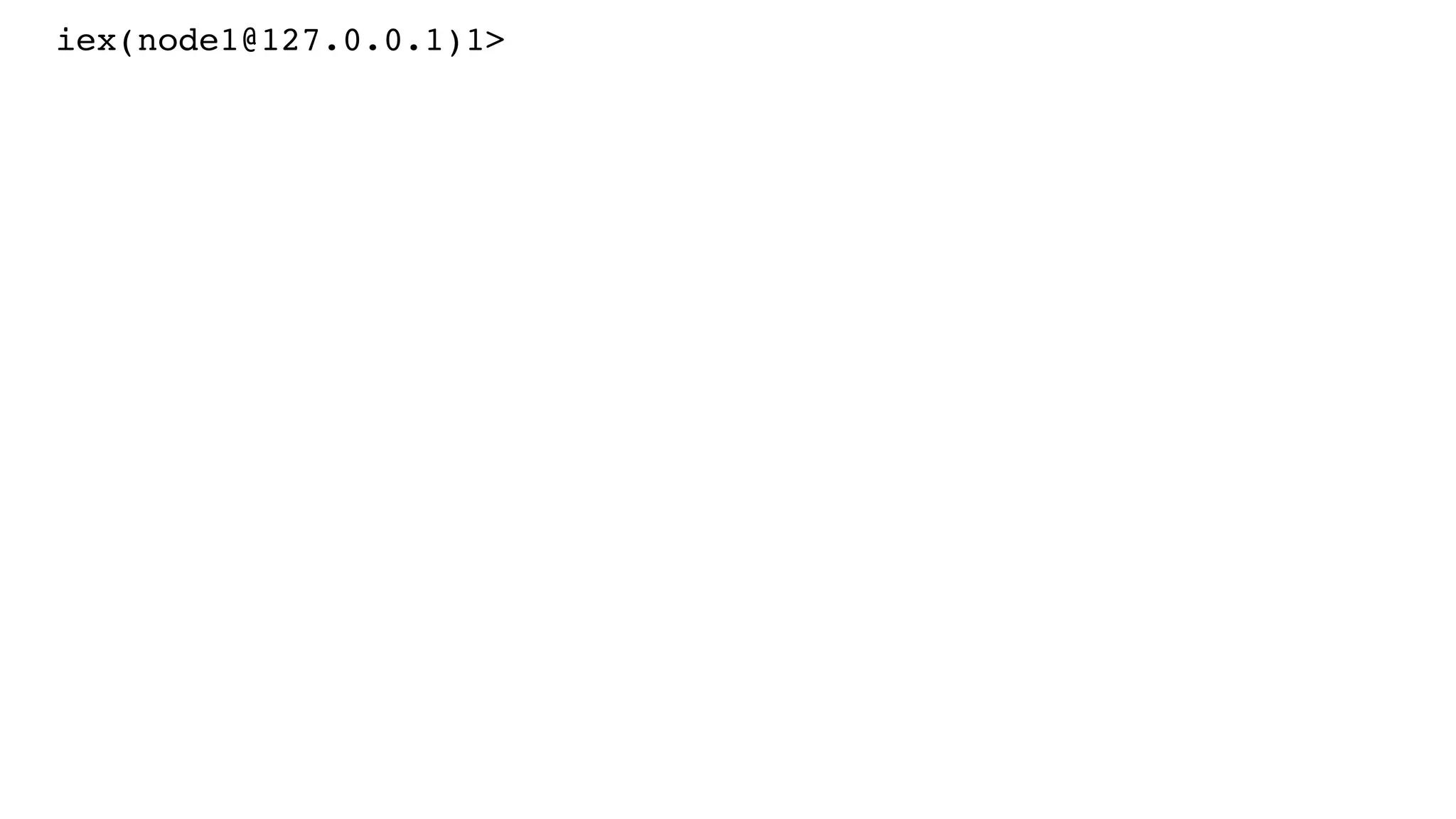

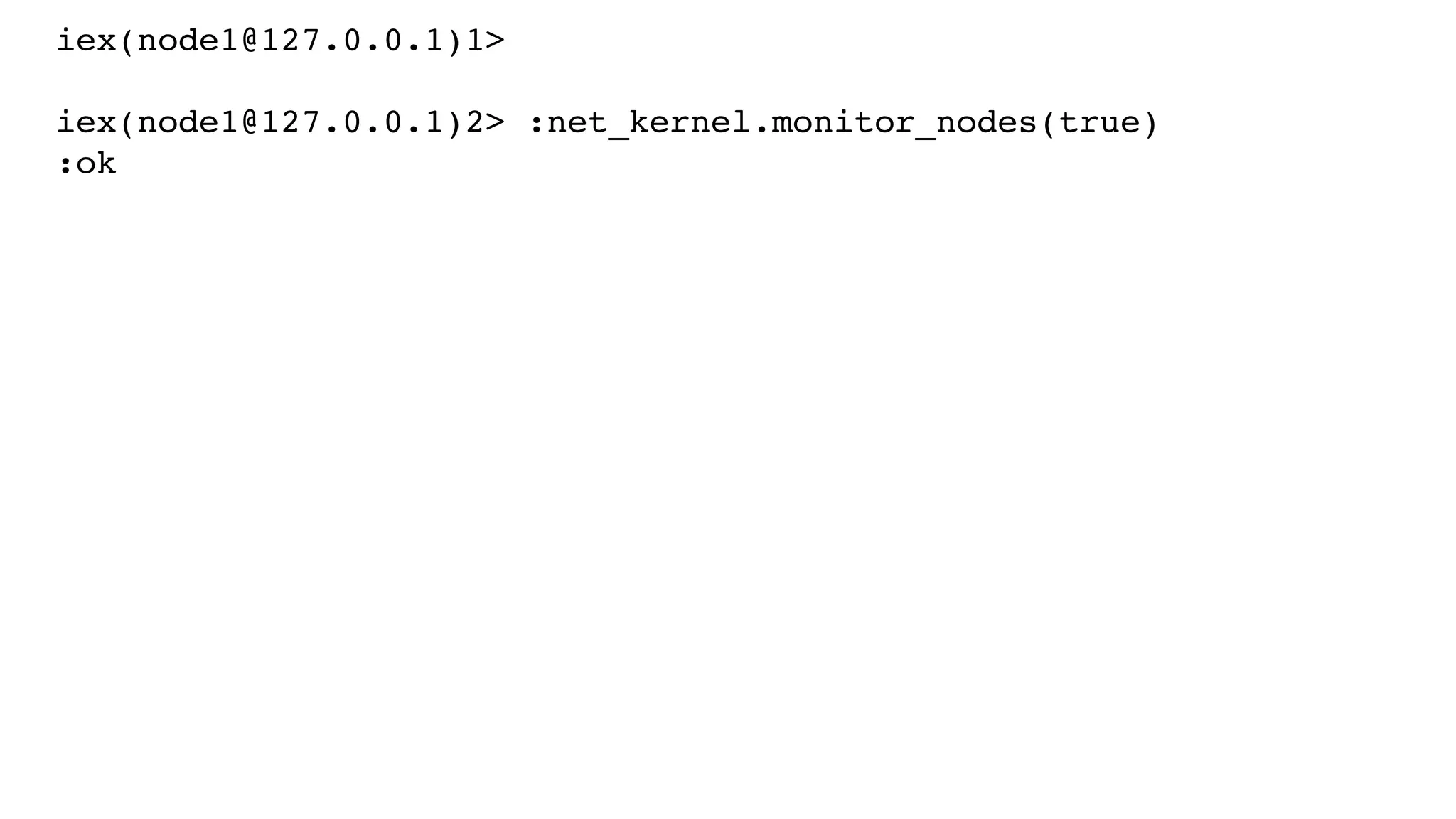

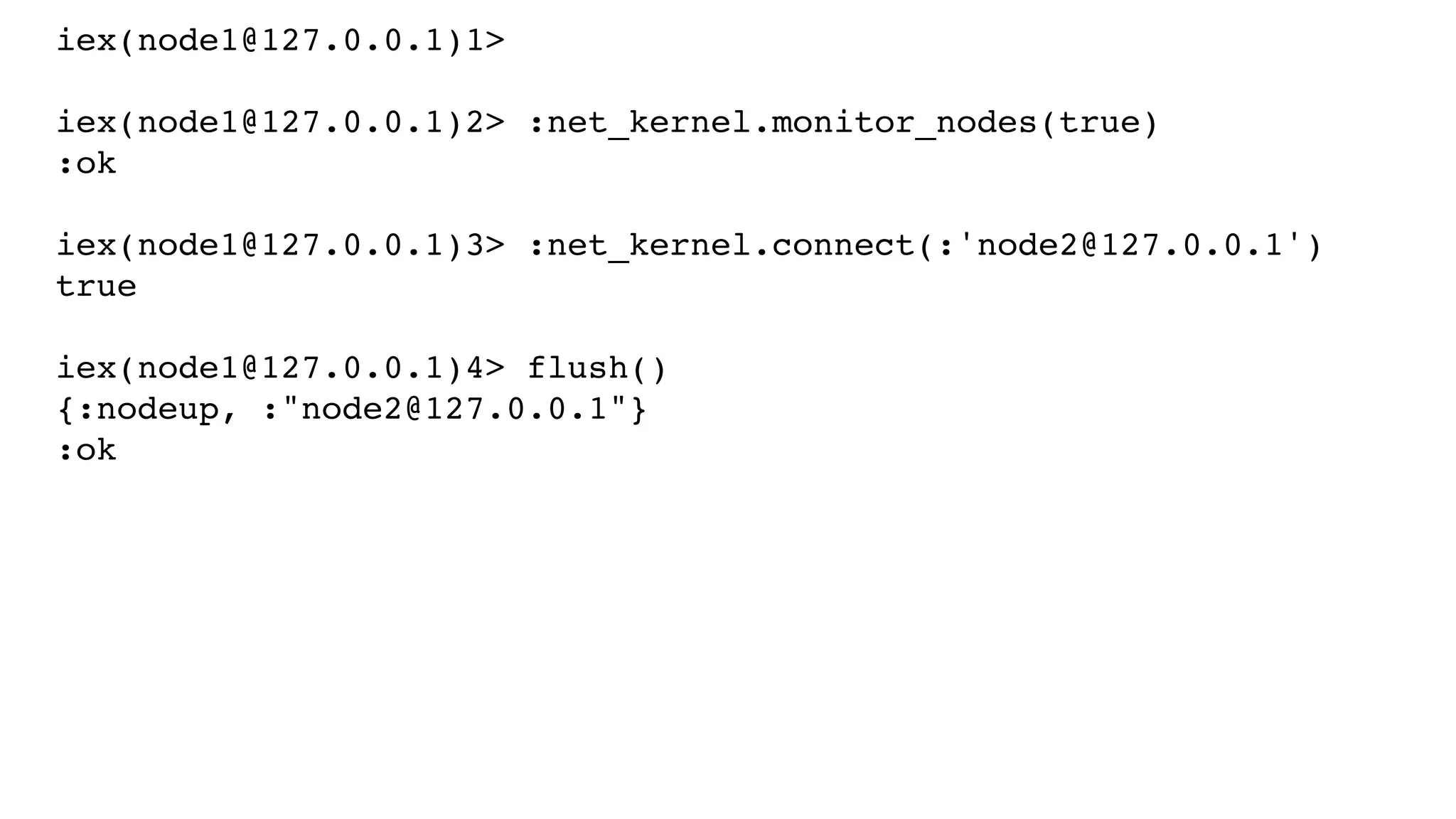

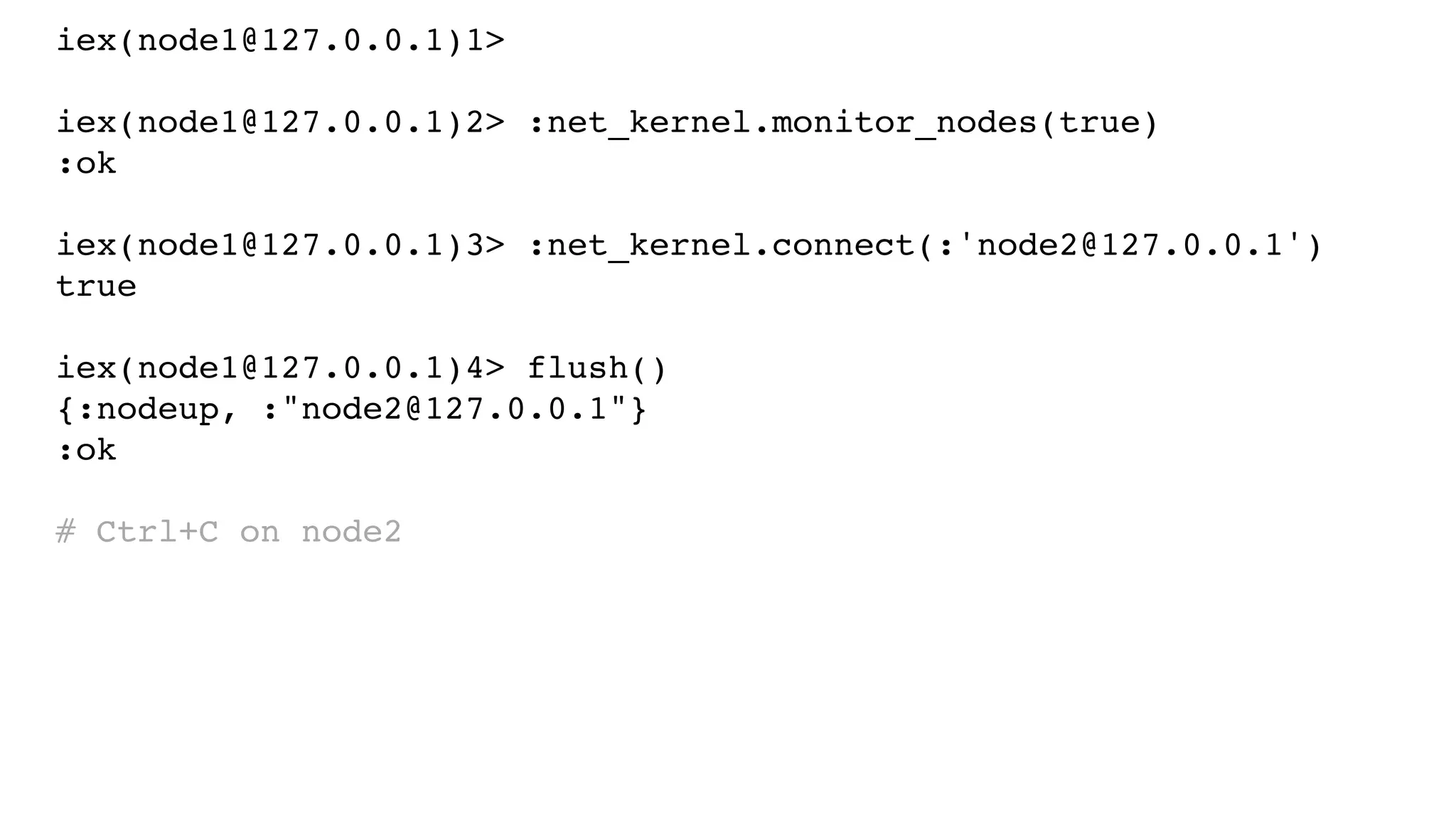

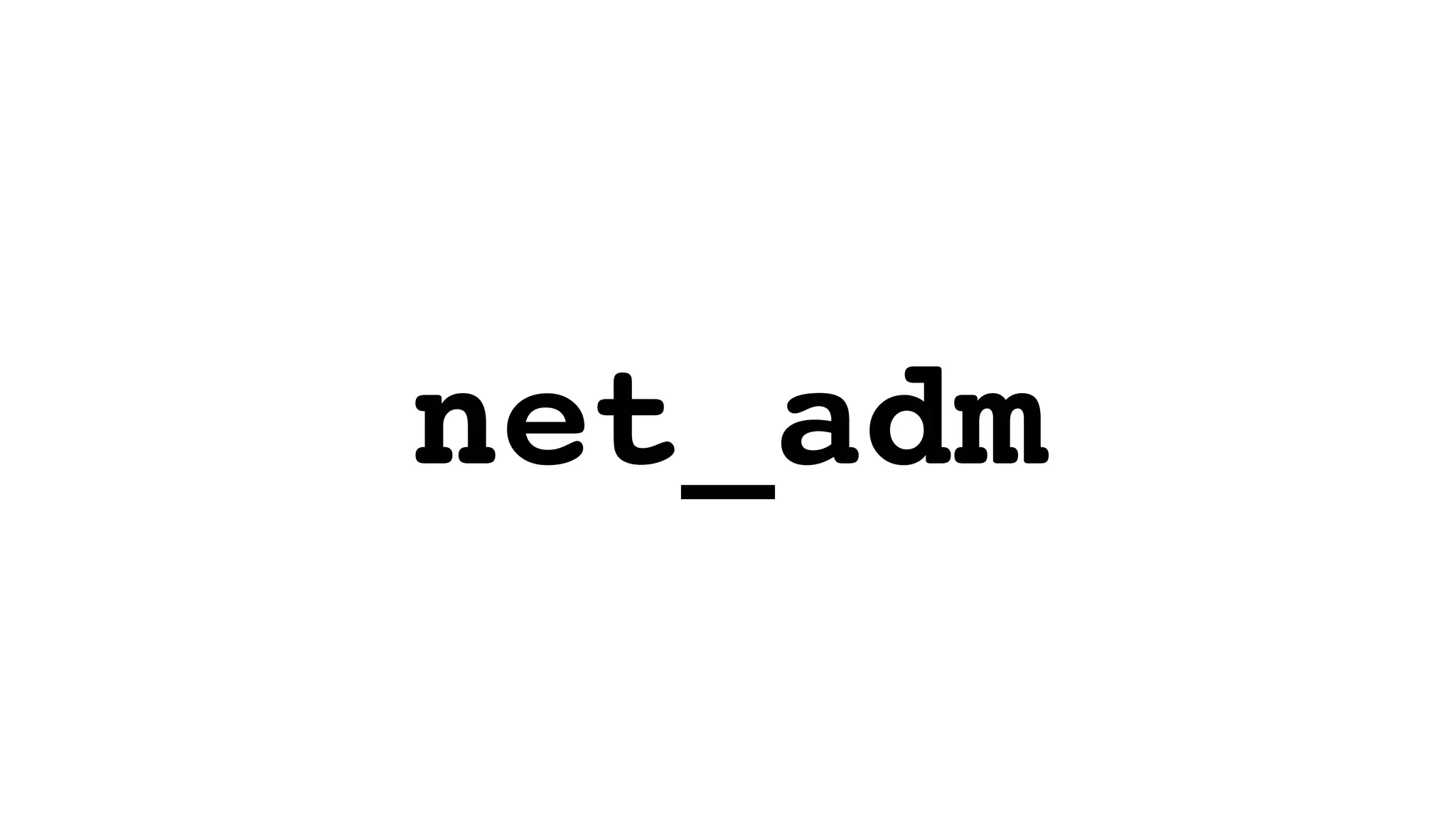

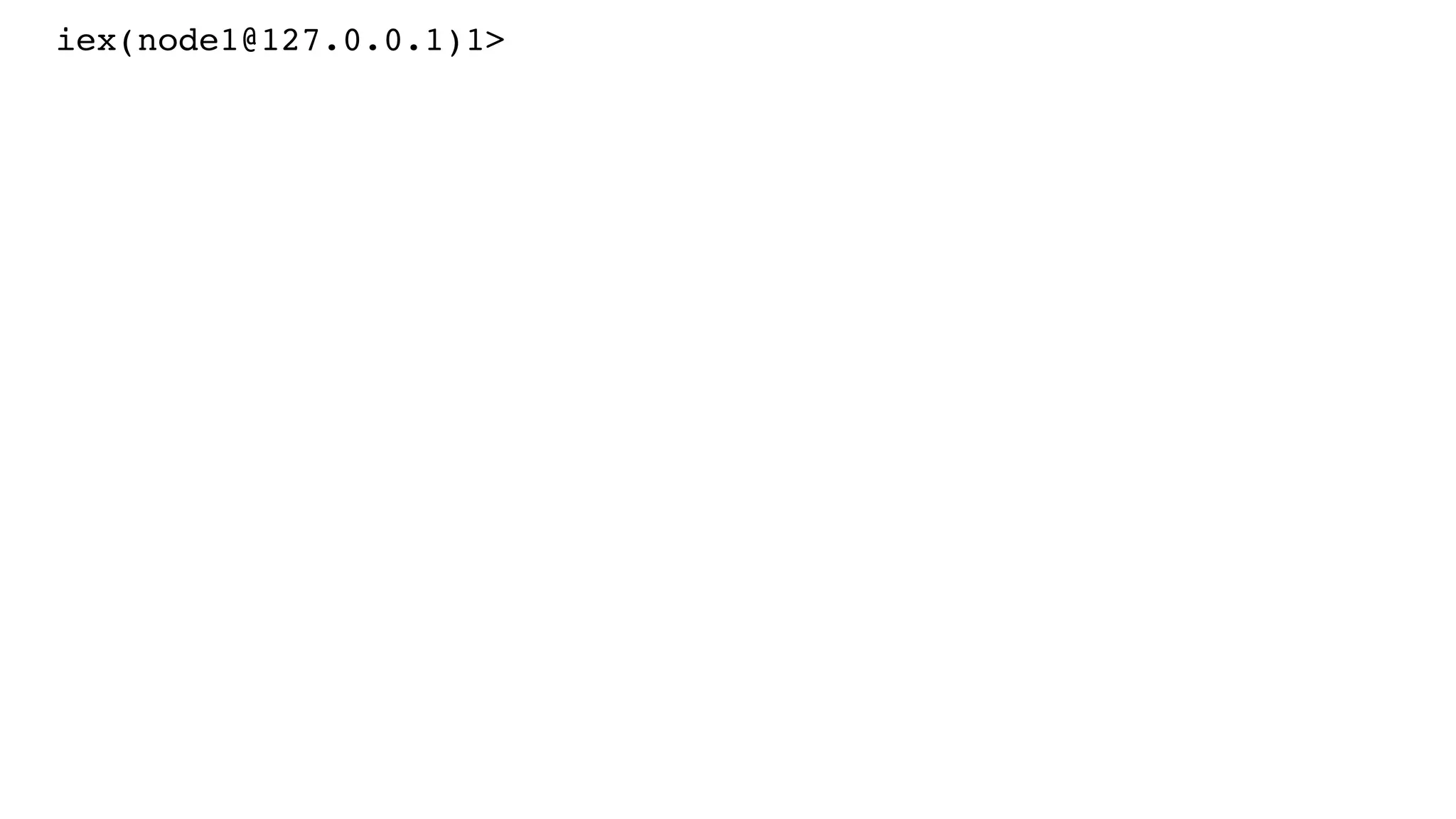

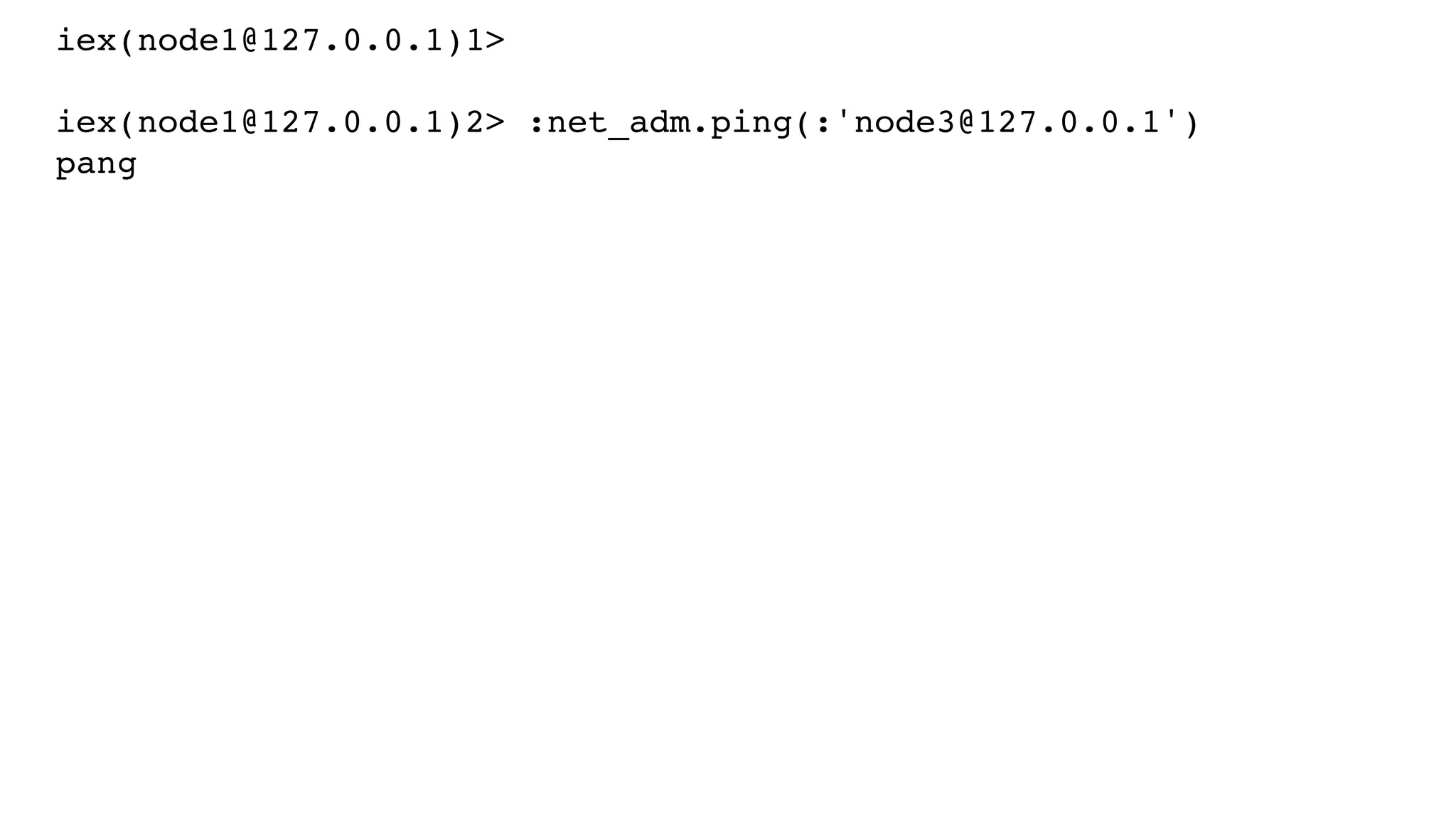

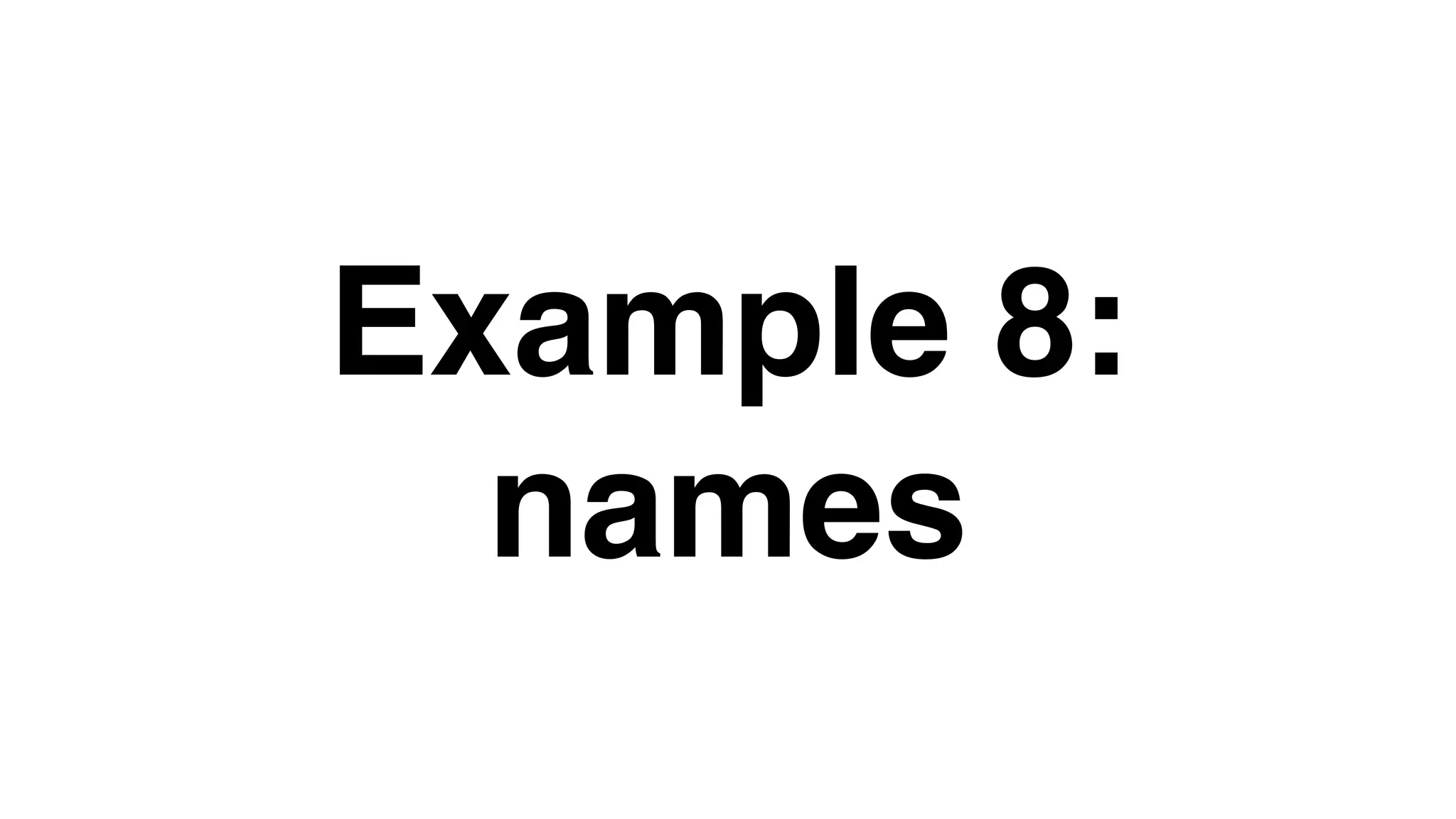

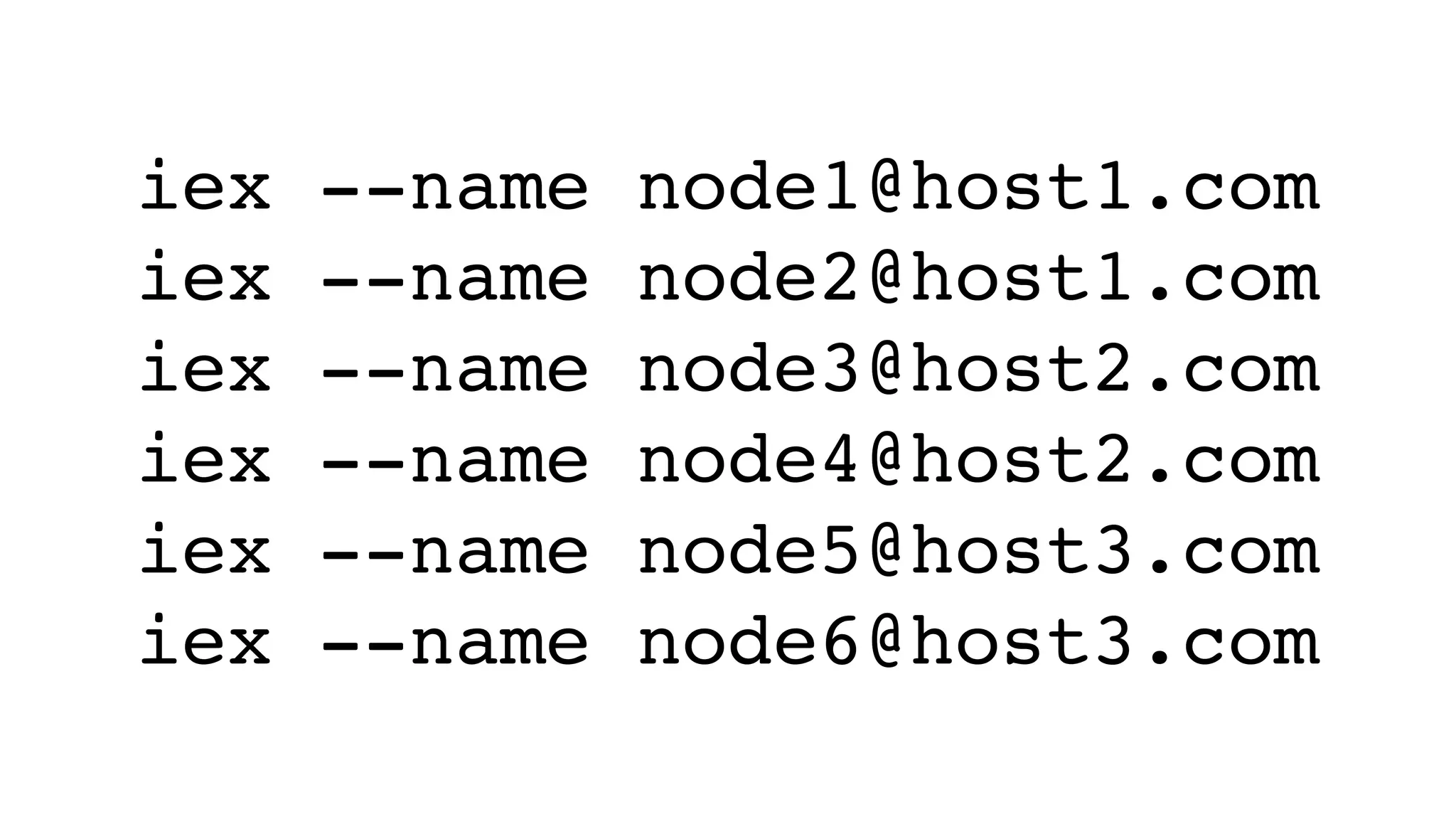

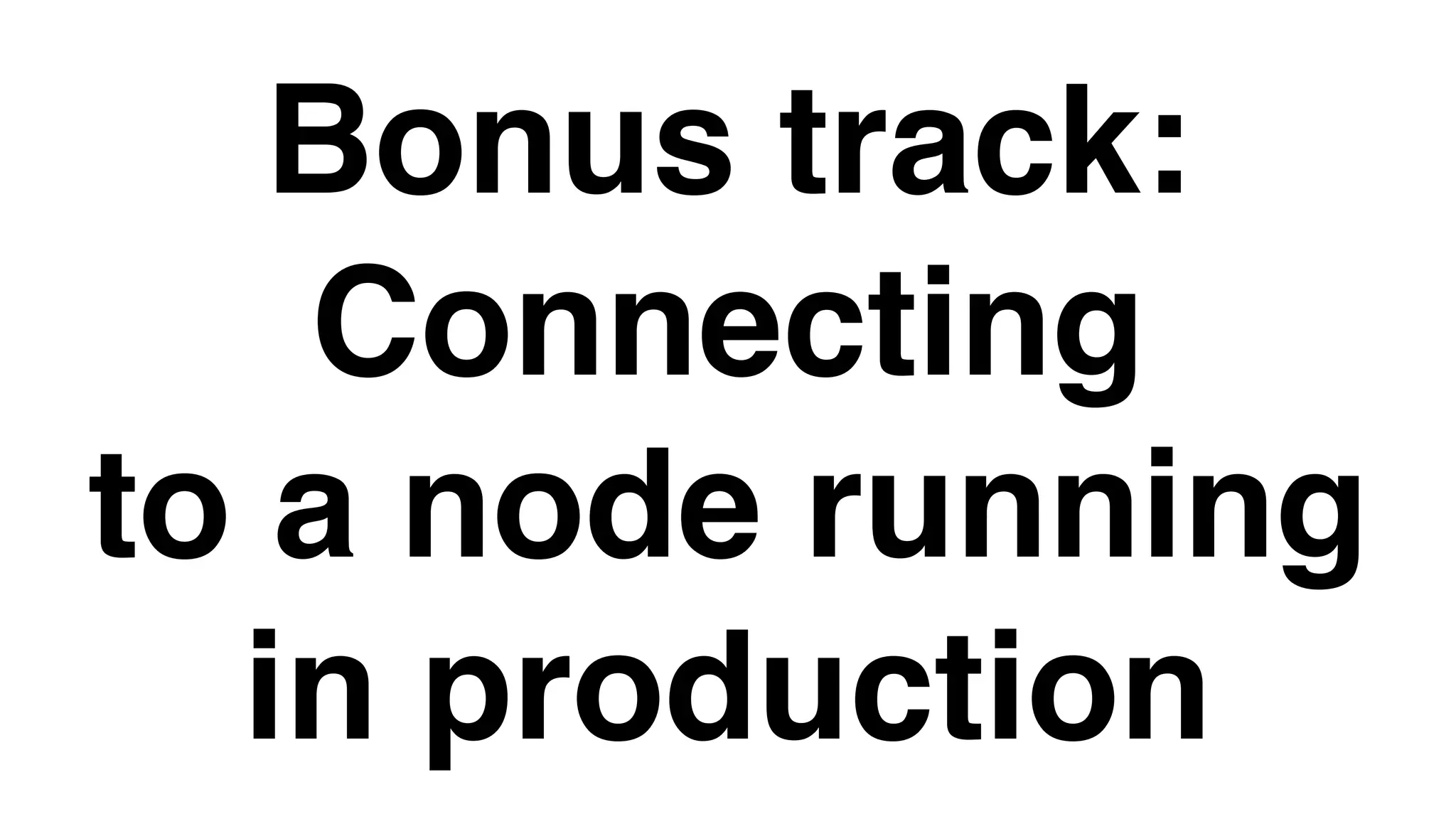

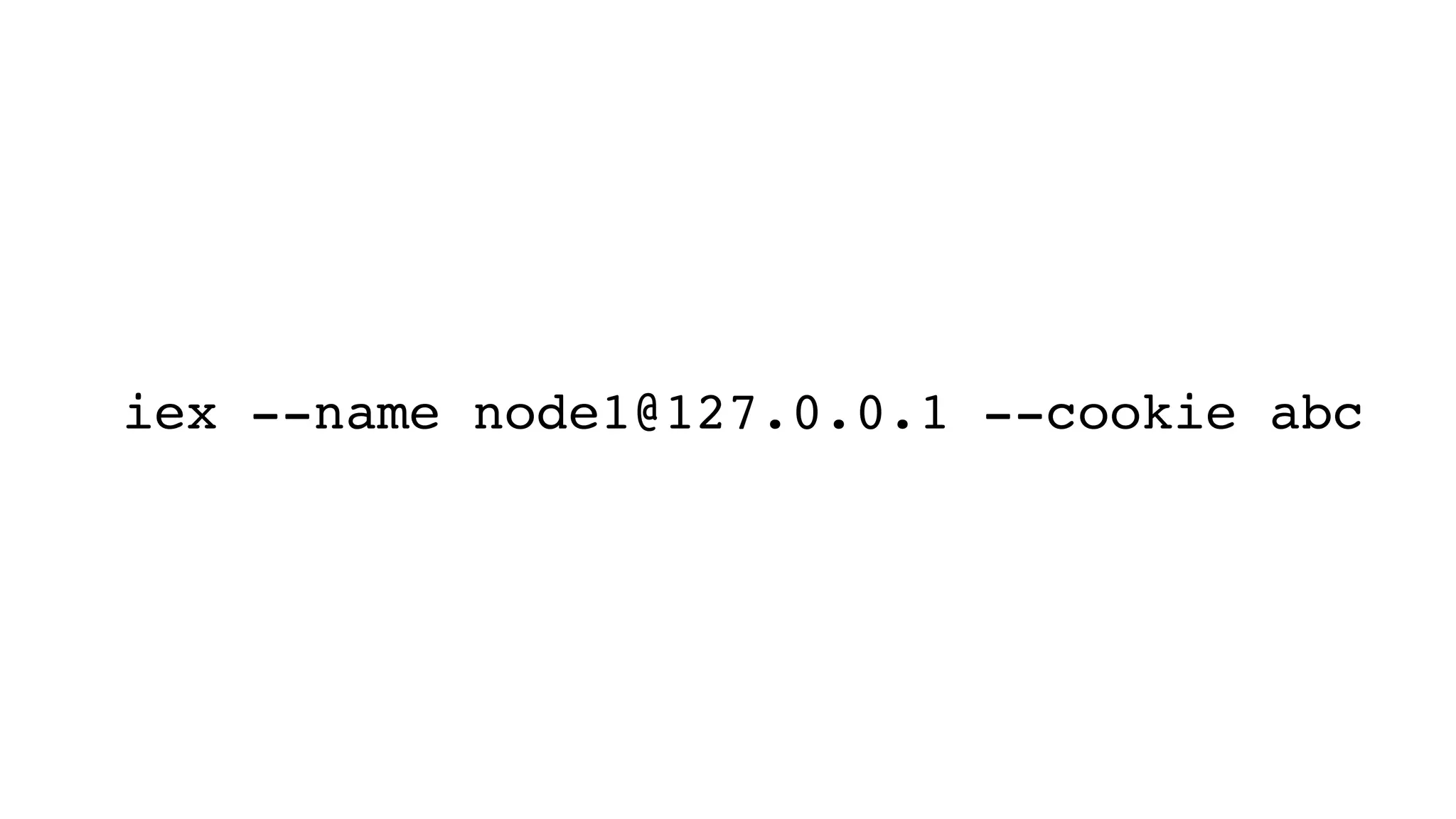

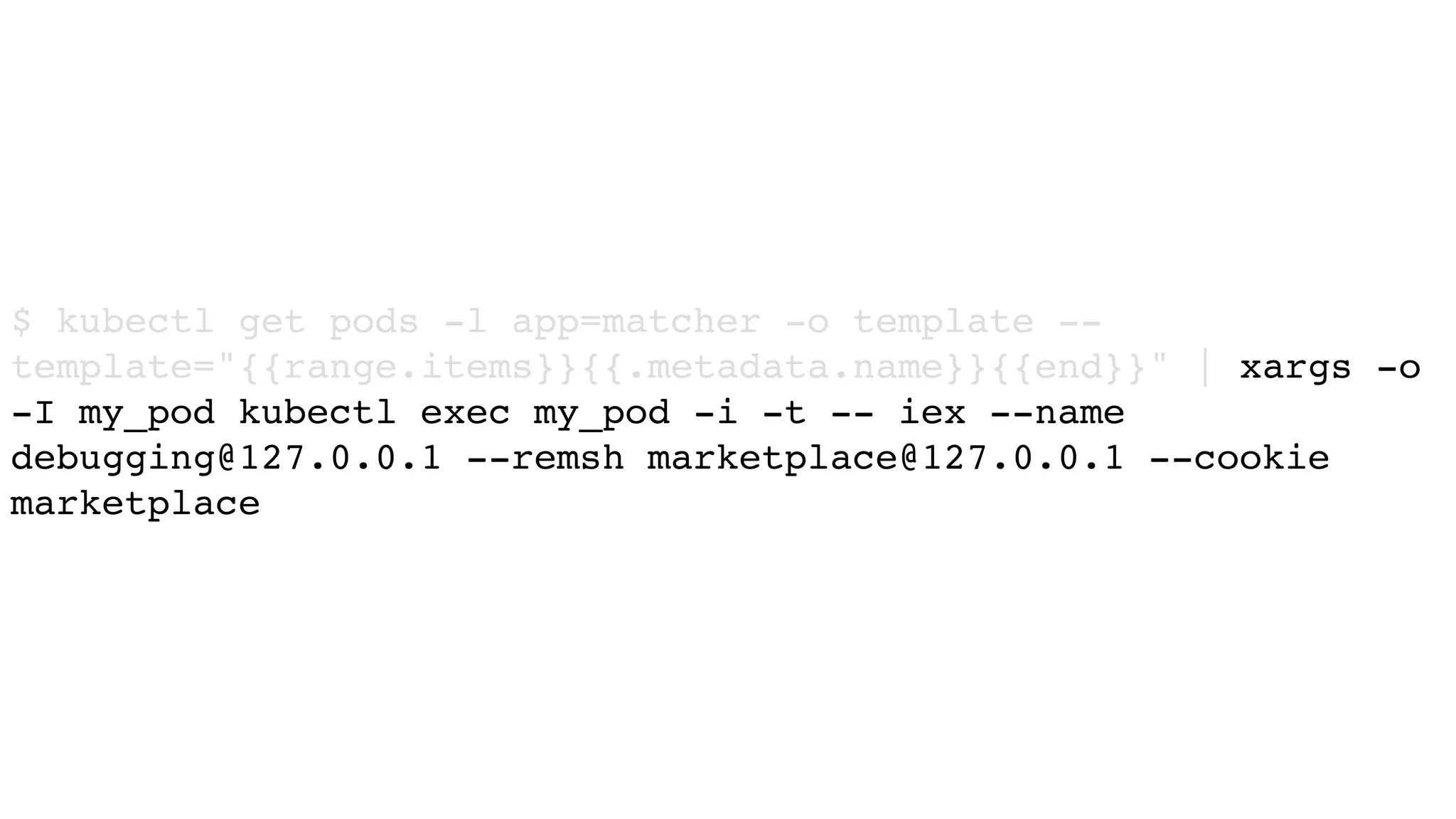

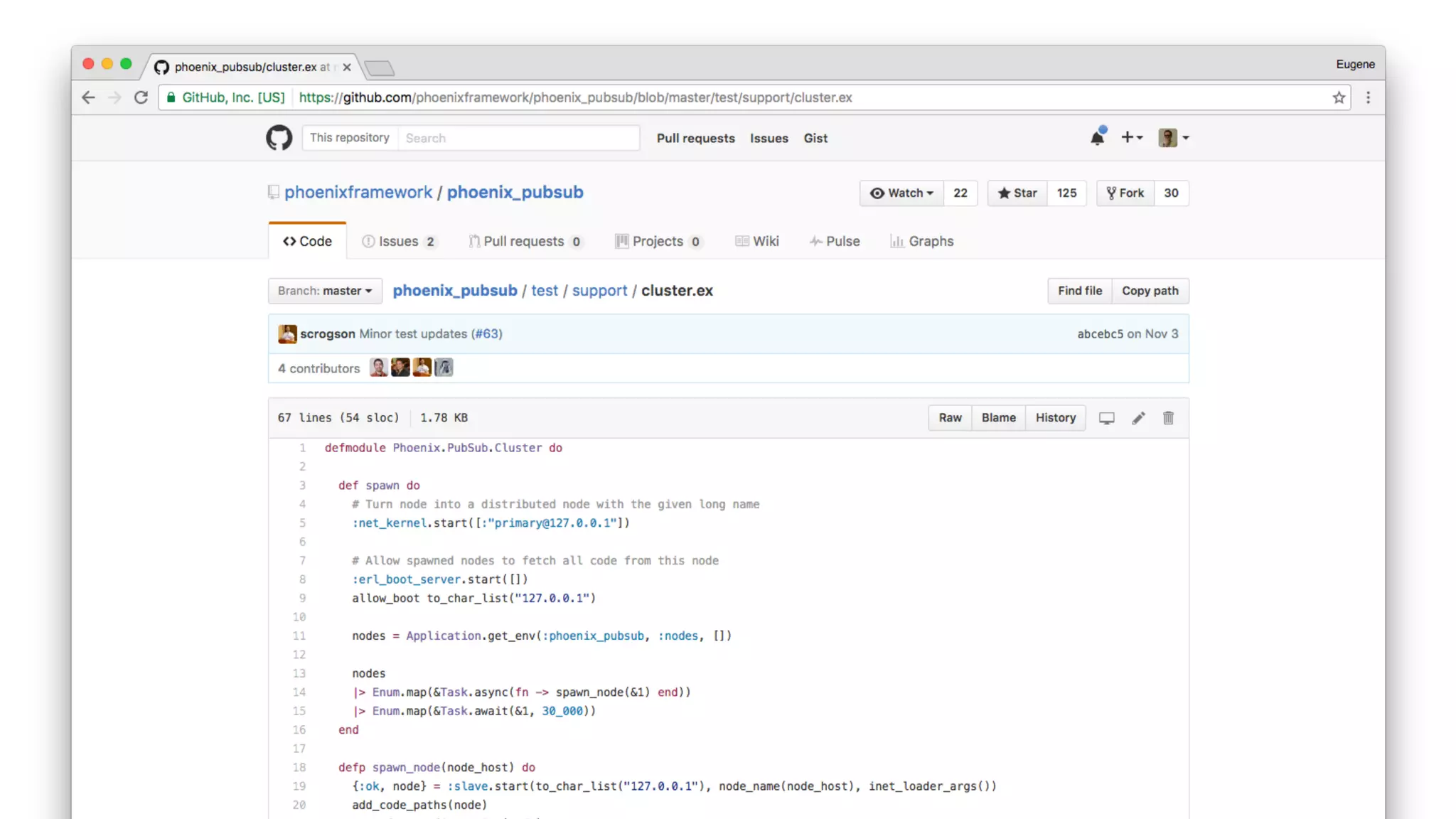

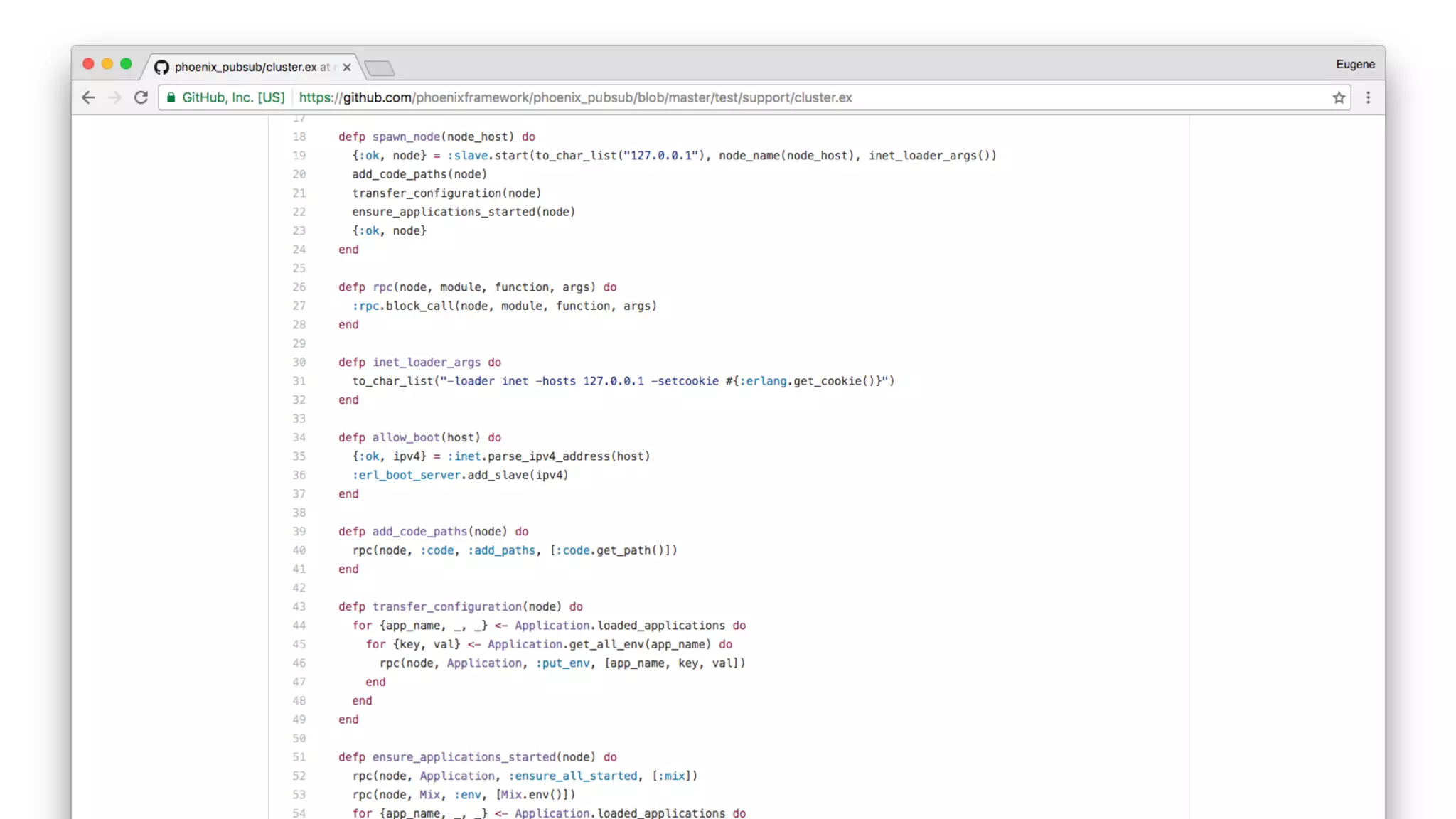

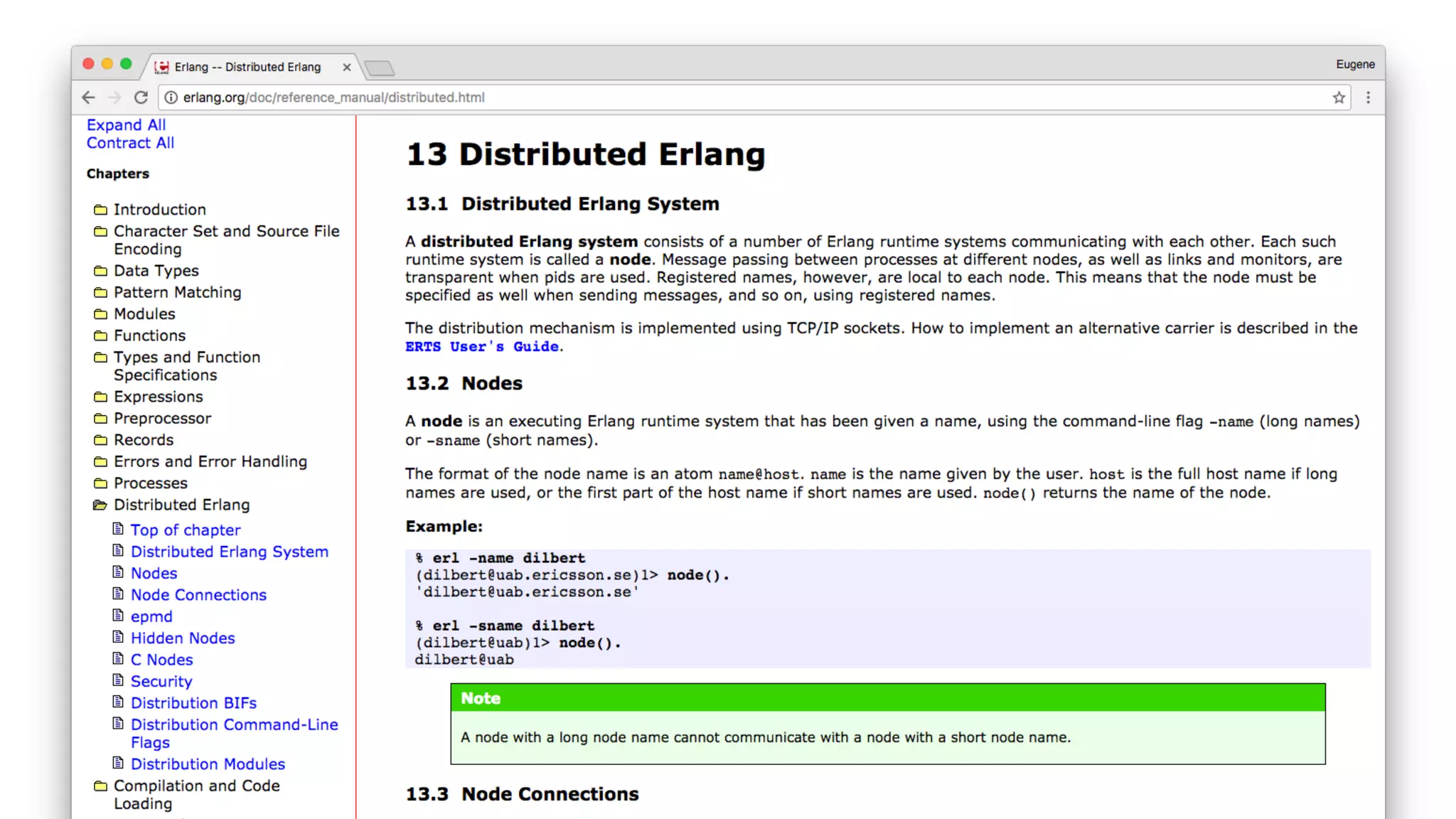

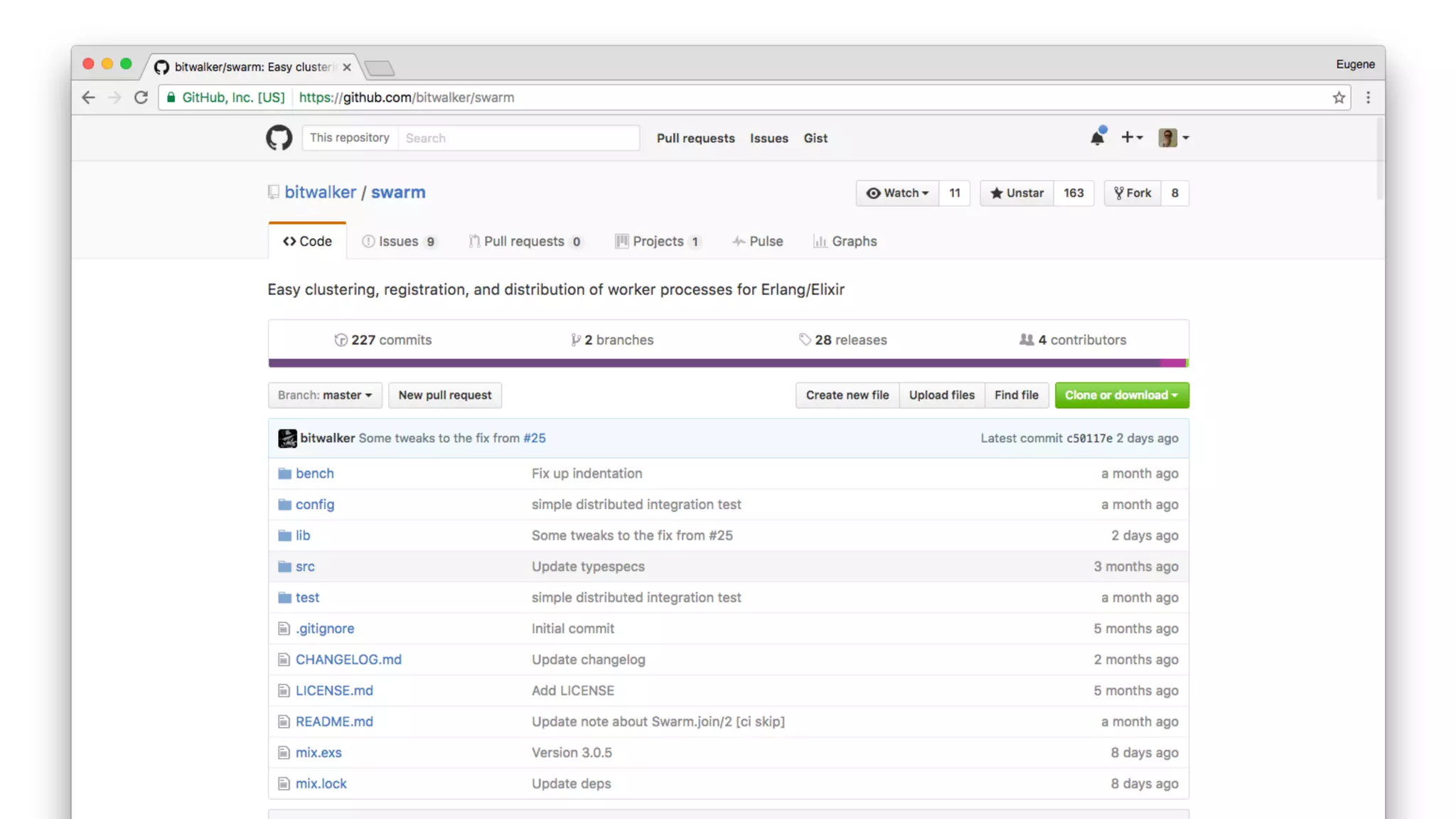

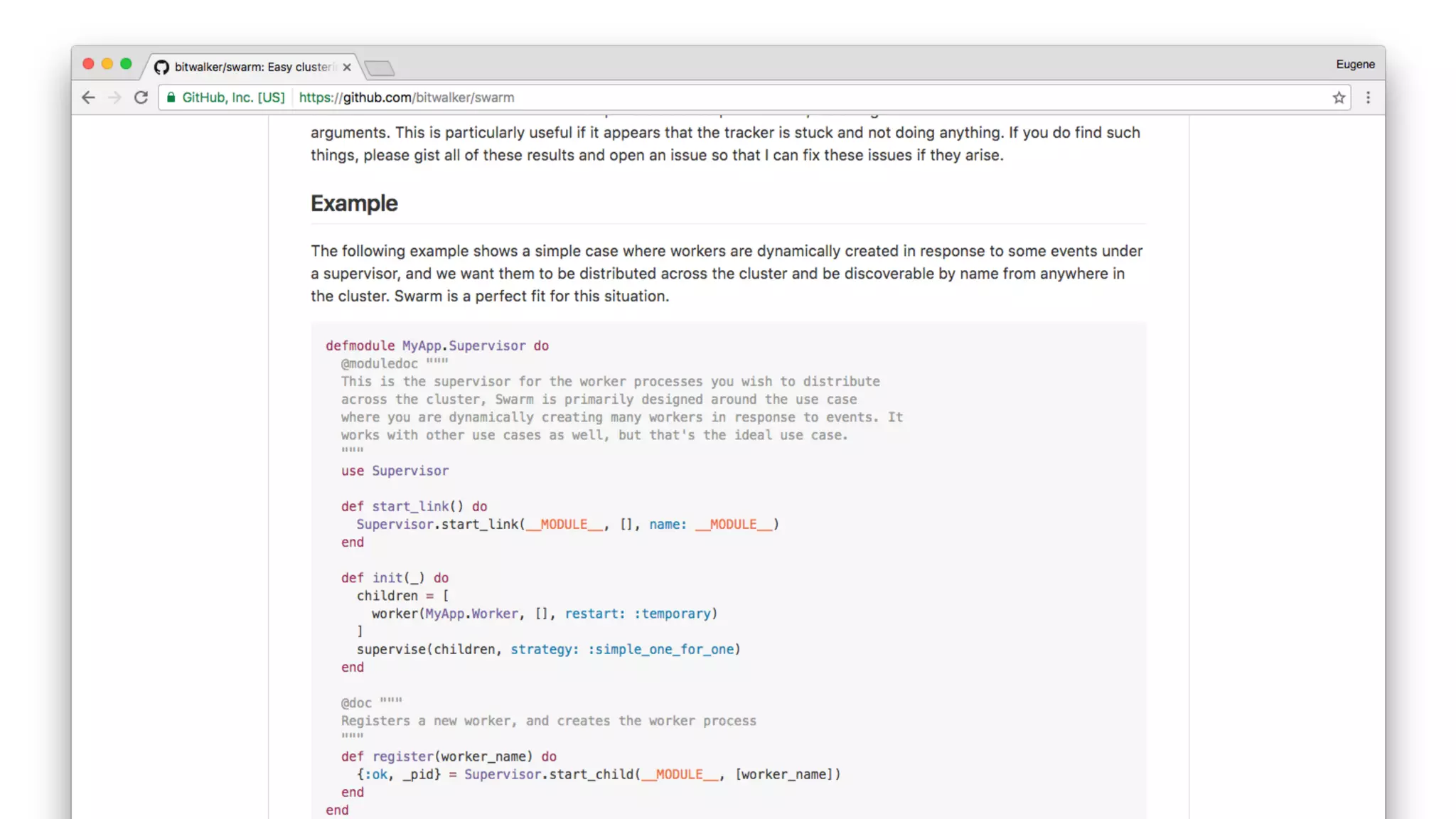

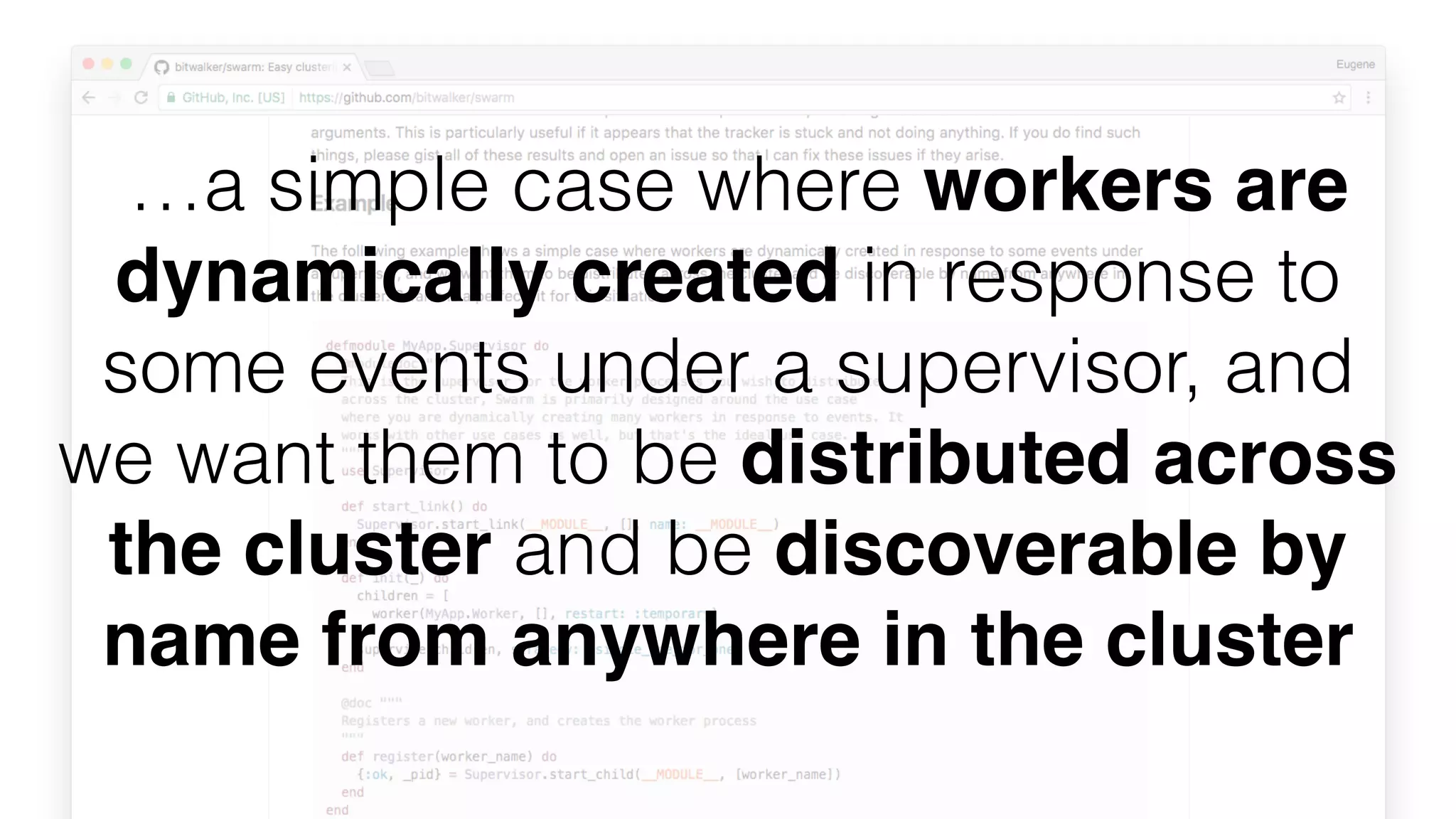

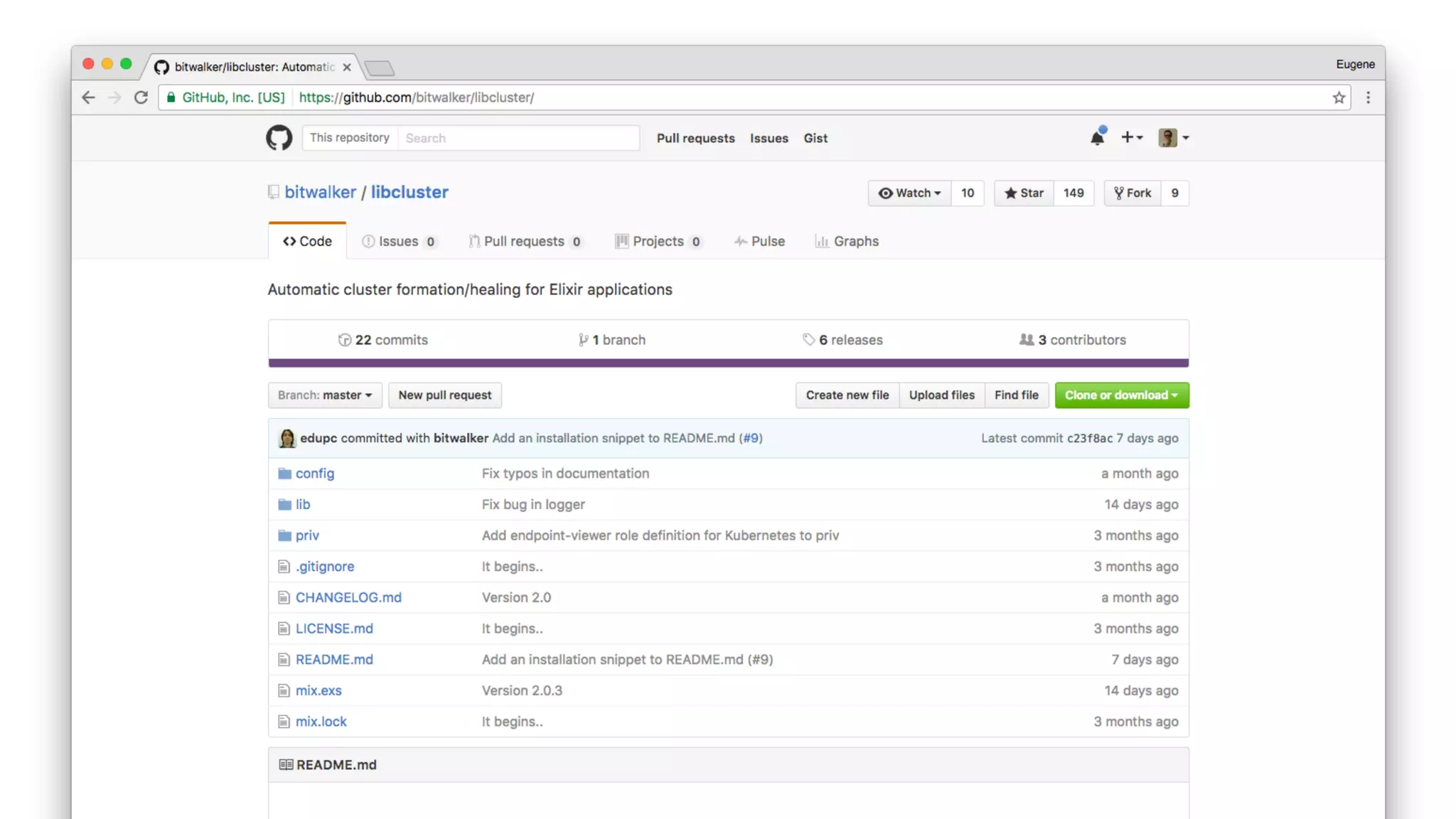

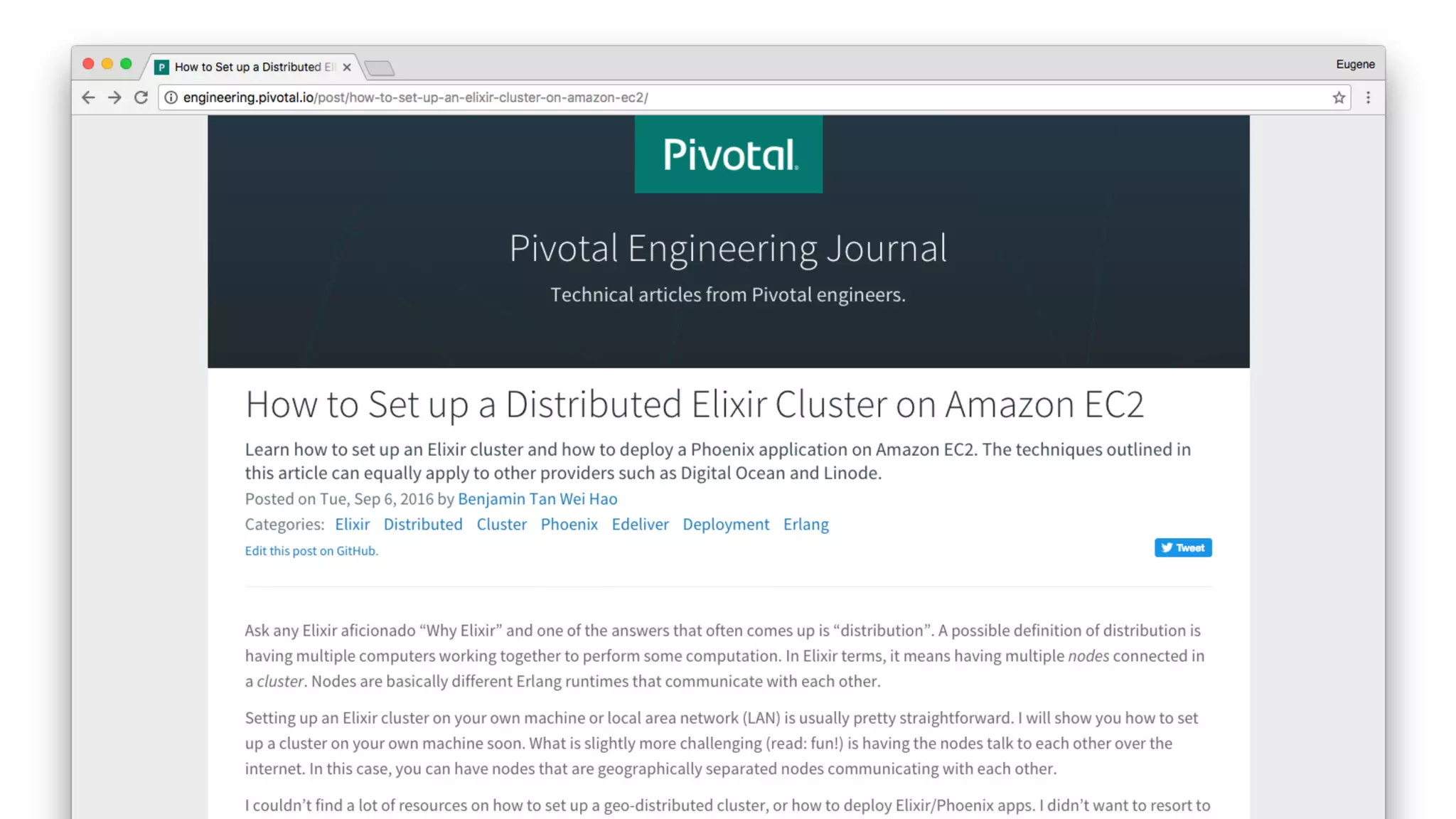

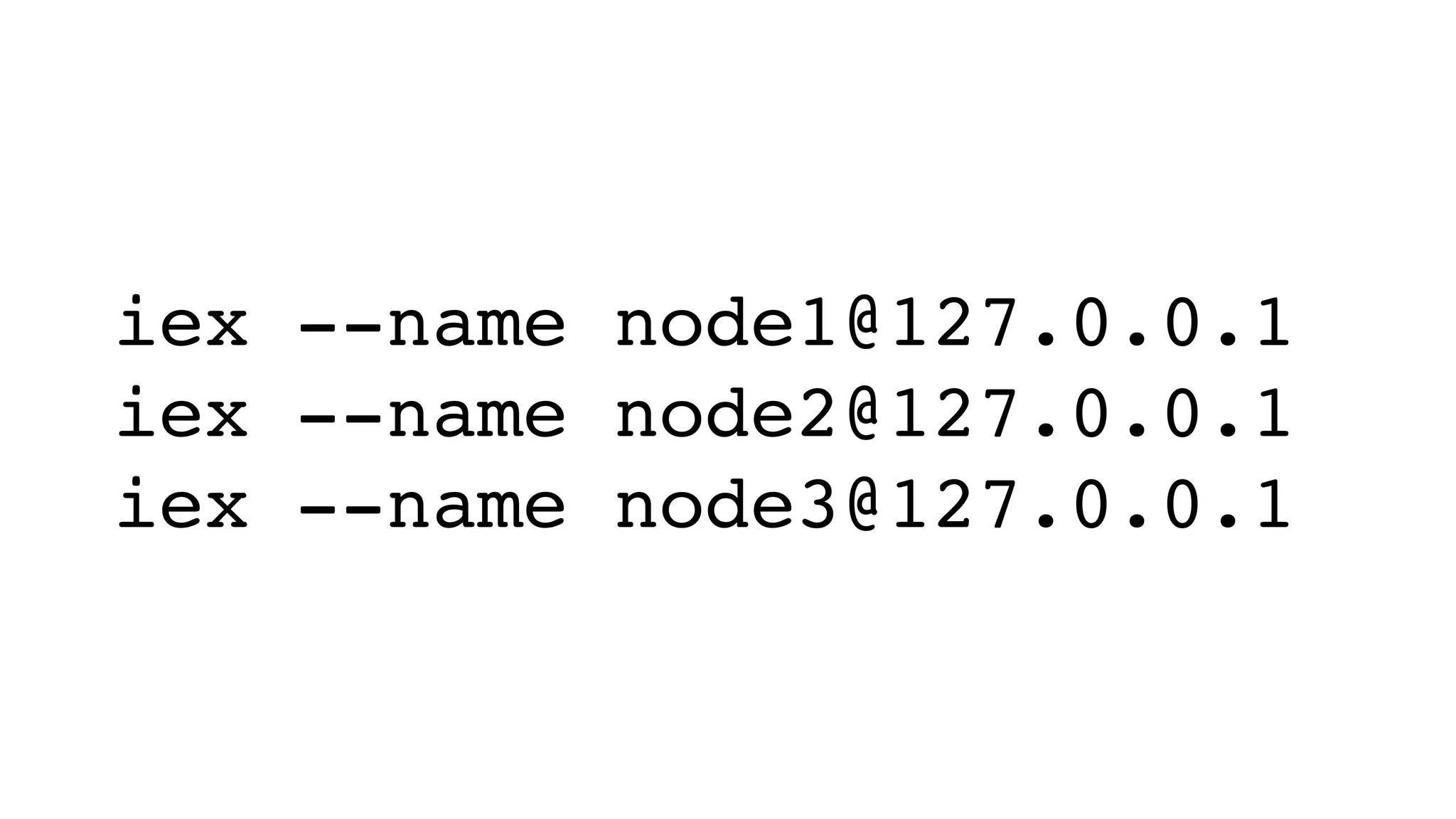

The document discusses clustering in Erlang and Elixir. It defines a cluster as a set of connected computers that work together. It describes different types of clusters and how to start nodes, connect nodes, send messages between nodes, and call functions remotely. It provides examples of monitoring nodes, pinging nodes, and getting node names. Distributed nodes running on different hosts can be connected by configuring /etc/hosts and ~/.hosts.erlang. Libraries like bitwalker/swarm and bitwalker/libcluster can help with clustering worker processes.

![:rpc.call(:nodex, M, :f, ["a"])](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-19-2048.jpg)

![~> iex

Erlang/OTP 19 [erts-8.1] [source] [64-bit] [smp:8:8] [async-

threads:10] [hipe] [kernel-poll:false] [dtrace]

Interactive Elixir (1.3.4) - press Ctrl+C to exit (type h()

ENTER for help)

iex(1)> node()

:nonode@nohost

iex(2)>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-24-2048.jpg)
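
The same check can be made from code rather than at the prompt. The following is a minimal sketch, assuming a hypothetical module name `NodeInfo`; `Node.self/0`, `Node.alive?/0` and `Node.list/0` are standard Elixir functions.

```elixir
defmodule NodeInfo do
  # Hypothetical helper, not taken from the slides.
  # Collects the basic distribution facts about the current VM.
  def describe do
    %{
      name: Node.self(),           # :nonode@nohost when started without --name/--sname
      distributed?: Node.alive?(), # true only once the node is part of a distributed system
      peers: Node.list()           # currently connected visible nodes
    }
  end
end

IO.inspect(NodeInfo.describe())
```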

![~> iex --name eugene

Erlang/OTP 19 [erts-8.1] [source] [64-bit] [smp:8:8] [async-

threads:10] [hipe] [kernel-poll:false] [dtrace]

Interactive Elixir (1.3.4) - press Ctrl+C to exit (type h()

ENTER for help)

iex(eugene@Eugenes-MacBook-Pro-2.local)1>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-26-2048.jpg)

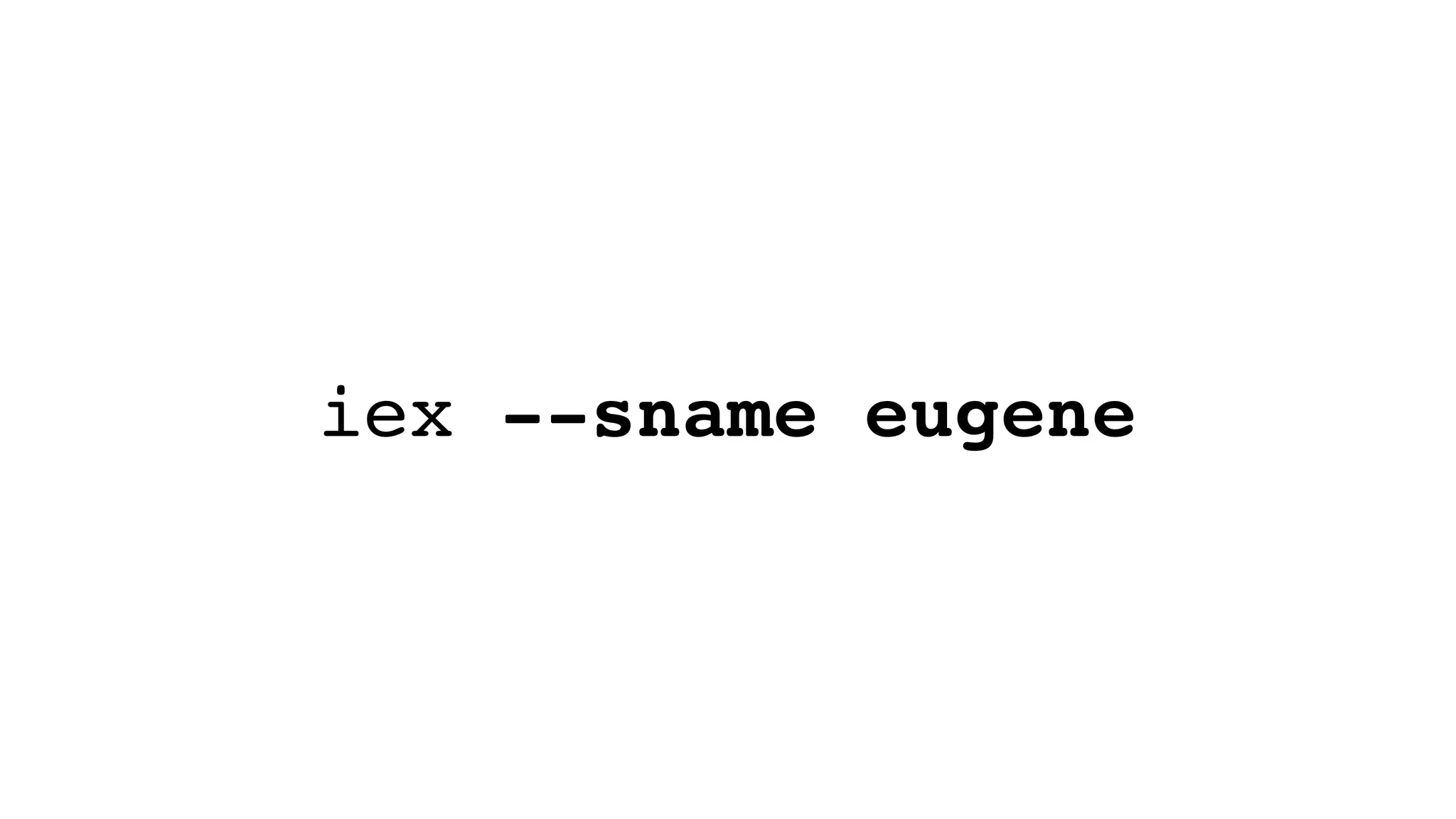

![~> iex --sname eugene

Erlang/OTP 19 [erts-8.1] [source] [64-bit] [smp:8:8] [async-

threads:10] [hipe] [kernel-poll:false] [dtrace]

Interactive Elixir (1.3.4) - press Ctrl+C to exit (type h()

ENTER for help)

iex(eugene@Eugenes-MacBook-Pro-2)1>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-28-2048.jpg)

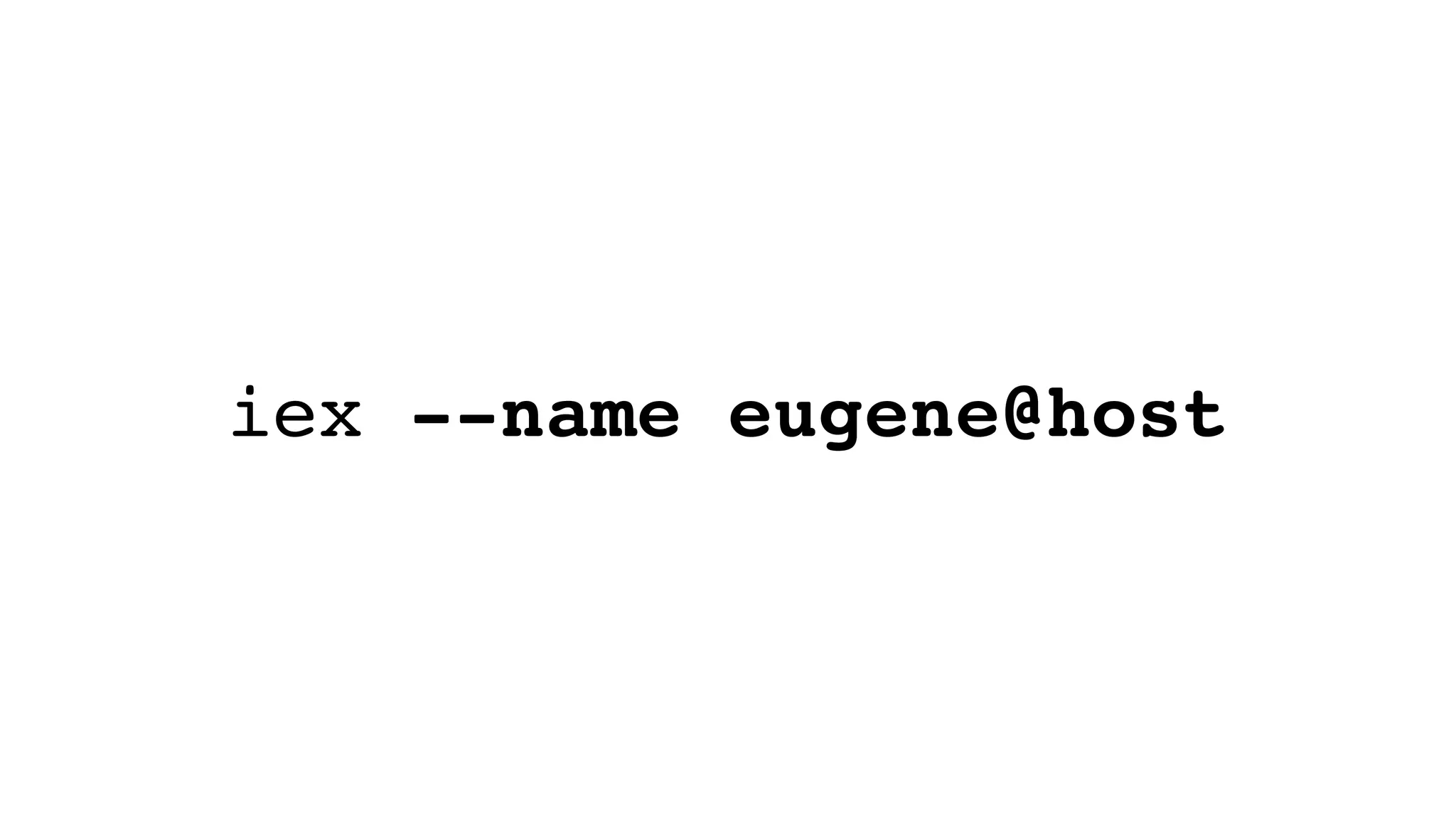

![~> iex --name eugene@host

Erlang/OTP 19 [erts-8.1] [source] [64-bit] [smp:8:8] [async-

threads:10] [hipe] [kernel-poll:false] [dtrace]

Interactive Elixir (1.3.4) - press Ctrl+C to exit (type h()

ENTER for help)

iex(eugene@host)1>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-30-2048.jpg)
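
The prompts above differ only in the host part of the node name: `--sname` gives a host without a domain, `--name` gives one with a domain or whatever host was passed explicitly. As a rough illustration, the sketch below splits a node name into its parts. The module `NodeName` and the dot-based check are assumptions made for this example, not part of the talk, and the dot check is only a heuristic.

```elixir
defmodule NodeName do
  # Hypothetical helper: splits a node name atom into name and host.
  # The "contains a dot" test is a heuristic for a domain-qualified host.
  def parse(node) when is_atom(node) do
    case String.split(Atom.to_string(node), "@", parts: 2) do
      [name, host] ->
        {:ok, %{name: name, host: host, fully_qualified?: String.contains?(host, ".")}}

      _ ->
        :error
    end
  end
end

IO.inspect(NodeName.parse(:"eugene@Eugenes-MacBook-Pro-2.local"))
IO.inspect(NodeName.parse(:"eugene@Eugenes-MacBook-Pro-2"))
```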

![# On node1

iex(node1@127.0.0.1)1> :rpc.call(:'node2@127.0.0.1', Enum, :reverse, [100..1])

[1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21,

22, 23, 24, 25, 26, 27, 28, 29, 30, 31, 32, 33, 34, 35, 36, 37, 38, 39, 40,

41, 42, 43, 44, 45, 46, 47, 48, 49, 50, …]

iex(node1@127.0.0.1)2>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-38-2048.jpg)
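
`:rpc.call/4` returns `{:badrpc, reason}` when the call cannot be completed, for example when the target node is down. A minimal sketch of wrapping that in tagged tuples follows; the module name `RemoteCall` is hypothetical. One caveat of this approach: a remote function that legitimately returns `{:badrpc, _}` would be misread as a failure.

```elixir
defmodule RemoteCall do
  # Hypothetical wrapper around :rpc.call/4 that separates
  # transport failures from ordinary results.
  def run(node, mod, fun, args) do
    case :rpc.call(node, mod, fun, args) do
      {:badrpc, reason} -> {:error, reason}
      result -> {:ok, result}
    end
  end
end

# Targeting the local node needs no cluster, so the sketch can be tried anywhere.
IO.inspect(RemoteCall.run(node(), Enum, :reverse, [[1, 2, 3]]))
```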

![~> iex

Erlang/OTP 19 [erts-8.1] [source] [64-bit] [smp:8:8] [async-

threads:10] [hipe] [kernel-poll:false] [dtrace]

Interactive Elixir (1.3.4) - press Ctrl+C to exit (type h()

ENTER for help)

iex(1)> node()

:nonode@nohost

iex(2)> Process.registered() |> Enum.find(&(&1

== :net_kernel))

nil

iex(3)> :net_kernel.start([:'mynode@127.0.0.1'])

{:ok, #PID<0.84.0>}

iex(mynode@127.0.0.1)4> Process.registered() |>

Enum.find(&(&1 == :net_kernel))

:net_kernel](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-60-2048.jpg)
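
Elixir wraps `:net_kernel.start/1` as `Node.start/2`, so the steps in this session can be folded into one function. The sketch below is an illustration under assumptions: the module name `DistSketch` is hypothetical, and starting distribution at runtime typically requires a reachable epmd, so the error branch should be expected on machines where it is not running.

```elixir
defmodule DistSketch do
  # Hypothetical helper: turns the current VM into a distributed node
  # unless it already is one.
  def ensure_started(name) when is_atom(name) do
    if Node.alive?() do
      {:ok, Node.self()}
    else
      case Node.start(name, :longnames) do
        {:ok, _pid} -> {:ok, Node.self()}
        {:error, reason} -> {:error, reason}
      end
    end
  end

  # Same check as Process.registered() |> Enum.find(...), via a direct lookup.
  def net_kernel_running?, do: Process.whereis(:net_kernel) != nil
end
```

A call such as `DistSketch.ensure_started(:"mynode@127.0.0.1")` mirrors the `:net_kernel.start/1` call shown in the slide.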

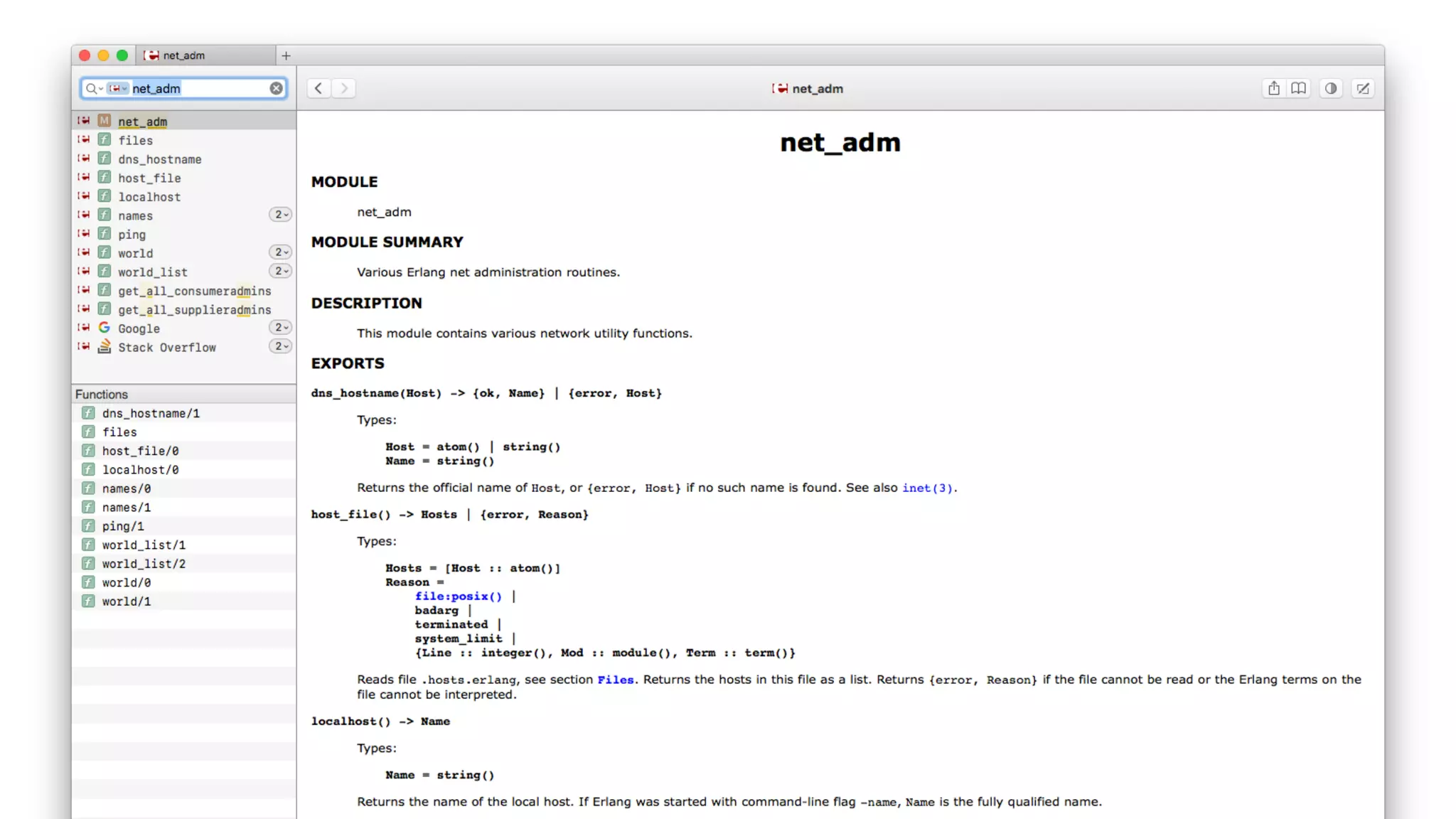

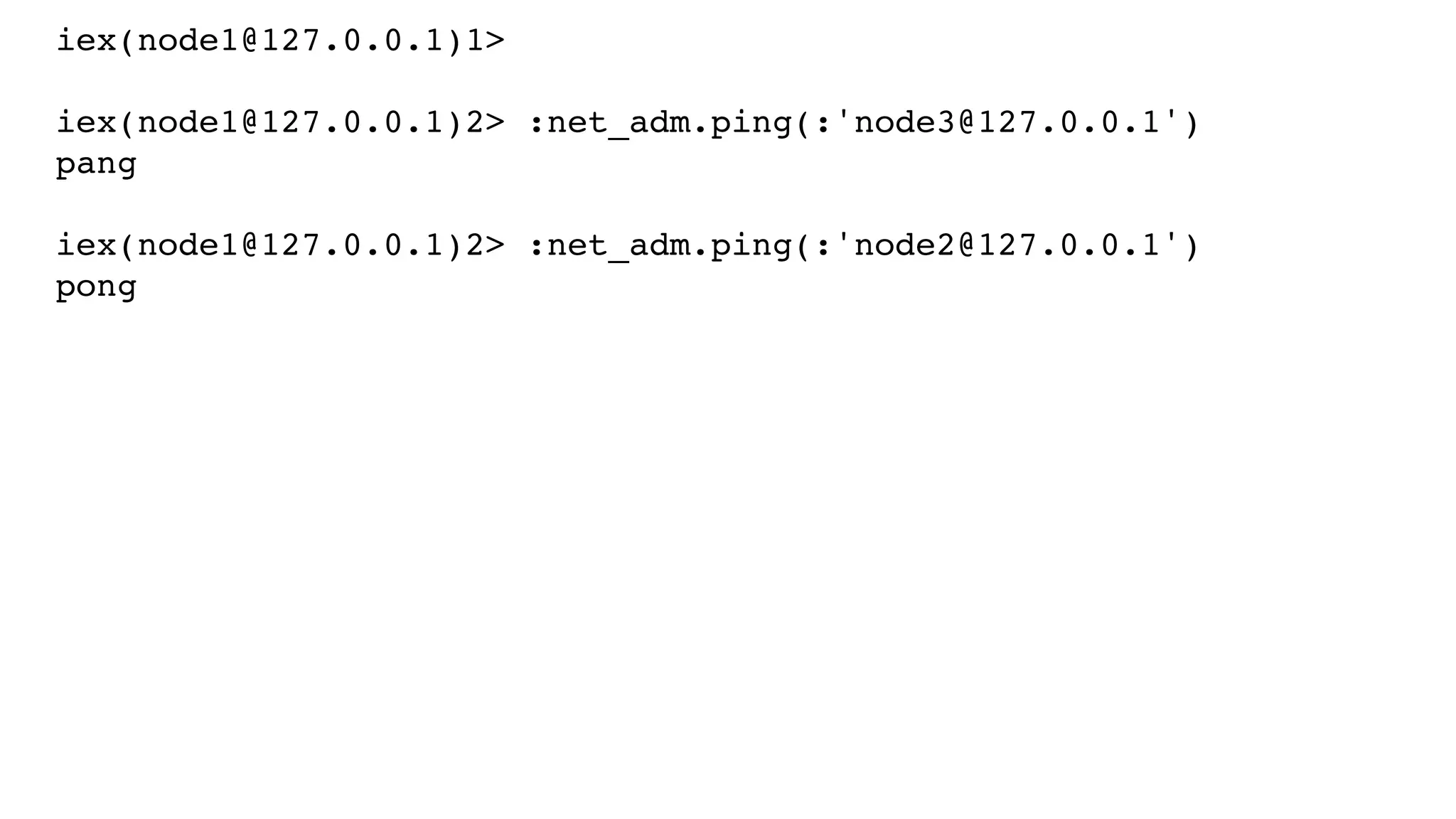

![iex(node1@127.0.0.1)1>

iex(node1@127.0.0.1)2> :net_adm.names()

{:ok, [{'rabbit', 25672}, {'node1', 51813}, {'node2', 51815}]}

iex(node1@127.0.0.1)3>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-79-2048.jpg)

![iex(node1@127.0.0.1)1>

iex(node1@127.0.0.1)2> :net_adm.names()

{:ok, [{'rabbit', 25672}, {'node1', 51813}, {'node2', 51815}]}

iex(node1@127.0.0.1)3> Node.list()

[]

iex(node1@127.0.0.1)4>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-80-2048.jpg)
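
`:net_adm.names/0` lists the names registered with epmd on the host, while `Node.list/0` only lists nodes this VM is actually connected to, which is why it is still empty here. A minimal sketch of connecting explicitly follows; the module name `ConnectSketch` is hypothetical. `Node.connect/1` returns `true`, `false`, or `:ignored` when the local node is not distributed.

```elixir
defmodule ConnectSketch do
  # Hypothetical helper: attempts a connection to every given node
  # and records what Node.connect/1 returned for each.
  def connect_all(nodes) do
    Map.new(nodes, fn n -> {n, Node.connect(n)} end)
  end
end

IO.inspect(ConnectSketch.connect_all([:"node2@127.0.0.1"]))
IO.inspect(Node.list())
```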

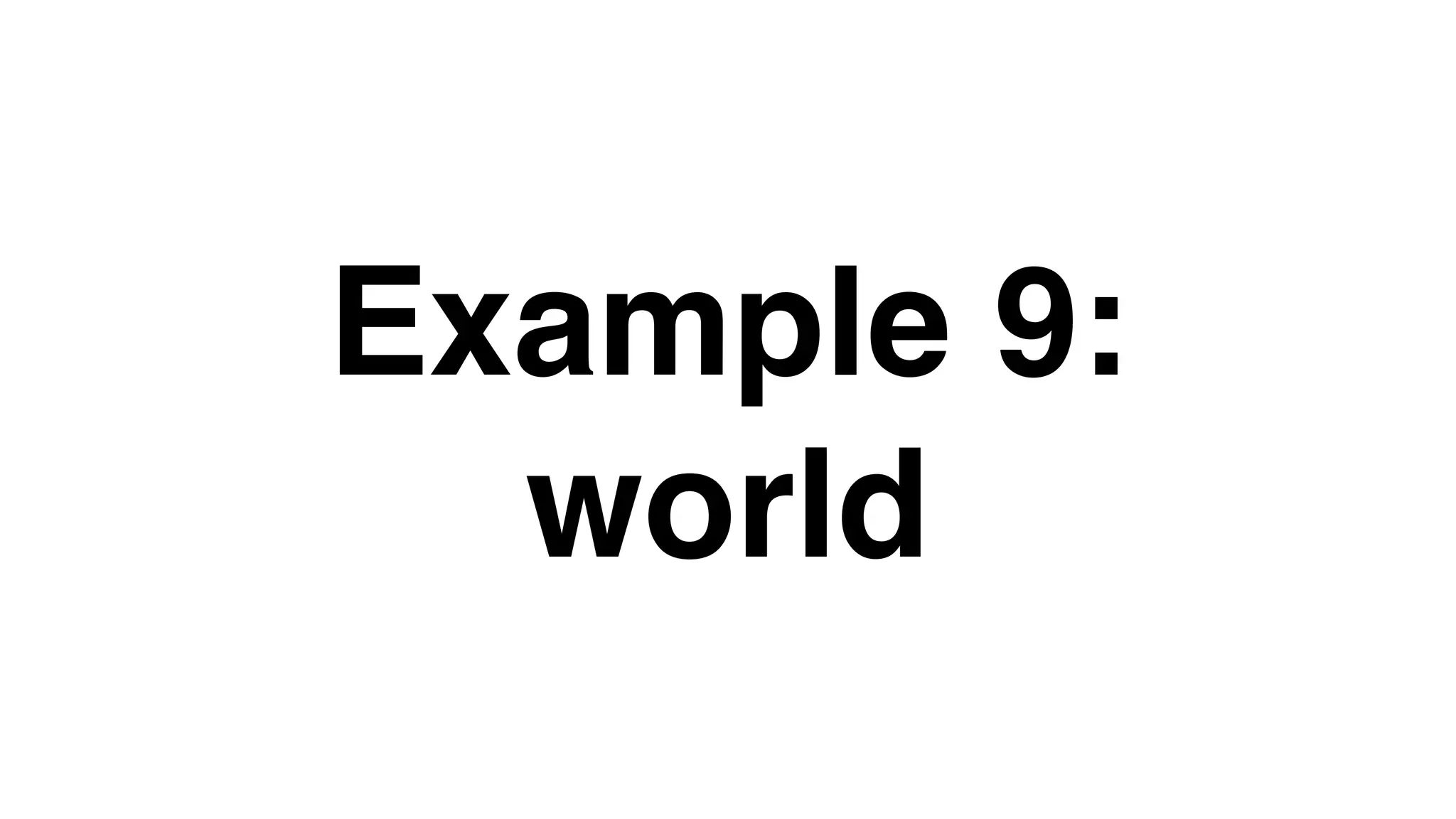

![iex(node1@host1.com)1>

iex(node1@host1.com)1> :net_adm.world()

[:"node1@host1.com", :"node2@host1.com", :"node3@host2.com", :"node4@host2.com", :"node5@host3.com", :"node6@host3.com"]

iex(node1@host1.com)2>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-86-2048.jpg)
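
`:net_adm.world/0` finds these nodes by reading a `.hosts.erlang` file, which holds one host name per line written as a quoted Erlang atom followed by a period. The sketch below renders such a file from a list of hosts. The module name `HostsFile` is hypothetical, and the host names are the ones visible in the slide.

```elixir
defmodule HostsFile do
  # Hypothetical helper: renders host names in the .hosts.erlang format,
  # one quoted atom per line, each terminated by a period and a newline.
  def render(hosts) do
    Enum.map_join(hosts, "", fn host -> "'#{host}'.\n" end)
  end
end

IO.write(HostsFile.render(["host1.com", "host2.com", "host3.com"]))
```

The resulting text could be written to `~/.hosts.erlang` with `File.write/2`; that step is left out so the sketch has no side effects.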

![$ iex --remsh foo@127.0.0.1 --cookie abc --name bar@localhost

Erlang/OTP 19 [erts-8.1] [source] [64-bit]

[smp:8:8] [async-threads:10] [hipe] [kernel-

poll:false] [dtrace]

Interactive Elixir (1.3.4) - press Ctrl+C to

exit (type h() ENTER for help)

iex(foo@127.0.0.1)1>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-89-2048.jpg)

![iex(node1@127.0.0.1)1> :mnesia.create_schema([:'node1@127.0.0.1'])

:ok

iex(node1@127.0.0.1)2> :mnesia.start()

:ok

iex(node1@127.0.0.1)3> :mnesia.info()

---> Processes holding locks <---

---> Processes waiting for locks <---

---> Participant transactions <---

---> Coordinator transactions <---

---> Uncertain transactions <---

---> Active tables <---

schema : with 1 records occupying 413 words of mem

===> System info in version "4.14.1", debug level = none <===

opt_disc. Directory "/Users/gmile/Mnesia.node1@127.0.0.1" is used.

use fallback at restart = false

running db nodes = ['node1@127.0.0.1']

stopped db nodes = []

master node tables = []

remote = []

ram_copies = []

disc_copies = [schema]

disc_only_copies = []

[{'node1@127.0.0.1',disc_copies}] = [schema]

2 transactions committed, 0 aborted, 0 restarted, 0 logged to disc

0 held locks, 0 in queue; 0 local transactions, 0 remote

0 transactions waits for other nodes: []

:ok

iex(node1@127.0.0.1)4>

“schema” table exists

as a disc_copies replica (RAM + disk)

on node1@127.0.0.1](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-122-2048.jpg)

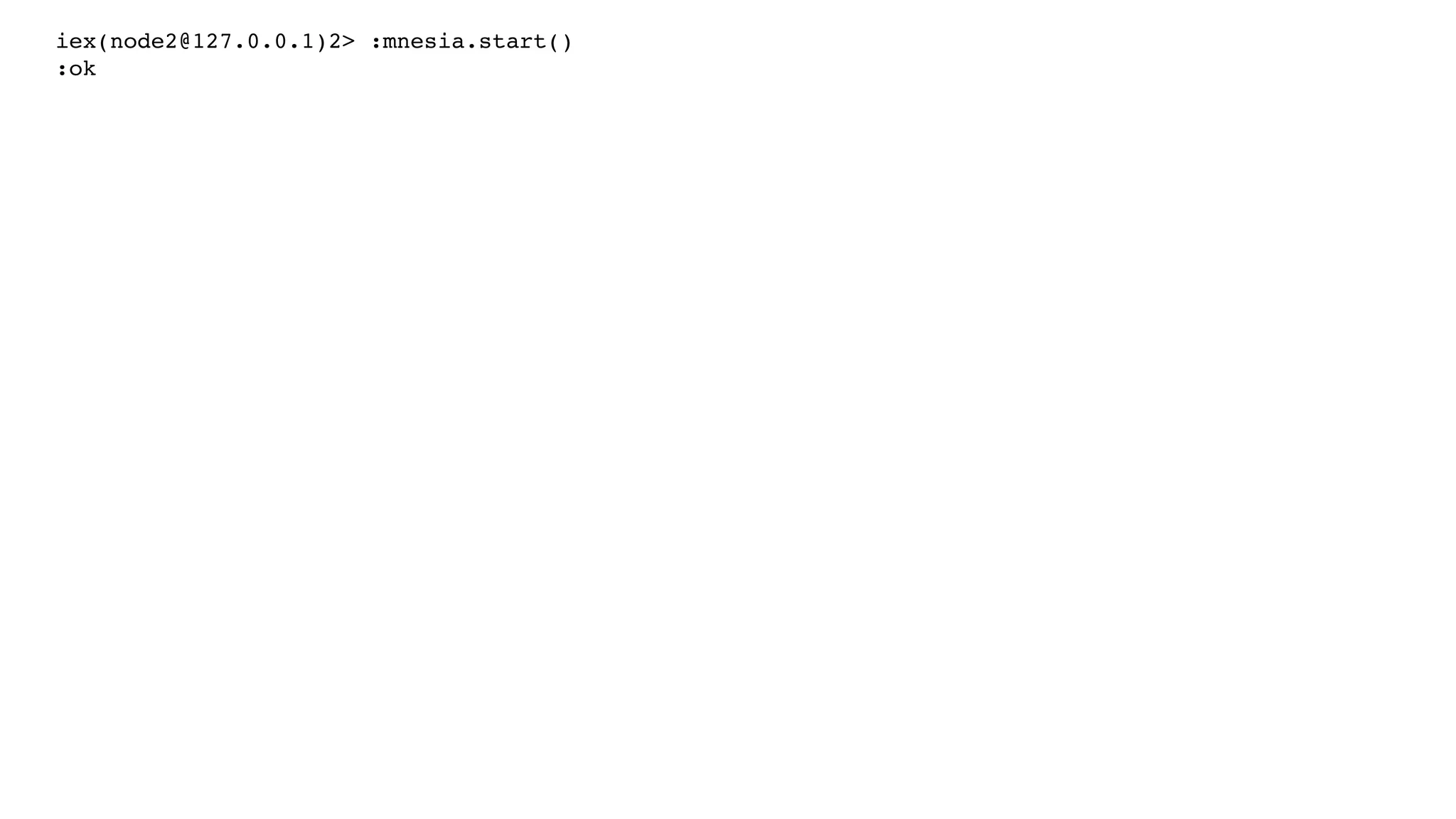

![iex(node2@127.0.0.1)2> :mnesia.start()

:ok

iex(node3@127.0.0.1)2> :mnesia.start()

:ok

iex(node1@127.0.0.1)2> :mnesia.change_config(:extra_db_nodes, [:’node2@127.0.0.1’])

{:ok, [:"node2@127.0.0.1"]}](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-125-2048.jpg)

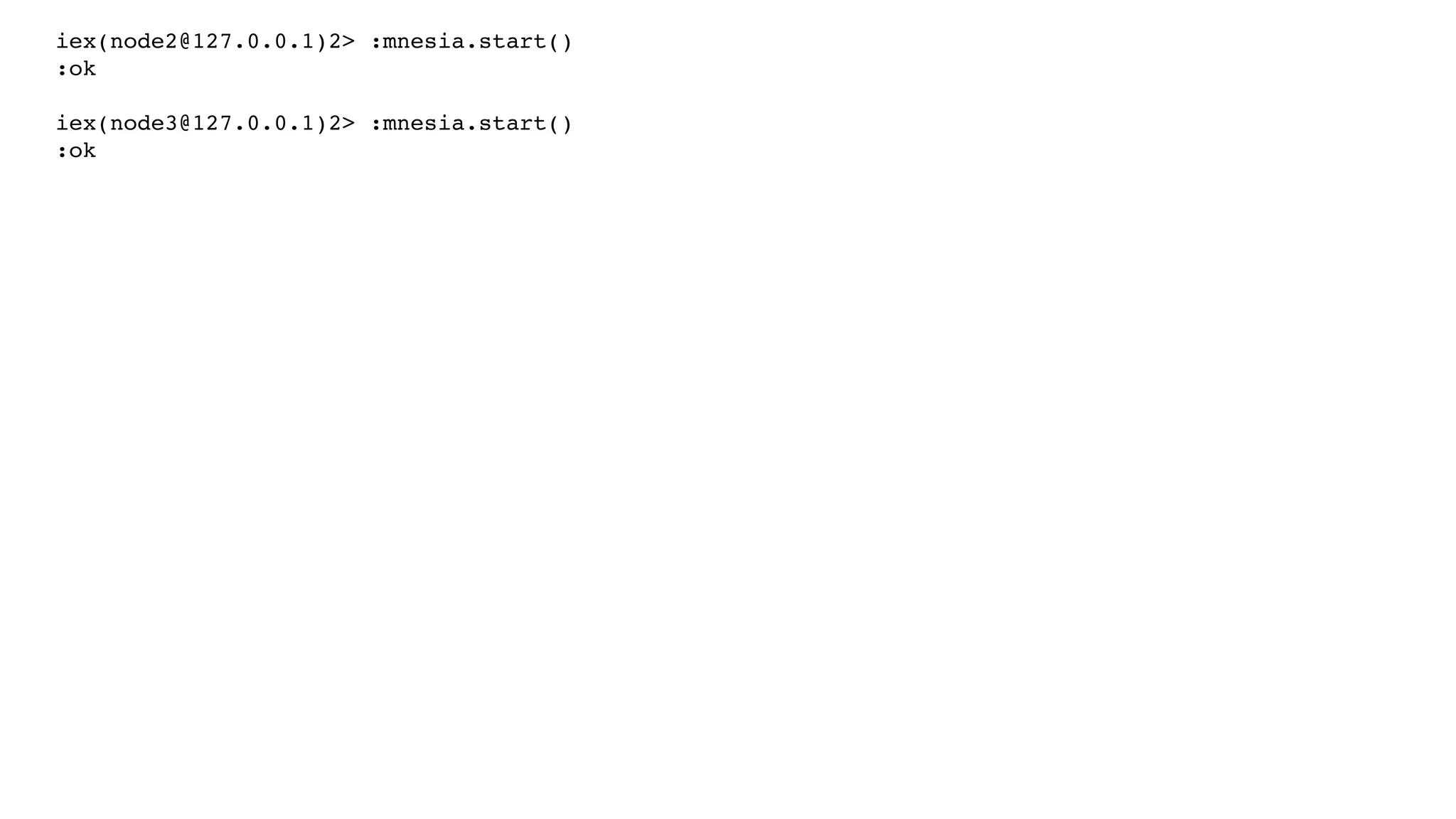

![iex(node2@127.0.0.1)2> :mnesia.start()

:ok

iex(node3@127.0.0.1)2> :mnesia.start()

:ok

iex(node1@127.0.0.1)2> :mnesia.change_config(:extra_db_nodes, [:’node2@127.0.0.1’])

{:ok, [:"node2@127.0.0.1"]}

iex(node1@127.0.0.1)3> :mnesia.change_config(:extra_db_nodes, [:’node3@127.0.0.1’])

{:ok, [:"node3@127.0.0.1"]}](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-126-2048.jpg)

![iex(node2@127.0.0.1)2> :mnesia.start()

:ok

iex(node3@127.0.0.1)2> :mnesia.start()

:ok

iex(node1@127.0.0.1)2> :mnesia.change_config(:extra_db_nodes, [:’node2@127.0.0.1’])

{:ok, [:"node2@127.0.0.1"]}

iex(node1@127.0.0.1)3> :mnesia.change_config(:extra_db_nodes, [:’node3@127.0.0.1’])

{:ok, [:"node3@127.0.0.1"]}

iex(node1@127.0.0.1)1> :mnesia.create_table(:books, [disc_copies: [:'node1@127.0.0.1'],

attributes: [:id, :title, :year]])

:ok](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-127-2048.jpg)

![iex(node2@127.0.0.1)2> :mnesia.start()

:ok

iex(node3@127.0.0.1)2> :mnesia.start()

:ok

iex(node1@127.0.0.1)2> :mnesia.change_config(:extra_db_nodes, [:’node2@127.0.0.1’])

{:ok, [:"node2@127.0.0.1"]}

iex(node1@127.0.0.1)3> :mnesia.change_config(:extra_db_nodes, [:’node3@127.0.0.1’])

{:ok, [:"node3@127.0.0.1"]}

iex(node1@127.0.0.1)1> :mnesia.create_table(:books, [disc_copies: [:'node1@127.0.0.1'],

attributes: [:id, :title, :year]])

:ok

iex(node1@127.0.0.1)4> :mnesia.add_table_copy(:books, :'node2@127.0.0.1', :ram_copies)

:ok](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-128-2048.jpg)

![iex(node2@127.0.0.1)2> :mnesia.start()

:ok

iex(node3@127.0.0.1)2> :mnesia.start()

:ok

iex(node1@127.0.0.1)2> :mnesia.change_config(:extra_db_nodes, [:'node2@127.0.0.1'])

{:ok, [:"node2@127.0.0.1"]}

iex(node1@127.0.0.1)3> :mnesia.change_config(:extra_db_nodes, [:'node3@127.0.0.1'])

{:ok, [:"node3@127.0.0.1"]}

iex(node1@127.0.0.1)1> :mnesia.create_table(:books, [disc_copies: [:'node1@127.0.0.1'],

attributes: [:id, :title, :year]])

{:atomic, :ok}

iex(node1@127.0.0.1)4> :mnesia.add_table_copy(:books, :'node2@127.0.0.1', :ram_copies)

{:atomic, :ok}

iex(node1@127.0.0.1)5> :mnesia.add_table_copy(:books, :'node3@127.0.0.1', :ram_copies)

{:atomic, :ok}](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-129-2048.jpg)
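
The two steps in this session, announcing extra nodes and then copying a table to them, can be combined. The following is a rough sketch under assumptions: the module name `ReplicaSketch` is hypothetical, Mnesia is expected to be running on every node involved, and the table must already exist on the local node.

```elixir
defmodule ReplicaSketch do
  # Hypothetical helper: connects Mnesia to the given nodes, then adds a
  # copy of the table on each node that was actually reached.
  # Returns a list of {node, result} pairs, or the error from change_config.
  def join_and_copy(table, nodes, storage \\ :ram_copies) do
    with {:ok, connected} <- :mnesia.change_config(:extra_db_nodes, nodes) do
      Enum.map(connected, fn n ->
        {n, :mnesia.add_table_copy(table, n, storage)}
      end)
    end
  end
end
```

On `node1`, a call such as `ReplicaSketch.join_and_copy(:books, [:"node2@127.0.0.1", :"node3@127.0.0.1"])` would correspond to the four commands shown in the slide.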

![iex(node1@127.0.0.1)6> :mnesia.info()

---> Processes holding locks <---

---> Processes waiting for locks <---

---> Participant transactions <---

---> Coordinator transactions <---

---> Uncertain transactions <---

---> Active tables <---

books : with 0 records occupying 304 words of mem

schema : with 2 records occupying 566 words of mem

===> System info in version "4.14.1", debug level = none <===

opt_disc. Directory "/Users/gmile/Mnesia.node1@127.0.0.1" is used.

use fallback at restart = false

running db nodes = ['node3@127.0.0.1','node2@127.0.0.1','node1@127.0.0.1']

stopped db nodes = []

master node tables = []

remote = []

ram_copies = []

disc_copies = [books,schema]

disc_only_copies = []

[{'node1@127.0.0.1',disc_copies},

{'node2@127.0.0.1',ram_copies},

{'node3@127.0.0.1',ram_copies}] = [schema,books]

12 transactions committed, 0 aborted, 0 restarted, 10 logged to disc

0 held locks, 0 in queue; 0 local transactions, 0 remote

0 transactions waits for other nodes: []

:ok

iex(node1@127.0.0.1)32>](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-130-2048.jpg)

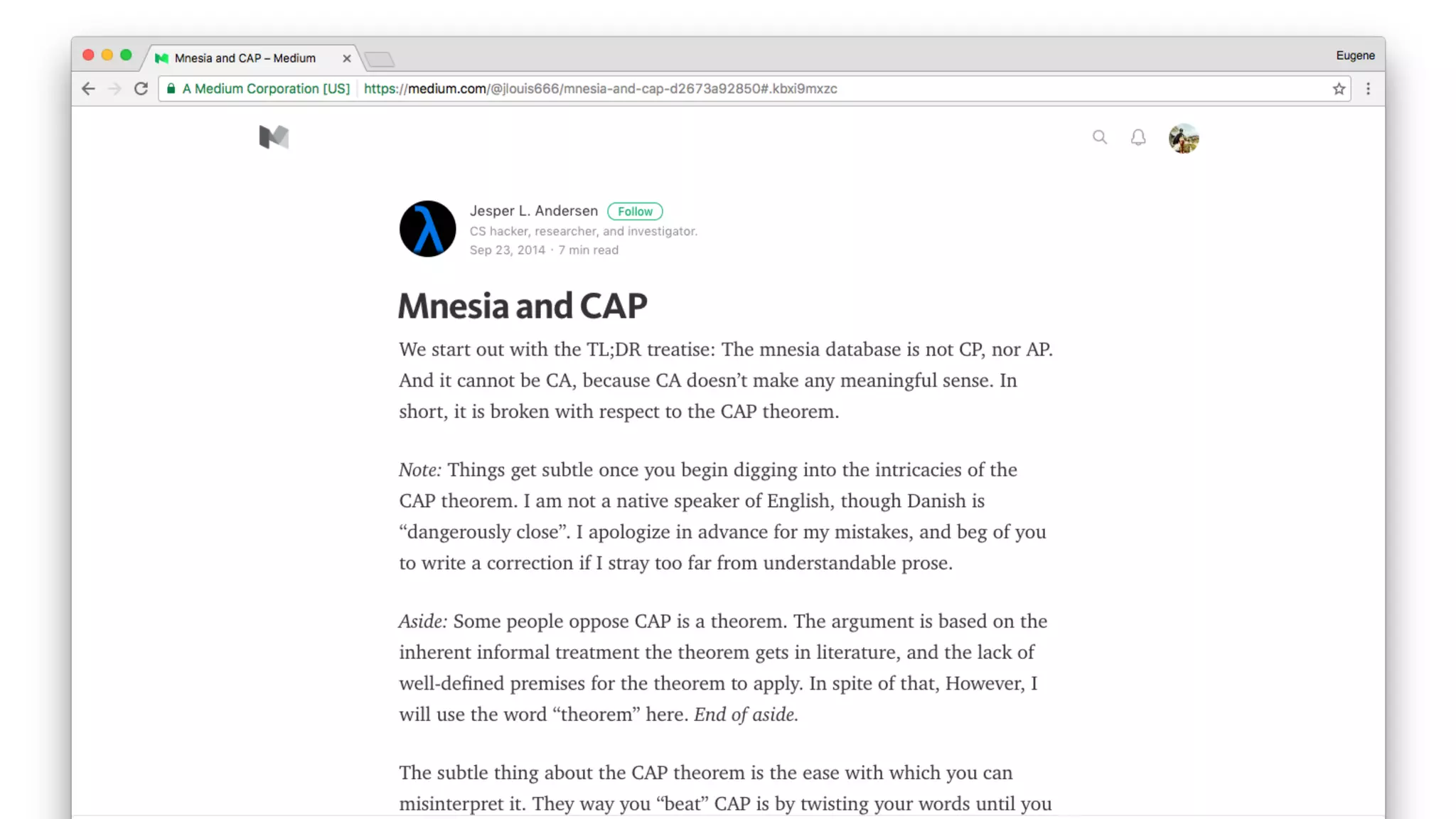

![iex(node1@127.0.0.1)6> :mnesia.info()

---> Processes holding locks <---

---> Processes waiting for locks <---

---> Participant transactions <---

---> Coordinator transactions <---

---> Uncertain transactions <---

---> Active tables <---

books : with 0 records occupying 304 words of mem

schema : with 2 records occupying 566 words of mem

===> System info in version "4.14.1", debug level = none <===

opt_disc. Directory "/Users/gmile/Mnesia.node1@127.0.0.1" is used.

use fallback at restart = false

running db nodes = ['node3@127.0.0.1','node2@127.0.0.1','node1@127.0.0.1']

stopped db nodes = []

master node tables = []

remote = []

ram_copies = []

disc_copies = [books,schema]

disc_only_copies = []

[{'node1@127.0.0.1',disc_copies},

{'node2@127.0.0.1',ram_copies},

{'node3@127.0.0.1',ram_copies}] = [schema,books]

12 transactions committed, 0 aborted, 0 restarted, 10 logged to disc

0 held locks, 0 in queue; 0 local transactions, 0 remote

0 transactions waits for other nodes: []

:ok

iex(node1@127.0.0.1)32>

“schema” + “books” tables exist

on 3 different nodes

3 nodes are running

current node (node1)

keeps 2 tables as RAM + disk](https://image.slidesharecdn.com/elixirkievjan2017-170207122149/75/Magic-Clusters-and-Where-to-Find-Them-2-0-Eugene-Pirogov-131-2048.jpg)
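
Once the table exists, records are written and read inside transactions. The sketch below is a single-node illustration, not the setup from the slides: it uses a RAM-only `:books` table so that it runs without a schema on disk, and the module name `BooksSketch` and the sample record are made up for the example. The attribute list is the one from the `create_table` call above.

```elixir
defmodule BooksSketch do
  @table :books

  # Starts Mnesia and creates a RAM-only table on the local node.
  # (The slides use disc_copies on node1; ram_copies keeps this sketch
  # free of on-disk state.)
  def setup do
    :ok = :mnesia.start()

    case :mnesia.create_table(@table, attributes: [:id, :title, :year], ram_copies: [node()]) do
      {:atomic, :ok} -> :ok
      {:aborted, {:already_exists, @table}} -> :ok
      {:aborted, reason} -> {:error, reason}
    end
  end

  # A record is a tuple: table name followed by the attributes in order.
  def put(id, title, year) do
    :mnesia.transaction(fn -> :mnesia.write({@table, id, title, year}) end)
  end

  def get(id) do
    :mnesia.transaction(fn -> :mnesia.read({@table, id}) end)
  end
end

:ok = BooksSketch.setup()
BooksSketch.put(1, "Example Title", 2017)
IO.inspect(BooksSketch.get(1))
```

In a cluster like the one above, a write made on one node becomes readable on every node that holds a copy of the table.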