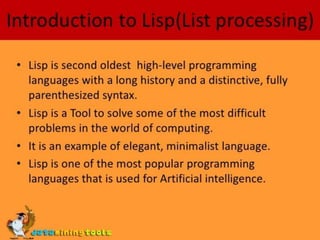

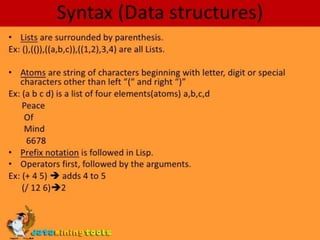

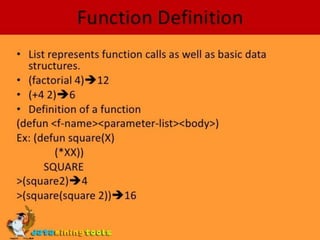

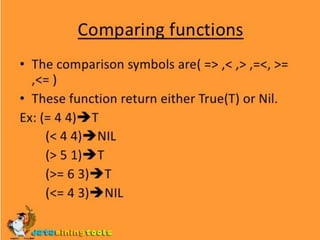

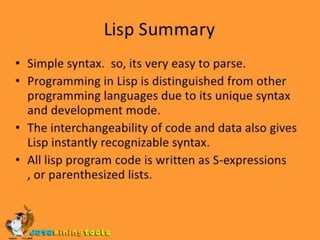

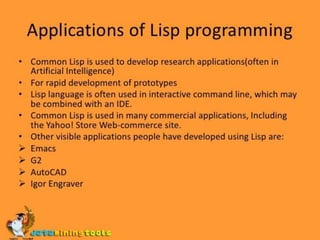

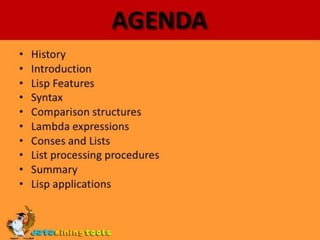

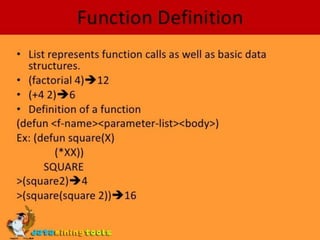

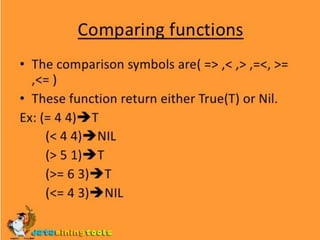

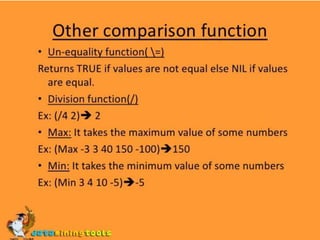

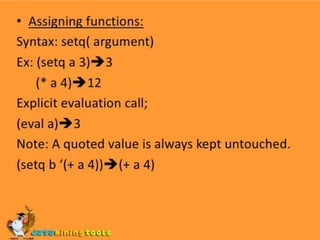

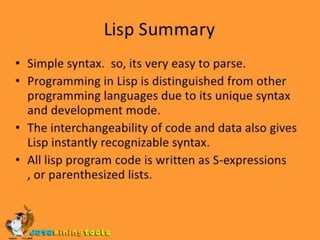

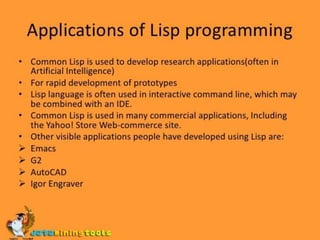

This document is Chapter 9 of an introduction to Lisp, written by Mr. Wahab Khan for a software engineering course at Sarhad University, Peshawar. It likely covers key concepts in Lisp programming and forms part of a larger course curriculum.