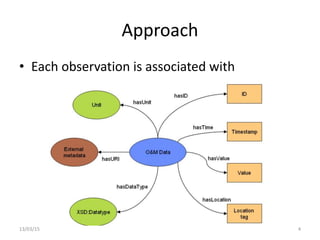

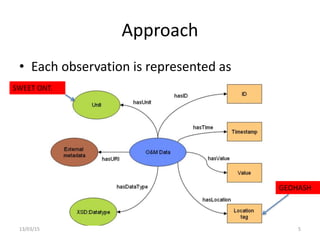

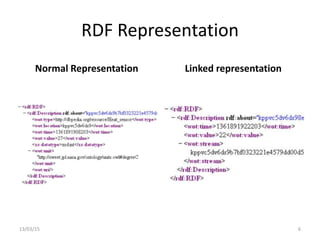

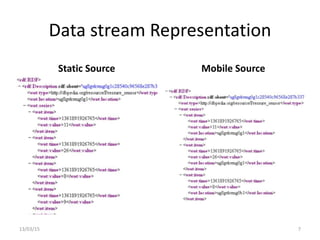

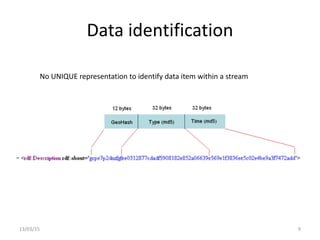

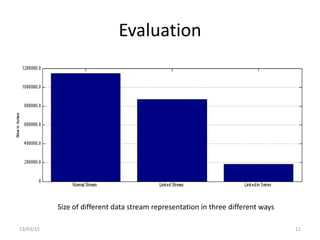

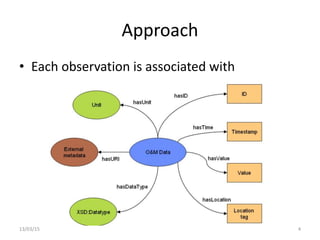

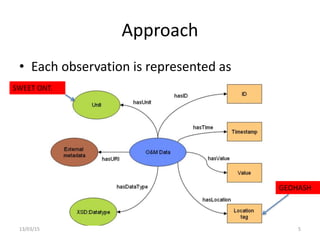

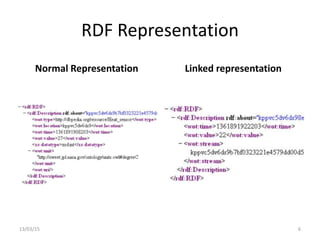

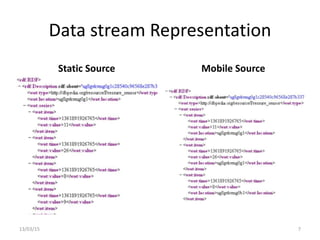

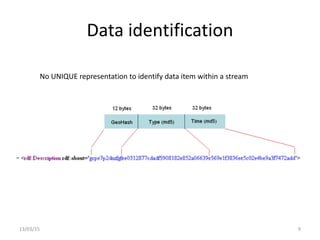

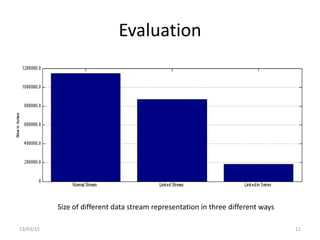

The presentation describes a linked-data model for representing semantic sensor data streams, with the goal of making queries over large volumes of annotated data more efficient. It stores static attributes centrally and links them to the dynamic observations, and it uses a clustering approach to store and retrieve the data. The evaluation covers data representation, identification, and the efficiency of the clustering methods across several scenarios.
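The summary does not give the model's vocabulary or its clustering criterion. The following is therefore only a minimal Python sketch of the general idea, under stated assumptions. Static sensor attributes are kept once in a registry. Each observation carries only a reference to its sensor. Observations are grouped into clusters so that a query touches a single group. The class and field names, and the choice to cluster by (sensor type, location), are hypothetical and do not come from the presentation. The sketch also uses plain in-memory Python structures in place of RDF or linked-data storage.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorDescription:
    """Static attributes of a sensor, stored once (hypothetical fields)."""
    sensor_id: str
    sensor_type: str
    location: str
    unit: str


@dataclass(frozen=True)
class Observation:
    """A dynamic reading; it links to its static attributes only via sensor_id."""
    sensor_id: str
    timestamp: int
    value: float


class ClusteredObservationStore:
    """Hypothetical store: static descriptions live in one registry, and
    observations are grouped into clusters keyed by (sensor_type, location)."""

    def __init__(self) -> None:
        self.sensors: dict[str, SensorDescription] = {}
        self.clusters: dict[tuple[str, str], list[Observation]] = defaultdict(list)

    def register(self, desc: SensorDescription) -> None:
        # Static attributes are written once, not repeated per observation.
        self.sensors[desc.sensor_id] = desc

    def add(self, obs: Observation) -> None:
        # Raises KeyError if the observation's sensor was never registered.
        desc = self.sensors[obs.sensor_id]
        # Assumed clustering key: observations sharing type and location go together.
        self.clusters[(desc.sensor_type, desc.location)].append(obs)

    def query(self, sensor_type: str, location: str, start: int, end: int):
        """Return (description, observation) pairs from a single cluster
        whose timestamps fall within [start, end]."""
        cluster = self.clusters.get((sensor_type, location), [])
        return [
            (self.sensors[o.sensor_id], o)
            for o in cluster
            if start <= o.timestamp <= end
        ]


store = ClusteredObservationStore()
store.register(SensorDescription("s1", "temperature", "room-101", "Cel"))
store.register(SensorDescription("s2", "humidity", "room-101", "%"))
store.add(Observation("s1", 100, 21.5))
store.add(Observation("s1", 200, 22.0))
store.add(Observation("s2", 150, 40.0))

for desc, obs in store.query("temperature", "room-101", 0, 150):
    print(desc.unit, obs.value)  # prints "Cel 21.5"
```

Because the static description is joined back only at query time, each stored observation stays small, and the cluster key confines a query to one group instead of scanning every reading. Whether the presented model clusters on these attributes or on something else is not stated in the summary.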