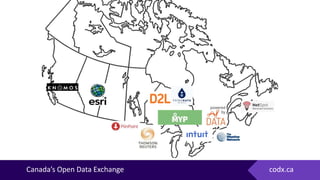

The document highlights Canada's first Open Data Exchange National Summit in 2016, emphasizing both the potential and the challenges of open data. It discusses the importance of improved data analytics, the need for better data governance, and how open data can stimulate innovation and create economic value. It also presents initiatives that promote the use of open data by businesses and governments to address municipal challenges.