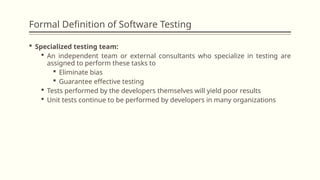

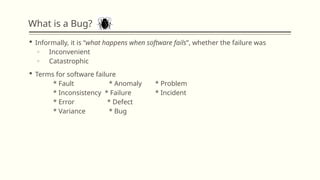

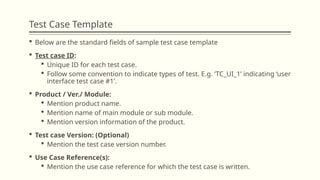

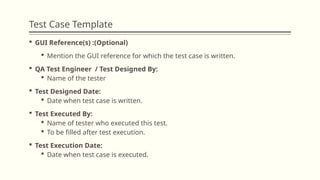

The document provides an overview of software testing, covering its formal definition, the role of specialized testing teams, and testing models including waterfall, V model, modified V model, spiral model, and agile testing. It emphasizes structured test cases, defect identification, and the software testing life cycle, including project initiation, defect reporting, and analysis. It also outlines agile testing principles, highlighting continuous testing and collaboration among team members throughout the development process.