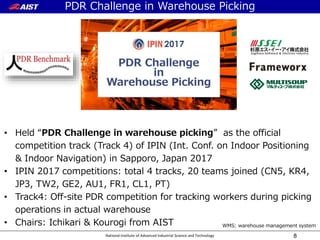

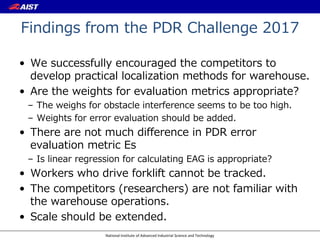

This document reviews the PDR (pedestrian dead reckoning) Challenge in Warehouse Picking, conducted by the National Institute of Advanced Industrial Science and Technology (AIST), and introduces its successor, the xDR Challenge. It discusses benchmarks, competition formats, evaluation metrics, and findings from the previous challenge, highlighting the importance of integrating multiple tracking methods and of improving localization accuracy in warehouse environments. It also outlines the structure and objectives of the xDR Challenge, which covers both pedestrian and vehicle tracking during warehouse operations.

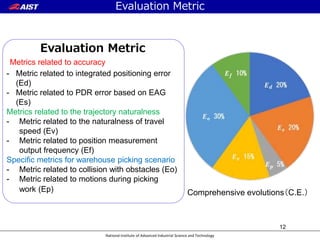

![National Institute of Advanced Industrial Science and Technology: example of an error plot for obtaining EAG [m/s]](https://image.slidesharecdn.com/ipin2018sspresentationpublishrp-181005072447/85/Review-of-PDR-Challenge-in-Warehouse-Picking-and-Advancing-to-xDR-Challenge-15-320.jpg)

In PDR Challenge 2017, we adopted a simple linear regression with the intercept fixed at 0 to calculate the representative EAG.
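Fixing the intercept at 0 gives the least-squares slope a closed form, slope = Σ(t·e) / Σ(t²), so the representative EAG reduces to a single ratio. The following is a minimal sketch, assuming the plot pairs the elapsed time since the last absolute position fix (t, in s) with the positioning error at that moment (e, in m). That axis interpretation, the function name `representative_eag`, and the sample points are illustrative assumptions, not the challenge's actual tooling or data.

```python
from typing import Sequence


def representative_eag(elapsed_s: Sequence[float], error_m: Sequence[float]) -> float:
    """Hypothetical helper: slope of a least-squares line forced through the origin.

    Minimizing sum((e - k * t)^2) over k gives k = sum(t * e) / sum(t * t).
    Assumes elapsed_s[i] is the time since the last absolute fix and
    error_m[i] is the positioning error at that time.
    """
    if len(elapsed_s) != len(error_m) or not elapsed_s:
        raise ValueError("need two equal-length, non-empty sequences")
    sxx = sum(t * t for t in elapsed_s)
    if sxx == 0:
        raise ValueError("all elapsed times are zero; the slope is undefined")
    sxy = sum(t * e for t, e in zip(elapsed_s, error_m))
    return sxy / sxx  # m/s when inputs are in s and m


if __name__ == "__main__":
    # Synthetic points lying exactly on error = 0.12 * t
    t = [10.0, 20.0, 30.0, 40.0]
    e = [1.2, 2.4, 3.6, 4.8]
    print(round(representative_eag(t, e), 3))  # 0.12
```

Compared with an ordinary least-squares fit, fixing the intercept at 0 means a constant offset in the error cannot be absorbed by an intercept term; the whole trend is attributed to the slope.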

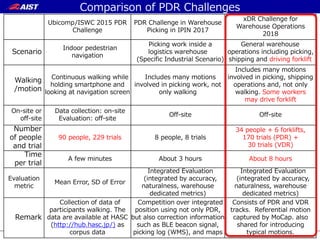

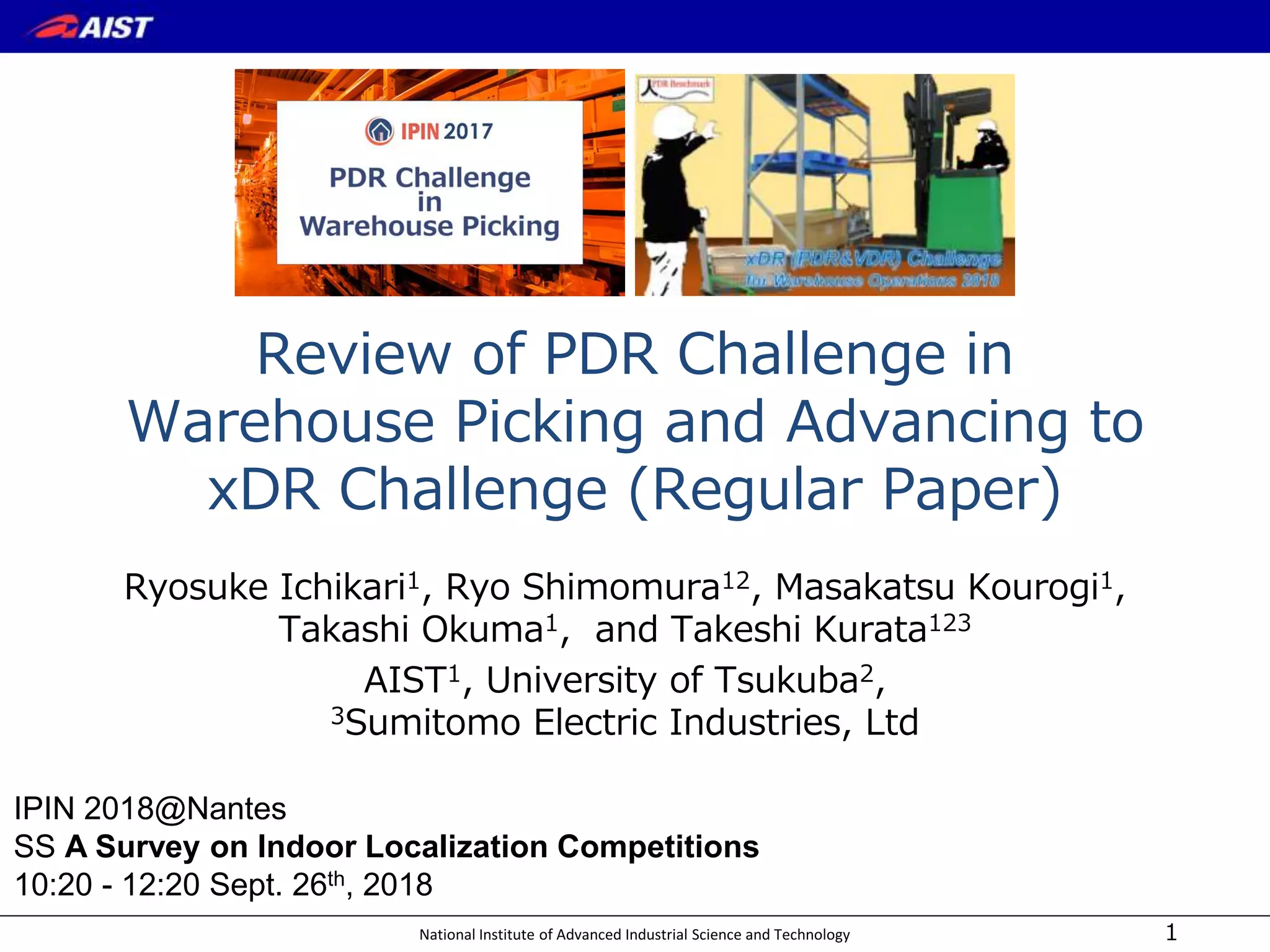

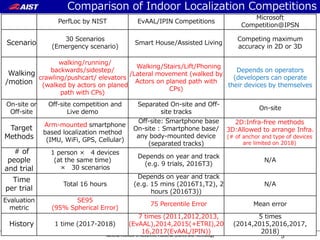

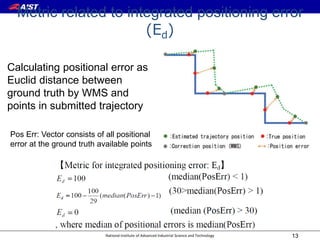

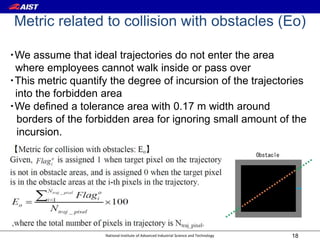

![Results of the evaluation metrics and final C.E., PDR Challenge in Warehouse Picking (panels: result of metric Eo; result of EAG for metric Es)](https://image.slidesharecdn.com/ipin2018sspresentationpublishrp-181005072447/85/Review-of-PDR-Challenge-in-Warehouse-Picking-and-Advancing-to-xDR-Challenge-21-320.jpg)

Example: an EAG of 0.12 m/s corresponds to 7.2 m/min.

Target accuracy of the integrated localization: 4.0 m.

Guideline for the absolute positioning method: a position fix every 30 s with an error of 0.4 m or less (0.12 m/s × 30 s = 3.6 m, and 3.6 m + 0.4 m = 4.0 m).

| Team | Ed | Es | Ep | Ev | Eo | Ef | Median error [m] | Median EAG [m/s] | C.E. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Team1 | 66.876 | 93.692 | 97.195 | 99.998 | 51.821 | 11.323 | 10.606 | 0.173 | 68.652 |
| Team2 | 71.524 | 94.872 | 43.545 | 100 | 99.876 | 100 | 9.258 | 0.150 | 90.419 |
| Team3 | 76.459 | 95.333 | 72.719 | 87.835 | 93.549 | 99.271 | 7.827 | 0.141 | 89.161 |
| Team4 | 51.934 | 90.769 | 84.965 | 95.657 | 59.623 | 99.239 | 14.939 | 0.230 | 74.948 |
| Team5 | 78.386 | 96.308 | 97.484 | 99.093 | 45.530 | 100 | 7.268 | 0.122 | 78.336 |
| AIST | 80.272 | 96.718 | 81.057 | 98.711 | 89.968 | 95.879 | 6.721 | 0.114 | 90.836 |
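The positioning guideline is an additive error budget: the drift accumulated between absolute fixes (EAG × fix interval) plus the error of the fix itself should stay within the 4.0 m target. The sketch below assumes, as the slide's arithmetic does, that drift grows linearly with time at the EAG rate; the function names are hypothetical. It also inverts the budget to estimate the longest fix interval that a given median EAG from the results table would allow.

```python
import math


def worst_case_error_m(eag_m_per_s: float, fix_interval_s: float, fix_error_m: float) -> float:
    """Hypothetical helper: drift accumulated over one fix interval plus the fix's own error.

    Assumes drift grows linearly with elapsed time at the EAG rate.
    """
    return eag_m_per_s * fix_interval_s + fix_error_m


def max_fix_interval_s(eag_m_per_s: float, target_m: float, fix_error_m: float) -> float:
    """Hypothetical helper: longest fix interval that keeps the budget within target_m."""
    if fix_error_m > target_m:
        raise ValueError("the fix error alone exceeds the target")
    if eag_m_per_s <= 0:
        return math.inf  # no drift, so any interval satisfies the budget
    return (target_m - fix_error_m) / eag_m_per_s


if __name__ == "__main__":
    eag = 0.12  # m/s, the example on the slide
    print(f"{eag * 60:.1f} m/min")  # 7.2 m/min
    print(f"{worst_case_error_m(eag, 30.0, 0.4):.1f} m")  # 4.0 m
    # Median EAGs [m/s] taken from the results table
    for team, median_eag in [("Team1", 0.173), ("Team5", 0.122), ("AIST", 0.114)]:
        print(team, f"{max_fix_interval_s(median_eag, 4.0, 0.4):.1f} s")
```

Under this linear model, Team1's median EAG (0.173 m/s) would need a fix roughly every 21 s to meet 4.0 m, while AIST's (0.114 m/s) would allow about 32 s.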

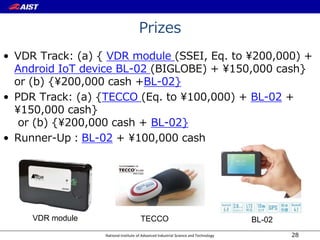

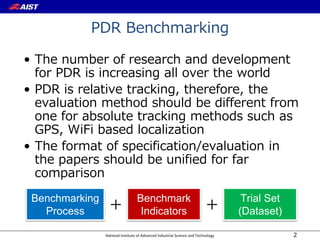

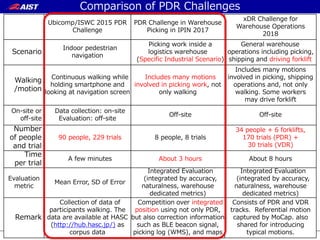

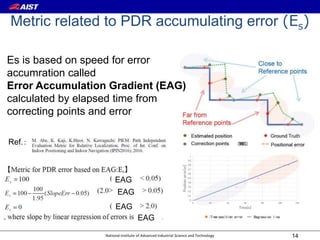

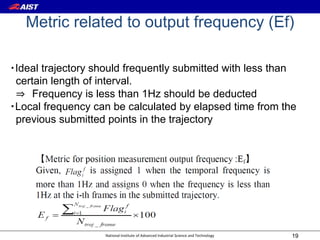

![Re-consideration of the weights (1)](https://image.slidesharecdn.com/ipin2018sspresentationpublishrp-181005072447/85/Review-of-PDR-Challenge-in-Warehouse-Picking-and-Advancing-to-xDR-Challenge-23-320.jpg)

Results with the original weights used at the competition:

Ed (median error) : Es (EAG) : Ep (picking) : Ev (velocity) : Eo (obstacle) : Ef (frequency) = 20% : 20% : 5% : 15% : 30% : 10%

| Team | Ed | Es | Ep | Ev | Eo | Ef | Median error [m] | Median EAG [m/s] | C.E. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Team1 | 66.876 | 93.692 | 97.195 | 99.998 | 51.821 | 11.323 | 10.606 | 0.173 | 68.652 |
| Team2 | 71.524 | 94.872 | 43.545 | 100 | 99.876 | 100 | 9.258 | 0.150 | 90.419 |
| Team3 | 76.459 | 95.333 | 72.719 | 87.835 | 93.549 | 99.271 | 7.827 | 0.141 | 89.161 |
| Team4 | 51.934 | 90.769 | 84.965 | 95.657 | 59.623 | 99.239 | 14.939 | 0.230 | 74.948 |
| Team5 | 78.386 | 96.308 | 97.484 | 99.093 | 45.530 | 100 | 7.268 | 0.122 | 78.336 |
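Each C.E. value in the table above is consistent with a weighted sum of the six metric scores using the percentages given; for example, Team2 gets 0.2 × 71.524 + 0.2 × 94.872 + 0.05 × 43.545 + 0.15 × 100 + 0.3 × 99.876 + 0.1 × 100 ≈ 90.419. The sketch below reproduces the C.E. column under that assumption. The names `WEIGHTS_ORIGINAL`, `SCORES`, and `weighted_score` are hypothetical, and the scores are copied from the table.

```python
# Hypothetical sketch: C.E. assumed to be the weighted sum of the metric scores.
# Weights at the competition (Ed, Es, Ep, Ev, Eo, Ef), as fractions summing to 1.
WEIGHTS_ORIGINAL = {"Ed": 0.20, "Es": 0.20, "Ep": 0.05, "Ev": 0.15, "Eo": 0.30, "Ef": 0.10}

# Metric scores (0-100) copied from the table above.
SCORES = {
    "Team1": {"Ed": 66.876, "Es": 93.692, "Ep": 97.195, "Ev": 99.998, "Eo": 51.821, "Ef": 11.323},
    "Team2": {"Ed": 71.524, "Es": 94.872, "Ep": 43.545, "Ev": 100.0, "Eo": 99.876, "Ef": 100.0},
    "Team3": {"Ed": 76.459, "Es": 95.333, "Ep": 72.719, "Ev": 87.835, "Eo": 93.549, "Ef": 99.271},
    "Team4": {"Ed": 51.934, "Es": 90.769, "Ep": 84.965, "Ev": 95.657, "Eo": 59.623, "Ef": 99.239},
    "Team5": {"Ed": 78.386, "Es": 96.308, "Ep": 97.484, "Ev": 99.093, "Eo": 45.530, "Ef": 100.0},
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of metric scores; weights must name the same metrics and sum to 1."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must name the same metrics")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(weights[m] * scores[m] for m in weights)


if __name__ == "__main__":
    for team, s in SCORES.items():
        print(team, f"{weighted_score(s, WEIGHTS_ORIGINAL):.3f}")
```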

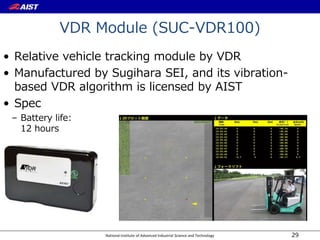

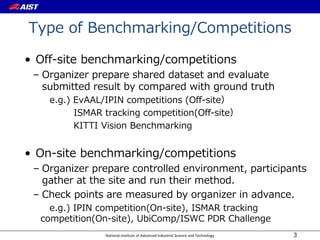

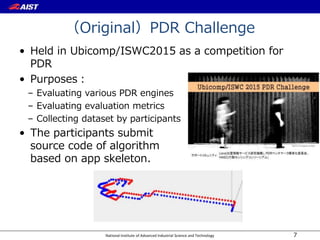

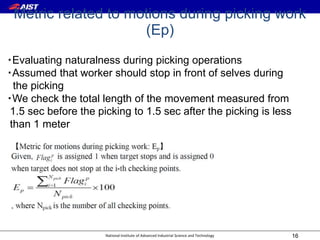

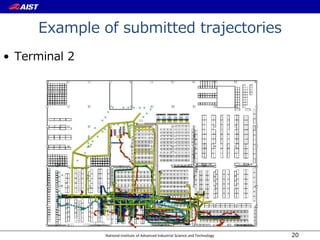

![Re-consideration of the weights (2)](https://image.slidesharecdn.com/ipin2018sspresentationpublishrp-181005072447/85/Review-of-PDR-Challenge-in-Warehouse-Picking-and-Advancing-to-xDR-Challenge-24-320.jpg)

Trial that increases the weights of the error metrics, Ed (median error) and Es (EAG), and reduces the weight of Eo (obstacle). These updated weights will replace the original ones.

Ed (median error) : Es (EAG) : Ep (picking) : Ev (velocity) : Eo (obstacle) : Ef (frequency) = 30% : 30% : 5% : 10% : 15% : 10%

| Team | Ed | Es | Ep | Ev | Eo | Ef | Median error, 50% eCDF [m] | Median EAG, 50% eCDF [m/s] | C.E. |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Team1 | 66.876 | 87.053 | 97.195 | 99.998 | 51.821 | 11.323 | 10.606 | 0.173 | 69.944 |
| Team2 | 71.524 | 89.474 | 43.545 | 100 | 99.876 | 100 | 9.258 | 0.150 | 85.458 |
| Team3 | 76.459 | 90.421 | 72.719 | 87.835 | 93.549 | 99.271 | 7.827 | 0.141 | 86.443 |
| Team4 | 51.934 | 81.053 | 84.965 | 95.657 | 59.623 | 99.239 | 14.939 | 0.230 | 72.577 |
| Team5 | 78.386 | 96.308 | 97.484 | 99.093 | 45.530 | 100 | 7.268 | 0.122 | 84.021 |
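To see what the re-weighting changes, the sketch below ranks the teams by C.E. under both weight sets, using the metric scores from the "weights (1)" and "weights (2)" tables; the Es column is the only one that differs between them. It assumes C.E. is the weighted sum of the six metric scores, which matches the published C.E. values to within rounding; all names are hypothetical. Under these inputs the top two places swap: Team2 leads with the original weights (90.419 vs. 89.161 for Team3), while Team3 leads with the updated ones (86.443 vs. 85.458 for Team2).

```python
# Hypothetical sketch: C.E. assumed to be the weighted sum of the six metric scores.
# Metric order throughout: (Ed, Es, Ep, Ev, Eo, Ef).
WEIGHTS_ORIGINAL = (0.20, 0.20, 0.05, 0.15, 0.30, 0.10)
WEIGHTS_UPDATED = (0.30, 0.30, 0.05, 0.10, 0.15, 0.10)

# Scores from the "weights (1)" table.
SCORES_ORIGINAL = {
    "Team1": (66.876, 93.692, 97.195, 99.998, 51.821, 11.323),
    "Team2": (71.524, 94.872, 43.545, 100.0, 99.876, 100.0),
    "Team3": (76.459, 95.333, 72.719, 87.835, 93.549, 99.271),
    "Team4": (51.934, 90.769, 84.965, 95.657, 59.623, 99.239),
    "Team5": (78.386, 96.308, 97.484, 99.093, 45.530, 100.0),
}

# Es column of the "weights (2)" table; all other columns are unchanged.
ES_UPDATED = {"Team1": 87.053, "Team2": 89.474, "Team3": 90.421, "Team4": 81.053, "Team5": 96.308}


def weighted_score(scores: tuple[float, ...], weights: tuple[float, ...]) -> float:
    """Weighted sum of metric scores; both tuples use the same metric order."""
    if len(scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    return sum(w * s for w, s in zip(weights, scores))


def ranking(table: dict[str, tuple[float, ...]], weights: tuple[float, ...]) -> list[str]:
    """Team names sorted by descending weighted score."""
    totals = {team: weighted_score(s, weights) for team, s in table.items()}
    return sorted(totals, key=lambda team: totals[team], reverse=True)


def with_updated_es(table: dict[str, tuple[float, ...]], es: dict[str, float]) -> dict[str, tuple[float, ...]]:
    """Copy of table with each team's Es score (index 1) replaced."""
    return {team: (s[0], es[team], *s[2:]) for team, s in table.items()}


if __name__ == "__main__":
    scores_updated = with_updated_es(SCORES_ORIGINAL, ES_UPDATED)
    print(ranking(SCORES_ORIGINAL, WEIGHTS_ORIGINAL))  # ['Team2', 'Team3', 'Team5', 'Team4', 'Team1']
    print(ranking(scores_updated, WEIGHTS_UPDATED))  # ['Team3', 'Team2', 'Team5', 'Team4', 'Team1']
```

Keeping the metric order in plain tuples keeps the two weight sets directly comparable; only the Es column has to be swapped to move from the first table to the second.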