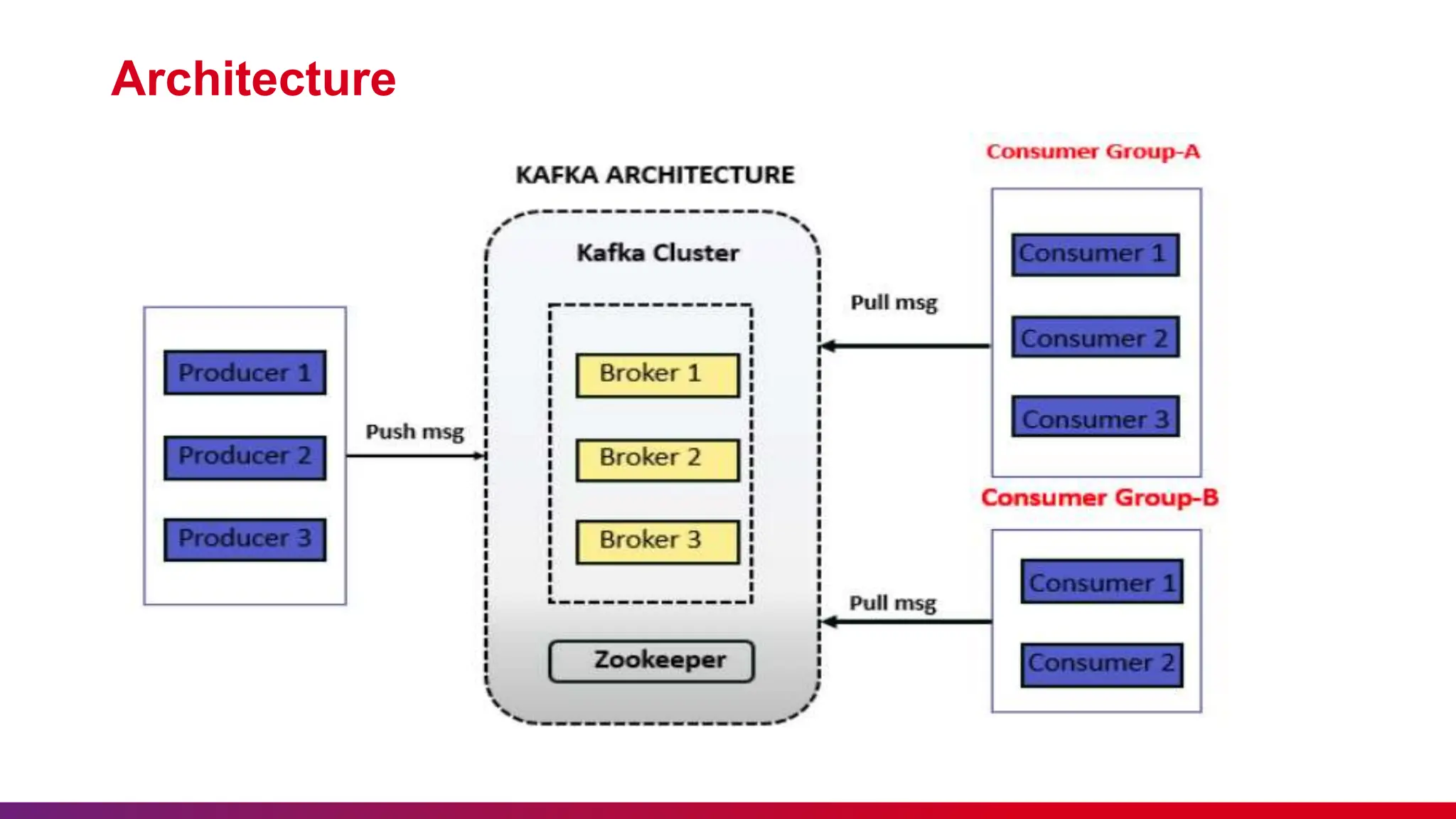

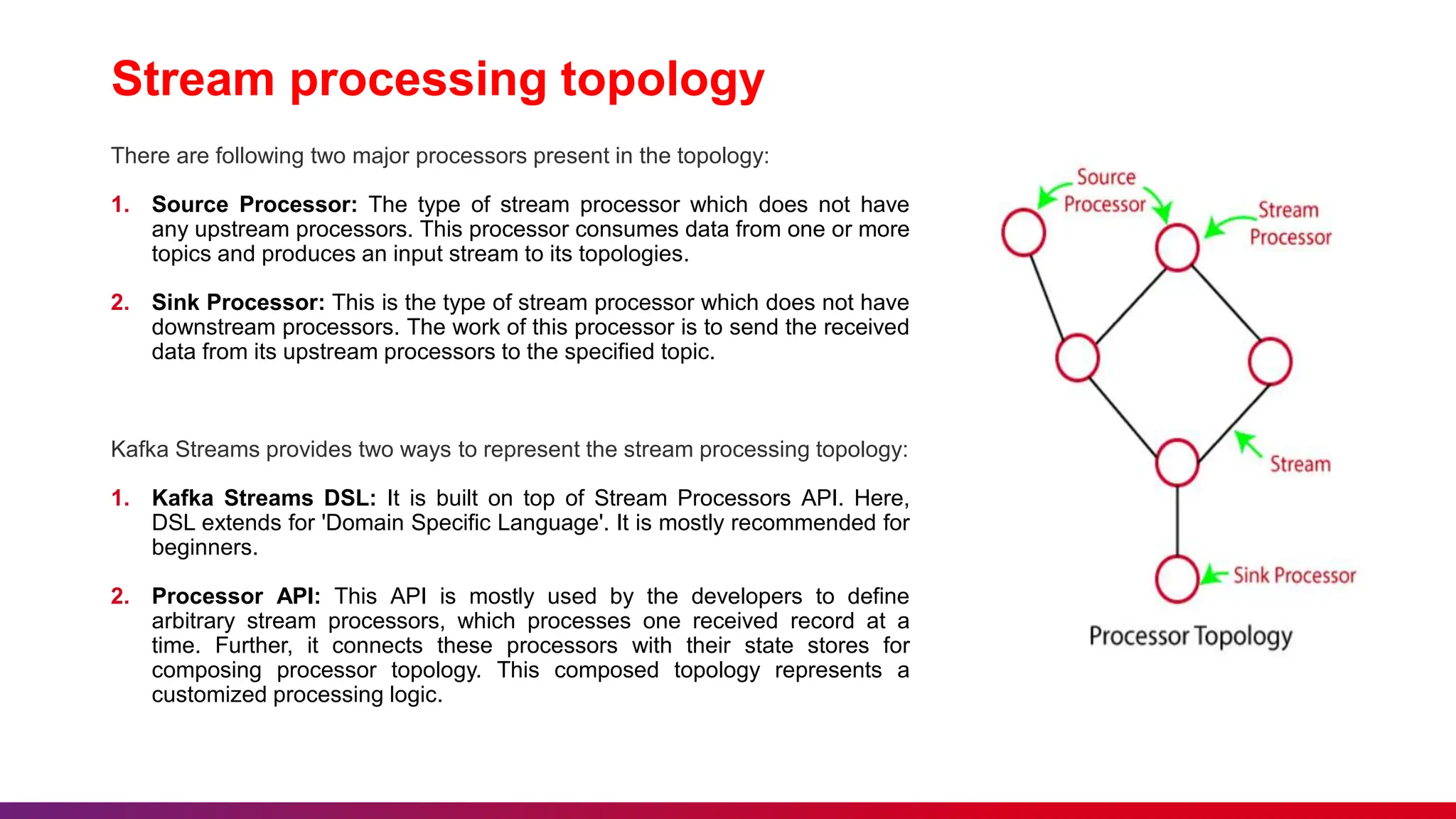

The document presents an overview of Kafka Streams. It covers key concepts of messaging systems and the functionality of Apache Kafka in detail, then discusses the architecture, the advantages of Kafka Streams, and its application to real-time data processing. It also sets out etiquette for attendees: giving feedback, being punctual, and keeping silent during the sessions.