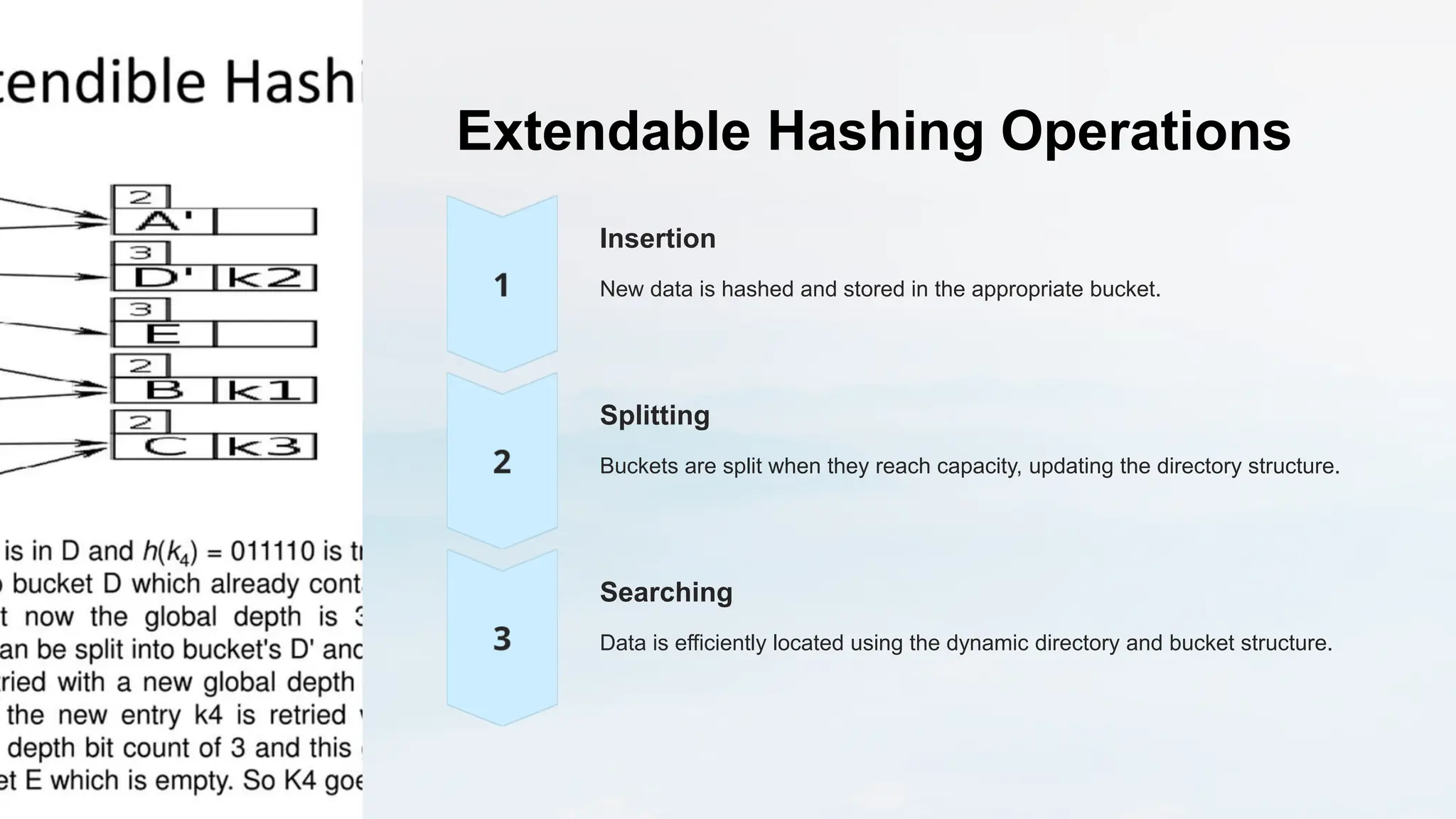

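Extendable hashing (also called extendible hashing) is a dynamic hashing technique that pairs a directory of bucket pointers with fixed-capacity buckets. The directory is indexed by a number of hash bits called the *global depth*, and several directory entries may point to the same bucket, which records its own *local depth*. When a bucket overflows, only that bucket is split and its entries are redistributed using one additional hash bit. The directory doubles in size only when the overflowing bucket's local depth already equals the global depth. Because the table grows one bucket at a time instead of rehashing every key at once, it handles growing or shrinking data volumes efficiently, which makes it well suited to database indexes and caching systems. It also adapts to skewed or changing key distributions by splitting only the buckets that actually fill up, avoiding the full rehash that a traditional fixed-size hash table needs when it runs out of space.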
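To make the directory, global depth, and local depth concrete, here is a minimal Python sketch of insertion and lookup. It is an illustration under stated assumptions, not a reference implementation: the class names `Bucket` and `ExtendibleHashTable` are hypothetical, the directory is indexed by the *low-order* bits of Python's built-in `hash()` (some descriptions use the high-order bits instead), the bucket capacity defaults to 2, and deletion and bucket merging are omitted.

```python
from dataclasses import dataclass, field


@dataclass
class Bucket:
    local_depth: int                            # hash bits shared by every key in this bucket
    items: dict = field(default_factory=dict)   # key -> value, at most `capacity` entries


class ExtendibleHashTable:
    """Hypothetical sketch: directory indexed by the low `global_depth` bits of hash(key).

    Assumes no more than `bucket_capacity` keys share an identical full hash value;
    otherwise splitting can never separate them and a real implementation would
    need overflow chaining.
    """

    def __init__(self, bucket_capacity: int = 2) -> None:
        self.bucket_capacity = bucket_capacity
        self.global_depth = 1
        # The directory always has 2 ** global_depth slots.
        self.directory = [Bucket(1), Bucket(1)]

    def _index(self, key) -> int:
        # Keep only the low `global_depth` bits of the hash.
        return hash(key) & ((1 << self.global_depth) - 1)

    def get(self, key):
        return self.directory[self._index(key)].items.get(key)

    def put(self, key, value) -> None:
        while True:
            bucket = self.directory[self._index(key)]
            if key in bucket.items or len(bucket.items) < self.bucket_capacity:
                bucket.items[key] = value
                return
            # Bucket is full: split it, then retry with the updated directory.
            self._split(bucket)

    def _split(self, bucket: Bucket) -> None:
        if bucket.local_depth == self.global_depth:
            # Double the directory; slot i + 2**d shares its low d bits with slot i,
            # so the new half initially points to the same buckets.
            self.directory = self.directory + self.directory
            self.global_depth += 1
        # The next hash bit distinguishes the old bucket from its new sibling.
        bit = 1 << bucket.local_depth
        bucket.local_depth += 1
        sibling = Bucket(bucket.local_depth)
        # Repoint only the slots for this bucket whose distinguishing bit is set.
        for i, b in enumerate(self.directory):
            if b is bucket and i & bit:
                self.directory[i] = sibling
        # Redistribute the overflowing bucket's entries; no other bucket is touched.
        old_items, bucket.items = bucket.items, {}
        for k, v in old_items.items():
            self.directory[self._index(k)].items[k] = v


if __name__ == "__main__":
    table = ExtendibleHashTable(bucket_capacity=2)
    for k in range(20):
        table.put(k, k * k)
    print(table.get(7))  # → 49
    print(table.global_depth, len(table.directory))
```

The key design point is in `_split`: an overflow rehashes only the entries of one bucket, and the directory doubles only when that bucket's local depth has caught up with the global depth. Doubling copies pointers, not records, which is why growth stays incremental.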